Database

Database

Mysql Tutorial

Mysql Tutorial

Detailed introduction to the InnoDB storage engine in MySQL (code example)

Detailed introduction to the InnoDB storage engine in MySQL (code example)

Detailed introduction to the InnoDB storage engine in MySQL (code example)

This article brings you a detailed introduction (code example) about the InnoDB storage engine in MySQL. It has certain reference value. Friends in need can refer to it. I hope it will be helpful to you.

InnoDB belongs to the storage engine layer in MySQL and is integrated into the database in the form of a plug-in. Starting from MySQL 5.5.8, InnoDB becomes its default storage engine. InnoDB storage engine supports transactions, and its design goal is mainly for OLTP applications. Its main features include: supporting transactions, row lock design to support high concurrency, foreign key support, automatic crash recovery, clustered index organization table structure, etc. (Related recommendations: MySQL Tutorial)

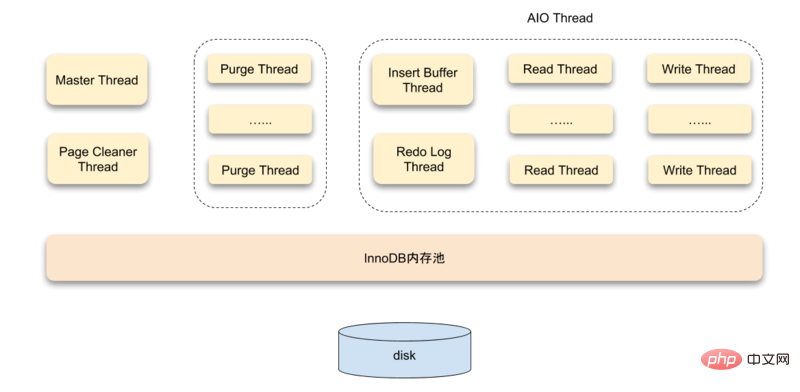

System Architecture

The InnoDB storage engine is composed of three parts: memory pool, background thread, and disk storage. .

Threads

InnoDB uses a multi-threading model, with multiple different threads in the background responsible for processing different tasks

Master Thread

Master Thread is the core background thread, which is mainly responsible for asynchronously refreshing the data in the buffer pool to the disk to ensure data consistency. Including dirty page refresh, merged insertion buffer, UNDO page recycling, etc.

IO Thread

In the InnoDB storage engine, asynchronous IO (Async IO) is used extensively to handle write IO requests. The job of IO Thread is mainly responsible for the callback of these IO requests.

Purge Thread

After a transaction is committed, the undo log used by it may no longer be needed, so Purge Thread is required to recycle the UNDO pages that have been allocated and used. InnoDB supports multiple Purge Threads, which can speed up the recycling of UNDO pages, increase CPU usage and improve storage engine performance.

Page Cleaner Thread

Page Cleaner Thread is used to replace the dirty page refresh operation in Master Thread. Its purpose is to reduce the work of the original Master Thread and the blocking of user query threads, and further improve Performance of the InnoDB storage engine.

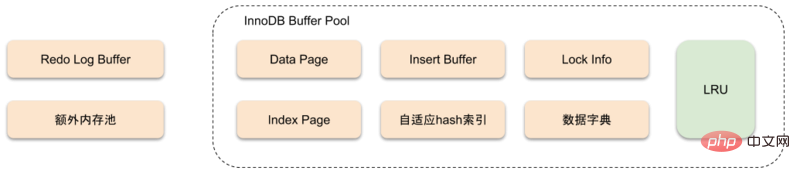

Memory

InnoDB Storage Engine Memory Structure

Buffer Pool

InnoDB Storage The engine is based on disk storage and manages the records in pages. But due to the gulf between CPU speed and disk speed, disk-based database systems often use buffer pool records to improve the overall performance of the database.

The buffer pool actually uses the speed of memory to compensate for the impact of slow disk speed on database performance. When the database performs a read operation, the page in the disk is first put into the buffer pool. The next time the same page is read, the page data is first obtained from the buffer pool to act as a cache.

For data modification operations, the page data in the buffer pool is first modified, and then flushed to the disk using a mechanism called Checkpoint.

The size of the buffer pool directly affects the overall performance of the database. For the InnoDB storage engine, the buffer pool configuration is set through the parameter innodb_buffer_pool_size. Use the SHOW VARIABLES LIKE 'innodb_buffer_pool_size' command to view the buffer pool configuration:

mysql> SHOW VARIABLES LIKE 'innodb_buffer_pool_size' \G *************************** 1. row *************************** Variable_name: innodb_buffer_pool_size Value: 134217728 1 row in set (0.01 sec)

The types of data pages cached in the buffer pool are: index pages, undo pages, insert buffers, adaptive hashing Index, InnoDB lock information, data dictionary information, etc. Index pages and data pages account for a large part of the buffer pool.

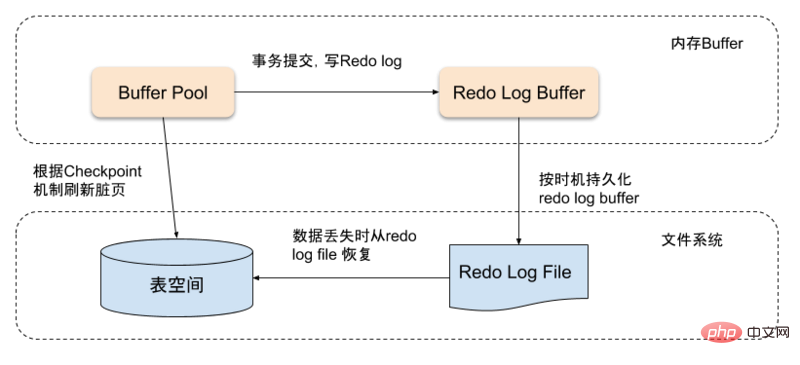

Redo log buffering

When the page data in the buffer pool is newer than the disk, the new data needs to be flushed to the disk. InnoDB uses the Write Ahead Log strategy to refresh data. That is, when a transaction is submitted, the redo log buffer is first written. The redo log buffer will be flushed to the reset log file at a certain frequency, and then the dirty pages will be flushed to the disk according to the checkpoint mechanism. .

The redo log buffer does not need to be set very large. Normally 8M can meet most application scenarios. The redo log supports the following three situations to trigger refresh:

Master Thread flushes the redo log buffer to the redo log file every second

When each transaction is committed, the redo log buffer is flushed to the redo log file

When the remaining space in the redo log buffer pool is less than 1/2, the redo log buffer is flushed to the redo log file. Make log files

Lock

The locks supported by InnoDB are:- ##Shared lock and exclusive lock

- Intention Lock

- Record Lock

Gap lock

Auto-increment lock

- Intention shared lock : About to acquire the shared lock of a certain row

- Intentional exclusive lock: About to acquire the exclusive lock of a certain row

Transaction

ACIDTransaction is the most important feature of the database as OLTP. When talking about transactions, we have to mention the four basic features of ACID:- Atomicity: The smallest unit of work for a transaction, either all successful or all failed

- Consistency: The start and end of a transaction Afterwards, the integrity of the database will not be destroyed

- Isolation (Isolation): Different transactions do not affect each other. The four isolation levels are RU (read uncommitted), RC ( Read committed), RR (repeatable read), SERIALIZABLE (serialization)

- Durability (Durability): After the transaction is submitted, the modification to the data is permanent, even if System failure will not be lost

- Read Uncommitted Read

- Read Committed Read Committed

- Repeatable Read Repeatable Read

- Serializable Serializable

- Dirty reading

- Non-repeatable read

- Phantom reading

Repeatable Read Repeatable Read

Repeatable Read, this level ensures that the results of reading the same record multiple times in the same transaction are consistent, and solves both phantom reads and non-repeatable in the InnoDB storage engine. Read the question.

The InnoDB engine solves the problem of phantom reads by using Next-Key Lock. Next-Key Lock is a combination of row lock and gap lock. When InnoDB scans the index record, it will first add a row lock (Record Lock) to the index record, and then add a gap to the gaps on both sides of the index record. Gap Lock. After adding the gap lock, other transactions cannot modify or insert records in this gap.

Serializable Serializable

Serializable is the highest isolation level. It avoids the problem of phantom reads by forcing transactions to be executed serially. However, Serializable will be executed on each row of data read. All are locked, so it may cause a lot of timeout and lock contention problems, so the concurrency drops sharply, and it is not recommended to use it in MySQL InnoDB.

Open transaction

BEGIN, BEGIN WORK, START TRANSACTION

- START TRANSACTION READ ONLY

- START TRANSACTION READ WRITE

- START TRANSACTION WITH CONSISTENT SNAPSHOT ## Opening a transaction will enter the engine layer and open a

. This operation is only valid under the RR isolation level, otherwise an error will be reported. Undo log

When the data is modified, the corresponding undo log will be recorded. If the transaction fails or rolls back, you can use the recorded undo log to roll back. Undo log is a logical log that records the data image before changes. If the current data needs to be read at the same time during modification, it can analyze the data of the previous version recorded in this row based on the version information. In addition, Undo log will also generate redo logs, because Undo log also requires persistence protection.

Transaction submission

- Use the global transaction ID generator to generate transaction NO, and add the current connection's transaction pointer (

- trx_t

) to the global commit transaction

trx_serial_listin the linked list () marks undo. If this transaction only uses one UndoPage and the usage is less than 3/4 Page, mark this Page as - TRX_UNDO_CACHED

, if it is not satisfied and is

insert undo, it will be marked asTRX_UNDO_TO_FREE, otherwise the undo is update undo and it will be marked asTRX_UNDO_TO_PURGE. Undos markedTRX_UNDO_CACHEDwill be recycled by the engine. Put - update undo

into the

history listofundo segment, and incrementrseg_history_len(global). At the same time, updateTRX_UNDO_TRX_NOon the Page. If the data is deleted, resetdelete_mark and change - undate undo

from Delete from

update_undo_list. If marked asTRX_UNDO_CACHED, add it to theupdate_undo_cachedqueue - mtr_commit

(Log undo/redo is written to the public buffer). At this point, the file-level transaction is committed. Even if it crashes at this time, the transaction can still be guaranteed to be submitted after restarting. The next thing to do is to update the memory data status (

trx_commit_in_memory) The read-only transaction only needs to change the - readview

from the global

Remove it from the readviewlinked list, and then reset the information in thetrx_tstructure. A read-write transaction first needs to set the transaction status toTRX_STATE_COMMITTED_IN_MEMORY, release all row locks and removetrx_tfromrw_trx_list,readviewRemoved from the globalreadviewlinked list. If there isinsert undo, remove it here. If there isupdate undo, wake up the Purge thread to clean up the garbage. Finally, reset the information intrx_tfor easy downloading. A transaction uses to rollback

- If it is a read-only transaction, it returns directly

- Determine whether to roll back the entire transaction or part of the transaction. If it is a part of the transaction, record how many Undo logs need to be kept, and roll back all the excess

- From

- update undo Find the last undo between

and

insert undo, and start rolling back from this undo If it is - update undo

, then Records marked for deletion are marked for cleaning, and updated data is rolled back to the oldest version. If it is

insert undo, delete the clustered index and secondary index directly If all undo has been rolled back or rolled back to the specified undo, stop and delete the Undo log

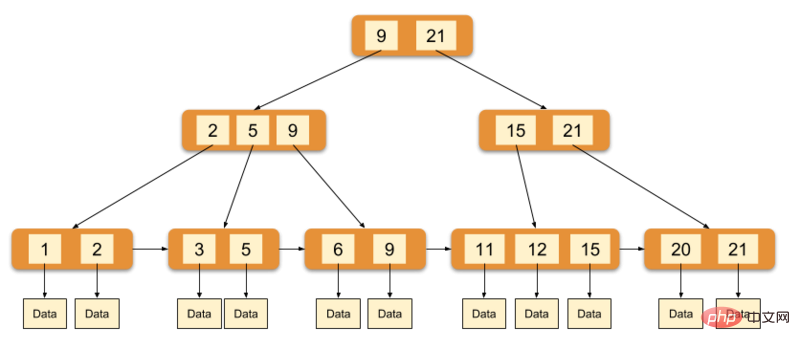

Index

The InnoDB engine uses the B-tree as the index structure. The data field of the leaf node of the primary key index stores complete field data, and the leaf nodes of the non-primary key index store the value data pointing to the primary key.

The above picture is a schematic diagram of the InnoDB main index (also a data file). You can see that the leaf nodes contain complete data records. This index is called a clustered index. Because InnoDB's data files themselves are aggregated by primary key, InnoDB requires that the table must have a primary key. If not explicitly specified, the MySQL system will automatically select a column that can uniquely identify the data record as the primary key. If such a column does not exist, then MySQL automatically generates an implicit field as the primary key for the InnoDB table. The length of this field is 6 bytes and the type is long.

InnoDB's auxiliary index data field stores the value of the corresponding record's primary key instead of the address. In other words, all secondary indexes in InnoDB reference the primary key as the data field. The implementation of the clustered index makes searching by primary key very efficient, but the auxiliary index search requires retrieving the index twice: first, retrieve the auxiliary index to obtain the primary key, and then use the primary key to retrieve the records in the primary index.

The above is the detailed content of Detailed introduction to the InnoDB storage engine in MySQL (code example). For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

PHP's big data structure processing skills

May 08, 2024 am 10:24 AM

PHP's big data structure processing skills

May 08, 2024 am 10:24 AM

Big data structure processing skills: Chunking: Break down the data set and process it in chunks to reduce memory consumption. Generator: Generate data items one by one without loading the entire data set, suitable for unlimited data sets. Streaming: Read files or query results line by line, suitable for large files or remote data. External storage: For very large data sets, store the data in a database or NoSQL.

How to use MySQL backup and restore in PHP?

Jun 03, 2024 pm 12:19 PM

How to use MySQL backup and restore in PHP?

Jun 03, 2024 pm 12:19 PM

Backing up and restoring a MySQL database in PHP can be achieved by following these steps: Back up the database: Use the mysqldump command to dump the database into a SQL file. Restore database: Use the mysql command to restore the database from SQL files.

How to optimize MySQL query performance in PHP?

Jun 03, 2024 pm 08:11 PM

How to optimize MySQL query performance in PHP?

Jun 03, 2024 pm 08:11 PM

MySQL query performance can be optimized by building indexes that reduce lookup time from linear complexity to logarithmic complexity. Use PreparedStatements to prevent SQL injection and improve query performance. Limit query results and reduce the amount of data processed by the server. Optimize join queries, including using appropriate join types, creating indexes, and considering using subqueries. Analyze queries to identify bottlenecks; use caching to reduce database load; optimize PHP code to minimize overhead.

How to insert data into a MySQL table using PHP?

Jun 02, 2024 pm 02:26 PM

How to insert data into a MySQL table using PHP?

Jun 02, 2024 pm 02:26 PM

How to insert data into MySQL table? Connect to the database: Use mysqli to establish a connection to the database. Prepare the SQL query: Write an INSERT statement to specify the columns and values to be inserted. Execute query: Use the query() method to execute the insertion query. If successful, a confirmation message will be output.

How to create a MySQL table using PHP?

Jun 04, 2024 pm 01:57 PM

How to create a MySQL table using PHP?

Jun 04, 2024 pm 01:57 PM

Creating a MySQL table using PHP requires the following steps: Connect to the database. Create the database if it does not exist. Select a database. Create table. Execute the query. Close the connection.

How to use MySQL stored procedures in PHP?

Jun 02, 2024 pm 02:13 PM

How to use MySQL stored procedures in PHP?

Jun 02, 2024 pm 02:13 PM

To use MySQL stored procedures in PHP: Use PDO or the MySQLi extension to connect to a MySQL database. Prepare the statement to call the stored procedure. Execute the stored procedure. Process the result set (if the stored procedure returns results). Close the database connection.

How to fix mysql_native_password not loaded errors on MySQL 8.4

Dec 09, 2024 am 11:42 AM

How to fix mysql_native_password not loaded errors on MySQL 8.4

Dec 09, 2024 am 11:42 AM

One of the major changes introduced in MySQL 8.4 (the latest LTS release as of 2024) is that the "MySQL Native Password" plugin is no longer enabled by default. Further, MySQL 9.0 removes this plugin completely. This change affects PHP and other app

The difference between oracle database and mysql

May 10, 2024 am 01:54 AM

The difference between oracle database and mysql

May 10, 2024 am 01:54 AM

Oracle database and MySQL are both databases based on the relational model, but Oracle is superior in terms of compatibility, scalability, data types and security; while MySQL focuses on speed and flexibility and is more suitable for small to medium-sized data sets. . ① Oracle provides a wide range of data types, ② provides advanced security features, ③ is suitable for enterprise-level applications; ① MySQL supports NoSQL data types, ② has fewer security measures, and ③ is suitable for small to medium-sized applications.