How to make Redis distributed

1 Why use Redis

There are two main reasons to use Redis in a project: performance and concurrency. If you only need other features such as distributed locks, other middleware such as ZooKeeper can provide them instead, and Redis is not required.

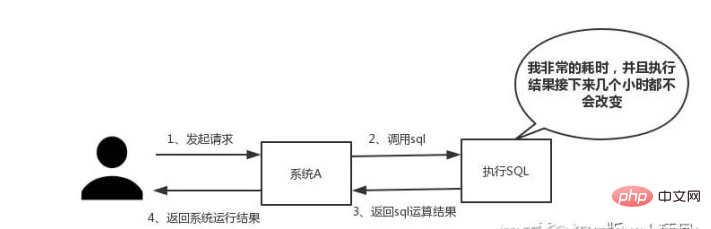

Performance:

As shown in the figure, caching is particularly suitable for SQL queries that take a long time to execute and whose results rarely change. Store the query result in the cache so that subsequent requests read from the cache and get a fast response.

This matters most in a flash sale system, where almost everyone clicks and places orders at the same moment, and every request performs the same operation: querying the database for data.

There is no fixed standard for response time; it depends on the interaction. Ideally, both page navigation and in-page operations should complete almost instantly.
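The caching idea described above, where the result of a slow query is stored and later requests read it from the cache, can be sketched roughly as follows. This is only an illustration under stated assumptions: a plain dictionary stands in for Redis, and `slow_query`, `get_product`, the `product:` key prefix, and the 60-second lifetime are hypothetical names and values chosen for the example.

```python
import time

cache = {}      # stand-in for Redis: key -> (value, expires_at)
db_calls = 0    # counts how often the "database" is actually queried


def slow_query(product_id):
    """Hypothetical stand-in for a long-running SQL query."""
    global db_calls
    db_calls += 1
    return {"id": product_id, "stock": 100}


def get_product(product_id, ttl_seconds=60.0):
    """Read from the cache first; query the database only on a miss."""
    key = f"product:{product_id}"
    now = time.monotonic()
    entry = cache.get(key)
    if entry is not None and entry[1] > now:
        return entry[0]                      # cache hit, no database access
    value = slow_query(product_id)           # cache miss, run the slow query
    cache[key] = (value, now + ttl_seconds)  # store the result with a lifetime
    return value


first = get_product(42)   # miss: goes to the database
second = get_product(42)  # hit: served from the cache
```

In a real deployment the dictionary operations would be replaced by a Redis read and a Redis write with an expiration time on the key.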

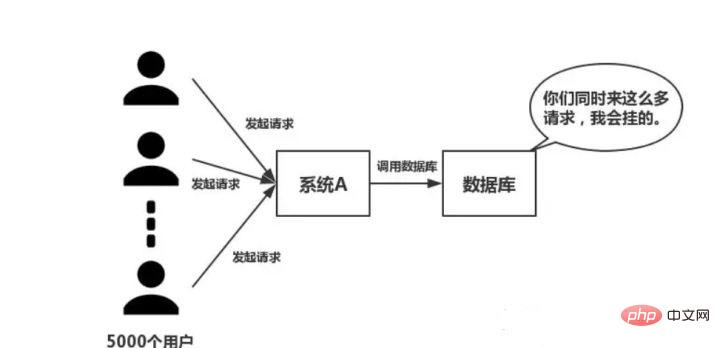

Concurrency:

As shown in the figure, under high concurrency, if every request goes straight to the database, the database will run into connection errors. Redis acts as a buffer here: requests access Redis first instead of going directly to the database.

Common problems when using Redis

- Cache and database double-write consistency issues

- Cache avalanche problem

- Cache breakdown problem

- Cache concurrency competition problem

Why is single-threaded Redis so fast

This question probes the internal mechanism of Redis. Many people do not realize that Redis uses a single-threaded working model.

There are three main reasons:

- Pure memory operation

- Single-thread operation avoids frequent context switching

- Using a non-blocking I/O multiplexing mechanism

Let's look at the I/O multiplexing mechanism in detail, using an analogy. Xiao Ming opened a delivery shop in City A that serves customers within the city. Because of limited funds, Xiao Ming hired a group of delivery people and then found that the remaining money was only enough to buy one car for deliveries.

Business method 1

Every time a customer places an order, Xiao Ming assigns a delivery person to watch that order, and that person then drives the car to deliver it. Over time, Xiao Ming found the following problems with this approach:

- Time was wasted fighting over the car, and most delivery people sat idle, because only the one who got the car could make a delivery.

- As the number of orders grew, so did the number of delivery people. The shop became more and more crowded, and there was no room to hire new delivery people.

- Coordination among the delivery people took time.

Based on these shortcomings, Xiao Ming learned from the experience and came up with the second business method.

Business method 2

Xiao Ming hires only one delivery person. When a customer places an order, Xiao Ming marks it with its delivery location and puts it in one place. The delivery person then drives the car to deliver the orders one by one, coming back for the next one after each delivery. Comparing the two methods, the second is clearly more efficient.

In the above metaphor:

- Each delivery person → Each thread

- Each order → Each Socket (I/O stream)

- The delivery location of the order → The different states of the Socket

- Customer delivery request → Request from the client

- Xiao Ming's business method → The code running on the server

- The single car → The number of CPU cores

So we have the following conclusion:

- The first business method is the traditional concurrency model: each I/O stream (order) is managed by a new thread (delivery person).

- The second business method is I/O multiplexing: a single thread (one delivery person) manages multiple I/O streams by tracking the status of each I/O stream (the delivery location of each order).

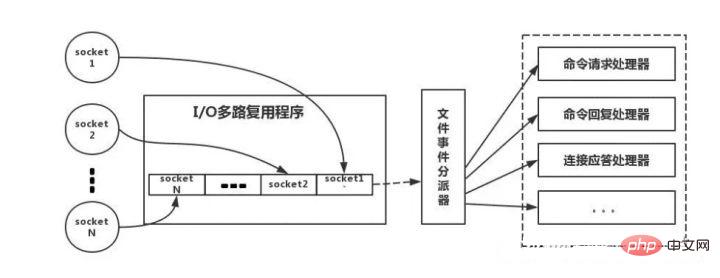

Mapping this analogy onto the real Redis thread model, as shown in the figure:

When a redis-client operates, it produces Sockets with different event types. On the server side, an I/O multiplexer places them into a queue. The file event dispatcher then takes them from the queue one by one and forwards each to the appropriate event handler.
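The single-threaded multiplexing idea can be illustrated with a small sketch. This is not how Redis itself is implemented (Redis has its own event loop written in C); it only shows one thread serving several sockets through Python's standard `selectors` module. The function name `run_event_loop`, the connection names, and the uppercase "handler" are hypothetical choices made for the example.

```python
import selectors
import socket


def run_event_loop(connections):
    """Serve several sockets from a single thread using I/O multiplexing."""
    sel = selectors.DefaultSelector()
    replies = {}
    for name, sock in connections.items():
        sock.setblocking(False)
        # Register interest in "readable" events and tag the socket with its name.
        sel.register(sock, selectors.EVENT_READ, data=name)
    pending = len(connections)
    while pending:
        # select() blocks until at least one registered socket is ready.
        for key, _events in sel.select():
            payload = key.fileobj.recv(1024)
            replies[key.data] = payload.upper()  # stand-in for an event handler
            sel.unregister(key.fileobj)
            pending -= 1
    sel.close()
    return replies


# Three connected socket pairs play the role of three clients.
pairs = {name: socket.socketpair() for name in ("a", "b", "c")}
for name, (client, _server) in pairs.items():
    client.sendall(f"ping from {name}".encode())

replies = run_event_loop({name: server for name, (_client, server) in pairs.items()})

for client, server in pairs.values():
    client.close()
    server.close()
```

No thread is created per connection: the one loop only touches a socket when the selector reports that it is ready, which is the role the single delivery person plays in the analogy.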

4 Redis data types and usage scenarios

A competent programmer should be comfortable with all five types.

String

The most common set/get operations. The value can be a string or a number. It is generally used to cache values for counting features with some complexity.

Hash

Here the value stores a structured object, which makes it convenient to operate on a single field. When I implemented single sign-on, I used this data structure to store user information, with the CookieId as the key and a 30-minute cache expiration, which simulates a Session-like effect very well.

List

With the List data structure you can implement a simple message queue. You can also use the lrange command to implement Redis-based paging, which performs very well and gives a good user experience.

Set

A Set stores a collection of unique values, so it can implement global deduplication. Our systems are generally deployed as clusters, where using the Set that comes with the JVM is troublesome. In addition, intersection, union, and difference operations can compute shared preferences, all preferences, and the preferences unique to one user.

Sorted Set

A Sorted Set adds a weight parameter, Score, and its elements are ordered by Score. It suits leaderboard applications and TOP N queries, and it can also be used to implement delayed tasks.

5 Redis expiration strategy and memory eviction mechanism

This question shows whether you have really mastered Redis. For example, suppose your Redis can only hold 5 GB of data but you write 10 GB, so 5 GB of data gets deleted. How is it deleted? Have you thought about this? The correct answer: Redis uses a periodic deletion plus lazy deletion strategy.

Why not use a timed deletion strategy

Timed deletion uses a timer to watch each key and deletes the key automatically when it expires. Memory is released promptly, but this consumes a lot of CPU resources. Under high concurrency, the CPU should spend its time processing requests rather than deleting keys, so this strategy is not adopted.

How periodic deletion plus lazy deletion works

With periodic deletion, Redis checks every 100 ms by default and deletes the expired keys it finds. Note that Redis does not check every key each 100 ms; it checks a random sample. With periodic deletion alone, many expired keys would not be deleted in time. That is where lazy deletion comes in: when a key is accessed, Redis checks whether it has expired and, if so, deletes it at that moment.

Are there no other problems with periodic deletion plus lazy deletion

No, there still are. If periodic deletion misses a key and you never request that key, lazy deletion never takes effect either, so the memory used by Redis keeps growing. This is where the memory eviction mechanism comes in. There is a line of configuration in redis.conf:

`# maxmemory-policy volatile-lru`

This setting configures the memory eviction policy. With volatile-lru, Redis evicts the least recently used keys among those that have an expiration set.

6 Redis and database double-write consistency issues

Consistency can be divided into eventual consistency and strong consistency. Whenever a database and a cache are both written, inconsistencies are inevitable. So the premise is: data with strong consistency requirements cannot be cached. Everything we do can only guarantee eventual consistency, and the solutions only reduce the probability of inconsistency.

First, adopt the correct update strategy: update the database first, then delete the cache. Second, because deleting the cache can fail, provide a compensation measure, such as using a message queue.

7 How to deal with cache penetration and cache avalanche problems

Small and medium-sized traditional software companies rarely run into these two problems. In a high-concurrency project with traffic in the millions, both must be considered carefully.

Cache penetration means that attackers deliberately request data that does not exist and so can never be found in the cache, so every request falls through to the database and the database runs into connection errors.

Cache penetration solution: commonly used approaches include caching an empty result for keys that do not exist, or placing a Bloom filter in front of the cache to reject keys that cannot exist.

Cache avalanche means that a large part of the cache expires at the same time just as a wave of requests arrives, so the requests all hit the database and the database runs into connection errors.

Cache avalanche solution: a commonly used approach is to add a random offset to cache expiration times so that keys do not all expire at the same moment.

8 How to solve the concurrent key competition problem in Redis

The problem is roughly this: multiple subsystems set the same key at the same time. What should we watch out for? Most people recommend the Redis transaction mechanism, but I do not recommend it. Production environments are basically Redis clusters with sharded data. When a transaction involves multiple keys, those keys are not necessarily stored on the same redis-server, so the Redis transaction mechanism is of little use here.

If operations on the key do not require a particular order

Prepare a distributed lock. Every system competes for the lock and performs the set operation once it holds the lock. This case is relatively simple.

If operations on the key require a particular order

Assume there is a key1. System A needs to set key1 to valueA, system B needs to set key1 to valueB, and system C needs to set key1 to valueC. The value of key1 is expected to change in the order valueA, then valueB, then valueC. In this case, save a timestamp when the data is written to the database. Assume the timestamps are as follows:

- System A: key1 {valueA 3:00}

- System B: key1 {valueB 3:05}

- System C: key1 {valueC 3:10}

Suppose system B grabs the lock first and sets key1 to {valueB 3:05}. Next, system A grabs the lock, finds that the timestamp of its own valueA is earlier than the timestamp in the cache, and skips the set operation. The same logic applies to the remaining writes.

Other methods also work, such as using a queue to turn the set operations into serial access.
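The timestamp check described for the ordered case (systems A, B, and C writing key1) can be sketched as follows. This is a simplified illustration under stated assumptions: a `threading.Lock` and a dictionary stand in for the distributed lock and for Redis, the class name `TimestampedCache` and method `set_if_newer` are hypothetical, and the times 3:00, 3:05, and 3:10 are written as minutes since midnight.

```python
import threading


class TimestampedCache:
    """In-memory stand-in for a Redis key guarded by a distributed lock."""

    def __init__(self):
        self._lock = threading.Lock()  # stands in for the distributed lock
        self._store = {}               # key -> (value, timestamp)

    def set_if_newer(self, key, value, timestamp):
        """Write only if the incoming timestamp is not older than the stored one."""
        with self._lock:
            current = self._store.get(key)
            if current is not None and timestamp < current[1]:
                return False           # stale write: skip the set operation
            self._store[key] = (value, timestamp)
            return True

    def get(self, key):
        with self._lock:
            current = self._store.get(key)
            return None if current is None else current[0]


cache = TimestampedCache()
results = [
    cache.set_if_newer("key1", "valueB", 185),  # system B grabs the lock first (3:05)
    cache.set_if_newer("key1", "valueA", 180),  # system A is older (3:00), so it is skipped
    cache.set_if_newer("key1", "valueC", 190),  # system C is newer (3:10), so it is applied
]
final_value = cache.get("key1")
```

Whatever order the systems acquire the lock in, the key ends up holding the value with the latest timestamp, which is the property the ordered case needs.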
