Master the main methods of crawling web pages with PHP

The basic process is to fetch the entire web page and then extract the data you need with regular expressions (the critical step).
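The "fetch, then match" flow can be sketched on a string that is already in memory. This is only an illustration: the sample HTML, the /item/<id> link pattern, and the function name extract_item_ids() are assumptions made for the example and do not come from any real site.

```php
<?php
// Hypothetical sample page; in practice $html would come from one of the
// fetch methods described in this article.
$html = <<<HTML
<html><body>
<a href="/item/101">First</a>
<a href="/item/102">Second</a>
<a href="/item/101">First again</a>
</body></html>
HTML;

/**
 * Return the unique ids found in links of the form /item/<id>.
 */
function extract_item_ids(string $html): array
{
    // Capture group 1 holds the digits that follow /item/.
    preg_match_all('/href="\/item\/(\d+)"/', $html, $matches);

    // array_unique() keeps the original keys, so reindex with array_values().
    return array_values(array_unique($matches[1]));
}

print_r(extract_item_ids($html));
```

On the sample page this yields the two ids 101 and 102. Regular expressions work well for small, predictable fragments like this one; for deeply nested markup a real HTML parser is usually the safer choice.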

Below are the main ways to fetch pages with PHP. Several of them come from the experience of others online and I have not used them yet; I am saving them here to try later.

1.file() function

2.file_get_contents() function

3.fopen()->fread()->fclose() mode

4.curl method (I mainly use this)

5.fsockopen() function socket mode

6.Plug-in (such as: http://sourceforge.net/projects/snoopy/)

1. Use the file() function.

<?php

// Define the URL

$url = 'http://t.qq.com';

// file() reads the content into an array of lines

$lines_array = file($url);

// Join the array into a single string

$lines_string = implode('', $lines_array);

// Output the content

echo $lines_string;

2. Use file_get_contents(), which is relatively simple.

To use file_get_contents and fopen on remote URLs, allow_url_fopen must be enabled: edit php.ini and set allow_url_fopen = On. When allow_url_fopen is off, neither fopen nor file_get_contents can open remote files.
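Before relying on these functions, it can help to check the setting at runtime rather than wait for a failed request. The following is a minimal sketch; the helper names url_fopen_enabled() and fetch_page(), the 10-second timeout, and the User-Agent string are all assumptions chosen for illustration.

```php
<?php
/**
 * Report whether URL wrappers are enabled for fopen() and file_get_contents().
 */
function url_fopen_enabled(): bool
{
    // ini_get() returns the setting as a string such as "1", "On" or "".
    return filter_var(ini_get('allow_url_fopen'), FILTER_VALIDATE_BOOLEAN);
}

/**
 * Fetch a URL, returning null when the setting is off or the request fails.
 */
function fetch_page(string $url): ?string
{
    if (!url_fopen_enabled()) {
        return null;
    }

    // Illustrative values: a 10-second timeout and a made-up User-Agent.
    $context = stream_context_create([
        'http' => ['timeout' => 10, 'user_agent' => 'example-fetcher/0.1'],
    ]);

    // @ suppresses the warning so that failure is reported only through null.
    $html = @file_get_contents($url, false, $context);

    return $html === false ? null : $html;
}
```

A caller can then write $html = fetch_page($url); and treat null as "could not fetch" instead of handling a PHP warning.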

<?php

$url = "http://news.sina.com.cn/c/nd/2016-10-23/doc-ifxwztru6951143.shtml";

$html = file_get_contents($url);

// If the Chinese text comes out garbled, convert the encoding with the line below

// $html = iconv("gb2312", "utf-8", $html);

echo "<textarea style='width:800px;height:600px;'>".$html."</textarea>";

3. fopen()->fread()->fclose() mode. I have not used this yet; I am writing it down as I found it.

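<?php

// Define the URL

$url = 'http://t.qq.com';

// Open the URL with fopen in binary mode

$handle = fopen($url, "rb");

// Stop if the URL could not be opened

if ($handle === false) {

exit("Could not open $url");

}

// Initialize the variable

$lines_string = "";

// Read the data in a loop, 1024 bytes at a time

do {

$data = fread($handle, 1024);

if ($data === false || strlen($data) == 0) {

break;

}

$lines_string .= $data;

} while (true);

// Close the fopen handle and release the resource

fclose($handle);

// Output the content

echo $lines_string;

4. Use curl (I usually use this).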

Using curl requires that your hosting has the curl extension enabled. On Windows, edit php.ini and remove the semicolon in front of extension=php_curl.dll, then copy ssleay32.dll and libeay32.dll to C:\WINDOWS\system32; on Linux, install the curl extension.
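Whether the extension is actually loaded can be confirmed from PHP itself. The sketch below uses a hypothetical helper named curl_available(); the messages it prints are illustrative only.

```php
<?php
/**
 * Report whether the curl extension is loaded in this PHP runtime.
 */
function curl_available(): bool
{
    return extension_loaded('curl') && function_exists('curl_init');
}

if (curl_available()) {
    // curl_version() returns an array; 'version' is the libcurl version string.
    echo 'curl is enabled, libcurl ', curl_version()['version'], PHP_EOL;
} else {
    echo 'curl is not enabled; check php.ini or install the extension', PHP_EOL;
}
```

Running this once from the command line and once through the web server is worthwhile, because the two can load different php.ini files.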

<?php

header("Content-Type: text/html;charset=utf-8");

date_default_timezone_set('PRC');

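$url = "https://***********ycare"; // URL to crawl

$res = curl_get_contents($url); // curl wrapper function, defined below

preg_match_all('/<script>(.*?)<\/script>/',$res,$arr_all); // The data on this page is delivered through JS, so grabbing the script blocks is enough

preg_match_all('/"id"\:"(.*?)",/',$arr_all[1][1],$arr1); // Match the needed data inside the JS block

$list = array_values(array_unique($arr1[1])); // (Optional) remove duplicates; array_values() reindexes the keys that array_unique() leaves with gaps

// The rest follows the same pattern; just loop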

for($i=0;$i<=6;$i=$i+2){

$detail_url = 'ht*****em/'.$list[$i];

$detail_res = curl_get_contents($detail_url);

preg_match_all('/<script>(.*?)<\/script>/',$detail_res,$arr_detail);

preg_match('/"desc"\:"(.*?)",/',$arr_detail[1][1],$arr_content);

***

***

***

$ret=curl_post('http://**********cms.php',$result); // This script is not deployed on a server, for reasons you can probably guess.

}

function curl_get_contents($url,$cookie='',$referer='',$timeout=300,$ishead=0) {

$curl = curl_init();

curl_setopt($curl, CURLOPT_RETURNTRANSFER,1);

curl_setopt($curl, CURLOPT_FOLLOWLOCATION,1);

curl_setopt($curl, CURLOPT_URL,$url);

curl_setopt($curl, CURLOPT_TIMEOUT,$timeout);

curl_setopt($curl, CURLOPT_USERAGENT,'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/65.0.3325.181 Safari/537.36');

if($cookie)

{

curl_setopt( $curl, CURLOPT_COOKIE,$cookie);

}

if($referer)

{

curl_setopt ($curl,CURLOPT_REFERER,$referer);

}

$ssl = substr($url, 0, 8) == "https://" ? TRUE : FALSE;

if ($ssl)

{

curl_setopt($curl, CURLOPT_SSL_VERIFYHOST, false);

curl_setopt($curl, CURLOPT_SSL_VERIFYPEER, false);

}

$res = curl_exec($curl);

// Close the handle before returning; a statement placed after return never runs

curl_close($curl);

return $res;

}

// POST data to the server with curl

function curl_post($url,$data){

$ch = curl_init();

curl_setopt($ch,CURLOPT_RETURNTRANSFER,1);

//curl_setopt($ch,CURLOPT_FOLLOWLOCATION, 1);

curl_setopt($ch, CURLOPT_SSL_VERIFYPEER, FALSE);

curl_setopt($ch,CURLOPT_USERAGENT,'Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/65.0.3325.181 Safari/537.36');

curl_setopt($ch,CURLOPT_URL,$url);

curl_setopt($ch,CURLOPT_POST,true);

curl_setopt($ch,CURLOPT_POSTFIELDS,$data);

$output = curl_exec($ch);

curl_close($ch);

return $output;

}

?>

5. fsockopen() function, socket mode (I have never used this; worth trying in the future).

Whether socket mode works correctly also depends on the server configuration. You can check which communication protocols the server has enabled through phpinfo().
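As an alternative to reading through the phpinfo() page, the registered socket transports can be listed from code with stream_get_transports(). This is a small sketch; the helper name transport_enabled() is an assumption introduced for the example.

```php
<?php
/**
 * Report whether a socket transport such as "tcp" or "ssl" is registered.
 */
function transport_enabled(string $name): bool
{
    // stream_get_transports() lists the transports this PHP build supports.
    return in_array($name, stream_get_transports(), true);
}

foreach (['tcp', 'ssl'] as $transport) {
    echo $transport, ': ', transport_enabled($transport) ? 'enabled' : 'not enabled', PHP_EOL;
}
```

Plain HTTP over fsockopen() only needs tcp. Connecting to an HTTPS site with a host such as ssl://example.com on port 443 additionally needs the ssl transport, which is provided by the openssl extension.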

<?php

$fp = fsockopen("t.qq.com", 80, $errno, $errstr, 30);

if (!$fp) {

echo "$errstr ($errno)<br />\n";

} else {

$out = "GET / HTTP/1.1\r\n";

$out .= "Host: t.qq.com\r\n";

$out .= "Connection: Close\r\n\r\n";

fwrite($fp, $out);

while (!feof($fp)) {

echo fgets($fp, 128);

}

fclose($fp);

}

6. Snoopy plug-in. The latest version is Snoopy-1.2.4.zip (last update: 2013-05-30); recommended for everyone.

Snoopy is very popular online for collecting pages. It is a powerful collection plug-in and very convenient to use. You can also set an agent (the User-Agent string) in it to simulate browser information.

Note: The agent is set on line 45 of the Snoopy.class.php file; search the file for "var $agent". To get your browser information, use echo $_SERVER['HTTP_USER_AGENT']; and copy the echoed content into the agent.

<?php

// Include the Snoopy class file

require('Snoopy.class.php');

// Initialize the Snoopy class

$snoopy = new Snoopy;

$url = "http://t.qq.com";

// Start collecting the content

$snoopy->fetch($url);

// Save the collected content to $lines_string

$lines_string = $snoopy->results;

// Output the content; you could also save it on your own server

echo $lines_string;

Recommended related learning: php graphic tutorial

The above is the detailed content of Master the main methods of crawling web pages with PHP. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1385

1385

52

52

PHP 8.4 Installation and Upgrade guide for Ubuntu and Debian

Dec 24, 2024 pm 04:42 PM

PHP 8.4 Installation and Upgrade guide for Ubuntu and Debian

Dec 24, 2024 pm 04:42 PM

PHP 8.4 brings several new features, security improvements, and performance improvements with healthy amounts of feature deprecations and removals. This guide explains how to install PHP 8.4 or upgrade to PHP 8.4 on Ubuntu, Debian, or their derivati

How To Set Up Visual Studio Code (VS Code) for PHP Development

Dec 20, 2024 am 11:31 AM

How To Set Up Visual Studio Code (VS Code) for PHP Development

Dec 20, 2024 am 11:31 AM

Visual Studio Code, also known as VS Code, is a free source code editor — or integrated development environment (IDE) — available for all major operating systems. With a large collection of extensions for many programming languages, VS Code can be c

7 PHP Functions I Regret I Didn't Know Before

Nov 13, 2024 am 09:42 AM

7 PHP Functions I Regret I Didn't Know Before

Nov 13, 2024 am 09:42 AM

If you are an experienced PHP developer, you might have the feeling that you’ve been there and done that already.You have developed a significant number of applications, debugged millions of lines of code, and tweaked a bunch of scripts to achieve op

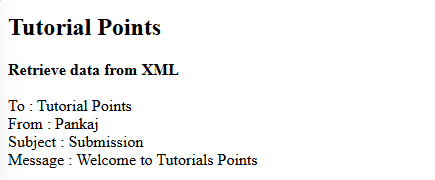

How do you parse and process HTML/XML in PHP?

Feb 07, 2025 am 11:57 AM

How do you parse and process HTML/XML in PHP?

Feb 07, 2025 am 11:57 AM

This tutorial demonstrates how to efficiently process XML documents using PHP. XML (eXtensible Markup Language) is a versatile text-based markup language designed for both human readability and machine parsing. It's commonly used for data storage an

Explain JSON Web Tokens (JWT) and their use case in PHP APIs.

Apr 05, 2025 am 12:04 AM

Explain JSON Web Tokens (JWT) and their use case in PHP APIs.

Apr 05, 2025 am 12:04 AM

JWT is an open standard based on JSON, used to securely transmit information between parties, mainly for identity authentication and information exchange. 1. JWT consists of three parts: Header, Payload and Signature. 2. The working principle of JWT includes three steps: generating JWT, verifying JWT and parsing Payload. 3. When using JWT for authentication in PHP, JWT can be generated and verified, and user role and permission information can be included in advanced usage. 4. Common errors include signature verification failure, token expiration, and payload oversized. Debugging skills include using debugging tools and logging. 5. Performance optimization and best practices include using appropriate signature algorithms, setting validity periods reasonably,

PHP Program to Count Vowels in a String

Feb 07, 2025 pm 12:12 PM

PHP Program to Count Vowels in a String

Feb 07, 2025 pm 12:12 PM

A string is a sequence of characters, including letters, numbers, and symbols. This tutorial will learn how to calculate the number of vowels in a given string in PHP using different methods. The vowels in English are a, e, i, o, u, and they can be uppercase or lowercase. What is a vowel? Vowels are alphabetic characters that represent a specific pronunciation. There are five vowels in English, including uppercase and lowercase: a, e, i, o, u Example 1 Input: String = "Tutorialspoint" Output: 6 explain The vowels in the string "Tutorialspoint" are u, o, i, a, o, i. There are 6 yuan in total

Explain late static binding in PHP (static::).

Apr 03, 2025 am 12:04 AM

Explain late static binding in PHP (static::).

Apr 03, 2025 am 12:04 AM

Static binding (static::) implements late static binding (LSB) in PHP, allowing calling classes to be referenced in static contexts rather than defining classes. 1) The parsing process is performed at runtime, 2) Look up the call class in the inheritance relationship, 3) It may bring performance overhead.

What are PHP magic methods (__construct, __destruct, __call, __get, __set, etc.) and provide use cases?

Apr 03, 2025 am 12:03 AM

What are PHP magic methods (__construct, __destruct, __call, __get, __set, etc.) and provide use cases?

Apr 03, 2025 am 12:03 AM

What are the magic methods of PHP? PHP's magic methods include: 1.\_\_construct, used to initialize objects; 2.\_\_destruct, used to clean up resources; 3.\_\_call, handle non-existent method calls; 4.\_\_get, implement dynamic attribute access; 5.\_\_set, implement dynamic attribute settings. These methods are automatically called in certain situations, improving code flexibility and efficiency.