How to bring a CAD model into a layout

To bring a CAD model into a layout: first open the software, enter model space, and draw the graphics there; then click the Layout tab at the bottom and double-click the blank area inside the layout to adjust the size and position of the graphics; finally, double-click the blank area outside the layout to confirm, and repeat the operation as needed.

The operating environment for this article: Windows 7, AutoCAD 2020, Dell G3 computer.

How to bring a CAD model into a layout:

1. Open AutoCAD 2007 and enter model space.

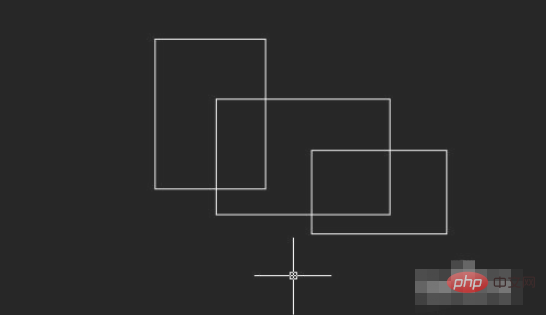

2. Draw the graphics in model space.

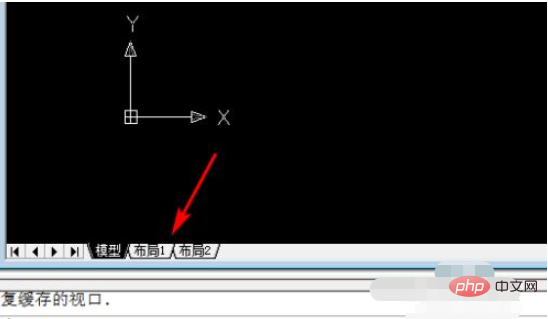

3. Click the Layout tab at the bottom, and the system will bring the graphics into the layout interface.

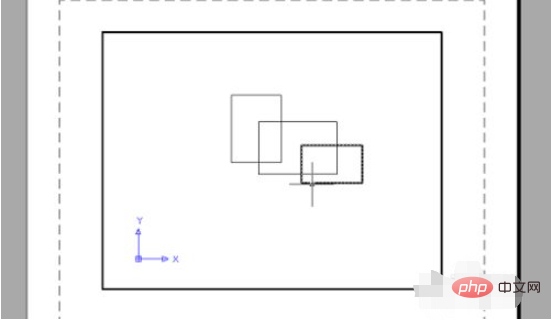

4. Double-click the blank area inside the layout to activate the view, then adjust the size and position of the graphics.

5. Double-click the blank area outside the layout to confirm and return to the layout sheet. Repeat the operation until the graphics are placed as you want.
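The size adjustment in step 4 comes down to simple arithmetic, and the sketch below shows one way to estimate it ahead of time. It is only an illustration: the function name `fit_to_viewport`, the 10% margin, and the sample dimensions are all assumptions rather than anything taken from AutoCAD itself. The resulting factor is the kind of value you could type into the `ZOOM` command's scale option with an `XP` suffix, which scales the view relative to paper space units.

```python
def fit_to_viewport(model_min, model_max, viewport_width, viewport_height, margin=0.9):
    """Return (scale, center) that fits the model extents inside a viewport.

    model_min / model_max: (x, y) corners of the drawing extents in model units.
    viewport_width / viewport_height: viewport size in paper units.
    margin: fraction of the viewport to fill (hypothetical default of 0.9).
    """
    model_width = model_max[0] - model_min[0]
    model_height = model_max[1] - model_min[1]
    if model_width <= 0 or model_height <= 0:
        raise ValueError("model extents must have positive width and height")

    # The tighter of the two directions limits how large the drawing can appear.
    scale = margin * min(viewport_width / model_width,
                         viewport_height / model_height)

    # Center the view on the middle of the drawing extents.
    center = ((model_min[0] + model_max[0]) / 2,
              (model_min[1] + model_max[1]) / 2)
    return scale, center


# Hypothetical example: a 4000 x 2000 drawing shown in a 400 x 250 viewport.
scale, center = fit_to_viewport((0, 0), (4000, 2000), 400, 250)
print(f"Suggested zoom factor: {scale:.4f}XP, centered on {center}")
```

In practice you would usually round the result to a standard drawing scale (for example 1:10 or 1:20) rather than use the raw factor.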

Related video recommendations: PHP programming from entry to proficiency

The above is the detailed content of How to import layout of cad model. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1374

1374

52

52

No OpenAI data required, join the list of large code models! UIUC releases StarCoder-15B-Instruct

Jun 13, 2024 pm 01:59 PM

No OpenAI data required, join the list of large code models! UIUC releases StarCoder-15B-Instruct

Jun 13, 2024 pm 01:59 PM

At the forefront of software technology, UIUC Zhang Lingming's group, together with researchers from the BigCode organization, recently announced the StarCoder2-15B-Instruct large code model. This innovative achievement achieved a significant breakthrough in code generation tasks, successfully surpassing CodeLlama-70B-Instruct and reaching the top of the code generation performance list. The unique feature of StarCoder2-15B-Instruct is its pure self-alignment strategy. The entire training process is open, transparent, and completely autonomous and controllable. The model generates thousands of instructions via StarCoder2-15B in response to fine-tuning the StarCoder-15B base model without relying on expensive manual annotation.

Yolov10: Detailed explanation, deployment and application all in one place!

Jun 07, 2024 pm 12:05 PM

Yolov10: Detailed explanation, deployment and application all in one place!

Jun 07, 2024 pm 12:05 PM

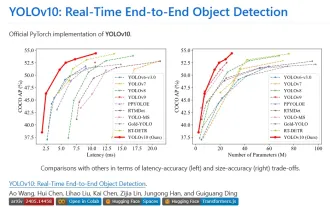

1. Introduction Over the past few years, YOLOs have become the dominant paradigm in the field of real-time object detection due to its effective balance between computational cost and detection performance. Researchers have explored YOLO's architectural design, optimization goals, data expansion strategies, etc., and have made significant progress. At the same time, relying on non-maximum suppression (NMS) for post-processing hinders end-to-end deployment of YOLO and adversely affects inference latency. In YOLOs, the design of various components lacks comprehensive and thorough inspection, resulting in significant computational redundancy and limiting the capabilities of the model. It offers suboptimal efficiency, and relatively large potential for performance improvement. In this work, the goal is to further improve the performance efficiency boundary of YOLO from both post-processing and model architecture. to this end

Tsinghua University took over and YOLOv10 came out: the performance was greatly improved and it was on the GitHub hot list

Jun 06, 2024 pm 12:20 PM

Tsinghua University took over and YOLOv10 came out: the performance was greatly improved and it was on the GitHub hot list

Jun 06, 2024 pm 12:20 PM

The benchmark YOLO series of target detection systems has once again received a major upgrade. Since the release of YOLOv9 in February this year, the baton of the YOLO (YouOnlyLookOnce) series has been passed to the hands of researchers at Tsinghua University. Last weekend, the news of the launch of YOLOv10 attracted the attention of the AI community. It is considered a breakthrough framework in the field of computer vision and is known for its real-time end-to-end object detection capabilities, continuing the legacy of the YOLO series by providing a powerful solution that combines efficiency and accuracy. Paper address: https://arxiv.org/pdf/2405.14458 Project address: https://github.com/THU-MIG/yo

binance official website URL Binance official website entrance latest genuine entrance

Dec 16, 2024 pm 06:15 PM

binance official website URL Binance official website entrance latest genuine entrance

Dec 16, 2024 pm 06:15 PM

This article focuses on the latest genuine entrances to Binance’s official website, including Binance Global’s official website, the US official website and the Academy’s official website. In addition, the article also provides detailed access steps, including using a trusted device, entering the correct URL, double-checking the website interface, verifying the website certificate, contacting customer support, etc., to ensure safe and reliable access to the Binance platform.

Google Gemini 1.5 technical report: Easily prove Mathematical Olympiad questions, the Flash version is 5 times faster than GPT-4 Turbo

Jun 13, 2024 pm 01:52 PM

Google Gemini 1.5 technical report: Easily prove Mathematical Olympiad questions, the Flash version is 5 times faster than GPT-4 Turbo

Jun 13, 2024 pm 01:52 PM

In February this year, Google launched the multi-modal large model Gemini 1.5, which greatly improved performance and speed through engineering and infrastructure optimization, MoE architecture and other strategies. With longer context, stronger reasoning capabilities, and better handling of cross-modal content. This Friday, Google DeepMind officially released the technical report of Gemini 1.5, which covers the Flash version and other recent upgrades. The document is 153 pages long. Technical report link: https://storage.googleapis.com/deepmind-media/gemini/gemini_v1_5_report.pdf In this report, Google introduces Gemini1

Review! Comprehensively summarize the important role of basic models in promoting autonomous driving

Jun 11, 2024 pm 05:29 PM

Review! Comprehensively summarize the important role of basic models in promoting autonomous driving

Jun 11, 2024 pm 05:29 PM

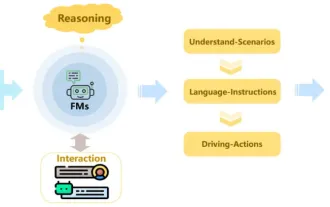

Written above & the author’s personal understanding: Recently, with the development and breakthroughs of deep learning technology, large-scale foundation models (Foundation Models) have achieved significant results in the fields of natural language processing and computer vision. The application of basic models in autonomous driving also has great development prospects, which can improve the understanding and reasoning of scenarios. Through pre-training on rich language and visual data, the basic model can understand and interpret various elements in autonomous driving scenarios and perform reasoning, providing language and action commands for driving decision-making and planning. The base model can be data augmented with an understanding of the driving scenario to provide those rare feasible features in long-tail distributions that are unlikely to be encountered during routine driving and data collection.

Do different data sets have different scaling laws? And you can predict it with a compression algorithm

Jun 07, 2024 pm 05:51 PM

Do different data sets have different scaling laws? And you can predict it with a compression algorithm

Jun 07, 2024 pm 05:51 PM

Generally speaking, the more calculations it takes to train a neural network, the better its performance. When scaling up a calculation, a decision must be made: increase the number of model parameters or increase the size of the data set—two factors that must be weighed within a fixed computational budget. The advantage of increasing the number of model parameters is that it can improve the complexity and expression ability of the model, thereby better fitting the training data. However, too many parameters can lead to overfitting, making the model perform poorly on unseen data. On the other hand, expanding the data set size can improve the generalization ability of the model and reduce overfitting problems. Let us tell you: As long as you allocate parameters and data appropriately, you can maximize performance within a fixed computing budget. Many previous studies have explored Scalingl of neural language models.

The shelling scandal makes the director of Stanford AI Lab angry! Two members of the plagiarism team took the blame and one person disappeared, and his criminal record was exposed. Netizens: Re-understand China's open source model

Jun 09, 2024 am 09:38 AM

The shelling scandal makes the director of Stanford AI Lab angry! Two members of the plagiarism team took the blame and one person disappeared, and his criminal record was exposed. Netizens: Re-understand China's open source model

Jun 09, 2024 am 09:38 AM

The incident of the Stanford team plagiarizing a large model from Tsinghua University came later - the Llama3-V team admitted plagiarism, and two of the undergraduates from Stanford even cut themselves off from another author. The latest apology tweets were sent by SiddharthSharma and AkshGarg. Not among them, Mustafa Aljadery (Lao Mu for short) from the University of Southern California is accused of being the main fault party, and he has been missing since yesterday: We hope that Lao Mu will make the first statement, but we have been unable to contact him since yesterday. Siddharth, myself (Akshi) and Lao Mu released Llama3-V together, and Lao Mu wrote the code for the project. Siddharth and my role is to help him get started on Medium and T