Detailed explanation of Python's urllib crawler, request module and parse module


Contents

- urllib

- request module

- Access URL

- Request class

- Other classes

- parse module

- Parse URL

- Escape URL

- robots.txt file


urllib

urllib is Python's toolkit for working with URLs; its source code is located under /Lib/urllib/. It contains several modules: request, for opening, reading and writing URLs; error, which defines the exceptions raised by request; parse, for parsing URLs; response, for response handling; and robotparser, for parsing robots.txt files.

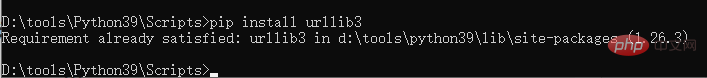

Note the version differences. There are three variants of urllib: Python 2.X includes the urllib and urllib2 modules; Python 3.X merges urllib, urllib2 and urlparse into the urllib package; and urllib3 is a newer third-party package. If you encounter an error such as "No module named urllib2", it is almost always caused by this difference between Python versions. urllib3 can be installed with pip:

pip install urllib3

You can also download the latest code from GitHub and install it:

git clone https://github.com/urllib3/urllib3.git

cd urllib3 && pip install .

urllib3 reference documentation: https://urllib3.readthedocs.io/en/latest/

request module

The urllib.request module defines functions and classes for opening URLs, with support for authentication, redirection, cookies and more.

It is also worth briefly introducing the third-party Requests package, a high-level HTTP client interface with better fault tolerance than the urllib module. Requests is built on urllib3, which inherits the features of urllib2, and it supports HTTP keep-alive and connection pooling, cookie-based sessions, file uploads, automatic decompression, Unicode responses, HTTP(S) proxies and more. For details, see the documentation at http://requests.readthedocs.io. The rest of this section covers the common functions and classes of the urllib.request module.

Access URL

1. urlopen()

urllib.request.urlopen(url, data=None, [timeout, ]*, cafile=None, capath=None, cadefault=False, context=None)

This function fetches the data at a URL and is one of the most important functions in the module. Of the parameters shown above, all except url (a string or a Request object) have default values. The cafile, capath and cadefault parameters are deprecated in favor of context.

①URL parameter

```python
from urllib import request

with request.urlopen("http://www.baidu.com") as f:
    print(f.status)
    print(f.getheaders())

# Output:
# 200
# [('Bdpagetype', '1'), ('Bdqid', '0x8583c98f0000787e'), ('Cache-Control', 'private'), ('Content-Type', 'text/html;charset=utf-8'), ('Date', 'Fri, 19 Mar 2021 08:26:03 GMT'), ('Expires', 'Fri, 19 Mar 2021 08:25:27 GMT'), ('P3p', 'CP=" OTI DSP COR IVA OUR IND COM "'), ('P3p', 'CP=" OTI DSP COR IVA OUR IND COM "'), ('Server', 'BWS/1.1'), ('Set-Cookie', 'BAIDUID=B050D0981EE3A706D726852655C9FA21:FG=1; expires=Thu, 31-Dec-37 23:55:55 GMT; max-age=2147483647; path=/; domain=.baidu.com'), ('Set-Cookie', 'BIDUPSID=B050D0981EE3A706D726852655C9FA21; expires=Thu, 31-Dec-37 23:55:55 GMT; max-age=2147483647; path=/; domain=.baidu.com'), ('Set-Cookie', 'PSTM=1616142363; expires=Thu, 31-Dec-37 23:55:55 GMT; max-age=2147483647; path=/; domain=.baidu.com'), ('Set-Cookie', 'BAIDUID=B050D0981EE3A706FA20DF440C89F27F:FG=1; max-age=31536000; expires=Sat, 19-Mar-22 08:26:03 GMT; domain=.baidu.com; path=/; version=1; comment=bd'), ('Set-Cookie', 'BDSVRTM=0; path=/'), ('Set-Cookie', 'BD_HOME=1; path=/'), ('Set-Cookie', 'H_PS_PSSID=33272_33710_33690_33594_33600_33624_33714_33265; path=/; domain=.baidu.com'), ('Traceid', '161614236308368819309620754845011048574'), ('Vary', 'Accept-Encoding'), ('Vary', 'Accept-Encoding'), ('X-Ua-Compatible', 'IE=Edge,chrome=1'), ('Connection', 'close'), ('Transfer-Encoding', 'chunked')]
```

②data parameter

byes

object with data, otherwise it is None. After Python 3.2 it can be an iterable object. If so, the Content-Length parameter must be included inheaders

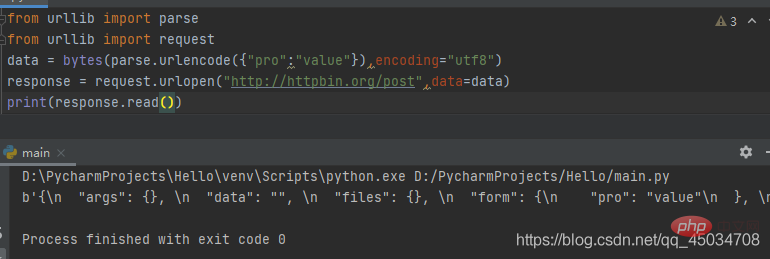

. When the HTTP request uses the POST method, data must have data; when using the GET method, data can be written as None. <div class="code" style="position:relative; padding:0px; margin:0px;"><pre class="brush:php;toolbar:false">from urllib import parsefrom urllib import request

data = bytes(parse.urlencode({"pro":"value"}),encoding="utf8")response = request.urlopen("http://httpbin.org/post",data=data)print(response.read())#运行结果如下b'{\n "args": {}, \n "data": "", \n "files": {}, \n "form": {\n "pro": "value"\n }, \n "headers": {\n "Accept-Encoding": "identity", \n "Content-Length": "9", \n "Content-Type": "application/x-www-form-urlencoded", \n "Host": "httpbin.org", \n "User-Agent": "Python-urllib/3.9", \n "X-Amzn-Trace-Id": "Root=1-60545f5e-7428b29435ce744004d98afa"\n }, \n "json": null, \n "origin": "112.48.80.243", \n "url": "http://httpbin.org/post"\n}\n'</pre><div class="contentsignin">Copy after login</div></div> To make a POST request for data, you need to transcode the

type or the iterable type. Here, byte conversion is performed through bytes(). Considering that the first parameter is a string, it is necessary to use the urlencode() method of the parse module (discussed below) to upload the The data is converted into a string, and the encoding format is specified as utf8. The test website httpbin.org can provide HTTP testing. From the returned content, we can see that the submission uses form as an attribute and a dictionary as an attribute value. ③timeout parameter This parameter is optional. Specify a timeout in seconds. If this time is exceeded, any operation will be blocked. If not specified, the default will be sock. The value corresponding to .GLOBAL_DEFAULT_TIMEOUT

When the timeout expires, an exception is raised, usually urllib.error.URLError (a timeout while reading the response may surface directly as socket.timeout instead).

```python
from urllib import request

response = request.urlopen("http://httpbin.org/get", timeout=1)
print(response.read())

# Output:
# b'{\n "args": {}, \n "headers": {\n "Accept-Encoding": "identity", \n "Host": "httpbin.org", \n "User-Agent": "Python-urllib/3.9", \n "X-Amzn-Trace-Id": "Root=1-605469dd-76a6d963171127c213d9a9ab"\n }, \n "origin": "112.48.80.243", \n "url": "http://httpbin.org/get"\n}\n'
```

④Common methods and attributes of the returned object

Besides the parameters above, note that urlopen() returns a file-like object that can be used as a context manager. It provides the following methods and attributes:

- geturl(): returns the URL of the resource actually retrieved, so the final URL can still be obtained after a redirect

- info(): returns the page's metadata, such as the response headers

- getcode(): returns the HTTP status code of the response

- status attribute: the HTTP status code of the response

- msg attribute: the reason phrase of the response, such as OK

from urllib import request response = request.urlopen("http://httpbin.org/get")print(response.geturl())print("===========")print(response.info())print("===========")print(response.getcode())print("===========")print(response.status)print("===========")print(response.msg)Copy after loginRun result:

- 1xx(informational):请求已经收到,正在进行中。

- 2xx(successful):请求成功接收,解析,完成。

- 3xx(Redirection):需要重定向。

- 4xx(Client Error):客户端问题,请求存在语法错误,网址未找到。

- 5xx(Server Error):服务器问题。

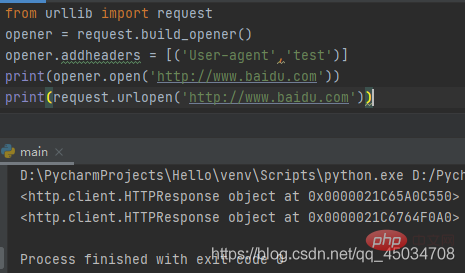
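To tie the timeout parameter and the status codes together, here is a minimal sketch that runs entirely against a throwaway local server. The DemoHandler class, its /ok and /slow paths and the fetch() helper are hypothetical names invented for this example. It relies on the fact that, by default, urlopen() does not return 4xx/5xx responses but raises urllib.error.HTTPError, whose code and reason attributes carry the status. The opener is built with ProxyHandler({}) so that proxy environment variables cannot intercept the local requests; its open() method behaves like urlopen().

```python
import socket
import threading
import time
from http import HTTPStatus
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer
from urllib import request
from urllib.error import HTTPError, URLError

# Bypass proxies from the environment so requests reach the local server directly.
opener = request.build_opener(request.ProxyHandler({}))


class DemoHandler(BaseHTTPRequestHandler):
    """Hypothetical test server: /ok -> 200, /slow -> 200 after 1 s, else 404."""

    def do_GET(self):
        if self.path == "/slow":
            time.sleep(1.0)  # longer than the client timeout used below
        code = 200 if self.path in ("/ok", "/slow") else 404
        try:
            self.send_response(code)
            self.end_headers()
            self.wfile.write(HTTPStatus(code).phrase.encode())
        except OSError:
            pass  # the client may already have timed out and closed the socket

    def log_message(self, *args):
        pass  # silence per-request logging


def fetch(url, timeout=5):
    """Return (status, reason) for a response, or (None, error text) on failure."""
    try:
        with opener.open(url, timeout=timeout) as resp:
            return resp.status, resp.msg
    except HTTPError as err:  # 4xx/5xx responses are raised, not returned
        return err.code, err.reason
    except URLError as err:  # e.g. connection refused or a connect timeout
        return None, str(err.reason)
    except socket.timeout:  # reading the response timed out (TimeoutError on 3.10+)
        return None, "timed out"


server = ThreadingHTTPServer(("127.0.0.1", 0), DemoHandler)
threading.Thread(target=server.serve_forever, daemon=True).start()
base = "http://127.0.0.1:%d" % server.server_address[1]

ok = fetch(base + "/ok")
missing = fetch(base + "/missing")
slow = fetch(base + "/slow", timeout=0.2)
print(ok, missing, slow)

server.shutdown()
server.server_close()
```

Here ok should be (200, 'OK') and missing (404, 'Not Found'), while slow comes back with None as its status because the server sleeps longer than the 0.2-second timeout.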

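2. build_opener()

urllib.request.build_opener([handler, ...])

urlopen() does not support authentication, cookies or other advanced HTTP features on its own. To use them, you must create a custom OpenerDirector object, called an Opener, with build_opener().

build_opener() returns an OpenerDirector instance that chains the handlers in the order given. As the definition of the OpenerDirector class shows, it has attributes and methods such as addheaders, handlers, handle_open, add_handler(), open() and close(). The open() method does the same job as the urlopen() function.

By modifying the HTTP headers of such an opener, you can use advanced HTTP features and then send the request with the returned object's open() method; the result is the same as with urlopen(), apart from a different memory location.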
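A minimal sketch of a customized Opener, assuming you want cookie handling plus a custom User-Agent; the User-Agent value is a made-up example and no request is actually sent:

```python
from urllib import request

# HTTPCookieProcessor stores cookies from responses and sends them back later.
opener = request.build_opener(request.HTTPCookieProcessor())

# addheaders replaces the default [("User-agent", "Python-urllib/3.x")] list.
opener.addheaders = [("User-Agent", "my-crawler/0.1")]  # hypothetical UA string

# build_opener() also adds the default handlers (HTTPHandler, HTTPRedirectHandler, ...).
handler_names = sorted(type(h).__name__ for h in opener.handlers)
print(handler_names)

# opener.open("http://httpbin.org/headers") would send the custom User-Agent,
# reuse any cookies the server sets, and return the same kind of object as urlopen().
```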

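In fact, urllib.request.urlopen() simply uses an Opener: if no global opener has been installed, it builds a default one, and the call ends up in OpenerDirector.open(). So how do you set the default global opener? That is what the install_opener() function below is for.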

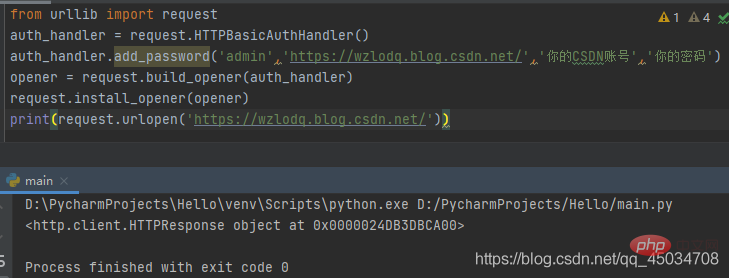

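3. install_opener()

urllib.request.install_opener(opener)

Installs an OpenerDirector instance as the default global opener.

First import the request module and instantiate an HTTPBasicAuthHandler, then create an authentication handler by adding a username and password with add_password(). Pass this handler to urllib.request.build_opener() to build an Opener and install it as the default global opener, so that requests made through it can authenticate. Finally, open the link with the Opener's open() method to complete authentication.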

Of course, CSDN can be accessed without an account and password; readers can try this on other sites with their own accounts.
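The steps described above can be sketched as follows. The URL, username and password are hypothetical placeholders, and instead of calling add_password() on the handler itself, this version passes an HTTPPasswordMgrWithDefaultRealm to HTTPBasicAuthHandler so the realm name does not need to be known in advance (realm=None):

```python
from urllib import request

# Hypothetical protected URL and credentials, for illustration only.
url = "http://www.example.com/protected/"
password_mgr = request.HTTPPasswordMgrWithDefaultRealm()
# realm=None makes these credentials apply to any realm under this URL.
password_mgr.add_password(None, url, "user", "secret")

auth_handler = request.HTTPBasicAuthHandler(password_mgr)
opener = request.build_opener(auth_handler)
request.install_opener(opener)  # request.urlopen() now goes through this opener

# request.urlopen(url) would answer a 401 Basic challenge with the stored credentials.
print(password_mgr.find_user_password("Any Realm", url))  # ('user', 'secret')
```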

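Besides the functions above, the module also provides pathname2url(path), which converts a local path to a URL path; url2pathname(path), which converts a URL path to a local path; and getproxies(), which returns a dictionary mapping schemes to proxy server URLs.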
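A quick sketch of these helpers, assuming a POSIX-style relative path (the file name is hypothetical; on Windows the conversions use backslashes and drive letters, so the printed paths differ):

```python
from urllib.request import getproxies, pathname2url, url2pathname

# Hypothetical relative file path containing a space.
path = "docs/my notes.txt"

url_path = pathname2url(path)  # unsafe characters are %-escaped
back = url2pathname(url_path)  # reverses the conversion
print(url_path)  # docs/my%20notes.txt on POSIX systems
print(back)      # docs/my notes.txt on POSIX systems

# Scheme -> proxy URL mapping from *_proxy environment variables
# (and system settings on Windows and macOS); often just {}.
print(getproxies())
```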

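Request class

The urlopen() method introduced above is enough for basic URL requests. If you need to add headers, consider the more powerful Request class. Request is an abstraction of a URL request; it accepts many parameters and defines a set of attributes and methods.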

1. Definition

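class urllib.request.Request(url, data=None, headers={}, origin_req_host=None, unverifiable=False, method=None)

- url is a string containing a valid URL, as in urlopen(); the same goes for data.

- headers is a dictionary; headers can also be added as key/value pairs with add_header(). It is commonly used to change the User-Agent header when crawling or making web requests.

- origin_req_host is the host of the original request; for example, if the request is for an image in an HTML document, it is the host of the page containing the image.

- unverifiable indicates whether the request is unverifiable, that is, whether the user had no option to approve the URL being fetched.

- method is a string naming the HTTP request method; common values are GET, POST, PUT and DELETE.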

Code example:

from urllib import requestfrom urllib import parse

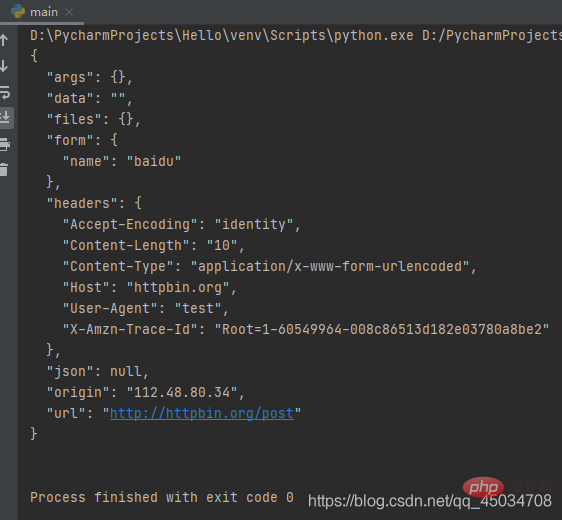

data = parse.urlencode({"name":"baidu"}).encode('utf-8')headers = {'User-Agent':'wzlodq'}req = request.Request(url="http://httpbin.org/post",data=data,headers=headers,method="POST")response = request.urlopen(req)print(response.read())#运行结果如下b'{\n "args": {}, \n "data": "", \n "files": {}, \n "form": {\n "name": "baidu"\n }, \n "headers": {\n "Accept-Encoding": "identity", \n "Content-Length": "10", \n "Content-Type": "application/x-www-form-urlencoded", \n "Host": "httpbin.org", \n "User-Agent": "wzlodq", \n "X-Amzn-Trace-Id": "Root=1-605491a4-1fcf3df01a8b3c3e22b5edce"\n }, \n "json": null, \n "origin": "112.48.80.34", \n "url": "http://httpbin.org/post"\n}\n'注意data参数和前面一样需是字节流类型的,不同的是调用Request类进行请求。
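Building on the note above, the HTTP method a Request uses depends on data and method. This sketch only constructs Request objects (the httpbin.org URLs are never contacted) to show the defaults:

```python
from urllib import parse, request

data = parse.urlencode({"name": "baidu"}).encode("utf-8")

# No data: GET. Data without method: POST. An explicit method always wins.
get_req = request.Request("http://httpbin.org/get")
post_req = request.Request("http://httpbin.org/post", data=data)
put_req = request.Request("http://httpbin.org/put", data=data, method="PUT")

for req in (get_req, post_req, put_req):
    print(req.get_method(), req.full_url, req.data)
# GET http://httpbin.org/get None
# POST http://httpbin.org/post b'name=baidu'
# PUT http://httpbin.org/put b'name=baidu'
```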

2. Attributes and methods

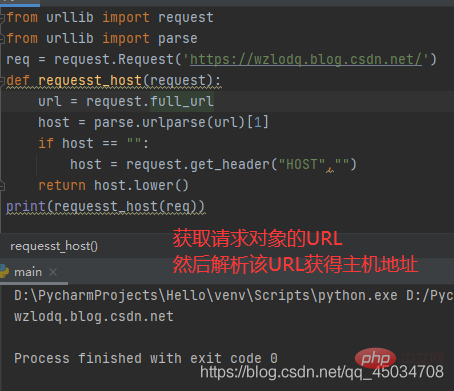

①Request.full_url

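full_url is a property with a setter, getter and deleter. If the original request URL contained a fragment, full_url returns the URL including that fragment. It is implemented with the @property decorator and returns the original URL passed to the constructor.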

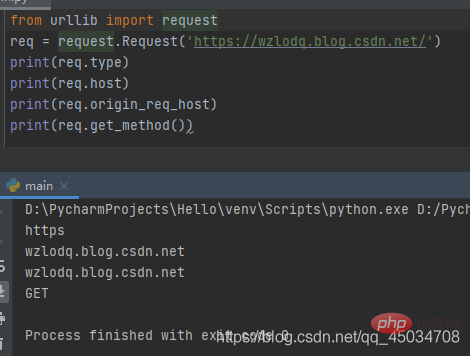

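②Request.type: the scheme (protocol type) of the request, such as http.

③Request.host: the URL host, possibly including a port.

④Request.origin_req_host: the original host of the request, without the port.

⑤Request.get_method(): returns a string naming the HTTP request method.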

(Author's CSDN blog: https://wzlodq.blog.csdn.net/)

⑥Request.add_header(key, val): adds a header to the request.

```python
from urllib import request
from urllib import parse

data = bytes(parse.urlencode({'name': 'baidu'}), encoding='utf-8')
req = request.Request('http://httpbin.org/post', data, method='POST')
req.add_header('User-agent', 'test')
response = request.urlopen(req)
print(response.read().decode('utf-8'))
```

In the code above, the User-Agent is passed in through add_header(). In crawlers, this method is often called in a loop to send requests with different User-Agent values, so that the server does not block requests by singling out one User-Agent.
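The attributes and methods listed above can be checked without sending anything. This sketch uses a hypothetical URL with a port and a fragment, then rotates through a made-up list of User-Agent strings as described above. Note that add_header() stores the key capitalized as User-agent, so get_header() must use that spelling.

```python
import itertools
from urllib import request

req = request.Request("http://www.example.com:8080/search?q=urllib#results")
print(req.full_url)         # http://www.example.com:8080/search?q=urllib#results
print(req.type)             # http
print(req.host)             # www.example.com:8080
print(req.origin_req_host)  # www.example.com
print(req.get_method())     # GET

# Hypothetical pool of User-Agent strings, rotated with add_header().
user_agents = itertools.cycle([
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
    "Mozilla/5.0 (X11; Linux x86_64)",
])
prepared = []
for page in range(1, 4):
    r = request.Request("http://httpbin.org/get?page=%d" % page)
    r.add_header("User-Agent", next(user_agents))  # stored under "User-agent"
    prepared.append(r)  # request.urlopen(r) would send it

for r in prepared:
    print(r.full_url, r.get_header("User-agent"))
```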

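Other classes

BaseHandler is the base class for all registered handlers and only implements the simple mechanics of registration. As its definition shows, BaseHandler provides an add_parent() method that attaches the handler to its parent OpenerDirector; the classes below all inherit from it.

- HTTPErrorProcessor: processes HTTP error responses.

- HTTPDefaultErrorHandler: handles HTTP error responses (by default, by raising HTTPError).

- ProxyHandler: sets up proxies.

- HTTPRedirectHandler: handles redirects.

- HTTPCookieProcessor: handles cookies.

- HTTPBasicAuthHandler: handles authentication.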
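A minimal sketch of configuring one of these handlers, ProxyHandler, and confirming that the listed classes derive from BaseHandler. The proxy address 127.0.0.1:8080 is a placeholder rather than a real proxy, and nothing is sent:

```python
from urllib import request

# Placeholder proxy address; replace it with a real proxy before sending requests.
proxy_handler = request.ProxyHandler({
    "http": "http://127.0.0.1:8080",
    "https": "http://127.0.0.1:8080",
})
proxied_opener = request.build_opener(proxy_handler)

# An empty dict disables proxies picked up from the environment.
direct_opener = request.build_opener(request.ProxyHandler({}))

# Every handler class listed above derives from BaseHandler.
handler_classes = (request.HTTPErrorProcessor, request.HTTPDefaultErrorHandler,
                   request.ProxyHandler, request.HTTPRedirectHandler,
                   request.HTTPCookieProcessor, request.HTTPBasicAuthHandler)
for cls in handler_classes:
    print(cls.__name__, issubclass(cls, request.BaseHandler))
```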

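parse module

The parse module splits a URL string into its components (addressing scheme, network location, path and so on), can combine these components back into a URL string, and can convert a "relative URL" into an absolute one given a base URL.

Parse URL

1. urllib.parse.urlparse(urlstring, scheme='', allow_fragments=True)

Parses a URL into 6 components and returns a 6-tuple (an instance of a tuple subclass) with the attributes listed below: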

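| Attribute | Description | Index | Value if not present |
|---|---|---|---|
| scheme | URL scheme specifier | 0 | scheme parameter |
| netloc | Network location part | 1 | empty string |
| path | Hierarchical path | 2 | empty string |
| params | Parameters for the last path element | 3 | empty string |
| query | Query component | 4 | empty string |
| fragment | Fragment identifier | 5 | empty string |
| username | User name | - | None |
| password | Password | - | None |
| hostname | Host name | - | None |
| port | Port number | - | None |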

The resulting URL structure is scheme://netloc/path;parameters?query#fragment

For example:

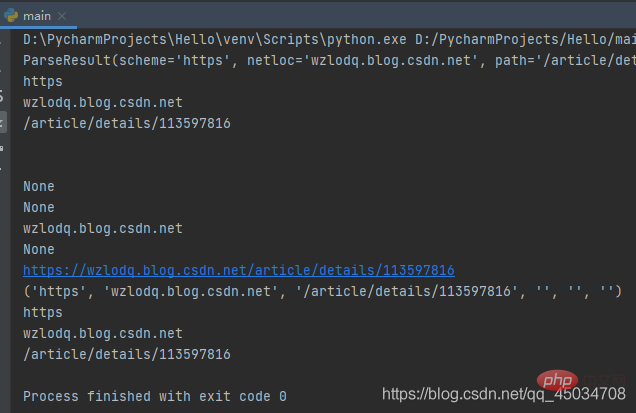

```python
from urllib.parse import urlparse

res = urlparse('https://wzlodq.blog.csdn.net/article/details/113597816')
print(res)
print(res.scheme)
print(res.netloc)
print(res.path)
print(res.params)
print(res.query)
print(res.username)
print(res.password)
print(res.hostname)
print(res.port)
print(res.geturl())
print(tuple(res))
print(res[0])
print(res[1])
print(res[2])
```

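Note that urlparse() does not always recognize netloc: it only treats a component as netloc when it is introduced by //. Otherwise the input is assumed to be a relative URL that starts with a path component, so the value ends up in path.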

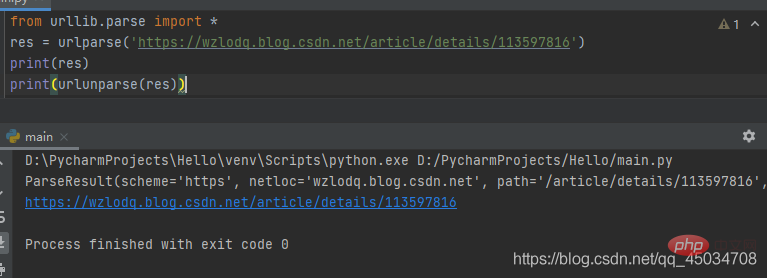
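The netloc caveat is easy to see by parsing the same address with and without a leading // and a scheme:

```python
from urllib.parse import urlparse

no_slashes = urlparse("wzlodq.blog.csdn.net/article/details/113597816")
with_slashes = urlparse("//wzlodq.blog.csdn.net/article/details/113597816")
with_scheme = urlparse("https://wzlodq.blog.csdn.net/article/details/113597816")

for res in (no_slashes, with_slashes, with_scheme):
    print(repr(res.scheme), repr(res.netloc), repr(res.path))
# '' '' 'wzlodq.blog.csdn.net/article/details/113597816'
# '' 'wzlodq.blog.csdn.net' '/article/details/113597816'
# 'https' 'wzlodq.blog.csdn.net' '/article/details/113597816'
```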

2. urllib.parse.urlunparse(parts)

The inverse of urlparse(): constructs a URL from a tuple such as the one returned by urlparse().
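A short round-trip sketch, using a made-up URL that includes a params component:

```python
from urllib.parse import urlparse, urlunparse

parts = ("http", "www.example.com", "/path", "type=a", "k=v", "top")
url = urlunparse(parts)
print(url)  # http://www.example.com/path;type=a?k=v#top

# Parsing the result gives back the same six components.
print(tuple(urlparse(url)) == parts)  # True
```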

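3. urllib.parse.urlsplit(urlstring, scheme='', allow_fragments=True)

Similar to urlparse(), but it does not split out params: the returned object has no params element and is a 5-tuple, so the indices of the later elements change accordingly.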

```python
from urllib.parse import urlsplit

sp = urlsplit('https://wzlodq.blog.csdn.net/article/details/113597816')
print(sp)

# Output:
# SplitResult(scheme='https', netloc='wzlodq.blog.csdn.net', path='/article/details/113597816', query='', fragment='')
```

4. urllib.parse.urlunsplit(parts)

Similar to urlunparse(); it is the inverse of urlsplit() and is not described further here.

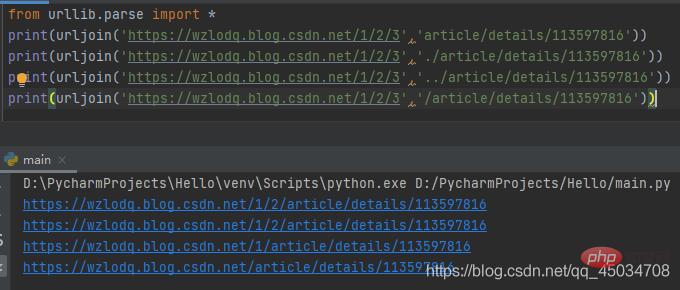
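The difference from urlparse() shows up on URLs with ;params, and urlunsplit() reverses urlsplit(). The URL below is a made-up example:

```python
from urllib.parse import urlparse, urlsplit, urlunsplit

url = "http://www.example.com/path;type=a?k=v#top"

parsed = urlparse(url)
split = urlsplit(url)
print(parsed.path, parsed.params)  # /path type=a
print(split.path)                  # /path;type=a  (no separate params field)
print(len(parsed), len(split))     # 6 5

print(urlunsplit(split) == url)    # True
```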

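5. urllib.parse.urljoin(base, url, allow_fragments=True)

This function combines a base URL (base) with another URL (url) to build a new absolute URL.

Relative and absolute URLs are combined differently, and a relative path replaces the last component of the base path: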

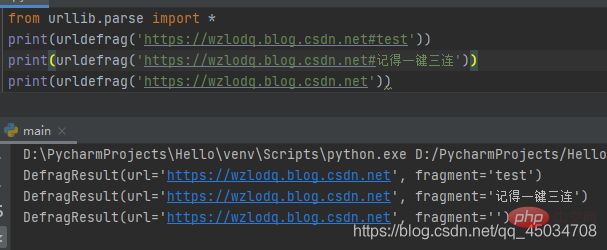
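A few combinations against one hypothetical base URL illustrate these rules:

```python
from urllib.parse import urljoin

base = "http://www.example.com/docs/guide/intro.html"

print(urljoin(base, "setup.html"))          # http://www.example.com/docs/guide/setup.html
print(urljoin(base, "../api.html"))         # http://www.example.com/docs/api.html
print(urljoin(base, "/index.html"))         # http://www.example.com/index.html
print(urljoin(base, "https://other.org/x")) # https://other.org/x
print(urljoin(base, "#faq"))                # http://www.example.com/docs/guide/intro.html#faq
```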

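6. urllib.parse.urldefrag(url)

Splits url at the fragment identifier: if url contains one, it returns the URL before the fragment identifier, with fragment set to the value after it. If url has no fragment identifier, fragment is an empty string.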
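For example, with a hypothetical URL:

```python
from urllib.parse import urldefrag

res = urldefrag("http://www.example.com/page.html#section-2")
print(res.url)       # http://www.example.com/page.html
print(res.fragment)  # section-2

plain = urldefrag("http://www.example.com/page.html")
print(repr(plain.fragment))  # '' (no fragment identifier)
```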

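Escape URL

Escaping (quoting) a URL prevents certain characters from causing ambiguity: special characters are quoted and non-ASCII text is encoded appropriately so that it can be used safely as a URL component. The reverse operations are also supported, to recreate the original data from the contents of a URL component.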

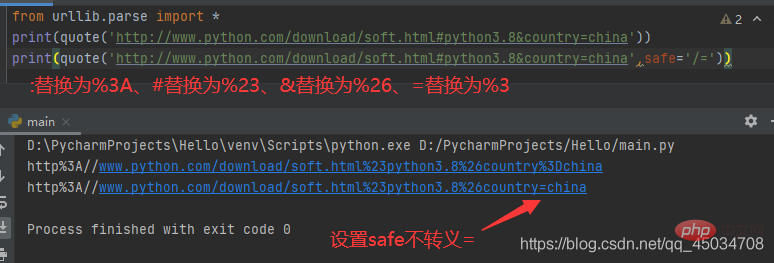

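1. urllib.parse.quote(string, safe='/', encoding=None, errors=None)

Replaces special characters in string with %xx escapes. Letters, digits and the characters '_.-~' are never quoted. By default this function is intended for quoting the path section of a URL; the optional safe parameter specifies additional ASCII characters that should not be quoted, and its default value is '/'.

Note in particular that if string is bytes, encoding and errors must not be specified; otherwise a TypeError is raised.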

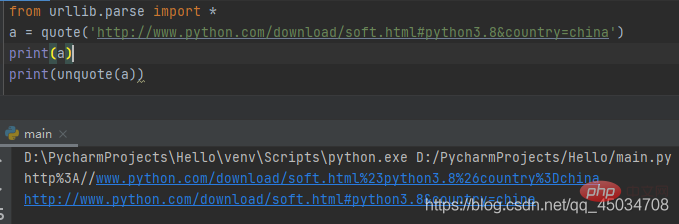
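A few calls illustrating the safe parameter, the never-quoted characters, UTF-8 encoding of non-ASCII text, and the bytes restriction described above:

```python
from urllib.parse import quote

print(quote("/search/python urllib"))           # /search/python%20urllib
print(quote("/search/python urllib", safe=""))  # %2Fsearch%2Fpython%20urllib
print(quote("v1.0_beta-2~rc"))                  # v1.0_beta-2~rc (nothing to quote)
print(quote("中文"))                            # %E4%B8%AD%E6%96%87 (UTF-8 by default)

raised = False
try:
    quote(b"bytes", encoding="utf-8")  # bytes input cannot take encoding/errors
except TypeError as exc:
    raised = True
    print("TypeError:", exc)
```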

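2. urllib.parse.unquote(string, encoding='utf-8', errors='replace')

This function is the inverse of quote(): it replaces %xx escapes with their single-character equivalents. The encoding and errors parameters specify how percent-encoded sequences are decoded into Unicode characters, as accepted by the bytes.decode() method.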

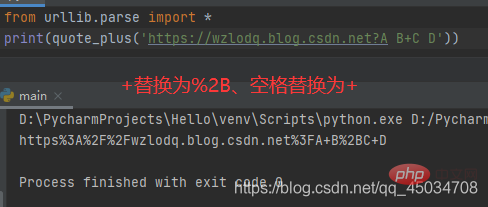
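A sketch of unquote(), including a non-default encoding (GBK) and a quote()/unquote() round trip; note that unquote() leaves + signs alone:

```python
from urllib.parse import quote, unquote

print(unquote("%E4%B8%AD%E6%96%87"))            # 中文
print(unquote("%D6%D0%CE%C4", encoding="gbk"))  # 中文 (GBK-encoded bytes)
print(unquote("a+b%20c"))                       # a+b c  (+ is not treated as a space)

original = "docs/笔记 2021.txt"
print(unquote(quote(original)) == original)     # True
```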

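3. urllib.parse.quote_plus(string, safe='', encoding=None, errors=None)

This function is an enhanced version of quote(): it also replaces spaces with + signs, as required when quoting HTML form values, and a + already present in the original string is escaped. Unlike quote(), safe defaults to '', so / is escaped as well.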

4. urllib.parse.unquote_plus(string, encoding='utf-8', errors='replace')

Like unquote(), but also replaces + signs with spaces; it is not described further here.
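The difference between the plus variants and quote()/unquote() is easiest to see side by side:

```python
from urllib.parse import quote, quote_plus, unquote, unquote_plus

print(quote("python urllib&page=1"))       # python%20urllib%26page%3D1
print(quote_plus("python urllib&page=1"))  # python+urllib%26page%3D1
print(quote_plus("1+1=2"))                 # 1%2B1%3D2  (a literal + is escaped)
print(quote_plus("/a b"))                  # %2Fa+b  (safe defaults to '')

print(unquote("python+urllib"))                  # python+urllib
print(unquote_plus("python+urllib%26page%3D1"))  # python urllib&page=1
```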

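5. urllib.parse.urlencode(query, doseq=False, safe='', encoding=None, errors=None, quote_via=quote_plus)

As mentioned earlier, this function is typically used to encode the data sent in an HTTP POST request, and it also builds GET query strings.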
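A sketch of typical urlencode() calls, including doseq for list values and the bytes conversion needed before passing the result as urlopen() data:

```python
from urllib.parse import urlencode

params = {"name": "baidu", "q": "python urllib"}
print(urlencode(params))  # name=baidu&q=python+urllib (spaces become + via quote_plus)

# Lists become repeated keys only when doseq=True.
print(urlencode({"tag": ["a", "b"]}, doseq=True))  # tag=a&tag=b

# As in the POST example earlier, encode to bytes before passing it as data.
body = urlencode(params).encode("utf-8")
print(body)  # b'name=baidu&q=python+urllib'
```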

robots.txt文件

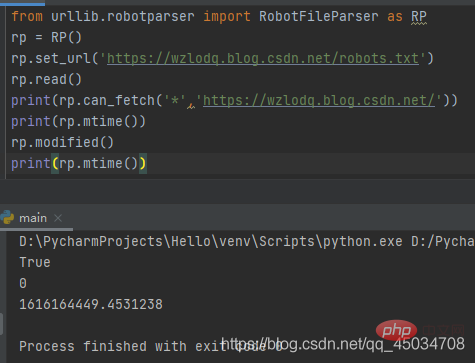

robotparser模块很简单,仅定义了3个类(RobotFileParser、RuleLine、Entry)。从__all__属性来看也就RobotFileParser一个类(用于处理有关特定用户代理是否可以发布robots.txt文件的网站上提前网址内容)。

robots文件类似一个协议文件,搜索引擎访问网站时查看的第一个文件,会告诉爬虫或者蜘蛛程序在服务器上可以查看什么文件。

RobotFileParser类有一个url参数,常用以下方法:

- set_url(): sets the URL that points to the robots.txt file.

- read(): reads the robots.txt URL and feeds it to the parser.

- parse(): parses the lines of a robots.txt file.

- can_fetch(): determines whether a given user agent is allowed to fetch a URL.

- mtime(): returns the time the robots.txt file was last fetched.

- modified(): sets the time the robots.txt file was last fetched to the current time.
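Since read() needs network access, this sketch feeds hypothetical robots.txt rules straight into parse() and then queries can_fetch(); the site URL and user-agent names are made up:

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, parsed directly instead of fetched with read().
rules = """\
User-agent: BadBot
Disallow: /

User-agent: *
Disallow: /private/
""".splitlines()

rp = RobotFileParser()
rp.set_url("http://www.example.com/robots.txt")  # where read() would fetch it from
rp.parse(rules)
rp.modified()  # record a fetch time; can_fetch() refuses everything until one is set

print(rp.can_fetch("BadBot", "http://www.example.com/index.html"))         # False
print(rp.can_fetch("MyCrawler", "http://www.example.com/index.html"))      # True
print(rp.can_fetch("MyCrawler", "http://www.example.com/private/a.html"))  # False
print(rp.mtime() > 0)                                                      # True
```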
