Understanding Redis High-Availability Clusters Step by Step

This article covers Redis clustering. Redis Cluster is a distributed database solution: the cluster shares data through sharding and provides replication and failover. I hope it helps.


Redis offers several high-availability solutions: "Master-Slave Mode", "Sentinel Mechanism" and "Sentinel Cluster".

- "Master-slave mode" separates reads from writes, spreads the read load, backs up data and keeps multiple copies of it.

- The "Sentinel Mechanism" automatically promotes a slave node to master after the master fails, so service is restored without manual intervention.

- A "Sentinel Cluster" removes the single point of failure and the "misjudgments" that a single Sentinel instance can make.

Redis has evolved from a simple standalone server to data persistence, master-slave replicas and Sentinel clusters, and each step has improved both performance and stability.

But as the company's business grows explosively, can this architecture still handle that much traffic?

For example, suppose we need Redis to store 50 million key-value pairs of about 512B each. To deploy quickly and serve external clients, we run the Redis instances on cloud hosts. How much memory should the cloud host have?

By calculation, these key-value pairs occupy about 25GB (50 million × 512B).
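Worked out explicitly in decimal units (this counts only the raw key-value bytes and ignores Redis's own per-key overhead):

```latex
5 \times 10^{7} \times 512\ \text{B} = 2.56 \times 10^{10}\ \text{B} \approx 25.6\ \text{GB} \;(\approx 23.8\ \text{GiB})
```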

The first idea is to deploy Redis on a cloud host with 32GB of memory: 32GB can hold all the data and still leaves about 7GB for the system to run normally.

We also use RDB to persist the data, so that it can be recovered from the RDB file if the Redis instance fails.

In practice, however, Redis sometimes responds very slowly. Checking the latest_fork_usec metric with the INFO command (the duration of the most recent fork, in microseconds) shows that this value is especially high.
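A minimal Python sketch for reading that metric, assuming you already have the raw text of `INFO stats` (for example as printed by `redis-cli INFO stats`). INFO replies are `field:value` lines grouped under `# Section` headers. The function names are illustrative and not part of any Redis client library, and the sample value is made up.

```python
from typing import Dict


def parse_info(text: str) -> Dict[str, str]:
    """Parse raw INFO output into a {field: value} dict.

    INFO replies consist of '# Section' header lines, blank lines,
    and 'field:value' lines (CRLF-terminated).
    """
    fields: Dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue  # skip blank lines and section headers
        key, sep, value = line.partition(":")
        if sep:
            fields[key] = value
    return fields


def latest_fork_ms(info_text: str) -> float:
    """latest_fork_usec is reported in microseconds; convert it to milliseconds."""
    return int(parse_info(info_text)["latest_fork_usec"]) / 1000.0


# Hypothetical excerpt of `INFO stats` output, for illustration only.
sample = "# Stats\r\ntotal_connections_received:10\r\nlatest_fork_usec:350000\r\n"
print(latest_fork_ms(sample))  # prints 350.0
```

A reading like 350 ms would mean that the last fork froze the main thread for that long.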

This is related to the persistence mechanism of Redis.

When persisting with RDB, Redis forks a child process to do the work. The time a fork takes grows with the amount of data in Redis, and the fork blocks the main thread while it runs, so the more data there is, the longer the main thread is blocked.

So when RDB persists 25GB of data, the fork that creates the background child process takes a long time and blocks the main thread, which slows down Redis's responses.

Obviously this solution is not feasible; we need another approach.

How to save more data?

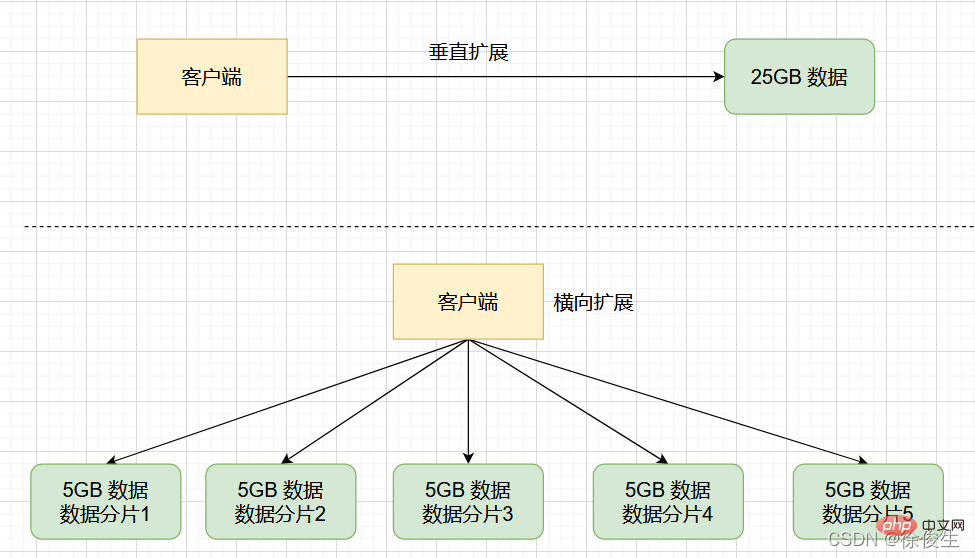

In order to save a large amount of data, we generally have two methods: "Vertical expansion" and "Horizontal expansion":

- Vertical expansion: upgrade the resources of a single Redis instance, such as adding memory, adding disk capacity or using a more powerful CPU;

- Horizontal expansion: increase the number of Redis instances.

The advantage of "vertical expansion" is that it is simple and direct to implement. However, it has two potential problems:

- First, when RDB is used for persistence, more data needs more memory, and the main thread may be blocked while it forks the child process.

- Second, vertical expansion is limited by hardware and cost. Expanding memory from 32GB to 64GB is easy, but expanding to 1TB runs into hardware capacity and cost limits.

Compared with "vertical expansion", "horizontal expansion" scales better: to store more data, you only add Redis instances, without worrying about the hardware and cost limits of a single instance.

Redis Cluster is built on "horizontal expansion". It starts multiple Redis instances to form a cluster, divides incoming data into several parts according to fixed rules, and stores each part on one instance.

Redis Cluster

Redis Cluster is a distributed database solution. The cluster shares data through sharding (also called slicing) and provides replication and failover.

Back to our scenario: if the 25GB of data is split into 5 equal parts (they need not be equal) stored on 5 instances, each instance only needs to hold 5GB. As shown below:

In such a sharded cluster, when an instance generates an RDB file for its 5GB, the data volume is much smaller, so forking the child process generally does not block the main thread for long.

Storing data slices on multiple instances lets us hold all 25GB of data while avoiding the sudden slowdowns caused by fork blocking the main thread.

In practice, as the business grows, storing large amounts of data is usually unavoidable, and Redis Cluster is a very good solution for it.

Let's look at how to build a Redis cluster.

Build a Redis cluster

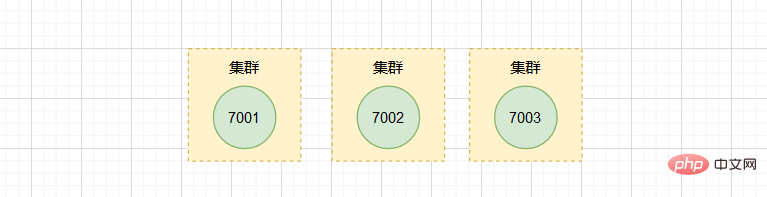

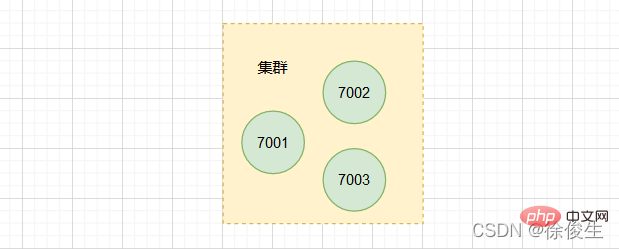

A Redis cluster usually consists of multiple nodes. Initially, each node is independent and unrelated to the others. To form a working cluster, we must connect these independent nodes into one multi-node cluster.

We can connect nodes with the CLUSTER MEET command:

CLUSTER MEET <ip> <port>

- ip: the IP of the node to add to the cluster

- port: the port of the node to add to the cluster

Command description: when node A receives CLUSTER MEET, it adds node B (identified by ip and port) to the cluster that A belongs to.

This is a bit abstract, let’s look at an example.

Suppose there are now three independent nodes 127.0.0.1:7001, 127.0.0.1:7002, 127.0.0.1:7003.

We first use the client to connect to node 7001:

$ redis-cli -c -p 7001

Then we send a command to node 7001 to add node 7002 to the cluster that 7001 belongs to:

127.0.0.1:7001> CLUSTER MEET 127.0.0.1 7002

Similarly, we add 7003 to the cluster that 7001 and 7002 belong to:

127.0.0.1:7001> CLUSTER MEET 127.0.0.1 7003

You can view the nodes in the cluster with the CLUSTER NODES command.

The cluster now contains three nodes: 7001, 7002 and 7003.

With a single instance, it is clear where data lives and where the client should go to access it. A sharded cluster, however, inevitably raises the problem of managing data across multiple instances:

- How to distribute data among multiple instances after slicing?

- How does the client determine which instance the data it wants to access is on?

The answers lie in the Redis Cluster solution discussed next. But first we need to understand how a sharded cluster relates to, and differs from, Redis Cluster.

In fact, a sharded cluster is a general mechanism for storing large amounts of data, and it can be implemented in different ways. Before Redis 3.0, there was no official solution for sharded clusters. Starting with 3.0, Redis officially provides a solution called Redis Cluster for implementing sharded clusters.

The Redis Cluster solution defines the rules that map data to instances.

Redis Cluster uses hash slots to handle the mapping between data and instances.

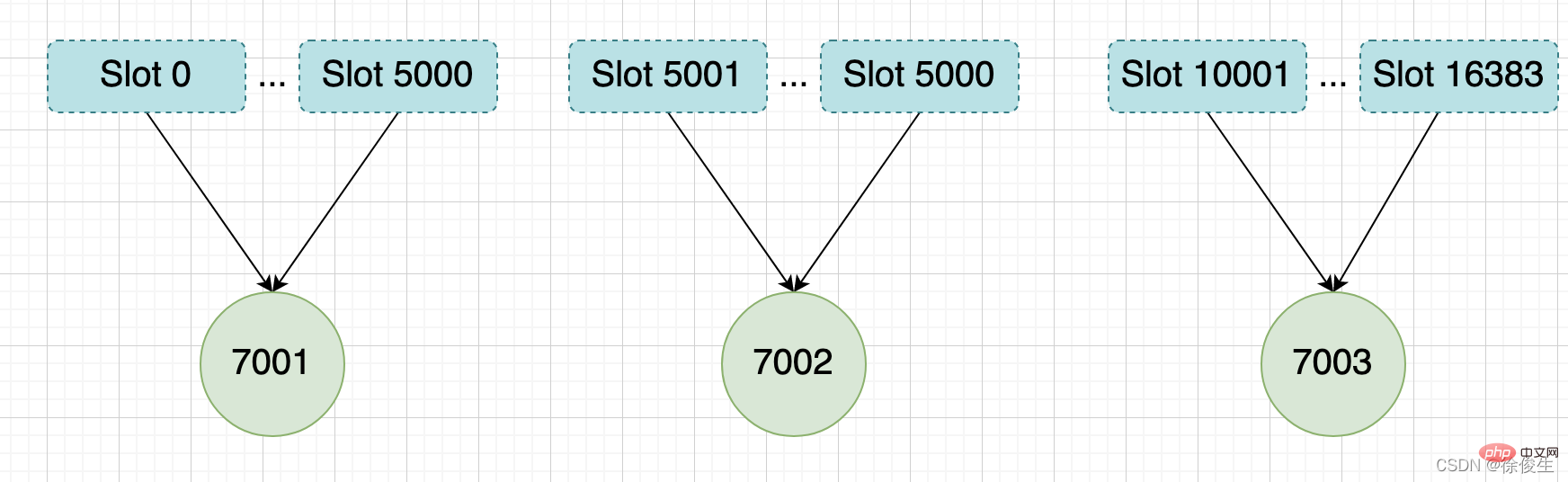

In Redis Cluster, a sharded cluster has 16384 (2^14) hash slots in total. Hash slots are similar to data partitions: each key-value pair is mapped to one hash slot based on its key.

Nodes 7001, 7002 and 7003 have been joined into one cluster with CLUSTER MEET, but the cluster is still offline, because none of the three nodes has been assigned any slots yet.

When building a cluster manually, we first use CLUSTER MEET to connect the instances into a cluster, and then use CLUSTER ADDSLOTS to specify which hash slots each instance owns.

CLUSTER ADDSLOTS <slot> [slot ...]

Since Redis 5.0, redis-cli can create a cluster with its --cluster create option, which automatically distributes the slots evenly across the master instances.
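A minimal Python sketch of an even split, assuming N masters that each receive one contiguous range. This is not redis-cli's actual code, and redis-cli may place each boundary one slot differently; the point is only that every master ends up with roughly 16384 / N slots.

```python
from typing import List, Tuple

TOTAL_SLOTS = 16384  # 2^14 hash slots


def split_slots_evenly(num_nodes: int) -> List[Tuple[int, int]]:
    """Split slots 0..16383 into num_nodes contiguous, inclusive (first, last) ranges.

    When 16384 is not divisible by num_nodes, the first nodes get one
    extra slot, so range sizes differ by at most one.
    """
    if not 0 < num_nodes <= TOTAL_SLOTS:
        raise ValueError("num_nodes must be between 1 and 16384")
    base, extra = divmod(TOTAL_SLOTS, num_nodes)
    ranges = []
    start = 0
    for i in range(num_nodes):
        size = base + (1 if i < extra else 0)
        ranges.append((start, start + size - 1))
        start += size
    return ranges


print(split_slots_evenly(3))  # prints [(0, 5461), (5462, 10922), (10923, 16383)]
```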

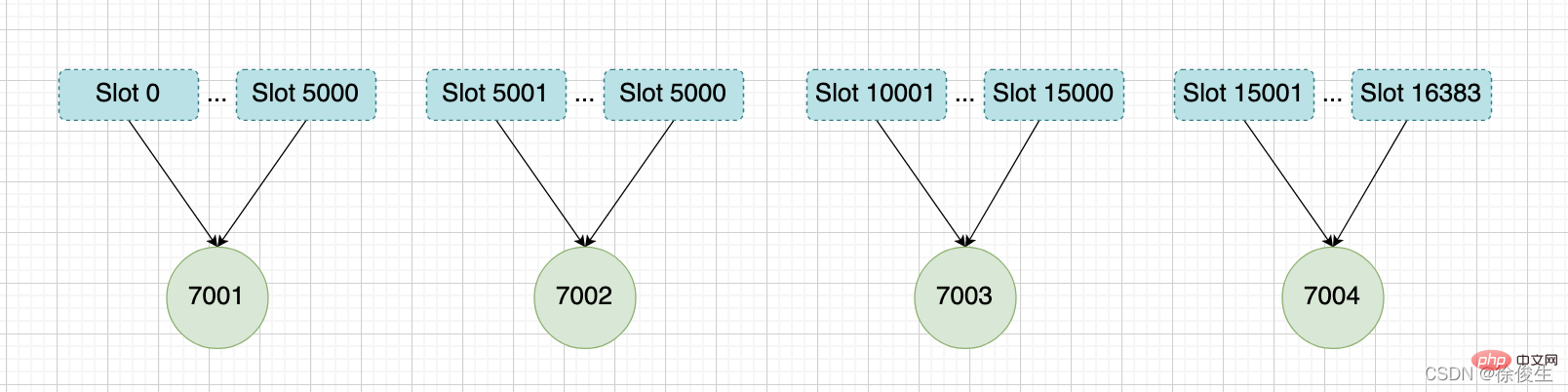

For example, the following commands assign slots to the three nodes 7001, 7002 and 7003.

Assign slots 0 to 5000 to 7001:

127.0.0.1:7001> CLUSTER ADDSLOTS 0 1 2 3 4 ... 5000

Assign slots 5001 to 10000 to 7002:

127.0.0.1:7002> CLUSTER ADDSLOTS 5001 5002 5003 5004 ... 10000

Assign slots 10001 to 16383 to 7003:

127.0.0.1:7003> CLUSTER ADDSLOTS 10001 10002 10003 10004 ... 16383

Once all three CLUSTER ADDSLOTS commands have run, all 16384 slots are assigned to nodes, and the cluster goes online.
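The "online only when every slot is assigned" rule can be sketched in Python as follows. This is a toy model: the `ok`/`fail` labels borrow the `cluster_state` field that CLUSTER INFO reports, but the check is simplified to the single rule stated above, and the function names are hypothetical.

```python
from typing import Dict, List, Optional, Tuple

TOTAL_SLOTS = 16384


def build_slot_table(assignments: Dict[str, List[Tuple[int, int]]]) -> List[Optional[str]]:
    """Expand {node: [(first, last), ...]} into a table where table[slot] is the owner."""
    table: List[Optional[str]] = [None] * TOTAL_SLOTS
    for node, ranges in assignments.items():
        for first, last in ranges:
            for slot in range(first, last + 1):
                if table[slot] is not None:
                    raise ValueError(f"slot {slot} is already assigned to {table[slot]}")
                table[slot] = node
    return table


def cluster_state(table: List[Optional[str]]) -> str:
    """'ok' only when every one of the 16384 slots has an owner, else 'fail'."""
    return "ok" if all(owner is not None for owner in table) else "fail"


assignments = {"7001": [(0, 5000)], "7002": [(5001, 10000)]}
print(cluster_state(build_slot_table(assignments)))  # prints fail (10001..16383 unassigned)
assignments["7003"] = [(10001, 16383)]
print(cluster_state(build_slot_table(assignments)))  # prints ok
```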

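With hash slots, the sharded cluster maps data to hash slots, and hash slots to instances.

But even though the instances know the slot mapping, how does a client know which instance holds the data it wants?

How does the client locate data?

Generally, once a client connects to a cluster instance, the instance sends it the hash slot assignments. But when the cluster has just been created, each instance only knows which slots it was assigned itself, not which slots the other instances own.

So how can a client get the complete slot information from whichever instance it connects to?

Each Redis instance sends its own slot information to the instances it is connected to, spreading the slot assignments across the cluster. Once the instances are connected to each other, every instance has the full slot mapping.

After receiving the slot information, the client caches it locally. When it requests a key-value pair, it first computes the key's hash slot and then sends the request to the corresponding instance.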

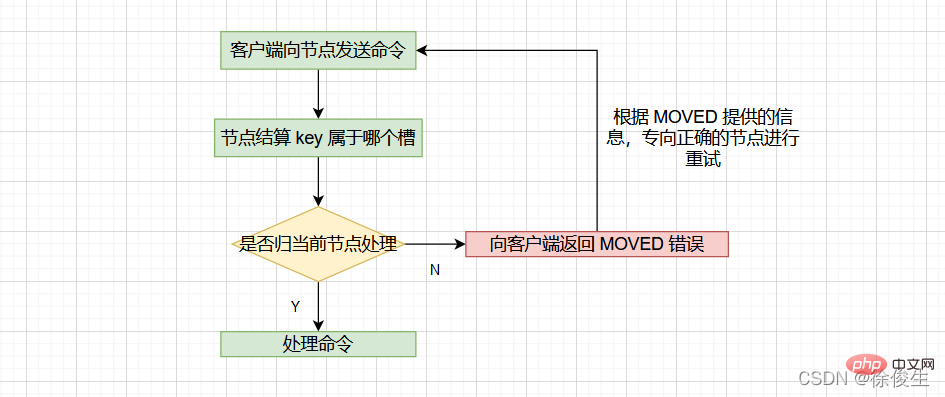

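When a client requests a key-value pair from a node, the receiving node computes which slot the key belongs to and checks whether that slot is assigned to itself:

- If the key's slot is assigned to the current node, the node executes the command directly;

- If not, the node returns a MOVED error to the client, which then redirects to the correct node and resends the command.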

Computing which slot a key belongs to

A node uses the following algorithm to determine which slot a key belongs to:

crc16(key,keylen) & 0x3FFF;

- crc16: computes the CRC-16 checksum of the key

- 0x3FFF: 16383 in decimal

- & 0x3FFF: yields an integer between 0 and 16383 as the key's slot number.
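A minimal Python sketch of the slot formula, assuming Redis Cluster's CRC16 is the XMODEM variant (polynomial 0x1021, initial value 0), which is the variant the Redis Cluster specification names. One caveat: real Redis first looks for a `{hash tag}` inside the key and hashes only that part; this sketch skips that step.

```python
def crc16_xmodem(data: bytes) -> int:
    """Bitwise CRC-16/XMODEM: polynomial 0x1021, initial value 0, no reflection."""
    crc = 0
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def key_slot(key: bytes) -> int:
    """crc16(key) & 0x3FFF keeps the low 14 bits, i.e. a slot in 0..16383.

    Note: real Redis first checks for a {hash tag} in the key; this sketch does not.
    """
    return crc16_xmodem(key) & 0x3FFF


print(hex(crc16_xmodem(b"123456789")))  # prints 0x31c3, the standard check value
print(key_slot(b"foo"))
```

Masking with `& 0x3FFF` gives the same result as `% 16384` because 16384 is a power of two. The bitwise AND is simply cheaper.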

You can check which slot a key belongs to with the CLUSTER KEYSLOT <key> command.

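Checking whether the current node is responsible for the slot

After computing that a key belongs to slot i, the node checks whether slot i is assigned to itself. How does it check?

Each node maintains a "slots array" and checks slots[i] to decide whether it is responsible for slot i:

- If slots[i] points to the current node, slot i is handled by the current node, and the node executes the client's command;

- If slots[i] points to another node, the node returns a MOVED error naming the node that slots[i] points to, directing the client to the correct node.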
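The check above can be sketched in Python as a toy node. It assumes the key's slot has already been computed with the formula above, and it stores node addresses in `slots[i]` for readability. The class and method names are hypothetical, and the `CLUSTERDOWN` reply for an unowned slot is included only to make the sketch total.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional

TOTAL_SLOTS = 16384


@dataclass
class Node:
    """Toy cluster node; slots[i] holds the address of the node that owns slot i."""
    addr: str
    slots: List[Optional[str]] = field(default_factory=lambda: [None] * TOTAL_SLOTS)
    data: Dict[str, str] = field(default_factory=dict)

    def handle_get(self, key: str, slot: int) -> str:
        owner = self.slots[slot]
        if owner is None:
            # Nobody owns this slot yet (the cluster is not fully assigned).
            return "CLUSTERDOWN Hash slot not served"
        if owner == self.addr:
            # slots[i] is this node: execute the command locally.
            return self.data.get(key, "(nil)")
        # slots[i] is another node: tell the client where to go.
        return f"MOVED {slot} {owner}"


node = Node("127.0.0.1:7001")
node.slots[100] = "127.0.0.1:7001"
node.slots[10086] = "127.0.0.1:7002"
node.data["a"] = "1"
print(node.handle_get("a", 100))      # prints 1
print(node.handle_get("b", 10086))    # prints MOVED 10086 127.0.0.1:7002
```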

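MOVED error

Format:

MOVED <slot> <ip>:<port>

- slot: the slot the key belongs to

- ip: the IP of the node responsible for the slot

- port: the port of the node responsible for the slot

For example, MOVED 10086 127.0.0.1:7002 means that hash slot 10086, which holds the requested key-value pair, is actually on instance 127.0.0.1:7002.

The MOVED reply thus tells the client which instance now holds the hash slot.

The client can then connect to 7002 directly and send the request.

The client also updates its local cache so that the slot maps to the correct Redis instance.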
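A minimal Python sketch of the client-side cache update, assuming the error text arrives exactly in the `MOVED <slot> <ip>:<port>` form shown above (without the leading `-` of the wire protocol). `SlotCache` and `parse_redirect` are hypothetical names, not part of a real client library.

```python
from typing import Dict, Tuple


def parse_redirect(error: str) -> Tuple[str, int, str]:
    """Split e.g. 'MOVED 10086 127.0.0.1:7002' into ('MOVED', 10086, '127.0.0.1:7002')."""
    kind, slot, addr = error.split()
    return kind, int(slot), addr


class SlotCache:
    """Client-side slot -> 'ip:port' cache, corrected whenever a MOVED error arrives."""

    def __init__(self) -> None:
        self.slots: Dict[int, str] = {}

    def on_moved(self, error: str) -> str:
        kind, slot, addr = parse_redirect(error)
        if kind != "MOVED":
            raise ValueError(f"not a MOVED error: {error!r}")
        self.slots[slot] = addr  # later requests for this slot go straight to addr
        return addr


cache = SlotCache()
cache.slots[10086] = "127.0.0.1:7001"                 # stale entry
print(cache.on_moved("MOVED 10086 127.0.0.1:7002"))  # prints 127.0.0.1:7002
print(cache.slots[10086])                            # prints 127.0.0.1:7002
```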

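When redis-cli runs in cluster mode (-c) and receives a MOVED error, it does not print the error. It follows the redirect automatically and prints the redirection instead, so we never see the MOVED error itself. redis-cli in single-instance mode does print MOVED errors.

Redis actually redirects clients to another instance in two cases: MOVED and ASK. Next, let's look at how ASK redirection works.

Resharding

In a cluster, the mapping between instances and hash slots is not fixed. The two most common changes are:

- Instances are added to or removed from the cluster, so Redis has to reassign hash slots;

- For load balancing, Redis redistributes hash slots across all instances.

Resharding can be done online; that is, the cluster does not need to go offline during resharding.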

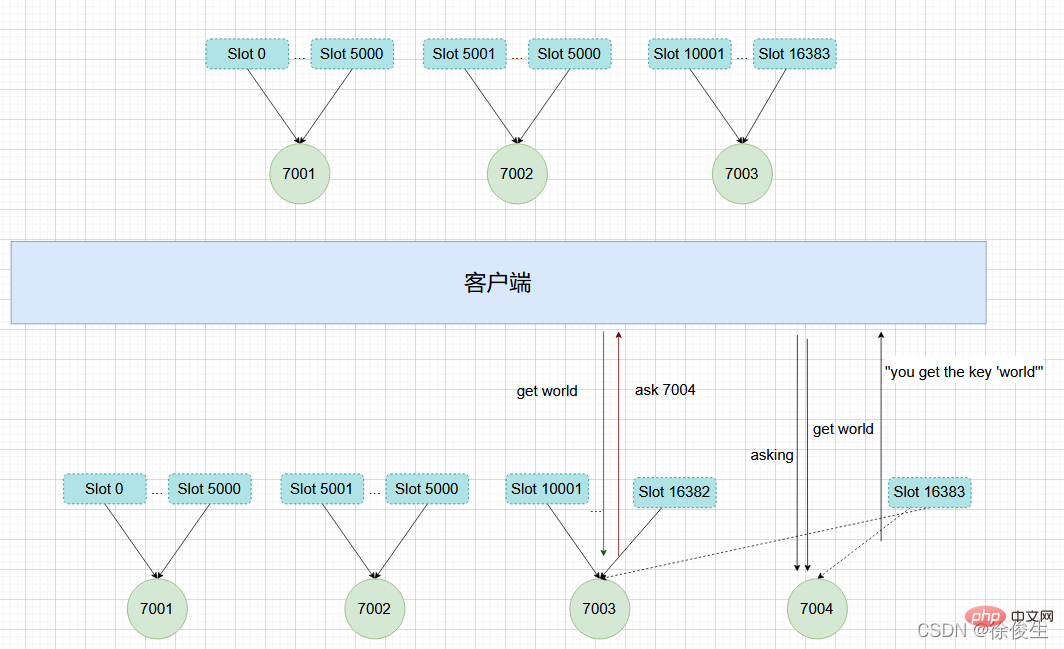

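For example, we formed a cluster of nodes 7001, 7002 and 7003 above. We can add a new node, 127.0.0.1:7004, to it:

$ redis-cli -c -p 7001
127.0.0.1:7001> CLUSTER MEET 127.0.0.1 7004
OK

Then, through resharding, slots 15001 to 16383, originally assigned to 7003, are reassigned to 7004.

While the source node is migrating a slot to the target node during resharding, a slot that holds a lot of data may end up partly migrated: some keys are already on the new instance and others are not. What happens then?

In this partially migrated state, the client receives an ASK error.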

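ASK error

When a client sends the source node a command involving a database key, and the key belongs to the slot being migrated:

- The source node first looks for the key in its own database; if it finds it, it executes the command directly;

- Otherwise, the key may already have been migrated to the target node, so the source node returns an ASK error, directing the client to the target node to resend the command.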
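A minimal Python sketch of the source node's decision, assuming the slot number has already been computed and the source still owns the slot. The hypothetical `migrating` dict records slots being moved out (slot to target address), and replies are returned as `(kind, payload)` tuples purely for illustration.

```python
from typing import Dict, Optional, Tuple


class SourceNode:
    """Toy source node during resharding; `migrating` maps slot -> target 'ip:port'."""

    def __init__(self, addr: str) -> None:
        self.addr = addr
        self.data: Dict[str, str] = {}
        self.migrating: Dict[int, str] = {}

    def handle_get(self, key: str, slot: int) -> Tuple[str, Optional[str]]:
        if key in self.data:
            # Key not migrated yet: execute locally.
            return ("VALUE", self.data[key])
        if slot in self.migrating:
            # Key may already be on the target: redirect once with ASK.
            return ("ASK", f"ASK {slot} {self.migrating[slot]}")
        # Slot not being migrated and key absent: an ordinary miss.
        return ("VALUE", None)


src = SourceNode("127.0.0.1:7003")
src.data["hello"] = "you get the key 'hello'"
src.migrating[16383] = "127.0.0.1:7004"   # 'world' has already moved to 7004
print(src.handle_get("hello", 16383))  # prints ('VALUE', "you get the key 'hello'")
print(src.handle_get("world", 16383))  # prints ('ASK', 'ASK 16383 127.0.0.1:7004')
```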

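This may look complicated, so let's walk through an example.

As shown in the figure above, node 7003 is migrating slot 16383 to 7004. The slot contains the keys hello and world: hello is still on 7003, while world has already moved to 7004.

If we send node 7003 a command on hello, it executes directly:

127.0.0.1:7003> GET "hello"
"you get the key 'hello'"

If we send node 7003 a command on world, the client is redirected to 7004:

127.0.0.1:7003> GET "world"
(error) ASK 16383 127.0.0.1:7004

After receiving the ASK error, the client first sends an ASKING command to 7004 and then sends GET "world".

The ASKING command turns on the ASKING flag for the client's connection. Only with this flag set will the target node execute a command for a slot it is still importing, instead of redirecting the client back with MOVED.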

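The difference between ASK and MOVED

Both ASK and MOVED errors redirect the client. The difference is:

- A MOVED error means responsibility for a slot has moved from one node to another. After receiving a MOVED error for slot i, the client can send every later request for slot i straight to the node named in the error, because that node is now responsible for slot i.

- An ASK error is only a temporary measure while two nodes migrate a slot. After receiving an ASK error for slot i, the client sends only the next request for slot i to the node named in the error. If it requests data in slot i again, it still sends the request to the node originally responsible for slot i.

In other words, ASK only lets the client send one request to the new instance, and it does not update the client's cached slot assignments. MOVED, by contrast, changes the local cache so that all later commands go to the new instance.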
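A minimal Python sketch of a client following both redirects. It assumes a hypothetical transport function `send(addr, words)` that returns the reply as plain text, with errors in the `MOVED ...`/`ASK ...` forms shown above. The only point it models is the contrast just described: MOVED rewrites the cache, while ASK sends ASKING plus one command and leaves the cache alone.

```python
from typing import Callable, Dict, List

# Hypothetical transport: send(addr, words) returns the reply as text.
Send = Callable[[str, List[str]], str]


def execute(cache: Dict[int, str], slot: int, command: List[str],
            send: Send, max_redirects: int = 5) -> str:
    """Send `command` for `slot`, following MOVED and ASK redirects."""
    addr = cache[slot]
    asking = False
    for _ in range(max_redirects + 1):
        if asking:
            send(addr, ["ASKING"])        # applies only to the next command
        reply = send(addr, command)
        parts = reply.split()
        if len(parts) == 3 and parts[0] == "MOVED":
            addr = parts[2]
            cache[slot] = addr            # permanent: update the local slot cache
            asking = False
        elif len(parts) == 3 and parts[0] == "ASK":
            addr = parts[2]               # temporary: leave the cache untouched
            asking = True
        else:
            return reply
    raise RuntimeError("too many redirects")


def fake_send(addr: str, words: List[str]) -> str:
    """Stand-in for a network call, replaying the hello/world example."""
    if words == ["GET", "world"]:
        return "ASK 16383 127.0.0.1:7004" if addr == "127.0.0.1:7003" else "world-value"
    return "OK"


cache = {16383: "127.0.0.1:7003"}
print(execute(cache, 16383, ["GET", "world"], fake_send))  # prints world-value
print(cache[16383])  # prints 127.0.0.1:7003 (ASK did not change the cache)
```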

We now know how Redis Cluster works. Next, let's see how Redis Cluster achieves high availability.

Replication and failover

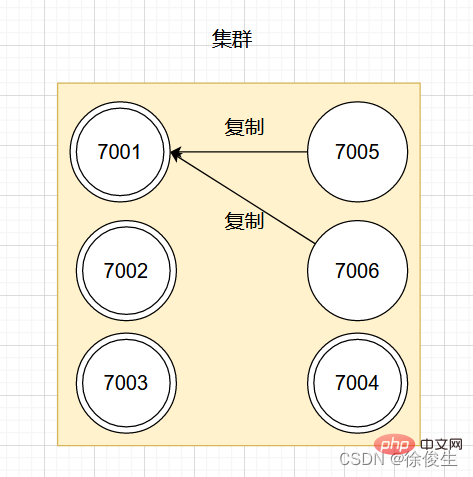

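Nodes in Redis Cluster are also divided into master nodes and slave nodes.

- Master nodes handle slots;

- Slave nodes replicate a master, and if that master goes offline, a slave can take its place and keep serving.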

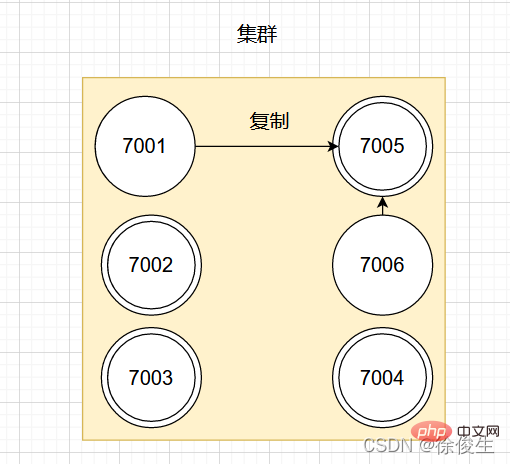

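For example, in a cluster with four masters, 7001 to 7004, we can add two nodes, 7005 and 7006, and make them slaves of 7001.

Command to make a node a slave (sent to the node that should become the slave; node_id is the ID of the master it will replicate):

CLUSTER REPLICATE <node_id>

As shown in the figure:

If master 7001 now goes offline, the remaining working masters in the cluster will choose one of 7001's two slaves as the new master.

For example, if 7005 is chosen, the slots previously handled by 7001 are handed over to 7005, and 7006 switches to replicating the new master 7005. If 7001 later comes back online, it becomes a slave of 7005, as shown in the figure below: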

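Failure detection

Each node in the cluster periodically sends PING messages to the other nodes to check whether they are online. If the receiver does not reply with a PONG message within the specified time, the sender marks the receiver as "possibly failed".

Nodes exchange state information about each other by sending messages.

A node can be in one of three states:

- Online

- Possibly failed: PFAIL

- Failed: FAIL

One node deciding that another node is unreachable does not mean all nodes think so. Only when more than half of the slot-serving masters in a cluster consider a master offline does the cluster treat that master as failed and perform a master-slave switchover.

Redis Cluster nodes use the Gossip protocol to broadcast their own state and changes in their view of the cluster. For example, when a node finds that another node is unreachable (PFAIL), it spreads this information across the cluster so that the other nodes learn about it too.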
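A minimal Python sketch of the majority rule, seen from one node, assuming PFAIL reports arrive via gossip as `(reporter, suspect)` pairs. It leaves out timing details such as how long a report stays valid, and the class name is hypothetical.

```python
from typing import Dict, Set


class FailureDetector:
    """One node's view: which slot-serving masters have reported whom as PFAIL."""

    def __init__(self, masters: Set[str]) -> None:
        self.masters = masters                  # slot-serving masters (the voters)
        self.reports: Dict[str, Set[str]] = {}  # suspect -> masters reporting PFAIL

    def report_pfail(self, reporter: str, suspect: str) -> None:
        if reporter in self.masters:            # only slot-serving masters count
            self.reports.setdefault(suspect, set()).add(reporter)

    def is_fail(self, suspect: str) -> bool:
        # FAIL needs strictly more than half of the slot-serving masters.
        return len(self.reports.get(suspect, set())) > len(self.masters) // 2


fd = FailureDetector({"7001", "7002", "7003", "7004"})
fd.report_pfail("7002", "7001")
fd.report_pfail("7003", "7001")
print(fd.is_fail("7001"))  # prints False (2 of 4 is not more than half)
fd.report_pfail("7004", "7001")
print(fd.is_fail("7001"))  # prints True (3 of 4)
```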

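We know that the Sentinel mechanism achieves automatic failover through monitoring, automatic master switching and client notification. How does Redis Cluster achieve automatic failover?

Failover

When a slave finds that the master it replicates has entered the "failed" state, it starts a failover for that master.

Failover steps:

- One slave is selected from all the slaves replicating the failed master;

- The selected slave executes SLAVEOF no one and becomes a master;

- The new master revokes all slot assignments of the failed master and assigns those slots to itself;

- The new master broadcasts a PONG message to the cluster, letting the other nodes know that it has changed from slave to master and has taken over the failed master's slots;

- The new master starts accepting command requests for the slots it now handles, and the failover is complete.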
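The state changes in these steps can be sketched in Python on a toy cluster view. The names are hypothetical, and the PONG broadcast and request serving (the last two steps) are not modeled. Following the earlier example, the remaining slaves and the returning old master end up replicating the new master.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ClusterView:
    slot_owner: Dict[int, str]                                         # slot -> master
    master_of: Dict[str, Optional[str]] = field(default_factory=dict)  # node -> its master, None if master


def fail_over(view: ClusterView, failed: str, chosen: str) -> List[int]:
    """Promote `chosen` (a slave of `failed`) and hand it the failed master's slots."""
    if view.master_of.get(chosen) != failed:
        raise ValueError(f"{chosen} is not a slave of {failed}")
    view.master_of[chosen] = None                 # the SLAVEOF no one step: now a master
    taken = sorted(s for s, owner in view.slot_owner.items() if owner == failed)
    for s in taken:
        view.slot_owner[s] = chosen               # revoke and reassign the slots
    for node, master in view.master_of.items():
        if master == failed:
            view.master_of[node] = chosen         # remaining slaves follow the new master
    view.master_of[failed] = chosen               # if the old master rejoins, it is a slave
    return taken


view = ClusterView(
    slot_owner={0: "7001", 1: "7001", 5001: "7002"},
    master_of={"7001": None, "7002": None, "7005": "7001", "7006": "7001"},
)
print(fail_over(view, "7001", "7005"))  # prints [0, 1]
```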

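Electing a new master

This election works much like Sentinel's: both are leader elections based on the Raft algorithm. The process is:

- The cluster's configuration epoch is an auto-incrementing counter with an initial value of 0;

- When a node in the cluster starts a failover, the configuration epoch is incremented by 1;

- In each configuration epoch, every slot-serving master in the cluster has one vote, which it gives to the first slave that asks for it;

- When a slave finds that the master it replicates has entered the "failed" state, it broadcasts a message to the cluster asking every master with voting rights that receives it to vote for it;

- If a master has voting rights and has not yet voted for another slave, it replies to the requesting slave, indicating that it supports that slave becoming the new master;

- Each slave in the election counts how many masters support it;

- If the cluster has N masters with voting rights, a slave that collects at least N/2 + 1 votes becomes the new master;

- If no slave collects enough votes in a configuration epoch, the cluster enters a new configuration epoch and holds the election again.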
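A simplified Python sketch of the voting rule, assuming vote requests can be replayed as an ordered list of `(slave, master)` pairs so that "first asker wins" is deterministic. The class and function names are hypothetical. The sketch models only one vote per master per epoch, the N/2 + 1 threshold and a retry in a new epoch, not the messaging itself.

```python
from typing import Dict, List, Optional, Tuple


class VotingMaster:
    def __init__(self, name: str) -> None:
        self.name = name
        self.last_vote_epoch = 0   # the configuration epoch starts at 0

    def request_vote(self, epoch: int) -> bool:
        """Grant at most one vote per configuration epoch, to the first asker."""
        if epoch > self.last_vote_epoch:
            self.last_vote_epoch = epoch
            return True
        return False


def run_election(masters: Dict[str, VotingMaster],
                 requests: List[Tuple[str, str]], epoch: int) -> Optional[str]:
    """`requests` lists (slave, master) vote requests in arrival order."""
    needed = len(masters) // 2 + 1            # N/2 + 1 of the N voting masters
    votes: Dict[str, int] = {}
    for slave, master in requests:
        if masters[master].request_vote(epoch):
            votes[slave] = votes.get(slave, 0) + 1
    for slave, count in votes.items():
        if count >= needed:
            return slave
    return None                                # no winner: retry in a new epoch


masters = {n: VotingMaster(n) for n in ["A", "B", "C", "D"]}
# Epoch 1: two slaves split the four votes 2-2, so nobody reaches 3.
print(run_election(masters, [("7005", "A"), ("7006", "B"), ("7005", "C"), ("7006", "D")], 1))  # prints None
# Epoch 2: a fresh round in which 7005 reaches A, B and C first.
print(run_election(masters, [("7005", "A"), ("7005", "B"), ("7005", "C"), ("7006", "D")], 2))  # prints 7005
```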

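Messages

Nodes in the cluster communicate by sending and receiving messages. The node that sends a message is called the sender, and the node that receives it is called the receiver.

Nodes send five main kinds of messages: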

- MEET Message

- PING Message

- PONG Message

- FAIL Message

- PUBLISH Message

Each node in the cluster exchanges state information about other nodes through the Gossip protocol, which consists of three kinds of messages: MEET, PING and PONG.

Each time the sender sends a MEET, PING or PONG message, it randomly picks two nodes (master or slave) from its list of known nodes and includes information about them in the message.

When the receiver gets a MEET, PING or PONG message, it handles the two included nodes differently depending on whether it already knows them:

- If a node is not yet in the receiver's list of known nodes, this is first contact, and the receiver connects to that node using its IP and port;

- If the node is already known, the two have communicated before, and the receiver updates its stored information about that node.
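A minimal Python sketch of both sides of this exchange. Following the description above, the sender piggybacks `k=2` random known nodes, and the receiver either records first contact or refreshes what it knows. The names and the `flags` strings are illustrative, and "first contact" is reduced to remembering the address that would be contacted.

```python
import random
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class NodeInfo:
    node_id: str
    ip: str
    port: int
    flags: str  # illustrative, e.g. "master" or "master,pfail"


def pick_gossip(known: List[NodeInfo], k: int = 2) -> List[NodeInfo]:
    """Sender side: pick k random known nodes to piggyback on MEET/PING/PONG."""
    return random.sample(known, min(k, len(known)))


class Receiver:
    def __init__(self) -> None:
        self.known: Dict[str, NodeInfo] = {}
        self.contacted: List[str] = []   # 'ip:port' of nodes we started talking to

    def on_gossip(self, entries: List[NodeInfo]) -> None:
        for info in entries:
            if info.node_id not in self.known:
                # First contact: reach out using the gossiped IP and port.
                self.contacted.append(f"{info.ip}:{info.port}")
            # Known or new, store the latest view of the node.
            self.known[info.node_id] = info


r = Receiver()
r.on_gossip([NodeInfo("id-7002", "127.0.0.1", 7002, "master")])
r.on_gossip([NodeInfo("id-7002", "127.0.0.1", 7002, "master,pfail")])
print(r.contacted)               # prints ['127.0.0.1:7002'] (contacted only once)
print(r.known["id-7002"].flags)  # prints master,pfail
```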


The above is the detailed content of Take you step by step to understand Redis high availability cluster. For more information, please follow other related articles on the PHP Chinese website!
