Backend Development

Backend Development

Python Tutorial

Python Tutorial

Python detailed analysis of multi-threaded crawlers and common search algorithms

Python detailed analysis of multi-threaded crawlers and common search algorithms

Python detailed analysis of multi-threaded crawlers and common search algorithms

This article brings you relevant knowledge about python, which mainly introduces issues related to multi-threaded crawler development and common search algorithms. Let’s take a look at it together. I hope it will be helpful to everyone. help.

Recommended learning: python video tutorial

Multi-threaded crawler

Advantages of multi-threading

After mastering requests and regular expressions, you can start to actually crawl some simple URLs.

However, the crawler at this time has only one process and one thread, so it is called a single-threaded crawler. Single-threaded crawlers only visit one page at a time and cannot fully utilize the computer's network bandwidth. A page is only a few hundred KB at most, so when a crawler crawls a page, the extra network speed and the time between initiating the request and getting the source code are wasted. If the crawler can access 10 pages at the same time, it is equivalent to increasing the crawling speed by 10 times. In order to achieve this goal, you need to use multi-threading technology.

The Python language has a global interpreter lock (Global Interpreter Lock, GIL). This causes Python's multi-threading to be pseudo-multi-threading, that is, it is essentially a thread, but this thread only does each thing for a few milliseconds. After a few milliseconds, it saves the scene and changes to other things. After a few milliseconds, it does other things again. After one round, return to the first thing, resume the scene, work for a few milliseconds, and continue to change... A single thread on a micro scale is like doing several things at the same time on a macro scale. This mechanism has little impact on I/O (Input/Output, input/output) intensive operations, but on CPU calculation-intensive operations, since only one core of the CPU can be used, it will have a significant impact on performance. Big impact. Therefore, if you are involved in computationally intensive programs, you need to use multiple processes. Python's multi-processes are not affected by the GIL. Crawlers are I/O-intensive programs, so using multi-threading can greatly improve crawling efficiency.

Multiprocess library: multiprocessing

Multiprocessing itself is Python's multiprocess library, used to handle operations related to multiprocesses. However, since memory and stack resources cannot be shared directly between processes, and the cost of starting a new process is much greater than that of threads, using multi-threads to crawl has more advantages than using multiple processes.

There is a dummy module under multiprocessing, which allows Python threads to use various methods of multiprocessing.

There is a Pool class under dummy, which is used to implement the thread pool.

This thread pool has a map() method, which allows all threads in the thread pool to execute a function "at the same time".

For example:

After learning the for loop

for i in range(10): print(i*i)

Of course this way of writing can get results, but the code is calculated one by one, which is not very efficient. . If you use multi-threading technology to allow the code to calculate the squares of many numbers at the same time, you need to use multiprocessing.dummy to achieve it:

Example of multi-threading usage:

from multiprocessing.dummy import Pooldef cal_pow(num):

return num*num

pool=Pool(3)num=[x for x in range(10)]result=pool.map(cal_pow,num)print('{}'.format(result))In the above code , first defines a function to calculate the square, and then initializes a thread pool with 3 threads. These three threads are responsible for calculating the square of 10 numbers. Whoever finishes calculating the number on hand first will take the next number and continue calculating until all the numbers are calculated.

In this example, The map() method of the thread pool receives two parameters. The first parameter is the function name, and the second parameter is a list. Note: The first parameter is only the name of the function and cannot contain parentheses . The second parameter is an iterable object. Each element in this iterable object will be received as a parameter by the function clac_power2(). In addition to lists, tuples, sets, or dictionaries can be used as the second parameter of map().

Multi-threaded crawler development

Because crawlers are I/O-intensive operations, especially when requesting web page source code, if you use a single thread to develop, it will waste a lot of time. Waiting for the web page to return, so applying multi-threading technology to the crawler can greatly improve the operating efficiency of the crawler. As an example. It takes 50 minutes to wash clothes in the washing machine, 15 minutes to boil water in the kettle, and 1 hour to memorize vocabulary. If you wait for the washing machine to wash the clothes first, then boil the water after the clothes are washed, and then recite the vocabulary after the water is boiled, it will take a total of 125 minutes.

But if you put it another way, looking at it as a whole, 3 things can run at the same time. Suppose you suddenly have two other people, one of whom is responsible for putting the clothes in the washing machine and waiting for the washing machine to finish. , another person is responsible for boiling water and waiting for the water to boil, and you only need to memorize the words. When the water boils, the clone responsible for boiling the water disappears first. When the washing machine finishes washing the clothes, the clone responsible for washing the clothes disappears. Finally, you memorize the words yourself. It only takes 60 minutes to complete 3 things at the same time.

Of course, everyone will definitely find that the above example is not the actual situation in life. In reality, no one is separated. What happens in real life is that when people memorize words, they concentrate on memorizing them; after the water is boiled, the kettle will make a sound to remind you; when the clothes are washed, the washing machine will make a "didi" sound. So just take the corresponding actions when the reminder comes. There is no need to check every minute. The above two differences are actually the differences between multi-threading and event-driven asynchronous models. This section talks about multi-threaded operations, and we will talk about the crawler framework using asynchronous operations later. Now you just need to remember that when the number of actions that need to be operated is not large, there is no difference in the performance of the two methods, but once the number of actions increases significantly, the efficiency improvement of multi-threading will decrease, and it will even be worse than single-threading. . And by that time, only asynchronous operations are the solution to the problem.

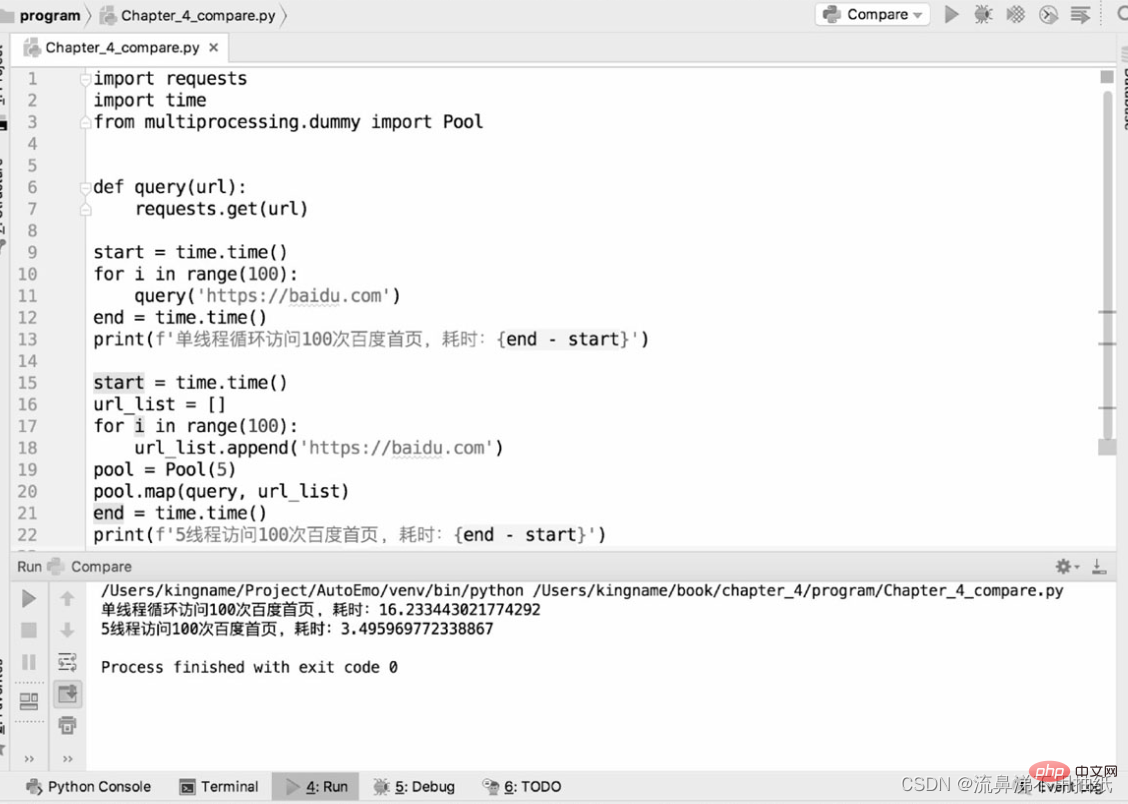

The following two pieces of code are used to compare the performance differences between single-threaded crawlers and multi-threaded crawlers in crawling the bd homepage:

As can be seen from the running results, one thread takes about 16.2s, 5 The thread takes about 3.5s, which is about one-fifth of the time of a single thread. You can also see the effect of 5 threads "running simultaneously" from the time perspective. But it doesn't mean that the bigger the thread pool is, the better. It can also be seen from the above results that the running time of five threads is actually a little more than one-fifth of the running time of one thread. The extra point is actually the time of thread switching. This also reflects from the side that Python's multi-threading is still serial at a micro level. Therefore, if the thread pool is set too large, the overhead caused by thread switching may offset the performance improvement brought by multi-threading. The size of the thread pool needs to be determined according to the actual situation, and there is no exact data. Readers can set different sizes for testing and comparison in specific application scenarios to find the most suitable data.

Common search algorithms for crawlers

Depth first search

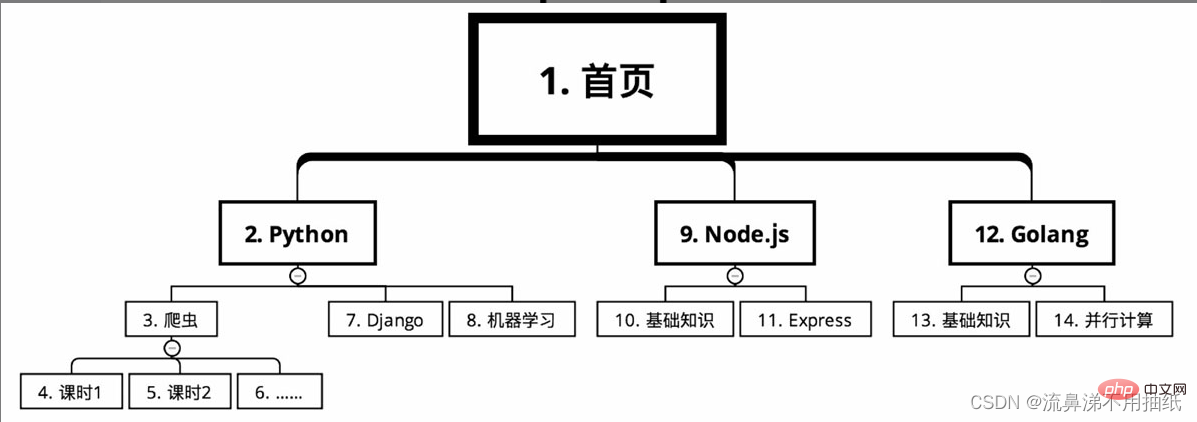

The course classification of an online education website needs to be crawled course information. Starting from the home page, the courses are divided into several major categories, such as Python, Node.js and Golang according to language. There are many courses under each major category, such as crawlers, Django and machine learning under Python. Each course is divided into many class hours.

In the case of depth-first search, the crawling route is as shown in the figure (serial number from small to large)

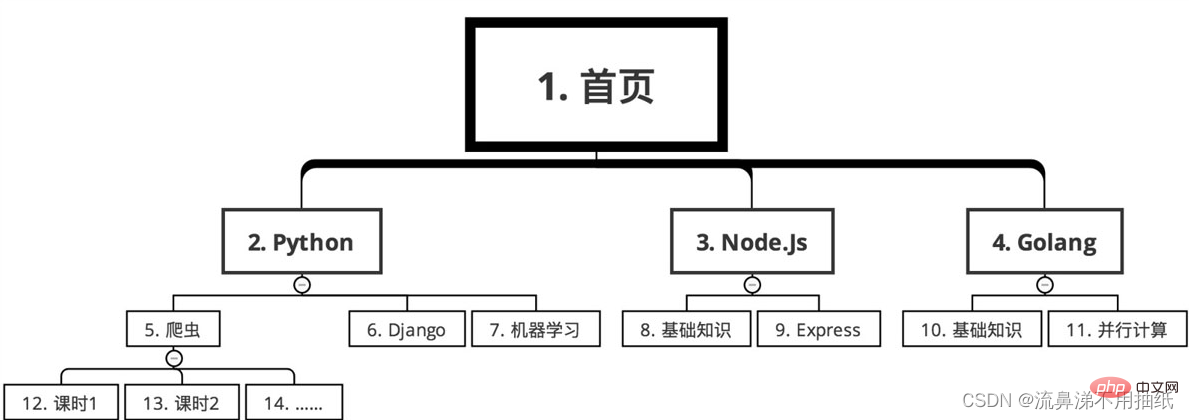

Breadth-first search

Sequence The following

algorithm selection

For example, you want to crawl all the restaurant information in a website nationwide and the order information of each restaurant. Assuming that the depth-first algorithm is used, then first crawl to restaurant A from a certain link, and then immediately crawl the order information of restaurant A. Since there are hundreds of thousands of restaurants across the country, it may take 12 hours to climb them all. The problem caused by this is that the order volume of restaurant A may reach 8 o'clock in the morning, while the order volume of restaurant B may reach 8 o'clock in the evening. Their order volume differs by 12 hours. For popular restaurants, 12 hours may result in an income gap of millions. In this way, when doing data analysis, the 12-hour time difference will make it difficult to compare the sales performance of restaurants A and B. Relative to order volume, restaurant volume changes are much smaller. Therefore, if you use breadth-first search, first crawl all restaurants from 0:00 in the middle of the night to 12:00 noon the next day, and then focus on crawling the order volume of each restaurant from 14:00 to 20:00 the next day. In this way, it only took 6 hours to complete the order crawling task, narrowing the difference in order volume caused by the time difference. At the same time, since the shop's crawling every few days has little impact, the number of requests has also been reduced, making it more difficult for the crawler to be discovered by the website.

For another example, to analyze real-time public opinion, you need to crawl Baidu Tieba. A popular forum may have tens of thousands of pages of posts, assuming the earliest posts date back to 2010. If breadth-first search is used, first obtain the titles and URLs of all posts in this Tieba, and then enter each post based on these URLs to obtain information on each floor. However, since it is real-time public opinion, posts from 7 years ago are of little significance for current analysis. What is more important are new posts, so new content should be captured first. Compared with past content, real-time content is the most important. Therefore, for crawling Tieba content, depth-first search should be used. When you see a post, go in quickly and crawl the information of each floor. After crawling one post, you can crawl to the next post. Of course, these two search algorithms are not either/or and need to be chosen flexibly according to the actual situation. In many cases, they can also be used at the same time.

Recommended learning: python video tutorial

The above is the detailed content of Python detailed analysis of multi-threaded crawlers and common search algorithms. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1382

1382

52

52

PHP and Python: Code Examples and Comparison

Apr 15, 2025 am 12:07 AM

PHP and Python: Code Examples and Comparison

Apr 15, 2025 am 12:07 AM

PHP and Python have their own advantages and disadvantages, and the choice depends on project needs and personal preferences. 1.PHP is suitable for rapid development and maintenance of large-scale web applications. 2. Python dominates the field of data science and machine learning.

How is the GPU support for PyTorch on CentOS

Apr 14, 2025 pm 06:48 PM

How is the GPU support for PyTorch on CentOS

Apr 14, 2025 pm 06:48 PM

Enable PyTorch GPU acceleration on CentOS system requires the installation of CUDA, cuDNN and GPU versions of PyTorch. The following steps will guide you through the process: CUDA and cuDNN installation determine CUDA version compatibility: Use the nvidia-smi command to view the CUDA version supported by your NVIDIA graphics card. For example, your MX450 graphics card may support CUDA11.1 or higher. Download and install CUDAToolkit: Visit the official website of NVIDIACUDAToolkit and download and install the corresponding version according to the highest CUDA version supported by your graphics card. Install cuDNN library:

Detailed explanation of docker principle

Apr 14, 2025 pm 11:57 PM

Detailed explanation of docker principle

Apr 14, 2025 pm 11:57 PM

Docker uses Linux kernel features to provide an efficient and isolated application running environment. Its working principle is as follows: 1. The mirror is used as a read-only template, which contains everything you need to run the application; 2. The Union File System (UnionFS) stacks multiple file systems, only storing the differences, saving space and speeding up; 3. The daemon manages the mirrors and containers, and the client uses them for interaction; 4. Namespaces and cgroups implement container isolation and resource limitations; 5. Multiple network modes support container interconnection. Only by understanding these core concepts can you better utilize Docker.

Python vs. JavaScript: Community, Libraries, and Resources

Apr 15, 2025 am 12:16 AM

Python vs. JavaScript: Community, Libraries, and Resources

Apr 15, 2025 am 12:16 AM

Python and JavaScript have their own advantages and disadvantages in terms of community, libraries and resources. 1) The Python community is friendly and suitable for beginners, but the front-end development resources are not as rich as JavaScript. 2) Python is powerful in data science and machine learning libraries, while JavaScript is better in front-end development libraries and frameworks. 3) Both have rich learning resources, but Python is suitable for starting with official documents, while JavaScript is better with MDNWebDocs. The choice should be based on project needs and personal interests.

MiniOpen Centos compatibility

Apr 14, 2025 pm 05:45 PM

MiniOpen Centos compatibility

Apr 14, 2025 pm 05:45 PM

MinIO Object Storage: High-performance deployment under CentOS system MinIO is a high-performance, distributed object storage system developed based on the Go language, compatible with AmazonS3. It supports a variety of client languages, including Java, Python, JavaScript, and Go. This article will briefly introduce the installation and compatibility of MinIO on CentOS systems. CentOS version compatibility MinIO has been verified on multiple CentOS versions, including but not limited to: CentOS7.9: Provides a complete installation guide covering cluster configuration, environment preparation, configuration file settings, disk partitioning, and MinI

How to operate distributed training of PyTorch on CentOS

Apr 14, 2025 pm 06:36 PM

How to operate distributed training of PyTorch on CentOS

Apr 14, 2025 pm 06:36 PM

PyTorch distributed training on CentOS system requires the following steps: PyTorch installation: The premise is that Python and pip are installed in CentOS system. Depending on your CUDA version, get the appropriate installation command from the PyTorch official website. For CPU-only training, you can use the following command: pipinstalltorchtorchvisiontorchaudio If you need GPU support, make sure that the corresponding version of CUDA and cuDNN are installed and use the corresponding PyTorch version for installation. Distributed environment configuration: Distributed training usually requires multiple machines or single-machine multiple GPUs. Place

How to choose the PyTorch version on CentOS

Apr 14, 2025 pm 06:51 PM

How to choose the PyTorch version on CentOS

Apr 14, 2025 pm 06:51 PM

When installing PyTorch on CentOS system, you need to carefully select the appropriate version and consider the following key factors: 1. System environment compatibility: Operating system: It is recommended to use CentOS7 or higher. CUDA and cuDNN:PyTorch version and CUDA version are closely related. For example, PyTorch1.9.0 requires CUDA11.1, while PyTorch2.0.1 requires CUDA11.3. The cuDNN version must also match the CUDA version. Before selecting the PyTorch version, be sure to confirm that compatible CUDA and cuDNN versions have been installed. Python version: PyTorch official branch

How to install nginx in centos

Apr 14, 2025 pm 08:06 PM

How to install nginx in centos

Apr 14, 2025 pm 08:06 PM

CentOS Installing Nginx requires following the following steps: Installing dependencies such as development tools, pcre-devel, and openssl-devel. Download the Nginx source code package, unzip it and compile and install it, and specify the installation path as /usr/local/nginx. Create Nginx users and user groups and set permissions. Modify the configuration file nginx.conf, and configure the listening port and domain name/IP address. Start the Nginx service. Common errors need to be paid attention to, such as dependency issues, port conflicts, and configuration file errors. Performance optimization needs to be adjusted according to the specific situation, such as turning on cache and adjusting the number of worker processes.