Detailed explanation of how to use Node.js to develop a simple image crawling function


How to use Node for crawling? The following article will talk about using Node.js to develop a simple image crawling function. I hope it will be helpful to you!

The main purpose of a crawler is to collect specific data that is publicly available on the Internet. With this data we can analyze and compare trends, train deep learning models, and so on. In this article we introduce node-crawler, a Node.js package built specifically for web crawling, and use it to build a simple crawler that grabs the images on a web page and downloads them to the local disk.

Text

node-crawler is a lightweight Node.js crawler tool that balances efficiency and convenience. It supports distributed crawler systems, hard coding, and HTTP proxies. It is written entirely in Node.js and is built on non-blocking asynchronous I/O, which suits a crawler's pipeline style of operation well. It also supports quick DOM selection with jQuery syntax, which is a killer feature when you need to grab specific parts of a web page: there is no need to hand-write regular expressions, so crawlers are faster to develop.

Installation and introduction

We first create a new project and create index.js as the entry file.

Then install the crawler library, node-crawler.

```shell
# pnpm
pnpm add crawler

# npm
npm i -S crawler

# Yarn
yarn add crawler
```

Then load it with require.

```javascript
// index.js
const Crawler = require("crawler");
```

Create an instance

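```javascript
// index.js
const crawler = new Crawler({
  timeout: 10000, // give up on a request after 10 seconds
  jQuery: true,   // expose a jQuery-style selector as res.$
});
```

Next we start writing a function that collects the images from an HTML page. Once the crawler has been instantiated, its queue method is used to add a link together with a callback. The callback is called after each request has been processed.

```javascript
// index.js
function getImages(uri) {
  crawler.queue({
    uri,
    callback: (err, res, done) => {
      if (err) {
        console.error(err);
      }
      // Always call done() so the crawler can move on to the next task.
      done();
    },
  });
}
```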
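The callback receives a done function that has to be called on every path, including the error path. One way to make that harder to forget is to wrap queue in a promise. The helper below is a minimal sketch and not part of node-crawler: fetchPage is a hypothetical name, and it assumes only the queue({ uri, callback }) shape and the (err, res, done) callback signature used in this article.

```javascript
// Hypothetical helper (not part of node-crawler): wraps crawler.queue in a
// promise. It assumes only the queue({ uri, callback }) shape used above.
function fetchPage(crawler, uri) {
  return new Promise((resolve, reject) => {
    crawler.queue({
      uri,
      callback: (err, res, done) => {
        // Release the queue slot first, on success and on failure alike.
        done();
        if (err) {
          reject(err);
        } else {
          resolve(res);
        }
      },
    });
  });
}
```

With such a helper, a page could be requested as fetchPage(crawler, uri).then((res) => { /* use res.$ */ }), and errors surface as ordinary promise rejections.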

Note that Crawler is built on the request library, so the options Crawler accepts are a superset of request's options: every option that the request library supports can also be passed to Crawler.
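As an illustration, request options such as headers and proxy can sit next to Crawler's own options in a single object. This is only a sketch: the variable name crawlerOptions is hypothetical, the User-Agent string and proxy address are placeholders, and maxConnections and retries are taken from the node-crawler README rather than from this article.

```javascript
// Sketch of a combined options object. All values are placeholders.
const crawlerOptions = {
  // Options handled by Crawler itself
  timeout: 10000,
  jQuery: true,
  maxConnections: 5, // how many requests may run at the same time
  retries: 2,        // how often a failed request is retried

  // Options passed through to the request library
  headers: { "User-Agent": "my-image-crawler/0.1" }, // placeholder value
  proxy: "http://127.0.0.1:8080",                    // placeholder address
};

// Usage (requires the crawler package):
// const crawler = new Crawler(crawlerOptions);
```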

Element Capture

You may have noticed the jQuery option above. As you might guess, it lets you select DOM elements using jQuery syntax.

// index.js

let data = []

function getImages(uri) {

crawler.queue({

uri,

callback: (err, res, done) => {

if (err) throw err;

let $ = res.$;

try {

let $imgs = $("img");

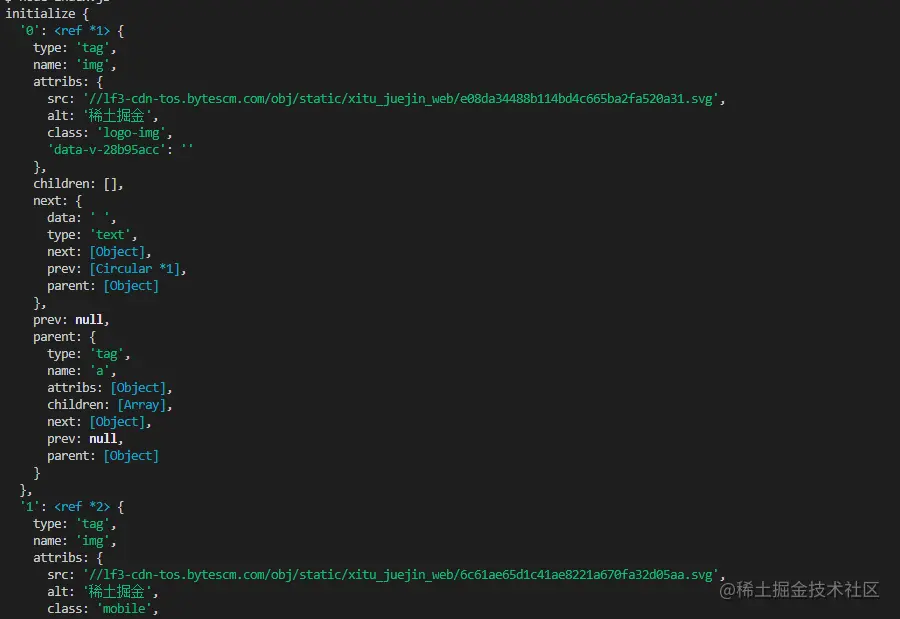

Object.keys($imgs).forEach(index => {

let img = $imgs[index];

const { type, name, attribs = {} } = img;

let src = attribs.src || "";

if (type === "tag" && src && !data.includes(src)) {

let fileSrc = src.startsWith('http') ? src : `https:${src}`

let fileName = src.split("/")[src.split("/").length-1]

downloadFile(fileSrc, fileName) // 下载图片的方法

data.push(src)

}

});

} catch (e) {

console.error(e);

done()

}

done();

}

})

}You can see that $ was used to capture the img tag in the request. Then we use the following logic to process the link to the completed image and strip out the name so that it can be saved and named later. An array is also defined here, its purpose is to save the captured image address. If the same image address is found in the next capture, the download will not be processed repeatedly.

The following is the information printed using $("img") on the Nuggets homepage html:
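The link handling in getImages covers absolute and protocol-relative addresses only. As a minimal sketch of a more general approach, the URL class built into Node.js can resolve any src against the page address, and a Set can take over the deduplication. The names resolveImage and seen are hypothetical and not part of the article's code.

```javascript
const path = require("path");

const seen = new Set(); // absolute image URLs that were already handled

// Resolve an <img> src against the page URL and derive a local file name.
// Returns null for empty, invalid, non-HTTP (e.g. data:), or repeated addresses.
function resolveImage(src, pageUrl) {
  if (!src) return null;
  let url;
  try {
    // Handles absolute, //host/path, /path and relative forms alike.
    url = new URL(src, pageUrl);
  } catch (e) {
    return null; // not a valid URL
  }
  if (url.protocol !== "http:" && url.protocol !== "https:") return null;
  if (seen.has(url.href)) return null;
  seen.add(url.href);
  // Use only the path for the name, so query strings do not end up in it.
  const fileName = path.posix.basename(url.pathname) || "index";
  return { fileSrc: url.href, fileName };
}
```

Inside the callback, the result could be passed on as downloadFile(result.fileSrc, result.fileName) whenever resolveImage(src, uri) returns a non-null value.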

Download pictures

Before downloading, we need to install another Node.js package: axios. Yes, you read that right: axios is not only for the front end, it can be used on the back end too. Because a downloaded image has to be handled as a data stream, responseType is set to stream. The pipe method can then write the stream to a file.

```javascript
const { default: axios } = require("axios");
const fs = require("fs");

async function downloadFile(uri, name) {
  const dir = "./imgs";
  // Create the target folder on first use
  if (!fs.existsSync(dir)) {
    fs.mkdirSync(dir);
  }
  const filePath = `${dir}/${name}`;
  const res = await axios({
    url: uri,
    responseType: "stream", // res.data is a readable stream
  });
  const ws = fs.createWriteStream(filePath);
  // pipe() ends the write stream when the response ends
  res.data.pipe(ws);
  // Resolve only after the file has been fully written
  return new Promise((resolve, reject) => {
    ws.on("finish", resolve);
    ws.on("error", reject);
    res.data.on("error", reject);
  });
}
```

There may be a lot of images, so to keep them all in one folder we first check whether that folder exists and create it if it does not. The createWriteStream method then saves the incoming data stream into that folder as a file.
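One way to know exactly when a file has been fully written is pipeline from the built-in stream/promises module (Node.js 15 or later), which resolves once the data has been flushed and rejects if either stream fails. The sketch below is an assumption-laden variant rather than the article's method: saveStream is a hypothetical helper that accepts any readable stream, so the stream returned by axios could be passed to it.

```javascript
const fs = require("fs");
const path = require("path");
const { pipeline } = require("stream/promises");

// Hypothetical helper: write any readable stream to dir/name and
// resolve with the file path once the write has finished.
async function saveStream(readable, dir, name) {
  // recursive: true creates missing parents and does not fail if dir exists
  fs.mkdirSync(dir, { recursive: true });
  const filePath = path.join(dir, name);
  await pipeline(readable, fs.createWriteStream(filePath));
  return filePath;
}
```

In a download function this could be called as await saveStream(res.data, "./imgs", name), where res is an axios response requested with responseType set to stream.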

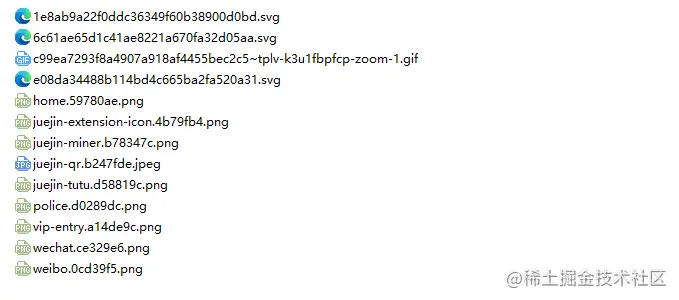

Now we can try it out. For example, let's capture the images in the HTML of the Juejin homepage:

```javascript
// index.js
getImages("https://juejin.cn/");
```

```shell
node index.js
```

After running it, we can see that all the images in the static HTML have been captured.

Conclusion

Finally, note that this code may not work for an SPA (single-page application). A single-page application has only one HTML file, and all of the content on the page is rendered dynamically, so the static HTML contains little to capture. The principle stays the same, though: you can handle the application's data requests directly to collect the information you want.
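As a rough sketch of that idea: a single-page application usually loads its content as JSON, so image addresses can be pulled out of the parsed response instead of the HTML. The response shape below is invented for illustration, and collectImageUrls is a hypothetical helper that simply walks any JSON value and keeps the strings that look like image URLs.

```javascript
// Matches http(s) URLs that end in a common image extension,
// optionally followed by a query string.
const IMAGE_URL = /^https?:\/\/\S+\.(?:png|jpe?g|gif|webp|svg)(?:\?\S*)?$/i;

// Hypothetical helper: recursively collect strings that look like image URLs
// from any parsed JSON value (object, array, or primitive).
function collectImageUrls(value, found = new Set()) {
  if (typeof value === "string") {
    if (IMAGE_URL.test(value)) found.add(value);
  } else if (Array.isArray(value)) {
    value.forEach((item) => collectImageUrls(item, found));
  } else if (value !== null && typeof value === "object") {
    Object.values(value).forEach((item) => collectImageUrls(item, found));
  }
  return found;
}

// Made-up response body, standing in for what a data request might return.
const sample = {
  data: [
    { title: "a", cover: "https://example.com/img/a.png" },
    { title: "b", cover: "https://example.com/img/b.jpg?w=200", tags: ["x"] },
    { title: "c", cover: "" },
  ],
};

console.log([...collectImageUrls(sample)]);
```

The collected addresses can then be handed to the same download function that is used for static pages.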

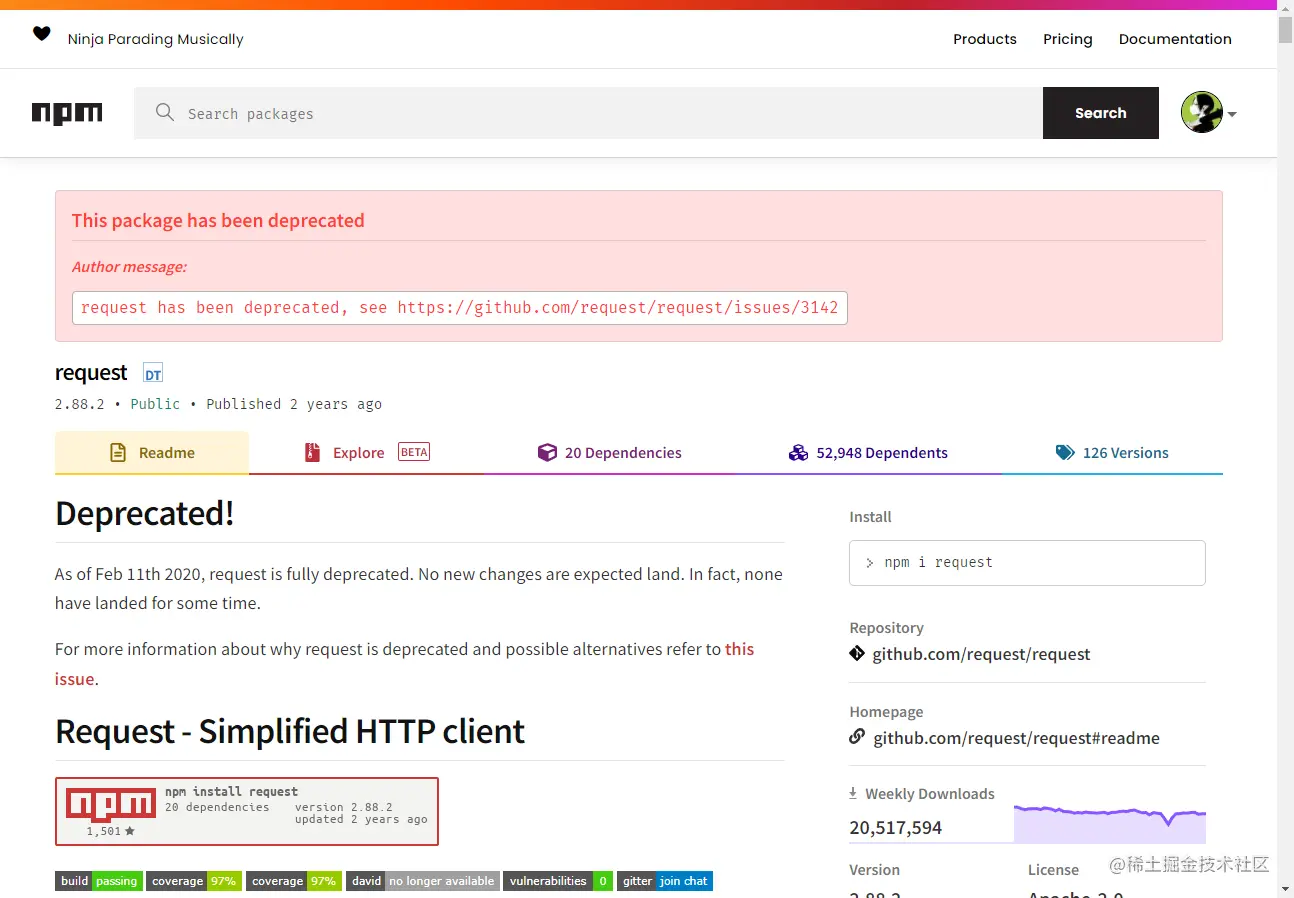

One more thing: many people use request.js to handle the requests when downloading images. That works, and it even takes less code, but the library was deprecated in 2020. It is better to switch to a library that is still updated and maintained.
