Understand the Python crawler parser BeautifulSoup4 in one article


This article covers BeautifulSoup4, a parser commonly used in Python crawlers: what the library is, how to install and import it, and which parsers it supports. I hope it will be helpful to everyone.


1. Introduction to the BeautifulSoup4 library

1. Introduction

Beautiful Soup is a Python library that extracts data from HTML or XML files. Working through the parser of your choice, it provides the usual ways of navigating, searching, and modifying a document. Beautiful Soup can save you hours or even days of work.

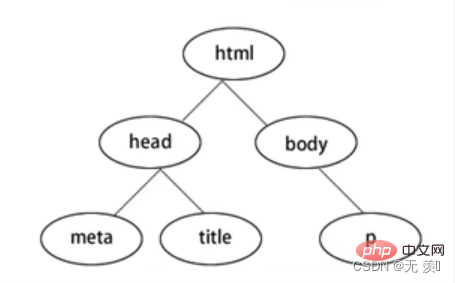
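The three kinds of operation mentioned above (navigation, search, and modification) can be sketched as follows. This is a minimal illustration, not the only way to use the library; the HTML snippet and the variable names are made up for the example.

```python
from bs4 import BeautifulSoup

# Hypothetical HTML snippet, used only for illustration.
html = """
<html><head><title>Demo page</title></head>
<body>
<p class="intro">Hello, <b>world</b>!</p>
<a href="/first">First link</a>
<a href="/second">Second link</a>
</body></html>
"""

soup = BeautifulSoup(html, "html.parser")

# Navigation: reach a tag by walking down the tree with attribute access.
title_text = soup.title.string

# Search: collect the href attribute of every <a> tag.
links = [a["href"] for a in soup.find_all("a")]

# Modification: replace the text inside the <b> tag in place.
soup.b.string = "Beautiful Soup"
intro_text = soup.find("p", class_="intro").get_text()

print(title_text)
print(links)
print(intro_text)
```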

BeautifulSoup4 converts the web page into a DOM tree:

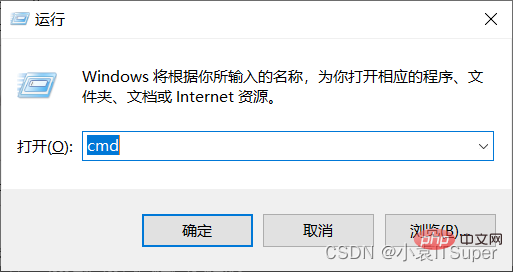
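To make the tree structure concrete, here is a small sketch that walks from one node to its children, parent, sibling, and ancestors. The markup is a made-up example, and the built-in `html.parser` is assumed; other parsers may add wrapper tags such as `<head>` around the same input.

```python
from bs4 import BeautifulSoup
from bs4.element import Tag

# Hypothetical markup: one <div> holding two <p> children.
html = "<html><body><div><p>One</p><p>Two</p></div></body></html>"
soup = BeautifulSoup(html, "html.parser")

# Children: the tags directly below <div> (text nodes are skipped).
child_names = [child.name for child in soup.div.children if isinstance(child, Tag)]

# Parent and sibling of the first <p>.
first_p = soup.p
parent_name = first_p.parent.name
sibling_text = first_p.find_next_sibling("p").get_text()

# Ancestors: every node between the first <p> and the document root.
ancestors = [node.name for node in first_p.parents]

print(child_names)
print(parent_name, sibling_text)
print(ancestors)
```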

2. Download the module

1. On a Windows computer, press Win + R and enter: cmd

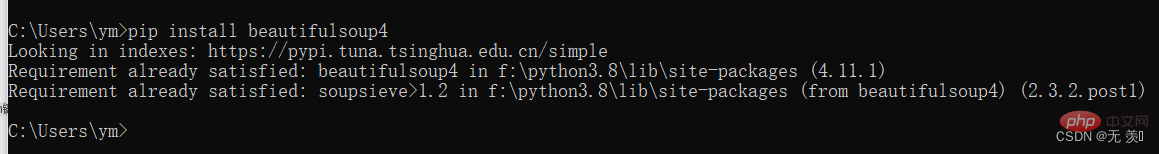

2. Install beautifulsoup4 by entering the corresponding pip command: pip install beautifulsoup4. I had already installed it, so pip shows the installed version, which confirms that the installation was successful.
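If you want to confirm the installation from Python rather than from the pip output, a check along these lines may help. It is only a sketch: it looks up the `bs4` package (the import name of beautifulsoup4) and prints its version if it is found.

```python
import importlib.util

# Look for the bs4 package without importing it first,
# so the script does not crash when it is missing.
installed = importlib.util.find_spec("bs4") is not None

if installed:
    import bs4
    print("beautifulsoup4 version:", bs4.__version__)
else:
    print("bs4 not found; run: pip install beautifulsoup4")
```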

3. Import the package

from bs4 import BeautifulSoup

| Usage | Advantages | Disadvantages |
|---|---|---|
| BeautifulSoup(html, 'html.parser') | Python's built-in standard library, moderate execution speed, strong document fault tolerance | Python 2.7.3 and versions before Python 3.2.2 have poor document fault tolerance |
| BeautifulSoup(html, 'lxml') | Fast, strong document fault tolerance | Requires installing a C library |
| BeautifulSoup(html, 'xml') | Fast, the only parser that supports XML | Requires installing a C library |
| BeautifulSoup(html, 'html5lib') | The best fault tolerance, parses documents the way a browser does, generates documents in HTML5 format | Slow, does not rely on external extensions |
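Because lxml and html5lib are separate installs, a script can try a preferred parser and fall back to the built-in one. The sketch below assumes that approach; `make_soup` is a hypothetical helper written for this example, not part of the library. It relies on Beautiful Soup raising `FeatureNotFound` when the requested parser is not installed.

```python
from bs4 import BeautifulSoup, FeatureNotFound


def make_soup(markup, preferred=("lxml", "html5lib", "html.parser")):
    """Hypothetical helper: try each parser in order, return (soup, parser_name)."""
    for parser in preferred:
        try:
            return BeautifulSoup(markup, parser), parser
        except FeatureNotFound:
            # This parser is not installed; try the next one.
            continue
    raise RuntimeError("no usable parser found")


# Deliberately broken markup: neither <p> nor <b> is closed.
soup, parser_used = make_soup("<p>Unclosed paragraph<b>bold")

print(parser_used)
print(soup.b.get_text())
```

Since 'html.parser' ships with Python, the last entry in the tuple should always be available, so the fallback is expected to succeed even on a machine with no extra parsers installed.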
