How to solve the MySQL deep paging problem

This article covers an elegant solution to the MySQL deep paging problem: how to optimize deep pagination when a MySQL table holds a large amount of data. It also includes the pseudocode from a recent case of optimizing a slow SQL query. I hope it will be helpful to everyone.


In day-to-day development, everyone is familiar with limit. But when the offset is very large, queries that use limit become slower and slower. At first, with limit 2000, the query may take about 200 ms to return the required data. With limit 4000 offset 100000, however, the same query already takes about 1 s, and it only gets slower as the offset grows.


1. Description of the limit deep paging problem

Let's look at the table structure first (this is only an example: the structure is incomplete and irrelevant fields are not shown).

    CREATE TABLE `p2p_detail_record` (
      `id` varchar(32) COLLATE utf8mb4_bin NOT NULL DEFAULT '' COMMENT 'primary key',
      `batch_num` int NOT NULL DEFAULT '0' COMMENT 'number of reports',
      `uptime` bigint NOT NULL DEFAULT '0' COMMENT 'report time',
      `uuid` varchar(64) COLLATE utf8mb4_bin NOT NULL DEFAULT '' COMMENT 'meeting id',
      `start_time_stamp` bigint NOT NULL DEFAULT '0' COMMENT 'start time',
      `answer_time_stamp` bigint NOT NULL DEFAULT '0' COMMENT 'answer time',
      `end_time_stamp` bigint NOT NULL DEFAULT '0' COMMENT 'end time',
      `duration` int NOT NULL DEFAULT '0' COMMENT 'duration',
      PRIMARY KEY (`id`),
      KEY `idx_uuid` (`uuid`),
      KEY `idx_start_time_stamp` (`start_time_stamp`) -- index
    ) ENGINE=InnoDB DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_bin COMMENT='p2p call record detail table';

Suppose the paging SQL we want to run looks like this:

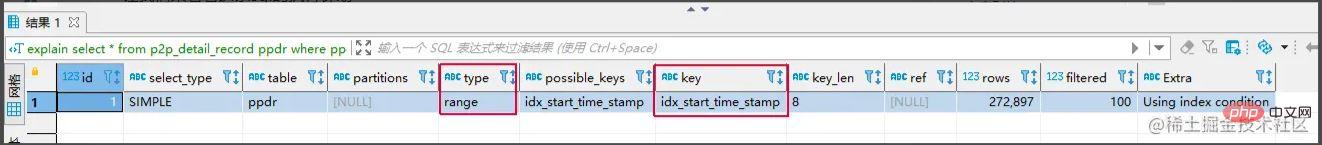

    select * from p2p_detail_record ppdr where ppdr.start_time_stamp > 1656666798000 limit 0,2000

This query takes 94 ms, which is quite fast. But with limit 100000,2000 the same query takes 1.5 s, which is already very slow. What if the offset is even larger? To see why this happens, recall how the two kinds of index store data.

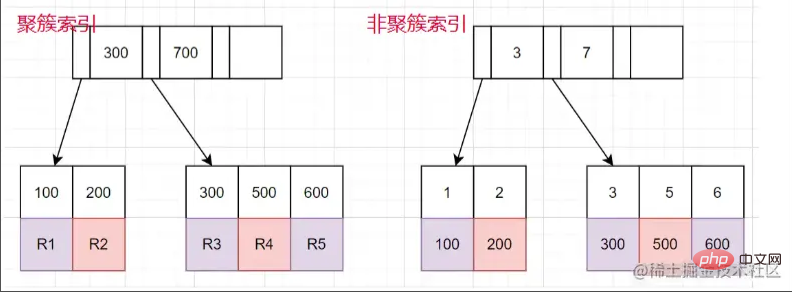

Clustered index: Leaf nodes store the entire row of data.

Non-clustered index: Leaf nodes store the indexed column values and the primary key value of the corresponding row, not the row itself.

The process of querying through a non-clustered index:

- Find the matching leaf node in the non-clustered index tree and read the primary key value from it.
- Use that primary key value to go back to the clustered index tree and find the entire row. (This second step is called going back to the table.)

limit 100000,10 scans 100010 rows, while limit 0,10 scans only 10 rows. In the first case the query goes back to the table 100010 times, even though only the last 10 rows are returned, and most of the time is spent on those lookups.
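To make the counting above concrete, here is a minimal Java sketch. It does not talk to MySQL: the class `OffsetScanDemo`, its two maps, and the `tableLookups` counter are hypothetical stand-ins for the clustered index, the non-clustered index, and the number of times the query goes back to the table. It assumes, as described above, that every index entry walked costs one lookup, including the entries that are skipped by the offset.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Toy model of "LIMIT offset, count" over a non-clustered index (an illustration, not MySQL code). */
class OffsetScanDemo {
    // Stand-in for the clustered index: primary key -> full row.
    private final Map<Integer, String> clustered = new HashMap<>();
    // Stand-in for the non-clustered index: indexed value -> primary key.
    private final TreeMap<Long, Integer> secondary = new TreeMap<>();
    // Number of times a full row was fetched through the clustered index.
    long tableLookups = 0;

    OffsetScanDemo(int rowCount) {
        for (int id = 0; id < rowCount; id++) {
            clustered.put(id, "row-" + id);
            secondary.put(1_000L + id, id);
        }
    }

    /** Emulates "LIMIT offset, count": every index entry walked costs one table lookup. */
    List<String> limit(int offset, int count) {
        List<String> page = new ArrayList<>();
        int walked = 0;
        for (int id : secondary.values()) {
            if (walked == offset + count) {
                break;
            }
            String row = clustered.get(id); // going back to the table
            tableLookups++;
            if (walked >= offset) {
                page.add(row);              // only rows past the offset are returned
            }
            walked++;
        }
        return page;
    }

    public static void main(String[] args) {
        OffsetScanDemo shallow = new OffsetScanDemo(200_000);
        shallow.limit(0, 10);
        OffsetScanDemo deep = new OffsetScanDemo(200_000);
        deep.limit(100_000, 10);
        System.out.println(shallow.tableLookups + " lookups vs " + deep.tableLookups + " lookups");
    }
}
```

Both calls return 10 rows, but the counter for the deep page grows with the offset, which is the cost the rest of the article tries to avoid.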

Core idea of the solution: Can we know in advance which primary key id to start from, so that the query goes back to the table far fewer times?

Common solutions

Optimization through subqueries

    select *
    from p2p_detail_record ppdr
    where id >= (select id from p2p_detail_record ppdr2 where ppdr2.start_time_stamp > 1656666798000 limit 100000,1)
    limit 2000

This also returns 2,000 rows starting from row 100,000, but the query takes about 200 ms, which is much faster. The inner query only needs the id, which it can read from the non-clustered index without going back to the table. Note that the result matches the original query only when the order of id follows the order of start_time_stamp, because the outer query selects by id alone.
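The two steps of the subquery approach can be sketched in Java in the same toy style. Nothing here is MySQL code: `SubqueryPagingDemo`, `idAtOffset`, and `pageFrom` are hypothetical names, and the sketch assumes that ids increase together with the indexed value, which is the condition under which the subquery form returns the same rows as the plain limit query.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.TreeMap;

/** Toy model of "id >= (subquery with LIMIT offset,1) LIMIT count" (an illustration, not MySQL code). */
class SubqueryPagingDemo {
    // Stand-in for the clustered index: primary key -> full row, ordered by primary key.
    private final TreeMap<Integer, String> clustered = new TreeMap<>();
    // Stand-in for the non-clustered index: indexed value -> primary key.
    private final TreeMap<Long, Integer> secondary = new TreeMap<>();
    // Number of full rows fetched through the clustered index.
    long tableLookups = 0;

    SubqueryPagingDemo(int rowCount) {
        // Assumption: ids grow in step with the indexed value.
        for (int id = 0; id < rowCount; id++) {
            clustered.put(id, "row-" + id);
            secondary.put(1_000L + id, id);
        }
    }

    /** Inner query: walk the non-clustered index only; returns null when the offset is past the end. */
    Integer idAtOffset(int offset) {
        int walked = 0;
        for (int id : secondary.values()) {
            if (walked == offset) {
                return id; // the index entry already holds the id, so no table lookup is needed
            }
            walked++;
        }
        return null;
    }

    /** Outer query: read at most count rows with id >= startId from the clustered index. */
    List<String> pageFrom(Integer startId, int count) {
        List<String> page = new ArrayList<>();
        if (startId == null) {
            return page;
        }
        for (String row : clustered.tailMap(startId, true).values()) {
            if (page.size() == count) {
                break;
            }
            page.add(row);
            tableLookups++;
        }
        return page;
    }
}
```

Calling `pageFrom(idAtOffset(100_000), 2_000)` still walks 100,000 index entries in the first step, but it fetches only the 2,000 rows that are actually returned.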

Tag recording method

The idea is to mark the row where the previous query stopped and to start scanning from that row the next time. The effect is similar to a bookmark.

    select * from p2p_detail_record ppdr where ppdr.id > 'bb9d67ee6eac4cab9909bad7c98f54d4' order by id limit 2000

Note: bb9d67ee6eac4cab9909bad7c98f54d4 is the id of the last row returned by the previous query.

This query hits the primary key index on id. But the method has several disadvantages:

- It can only query consecutive pages; it cannot jump across pages.
- It needs a column that behaves like a continuous auto-increment field (so that order by id can be used).
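As a minimal sketch of the bookmark idea, the Java snippet below pages through an in-memory sorted map instead of a real table. The class `TagRecordingDemo`, the method `nextPageIds`, and the `id000`-style keys are hypothetical; `tailMap(lastId, false)` plays the role of `where id > lastId order by id`.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.NavigableMap;
import java.util.TreeMap;

/** Toy model of the tag recording method over a sorted map (an illustration, not MySQL code). */
class TagRecordingDemo {

    /** Emulates "WHERE id > lastId ORDER BY id LIMIT pageSize"; lastId == null means the first page. */
    static List<String> nextPageIds(NavigableMap<String, String> table, String lastId, int pageSize) {
        // Seek directly to the first key after the bookmark instead of counting an offset.
        NavigableMap<String, String> rest = (lastId == null) ? table : table.tailMap(lastId, false);
        List<String> ids = new ArrayList<>();
        for (String id : rest.keySet()) {
            if (ids.size() == pageSize) {
                break;
            }
            ids.add(id);
        }
        return ids;
    }

    public static void main(String[] args) {
        NavigableMap<String, String> table = new TreeMap<>();
        for (int i = 0; i < 25; i++) {
            table.put(String.format("id%03d", i), "row-" + i);
        }
        String lastId = null;
        while (true) {
            List<String> page = nextPageIds(table, lastId, 10);
            if (page.isEmpty()) {
                break;
            }
            lastId = page.get(page.size() - 1); // the bookmark for the next query
            System.out.println(page.size() + " rows, last id " + lastId);
        }
    }
}
```

Each call starts at the bookmark, so the work per page does not depend on how many pages were read before. The price is the first disadvantage listed above: there is no way to ask for page 50 without having the last id of page 49.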

- Using subquery optimization

Advantages: You can query across pages and jump directly to whichever page you want.

Disadvantages: It is not as efficient as the tag recording method. Reason: to read the data after row 100,000, for example, the inner query still has to walk the first 100,000 entries of the non-clustered index to find the id at that position, and only then can the outer query start from that id.

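- Using the tag recording method

Advantages: The query efficiency is very stable and very fast.

Disadvantages:

- It cannot query across pages.
- It needs a column that behaves like a continuous auto-increment field.

A note on the second point: this is usually easy to solve, because any column whose values are unique can be used for sorting. If you sort by a column that may contain duplicates, MySQL returns rows with equal values in no guaranteed order, so when a page boundary falls inside a run of equal values, the same row may appear on two adjacent pages.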
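One common way to keep the bookmark stable when the sort column can repeat is to add a unique column as a tie-breaker and carry both values in the bookmark. This goes slightly beyond what the article describes, so treat it as a sketch under that assumption. The class `CompositeCursorDemo`, its `Row` type, and `nextPage` are hypothetical names; the in-memory filter corresponds to a condition of the form `start_time_stamp > ? or (start_time_stamp = ? and id > ?)` with `order by start_time_stamp, id`.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

/** Toy model of a two-column bookmark: sort column plus a unique tie-breaker (an illustration). */
class CompositeCursorDemo {

    static final class Row {
        final long startTimeStamp; // may repeat across rows
        final String id;           // unique

        Row(long startTimeStamp, String id) {
            this.startTimeStamp = startTimeStamp;
            this.id = id;
        }
    }

    // Paging order: start_time_stamp first, id as the tie-breaker.
    static final Comparator<Row> ORDER = (a, b) -> {
        int byTime = Long.compare(a.startTimeStamp, b.startTimeStamp);
        return byTime != 0 ? byTime : a.id.compareTo(b.id);
    };

    /** Returns up to pageSize rows that sort strictly after the bookmark; last == null means the first page. */
    static List<Row> nextPage(List<Row> rows, Row last, int pageSize) {
        List<Row> sorted = new ArrayList<>(rows);
        sorted.sort(ORDER);
        List<Row> page = new ArrayList<>();
        for (Row r : sorted) {
            if (page.size() == pageSize) {
                break;
            }
            if (last == null || ORDER.compare(r, last) > 0) {
                page.add(r);
            }
        }
        return page;
    }
}
```

Because the pair of values is unique, a page boundary that falls inside a run of equal start_time_stamp values no longer repeats or skips rows.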

Practical case

Requirement: Query the data for a given time period. Assume that several hundred thousand rows have to be read and then processed.

Requirement analysis: 1. Reading the data in batches (paged queries) runs into the deep paging problem, which makes the job slow.

The table is the p2p_detail_record table shown in section 1.

Pseudocode implementation:

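    // id of the last row of the previous page; null for the first query
    String lastId = null;
    // number of rows per page
    int pageSize = 2000;
    List<P2pRecordVo> list;
    while (true) {
        // tag recording method: fetch the page that follows lastId
        list = listP2pRecordByPage(lastId, pageSize);
        if (list == null || list.isEmpty()) {
            break; // no more rows; also avoids calling get() on an empty list
        }
        // remember the id of the last row of this page for the next query
        lastId = list.get(list.size() - 1).getId();
        // business logic that processes the data
        XXXXX();
    }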

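    <select id="listP2pRecordByPage">
        select *
        from p2p_detail_record ppdr where 1=1
        <if test="lastId != null">
            and ppdr.id > #{lastId}
        </if>
        order by id asc
        limit #{pageSize}
    </select>

There is a small optimization here: some people first sort all the data to obtain the minimum id, but sorting everything and then taking min(id) also takes quite a long time. In fact, the first query can simply omit lastId; the result is the same and the query is faster.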

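Conclusion

1. When the business needs to read a large amount of data from a table and the project architecture does not include ES, consider the tag recording method to improve query efficiency.

2. At the requirements level, also try to avoid features such as jumping to the last page of a paged query over a large data set, or restrict the feature so that users can only move forward one page at a time.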
