Compared with domestic shopping websites, Amazon can be requested directly with Python's basic requests library. As long as access is not too frequent, the data we want can be obtained without triggering the site's protection mechanism. This article briefly introduces the basic crawling process in three parts:

1. Use the get request of requests to obtain the page content of the Amazon list and details pages
2. Use css/xpath to parse the obtained content and extract the key data
3. The role of dynamic IP and how to use it
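Because the approach relies on not requesting too often, one simple way to keep the pace down is to pause for a randomized interval between requests. The following is a minimal sketch only: the helper names `polite_delays` and `crawl_slowly` and the 2 to 5 second range are assumptions chosen for illustration, not values taken from Amazon or from this article.

```python
import random
import time


def polite_delays(count, low=2.0, high=5.0, seed=None):
    """Return `count` random pause lengths (seconds) between `low` and `high`."""
    rng = random.Random(seed)
    return [rng.uniform(low, high) for _ in range(count)]


def crawl_slowly(urls, fetch, sleep=time.sleep, low=2.0, high=5.0):
    """Call `fetch(url)` for each URL, pausing between consecutive requests.

    `fetch` is any callable (for example a wrapper around requests.get);
    `sleep` is injectable so the pacing can be checked without waiting.
    """
    results = []
    delays = polite_delays(max(len(urls) - 1, 0), low, high)
    for index, url in enumerate(urls):
        if index > 0:
            sleep(delays[index - 1])  # wait before every request except the first
        results.append(fetch(url))
    return results
```

With requests, `fetch` could be something like `lambda url: requests.get(url, headers=headers)`; a suitable delay range depends on how many pages are being crawled.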

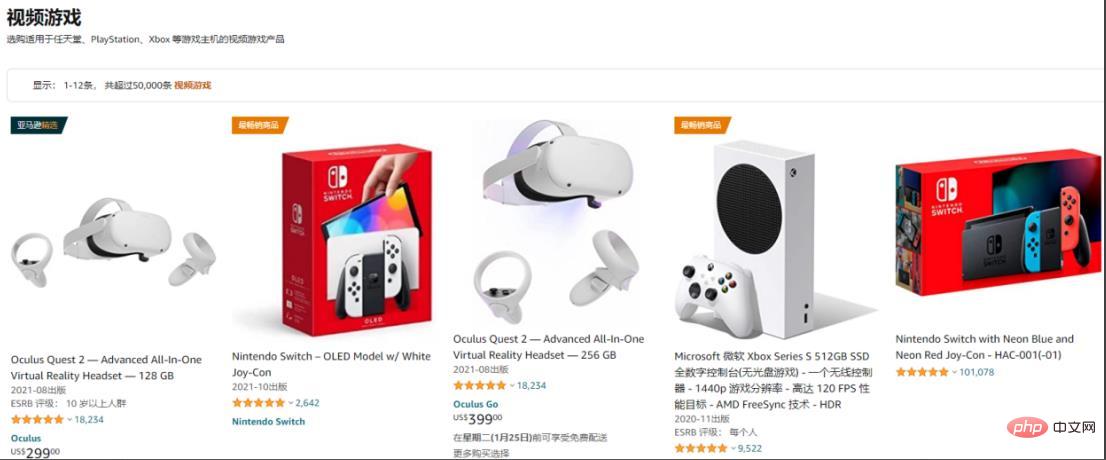

Take the game area as an example:

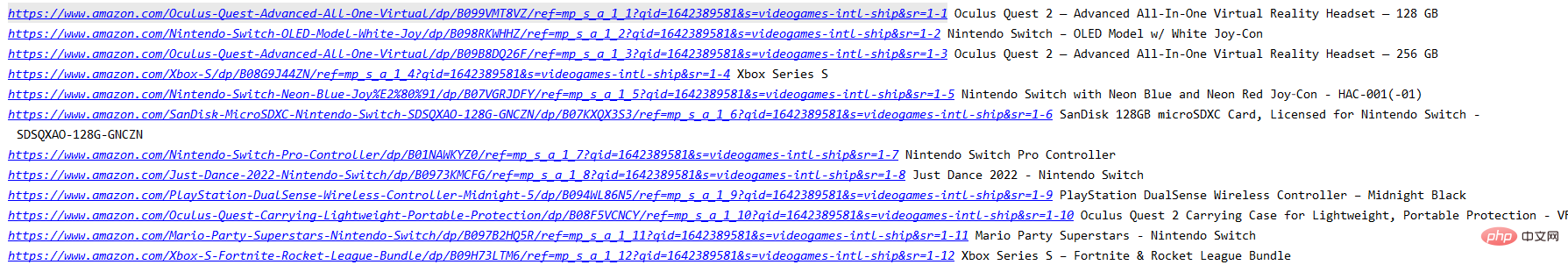

From the list page we can get basic product information, such as the product name and the details link, and then follow the link to access further content.

Use requests.get() to obtain the page content with custom headers set, then use XPath selectors to select the content of the relevant tags:

    import requests
    from parsel import Selector
    from urllib.parse import urljoin

    spiderurl = 'https://www.amazon.com/s?i=videogames-intl-ship'
    headers = {
        "authority": "www.amazon.com",
        "user-agent": "Mozilla/5.0 (iPhone; CPU iPhone OS 10_3_3 like Mac OS X) AppleWebKit/603.3.8 (KHTML, like Gecko) Mobile/14G60 MicroMessenger/6.5.19 NetType/4G Language/zh_TW",
    }

    resp = requests.get(spiderurl, headers=headers)
    content = resp.content.decode('utf-8')
    select = Selector(text=content)
    nodes = select.xpath("//a[@title='product-detail']")
    for node in nodes:
        itemUrl = node.xpath("./@href").extract_first()
        itemName = node.xpath("./div/h2/span/text()").extract_first()
        if itemUrl and itemName:
            # Use urljoin to build the complete link
            itemUrl = urljoin(spiderurl, itemUrl)
            print(itemUrl, itemName)

The information that can be obtained from the list page at this point:

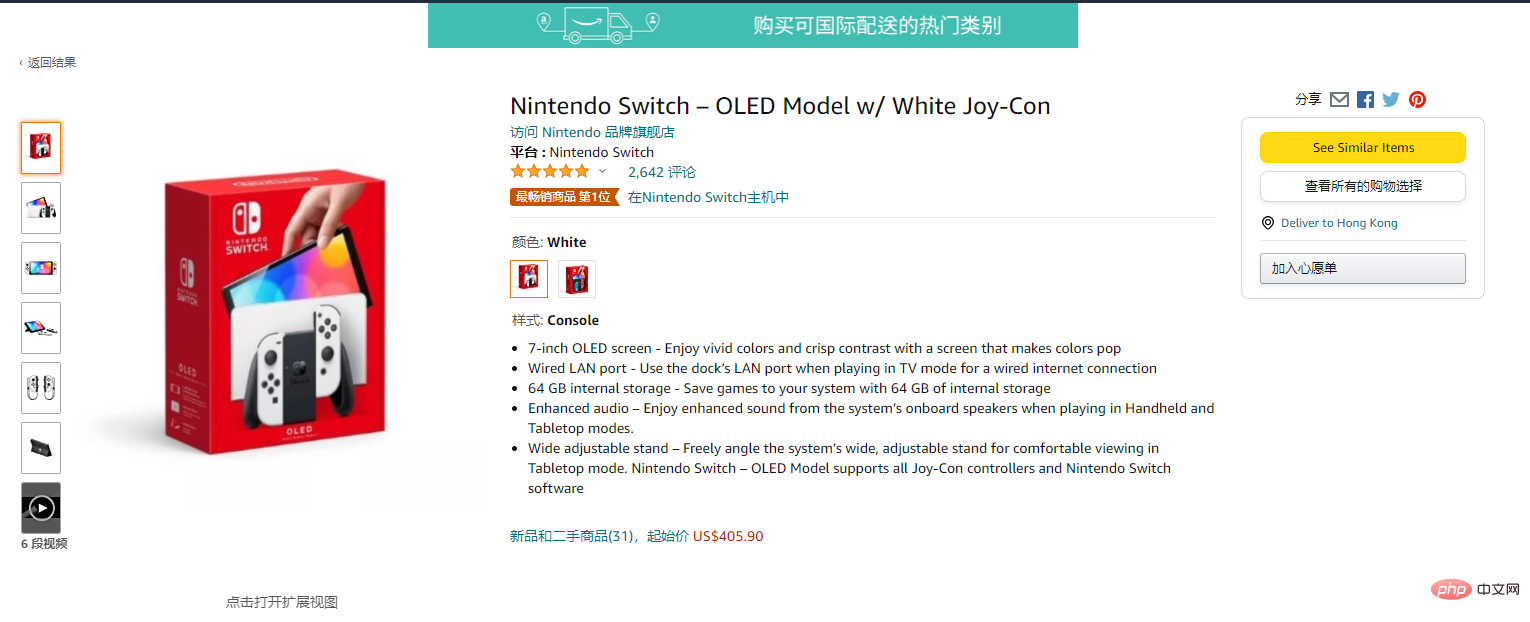
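To see what the XPath expressions and `urljoin` do without sending a request, the sketch below runs them against a small hand-written fragment. The markup is invented for illustration and is far simpler than a real Amazon page. The standard library's `xml.etree.ElementTree` stands in for parsel here so the example needs no third-party packages. It supports only a limited XPath subset and requires well-formed markup.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urljoin

# Invented, well-formed sample markup; real list pages are much larger.
SAMPLE = """
<div>
  <a title="product-detail" href="/dp/EXAMPLE01"><div><h2><span>Sample Game One</span></h2></div></a>
  <a title="other" href="/help"><div><h2><span>Not a product</span></h2></div></a>
  <a title="product-detail" href="https://www.amazon.com/dp/EXAMPLE02"><div><h2><span>Sample Game Two</span></h2></div></a>
</div>
"""

BASE_URL = 'https://www.amazon.com/s?i=videogames-intl-ship'


def parse_items(markup, base_url):
    """Return (absolute_url, name) pairs for links titled 'product-detail'."""
    root = ET.fromstring(markup)
    items = []
    for node in root.findall(".//a[@title='product-detail']"):
        href = node.get('href')
        name = node.findtext('./div/h2/span')
        if href and name:
            # urljoin resolves relative links and leaves absolute ones unchanged
            items.append((urljoin(base_url, href), name))
    return items


for url, name in parse_items(SAMPLE, BASE_URL):
    print(url, name)
```

The relative link `/dp/EXAMPLE01` is resolved against the host of the list URL, while the already absolute link passes through unchanged, which is why the crawler can call `urljoin` on every `href` it finds.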

Enter the details page:

    res = requests.get(itemUrl, headers=headers)
    content = res.content.decode('utf-8')
    Select = Selector(text=content)
    itemPic = Select.css('#main-image::attr(src)').extract_first()
    itemPrice = Select.css('.a-offscreen::text').extract_first()
    itemInfo = Select.css('#feature-bullets').extract_first()

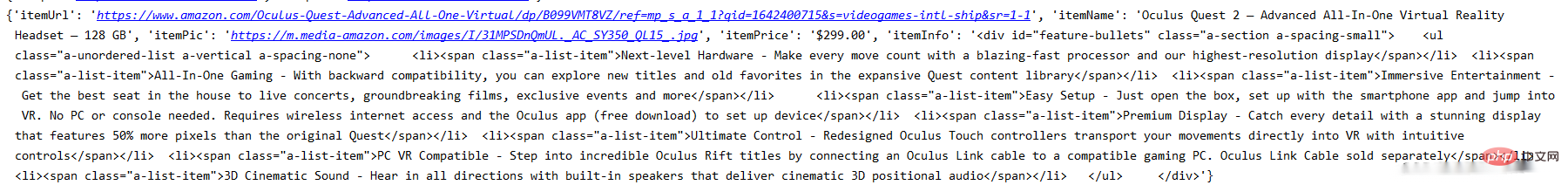
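Note that `itemInfo` holds the raw HTML of the `#feature-bullets` element rather than plain text. If only the bullet text is wanted, one option is to post-process that HTML. The sketch below uses the standard library's `html.parser`; the class name `BulletText`, the helper `bullets_from_html`, and the sample fragment are all made up for illustration and do not reflect the exact markup Amazon serves.

```python
from html.parser import HTMLParser


class BulletText(HTMLParser):
    """Collect the visible text of each <li> in an HTML fragment."""

    def __init__(self):
        super().__init__()
        self.bullets = []
        self._depth = 0   # greater than 0 while inside an <li>
        self._parts = []

    def handle_starttag(self, tag, attrs):
        if tag == 'li':
            self._depth += 1
            if self._depth == 1:
                self._parts = []

    def handle_endtag(self, tag):
        if tag == 'li' and self._depth > 0:
            self._depth -= 1
            if self._depth == 0:
                # Collapse runs of whitespace into single spaces
                text = ' '.join(''.join(self._parts).split())
                if text:
                    self.bullets.append(text)

    def handle_data(self, data):
        if self._depth > 0:
            self._parts.append(data)


def bullets_from_html(fragment):
    """Return the list of bullet strings found in `fragment`."""
    parser = BulletText()
    parser.feed(fragment or '')  # tolerate None when the selector matched nothing
    parser.close()
    return parser.bullets


# Invented sample fragment for demonstration
sample = ('<div id="feature-bullets"><ul>'
          '<li><span> Wireless controller </span></li>'
          '<li><span>Includes  USB cable</span></li>'
          '</ul></div>')
print(bullets_from_html(sample))
```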

    data = {
        'itemUrl': itemUrl,
        'itemName': itemName,
        'itemPic': itemPic,
        'itemPrice': itemPrice,
        'itemInfo': itemInfo,
    }
    print(data)
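The `itemPrice` value is still a string taken straight from the page. For sorting or comparing prices it usually helps to convert it to a number. The sketch below is a minimal illustration that assumes US-style formatting such as `$1,299.99` (comma as thousands separator, dot as decimal point); the function name `parse_price` is hypothetical, and other locales would need different handling.

```python
import re
from decimal import Decimal


def parse_price(text):
    """Convert a price string such as '$1,299.99' to a Decimal, or None."""
    if not text:
        return None  # the selector matched nothing
    match = re.search(r'\d[\d,]*(?:\.\d+)?', text)
    if match is None:
        return None  # no digits, e.g. an "unavailable" message
    return Decimal(match.group(0).replace(',', ''))


print(parse_price('$59.99'))
print(parse_price('$1,299.99'))
```

`Decimal` is used instead of `float` so that amounts like 59.99 are stored exactly.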

http://www.ipidea.net/?utm-source=PHP&utm-keyword=?PHP

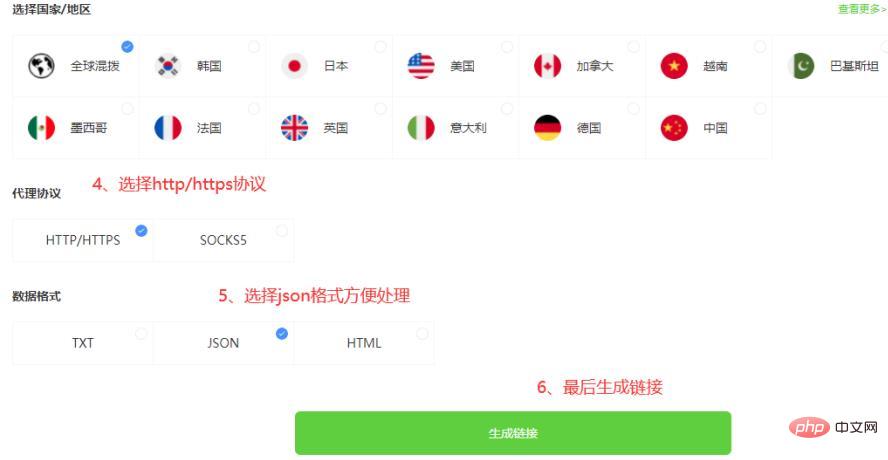

There are two ways to use the proxy: one is to obtain an IP address through the API, and the other is to authenticate with an account and password. The methods are as follows:

3.1.1 Obtaining a proxy through the API

3.1.2 Code for obtaining an IP through the API

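    def getProxies():
        # Fetch one, and only one, IP
        api_url = 'generated API link'  # placeholder: replace with your own link
        try:
            # The request is inside the try block so that timeouts are caught too
            res = requests.get(api_url, timeout=5)
            if res.status_code == 200:
                api_data = res.json()['data'][0]
                proxies = {
                    'http': 'http://{}:{}'.format(api_data['ip'], api_data['port']),
                    'https': 'http://{}:{}'.format(api_data['ip'], api_data['port']),
                }
                print(proxies)
                return proxies
            else:
                print('Failed to obtain a proxy')
        except Exception as e:
            # Covers network errors and an unexpected response format
            print('Failed to obtain a proxy', e)
        return None

3.2.1 Obtaining a proxy with account and password (registration address: http://www.ipidea.net/?utm-source=PHP&utm-keyword=?PHP)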
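With account and password authentication, the credentials are typically carried in the proxy URL itself, in the general form `scheme://user:password@host:port`. The sketch below builds a requests-style proxies mapping in that form. The function name `build_auth_proxy`, the host `proxy.example.com`, and the port are placeholders invented for illustration; the provider's actual host, port, and username format should be taken from its account center.

```python
from urllib.parse import quote


def build_auth_proxy(username, password, host, port, scheme='http'):
    """Build a requests-style proxies mapping with credentials in the URL."""
    # Percent-encode the credentials so characters such as '@' or ':'
    # cannot be confused with the URL's own separators
    user = quote(username, safe='')
    secret = quote(password, safe='')
    entry = '{}://{}:{}@{}:{}'.format(scheme, user, secret, host, port)
    return {'http': entry, 'https': entry}


# Placeholder values only
print(build_auth_proxy('user-zone-custom', 'p@ss:word', 'proxy.example.com', 8000))
```

The same entry is used for both `http` and `https` because the mapping keys describe the scheme of the target URL, not the scheme of the proxy.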

Because this method uses account and password verification, you first need to go to the account center and fill in the information to create a sub-account:

    # Obtain a proxy using account and password
    def getAccountIp():
        # Return the proxy once the test request succeeds
        mainUrl = 'https://api.myip.la/en?json'
        headers = {
            "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8",
            "User-Agent": "Mozilla/5.0 (iPhone; CPU iPhone OS 10_3_3 like Mac OS X) AppleWebKit/603.3.8 (KHTML, like Gecko) Mobile/14G60 MicroMessenger/6.5.19 NetType/4G Language/zh_TW",
        }
        # Credentials go before the host in the usual user:password@host:port form;
        # replace "account" and "password" with the sub-account's values
        entry = 'http://{}-zone-custom:{}@proxy.ipidea.io:2334'.format("account", "password")
        proxy = {
            'http': entry,
            'https': entry,
        }
        try:
            res = requests.get(mainUrl, headers=headers, proxies=proxy, timeout=10)
            if res.status_code == 200:
                return proxy
        except Exception as e:
            print("Request failed", e)
        return None

The complete code, using the API method to obtain proxies:

    # coding=utf-8

    import requests
    from parsel import Selector
    from urllib.parse import urljoin

    def getProxies():
        # Fetch one, and only one, IP
        api_url = 'generated API link'  # placeholder: replace with your own link
        try:
            # The request is inside the try block so that timeouts are caught too
            res = requests.get(api_url, timeout=5)
            if res.status_code == 200:
                api_data = res.json()['data'][0]
                proxies = {
                    'http': 'http://{}:{}'.format(api_data['ip'], api_data['port']),
                    'https': 'http://{}:{}'.format(api_data['ip'], api_data['port']),
                }
                print(proxies)
                return proxies
            else:
                print('Failed to obtain a proxy')
        except Exception as e:
            print('Failed to obtain a proxy', e)
        return None

    spiderurl = 'https://www.amazon.com/s?i=videogames-intl-ship'
    headers = {
        "authority": "www.amazon.com",
        "user-agent": "Mozilla/5.0 (iPhone; CPU iPhone OS 10_3_3 like Mac OS X) AppleWebKit/603.3.8 (KHTML, like Gecko) Mobile/14G60 MicroMessenger/6.5.19 NetType/4G Language/zh_TW",
    }

    # List page
    proxies = getProxies()
    resp = requests.get(spiderurl, headers=headers, proxies=proxies)
    content = resp.content.decode('utf-8')
    select = Selector(text=content)
    nodes = select.xpath("//a[@title='product-detail']")
    for node in nodes:
        itemUrl = node.xpath("./@href").extract_first()
        itemName = node.xpath("./div/h2/span/text()").extract_first()
        if itemUrl and itemName:
            itemUrl = urljoin(spiderurl, itemUrl)

            # Details page, requested through a newly fetched proxy
            proxies = getProxies()
            res = requests.get(itemUrl, headers=headers, proxies=proxies)
            content = res.content.decode('utf-8')
            Select = Selector(text=content)
            itemPic = Select.css('#main-image::attr(src)').extract_first()
            itemPrice = Select.css('.a-offscreen::text').extract_first()
            itemInfo = Select.css('#feature-bullets').extract_first()

            data = {
                'itemUrl': itemUrl,
                'itemName': itemName,
                'itemPic': itemPic,
                'itemPrice': itemPrice,
                'itemInfo': itemInfo,
            }
            print(data)

With the steps above, the most basic retrieval of Amazon product information is complete.

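So far only the most basic data has been obtained. To get more, you can modify the xpath/css selectors yourself to extract the content you want. In addition, a stable dynamic IP means less waiting when making requests, so whether you are testing during development or crawling in small batches, it can improve your efficiency. That is all for this article.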
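When a proxy does turn out to be slow or unavailable, one common way to cut the waiting is to retry the request with a newly obtained proxy instead of failing outright. The sketch below shows that idea in isolation. The function name `fetch_with_retries` and its parameters are hypothetical, and both the fetcher and the proxy source are passed in as callables, so the logic does not depend on any particular provider.

```python
def fetch_with_retries(url, fetch, get_proxies, attempts=3):
    """Try `fetch(url, proxies)` up to `attempts` times with a new proxy each time.

    `fetch` should return a result or raise an exception on failure;
    `get_proxies` returns a proxies mapping (or None to go without a proxy).
    """
    last_error = None
    for _ in range(attempts):
        proxies = get_proxies()
        try:
            return fetch(url, proxies)
        except Exception as error:  # broad on purpose: the fetcher is unknown here
            last_error = error
    raise RuntimeError('all {} attempts failed'.format(attempts)) from last_error
```

In the script above, `get_proxies` could be `getProxies`, and `fetch` could be a small wrapper such as `lambda url, proxies: requests.get(url, headers=headers, proxies=proxies, timeout=10)`.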
