Recently we replaced our production gateway with APISIX and ran into several problems. One of the harder ones to solve was process isolation in APISIX.

Mutual interference between different types of requests in APISIX

The first problem we hit was that the APISIX Prometheus plug-in slowed down normal business API responses when there was a large amount of monitoring data. With the Prometheus plug-in enabled, the monitoring data that APISIX collects internally can be fetched over HTTP and then displayed on a dashboard:

```shell
curl http://172.30.xxx.xxx:9091/apisix/prometheus/metrics
```

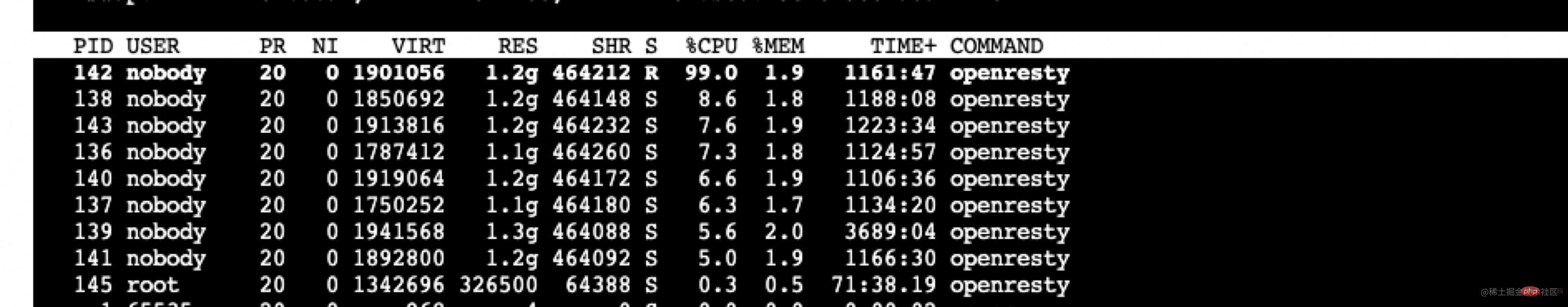

The business systems behind our gateway are complex, with 4,000 routes. Each pull from the Prometheus plug-in returns more than 500,000 metrics, over 80 MB in total. All of this has to be assembled and sent from the Lua layer. While such a request is being served, the worker process handling it runs at very high CPU usage and takes more than 2 s to finish. As a result, normal business requests on that worker are delayed by about 2 s.

Our first stopgap was to modify the Prometheus plug-in to narrow the range and volume of what it collects and sends. This let us work around the problem for the time being. Analyzing the data collected by the plug-in gave the following series counts:

| Series count | Metric |
|---:|---|
| 407171 | apisix_http_latency_bucket |
| 29150 | apisix_http_latency_sum |
| 29150 | apisix_http_latency_count |
| 20024 | apisix_bandwidth |
| 17707 | apisix_http_status |
| 11 | apisix_etcd_modify_indexes |
| 6 | apisix_nginx_http_current_connections |
| 1 | apisix_node_info |
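A short C program can serve as a quick arithmetic check on these counts by tallying them. The struct and function names (metric_count, metric_total, metric_share, print_breakdown) are illustrative only. The numbers are the ones from the table.

```c
#include <assert.h>
#include <stdio.h>
#include <string.h>

/* Series counts per metric, copied from the table above. */
struct metric_count {
    const char *name;
    long series;
};

static const struct metric_count counts[] = {
    {"apisix_http_latency_bucket", 407171},
    {"apisix_http_latency_sum", 29150},
    {"apisix_http_latency_count", 29150},
    {"apisix_bandwidth", 20024},
    {"apisix_http_status", 17707},
    {"apisix_etcd_modify_indexes", 11},
    {"apisix_nginx_http_current_connections", 6},
    {"apisix_node_info", 1},
};

#define N_COUNTS (sizeof counts / sizeof counts[0])

/* Total number of series in one pull. */
long metric_total(void) {
    long total = 0;
    for (size_t i = 0; i < N_COUNTS; i++) {
        assert(counts[i].series >= 0);
        total += counts[i].series;
    }
    return total;
}

/* Percentage of all series taken by metrics whose name starts with prefix. */
double metric_share(const char *prefix) {
    long total = metric_total();
    long part = 0;
    size_t len = strlen(prefix);
    for (size_t i = 0; i < N_COUNTS; i++) {
        if (strncmp(counts[i].name, prefix, len) == 0) {
            part += counts[i].series;
        }
    }
    return total > 0 ? 100.0 * (double) part / (double) total : 0.0;
}

/* Print one line per metric with its share of the total. */
void print_breakdown(void) {
    long total = metric_total();
    for (size_t i = 0; i < N_COUNTS; i++) {
        printf("%-40s %7ld  %5.1f%%\n", counts[i].name, counts[i].series,
               100.0 * (double) counts[i].series / (double) total);
    }
    printf("%-40s %7ld\n", "total", total);
}
```

The total comes to 503,220 series, which is consistent with the "more than 500,000" figure. The apisix_http_latency family (bucket, sum and count) accounts for roughly 92.5% of all series, and the buckets alone for about 81%. Histogram buckets are therefore where trimming saves the most.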

Based on what our business actually needs, we removed some of these metrics and trimmed part of the latency data.

Later, after asking in a GitHub issue (github.com/apache/apis…), I learned that APISIX offers this feature in its commercial edition. I wanted to keep using the open-source version directly, and the problem could be worked around for now, so I did not dig further.

Later we hit another problem: Admin API requests were not handled in time during business peaks. We use the Admin API to switch versions. During one peak period the APISIX load was high, which slowed the Admin API and caused occasional timeouts when switching versions.

The cause is clear, and the impact runs both ways. In the Prometheus case, an internal APISIX request slowed down normal business requests. Here it is the reverse: normal business requests slowed down internal APISIX requests. Isolating internal APISIX requests from normal business requests is therefore essential, so we spent some time implementing it.

These kinds of traffic map to separate listening ports in the generated nginx.conf. A sample configuration looks like this:

```nginx
# Port 9091 serves the Prometheus plug-in endpoint
server {
    listen 0.0.0.0:9091;
    access_log off;

    location / {
        content_by_lua_block {
            local prometheus = require("apisix.plugins.prometheus.exporter")
            prometheus.export_metrics()
        }
    }
}

# Port 9180 serves the Admin API
server {
    listen 0.0.0.0:9180;

    location /apisix/admin {
        content_by_lua_block {
            apisix.http_admin()
        }
    }
}

# Ports 80 and 443 handle normal business requests
server {
    listen 0.0.0.0:80;
    listen 0.0.0.0:443 ssl;
    server_name _;

    location / {
        proxy_pass $upstream_scheme://apisix_backend$upstream_uri;

        access_by_lua_block {
            apisix.http_access_phase()
        }
    }
}
```

Modifying the Nginx source code to achieve process isolation

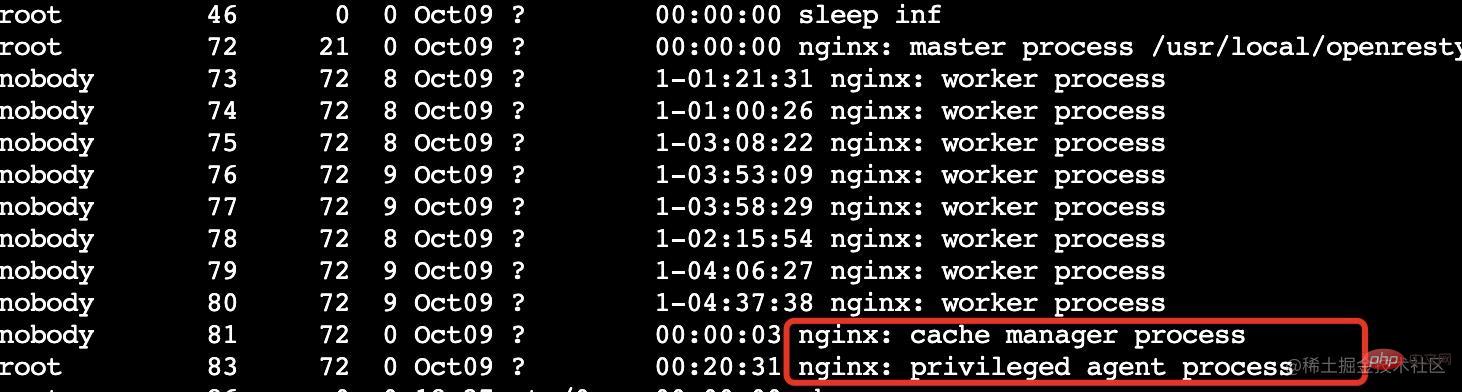

Readers familiar with OpenResty will know that OpenResty extends Nginx with a privileged agent process. The privileged agent does not listen on any port and serves no external traffic. It is mainly used for tasks such as scheduled jobs.

What we need instead is one or more additional worker processes dedicated to handling APISIX internal requests.

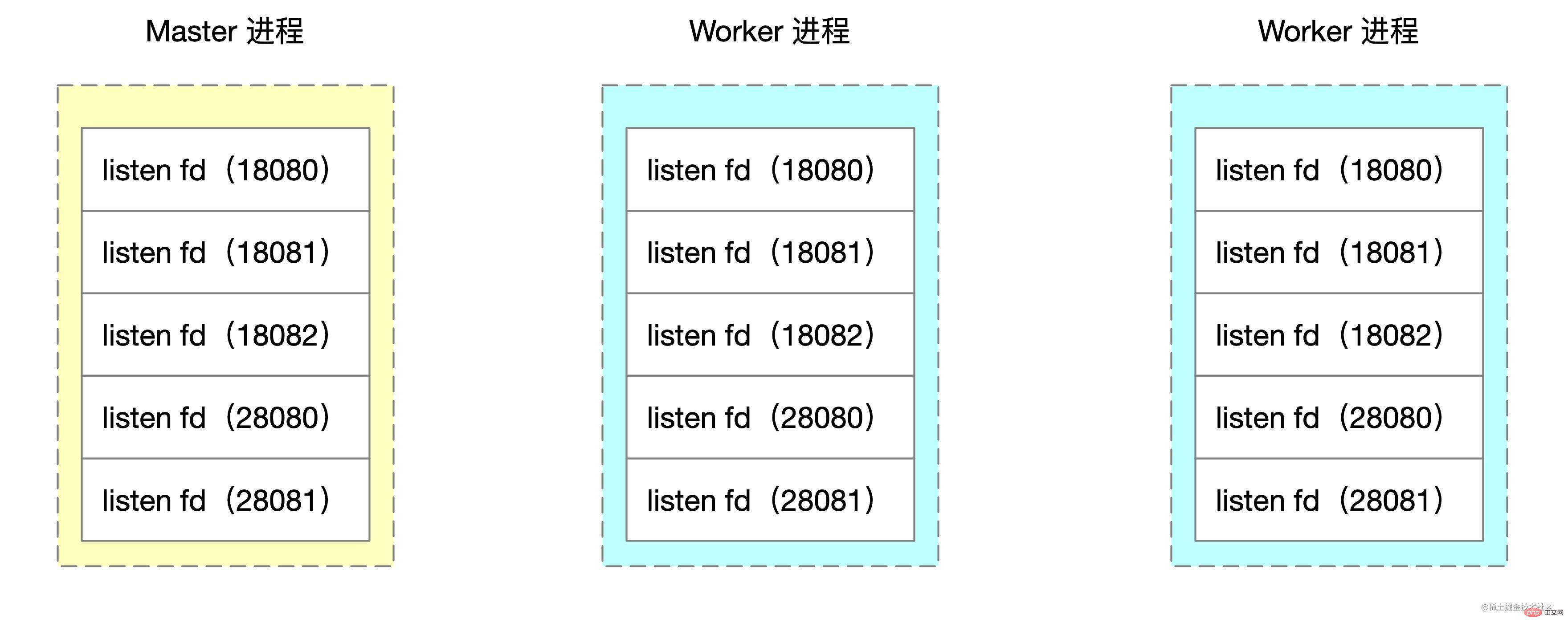

Nginx uses a multi-process model. The master process calls bind and listen to create the listening sockets. Each worker process created with fork inherits copies of these listening socket descriptors.
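The fork behavior described above can be demonstrated with plain POSIX calls. This is a minimal sketch, not Nginx code. A "master" opens a listening socket on 127.0.0.1. A forked child, which never calls bind or listen itself, then checks that the inherited descriptor is still a listening socket on the same port. The helper names are hypothetical.

```c
#define _POSIX_C_SOURCE 200809L
#include <arpa/inet.h>
#include <assert.h>
#include <netinet/in.h>
#include <string.h>
#include <sys/socket.h>
#include <sys/types.h>
#include <sys/wait.h>
#include <unistd.h>

/* "Master" side: socket + bind + listen on 127.0.0.1:port (0 lets the kernel pick a port). */
int open_listener(unsigned short port) {
    int fd = socket(AF_INET, SOCK_STREAM, 0);
    if (fd < 0) {
        return -1;
    }
    struct sockaddr_in addr;
    memset(&addr, 0, sizeof addr);
    addr.sin_family = AF_INET;
    addr.sin_addr.s_addr = htonl(INADDR_LOOPBACK);
    addr.sin_port = htons(port);
    if (bind(fd, (struct sockaddr *) &addr, sizeof addr) < 0 || listen(fd, 128) < 0) {
        close(fd);
        return -1;
    }
    return fd;
}

/* Port the socket is bound to, or -1 on error. */
int listener_port(int fd) {
    struct sockaddr_in addr;
    socklen_t len = sizeof addr;
    if (getsockname(fd, (struct sockaddr *) &addr, &len) < 0) {
        return -1;
    }
    return ntohs(addr.sin_port);
}

/* 1 if fd is a socket in the listening state (SO_ACCEPTCONN), else 0. */
int is_listening(int fd) {
    int val = 0;
    socklen_t len = sizeof val;
    return getsockopt(fd, SOL_SOCKET, SO_ACCEPTCONN, &val, &len) == 0 && val != 0;
}

/* Fork a "worker" that checks the descriptor it inherited is still a
   listening socket on the same port. Returns 1 if the child confirmed it. */
int child_inherits_listener(int fd) {
    int expected = listener_port(fd);
    assert(expected > 0);
    pid_t pid = fork();
    if (pid < 0) {
        return 0;
    }
    if (pid == 0) {
        /* Child: no bind/listen here, the fd was copied by fork(). */
        int ok = is_listening(fd) && listener_port(fd) == expected;
        _exit(ok ? 0 : 1);
    }
    int status = 0;
    if (waitpid(pid, &status, 0) < 0) {
        return 0;
    }
    return WIFEXITED(status) && WEXITSTATUS(status) == 0;
}
```

Every forked worker holds the same listening descriptors, so by default any worker can accept connections on any port. The approach in this article works by having each process close the inherited listeners it should not serve.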

The pseudocode for creating worker child processes in the Nginx source code is as follows:

```c
// Pseudocode: indentation shows the call chain, not C block scope.
void ngx_master_process_cycle(ngx_cycle_t *cycle) {
    ngx_setproctitle("master process");

    ngx_start_worker_processes()
        for (i = 0; i < n; i++) {      // create child processes according to the number of CPU cores
            ngx_spawn_process(i, "worker process")
                pid = fork();
                ngx_worker_process_cycle()
                    ngx_setproctitle("worker process");
                    for (;;) {         // infinite loop of the worker child process
                        // ...
                    }
        }

    for (;;) {                         // infinite loop of the master process
        // ...
    }
}
```

What we need to change is this for loop: start one or N extra worker processes dedicated to handling requests on specific ports.

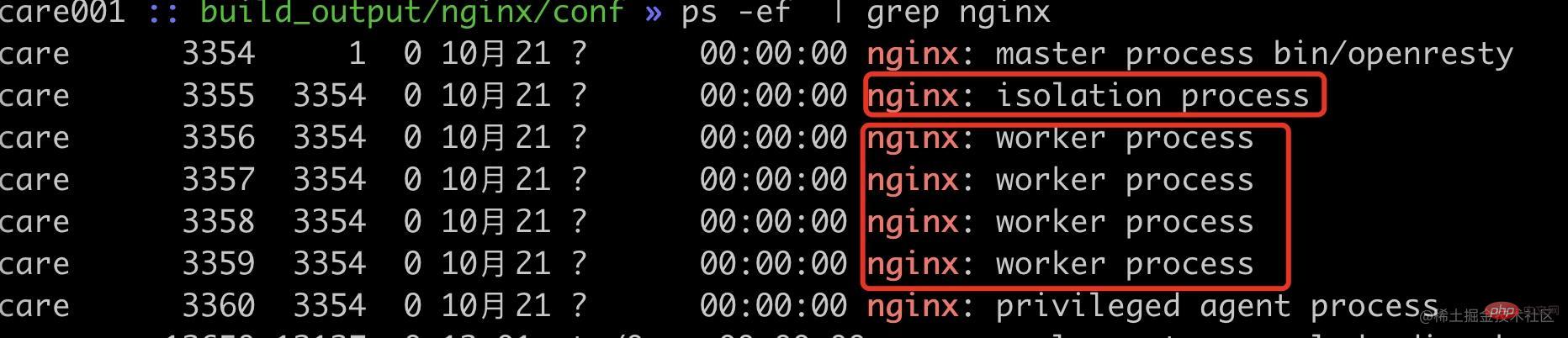
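The spawning and port-ownership rule of this design can be modeled in a few lines of C outside Nginx. This is an illustrative sketch. The names worker_role, role_of, title_of, plan_workers and keeps_listener are hypothetical. The rule assumed is the one used in the demo: child 0 keeps only isolation ports, and every other child keeps only business ports.

```c
#include <assert.h>

/* Role of each child spawned by the modified loop (hypothetical model). */
enum worker_role { ROLE_ISOLATION, ROLE_WORKER };

/* Child 0 is the extra isolation process; children 1..n are normal workers. */
enum worker_role role_of(int index) {
    return index == 0 ? ROLE_ISOLATION : ROLE_WORKER;
}

/* Process title each role sets via ngx_setproctitle in the demo. */
const char *title_of(enum worker_role role) {
    return role == ROLE_ISOLATION ? "isolation process" : "worker process";
}

/* Fill roles[] for n configured workers and return the number of children spawned (n + 1). */
int plan_workers(int n, enum worker_role roles[], int cap) {
    assert(n >= 0 && cap >= n + 1);
    for (int i = 0; i < n + 1; i++) {
        roles[i] = role_of(i);
    }
    return n + 1;
}

/* Whether a child with this role keeps an inherited listener open. */
int keeps_listener(enum worker_role role, int is_isolation_port) {
    return role == ROLE_ISOLATION ? is_isolation_port : !is_isolation_port;
}
```

With four configured workers, plan_workers returns 5: one isolation process and four normal workers, the same layout seen in the verification section. Each port is kept by exactly one role. No port is left unserved as long as at least one normal worker is configured (n >= 1).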

The demo starts a single extra worker process. The modified ngx_start_worker_processes spawns one more process than configured and gives it the title "isolation process" to mark it as the internal isolation process:

```c
static void
ngx_start_worker_processes(ngx_cycle_t *cycle, ngx_int_t n, ngx_int_t type)
{
    ngx_int_t  i;

    // ...

    for (i = 0; i < n + 1; i++) {   // n changed to n + 1 to start one extra process
        if (i == 0) {               // the first child in the group becomes the isolation process
            ngx_spawn_process(cycle, ngx_worker_process_cycle,
                              (void *) (intptr_t) i, "isolation process", type);
        } else {
            ngx_spawn_process(cycle, ngx_worker_process_cycle,
                              (void *) (intptr_t) i, "worker process", type);
        }
    }

    // ...
}
```

Next, ngx_worker_process_cycle gives worker 0 special treatment. The demo uses ports 18080, 18081 and 18082 as isolation ports.

```c
static void
ngx_worker_process_cycle(ngx_cycle_t *cycle, void *data)
{
    ngx_int_t  worker = (intptr_t) data;
    int        ports[3] = {18080, 18081, 18082};   // hard-coded isolation ports for the demo

    ngx_worker_process_init(cycle, worker);

    if (worker == 0) {   // worker 0: the isolation process
        ngx_setproctitle("isolation process");
        ngx_close_not_isolation_listening_sockets(cycle, ports, 3);

    } else {             // all other workers: normal business traffic
        ngx_setproctitle("worker process");
        ngx_close_isolation_listening_sockets(cycle, ports, 3);
    }

    // ... the usual worker event loop (for (;;) { ... }) follows
}
```

This relies on two new functions.

ngx_close_not_isolation_listening_sockets keeps only the isolation ports listening and stops listening on every other port. The isolation process uses it. ngx_close_isolation_listening_sockets closes the isolation ports and keeps only the normal business ports, so the remaining workers handle only normal business traffic.

The simplified code of ngx_close_not_isolation_listening_sockets is as follows:

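```c
// Used in the isolation process: keep only the isolation ports listening.
void
ngx_close_not_isolation_listening_sockets(ngx_cycle_t *cycle, int isolation_ports[], int port_num)
{
    ngx_connection_t  *c;
    int                port_match = 0;
    ngx_listening_t   *ls = cycle->listening.elts;

    for (ngx_uint_t i = 0; i < cycle->listening.nelts; i++) {
        c = ls[i].connection;

        // get the port number from the sockaddr structure
        in_port_t port = ngx_inet_get_port(ls[i].sockaddr);

        // check whether this port is one of the isolation ports
        int is_isolation_port = check_isolation_port(port, isolation_ports, port_num);

        // not an isolation port: stop handling its listen events
        if (c && !is_isolation_port) {
            ngx_del_event(c->read, NGX_READ_EVENT, 0);   // with epoll, calls epoll_ctl to remove the event
            ngx_free_connection(c);
            c->fd = (ngx_socket_t) -1;
            ls[i].connection = NULL;   // do not keep a pointer to the freed connection
        }

        if (!is_isolation_port) {
            port_match++;
            ngx_close_socket(ls[i].fd);   // close the current fd
            ls[i].fd = (ngx_socket_t) -1;
        }
    }

    // Caveat: shrinking nelts does not compact the array, so this is only correct
    // if the closed entries are the last ones; otherwise later walks over
    // cycle->listening may visit closed entries and skip open ones.
    cycle->listening.nelts -= port_match;
}
```

ngx_close_isolation_listening_sockets does the opposite. It closes all isolation ports and keeps only the normal business ports listening. The simplified code is as follows: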

```c
// Used in normal workers: close the isolation ports, keep the business ports.
void
ngx_close_isolation_listening_sockets(ngx_cycle_t *cycle, int isolation_ports[], int port_num)
{
    ngx_connection_t  *c;
    int                port_match = 0;
    ngx_listening_t   *ls = cycle->listening.elts;

    for (ngx_uint_t i = 0; i < cycle->listening.nelts; i++) {
        c = ls[i].connection;
        in_port_t port = ngx_inet_get_port(ls[i].sockaddr);
        int is_isolation_port = check_isolation_port(port, isolation_ports, port_num);

        // isolation port: stop listening on it in this process
        if (c && is_isolation_port) {
            ngx_del_event(c->read, NGX_READ_EVENT, 0);
            ngx_free_connection(c);
            c->fd = (ngx_socket_t) -1;
            ls[i].connection = NULL;   // do not keep a pointer to the freed connection
        }

        if (is_isolation_port) {
            port_match++;
            ngx_close_socket(ls[i].fd);   // close the fd
            ls[i].fd = (ngx_socket_t) -1;
        }
    }

    // NOTE: shrinking nelts without compacting assumes the closed entries are last
    cycle->listening.nelts -= port_match;
}
```

Effect Verification

Here we use ports 18080~18082 as isolation ports and other ports as normal business ports. To simulate requests that use a lot of CPU, each request runs a Lua loop that computes sqrt many times. This makes it easier to see how Nginx spreads the load across workers.

```nginx
server {
    listen 18080;   # 18081 and 18082 are configured the same way
    server_name localhost;

    location / {
        content_by_lua_block {
            local sum = 0
            for i = 1, 10000000, 1 do
                sum = sum + math.sqrt(i)
            end
            ngx.say(sum)
        }
    }
}

server {
    listen 28080;
    server_name localhost;

    location / {
        content_by_lua_block {
            local sum = 0
            for i = 1, 10000000, 1 do
                sum = sum + math.sqrt(i)
            end
            ngx.say(sum)
        }
    }
}
```

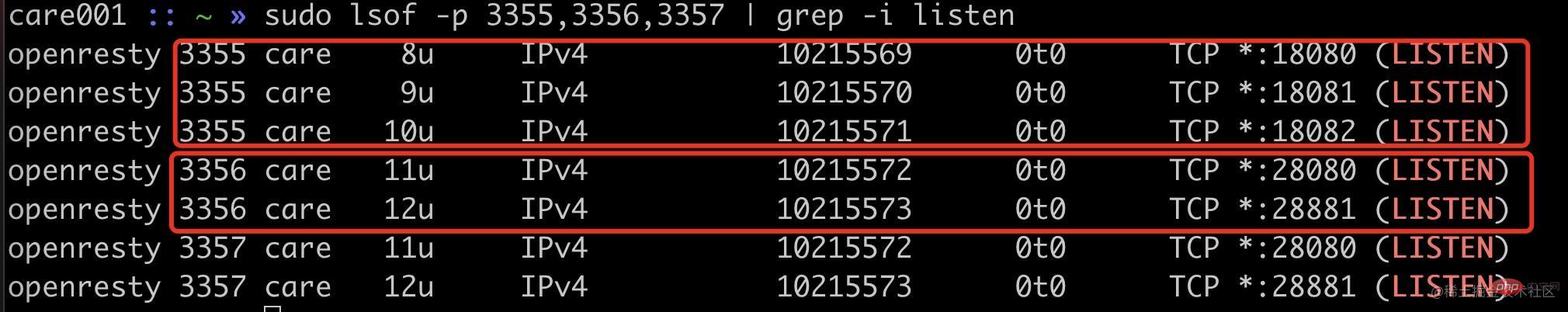

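One internal isolation worker process (pid=3355) and four normal worker processes (pid=3356~3359) are now running.

First, we can check the listening ports to confirm that the change took effect.

The isolation process 3355 listens on 18080, 18081 and 18082. The normal processes, such as 3356, listen on ports 20880 and 20881.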

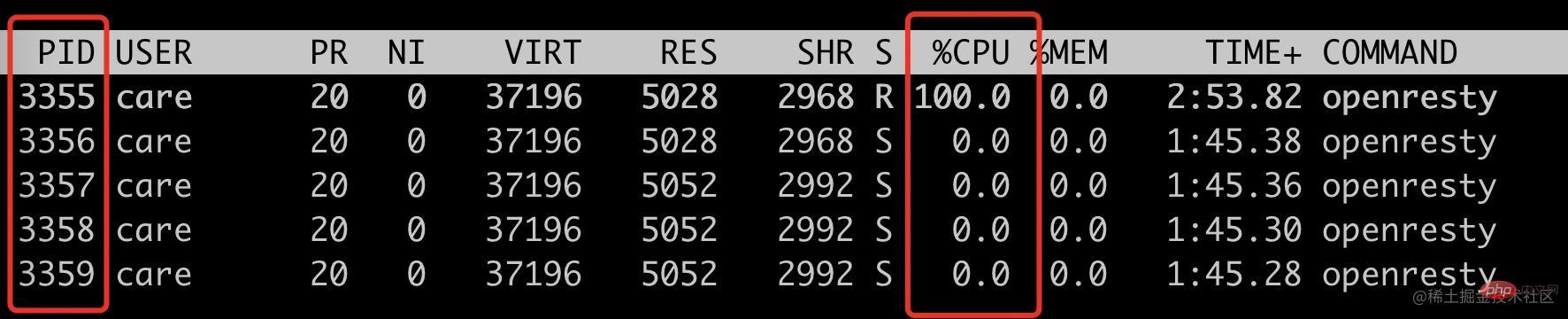

Use ab to send requests to port 18080 and check whether only process 3355 has its CPU saturated.

```shell
ab -n 10000 -c 10 http://localhost:18080/
top -p 3355,3356,3357,3358,3359
```

Only the isolation process 3355 is saturated.

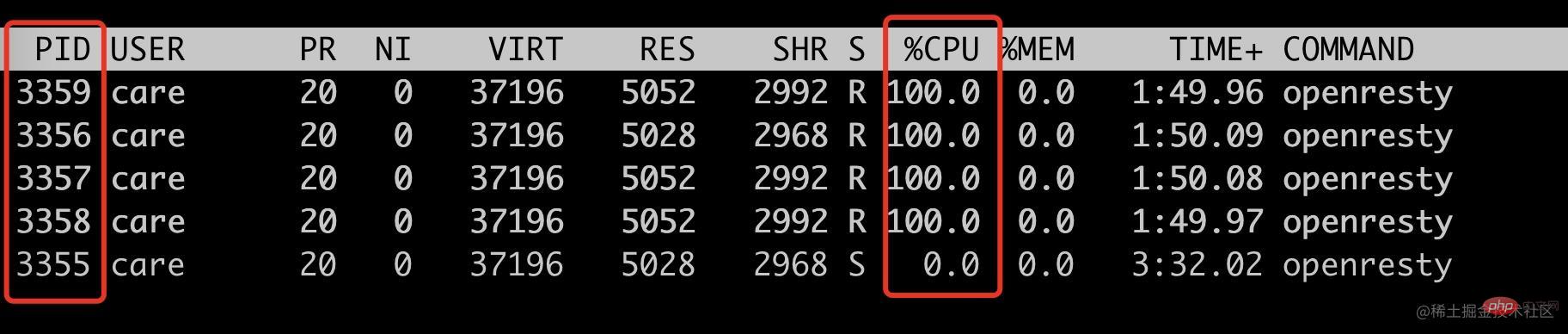

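Next, check whether requests to a non-isolation port saturate only the other four worker processes.

```shell
ab -n 10000 -c 10 http://localhost:28080/
top -p 3355,3356,3357,3358,3359
```

As expected, only the four normal worker processes (pid=3356~3359) are saturated, and the CPU usage of 3355 stays at 0.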

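With that, we have implemented port-based process isolation by modifying the Nginx source code. The port numbers are hard-coded in this demo. In actual use, we pass them in from Lua: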

```nginx
init_by_lua_block {
    -- enable_isolation_process comes from our patched OpenResty, not from stock ngx.process
    local process = require "ngx.process"

    local ports = {18080, 18081, 18083}
    local ok, err = process.enable_isolation_process(ports)
    if not ok then
        ngx.log(ngx.ERR, "enable_isolation_process failed: ", err)
        return
    end

    ngx.log(ngx.NOTICE, "enable_isolation_process succeeded")
}
```

The ports have to be passed from Lua into OpenResty via FFI. That is not the focus of this article, so I won't go into it here.
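The article does not show the C side of this FFI call. It also omits the check_isolation_port helper that both close functions call. The sketch below shows what they could look like. It assumes a hypothetical exported function isolation_set_ports that the patched ngx.process module would reach through FFI, plus a static port table that replaces the hard-coded ports[3] array. MAX_ISOLATION_PORTS and the accessor names are also assumptions.

```c
#include <assert.h>
#include <netinet/in.h>
#include <stddef.h>

#define MAX_ISOLATION_PORTS 16   /* hypothetical limit */

/* Hypothetical process-wide table filled once from Lua. */
static int isolation_ports[MAX_ISOLATION_PORTS];
static int isolation_port_num = 0;

/* Same shape as the helper used by the ngx_close_*_listening_sockets
   functions: returns 1 if port is in ports[0..port_num), else 0. */
int check_isolation_port(in_port_t port, int ports[], int port_num) {
    for (int i = 0; i < port_num; i++) {
        if (ports[i] == (int) port) {
            return 1;
        }
    }
    return 0;
}

/* Hypothetical FFI entry point: validates and de-duplicates the list, then
   replaces the table. Returns 0 on success, -1 on bad input (table unchanged). */
int isolation_set_ports(const int *ports, int n) {
    if (ports == NULL || n <= 0 || n > MAX_ISOLATION_PORTS) {
        return -1;
    }
    int tmp[MAX_ISOLATION_PORTS];
    int count = 0;
    for (int i = 0; i < n; i++) {
        if (ports[i] < 1 || ports[i] > 65535) {
            return -1;
        }
        if (!check_isolation_port((in_port_t) ports[i], tmp, count)) {
            tmp[count++] = ports[i];
        }
    }
    assert(count <= MAX_ISOLATION_PORTS);
    for (int i = 0; i < count; i++) {
        isolation_ports[i] = tmp[i];
    }
    isolation_port_num = count;
    return 0;
}

/* Hypothetical accessors for ngx_worker_process_cycle. */
int *isolation_port_list(void) { return isolation_ports; }
int isolation_port_count(void) { return isolation_port_num; }
```

init_by_lua runs in the master process before the workers are forked. A table filled there is therefore copied into every worker along with the listening sockets. ngx_worker_process_cycle could then pass isolation_port_list() and isolation_port_count() to the two close functions instead of the demo's hard-coded array.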

Afterword

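This approach is a bit of a hack. It solves the problems we currently face reasonably well, but it has a cost: we have to maintain our own OpenResty branch. If you enjoy tinkering, or really need this feature, give it a try.

These changes reflect my fairly shallow understanding of the Nginx source code, so feedback on anything I have got wrong is welcome.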

The above is the detailed content of "An in-depth analysis of how to implement worker process isolation through Nginx source code" from the PHP Chinese website.
