Real 3D creation is here, you must gesture with your hands! AI can't take my job this time, right?


On the road to AR's advancement, new industry roles and technical forces will keep joining in, together pushing AR toward our ultimate vision: augmented reality for the masses in the true sense.

This article is reprinted with the authorization of AI New Media Qubit (public account ID: QbitAI). Please contact the source for reprinting.

AR has long been starved of content.

Recently, AR manufacturers have been rushing to launch consumer-grade AR glasses, with new tricks in weight, battery life, and features, but they cannot escape criticism:

What else can you do besides watching movies?

Is the current consumer-grade AR really AR?

For users, if the role of AR glasses is just to shrink a projector to the size of a lens and put it in front of their eyes, it will be difficult to meet the emerging new needs of consumers in the long term.

Should AR keep borrowing its content ecosystem from mobile phones?

That does not seem desirable. After all, AR's greatest promise lies in becoming a new, independent terminal. What's more, mobile phone content is limited to 2D, which is fundamentally different from the 3D that the basic definition of augmented reality repeatedly calls for.

So what should we do?

A leading industry player that has sold out its own inventory of 60,000 AR glasses recently delivered its answer, against the ebbing tide of the Metaverse:

Through a single AR application, it lets ordinary people build their own AR digital space in 10 minutes. And the space still has to be created by hand, inside a physical space. Does AI have nothing to say this time?

Of course, that is a joke. The real purpose behind it is to enable more developers and creators to use their imagination in the AR space, expand the AR content ecosystem, and further promote the spread of AR to the general public.

So what exactly is this app? Can it achieve such an ambitious goal on its own? What undercurrents in the AR industry does it reveal?

What is Lingjing?

Rokid founder and CEO Zhu Mingming said that Lingjing is an epoch-making product in the AR industry that allows everyone to participate in the creation of AR digital content.

By official definition, Lingjing is an AR space creation tool. It creates AR content in 3D space, with a threshold low enough for anyone to use. The workflow is divided into 5 steps:

- Scan the space with a mobile phone

- Cloud space reconstruction

- Online scene layout

- Multi-terminal one-click release

- AR terminal experience

In other words, using an ordinary mobile phone camera, Lingjing captures spatial information and reconstructs the space in the cloud. You can then complete the creation according to your own ideas.

The created AR content can be published directly on the platform, and multiple people can publish their creations in the same space. Finally, you only need to put on AR glasses to experience it.


From start to finish, a space of about 10 square meters can be completed in just 10 minutes.

According to reports, Lingjing’s content rendering is also based on Rokid’s own 3D rendering engine, which can greatly reduce the time and labor costs of the entire content creation and implementation.


Bear in mind that AR content creation today is still basically a long-chain process.

It requires product resources, development resources, and content creation resources; a professional team then packages those resources for on-site testing, and acceptance comes last. Commonly used creative tools such as Unity and Unreal Engine also have barriers to use, requiring users to have some basic development skills.

Moreover, Lingjing emphasizes another major feature: collaboration. It not only achieves multi-terminal consistency, meaning the created space can be viewed on mobile phones, PCs, and AR glasses; it also lets multiple people take part in AR creation in the same space.

This positioning is clearly more mass-market: both industry users and ordinary consumers can get started and try it. At the same time, it raises new requirements, such as high concurrency, terminal computing power, and the quality of the created content.

Then the question is:

How is Lingjing achieved?

Behind this is a comprehensive upgrade of a complete technology stack, including hardware, systems, cloud services, and algorithms.

Let's first look at the hardware. This time, in conjunction with the release of Lingjing, Rokid has upgraded its AR glasses from the traditional "dual-fisheye plus RGB three-camera solution" to a "single-camera RGB lightweight SLAM" solution.

The benefits of the monocular solution are very direct: a simpler structure, lower hardware design complexity, a smaller motherboard area, and greatly reduced cost and power consumption. Users can directly feel the improvement in wearing comfort and battery life.

In terms of real-world deployment, the hardware that ships with Lingjing reportedly also has only one camera.

With the camera count cut down to just one, the monocular solution brings lower power consumption, a simpler structure, and lower cost. Comfortable wear and a more affordable price are in line with Lingjing's goal of "making AR more inclusive".

But the monocular solution does have shortcomings. For example, it cannot obtain absolute scale information, so it needs to restore the scale information through initialization, and the quality of initialization directly affects the final accuracy of the algorithm. The absolute scale accuracy of monocular calculation is not high enough, and there is also uncertainty in scale convergence. These bring great challenges to the development of monocular SLAM algorithms and will directly affect the AR experience.
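The scale problem described above can be illustrated with a small sketch. It assumes an idealized pinhole camera with no rotation and no lens distortion; the function names and the sample numbers are hypothetical and are not taken from Rokid's implementation. The point is only that enlarging the scene and the camera motion by the same factor leaves every image unchanged, which is why a single camera cannot tell the absolute scale on its own.

```python
def project(point, cam_pos, focal=500.0):
    """Pinhole projection of a 3D point for a camera at cam_pos looking along +Z."""
    x, y, z = (p - c for p, c in zip(point, cam_pos))
    return (focal * x / z, focal * y / z)


def scale_scene(points, cam_positions, s):
    """Multiply every 3D point and every camera position by the same factor s."""
    scaled_pts = [tuple(s * v for v in p) for p in points]
    scaled_cams = [tuple(s * v for v in c) for c in cam_positions]
    return scaled_pts, scaled_cams


def all_projections(points, cam_positions):
    """Project every point into every camera view."""
    return [project(p, c) for c in cam_positions for p in points]


# Hypothetical scene: three points observed from two camera positions.
points = [(0.5, 0.2, 4.0), (-1.0, 0.3, 6.0), (0.0, -0.5, 5.0)]
cams = [(0.0, 0.0, 0.0), (0.2, 0.0, 0.0)]

original = all_projections(points, cams)
pts3, cams3 = scale_scene(points, cams, 3.0)
tripled = all_projections(pts3, cams3)

# Largest pixel difference between the original and the 3x-scaled world.
max_diff = max(abs(a - b) for o, t in zip(original, tripled) for a, b in zip(o, t))
print("max pixel difference:", max_diff)
```

Because the two worlds produce the same pixels, the scale has to come from somewhere else, such as an initialization step or an additional sensor.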

However, Rokid said that corresponding solutions have already appeared in the industry.

On the algorithm side, with the assistance of an IMU, there are many solutions for monocular static and dynamic initialization. Once initialization is complete, a reasonably accurate absolute scale prior is available. Combined with, for example, filtering-based algorithms, the various SLAM parameters can then be continuously optimized during later use to further improve positioning accuracy. There is also a good deal of technical exploration of SLAM algorithms based on deep learning.
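As a rough illustration of the idea in the paragraph above, the sketch below estimates a scale factor by least squares from paired displacements and then refines it with a scalar Kalman-style update. This is a generic textbook approach, not Rokid's algorithm: the function names are hypothetical, and the metric displacements are assumed to be already available from IMU integration (a real system must also handle gravity, sensor bias, and noise).

```python
def estimate_scale(visual_steps, metric_steps):
    """Least-squares scale s minimizing sum |s * v - m|^2 over paired displacements.

    visual_steps: up-to-scale displacements from monocular SLAM.
    metric_steps: displacements in meters, assumed to come from IMU integration.
    """
    num = sum(sum(a * b for a, b in zip(v, m))
              for v, m in zip(visual_steps, metric_steps))
    den = sum(sum(a * a for a in v) for v in visual_steps)
    if den == 0.0:
        raise ValueError("visual displacements are all zero; scale is unobservable")
    return num / den


def refine_scale(scale, variance, measurement, meas_variance):
    """One scalar Kalman-filter update of the scale estimate."""
    gain = variance / (variance + meas_variance)
    new_scale = scale + gain * (measurement - scale)
    new_variance = (1.0 - gain) * variance
    return new_scale, new_variance


# Hypothetical data: the true scale is 2.5 meters per SLAM unit.
visual = [(1.0, 0.0, 0.0), (0.0, 2.0, 0.0)]
metric = [(2.5, 0.0, 0.0), (0.0, 5.0, 0.0)]

scale0 = estimate_scale(visual, metric)
scale1, var1 = refine_scale(scale0, 0.04, measurement=2.7, meas_variance=0.04)
print(scale0, scale1, var1)
```

The initialization gives the prior, and each later measurement pulls the estimate toward the data while shrinking its variance, which mirrors the "initialize, then keep optimizing" flow the article describes.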

From this, we can also feel that Rokid’s philosophy is: AR products are not just stacked sensors, but software, hardware, algorithms and scenarios as a whole to provide users with a more comfortable comprehensive AR experience.

Software improvements are mainly reflected in 3D point clouds and SLAM, with device-cloud collaboration used to balance the requirements of computing power, power consumption, and high concurrency.

Watson, department manager of Rokid's Application Platform Center, explained:

Visual positioning technology based on 3D point clouds and device-side SLAM (simultaneous localization and mapping) can be compared to the high-precision road maps and vehicle spatial perception used in autonomous driving.

In AR scenarios, some developers use point clouds for global rough positioning and SLAM for local precise positioning, and the two are loosely coupled together.

This time, Rokid has turned that loose coupling into a tight one with deeper integration: only positioning and tracking run on the device, while spatial mapping runs in the cloud.

This device-cloud collaborative computing method can improve positioning accuracy in some scenarios, such as scenes with weak textures or changing environments, and can also reduce the computing load on the mobile device. In principle, it mainly exploits the strengths of RTC (real-time communication): resilience to weak networks, low-latency transmission, and video image compression.
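One way to picture the division of labor between cloud and device is as a composition of poses: the cloud occasionally tells the device where its local SLAM frame sits in the global map, and the device keeps tracking locally in between. The sketch below is a simplified 2D illustration under that assumption. The `DeviceTracker` class and its methods are hypothetical and do not correspond to any Rokid or YodaOS-Master API; a real system would use 6-DoF poses.

```python
import math


def compose(a, b):
    """Compose 2D poses (x, y, theta): express pose b, given in the frame of a, in a's parent frame."""
    ax, ay, at = a
    bx, by, bt = b
    return (ax + math.cos(at) * bx - math.sin(at) * by,
            ay + math.sin(at) * bx + math.cos(at) * by,
            at + bt)


def invert(p):
    """Inverse of a 2D pose (x, y, theta)."""
    x, y, t = p
    c, s = math.cos(t), math.sin(t)
    return (-(c * x + s * y), -(-s * x + c * y), -t)


class DeviceTracker:
    """Hypothetical device-side tracker that anchors local SLAM poses to a cloud map."""

    def __init__(self):
        # Transform from the local SLAM frame to the global map frame.
        # Identity until the first cloud fix arrives.
        self.world_from_local = (0.0, 0.0, 0.0)

    def on_cloud_fix(self, world_pose_at_fix, local_pose_at_fix):
        # world_from_local * local_pose = world_pose
        # => world_from_local = world_pose * inverse(local_pose)
        self.world_from_local = compose(world_pose_at_fix, invert(local_pose_at_fix))

    def world_pose(self, local_pose):
        """Global pose for the latest locally tracked pose."""
        return compose(self.world_from_local, local_pose)


tracker = DeviceTracker()
# The cloud localizes the device at (10, 5) facing +Y while local SLAM reports (1, 0).
tracker.on_cloud_fix((10.0, 5.0, math.pi / 2), (1.0, 0.0, 0.0))
# The device then moves one more unit forward in its local frame.
later = tracker.world_pose((2.0, 0.0, 0.0))
print(later)
```

In this arrangement the expensive map lookup happens rarely and remotely, while the per-frame work on the device is a cheap pose composition.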

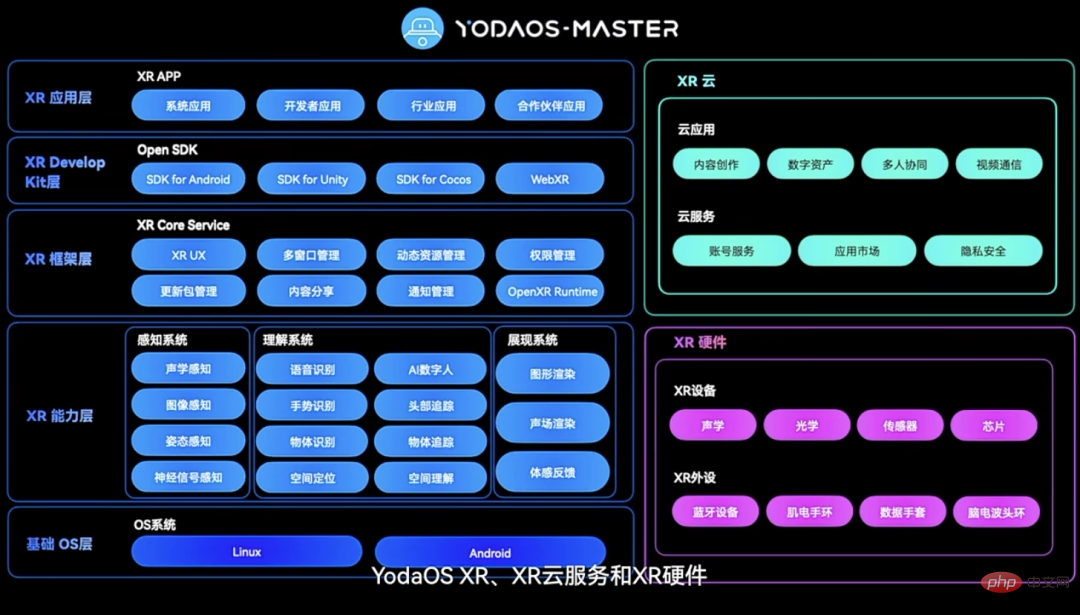

The implementation of tight coupling relies on Rokid’s newly upgraded underlying operating system YodaOS-Master.

Specific changes are reflected in the three levels of XR system, XR cloud service and XR hardware.

After the system is fully upgraded, Rokid can build a more complete closed-loop OS ecosystem to better connect developers and consumers.

After digging into the technology stack of Lingjing, it is not difficult to find that Rokid is well prepared for this release.

The core purpose is very clear: to lower the threshold of AR content creation and grow the ranks of AR content creators.

You only need a mobile phone to try it, and the time is shortened to 10 minutes. These are conditions almost anyone can meet, and they give people a chance to feel what so-called "real AR" is like. It is easy to see how this could attract a wave of curious people.

This also raises a question: why is Lingjing being officially launched at this moment? And why focus on lowering the threshold?

The answer still depends on the dimensions of market demand, AR industry development, and experience verification of industry players.

Why build Lingjing?

The most direct influencing factor comes from market demand.

Chen Xi, vice president of Rokid and head of the Digital Culture Division, said that demand for AR content is currently very prominent in the museum and cultural tourism fields. She cited a striking figure to illustrate:

The number of people who experienced Rokid AR in cultural museum scenes during the Spring Festival this year reached 40,000.

Across the country, venues such as Guangdong Provincial Museum, Xi'an Museum, Shaanxi Natural History Museum, Suzhou Museum, and Liangzhu Ancient City Heritage Park have successively launched AR guides.

It is easy to understand why AR has entered the cultural and museum field so quickly. It can greatly enrich the content in a 3D space and present cultural knowledge to visitors in a more intuitive, rich, and diverse way.

The number of registered museums nationwide exceeds 6,000. As more and more museums put forward demands, the long-chain content production model of the past struggles to respond quickly. What's more, delivering each project from its own custom plan is costly in both manpower and materials.

Therefore, there is an urgent need in the market for an efficient and easy-to-use productivity tool that can accelerate the creation of AR content and reduce costs.

The situation in industry is similar.

Jiang Tao, Rokid vice president and head of the Product Technology Center, said the industrial case is somewhat like the museum scene: on the front line of industrial production, people also want to display as much information as possible in 3D space.

That way, front-line personnel no longer need to walk over to instruments to view data and verify conditions while they work. Instead, they can see the required information directly at the work site.

In Jiang Tao's words, from the perspective of the broad concept of the industrial metaverse, completing digital transformation amounts to completing the infrastructure of the industrial metaverse. Only when the integrated and analyzed data is fed back to the site is the whole closed loop truly complete.

Since industrial scenarios naturally put forward higher requirements for cost reduction and efficiency improvement, the demand and market for AR in industrial scenarios may be greater.

Many factories have already set up real AR production lines.

In addition, in the education field, the use of AR to assist teaching is gradually becoming popular.

For example, AR-based safety education is no longer just instruction through text and video. Children can learn how to escape in simulated fire scenes, so the lessons stick better and the educational effect is naturally better.

In this scenario, school teachers often need to continuously update AR content based on course requirements. A low-threshold content production tool that can be used on mobile phones does meet real needs.

The above is the direct demand brought by the market.

A deeper factor is what AR's current stage of evolution demands.

The lack of a native AR content ecosystem has long drawn complaints, and it has become a major reason why many people are unwilling to try AR devices.

Borrow the ecosystem from mobile phones? Content richness does increase in the short term, but what gets displayed is mostly limited to 2D, and real AR content never appears. Will that lead people to think AR glasses are destined to end up as mobile phone accessories?

Thus, almost everyone in the AR industry realizes that enriching the content ecosystem is the top priority for current development. For example, when Apple first explored AR, it led with the ARKit development platform, carrying the App Store approach over into the AR field.

After all, only when the content is rich will users be attracted and AR will have a chance to move forward. So the current question becomes how to enrich AR native content.

The efficiency of AR manufacturers alone is obviously not high enough, and the AR interaction scenarios explored over the past few years are still very limited.

Calling more developers and creators to join is gradually becoming a consensus in the industry, and the barriers to use and costs must be lowered.

It is therefore not hard to understand why Rokid, as a veteran player in the industry, chose to launch an AR space engine like Lingjing.

But why now?

Rokid’s answer is: its own experience accumulation in the cultural and museum field has verified the market’s real demand for AR.

Chen Xi recalled scenes that are still vivid in her mind from Rokid's first cooperation with Liangzhu Ancient City Heritage Park.

She remembered that when Rokid proposed to the park that AR could be used to restore cultural relics, the park immediately expressed interest. The entire project took about 3 months from initial proposal to implementation, and finally met the public during the National Day holiday in 2020.

In the beginning, Rokid provided 200 pairs of AR glasses for rent each day. All of them were rented out in about an hour. Many of the people curious about AR were the elderly and children, which was beyond Rokid's expectations.

Deploying in real scenarios yields very valuable development experience from the front line. For example, the team assumed that people would visit in the order of the tour, but in reality they do not, so Rokid must ensure that visiting out of order does not affect the display of the AR effects. The technical team also made special optimizations for the museum's poor indoor lighting conditions. In the end, these optimizations and iterations were reflected in the design of Lingjing.

Chen Xi also remarked that, undeniably, not everyone is willing to spend thousands of yuan on a dedicated device to experience AR, but if it costs only 50 yuan to rent one, many people are willing to try.

Then in the future, when there are enough application scenarios, the value will slowly be reflected. At that time, it may be the time when AR truly moves towards the consumer side.

From this, it is not difficult to understand why Lingjing is being launched at this moment.

Because it can use a small lever to leverage a huge group of developers and creators, mobilizing hundreds or thousands of people to jointly explore a wide range of AR content and application scenarios.

This will help AR reach the masses more quickly and grow into a truly next-generation mobile computing terminal device.

What will Lingjing bring?

As an AR native content productivity tool, the emergence of Lingjing sends a signal to both inside and outside the industry:

It’s time to develop the AR content ecosystem.

And this no longer means borrowing an ecosystem from mobile phones or other terminals, but starting from AR itself to show "what real AR is".

On the one hand, consider the smartphone, today's absolutely dominant mobile computing terminal: it attracted a large number of users quickly precisely after popular content applications emerged.

On the other hand, there is a fundamental difference between AR native content and terminal content such as mobile phones. It needs to be 3D.

If we continue to borrow the ecosystem from mobile phones, the public's understanding of AR may stop at a large virtual screen for the phone. This will limit the imagination of the entire technology and industry, and will also hold back the independent development of AR.

Thus, developing AR native content is what must be done now.

Restricted by computing power, hardware, and other conditions, AR content cannot yet meet everyone's ultimate expectations. But in its early days, didn't Windows also have only simple applications like Minesweeper and Spider Solitaire?

We need to give technology enough patience and wait for changes to happen.

What's more, the idea of integrating devices and clouds does provide a new solution to the current computing power problem.

Seen from outside the AR industry, the emergence of Lingjing also provides a new opportunity for developers and creators. After all, the rise of each generation of mobile computing terminals is accompanied by the emergence of a large number of excellent content applications and software.

In short, on AR's way forward, new industry roles and technical forces will keep joining in, together pushing AR toward our ultimate vision: augmented reality for the masses in the true sense.

What do you think?

The above is the detailed content of Real 3D creation is here, you must gesture with your hands! AI can't take my job this time, right?. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1378

1378

52

52

Why is Gaussian Splatting so popular in autonomous driving that NeRF is starting to be abandoned?

Jan 17, 2024 pm 02:57 PM

Why is Gaussian Splatting so popular in autonomous driving that NeRF is starting to be abandoned?

Jan 17, 2024 pm 02:57 PM

Written above & the author’s personal understanding Three-dimensional Gaussiansplatting (3DGS) is a transformative technology that has emerged in the fields of explicit radiation fields and computer graphics in recent years. This innovative method is characterized by the use of millions of 3D Gaussians, which is very different from the neural radiation field (NeRF) method, which mainly uses an implicit coordinate-based model to map spatial coordinates to pixel values. With its explicit scene representation and differentiable rendering algorithms, 3DGS not only guarantees real-time rendering capabilities, but also introduces an unprecedented level of control and scene editing. This positions 3DGS as a potential game-changer for next-generation 3D reconstruction and representation. To this end, we provide a systematic overview of the latest developments and concerns in the field of 3DGS for the first time.

Learn about 3D Fluent emojis in Microsoft Teams

Apr 24, 2023 pm 10:28 PM

Learn about 3D Fluent emojis in Microsoft Teams

Apr 24, 2023 pm 10:28 PM

You must remember, especially if you are a Teams user, that Microsoft added a new batch of 3DFluent emojis to its work-focused video conferencing app. After Microsoft announced 3D emojis for Teams and Windows last year, the process has actually seen more than 1,800 existing emojis updated for the platform. This big idea and the launch of the 3DFluent emoji update for Teams was first promoted via an official blog post. Latest Teams update brings FluentEmojis to the app Microsoft says the updated 1,800 emojis will be available to us every day

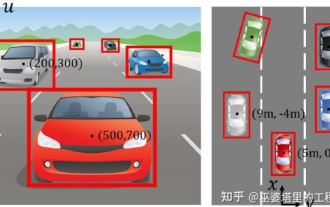

Choose camera or lidar? A recent review on achieving robust 3D object detection

Jan 26, 2024 am 11:18 AM

Choose camera or lidar? A recent review on achieving robust 3D object detection

Jan 26, 2024 am 11:18 AM

0.Written in front&& Personal understanding that autonomous driving systems rely on advanced perception, decision-making and control technologies, by using various sensors (such as cameras, lidar, radar, etc.) to perceive the surrounding environment, and using algorithms and models for real-time analysis and decision-making. This enables vehicles to recognize road signs, detect and track other vehicles, predict pedestrian behavior, etc., thereby safely operating and adapting to complex traffic environments. This technology is currently attracting widespread attention and is considered an important development area in the future of transportation. one. But what makes autonomous driving difficult is figuring out how to make the car understand what's going on around it. This requires that the three-dimensional object detection algorithm in the autonomous driving system can accurately perceive and describe objects in the surrounding environment, including their locations,

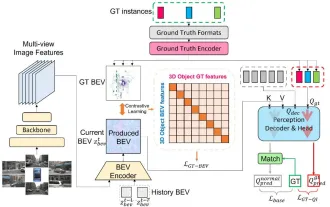

CLIP-BEVFormer: Explicitly supervise the BEVFormer structure to improve long-tail detection performance

Mar 26, 2024 pm 12:41 PM

CLIP-BEVFormer: Explicitly supervise the BEVFormer structure to improve long-tail detection performance

Mar 26, 2024 pm 12:41 PM

Written above & the author’s personal understanding: At present, in the entire autonomous driving system, the perception module plays a vital role. The autonomous vehicle driving on the road can only obtain accurate perception results through the perception module. The downstream regulation and control module in the autonomous driving system makes timely and correct judgments and behavioral decisions. Currently, cars with autonomous driving functions are usually equipped with a variety of data information sensors including surround-view camera sensors, lidar sensors, and millimeter-wave radar sensors to collect information in different modalities to achieve accurate perception tasks. The BEV perception algorithm based on pure vision is favored by the industry because of its low hardware cost and easy deployment, and its output results can be easily applied to various downstream tasks.

Paint 3D in Windows 11: Download, Installation, and Usage Guide

Apr 26, 2023 am 11:28 AM

Paint 3D in Windows 11: Download, Installation, and Usage Guide

Apr 26, 2023 am 11:28 AM

When the gossip started spreading that the new Windows 11 was in development, every Microsoft user was curious about how the new operating system would look like and what it would bring. After speculation, Windows 11 is here. The operating system comes with new design and functional changes. In addition to some additions, it comes with feature deprecations and removals. One of the features that doesn't exist in Windows 11 is Paint3D. While it still offers classic Paint, which is good for drawers, doodlers, and doodlers, it abandons Paint3D, which offers extra features ideal for 3D creators. If you are looking for some extra features, we recommend Autodesk Maya as the best 3D design software. like

Get a virtual 3D wife in 30 seconds with a single card! Text to 3D generates a high-precision digital human with clear pore details, seamlessly connecting with Maya, Unity and other production tools

May 23, 2023 pm 02:34 PM

Get a virtual 3D wife in 30 seconds with a single card! Text to 3D generates a high-precision digital human with clear pore details, seamlessly connecting with Maya, Unity and other production tools

May 23, 2023 pm 02:34 PM

ChatGPT has injected a dose of chicken blood into the AI industry, and everything that was once unthinkable has become basic practice today. Text-to-3D, which continues to advance, is regarded as the next hotspot in the AIGC field after Diffusion (images) and GPT (text), and has received unprecedented attention. No, a product called ChatAvatar has been put into low-key public beta, quickly garnering over 700,000 views and attention, and was featured on Spacesoftheweek. △ChatAvatar will also support Imageto3D technology that generates 3D stylized characters from AI-generated single-perspective/multi-perspective original paintings. The 3D model generated by the current beta version has received widespread attention.

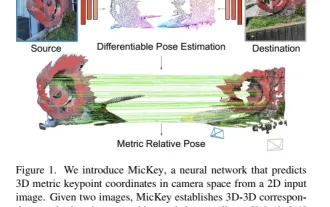

The latest from Oxford University! Mickey: 2D image matching in 3D SOTA! (CVPR\'24)

Apr 23, 2024 pm 01:20 PM

The latest from Oxford University! Mickey: 2D image matching in 3D SOTA! (CVPR\'24)

Apr 23, 2024 pm 01:20 PM

Project link written in front: https://nianticlabs.github.io/mickey/ Given two pictures, the camera pose between them can be estimated by establishing the correspondence between the pictures. Typically, these correspondences are 2D to 2D, and our estimated poses are scale-indeterminate. Some applications, such as instant augmented reality anytime, anywhere, require pose estimation of scale metrics, so they rely on external depth estimators to recover scale. This paper proposes MicKey, a keypoint matching process capable of predicting metric correspondences in 3D camera space. By learning 3D coordinate matching across images, we are able to infer metric relative

An in-depth interpretation of the 3D visual perception algorithm for autonomous driving

Jun 02, 2023 pm 03:42 PM

An in-depth interpretation of the 3D visual perception algorithm for autonomous driving

Jun 02, 2023 pm 03:42 PM

For autonomous driving applications, it is ultimately necessary to perceive 3D scenes. The reason is simple. A vehicle cannot drive based on the perception results obtained from an image. Even a human driver cannot drive based on an image. Because the distance of objects and the depth information of the scene cannot be reflected in the 2D perception results, this information is the key for the autonomous driving system to make correct judgments on the surrounding environment. Generally speaking, the visual sensors (such as cameras) of autonomous vehicles are installed above the vehicle body or on the rearview mirror inside the vehicle. No matter where it is, what the camera gets is the projection of the real world in the perspective view (PerspectiveView) (world coordinate system to image coordinate system). This view is very similar to the human visual system,