Open letter urges moratorium on artificial intelligence research

The Future of Life Institute has released an open letter calling for a six-month moratorium on some forms of artificial intelligence research. Citing "serious risks to society and humanity," the group asked AI labs to suspend research on AI systems more powerful than GPT-4 until more "safety guardrails" can be put in place around them.

In its open letter, dated March 22, the Future of Life Institute wrote: "AI systems with human-competitive intelligence can pose profound risks to society and humanity." It continued: "Advanced AI could represent a profound change in the history of life on Earth, and should be planned for and managed with commensurate care and resources. Unfortunately, this level of planning and management is not happening."

The institute argues that without an AI governance framework, such as the Asilomar AI Principles (23 guidelines developed by the institute and described as an expanded version of Asimov's famous Three Laws of Robotics), we lack the appropriate checks to ensure that artificial intelligence develops in a planned and controlled manner. That is the situation we face today, it says.

The letter reads: "Unfortunately, this level of planning and management is not happening, even though recent months have seen AI labs locked in an out-of-control race to develop and deploy ever more powerful digital minds that no one, not even their creators, can understand, predict, or reliably control."

In the absence of a voluntary moratorium by AI researchers, the Future of Life Institute urges governments to take action to prevent harm from continued research into large-scale AI models.

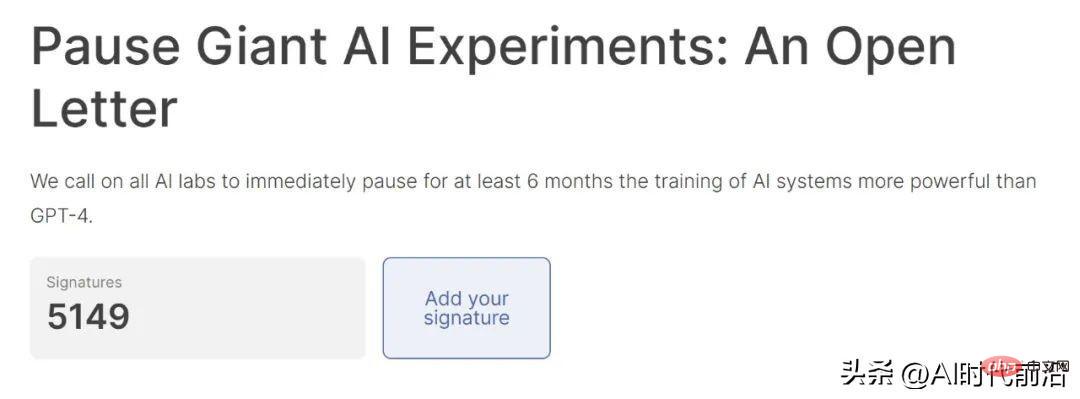

Top artificial intelligence researchers are divided over whether to pause research. More than 5,000 people have signed in support of the open letter, including Turing Award winner Yoshua Bengio, OpenAI co-founder Elon Musk and Apple co-founder Steve Wozniak.

However, not everyone is convinced that banning research on AI systems more powerful than GPT-4 is in our best interests.

"I did not sign this letter," Yann LeCun, Meta's chief artificial intelligence scientist and a Turing Award winner (he shared the 2018 award with Bengio and Geoffrey Hinton), said on Twitter. "I don't agree with its premise." The current artificial intelligence craze was kicked off more than a decade ago by research on neural networks. Deep neural networks have since become a major focus of artificial intelligence researchers around the world.

With the Transformer paper published by Google in 2017, artificial intelligence research went into overdrive. Soon, researchers noticed unexpected emergent capabilities in large language models (LLMs), such as the ability to learn mathematics, chain-of-thought reasoning, and instruction following.

The public got a taste of what these LLMs can do in late November 2022, when OpenAI released ChatGPT to the world. Since then, the tech community has been working to implement LLMs in everything it does, and the "arms race" to build bigger, more powerful models has gained additional momentum, as demonstrated by the release of GPT-4 on March 15.

While some AI experts have expressed concern about the negative impacts of LLMs, including a tendency to lie, the risk of private data disclosure, and the potential impact on employment, those concerns have done little to cool the overwhelming public demand for new AI capabilities. Nvidia CEO Jensen Huang says we may be at a turning point in artificial intelligence. But the genie seems to be out of the bottle, and there's no telling where it will go next.
