Overview: Collaborative sensing technology for autonomous driving

arXiv review paper "Collaborative Perception for Autonomous Driving: Current Status and Future Trend", August 23, 2022, Shanghai Jiao Tong University.

Perception is one of the key modules of an autonomous driving system. However, the limited capabilities of a single vehicle create a bottleneck for improving perception performance. To overcome the limits of single-vehicle perception, collaborative perception has been proposed: vehicles share information so that they can perceive the environment beyond their line of sight and field of view. This article reviews promising work on collaborative sensing technology, including basic concepts, collaboration modes, key elements, and applications. Finally, it discusses open challenges and issues in this research area and outlines future directions.

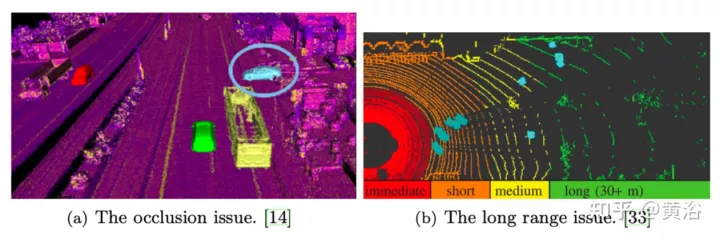

As shown in the figure, two important problems of single-vehicle perception are occlusion and sparse data at long range. The solution is for vehicles in the same area to share collective perception messages (CPMs) with each other and perceive the environment jointly, which is called collaborative perception or collaborative sensing.

Thanks to the construction of communication infrastructure and the development of communication technologies such as V2X, vehicles can exchange information in a reliable way, thereby achieving collaboration. Recent work has shown that collaborative sensing between vehicles can improve the accuracy of environmental perception as well as the robustness and safety of transportation systems.

In addition, autonomous vehicles are usually equipped with high-fidelity sensors for reliable perception, which makes them expensive. Collaborative sensing can relax the stringent requirements placed on a single vehicle's sensing equipment.

Collaborative sensing shares information with nearby vehicles and infrastructure, enabling autonomous vehicles to overcome certain perception limitations such as occlusion and a limited field of view. However, achieving real-time and robust collaborative sensing requires solving challenges caused by limited communication capacity and noise. Recently, some works have studied strategies for collaborative sensing, including what to collaborate on, when to collaborate, how to collaborate, and how to align shared information.

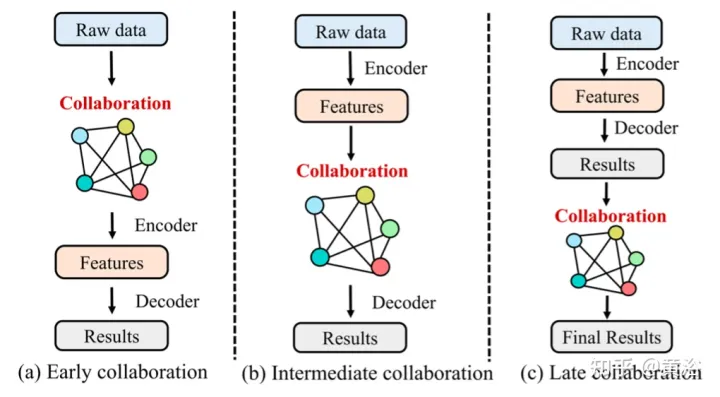

Similar to fusion, there are four categories of collaboration:

1 Early collaboration

Early collaboration takes place in the input space: vehicles and infrastructure share raw sensor data. It aggregates the raw measurements of all vehicles and infrastructure to obtain a holistic view. Each vehicle can then run the downstream processing and complete perception based on this holistic view, which fundamentally addresses the occlusion and long-range problems of single-vehicle perception. However, sharing raw sensor data requires a large amount of communication and can easily congest the network with excessive data load, which hinders its practical application in most cases.

2 Late collaboration

Late collaboration takes place in the output space: the perception results output by each agent are fused and refined. Although late collaboration is bandwidth-efficient, it is very sensitive to agents' positioning errors and suffers from high estimation error and noise because local observations are incomplete.

3 Intermediate collaboration

Intermediate collaboration takes place in the intermediate feature space. Agents transmit the intermediate features generated by their individual prediction models. After fusing these features, each agent decodes the fused features and produces a perception result. Conceptually, representative information can be compressed into these features, which saves communication bandwidth compared with early collaboration and improves perception compared with late collaboration. In practice, designing this collaboration strategy is algorithmically challenging in two respects: i) how to select the most effective and compact features from raw measurements for transmission; and ii) how to best integrate the features of other agents to enhance each agent's perception ability.

4 Hybrid collaboration

As mentioned above, each collaboration mode has its advantages and disadvantages. Therefore, some works adopt hybrid collaboration, combining two or more collaboration modes to optimize the collaboration strategy.

The main factors of collaborative sensing include:

1 Collaborative graph

A graph is a powerful tool for modeling collaborative sensing because it can model non-Euclidean data structures and has good interpretability. In some works, the vehicles participating in collaborative sensing form a complete collaborative graph, in which each vehicle is a node and the collaborative relationship between two vehicles is the edge between the two nodes.

2 Pose alignment

Since collaborative sensing requires fusing data from vehicles and infrastructure at different locations and at different times, achieving accurate data alignment is critical to successful collaborative sensing.

3 Information fusion

Information fusion is the core component of a multi-agent system. Its goal is to fuse the most informative parts of the information from other agents in an effective way.

4 Resource allocation based on reinforcement learning

The limited communication bandwidth in real environments requires full utilization of the available communication resources, which makes resource allocation and spectrum sharing very important. In vehicular communication environments, rapidly changing channel conditions and growing service demands make the allocation problem very complex and difficult to solve with traditional optimization methods. Some works use multi-agent reinforcement learning (MARL) to solve these optimization problems.
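Three of the elements above (collaborative graph, pose alignment, information fusion) can be illustrated together in a minimal 2D sketch. It is not taken from the reviewed paper. The function names, the `(x, y, yaw)` pose format, and the distance-based edge rule (`comm_range`) are assumptions made for illustration, and fusion is reduced to concatenating aligned points.

```python
import math

def to_ego_frame(points, sender_pose, ego_pose):
    """Re-express 2D points from the sender's local frame in the ego frame.

    Poses are (x, y, yaw) in a shared world frame, yaw in radians (assumed format).
    """
    sx, sy, syaw = sender_pose
    ex, ey, eyaw = ego_pose
    aligned = []
    for px, py in points:
        # Sender frame -> world frame (rotate by sender yaw, then translate).
        wx = sx + px * math.cos(syaw) - py * math.sin(syaw)
        wy = sy + px * math.sin(syaw) + py * math.cos(syaw)
        # World frame -> ego frame (inverse rigid transform).
        dx, dy = wx - ex, wy - ey
        aligned.append((dx * math.cos(eyaw) + dy * math.sin(eyaw),
                        -dx * math.sin(eyaw) + dy * math.cos(eyaw)))
    return aligned

def build_collaboration_graph(poses, comm_range):
    """Nodes are agent ids; an edge links two agents within comm_range metres.

    The range rule is an illustrative assumption; some works use a complete graph.
    """
    ids = sorted(poses)
    edges = set()
    for i, a in enumerate(ids):
        for b in ids[i + 1:]:
            if math.dist(poses[a][:2], poses[b][:2]) <= comm_range:
                edges.add((a, b))
    return edges

def fuse_for_ego(ego_id, poses, observations, edges):
    """Concatenate the ego's points with aligned points from its graph neighbours."""
    fused = list(observations[ego_id])
    for a, b in edges:
        if ego_id in (a, b):
            other = b if a == ego_id else a
            fused.extend(to_ego_frame(observations[other], poses[other], poses[ego_id]))
    return fused

# Hypothetical scene: one nearby car and one roadside unit that is out of range.
poses = {"ego": (0.0, 0.0, 0.0), "car_b": (10.0, 0.0, math.pi / 2), "rsu": (200.0, 0.0, 0.0)}
observations = {"ego": [(5.0, 0.0)], "car_b": [(2.0, 0.0)], "rsu": [(1.0, 1.0)]}
edges = build_collaboration_graph(poses, comm_range=50.0)
fused = fuse_for_ego("ego", poses, observations, edges)
```

In this scene only `car_b` is a neighbour of the ego vehicle, and its single point ends up at roughly (10, 2) in the ego frame. A pose error in `sender_pose` shifts every aligned point, which is why alignment accuracy matters so much for fusion.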

Applications of collaborative sensing:

1 3D object detection

3D object detection based on lidar point clouds is the most popular problem in collaborative sensing research. The reasons are as follows: i) lidar point clouds have more spatial dimensions than images and videos; ii) lidar point clouds can keep personal information, such as faces and license plate numbers, private to a certain extent; iii) point cloud data is an appropriate data type for fusion because it loses less information than pixels when aligned from different poses; iv) 3D object detection is a basic task for autonomous driving perception, on which many tasks such as tracking and motion prediction are based.
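For detection, the late collaboration mode described earlier amounts to merging the boxes reported by several agents. The following is a minimal sketch of one common way to do that, non-maximum suppression, and is not the method of any specific work in the review. It assumes boxes are already expressed in a common frame and simplifies them to axis-aligned bird's-eye-view rectangles `(x_min, y_min, x_max, y_max, score)`; real 3D boxes are oriented and include height.

```python
def bev_iou(a, b):
    """Intersection over union of two axis-aligned BEV boxes."""
    ix = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    iy = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = ix * iy
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def late_fuse(detections_per_agent, iou_threshold=0.5):
    """Merge per-agent detections, keeping the highest-scoring box per object."""
    candidates = sorted(
        (box for boxes in detections_per_agent for box in boxes),
        key=lambda box: box[4],
        reverse=True,
    )
    kept = []
    for box in candidates:
        # Keep a box only if it does not overlap strongly with a better one.
        if all(bev_iou(box, k) < iou_threshold for k in kept):
            kept.append(box)
    return kept

# Hypothetical detections: both agents see the same car, the second also sees another.
ego_boxes = [(0.0, 0.0, 2.0, 4.0, 0.6)]
other_boxes = [(0.1, 0.0, 2.1, 4.0, 0.9), (10.0, 10.0, 12.0, 14.0, 0.7)]
fused_boxes = late_fuse([ego_boxes, other_boxes])
```

Here the duplicate low-score box from the ego vehicle is suppressed and two boxes remain. Because the overlap test relies on the boxes being in the same frame, a positioning error can make duplicates survive, which matches the sensitivity of late collaboration noted above.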

2 Semantic Segmentation

Semantic segmentation of 3D scenes is also a key task required for autonomous driving. In collaborative semantic segmentation, given 3D scene observations (images, lidar point clouds, etc.) from multiple agents, a semantic segmentation mask is generated for each agent.
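As a rough illustration of how observations from several agents could contribute to one agent's mask, the sketch below averages per-cell class probabilities on a shared grid and takes the most likely class. This is an assumed, simplified scheme rather than an approach described in the review: the grids are taken to be already aligned to the same frame, `None` marks a cell an agent did not observe, and `-1` marks a cell nobody observed.

```python
def fuse_segmentation(prob_maps):
    """Fuse aligned per-agent probability grids into one class mask.

    prob_maps: list of grids; grid[r][c] is a list of class probabilities,
    or None if that agent did not observe the cell (assumed encoding).
    """
    rows, cols = len(prob_maps[0]), len(prob_maps[0][0])
    mask = [[-1] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            votes = [m[r][c] for m in prob_maps if m[r][c] is not None]
            if not votes:
                continue  # no agent observed this cell
            num_classes = len(votes[0])
            mean = [sum(v[k] for v in votes) / len(votes) for k in range(num_classes)]
            mask[r][c] = max(range(num_classes), key=mean.__getitem__)
    return mask

# Hypothetical 1x3 grid with two classes (0 = road, 1 = vehicle).
ego_map = [[[0.9, 0.1], None, None]]
other_map = [[[0.2, 0.8], [0.3, 0.7], None]]
fused_mask = fuse_segmentation([ego_map, other_map])
```

The second cell is occluded for the ego agent and is filled in from the other agent's observation, which is the benefit collaboration is meant to provide.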

Challenging issues:

1 Communication robustness

Effective collaboration relies on reliable communication between agents. However, communication is not perfect in practice: i) as the number of vehicles in the network increases, the communication bandwidth available to each vehicle becomes limited; ii) because of unavoidable communication delays, it is difficult for vehicles to receive real-time information from other vehicles; iii) communication may sometimes be interrupted; iv) V2X communication can be impaired, so reliable service cannot always be provided. Although communication technology continues to develop and the quality of communication services continues to improve, these problems will persist for a long time. Most existing works nevertheless assume that information can be shared in real time and without loss, so it is important for future work to consider these communication constraints and design robust collaborative sensing systems.
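One simple way to cope with delay and interruption on the receiving side is to discard stale messages and extrapolate the remaining ones to the current time. The sketch below shows this idea under a constant-velocity assumption. The `SharedObject` fields and the `max_age` threshold are hypothetical and do not follow any particular V2X message format.

```python
from dataclasses import dataclass

@dataclass
class SharedObject:
    """A shared object state (hypothetical fields, not a real CPM layout)."""
    sent_at: float  # sender timestamp in seconds
    x: float        # position in a common frame, metres
    y: float
    vx: float       # velocity, metres per second
    vy: float

def compensate_latency(messages, now, max_age=0.5):
    """Drop messages older than max_age and extrapolate the rest to time `now`."""
    usable = []
    for msg in messages:
        age = now - msg.sent_at
        if age < 0 or age > max_age:
            continue  # clock mismatch or too stale to trust
        # Constant-velocity extrapolation over the message age.
        usable.append((msg.x + msg.vx * age, msg.y + msg.vy * age))
    return usable

# Hypothetical inbox: one fresh message and one delayed by a full second.
inbox = [
    SharedObject(sent_at=9.9, x=0.0, y=0.0, vx=10.0, vy=0.0),
    SharedObject(sent_at=9.0, x=5.0, y=5.0, vx=0.0, vy=0.0),
]
current = compensate_latency(inbox, now=10.0)
```

With these inputs only the fresh message is kept, and its object is moved forward by about one metre. If every message is dropped, the ego vehicle simply falls back to its own perception.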

2 Heterogeneity and cross-modality

Most collaborative perception work focuses on lidar point cloud-based perception. However, many more types of data are available for sensing, such as images and millimeter-wave radar point clouds, and leveraging multimodal sensor data is a potential route to more effective collaboration. Furthermore, in some scenarios, vehicles with different levels of autonomy provide information of different quality. How to collaborate in heterogeneous vehicle networks is therefore a problem for the further practical application of collaborative sensing. Unfortunately, few works focus on heterogeneous and cross-modal collaborative sensing, so it remains an open challenge.

3 Large-scale data sets

The development of large-scale data sets and deep learning methods has improved perception performance. However, existing data sets in the field of collaborative sensing research are either small in size or not publicly available.

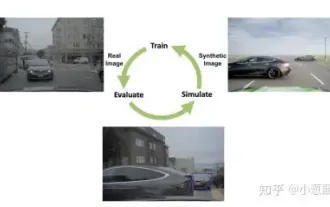

The lack of public large-scale data sets hinders further development of collaborative sensing. Furthermore, most data sets are based on simulations. Although simulation is an economical and safe method to verify algorithms, real data sets are also needed to apply collaborative sensing in practice.

The above is the detailed content of Overview: Collaborative sensing technology for autonomous driving. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1387

1387

52

52

Why is Gaussian Splatting so popular in autonomous driving that NeRF is starting to be abandoned?

Jan 17, 2024 pm 02:57 PM

Why is Gaussian Splatting so popular in autonomous driving that NeRF is starting to be abandoned?

Jan 17, 2024 pm 02:57 PM

Written above & the author’s personal understanding Three-dimensional Gaussiansplatting (3DGS) is a transformative technology that has emerged in the fields of explicit radiation fields and computer graphics in recent years. This innovative method is characterized by the use of millions of 3D Gaussians, which is very different from the neural radiation field (NeRF) method, which mainly uses an implicit coordinate-based model to map spatial coordinates to pixel values. With its explicit scene representation and differentiable rendering algorithms, 3DGS not only guarantees real-time rendering capabilities, but also introduces an unprecedented level of control and scene editing. This positions 3DGS as a potential game-changer for next-generation 3D reconstruction and representation. To this end, we provide a systematic overview of the latest developments and concerns in the field of 3DGS for the first time.

How to solve the long tail problem in autonomous driving scenarios?

Jun 02, 2024 pm 02:44 PM

How to solve the long tail problem in autonomous driving scenarios?

Jun 02, 2024 pm 02:44 PM

Yesterday during the interview, I was asked whether I had done any long-tail related questions, so I thought I would give a brief summary. The long-tail problem of autonomous driving refers to edge cases in autonomous vehicles, that is, possible scenarios with a low probability of occurrence. The perceived long-tail problem is one of the main reasons currently limiting the operational design domain of single-vehicle intelligent autonomous vehicles. The underlying architecture and most technical issues of autonomous driving have been solved, and the remaining 5% of long-tail problems have gradually become the key to restricting the development of autonomous driving. These problems include a variety of fragmented scenarios, extreme situations, and unpredictable human behavior. The "long tail" of edge scenarios in autonomous driving refers to edge cases in autonomous vehicles (AVs). Edge cases are possible scenarios with a low probability of occurrence. these rare events

Choose camera or lidar? A recent review on achieving robust 3D object detection

Jan 26, 2024 am 11:18 AM

Choose camera or lidar? A recent review on achieving robust 3D object detection

Jan 26, 2024 am 11:18 AM

0.Written in front&& Personal understanding that autonomous driving systems rely on advanced perception, decision-making and control technologies, by using various sensors (such as cameras, lidar, radar, etc.) to perceive the surrounding environment, and using algorithms and models for real-time analysis and decision-making. This enables vehicles to recognize road signs, detect and track other vehicles, predict pedestrian behavior, etc., thereby safely operating and adapting to complex traffic environments. This technology is currently attracting widespread attention and is considered an important development area in the future of transportation. one. But what makes autonomous driving difficult is figuring out how to make the car understand what's going on around it. This requires that the three-dimensional object detection algorithm in the autonomous driving system can accurately perceive and describe objects in the surrounding environment, including their locations,

The Stable Diffusion 3 paper is finally released, and the architectural details are revealed. Will it help to reproduce Sora?

Mar 06, 2024 pm 05:34 PM

The Stable Diffusion 3 paper is finally released, and the architectural details are revealed. Will it help to reproduce Sora?

Mar 06, 2024 pm 05:34 PM

StableDiffusion3’s paper is finally here! This model was released two weeks ago and uses the same DiT (DiffusionTransformer) architecture as Sora. It caused quite a stir once it was released. Compared with the previous version, the quality of the images generated by StableDiffusion3 has been significantly improved. It now supports multi-theme prompts, and the text writing effect has also been improved, and garbled characters no longer appear. StabilityAI pointed out that StableDiffusion3 is a series of models with parameter sizes ranging from 800M to 8B. This parameter range means that the model can be run directly on many portable devices, significantly reducing the use of AI

This article is enough for you to read about autonomous driving and trajectory prediction!

Feb 28, 2024 pm 07:20 PM

This article is enough for you to read about autonomous driving and trajectory prediction!

Feb 28, 2024 pm 07:20 PM

Trajectory prediction plays an important role in autonomous driving. Autonomous driving trajectory prediction refers to predicting the future driving trajectory of the vehicle by analyzing various data during the vehicle's driving process. As the core module of autonomous driving, the quality of trajectory prediction is crucial to downstream planning control. The trajectory prediction task has a rich technology stack and requires familiarity with autonomous driving dynamic/static perception, high-precision maps, lane lines, neural network architecture (CNN&GNN&Transformer) skills, etc. It is very difficult to get started! Many fans hope to get started with trajectory prediction as soon as possible and avoid pitfalls. Today I will take stock of some common problems and introductory learning methods for trajectory prediction! Introductory related knowledge 1. Are the preview papers in order? A: Look at the survey first, p

Let's talk about end-to-end and next-generation autonomous driving systems, as well as some misunderstandings about end-to-end autonomous driving?

Apr 15, 2024 pm 04:13 PM

Let's talk about end-to-end and next-generation autonomous driving systems, as well as some misunderstandings about end-to-end autonomous driving?

Apr 15, 2024 pm 04:13 PM

In the past month, due to some well-known reasons, I have had very intensive exchanges with various teachers and classmates in the industry. An inevitable topic in the exchange is naturally end-to-end and the popular Tesla FSDV12. I would like to take this opportunity to sort out some of my thoughts and opinions at this moment for your reference and discussion. How to define an end-to-end autonomous driving system, and what problems should be expected to be solved end-to-end? According to the most traditional definition, an end-to-end system refers to a system that inputs raw information from sensors and directly outputs variables of concern to the task. For example, in image recognition, CNN can be called end-to-end compared to the traditional feature extractor + classifier method. In autonomous driving tasks, input data from various sensors (camera/LiDAR

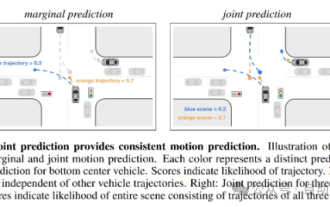

SIMPL: A simple and efficient multi-agent motion prediction benchmark for autonomous driving

Feb 20, 2024 am 11:48 AM

SIMPL: A simple and efficient multi-agent motion prediction benchmark for autonomous driving

Feb 20, 2024 am 11:48 AM

Original title: SIMPL: ASimpleandEfficientMulti-agentMotionPredictionBaselineforAutonomousDriving Paper link: https://arxiv.org/pdf/2402.02519.pdf Code link: https://github.com/HKUST-Aerial-Robotics/SIMPL Author unit: Hong Kong University of Science and Technology DJI Paper idea: This paper proposes a simple and efficient motion prediction baseline (SIMPL) for autonomous vehicles. Compared with traditional agent-cent

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

Target detection is a relatively mature problem in autonomous driving systems, among which pedestrian detection is one of the earliest algorithms to be deployed. Very comprehensive research has been carried out in most papers. However, distance perception using fisheye cameras for surround view is relatively less studied. Due to large radial distortion, standard bounding box representation is difficult to implement in fisheye cameras. To alleviate the above description, we explore extended bounding box, ellipse, and general polygon designs into polar/angular representations and define an instance segmentation mIOU metric to analyze these representations. The proposed model fisheyeDetNet with polygonal shape outperforms other models and simultaneously achieves 49.5% mAP on the Valeo fisheye camera dataset for autonomous driving