Nine ways to use machine learning to launch attacks

Machine learning and artificial intelligence (AI) are becoming core technologies for some threat detection and response tools. Their ability to learn on the fly and automatically adapt to changing cyber threats empowers security teams.

However, some malicious hackers also use machine learning and AI to scale up their cyberattacks, circumvent security controls, and find new vulnerabilities at unprecedented speed, with devastating consequences. Common ways hackers exploit these two technologies are as follows.

1. Spam

Omdia analyst Fernando Montenegro said that defenders have been using machine learning to detect spam for decades. “Spam prevention is the most successful initial use case for machine learning,” he said. But if a spam filter explains why a message was rejected or returns some kind of score, attackers can use that feedback to adjust their behavior, turning a legitimate tool into a way to make their attacks more successful. "With enough submissions, you can recover what the model is, and then you can tailor your attack to bypass that model."

It's not just spam filters that are vulnerable. Any security vendor that provides a score or some other output is open to abuse. "Not everyone has this problem, but if you're not careful, someone will take advantage of this output."
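One way to limit this kind of probing is to expose only a coarse verdict instead of the raw score. The sketch below illustrates that idea under assumptions of our own: the function name `public_verdict`, the thresholds, and the labels are hypothetical and do not come from any vendor's product.

```python
def public_verdict(raw_score: float, block_at: float = 0.8, review_at: float = 0.5) -> str:
    """Map an internal spam score in [0, 1] to a coarse label.

    Returning only a label hides how close a message came to a threshold,
    so each submission leaks less about the model than the raw score would.
    The thresholds are illustrative assumptions, not recommended values.
    """
    if not 0.0 <= raw_score <= 1.0:
        raise ValueError("raw_score must be between 0 and 1")
    if raw_score >= block_at:
        return "blocked"
    if raw_score >= review_at:
        return "quarantined"
    return "delivered"


# Two messages with different internal scores look identical from outside.
print(public_verdict(0.51), public_verdict(0.79))
```

Coarse labels do not make reconstruction impossible, since an attacker can still observe which messages cross a threshold, but they reduce how much each submission reveals.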

2. More sophisticated phishing emails

Attackers don’t just use machine learning security tools to test whether their emails can pass spam filters. They also use machine learning to craft these emails. "They advertise these services on criminal forums. They use these techniques to generate more sophisticated phishing emails and create false personas to advance their scams," said Adam Malone, a technology consulting partner at Ernst & Young.

These services highlight their use of machine learning in their advertising, and that may be reality rather than just marketing rhetoric. “You’ll know if you try it,” Malone said. “The effect is really good.”

Attackers can use machine learning to creatively customize phishing emails so that they are not marked as spam, giving targeted users a chance to click through. They customize more than just the email text. Attackers also use AI to generate realistic-looking photos, social media profiles, and other materials to make communications appear as authentic and trustworthy as possible.

3. More efficient password guessing

Cybercriminals also use machine learning to guess passwords. "We have evidence that they are using password guessing engines more frequently and with higher success rates." Cybercriminals are creating better dictionaries to crack stolen hashes.

They also use machine learning to identify security controls so that they can guess passwords with fewer attempts, increasing the probability of successfully breaching a system.

4. Deepfakes

The most alarming misuse of artificial intelligence is deepfake tools, which generate video or audio that is hard to distinguish from the real thing. "Being able to imitate other people's voices or looks is very effective in deceiving people," Montenegro said. "If someone faked my voice, you would probably be tricked as well."

In fact, a series of major cases disclosed in the past few years show that fake audio can cost companies hundreds, thousands, or even millions of dollars. "People will get calls from their bosses, and it's fake," said Murat Kantarcioglu, a professor of computer science at the University of Texas. More commonly, scammers use AI to generate photos, user profiles, and phishing emails that look authentic, making their messages appear more trustworthy. This is big business. According to an FBI report, business email fraud has resulted in losses of more than $43 billion since 2016. Last fall, media reported that a bank in Hong Kong was defrauded into transferring $35 million to a criminal gang simply because a bank employee received a call from a company director whom he knew. He recognized the director's voice and authorized the transfer without question.

5. Neutralize off-the-shelf security tools

Many security tools commonly used today have some form of artificial intelligence or machine learning built into them. For example, antivirus software relies on more than basic signatures when looking for suspicious behavior. "Anything available on the Internet, especially open source, can be exploited by bad actors."

Attackers can use these tools not to defend against attacks but to tweak their own malware until it evades detection. "AI models have many blind spots," Kantarcioglu said. "You can adjust it by changing the characteristics of the attack, such as the number of packets sent, the resources of the attack, etc."

Moreover, attackers are leveraging more than just AI-powered security tools. AI is just one of a bunch of different technologies. For example, users can often learn to identify phishing emails by looking for grammatical errors. And AI-powered grammar checkers, like Grammarly, can help attackers improve their writing.

6. Reconnaissance

Machine learning can be used for reconnaissance, allowing attackers to view a target’s traffic patterns, defenses, and potential vulnerabilities. Reconnaissance is not an easy task and is beyond the reach of ordinary cybercriminals. "If you want to use AI for reconnaissance, you have to have certain skills. So, I think that only advanced state hackers will use these technologies."

However, once this technology is commercialized to some extent and offered as a service on the underground black market, many more people can take advantage of it. “This could also happen if a nation-state threat actor develops a toolkit that uses machine learning and releases it to the criminal community,” Mellen said. “But cybercriminals would still need to understand what the machine learning application does and how to use it effectively, and that is the barrier to exploitation.”

7. Autonomous Agent

If an enterprise discovers that it is under attack and disconnects the affected systems from the Internet, the malware may not be able to connect back to its command and control (C2) server to receive further instructions. "An attacker may want to develop an intelligent model that can persist for a long time even if it cannot be directly controlled," Kantarcioglu said. "But for ordinary cybercrime, I don't think this is particularly important."

8. AI Poisoning

An attacker can trick a machine learning model by feeding it new information. Alexey Rubtsov, senior associate researcher at the Global Risk Institute, said: "Adversaries can manipulate training data sets. For example, they deliberately bias the model so that the machine learns the wrong way."

For example, a hacker can manipulate a hijacked user account to log in to the system at 2 a.m. every day to perform harmless work, causing the system to conclude that there is nothing suspicious about working at 2 a.m. and to reduce the security checks the user must pass.

The Microsoft Tay chatbot was taught to be racist in 2016 for a similar reason. The same approach can be used to train a system to think that a specific type of malware is safe, or that a specific crawler behavior is completely normal.
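The 2 a.m. login scenario can be illustrated with a toy anomaly check. This is a minimal sketch under stated assumptions, not a description of any real product: `is_anomalous`, the z-score threshold, and the sample login hours are all hypothetical, and hours are treated as plain numbers (ignoring that the clock wraps around at midnight).

```python
from statistics import mean, pstdev


def is_anomalous(hour: int, history: list[int], z_threshold: float = 2.0) -> bool:
    """Flag a login hour that lies far from the mean of past login hours."""
    mu = mean(history)
    sigma = pstdev(history) or 1.0  # fall back to 1.0 if all past hours are identical
    return abs(hour - mu) / sigma > z_threshold


# Baseline: a user who normally logs in during office hours.
clean_history = [9, 10, 9, 11, 10, 9, 10, 11, 9, 10]
print(is_anomalous(2, clean_history))     # True: 2 a.m. stands out

# The same history after 30 harmless 2 a.m. logins were absorbed into the baseline.
poisoned_history = clean_history + [2] * 30
print(is_anomalous(2, poisoned_history))  # False: 2 a.m. now looks normal
```

The detector itself is unchanged between the two calls; only its training data moved. A defender might counter this by capping how quickly a baseline can shift or by reviewing new behavior before it is learned.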

9. AI Fuzz Testing

Legitimate software developers and penetration testers use fuzz testing software to generate random sample inputs in an attempt to crash an application or find vulnerabilities. Enhanced versions of this type of software use machine learning to generate input in a more targeted and organized manner, such as prioritizing the text strings most likely to cause problems. This type of fuzz testing tool can achieve better testing results when used by enterprises, but it is also more deadly in the hands of attackers.
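To make the baseline concrete, here is a minimal sketch of plain random fuzzing against a deliberately fragile toy parser. It is not ML-guided; the machine learning variants described above would replace the random generator with one that prioritizes promising inputs. The names `parse_key_value` and `fuzz` are hypothetical and exist only for this illustration.

```python
import random
import string


def parse_key_value(text: str) -> tuple[str, str]:
    """Hypothetical target: parses 'key=value' but mishandles input without '='."""
    key, value = text.split("=", 1)  # raises ValueError when '=' is missing
    return key, value


def fuzz(target, runs: int = 200, max_len: int = 8, seed: int = 0) -> list[str]:
    """Feed random strings to `target` and collect the inputs that raise an exception."""
    rng = random.Random(seed)  # fixed seed so a run can be reproduced
    alphabet = string.ascii_letters + "=&"
    crashes = []
    for _ in range(runs):
        length = rng.randint(0, max_len)
        candidate = "".join(rng.choice(alphabet) for _ in range(length))
        try:
            target(candidate)
        except Exception:
            crashes.append(candidate)
    return crashes


crashing_inputs = fuzz(parse_key_value)
print(f"{len(crashing_inputs)} of 200 random inputs crashed the parser")
```

Every crashing input here lacks an `=` character, which points straight at the unguarded `split` call. Real fuzzers add coverage feedback and input mutation on top of this loop.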

These techniques are among the reasons why cybersecurity approaches such as security patches, anti-phishing education, and micro-segmentation remain vital. "That's one of the reasons why defense in depth is so important," said Forrester's Mellen. "You have to put up multiple roadblocks, not just the one that attackers can use against you."

Lack of expertise hinders malicious hackers from exploiting machine learning and AI

Investing in machine learning requires a lot of expertise, and machine learning expertise is currently a scarce skill. And because many vulnerabilities remain unpatched, attackers have plenty of easier ways to break through corporate defenses.

“There is a lot of low-hanging fruit, and there are a lot of other ways to make money without using machine learning and artificial intelligence to launch attacks,” Mellen said. “In my experience, in the vast majority of cases, attackers do not utilize these techniques.” However, as enterprise defenses improve and cybercriminals and nation-states continue to invest in attack development, the balance may soon begin to shift.

The above is the detailed content of Nine ways to use machine learning to launch attacks. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1378

1378

52

52

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

In the fields of machine learning and data science, model interpretability has always been a focus of researchers and practitioners. With the widespread application of complex models such as deep learning and ensemble methods, understanding the model's decision-making process has become particularly important. Explainable AI|XAI helps build trust and confidence in machine learning models by increasing the transparency of the model. Improving model transparency can be achieved through methods such as the widespread use of multiple complex models, as well as the decision-making processes used to explain the models. These methods include feature importance analysis, model prediction interval estimation, local interpretability algorithms, etc. Feature importance analysis can explain the decision-making process of a model by evaluating the degree of influence of the model on the input features. Model prediction interval estimate

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

In layman’s terms, a machine learning model is a mathematical function that maps input data to a predicted output. More specifically, a machine learning model is a mathematical function that adjusts model parameters by learning from training data to minimize the error between the predicted output and the true label. There are many models in machine learning, such as logistic regression models, decision tree models, support vector machine models, etc. Each model has its applicable data types and problem types. At the same time, there are many commonalities between different models, or there is a hidden path for model evolution. Taking the connectionist perceptron as an example, by increasing the number of hidden layers of the perceptron, we can transform it into a deep neural network. If a kernel function is added to the perceptron, it can be converted into an SVM. this one

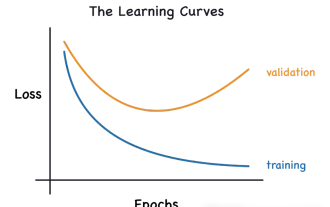

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

This article will introduce how to effectively identify overfitting and underfitting in machine learning models through learning curves. Underfitting and overfitting 1. Overfitting If a model is overtrained on the data so that it learns noise from it, then the model is said to be overfitting. An overfitted model learns every example so perfectly that it will misclassify an unseen/new example. For an overfitted model, we will get a perfect/near-perfect training set score and a terrible validation set/test score. Slightly modified: "Cause of overfitting: Use a complex model to solve a simple problem and extract noise from the data. Because a small data set as a training set may not represent the correct representation of all data." 2. Underfitting Heru

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

In the 1950s, artificial intelligence (AI) was born. That's when researchers discovered that machines could perform human-like tasks, such as thinking. Later, in the 1960s, the U.S. Department of Defense funded artificial intelligence and established laboratories for further development. Researchers are finding applications for artificial intelligence in many areas, such as space exploration and survival in extreme environments. Space exploration is the study of the universe, which covers the entire universe beyond the earth. Space is classified as an extreme environment because its conditions are different from those on Earth. To survive in space, many factors must be considered and precautions must be taken. Scientists and researchers believe that exploring space and understanding the current state of everything can help understand how the universe works and prepare for potential environmental crises

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Common challenges faced by machine learning algorithms in C++ include memory management, multi-threading, performance optimization, and maintainability. Solutions include using smart pointers, modern threading libraries, SIMD instructions and third-party libraries, as well as following coding style guidelines and using automation tools. Practical cases show how to use the Eigen library to implement linear regression algorithms, effectively manage memory and use high-performance matrix operations.

Canada plans to ban hacking tool Flipper Zero as car theft problem surges

Jul 17, 2024 am 03:06 AM

Canada plans to ban hacking tool Flipper Zero as car theft problem surges

Jul 17, 2024 am 03:06 AM

This website reported on February 12 that the Canadian government plans to ban the sale of hacking tool FlipperZero and similar devices because they are labeled as tools that thieves can use to steal cars. FlipperZero is a portable programmable test tool that helps test and debug various hardware and digital devices through multiple protocols, including RFID, radio, NFC, infrared and Bluetooth, and has won the favor of many geeks and hackers. Since the release of the product, users have demonstrated FlipperZero's capabilities on social media, including using replay attacks to unlock cars, open garage doors, activate doorbells and clone various digital keys. ▲FlipperZero copies the McLaren keychain and unlocks the car Canadian Industry Minister Franço

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Translator | Reviewed by Li Rui | Chonglou Artificial intelligence (AI) and machine learning (ML) models are becoming increasingly complex today, and the output produced by these models is a black box – unable to be explained to stakeholders. Explainable AI (XAI) aims to solve this problem by enabling stakeholders to understand how these models work, ensuring they understand how these models actually make decisions, and ensuring transparency in AI systems, Trust and accountability to address this issue. This article explores various explainable artificial intelligence (XAI) techniques to illustrate their underlying principles. Several reasons why explainable AI is crucial Trust and transparency: For AI systems to be widely accepted and trusted, users need to understand how decisions are made

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

MetaFAIR teamed up with Harvard to provide a new research framework for optimizing the data bias generated when large-scale machine learning is performed. It is known that the training of large language models often takes months and uses hundreds or even thousands of GPUs. Taking the LLaMA270B model as an example, its training requires a total of 1,720,320 GPU hours. Training large models presents unique systemic challenges due to the scale and complexity of these workloads. Recently, many institutions have reported instability in the training process when training SOTA generative AI models. They usually appear in the form of loss spikes. For example, Google's PaLM model experienced up to 20 loss spikes during the training process. Numerical bias is the root cause of this training inaccuracy,