AI writes novels, paints pictures, and edits videos. Generative AI is more popular than ever!

Generative AI has recently surged in popularity again. A WeChat mini program called "Dream Stealer" became an instant hit, at one point adding a record 50,000 new users per day.

Dream Stealer is an AI platform that generates images from input text. It belongs to the category of AIGC (AI-Generated Content).

After the user enters a text description, Dream Stealer can generate pictures in three aspect ratios (1:1, 9:16, and 16:9) and in 24 painting styles. In addition to basic styles such as oil painting, watercolor, and sketch, these include special styles such as cyberpunk, vaporwave, pixel art, Ghibli, and CG rendering.

Picture: generated by a Technology Cloud Report editor using the "Dream Stealer" WeChat mini program

In fact, this is not the first text-to-image AI software. From Midjourney to Stable Diffusion, generative AI has been one of the hottest topics of the past two years.

As an important direction in the development of AI, generative AI has great potential for development.

According to Gartner data from the first half of the year, generative AI is expected to account for 10% of all data produced by 2025, up from less than 1% today.

Some people believe that 2022 will be the first year in which generative AI matures as a technology and begins to penetrate the fabric of society.

The explosive growth of generative AI: from pictures to videos

In recent years, the development of AI technology in the visual field can be described as "rapid".

In January last year, OpenAI, a company dedicated to ensuring that artificial general intelligence "benefits all of humanity", released the groundbreaking DALL-E, a model based on GPT-3 that generates images from text.

In April this year, OpenAI released the second-generation model, DALL-E 2, which once again set a new benchmark in the field of image generation.

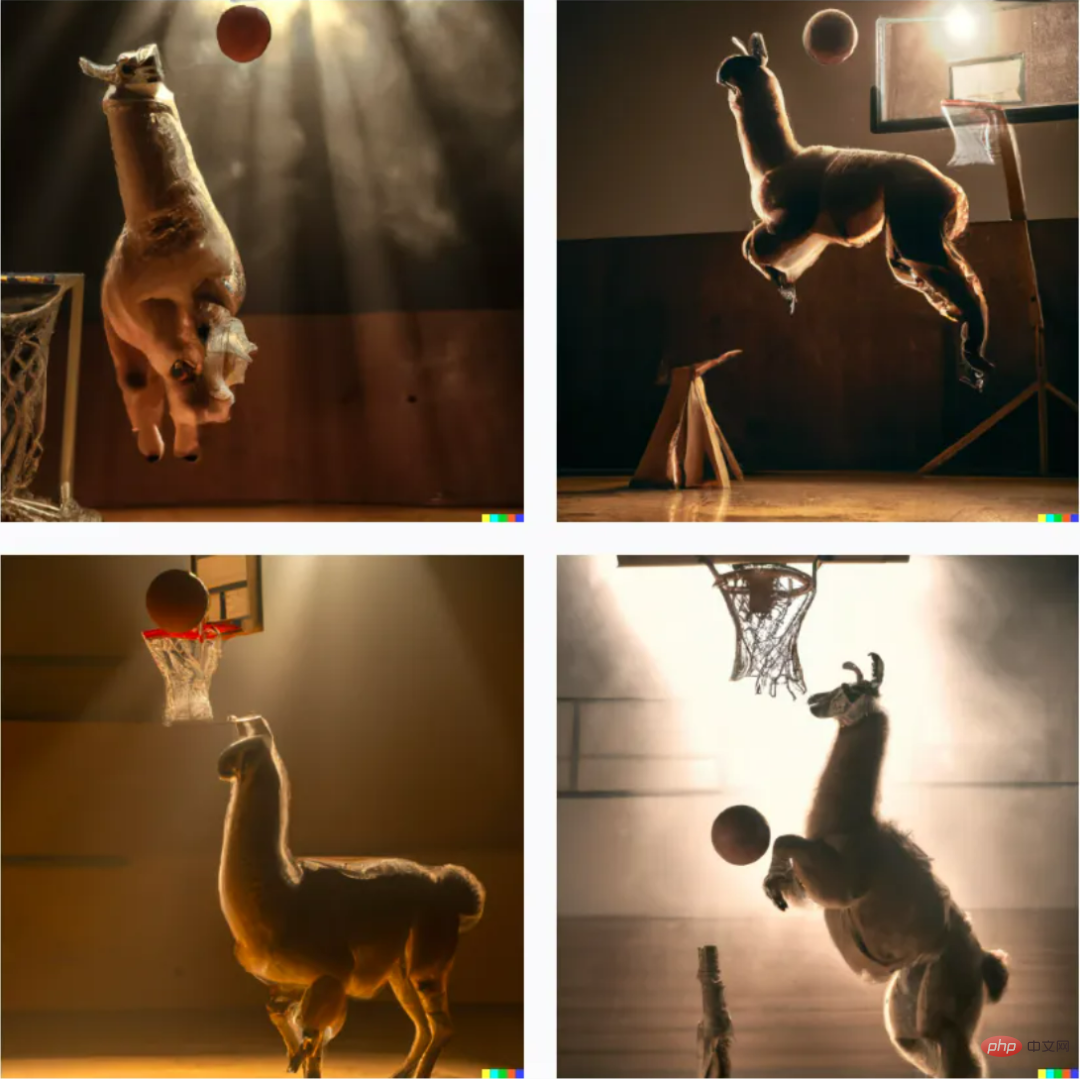

Users can generate images from short text descriptions (prompts), so that people who cannot draw can still turn their imagination into artistic creations. For example, the four pictures generated from the sentence "Alpaca playing basketball" look very much like what most people would imagine.

DALL-E 2 model generated image example

Moreover, as the text description becomes more fine-grained, the generated images become more refined and more accurate, and the results can be quite striking to non-professionals.

But models like DALL-E 2 are still confined to two-dimensional creation, that is, image generation. They cannot generate 3D models that can be viewed from any angle.

However, this has not stopped inventive algorithm researchers. DreamFusion, one of the latest results from Google Research, can generate 3D models from simple text prompts. The generated models can be rendered under different lighting conditions and have properties such as density and color. Multiple generated 3D models can even be combined into a single scene.

With 3D generation in place, researchers at Meta went a step further and took on a harder challenge: generating videos directly from text prompts.

Although a video is essentially a sequence of images, generating a video from text is harder than generating a single image. The model must not only generate multiple frames of the same scene but also keep adjacent frames coherent. Because very little high-quality video data is available for training while the amount of computation required is very large, the video generation task is considerably more complex.

In September this year, researchers from Meta released Make-A-Video, an AI-based model for generating high-quality short videos. It is effectively the video version of DALL-E and has been nicknamed "Make videos with your mouth", meaning that new video content can be created from text prompts. The key technology behind it also comes from the text-to-image synthesis used by image generators such as DALL-E.

Only one week later, Google CEO Sundar Pichai officially announced two models that challenge Meta's Make-A-Video head-on: Imagen Video and Phenaki.

Compared with Make-A-Video, Imagen Video emphasizes high definition. It can generate video clips at a resolution of 1280*768 and 24 frames per second, and it can understand and generate works in different artistic styles. It understands the 3D structure of objects, so they do not deform when shown rotating. It also inherits Imagen's ability to render text accurately, and on that basis it can produce a variety of creative animations from simple descriptions alone.

Imagen Video generated video example

Phenaki, for its part, can generate a lower-resolution long video of more than 2 minutes from a prompt of about 200 words, telling a relatively complete story.

Phenaki Generated Video Example

Currently, there are many generative AI applications in China.

For example, ByteDance's Jianying app provides AI video generation that can be used for free.

Jianying's text-to-video function works similarly to Google's models. Creators can generate a creative short video from a few keywords or a short paragraph of text.

Jianying can also intelligently match video materials to a text description and package videos into content for more specialized verticals, including finance, history, the humanities, and other categories.

In January 2022, NetEase launched the one-stop AI music creation platform "NetEase Tianyin", which uses AI to turn New Year greetings written by users into songs. A professional web version followed in the first half of the year.

In September 2021, the Caiyun Xiaomeng app was launched. It can create various types of text: users only need to provide an opening of 1 to 1000 words, and Caiyun Xiaomeng continues the story from there.

In fact, AI creation takes many forms. Applied to writing, generative AI can produce machine versions of journalists, novelists, poets, and screenwriters. Applied to painting, music, and dance, it can "cultivate" painters, composers, and choreographers.

Behind the explosion of generative AI

Over the past year, generative AI has advanced even further. Software giants in the AI field such as Google, Microsoft, and Meta have promoted the technology internally and integrated generative AI into their products.

Why is generative AI suddenly popular?

In fact, generative AI technology has been developing rapidly for some time. However, because of its high technical barrier to entry, it was mostly limited to a small circle within the technology world.

Looking back at the history of AI, the explosion of generative AI is inseparable from three factors: better models, more data, and more compute.

Before 2015, small models were considered the state of the art for understanding language. These small models excel at analytical tasks and are deployed in jobs ranging from predicting delivery times to classifying fraud.

However, they are not expressive enough for general-purpose generation tasks. Generating human-level writing or code remained just a dream.

In 2017, Google Research published a landmark paper, "Attention Is All You Need", describing a new neural network architecture for natural language understanding called the Transformer. It can produce higher-quality language models while being more parallelizable and requiring significantly less training time.

As models grow larger, they begin to match and then surpass human performance. The amount of computation used to train these models increased by six orders of magnitude from 2015 to 2020, and their results exceeded human performance benchmarks in handwriting, speech and image recognition, reading comprehension, and language understanding.
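
To put the "six orders of magnitude" figure in perspective, the following minimal sketch converts it into an annual growth factor and a doubling time. It assumes steady exponential growth over exactly five years, which is a simplification not stated in the original; the function name `growth_summary` is hypothetical and used only for illustration.

```python
import math

def growth_summary(orders_of_magnitude: float, years: float) -> tuple[float, float]:
    """Return (annual growth factor, doubling time in months).

    Assumes growth is steadily exponential over the whole period,
    which is an illustrative simplification.
    """
    total_factor = 10 ** orders_of_magnitude           # e.g. 10^6 overall
    annual_factor = total_factor ** (1 / years)        # growth per year
    doubling_months = 12 * years * math.log(2) / math.log(total_factor)
    return annual_factor, doubling_months

# Six orders of magnitude over five years (2015 to 2020, assumed span).
annual, doubling = growth_summary(6, 5)
print(f"{annual:.1f}x per year, doubling every {doubling:.1f} months")
```

Under these assumptions, training compute grew roughly sixteenfold per year, which corresponds to doubling about every three months.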

Among them, OpenAI's GPT-3 stands out. Its performance is a huge leap over GPT-2, with stronger capabilities in tasks ranging from code generation to joke writing.

Despite all the advances in fundamental research, these models were not yet widespread.

They were large, difficult to run (requiring GPU orchestration), not broadly accessible (unavailable or in closed beta only), and expensive to use as a cloud service.

Despite these limitations, the earliest generative AI applications began to enter the fray.

Afterwards, as compute became cheaper, the industry continued to develop better algorithms and larger models.

Developer access expanded from closed beta to open beta or, in some cases, open source.

Now that the platform layer is solid, coupled with models continuing to get better, faster, and cheaper, and access to models tending to be free and open source, the AI application layer is ripe for creativity to explode.

For example, in August this year, the text-to-image generation model Stable Diffusion was open sourced. Later developers can build on this open source tool to cultivate a richer content ecosystem, and it plays a vital role in bringing the technology to a much wider range of consumer users.

The popularity of Stable Diffusion essentially shows that open source unleashes creativity.

Generative AI faces real challenges

The venture capital firm Sequoia Capital wrote in a blog post on its official website: "Generative AI has the potential to generate trillions of dollars of economic value."

According to Sequoia Capital, generative AI can transform every industry that requires humans to create original works, from games to advertising to law.

Specifically, the future application scenarios of generative AI are very broad. In addition to content production industries such as cultural creation and news, generative AI has rich application prospects in health care, digital commerce, manufacturing, agriculture, and other industries. Examples include helping doctors detect lesions in X-ray, CT, and other scans, creating digital twins of goods, and assisting in product quality testing.

There is also abundant application space in popular technologies such as XR, digital twins, and autonomous vehicles.

It is worth noting, however, that current generative AI still has many problems to solve.

For example, in the entertainment field, one reason many people use generative AI for creation is to avoid copyright issues, but this does not mean there are no hidden risks.

On the one hand, AI creation works by recombining learned data according to the request. Although the granularity is becoming finer and finer, sharp-eyed viewers will inevitably spot what may be borrowed material. Some netizens have even said on social platforms that they could faintly see traces of what looked like a signature in an AI-generated picture.

On the other hand, most current AI generation platforms either do not claim copyright or explicitly state that the output can be used commercially. However, as generative AI is gradually commercialized, whether this copyright environment will persist, and whether new copyright issues will arise, still needs to be discussed.

The logic and safety of generative AI also need to improve. Current generative AI is prone to common-sense mistakes and to problems in tasks that require long-term memory.

For example, AI-generated novels often contain inconsistencies because of their length.

Therefore, even though generative AI can already be applied in many fields, putting it to real work requires extensive training to avoid heavy losses caused by AI "mistakes".

After all, application scenarios such as medical and manufacturing do not have the same room for trial and error as the cultural and creative industries.

Conclusion

Although generative AI still cannot do without human intervention, it undeniably has great potential for development.

The emergence of generative AI means that AI begins to assume a new role in real-life content, expanding from "observation and prediction" to "direct generation and decision-making". In other words, generative AI creates, not just analyzes.

As OpenAI CEO Sam Altman said: "Generative AI reminds us that it is difficult to make predictions about artificial intelligence.

Ten years ago, the conventional wisdom was: AI would impact physical labor first; then, cognitive labor; and then, maybe one day, it could do creative work. Now it looks like it will happen in the reverse order."
