Meta researchers make a new AI attempt: teaching robots to navigate physically without maps or training

Meta Platforms' artificial intelligence division recently said it is teaching AI models to navigate the physical world with only a small amount of training data, and that it has made rapid progress.

This research could significantly shorten the time it takes an AI model to acquire visual navigation capabilities. Previously, reaching that goal required repeated rounds of "reinforcement learning" on large datasets.

Meta AI researchers said that this work on AI visual navigation will have a significant impact on the virtual world. The basic idea of the project is simple: help AI navigate physical space the way humans do, purely through observation and exploration.

Meta's AI division explained: "For example, if we want AR glasses to help us find our keys, we need a way for AI to understand the layout of unfamiliar and ever-changing environments. After all, this is a very fine-grained, everyday need, and we cannot rely forever on high-precision preset maps that consume a lot of computing power. Humans do not need to know the exact position or length of a coffee table to move around its corners without bumping into it."

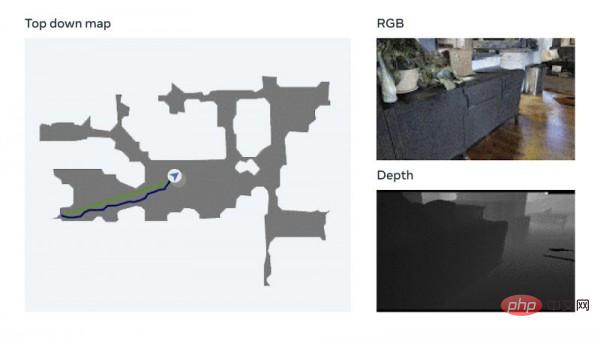

To this end, Meta has focused its efforts on "embodied AI", that is, training AI systems through interaction in 3D simulations. In this area, Meta said it has built a promising point-goal navigation (PointNav) model that can navigate new environments without any map or GPS sensor.

The model uses a technique called visual odometry, which lets the AI track its current position from visual input alone. Meta said its data augmentation technique makes it possible to train effective neural models quickly, without manual data annotation. Meta also said it tested the approach on its own Habitat 2.0 embodied AI training platform, running the Realistic PointNav benchmark task in simulated spaces, and achieved a 94% success rate.
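Visual odometry can be pictured as dead reckoning: at each step a learned model estimates how far the agent moved and turned since the previous frame, and those estimates are chained together so the agent keeps track of where the PointNav goal lies relative to itself, with no GPS or compass. The Python sketch below shows only that bookkeeping, under stated assumptions. It is not Meta's implementation; the names `Pose2D`, `integrate_step` and `goal_in_agent_frame` are hypothetical; the per-step motion estimates that a visual odometry network would predict from consecutive frames are passed in by hand; and the frame convention (x forward, y to the left, counter-clockwise heading) is our own choice.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


def wrap_angle(angle: float) -> float:
    """Wrap an angle in radians into the range [-pi, pi)."""
    return (angle + math.pi) % (2 * math.pi) - math.pi


@dataclass
class Pose2D:
    """Hypothetical running pose estimate, expressed in the episode's start frame."""
    x: float = 0.0        # metres along the starting forward direction
    y: float = 0.0        # metres to the left of the starting position
    heading: float = 0.0  # radians, counter-clockwise from the starting heading


def integrate_step(pose: Pose2D, dx: float, dy: float, dtheta: float) -> Pose2D:
    """Compose one egomotion estimate onto the running pose (dead reckoning).

    dx, dy, dtheta are the agent's motion since the last frame, expressed in the
    agent's own frame. In a real system they would come from a visual odometry
    model (assumed here, not implemented).
    """
    cos_h, sin_h = math.cos(pose.heading), math.sin(pose.heading)
    # Rotate the local displacement into the start frame, then accumulate it.
    new_x = pose.x + cos_h * dx - sin_h * dy
    new_y = pose.y + sin_h * dx + cos_h * dy
    return Pose2D(new_x, new_y, wrap_angle(pose.heading + dtheta))


def goal_in_agent_frame(pose: Pose2D, goal_x: float, goal_y: float) -> tuple[float, float]:
    """Return (distance, bearing) from the estimated pose to a goal given in the start frame.

    A positive bearing means the goal is to the agent's left.
    """
    gx, gy = goal_x - pose.x, goal_y - pose.y
    distance = math.hypot(gx, gy)
    bearing = wrap_angle(math.atan2(gy, gx) - pose.heading)
    return distance, bearing


if __name__ == "__main__":
    # Goal 2 m straight ahead of the start; the agent takes four 0.25 m steps,
    # then turns 90 degrees to the left.
    pose = Pose2D()
    for _ in range(4):
        pose = integrate_step(pose, 0.25, 0.0, 0.0)
    print(goal_in_agent_frame(pose, 2.0, 0.0))  # about 1 m away, straight ahead
    pose = integrate_step(pose, 0.0, 0.0, math.pi / 2)
    print(goal_in_agent_frame(pose, 2.0, 0.0))  # about 1 m away, now to the right
```

Because every step composes a new estimate onto the previous one, small errors in the predicted motion accumulate over an episode, which is why the accuracy of the odometry model matters so much when no GPS or compass signal is available.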

Meta explained: "Although our method does not yet solve every scenario in the dataset, this research provides initial evidence that navigating real-world environments does not necessarily require explicit mapping."

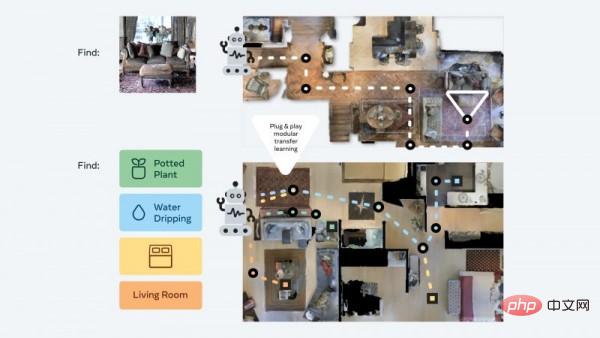

To further improve map-free navigation training, Meta built a training dataset called Habitat-Web, which contains more than 100,000 human demonstrations of object-goal navigation. Because the Habitat simulator runs in a web browser, it connects smoothly to Amazon Mechanical Turk, letting users safely teleoperate virtual robots remotely. Meta said the resulting data is used as training material to help AI agents achieve "state-of-the-art results." Scanning a room to understand its overall layout and checking corners for obstacles are examples of efficient object-search behaviors that AI can learn from humans.

In addition, the Meta AI team developed a "plug-and-play" modular approach that helps robots generalize across a variety of semantic navigation tasks and goal modalities through a unique "zero-shot experience learning framework". With it, AI agents can acquire basic navigation skills without resource-intensive maps or training, and can perform different tasks in a 3D environment without further adjustment.

Meta explained that during training these agents repeatedly search for image goals: they receive a photo taken at a random location in the environment and must navigate autonomously to find where it was taken. Meta's researchers said, "Our method needs 12.5 times less training data, and its success rate is 14% higher than the latest transfer learning techniques."

Constellation Research analyst Holger Mueller said in an interview that Meta's latest work could play a key role in its metaverse plans. He believes that if virtual worlds become the norm, AI will have to understand these new spaces, and do so without excessive cost.

Mueller added: "AI's ability to understand the physical world needs to be expanded through software-based methods. Meta is taking this path and has made progress in embodied AI, developing software that can understand its surroundings autonomously without requiring training. I'm excited to see the first practical applications of this."

Such real-world use cases may not be far off. Meta said its next step is to extend these results from navigation to mobile manipulation, developing AI agents that can perform specific tasks such as identifying a wallet and returning it to its owner.

The above is the detailed content of Meta researchers make a new AI attempt: teaching robots to navigate physically without maps or training. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1652

1652

14

14

1412

1412

52

52

1303

1303

25

25

1250

1250

29

29

1224

1224

24

24

Bytedance Cutting launches SVIP super membership: 499 yuan for continuous annual subscription, providing a variety of AI functions

Jun 28, 2024 am 03:51 AM

Bytedance Cutting launches SVIP super membership: 499 yuan for continuous annual subscription, providing a variety of AI functions

Jun 28, 2024 am 03:51 AM

This site reported on June 27 that Jianying is a video editing software developed by FaceMeng Technology, a subsidiary of ByteDance. It relies on the Douyin platform and basically produces short video content for users of the platform. It is compatible with iOS, Android, and Windows. , MacOS and other operating systems. Jianying officially announced the upgrade of its membership system and launched a new SVIP, which includes a variety of AI black technologies, such as intelligent translation, intelligent highlighting, intelligent packaging, digital human synthesis, etc. In terms of price, the monthly fee for clipping SVIP is 79 yuan, the annual fee is 599 yuan (note on this site: equivalent to 49.9 yuan per month), the continuous monthly subscription is 59 yuan per month, and the continuous annual subscription is 499 yuan per year (equivalent to 41.6 yuan per month) . In addition, the cut official also stated that in order to improve the user experience, those who have subscribed to the original VIP

Context-augmented AI coding assistant using Rag and Sem-Rag

Jun 10, 2024 am 11:08 AM

Context-augmented AI coding assistant using Rag and Sem-Rag

Jun 10, 2024 am 11:08 AM

Improve developer productivity, efficiency, and accuracy by incorporating retrieval-enhanced generation and semantic memory into AI coding assistants. Translated from EnhancingAICodingAssistantswithContextUsingRAGandSEM-RAG, author JanakiramMSV. While basic AI programming assistants are naturally helpful, they often fail to provide the most relevant and correct code suggestions because they rely on a general understanding of the software language and the most common patterns of writing software. The code generated by these coding assistants is suitable for solving the problems they are responsible for solving, but often does not conform to the coding standards, conventions and styles of the individual teams. This often results in suggestions that need to be modified or refined in order for the code to be accepted into the application

Can fine-tuning really allow LLM to learn new things: introducing new knowledge may make the model produce more hallucinations

Jun 11, 2024 pm 03:57 PM

Can fine-tuning really allow LLM to learn new things: introducing new knowledge may make the model produce more hallucinations

Jun 11, 2024 pm 03:57 PM

Large Language Models (LLMs) are trained on huge text databases, where they acquire large amounts of real-world knowledge. This knowledge is embedded into their parameters and can then be used when needed. The knowledge of these models is "reified" at the end of training. At the end of pre-training, the model actually stops learning. Align or fine-tune the model to learn how to leverage this knowledge and respond more naturally to user questions. But sometimes model knowledge is not enough, and although the model can access external content through RAG, it is considered beneficial to adapt the model to new domains through fine-tuning. This fine-tuning is performed using input from human annotators or other LLM creations, where the model encounters additional real-world knowledge and integrates it

The first open source model to surpass GPT4o level! Llama 3.1 leaked: 405 billion parameters, download links and model cards are available

Jul 23, 2024 pm 08:51 PM

The first open source model to surpass GPT4o level! Llama 3.1 leaked: 405 billion parameters, download links and model cards are available

Jul 23, 2024 pm 08:51 PM

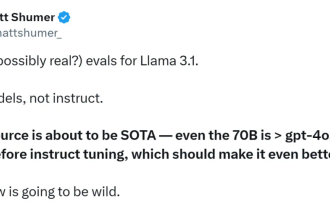

Get your GPU ready! Llama3.1 finally appeared, but the source is not Meta official. Today, the leaked news of the new Llama large model went viral on Reddit. In addition to the basic model, it also includes benchmark results of 8B, 70B and the maximum parameter of 405B. The figure below shows the comparison results of each version of Llama3.1 with OpenAIGPT-4o and Llama38B/70B. It can be seen that even the 70B version exceeds GPT-4o on multiple benchmarks. Image source: https://x.com/mattshumer_/status/1815444612414087294 Obviously, version 3.1 of 8B and 70

New affordable Meta Quest 3S VR headset appears on FCC, suggesting imminent launch

Sep 04, 2024 am 06:51 AM

New affordable Meta Quest 3S VR headset appears on FCC, suggesting imminent launch

Sep 04, 2024 am 06:51 AM

The Meta Connect 2024event is set for September 25 to 26, and in this event, the company is expected to unveil a new affordable virtual reality headset. Rumored to be the Meta Quest 3S, the VR headset has seemingly appeared on FCC listing. This sugge

The strongest model Llama 3.1 405B is officially released, Zuckerberg: Open source leads a new era

Jul 24, 2024 pm 08:23 PM

The strongest model Llama 3.1 405B is officially released, Zuckerberg: Open source leads a new era

Jul 24, 2024 pm 08:23 PM

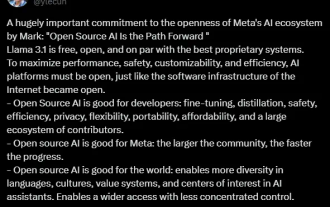

Just now, the long-awaited Llama 3.1 has been officially released! Meta officially issued a voice that "open source leads a new era." In the official blog, Meta said: "Until today, open source large language models have mostly lagged behind closed models in terms of functionality and performance. Now, we are ushering in a new era led by open source. We publicly released MetaLlama3.1405B, which we believe It is the largest and most powerful open source basic model in the world. To date, the total downloads of all Llama versions have exceeded 300 million times, and we have just begun.” Meta founder and CEO Zuckerberg also wrote an article. Long article "OpenSourceAIIsthePathForward",

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

Editor |ScienceAI Question Answering (QA) data set plays a vital role in promoting natural language processing (NLP) research. High-quality QA data sets can not only be used to fine-tune models, but also effectively evaluate the capabilities of large language models (LLM), especially the ability to understand and reason about scientific knowledge. Although there are currently many scientific QA data sets covering medicine, chemistry, biology and other fields, these data sets still have some shortcomings. First, the data form is relatively simple, most of which are multiple-choice questions. They are easy to evaluate, but limit the model's answer selection range and cannot fully test the model's ability to answer scientific questions. In contrast, open-ended Q&A

Analyst discusses launch pricing for rumoured Meta Quest 3S VR headset

Aug 27, 2024 pm 09:35 PM

Analyst discusses launch pricing for rumoured Meta Quest 3S VR headset

Aug 27, 2024 pm 09:35 PM

Over a year has now passed from Meta's initial release of the Quest 3 (curr. $499.99 on Amazon). Since then, Apple has shipped the considerably more expensive Vision Pro, while Byte Dance has now unveiled the Pico 4 Ultra in China. However, there is