How should test-time training be defined? Sequential inference and domain-adaptive clustering

Domain adaptation is an important approach to transfer learning. Most current domain adaptation methods train on source-domain and target-domain data simultaneously. When the source-domain data is unavailable and the target-domain data is only partially visible, test-time training becomes a new form of domain adaptation. Current research on Test-Time Training (TTT) widely uses self-supervised learning, contrastive learning, self-training and related methods. However, how TTT should be defined in realistic settings is often overlooked, so different methods are not directly comparable.

Recently, South China University of Technology, an A*STAR team and Pengcheng Laboratory jointly proposed a systematic classification criterion for TTT problems. Existing methods are categorized by whether they support sequential inference and whether they require modifying the source-domain training objective. The work also proposes a method based on anchored clustering of target-domain data, which achieves the highest classification accuracy under each TTT category. The paper points to a sound direction for subsequent TTT research and helps avoid the confused experimental settings that make results incomparable. It has been accepted at NeurIPS 2022.

- Paper: https://arxiv.org/abs/2206.02721

- Code: https://github.com/Gorilla-Lab-SCUT/TTAC

The success of deep learning is mainly due to large amounts of annotated data and the assumption that the training set and the test set are independent and identically distributed. In general, when a model must be trained on synthetic data and then tested on real data, this assumption no longer holds, a situation known as domain shift. Domain Adaptation (DA) was developed to alleviate this problem. Existing DA works either require access to data from both the source and target domains during training, or train on multiple domains simultaneously. The former requires the model to always have access to source-domain data during adaptation, while the latter requires more expensive computation. To reduce the dependence on source-domain data, which may be inaccessible because of privacy concerns or storage overhead, Source-Free Domain Adaptation (SFDA) tackles domain adaptation without access to source data. The authors found that SFDA needs to train on the entire target dataset for multiple epochs to converge, so it cannot cope with streaming data that requires timely inference predictions. This more realistic setting, which requires adapting to streaming data and making predictions promptly, is called Test-Time Training (TTT) or Test-Time Adaptation (TTA).

The authors noticed that the community's definition of TTT is inconsistent, which leads to unfair comparisons. The paper classifies existing TTT methods by two key factors:

- Whether adaptation is one-pass. When data arrives as a stream and predictions must be made promptly on the data currently arriving, the protocol is called One-Pass Adaptation; protocols that do not meet this setting are called Multi-Pass Adaptation, in which the model may be updated on the entire test set for multiple epochs before making inference predictions from beginning to end.

- Whether the source-domain training loss must be modified, for example by introducing an additional self-supervised branch to make TTT more effective.

The goal of this paper is to solve the most realistic and challenging TTT protocol: one-pass adaptation without modifying the training loss. This setting is similar to the TTA proposed by TENT [1], but it additionally allows the use of lightweight source-domain information, such as feature statistics. Given that the goal of TTT is to adapt efficiently at test time, this assumption is computationally efficient and greatly improves the performance of TTT. The authors name this new TTT protocol sequential Test-Time Training (sTTT).
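The difference between the two adaptation protocols can be illustrated with a minimal Python sketch. The function names, the `CountingModel` toy class, and the choice to adapt on a batch just before predicting it are all assumptions made for illustration; they are not taken from the paper's code.

```python
def one_pass_adaptation(stream, adapt, predict):
    """One-pass protocol: every batch is visited exactly once, and its
    prediction is emitted before any later batch has been seen."""
    outputs = []
    for batch in stream:
        adapt(batch)                    # update using only data seen so far
        outputs.append(predict(batch))  # prediction is final at this point
    return outputs


def multi_pass_adaptation(test_set, adapt, predict, epochs):
    """Multi-pass protocol: adapt on the whole test set for several epochs,
    then predict everything from beginning to end."""
    for _ in range(epochs):
        for batch in test_set:
            adapt(batch)
    return [predict(batch) for batch in test_set]


class CountingModel:
    """Hypothetical stand-in for a network: it only counts its updates."""

    def __init__(self):
        self.updates = 0

    def adapt(self, batch):
        self.updates += 1

    def predict(self, batch):
        # Report how many updates the model had received at prediction time.
        return self.updates
```

With the toy model, the one-pass predictions depend only on the batches that have already arrived, whereas every multi-pass prediction benefits from updates on the full test set, which is why results from the two protocols should not be compared directly.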

Beyond this classification of TTT methods, the paper proposes two techniques that make sTTT more effective and accurate:

- The paper proposes the Test-Time Anchored Clustering (TTAC) method.

- To reduce the impact of erroneous pseudo-labels on cluster updates, the paper filters pseudo-labels based on the stability and confidence of the network's predictions on each sample.

2. Method introduction

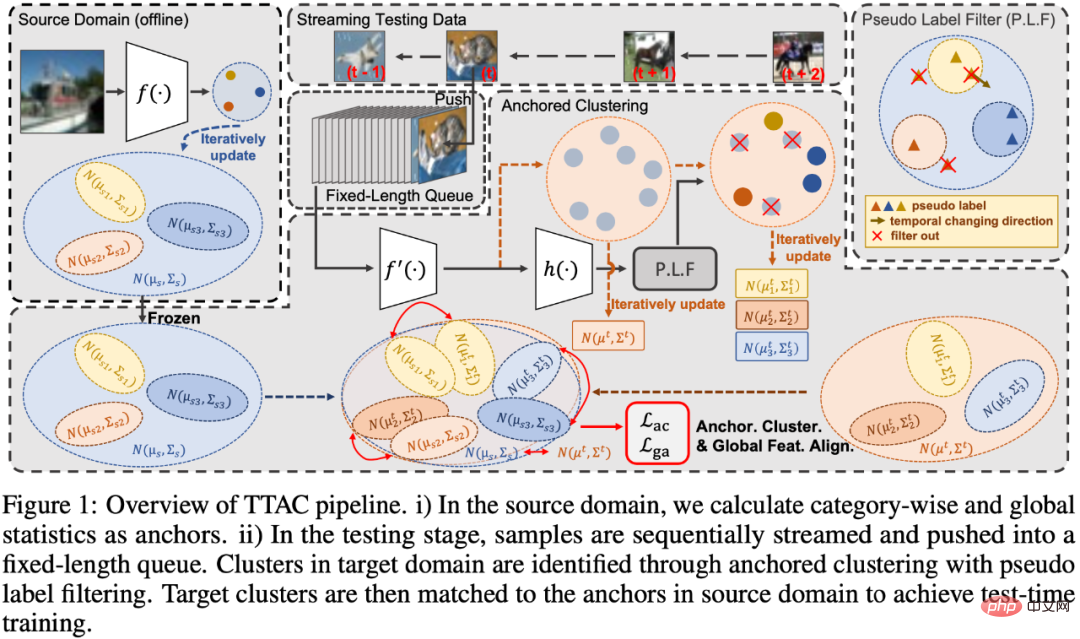

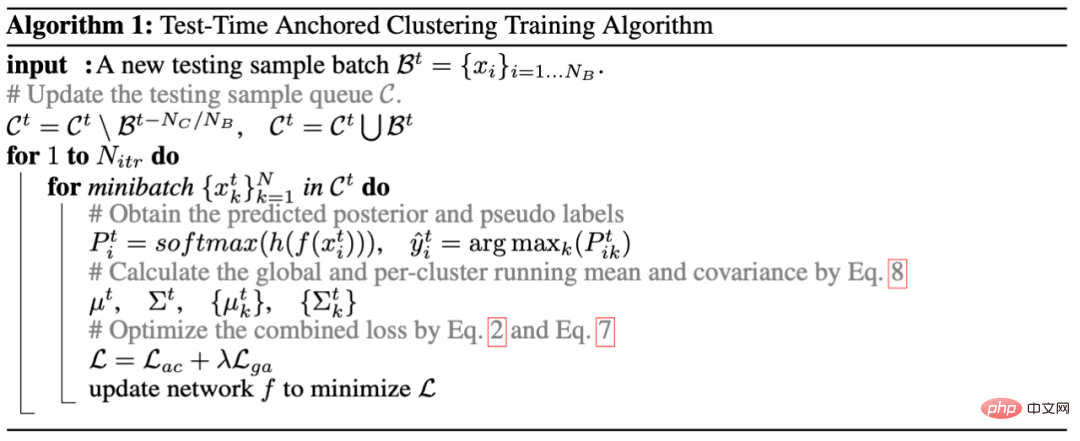

The paper explains the proposed method in four parts: 1) the anchored clustering module for test-time training (TTT), shown as the Anchored Clustering part in Figure 1; 2) strategies for filtering pseudo-labels, shown as the Pseudo Label Filter part in Figure 1; 3) global feature alignment, where, unlike TTT [2], which uses the L2 distance to measure the distance between two distributions, the authors use KL divergence to measure the distance between the two global feature distributions; 4) an effective iterative update of feature statistics during TTT. Finally, a fifth section gives the pseudocode of the entire algorithm.

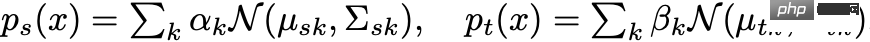

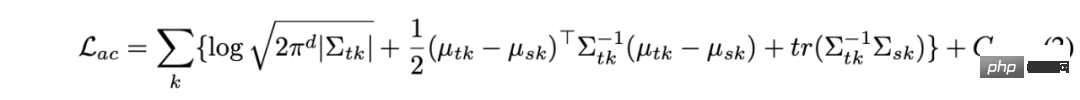

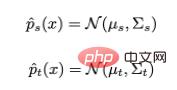

In the first part, anchored clustering, the authors first model the target-domain features with a mixture of Gaussians, where each Gaussian component represents one discovered cluster. They then use the distribution of each category in the source domain as an anchor for matching the distributions in the target domain. In this way, test features form clusters and, at the same time, those clusters are associated with source-domain categories, which achieves generalization to the target domain. In summary, the features of the source domain and the target domain are each modeled according to category information:

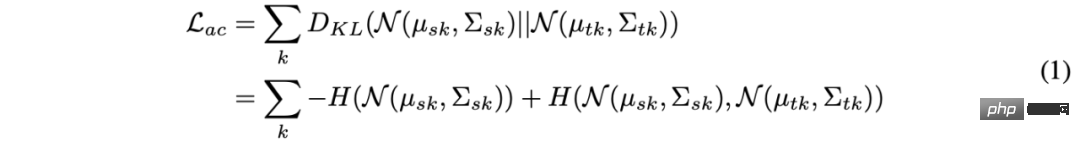

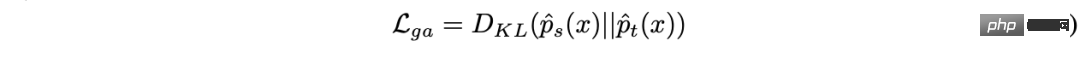
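As a rough illustration of modeling features class by class, the NumPy sketch below fits one Gaussian (mean and covariance) per category. The function name `class_gaussians`, the `eps` regularizer, and the use of hard labels are assumptions for this example; on the target domain the labels would be pseudo-labels rather than ground truth.

```python
import numpy as np


def class_gaussians(features, labels, num_classes, eps=1e-3):
    """Fit one Gaussian per class.

    features: (N, d) array of feature vectors.
    labels:   (N,) integer class ids (pseudo-labels on the target domain).
    Returns means of shape (K, d) and covariances of shape (K, d, d).
    """
    features = np.asarray(features, dtype=float)
    labels = np.asarray(labels)
    d = features.shape[1]
    means = np.zeros((num_classes, d))
    # Classes with no samples keep a zero mean and identity covariance.
    covs = np.tile(np.eye(d), (num_classes, 1, 1))
    for k in range(num_classes):
        fk = features[labels == k]
        if len(fk) == 0:
            continue
        means[k] = fk.mean(axis=0)
        centered = fk - means[k]
        # Biased covariance estimate plus a small ridge for invertibility.
        covs[k] = centered.T @ centered / len(fk) + eps * np.eye(d)
    return means, covs
```

The ridge term is a common numerical safeguard so that the covariance can be inverted later; whether the original method uses one is not stated in this article.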

The distance between the two Gaussian mixtures is then measured by KL divergence, and the features of the two domains are matched by reducing this divergence. However, the KL divergence between two Gaussian mixtures has no closed-form solution, which prevents the use of efficient gradient-based optimization. In this paper, the authors allocate the same number of clusters in the source and target domains and assign each target-domain cluster to one source-domain cluster, so that the KL divergence between the mixtures becomes the sum of the KL divergences between each pair of Gaussians, as in the following formula:

The closed form solution of the above formula is:
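For reference, the pairwise objective and the standard closed form of the KL divergence between two Gaussians can be written as follows. The notation is assumed here rather than taken from the article: $\mu_{sk}, \Sigma_{sk}$ are the mean and covariance of source cluster $k$, $\mu_{tk}, \Sigma_{tk}$ those of its matched target cluster, $K$ is the number of clusters and $d$ the feature dimension. The direction of the divergence (source to target) is also an assumption.

$$
\mathcal{L}_{ac} = \sum_{k=1}^{K} D_{KL}\!\left(\mathcal{N}(\mu_{sk}, \Sigma_{sk}) \,\|\, \mathcal{N}(\mu_{tk}, \Sigma_{tk})\right)
$$

$$
D_{KL}\!\left(\mathcal{N}(\mu_{sk}, \Sigma_{sk}) \,\|\, \mathcal{N}(\mu_{tk}, \Sigma_{tk})\right)
= \frac{1}{2}\left[\log\frac{|\Sigma_{tk}|}{|\Sigma_{sk}|} - d + \operatorname{tr}\!\left(\Sigma_{tk}^{-1}\Sigma_{sk}\right) + (\mu_{tk}-\mu_{sk})^{\top}\Sigma_{tk}^{-1}(\mu_{tk}-\mu_{sk})\right]
$$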

In Formula 2, the parameters of the source-domain clusters can be collected offline. Because only lightweight statistics are used, this does not leak private data and adds only a small computing and storage overhead. The target-domain variables depend on pseudo-labels, for which the authors design an effective and lightweight filtering strategy.
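The closed-form KL divergence between two Gaussians is straightforward to evaluate numerically. The sketch below uses NumPy and assumes the source-to-target direction; the function names `gaussian_kl` and `anchored_clustering_loss` are hypothetical and this is not the authors' implementation.

```python
import numpy as np


def gaussian_kl(mu_s, cov_s, mu_t, cov_t):
    """KL( N(mu_s, cov_s) || N(mu_t, cov_t) ) in closed form."""
    d = mu_s.shape[0]
    cov_t_inv = np.linalg.inv(cov_t)
    diff = mu_t - mu_s
    # slogdet is more stable than log(det(...)) for high-dimensional features.
    _, logdet_s = np.linalg.slogdet(cov_s)
    _, logdet_t = np.linalg.slogdet(cov_t)
    return 0.5 * (
        logdet_t - logdet_s
        - d
        + np.trace(cov_t_inv @ cov_s)
        + diff @ cov_t_inv @ diff
    )


def anchored_clustering_loss(src_means, src_covs, tgt_means, tgt_covs):
    """Sum of pairwise KL terms, pairing source cluster k with target cluster k."""
    return sum(
        gaussian_kl(src_means[k], src_covs[k], tgt_means[k], tgt_covs[k])
        for k in range(len(src_means))
    )
```

This version only evaluates the loss. To train a network with it, the same expression would need to be written in an automatic-differentiation framework so that gradients can flow back to the target-domain features.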

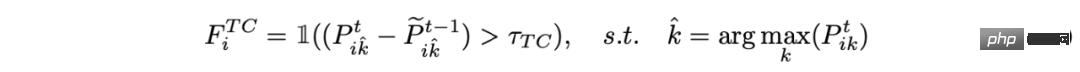

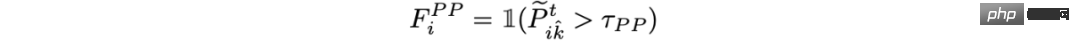

The second part, the pseudo-label filtering strategy, consists of two filters:

1) Filtering by the consistency of predictions over time:

2) Filtering based on posterior probability:

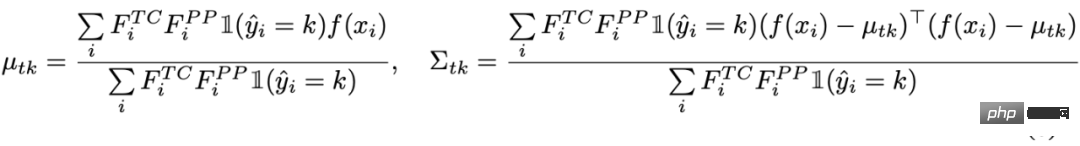

Finally, the filtered samples are used to estimate the statistics of the target-domain clusters:
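A minimal sketch of the two filters might look like the following. It assumes that prediction stability is checked by comparing the current posterior with an exponential moving average of earlier posteriors for the same sample, and that confidence is checked by thresholding the maximum posterior. The function name and both threshold values are illustrative placeholders, not values confirmed by this article.

```python
import numpy as np


def filter_pseudo_labels(probs, ema_probs, tau_stability=-0.01, tau_confidence=0.9):
    """Return pseudo-labels and a boolean mask of samples to keep.

    probs:     (N, K) current softmax posteriors.
    ema_probs: (N, K) moving average of earlier posteriors (assumed available).
    The two thresholds are hypothetical values chosen for illustration.
    """
    probs = np.asarray(probs, dtype=float)
    ema_probs = np.asarray(ema_probs, dtype=float)
    pseudo = probs.argmax(axis=1)
    idx = np.arange(len(probs))
    current = probs[idx, pseudo]
    previous = ema_probs[idx, pseudo]
    # Stability: the probability of the predicted class must not drop sharply.
    stable = (current - previous) > tau_stability
    # Confidence: the predicted class must have a high posterior.
    confident = current > tau_confidence
    return pseudo, stable & confident
```

Only the samples where the mask is true would then contribute to the per-cluster means and covariances of the target domain.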

Part 3: In anchored clustering, the samples that are filtered out do not contribute to the target-domain estimates. The authors therefore also perform global feature alignment on all test samples, in the same way as for the clusters in anchored clustering. Here all samples are treated as one global cluster, whose statistics are defined in the source domain and the target domain respectively.

The global feature distributions are then aligned by minimizing the KL divergence:

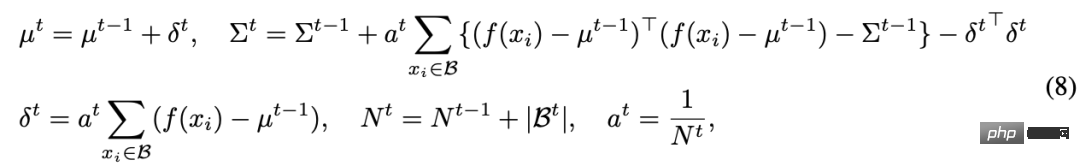

Part 4: The three parts above all introduce domain alignment methods, but during TTT it is not straightforward to estimate the target-domain distribution, because the data of the entire target domain cannot be observed. In prior work, TTT [2] uses a feature queue that stores some past samples and computes a local distribution to approximate the overall one. This not only incurs memory overhead but also forces a trade-off between accuracy and memory. In this paper, the authors propose an iterative update of the statistics to reduce the memory overhead. The iterative update formula is as follows:
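To illustrate how statistics can be updated batch by batch without storing past features, the sketch below uses a standard streaming estimator that merges each batch's mean and scatter matrix into running totals. It is a generic technique swapped in for illustration; the paper's exact update rule may differ, and the class name `RunningGaussian` is hypothetical.

```python
import numpy as np


class RunningGaussian:
    """Streaming mean and covariance that never stores past samples."""

    def __init__(self, dim):
        self.n = 0
        self.mean = np.zeros(dim)
        self.m2 = np.zeros((dim, dim))  # running sum of outer products of deviations

    def update(self, batch):
        batch = np.asarray(batch, dtype=float)
        n_b = batch.shape[0]
        if n_b == 0:
            return
        mean_b = batch.mean(axis=0)
        centered = batch - mean_b
        m2_b = centered.T @ centered
        delta = mean_b - self.mean
        n = self.n + n_b
        # Merge the batch scatter with the running scatter; the last term
        # corrects for the shift between the old mean and the batch mean.
        self.m2 += m2_b + np.outer(delta, delta) * self.n * n_b / n
        self.mean = self.mean + delta * n_b / n
        self.n = n

    @property
    def cov(self):
        # Biased (divide-by-n) covariance estimate.
        return self.m2 / max(self.n, 1)
```

Memory use is constant in the number of test samples: only the count, a `d`-dimensional mean and a `d x d` matrix are kept, in contrast to a feature queue whose size trades off against accuracy.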

In general, the entire algorithm is as shown in Algorithm 1:

3. Experimental results

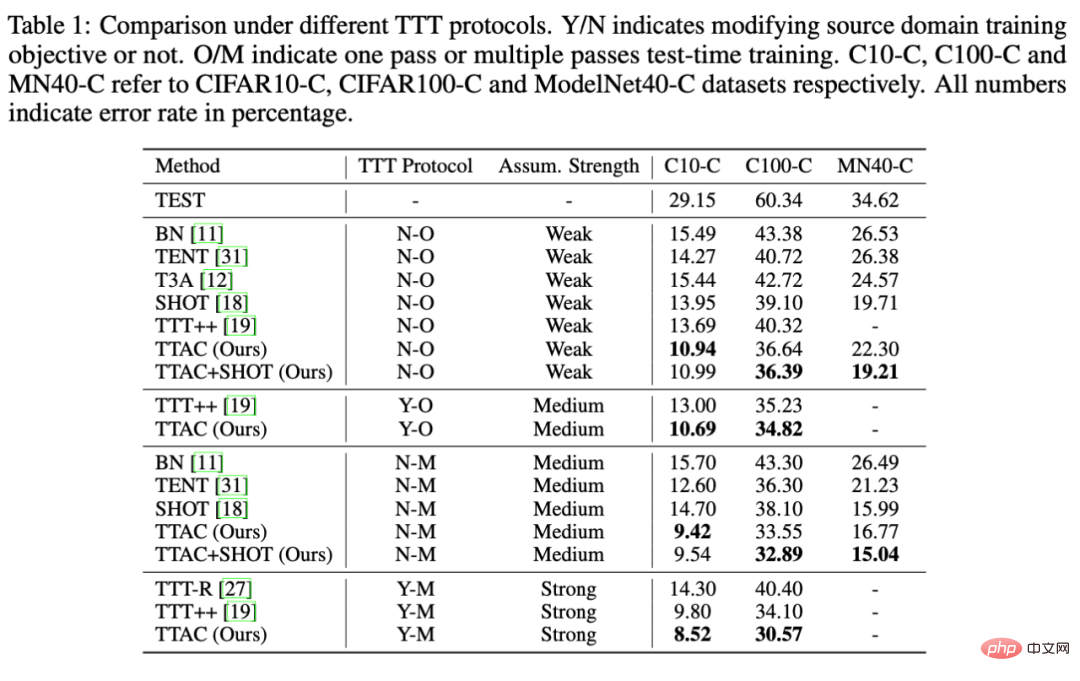

As mentioned in the introduction, the authors place great importance on comparing methods fairly under the different TTT strategies. All TTT methods are classified by two key factors: 1) whether the protocol is one-pass adaptation, and 2) whether the source-domain training loss is modified. Y/N denotes that the source-domain training loss does or does not need to be modified, and O/M denotes one-pass or multi-pass adaptation. In addition, the authors conduct extensive comparative experiments and further analysis on six benchmark datasets.

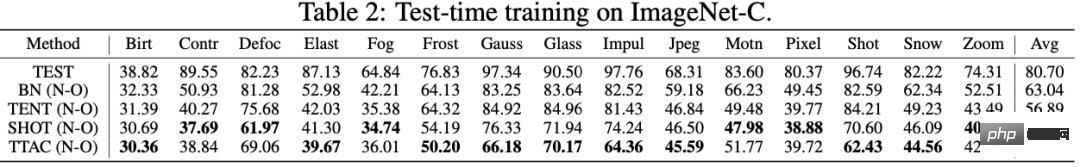

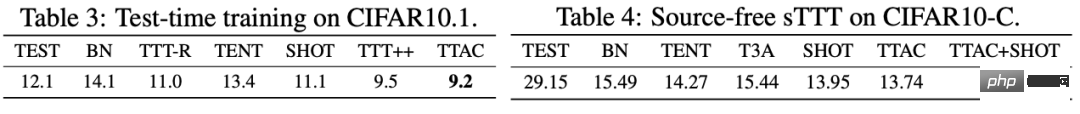

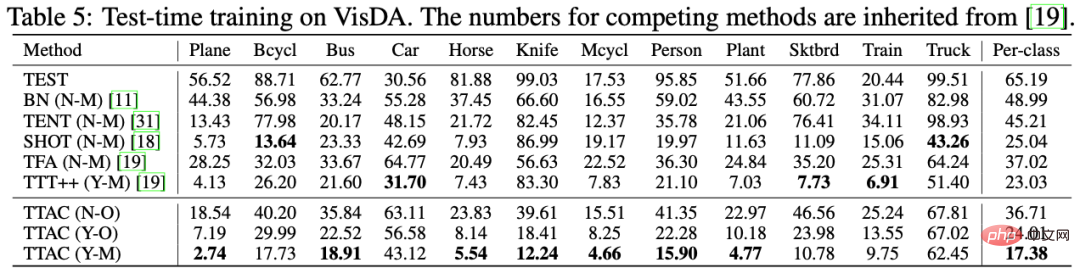

As shown in Table 1, TTT [2] appears under both the N-O and Y-O protocols because it has an additional self-supervised branch: under N-O the loss of the self-supervised branch is not added, while under Y-O it is used as usual. TTAC under Y-O also uses the same self-supervised branch as TTT [2]. As the table shows, TTAC achieves the best results under all TTT protocols and on all datasets; on both CIFAR10-C and CIFAR100-C, TTAC improves by more than 3%. Tables 2 to 5 show the results on ImageNet-C, CIFAR10.1 and VisDA respectively, where TTAC also achieves the best results.

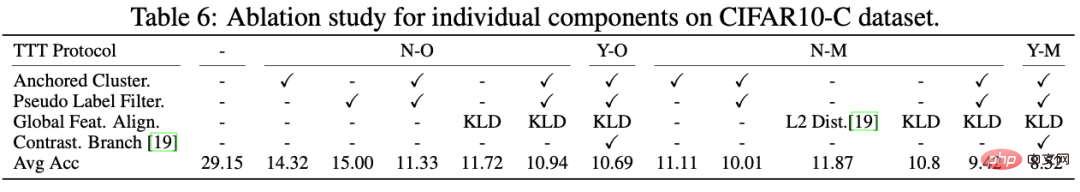

In addition, the authors conduct rigorous ablation experiments under multiple TTT protocols, which clearly show the role of each component, as shown in Table 6. First, the comparison between L2 Dist and KLD shows that measuring the two distributions with KL divergence works better. Second, using Anchored Clustering or pseudo-label supervision alone yields an improvement of only 14%, whereas combining Anchored Clustering with the Pseudo Label Filter gives a significant improvement, from 29.15% to 11.33%. This shows that each component is necessary and that they combine effectively.

Finally, the authors analyze TTAC in depth along five dimensions at the end of the paper: cumulative performance under sTTT (N-O), t-SNE visualization of TTAC features, source-domain-independent TTT analysis, analysis of test sample queues and update rounds, and computational cost measured in wall-clock time. More interesting proofs and analyses are given in the appendix of the paper.

4. Summary

This article only briefly introduces the main contributions of the TTAC work: the classification and comparison of existing TTT methods, the proposed method, and experiments under each TTT protocol category. More detailed discussion and analysis can be found in the paper and its appendix. We hope this work can serve as a fair benchmark for TTT methods and that future research will compare methods within their respective protocols.
