Completely crushing AdamW! Google's new optimizer uses less memory and trains more efficiently. Netizens: training GPT-2 is really fast


An optimizer is an optimization algorithm and plays a key role in neural network training. In recent years, researchers have introduced a large number of hand-designed optimizers, most of them adaptive. Adam and Adafactor still dominate neural network training, especially in the language, vision, and multimodal domains.

Besides hand-designing optimizers, another direction is to have programs discover optimization algorithms automatically. Learning to optimize (L2O), proposed earlier, discovers optimizers by training neural networks. However, these black-box optimizers are usually trained on a limited number of small tasks and have difficulty generalizing to large models.

Others have applied reinforcement learning or Monte Carlo sampling to discover new optimizers. To simplify the search, however, these methods usually restrict the search space, which limits the optimizers they can discover. As a result, they have not yet reached SOTA level.

Also worth mentioning is the recent AutoML-Zero, which attempts to search every component of the machine learning pipeline while evaluating on tasks, an approach that is very useful for discovering optimizers.

In this work, researchers from Google and UCLA propose a method to discover optimization algorithms for deep neural network training through program search, and use it to discover the Lion (EvoLved Sign Momentum) optimizer. Achieving this goal faces two challenges: first, finding high-quality algorithms in an infinite and sparse program space, and second, selecting algorithms that generalize from small tasks to larger, SOTA tasks. To address these challenges, the research employs a range of techniques, including evolutionary search with warm-start and restart, abstract execution, funnel selection, and program simplification.

- Paper address: https://arxiv.org/pdf/2302.06675.pdf

- Project address: https://github.com/google/automl/tree/master/lion

Xiangning Chen, the first author of the paper, said: our symbolic program search found an effective optimizer that only tracks momentum, Lion. Compared with Adam, it achieves 88.3% zero-shot and 91.1% fine-tuned ImageNet accuracy, as well as up to 5x (on ViT), 2.3x (on diffusion models), and 2x (on LM) training efficiency.

In addition, Lion reduces the pre-training compute on JFT by up to 5x, improves the training efficiency of diffusion models by 2.3x while achieving better FID scores, and provides similar or better performance in language modeling with up to 2x compute savings.

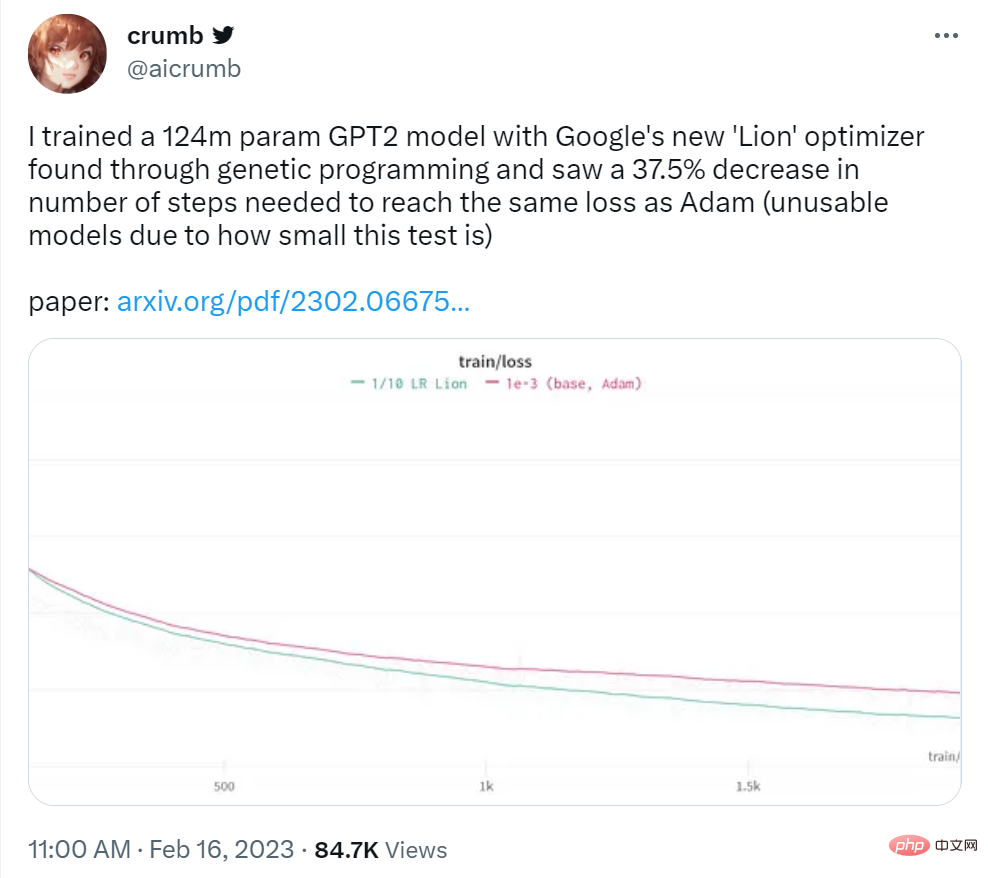

Twitter user crumb said that they used Google's Lion optimizer to train a 124M-parameter GPT-2 model and found that the number of steps required to reach the same loss as Adam was reduced by 37.5%.

Source: https://twitter.com/aicrumb/status/1626053855329898496

Symbolic Discovery of Algorithms

This paper represents algorithms symbolically, as programs, which has the following advantages: (1) it matches the fact that an algorithm must ultimately be implemented as a program in order to be executed; (2) compared with parametric models such as neural networks, symbolic representations such as programs are easier to analyze, understand, and transfer to new tasks; (3) program length can be used to estimate the complexity of different programs, making it easier to choose a simpler, often more general program. This work focuses on optimizers for deep neural network training, but the approach is generally applicable to other tasks.

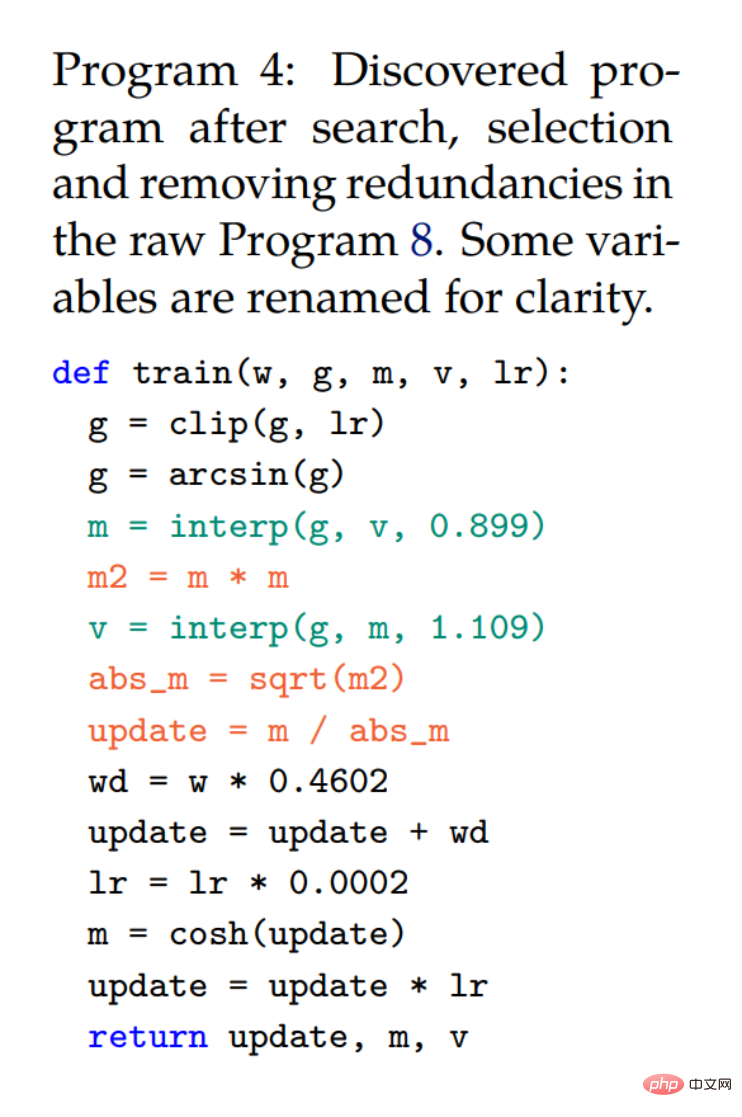

In the figure, Program 2 is a simplified code snippet that uses the same signature as AdamW, which ensures that the discovered algorithms have a smaller or equal memory footprint; Program 3 gives an example representation of AdamW.

The research adopts the following techniques to address the challenges posed by the infinite and sparse search space. First, regularized evolution is applied because it is simple, scalable, and successful in many AutoML search tasks. Second, redundancies in the program space are removed. Finally, to reduce the search cost, the study uses proxy tasks with a smaller model size, fewer training examples, and fewer training steps than the target task.
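As a rough illustration of the evolutionary search described above, the sketch below runs a toy regularized (aging) evolution loop: sample a few individuals, mutate the fittest of the sample, and retire the oldest member of the population. Everything in it is an assumption for illustration: the "program" is just a list of numbers, and `fitness`, `mutate`, and the population settings are hypothetical stand-ins, not the paper's search space or configuration.

```python
import collections
import random


def fitness(candidate):
    # Hypothetical objective: higher is better, with a maximum of 0 at all zeros.
    return -sum(x * x for x in candidate)


def mutate(candidate, rng):
    # Perturb one random position (a stand-in for editing one program statement).
    child = list(candidate)
    i = rng.randrange(len(child))
    child[i] += rng.uniform(-0.5, 0.5)
    return child


def regularized_evolution(cycles=500, population_size=20, tournament_size=5, seed=0):
    rng = random.Random(seed)
    population = collections.deque()
    for _ in range(population_size):
        individual = [rng.uniform(-3.0, 3.0) for _ in range(4)]
        population.append((individual, fitness(individual)))
    initial_best = max(score for _, score in population)
    best = max(population, key=lambda pair: pair[1])
    for _ in range(cycles):
        # Tournament selection: the fittest of a random sample becomes the parent.
        sample = rng.sample(list(population), tournament_size)
        parent = max(sample, key=lambda pair: pair[1])
        child = mutate(parent[0], rng)
        entry = (child, fitness(child))
        population.append(entry)
        # Aging: always remove the oldest individual, not the worst one.
        population.popleft()
        if entry[1] > best[1]:
            best = entry
    return initial_best, best[1]


initial_best, final_best = regularized_evolution()
```

Removing the oldest individual rather than the worst one is what distinguishes this scheme from plain tournament evolution: a candidate survives only if its descendants keep doing well.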

Left: Shown are the means and standard errors of five evolutionary search experiments. Right: Both the percentage of redundant statements and the cache hit rate increase as the search progresses.

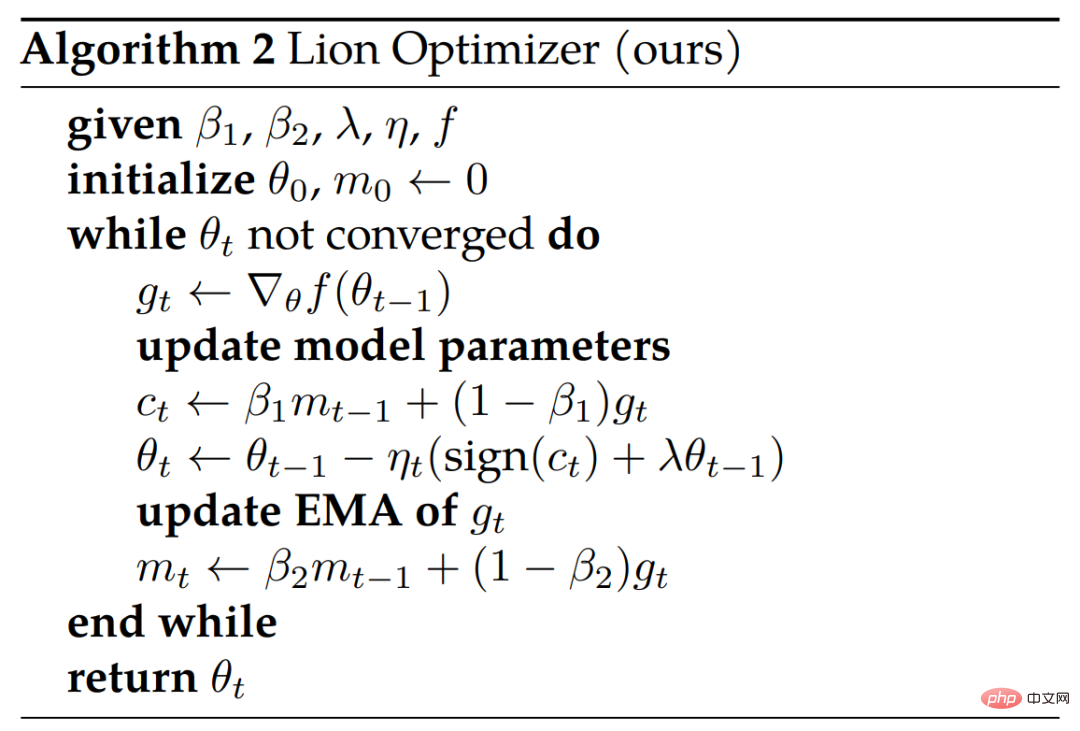

Derivation and analysis of Lion

The researchers state that the Lion optimizer combines simplicity, high memory efficiency, and strong performance in search and meta-validation.

Derivation

The search and funnel selection process led to Program 4, which is obtained by automatically deleting redundant statements from the original Program 8 (in the appendix). The researchers simplified it further to obtain the final algorithm (Lion) in Program 1. Several unnecessary elements were removed from Program 4 during simplification: the cosh function was removed because m is reassigned in the next iteration (line 3); the statements using arcsin and clip were also removed because the researchers observed no loss of quality without them; and the three statements marked in red were converted into a single sign function.

Although both m and v are used in Program 4, v only changes how the momentum is updated (two interpolation functions with constants ∼0.9 and ∼1.1 are equivalent to a single one with constant ∼0.99) and does not need to be tracked separately. Note that bias correction is no longer needed, since it does not change the direction of the update.
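The equivalence of the two interpolations can be checked numerically. The sketch below assumes `interp(x, y, a) = (1 - a) * x + a * y` and assumes the two interpolations are chained as `m = interp(g, v, 0.9)` followed by `v = interp(g, m, 1.1)`; both the definition and the chaining order are assumptions made for illustration, using the rounded constants from the text.

```python
def interp(x, y, a):
    # Assumed definition: linear interpolation that puts weight a on y.
    return (1.0 - a) * x + a * y


def two_step(g, v):
    # Assumed chaining of the two interpolations with constants ~0.9 and ~1.1.
    m = interp(g, v, 0.9)
    return interp(g, m, 1.1)


def one_step(g, v):
    # Single interpolation with constant ~0.99.
    return interp(g, v, 0.99)


pairs = [(0.3, 1.0), (-2.0, 0.5), (4.0, -1.5)]
max_gap = max(abs(two_step(g, v) - one_step(g, v)) for g, v in pairs)
```

Expanding the algebra gives the same answer: the weight on v is 1.1 × 0.9 = 0.99 and the weight on g is −0.1 + 1.1 × 0.1 = 0.01, so `max_gap` is zero up to floating-point rounding.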

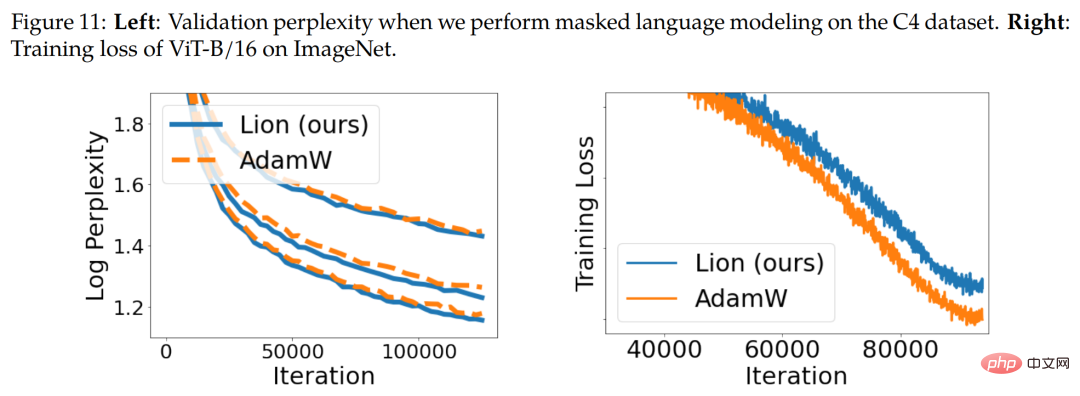

Sign update and regularization. Through the sign operation, the Lion algorithm produces updates with uniform magnitude across all dimensions, which differs in principle from various adaptive optimizers. Intuitively, the sign operation adds noise to the updates, which acts as a form of regularization and aids generalization. Figure 11 (right) shows one piece of evidence.
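A minimal sketch of one Lion step on plain Python lists may help make the uniform-magnitude update concrete: interpolate gradient and momentum, take the sign, add decoupled weight decay, and refresh the momentum with a second interpolation. The function name `lion_step`, the default values (`lr`, `beta1=0.9`, `beta2=0.99`, `weight_decay`), and the list-based representation are assumptions for illustration, not the reference implementation in the project repository.

```python
def sign(x):
    # Returns -1, 0, or 1.
    return (x > 0) - (x < 0)


def lion_step(weights, grads, momentum, lr=1e-4, beta1=0.9, beta2=0.99, weight_decay=0.1):
    new_weights, new_momentum = [], []
    for w, g, m in zip(weights, grads, momentum):
        # Direction: sign of an interpolation between momentum and gradient.
        direction = sign(beta1 * m + (1.0 - beta1) * g)
        # Decoupled weight decay is added to the update, then scaled by lr.
        new_weights.append(w - lr * (direction + weight_decay * w))
        # Momentum is the only optimizer state carried to the next step.
        new_momentum.append(beta2 * m + (1.0 - beta2) * g)
    return new_weights, new_momentum


# Gradients of very different size, zero initial momentum, no weight decay.
w, m = lion_step([1.0, -2.0, 0.5], [0.3, -7.0, 0.0], [0.0, 0.0, 0.0], weight_decay=0.0)
```

In this example the first two coordinates each move by exactly `lr`, even though their gradients differ by more than an order of magnitude, while the zero-gradient coordinate stays where it is.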

Momentum tracking. The default EMA factor for tracking momentum in Lion is 0.99 (β_2), compared with the 0.9 commonly used in AdamW and momentum SGD. This choice of EMA factor and interpolation lets Lion balance remembering a roughly 10x longer gradient history in the momentum against putting more weight on the current gradient in the update.
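The "10 times" figure can be read through a common rule of thumb, which is an assumption here rather than something the source states: an EMA with factor β averages over roughly 1 / (1 − β) recent steps. The helper names below are hypothetical.

```python
def ema_horizon(beta):
    # Rule-of-thumb number of past steps an EMA with factor beta averages over.
    return 1.0 / (1.0 - beta)


def ema_weights(beta, steps):
    # Weight the EMA places on the gradient from k steps ago, for k = 0..steps-1.
    return [(1.0 - beta) * beta ** k for k in range(steps)]


ratio = ema_horizon(0.99) / ema_horizon(0.9)
total_weight = sum(ema_weights(0.99, 5000))
```

Under this rule, 0.99 corresponds to a horizon of about 100 steps and 0.9 to about 10 steps, so `ratio` comes out at approximately 10.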

Hyperparameter and batch size selection. Compared to AdamW and Adafactor, Lion is simpler and has fewer hyperparameters since it does not require ϵ and factorization related parameters. Lion requires a smaller learning rate, and thus a larger decoupled weight decay, to achieve a similar effective weight decay strength (lr * λ).
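The relationship between learning rate and weight decay can be made concrete with a small helper. The numbers used (an AdamW learning rate of 1e-3 with λ = 0.1, and a Lion learning rate 10x smaller) are hypothetical values chosen only to show how lr * λ is kept fixed; `matched_weight_decay` is an illustrative name, not a library function.

```python
def matched_weight_decay(ref_lr, ref_wd, new_lr):
    """Weight decay that keeps lr * wd equal to ref_lr * ref_wd."""
    return ref_lr * ref_wd / new_lr


# Hypothetical AdamW settings and a 10x smaller Lion learning rate.
adamw_lr, adamw_wd = 1e-3, 0.1
lion_lr = adamw_lr / 10
lion_wd = matched_weight_decay(adamw_lr, adamw_wd, lion_lr)
```

With these values the matched Lion weight decay is about 1.0, ten times the AdamW value, so the effective strength lr * λ is unchanged.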

Memory and runtime advantages. Lion only saves momentum and has a smaller memory footprint than popular adaptive optimizers like AdamW, which is useful when training large models and/or working with large batch sizes. For example, AdamW requires at least 16 TPU V4 chips to train ViT-B/16 with an image resolution of 224 and a batch size of 4,096, while Lion only requires 8 (both with bfloat16 momentum).
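A back-of-the-envelope estimate of optimizer state size shows where the saving comes from. The sketch assumes AdamW keeps two state values per parameter (first and second moments) while Lion keeps one (momentum), each stored in bfloat16 (2 bytes). The parameter count of one billion is a hypothetical example, and weights, gradients, and activations are deliberately left out.

```python
BYTES_PER_BF16 = 2


def optimizer_state_bytes(num_params, states_per_param, bytes_per_value=BYTES_PER_BF16):
    # Memory for optimizer state only (not weights, gradients, or activations).
    return num_params * states_per_param * bytes_per_value


num_params = 1_000_000_000  # hypothetical model size
adamw_bytes = optimizer_state_bytes(num_params, states_per_param=2)
lion_bytes = optimizer_state_bytes(num_params, states_per_param=1)
saved_gb = (adamw_bytes - lion_bytes) / 1e9
```

Under these assumptions the optimizer state is halved, which for the hypothetical one-billion-parameter model frees 2 GB.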

Lion evaluation results

In the experiments, the researchers evaluated Lion on various benchmarks, mainly comparing it with the popular AdamW (or Adafactor when memory becomes a bottleneck).

Image Classification

The researchers ran image classification experiments covering various datasets and architectures. In addition to training from scratch on ImageNet, they also pre-trained on two larger, well-established datasets, ImageNet-21K and JFT. Image size defaults to 224.

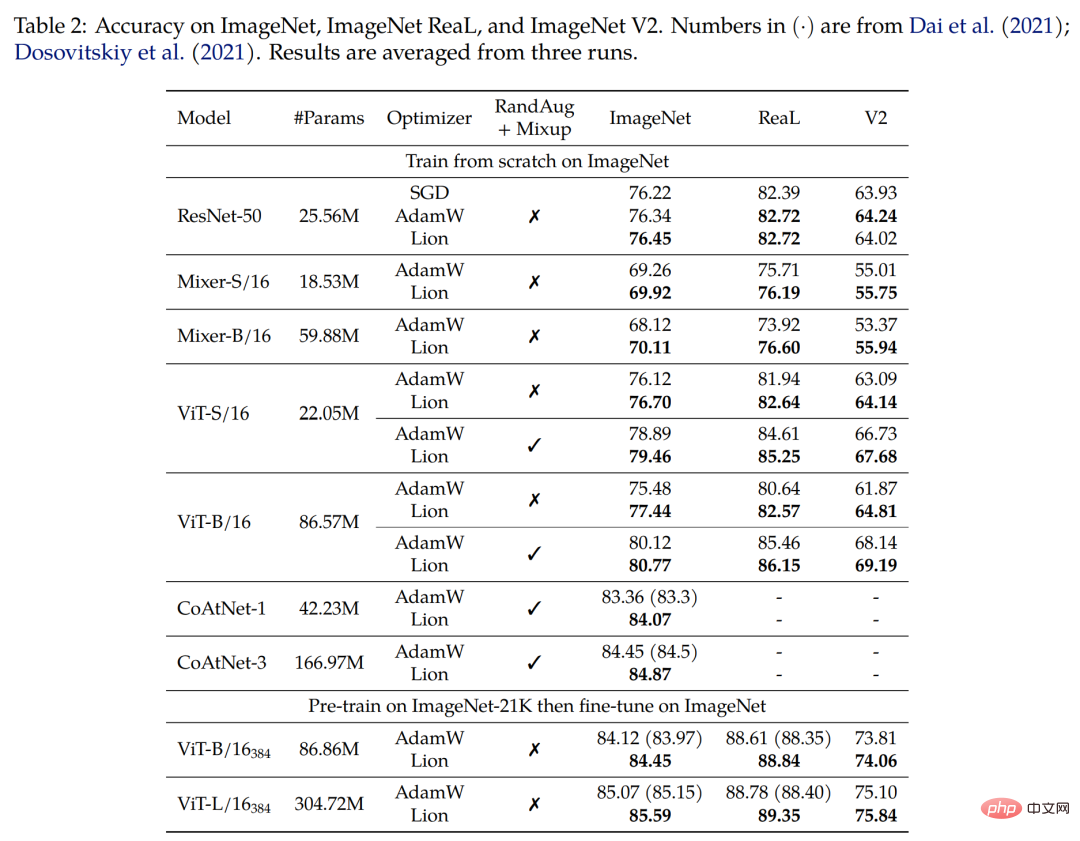

First, training from scratch on ImageNet. The researchers trained ResNet-50 for 90 epochs with a batch size of 1,024, and the other models for 300 epochs with a batch size of 4,096. As shown in Table 2 below, Lion significantly outperforms AdamW on various architectures.

Second, pre-training on ImageNet-21K. The researchers pre-trained ViT-B/16 and ViT-L/16 on ImageNet-21K for 90 epochs with a batch size of 4,096. Table 2 below shows that Lion still outperforms AdamW even when the training set is 10 times larger.

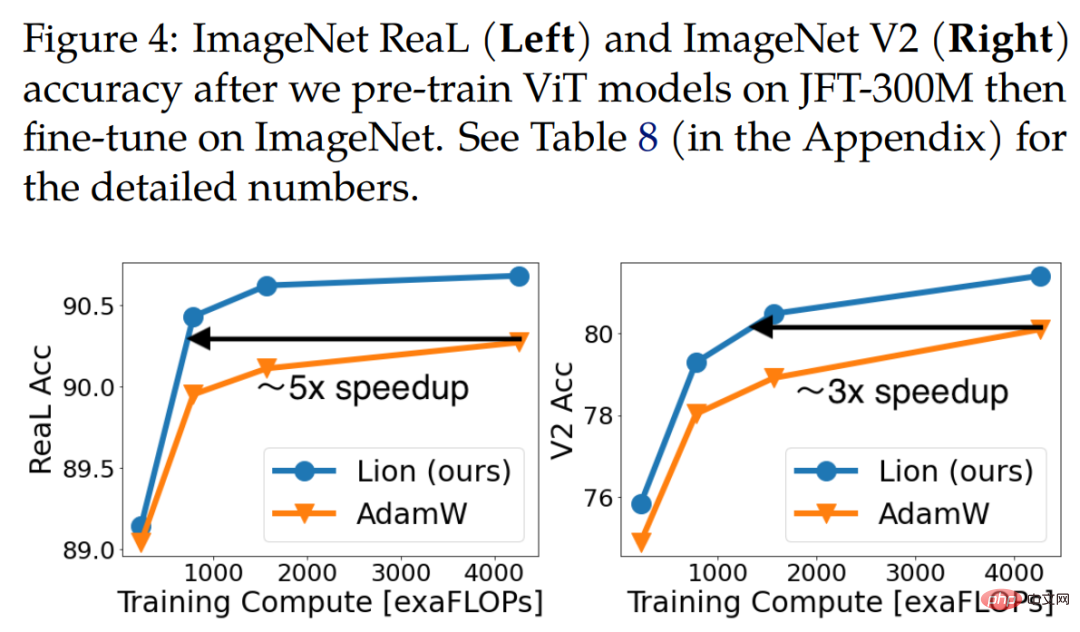

Finally, pre-training on JFT. To push the limits, the researchers conducted extensive experiments on JFT. Figure 4 below shows the accuracy of three ViT models (ViT-B/16, ViT-L/16 and ViT-H/14) under different pre-training budgets on JFT-300M. ViT-L/16 trained with Lion matches the performance of ViT-H/14 trained with AdamW on ImageNet and ImageNet V2, but at 3x lower pre-training cost.

Table 3 below shows the fine-tuning results, with higher resolution and Polyak averaging. The researchers' ViT-L/16 matches the results of the ViT-H/14 previously trained with AdamW, while having 2x fewer parameters. After extending the pre-training dataset to JFT-3B, the Lion-trained ViT-g/14 outperforms previous ViT-G/14 results with 1.8x fewer parameters.

Visual language contrastive learning

This section focuses on CLIP-style vision-language contrastive training. Rather than learning all parameters from scratch, the researchers initialized the image encoder with a powerful pre-trained model.

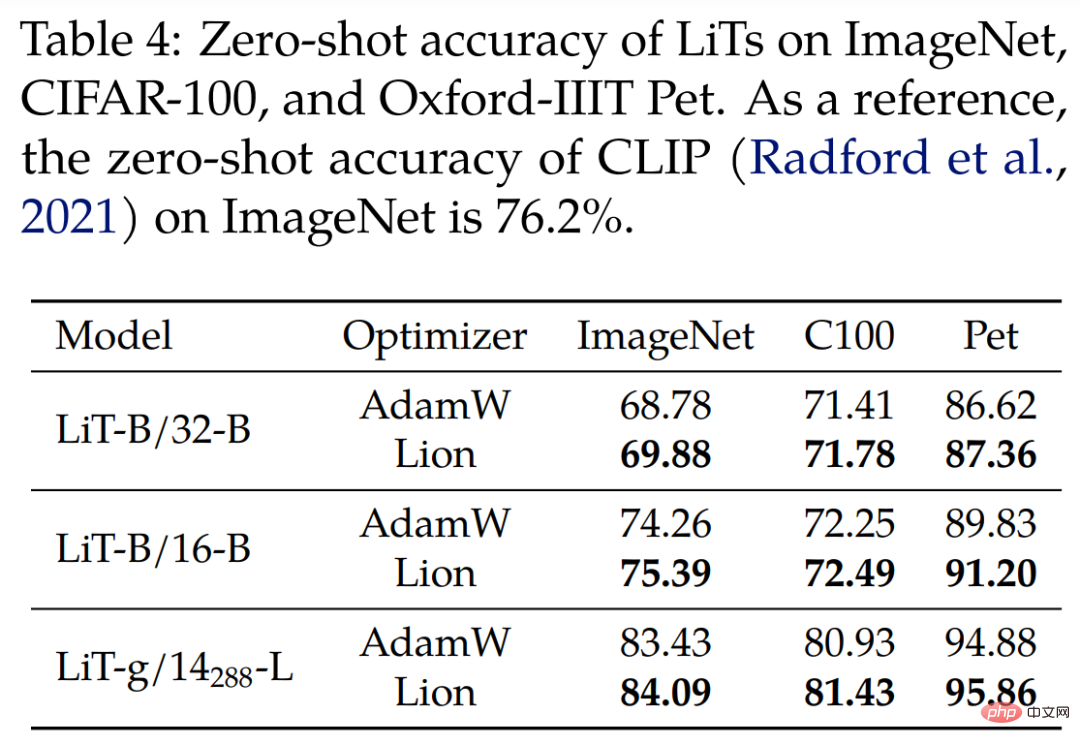

For Locked-image text Tuning (LiT), the researchers compared Lion and AdamW by training the text encoder contrastively against the same frozen pre-trained ViT. Table 4 below shows the zero-shot image classification results at 3 model scales, with Lion showing consistent improvement over AdamW.

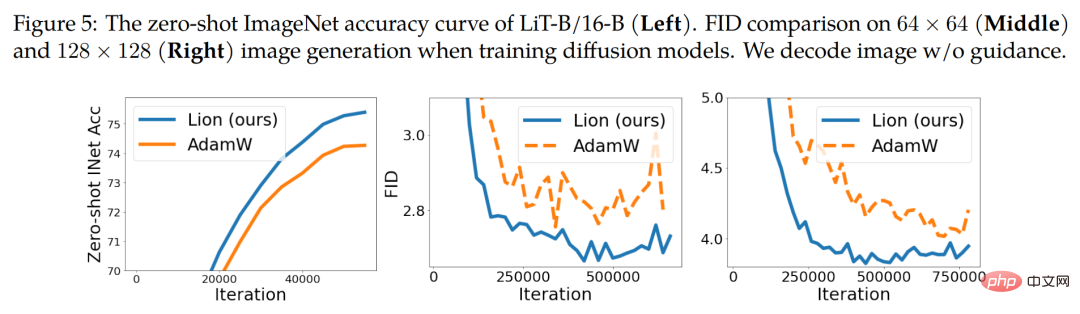

Figure 5 (left) below shows an example zero-shot learning curve of LiT-B/16-B; similar results were obtained on the other two datasets.

Diffusion model

Recently, diffusion models have achieved great success in image generation. Given this huge potential, the researchers tested Lion on unconditional image synthesis and multimodal text-to-image generation.

For image synthesis on ImageNet, the researchers used the improved U-Net architecture introduced in the 2021 paper "Diffusion Models Beat GANs on Image Synthesis" to perform 64×64, 128×128, and 256×256 image generation on ImageNet. As shown in Figure 5 above (middle and right), Lion achieves better quality and faster convergence in terms of FID score.

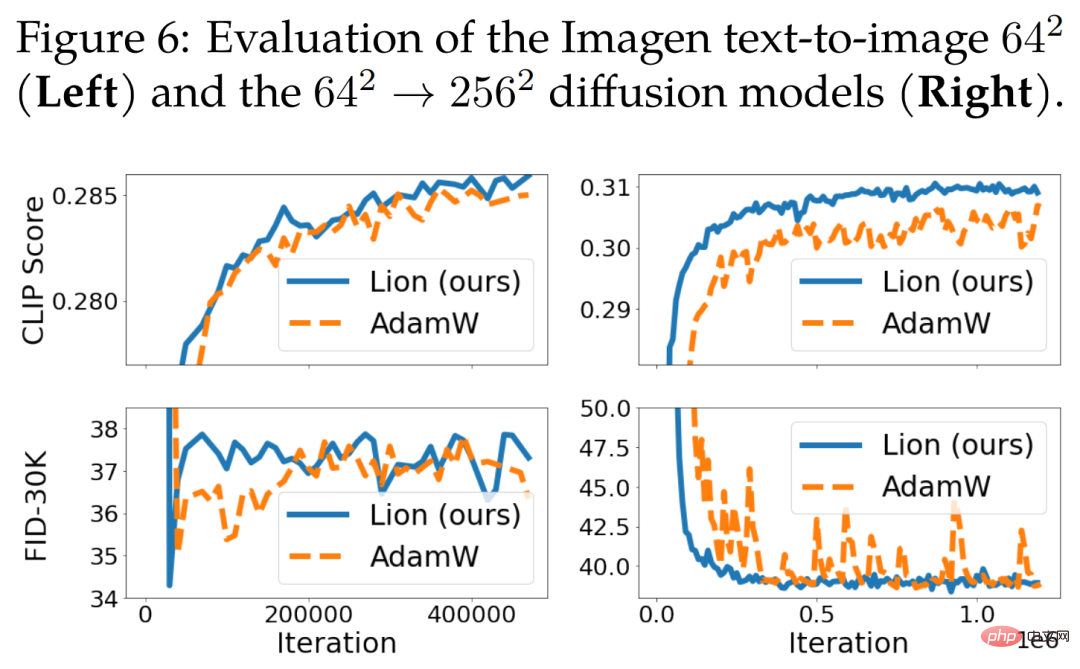

For text-to-image generation, Figure 6 below shows the learning curves. Although there is no significant improvement on the 64 × 64 base model, Lion outperforms AdamW on the text-conditional super-resolution model. Lion achieves a higher CLIP score and a less noisy FID metric than AdamW.

Language Modeling and Fine-tuning

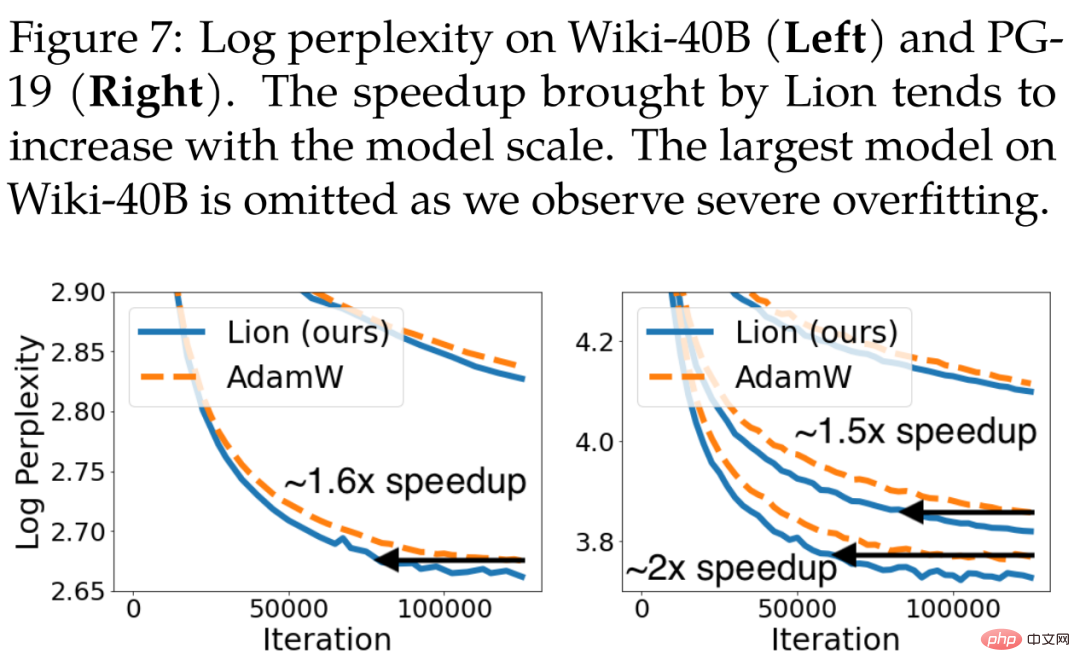

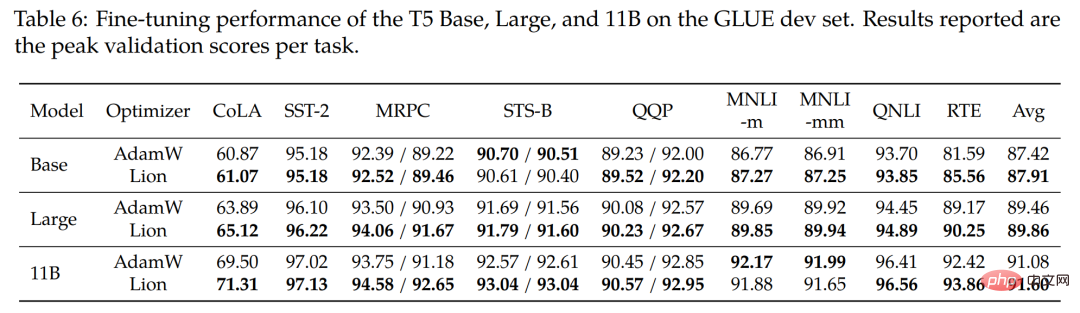

This section focuses on language modeling and fine-tuning. On language-only tasks, the researchers found that tuning β_1 and β_2 can improve quality for both AdamW and Lion.

For autoregressive language modeling, Figure 7 below shows the token-level perplexity on Wiki-40B and the word-level perplexity on PG-19. Lion consistently achieves lower validation perplexity than AdamW. It achieves speedups of 1.6x and 1.5x when training medium-sized models on Wiki-40B and PG-19, respectively. On PG-19, the speedup reaches 2x when the model size increases to large.

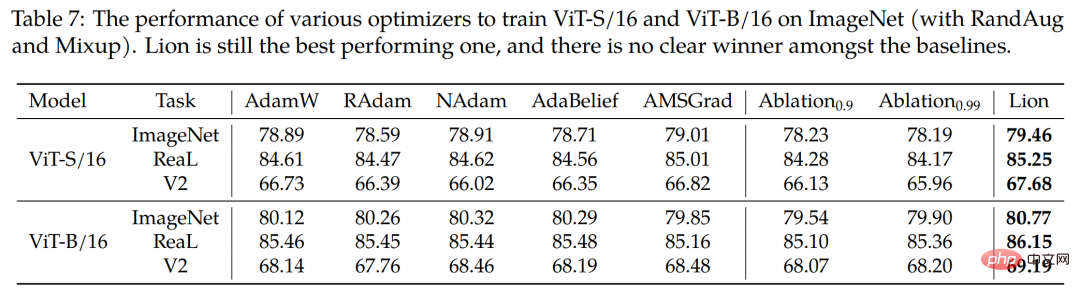

The study also compares Lion against four popular optimizers, RAdam, NAdam, AdaBelief and AMSGrad, by training ViT-S/16 and ViT-B/16 on ImageNet (using RandAug and Mixup). As shown in Table 7, Lion remains the top performer.
