Author|Yun Zhao

On March 9, Microsoft Germany CTO Andreas Braun delivered long-awaited news at the AI kickoff conference: "When GPT-4 launches next week, we will launch a multi-modal model that offers completely different possibilities, such as video."

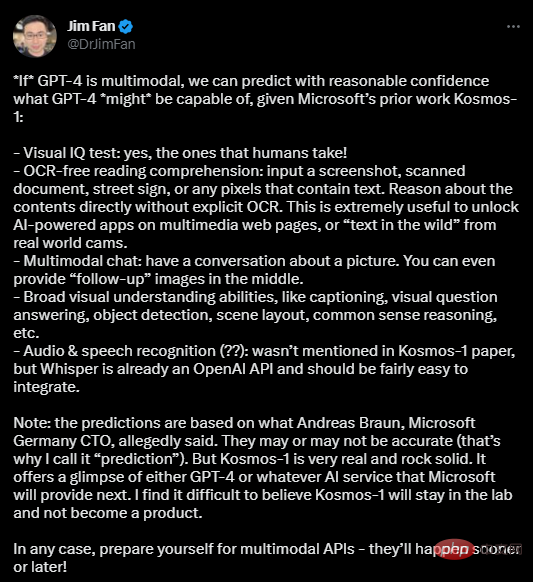

## Jim Fan, a Stanford Ph.D. and Nvidia AI scientist, made 5 specific predictions based on this:

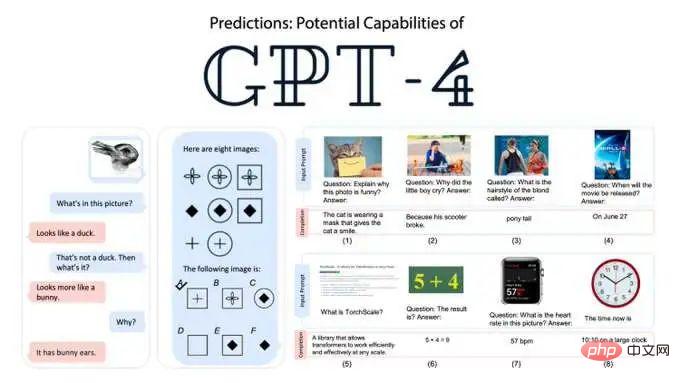

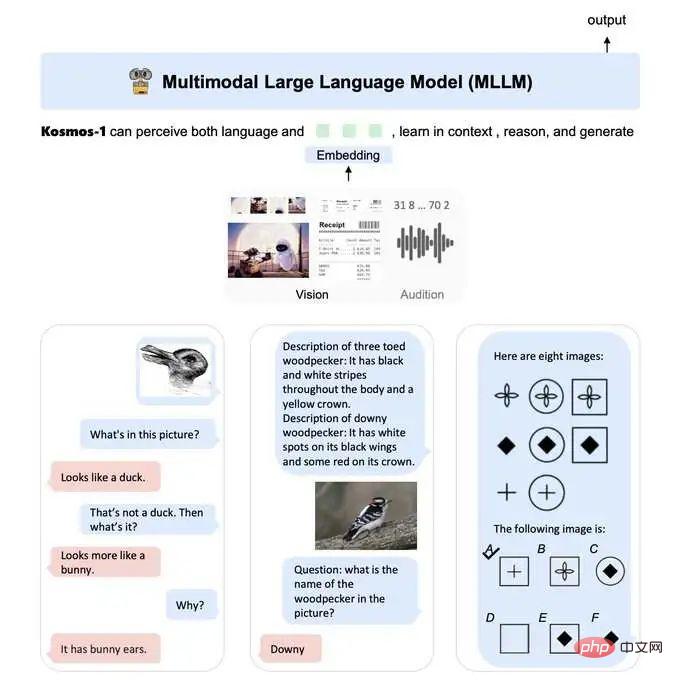

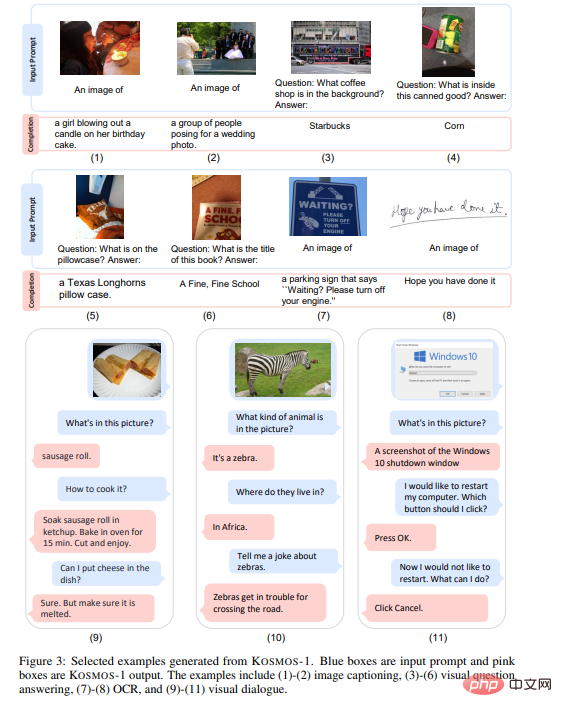

(1) Visual IQ tests: yes, the kind designed for humans!

(2) OCR-free reading comprehension: take screenshots, scanned documents, street signs, or any pixels containing text as input, and reason directly about the content without explicit OCR. This is useful for unlocking AI-driven applications on multimedia web pages or on "text in the wild" from real-world cameras.

(3) Multi-modal chat: have a conversation about pictures. You can even provide a "follow-up" picture halfway through.

(4) Broad visual understanding capabilities, such as captioning, visual question answering, object detection, scene layout, common sense reasoning, etc.

(5) Audio and speech recognition: not mentioned in the Kosmos-1 paper, but Whisper is already an OpenAI API and should be easy to integrate.

Jim acknowledges that his predictions, which are based on Andreas’ recent announcement, may not be entirely accurate. But Kosmos-1 can already do much of this, and there is reason to believe it provides capabilities for GPT-4 or whatever AI service Microsoft offers next. "It's hard to believe that Kosmos-1 will remain in the laboratory and not become a product."

Application examples for multi-modal large models: image captioning, image question answering, OCR, visual dialogue

Jim advises practitioners, "Please be prepared for multi-modal APIs - they will appear sooner or later!"

2. Will GPT-4 become AGI? Far from it

First, accuracy is still not good enough. When asked about operational reliability and factual fidelity, Siebler, a senior artificial intelligence expert at Microsoft Germany, said that the AI will not always answer correctly, so verification is necessary. Microsoft is currently creating a confidence metric to address this issue. Customers typically use AI support only on their own datasets, primarily for reading comprehension and querying inventory data, where the models are already quite accurate. However, the text the models produce is still generative and therefore not easily verifiable. "We built a feedback loop around it, both for and against," Siebler said. "It's an iterative process."

Second, there is not enough data. Even though the multi-modal GPT-4 is about to demonstrate powerful vision, hearing, reading comprehension, and reasoning capabilities, this is just the tip of the AGI iceberg. Take humanoid robots as an example: their control data is hard to unify because it is tied to each robot's hardware and varies greatly from one machine to another. As a result, training data from different real robots cannot be easily combined, which makes it qualitatively different from data such as text, video, images, and audio.

3. Two rumors about GPT-4

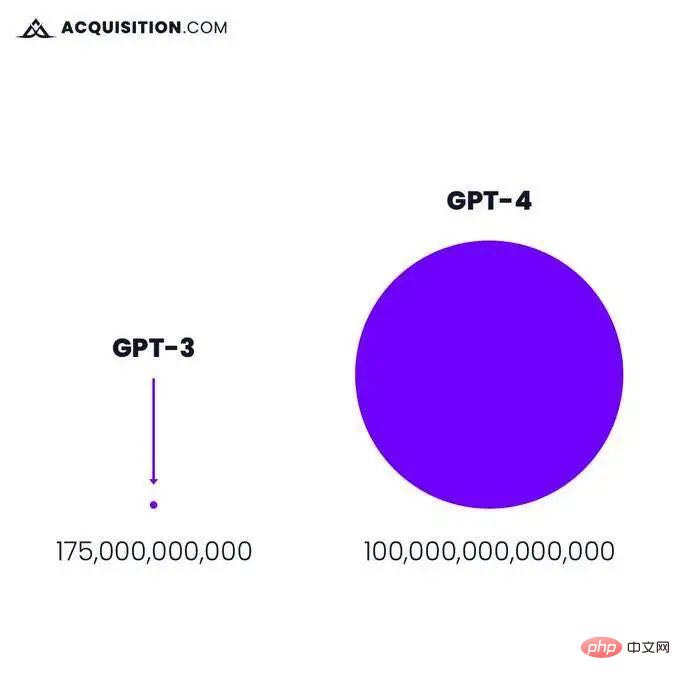

GPT-4 is a new language model being created by OpenAI that can generate text similar to human speech. It will advance the technology used by ChatGPT, which is based on GPT-3.5.

As early as August 2021, industry experts speculated that GPT-4 would have 100 trillion parameters, but even then some pointed out that building AI with more parameters does not necessarily guarantee better performance and may hurt responsiveness.
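To get a feel for why such a parameter count could affect responsiveness, here is a rough back-of-the-envelope sketch of the memory needed just to store the weights. It rests on assumptions that are not from the article: a dense model, 2 bytes per parameter (16-bit weights), and a hypothetical accelerator with 80 GB of memory. GPT-3's published size of 175 billion parameters is included for comparison. It says nothing about how GPT-4 is actually built.

```python
import math

# Assumptions for illustration only (not from the article):
BYTES_PER_PARAM = 2      # 16-bit weights
DEVICE_MEMORY_GB = 80    # hypothetical accelerator memory


def weight_footprint(num_params):
    """Return (weight size in GB, minimum devices needed to hold the weights)."""
    size_gb = num_params * BYTES_PER_PARAM / 1e9
    devices = math.ceil(size_gb / DEVICE_MEMORY_GB)
    return size_gb, devices


GPT3_PARAMS = 175_000_000_000          # GPT-3's published parameter count
RUMORED_PARAMS = 100_000_000_000_000   # the rumored 100 trillion

for name, n in [("GPT-3", GPT3_PARAMS), ("rumored GPT-4", RUMORED_PARAMS)]:
    size_gb, devices = weight_footprint(n)
    print(f"{name}: {size_gb:,.0f} GB of weights, at least {devices} devices")
```

Under these assumptions, the rumored model would need about 200,000 GB (200 TB) for its weights alone, spread over at least 2,500 devices, versus 350 GB and 5 devices for GPT-3. Every generated token would have to pass through weights distributed across all of that hardware, which is one way to see why a larger parameter count can slow responses.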
