How to make better use of data in causal inference?

Apr 11, 2023 07:43 PM

Introduction: This talk, titled "How to make better use of data in causal inference?", presents the team's recently published work on causal inference. It shows two ways to use more data for causal inference: using historical control data to explicitly mitigate confounding bias, and causal inference that fuses multi-source data.

Contents:

- Causal inference background

- Bias-correcting causal tree GBCT

- Causal data fusion

- Business applications at Ant

- Question and answer session

1. Causal inference background

Common machine learning prediction problems are usually set within a single system: the data are typically assumed to be independent and identically distributed, as in predicting the probability of lung cancer among smokers or in image classification. Causal questions, by contrast, concern the mechanism behind the data. A question such as "Does smoking cause lung cancer?" is a causal question.

There are two very important types of data in causal effect estimation: observational data, and experimental data generated by randomized controlled experiments.

- Observational data is data accumulated in real life or in products. For example, in smoking data some people choose to smoke, and some of those smokers eventually develop lung cancer. The machine learning prediction problem is to estimate the conditional probability P(lung cancer | smoking), that is, the probability of observing lung cancer among people who smoke. In such observational data, smoking is not randomly assigned: people's preference for smoking differs, and it is also affected by their environment.

- The best way to answer causal questions is a randomized controlled experiment, which produces experimental data. In a randomized controlled trial, assignment to treatment is random. To determine in this way whether smoking causes lung cancer, you would need to recruit enough people, force a randomly chosen half to smoke and the other half not to smoke, and compare the rates of lung cancer in the two groups. Although randomized controlled trials are impossible in some scenarios for ethical or policy reasons, they can still be run in some fields, for example as A/B tests in search and recommendation.

The main difference between the causal estimation problem E(Y|do(X)) and the traditional prediction or classification problem E(Y|X) is that the intervention operator do, proposed by Judea Pearl, appears in the conditioning. An intervention forces the variable X to a given value instead of passively observing it. In this report, estimating causal effects mainly means estimating them from observational data.
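A small simulation can make the difference between E(Y|X) and E(Y|do(X)) concrete. The sketch below is a hypothetical toy version of the smoking example: the confounder U, all probabilities, and the function names are invented for illustration. It compares the observational gap in outcome rates with the gap obtained when X is set by a coin flip, which mimics the do operator.

```python
import numpy as np

def simulate_smoking(n, randomize, rng):
    """Hypothetical toy data: U is a confounder that raises both the chance of
    smoking (X) and the baseline probability of the disease outcome (Y).
    The true causal effect of X on P(Y=1) is +0.10 by construction."""
    u = rng.random(n) < 0.5
    if randomize:
        # do(X): assignment is a coin flip and ignores U
        x = rng.random(n) < 0.5
    else:
        # observational data: people with U=1 smoke far more often
        x = rng.random(n) < np.where(u, 0.8, 0.2)
    p_y = 0.05 + 0.10 * x + 0.20 * u
    y = rng.random(n) < p_y
    return x, y

def difference_in_means(x, y):
    """Mean outcome of X=1 units minus mean outcome of X=0 units."""
    return y[x].mean() - y[~x].mean()

rng = np.random.default_rng(0)
conditional_gap = difference_in_means(*simulate_smoking(200_000, False, rng))
interventional_gap = difference_in_means(*simulate_smoking(200_000, True, rng))
print(f"E(Y|X=1) - E(Y|X=0)         = {conditional_gap:.3f}")
print(f"E(Y|do(X=1)) - E(Y|do(X=0)) = {interventional_gap:.3f}")
```

In expectation the observational gap is 0.31 - 0.09 = 0.22, more than twice the true effect of 0.10 built into the simulation, which the randomized version recovers up to sampling noise.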

How can data be used better in causal inference? This report addresses the question using two recent papers published by our team as examples.

- The first work is about making better use of historical control data. For example, if a marketing campaign starts at a certain point in time, the period before it is called "pre-intervention" and the period after it "post-intervention". We want to know the actual effect of the intervention so that it can inform the next decision. Before the campaign starts, we already have users' historical performance data. The first work shows how to use this "pre-intervention" data to correct the estimate and evaluate the effect of the intervention more accurately.

- The second work is about making better use of multi-source heterogeneous data. Such problems are common in machine learning, for example domain adaptation and transfer learning. In this report, we look at multi-source heterogeneous data from a causal perspective: given multiple data sources, how can the causal effect be estimated better?

2. Bias-correcting causal tree GBCT

1. Traditional causal tree

A tree algorithm mainly consists of two modules:

- Split criterion: a node is split into two child nodes according to the split criterion;

- Parameter estimation: once splitting stops, the causal effect of a new sample or group is predicted at the leaf node using the parameter estimation method.

Some traditional causal tree algorithms split based on the heterogeneity of causal effects. The basic idea is that the causal effects of the left and right child nodes after a split should differ significantly, so that splitting captures the heterogeneity of causal effects in the data distribution.

Splitting criteria of traditional causal trees include:

- Uplift tree: maximize the difference between the causal effects of the left and right child nodes, measured with distance measures such as Euclidean distance or KL divergence;

- Causal tree: the criterion can be interpreted intuitively as maximizing the squared causal effect. It can be proven mathematically that this criterion is equivalent to maximizing the variance of the causal effects across leaf nodes.
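As a rough illustration of the causal tree criterion, the sketch below scores a split by the size-weighted sum of squared leaf effects, n_left * tau_left^2 + n_right * tau_right^2, where each leaf effect is a difference in means. When the size-weighted average of the leaf effects is roughly fixed, maximizing this sum amounts to maximizing the variance of the leaf effects. This is a simplified sketch under stated assumptions: it assumes randomized treatment inside each node, omits the honest-estimation variance term of the full causal tree, does not cover the uplift tree's distance-based criteria, and the function names are hypothetical.

```python
import numpy as np

def leaf_effect(y, t):
    """Difference in means between treated (t == 1) and control (t == 0) units."""
    return y[t == 1].mean() - y[t == 0].mean()

def squared_effect_score(x, y, t, threshold):
    """Simplified causal-tree score for splitting on x <= threshold:
    sum over the two children of n_child * tau_child ** 2.
    The honest-estimation variance penalty of the full causal tree is omitted."""
    score = 0.0
    for child in (x <= threshold, x > threshold):
        n_treated = int(t[child].sum())
        n_control = int(child.sum()) - n_treated
        if n_treated == 0 or n_control == 0:
            return -np.inf  # a child without both arms cannot estimate an effect
        score += child.sum() * leaf_effect(y[child], t[child]) ** 2
    return score

def best_split(x, y, t, candidates):
    """Return the candidate threshold with the highest score."""
    scores = [squared_effect_score(x, y, t, c) for c in candidates]
    return float(candidates[int(np.argmax(scores))])

# Hypothetical data: randomized treatment, effect of 2.0 only where x > 0.6
rng = np.random.default_rng(0)
n = 20_000
x = rng.random(n)
t = rng.integers(0, 2, n)
y = np.where(x > 0.6, 2.0, 0.0) * t + rng.normal(0.0, 0.5, n)
best = best_split(x, y, t, np.linspace(0.1, 0.9, 17))
print(f"chosen threshold: {best:.2f}")
```

On this made-up data the true effect jumps at x = 0.6, and the criterion should pick the candidate threshold at or next to 0.6.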

In the causal tree, the child nodes are obtained by splitting. Can we ensure that the distribution of the left child node and the right child node obtained by the split is homogeneous?

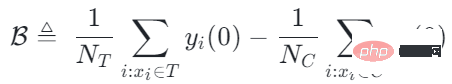

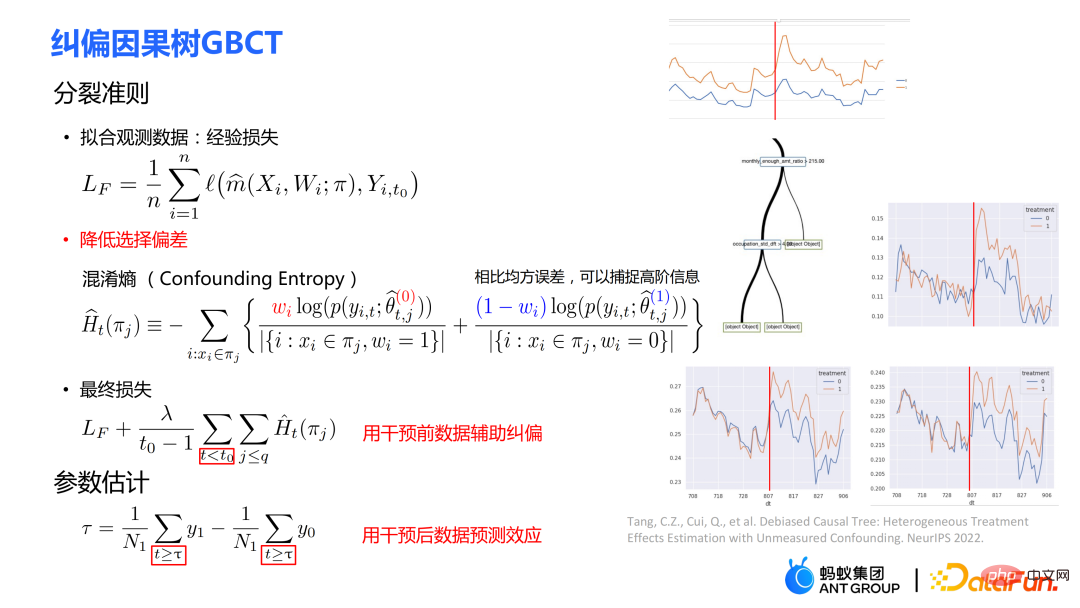

2. Bias-correcting causal tree GBCT

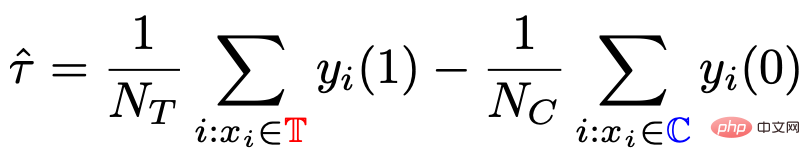

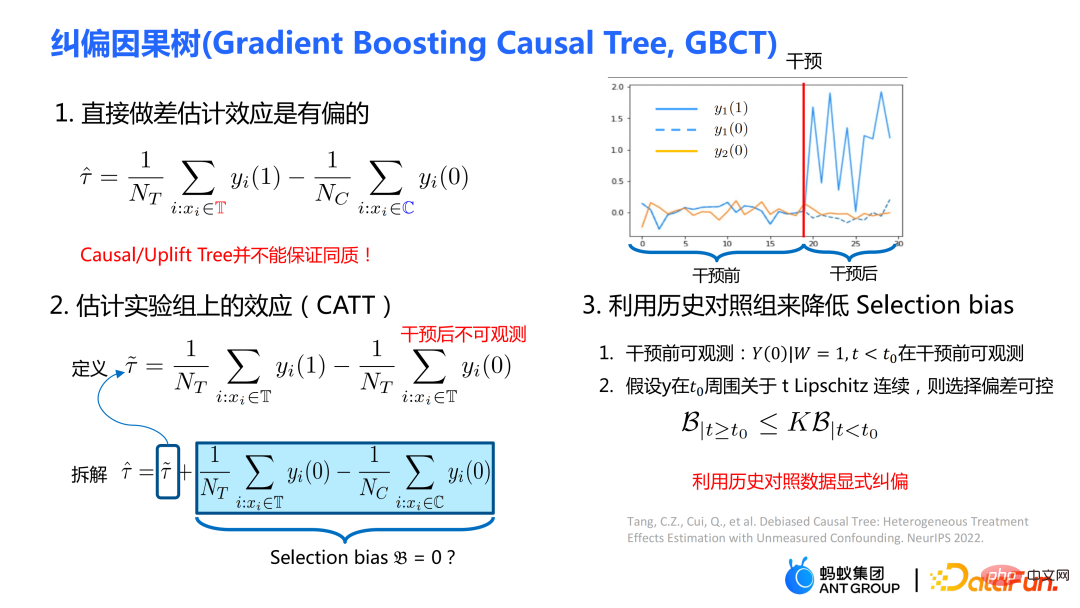

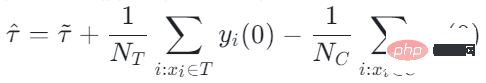

Traditional causal trees and uplift trees cannot guarantee that the distributions of the left and right child nodes after a split are homogeneous. Therefore, the traditional estimates mentioned in the previous section are biased.
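One common way to inspect whether a child node is homogeneous, in the sense that its treated and control units are comparable, is the standardized mean difference (SMD) of each covariate between the two arms; a rule of thumb often used in the propensity score literature treats |SMD| above about 0.1 as meaningful imbalance. This diagnostic is not part of GBCT, and the data and function names below are hypothetical. The example splits on a feature unrelated to the confounder z, so both children inherit the imbalance of the parent node.

```python
import numpy as np

def standardized_mean_difference(z, t):
    """SMD of covariate z between treated (t == 1) and control (t == 0) units."""
    z1, z0 = z[t == 1], z[t == 0]
    pooled_sd = np.sqrt((z1.var(ddof=1) + z0.var(ddof=1)) / 2.0)
    return (z1.mean() - z0.mean()) / pooled_sd

def child_balance(split_feature, threshold, z, t):
    """SMD of z inside the left and the right child of the split."""
    left = split_feature <= threshold
    return (standardized_mean_difference(z[left], t[left]),
            standardized_mean_difference(z[~left], t[~left]))

# Hypothetical observational data: z drives treatment, the split feature does not
rng = np.random.default_rng(0)
n = 10_000
z = rng.normal(size=n)                                   # confounder
t = (rng.random(n) < 1.0 / (1.0 + np.exp(-2.0 * z))).astype(int)
split_feature = rng.random(n)                            # independent of z and t
smd_left, smd_right = child_balance(split_feature, 0.5, z, t)
print(f"SMD left = {smd_left:.2f}, SMD right = {smd_right:.2f}")
```

A split only rebalances treated and control units if it happens to separate them along the confounder; a criterion that looks only at effect heterogeneity does not ask for that.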


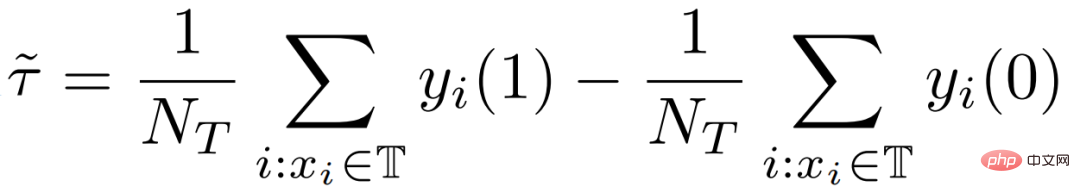

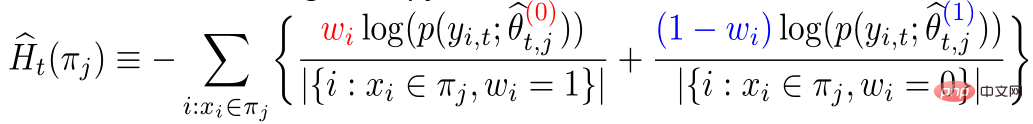

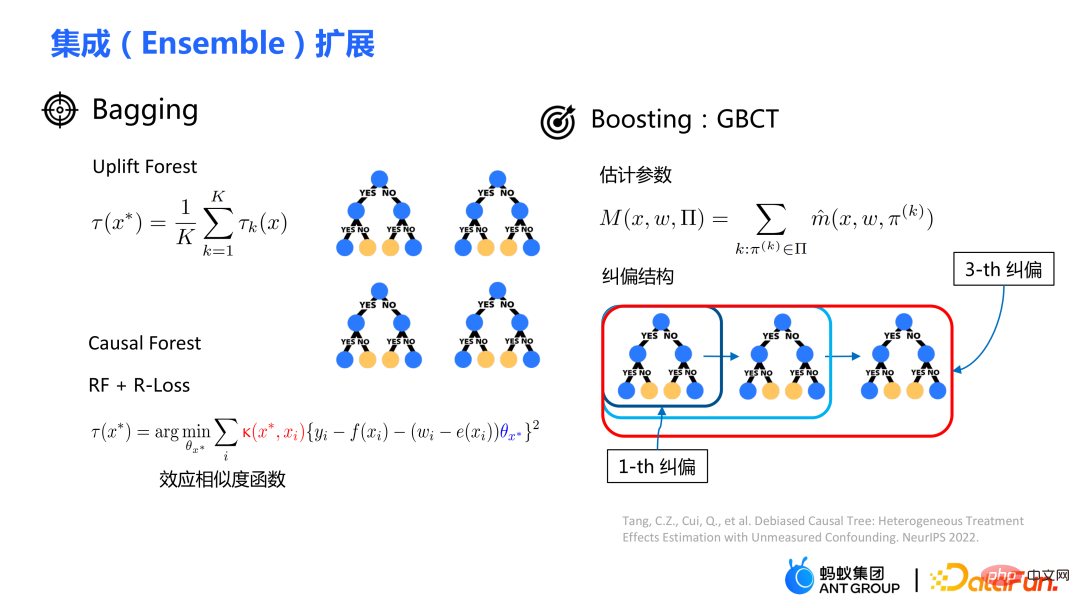

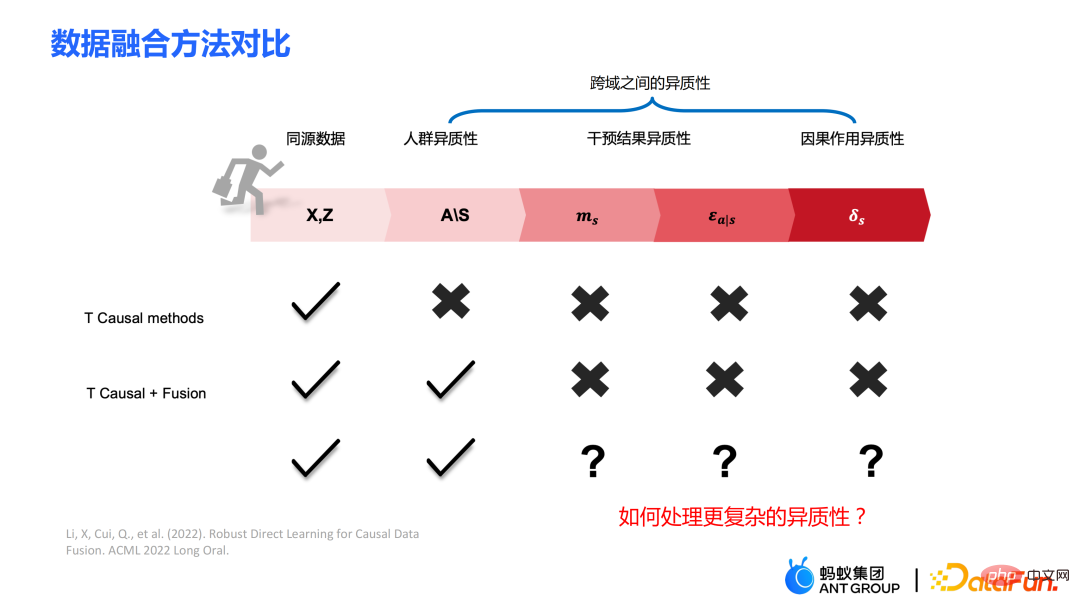

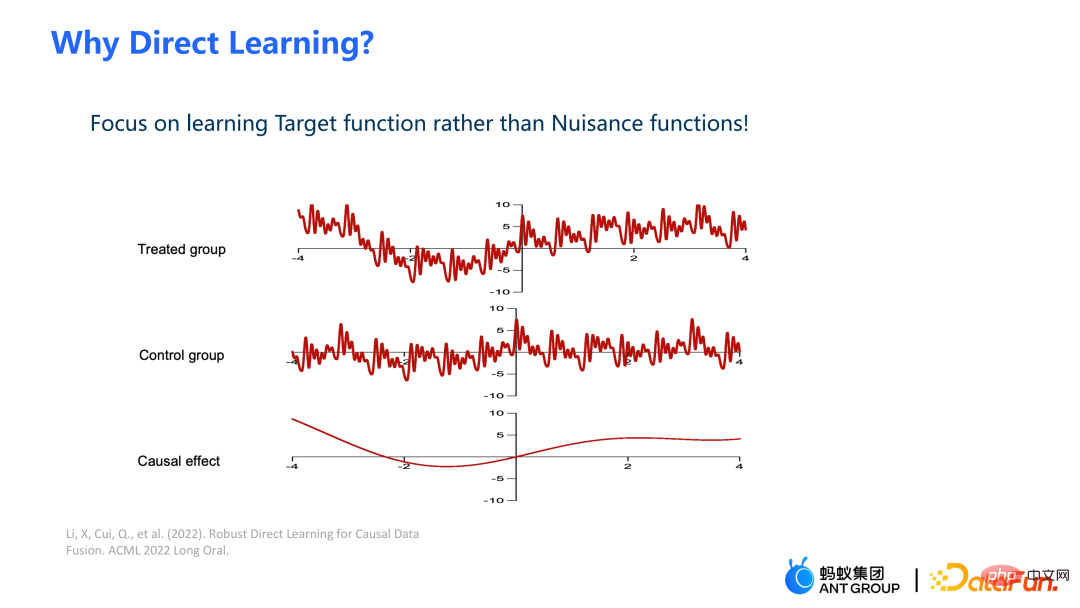

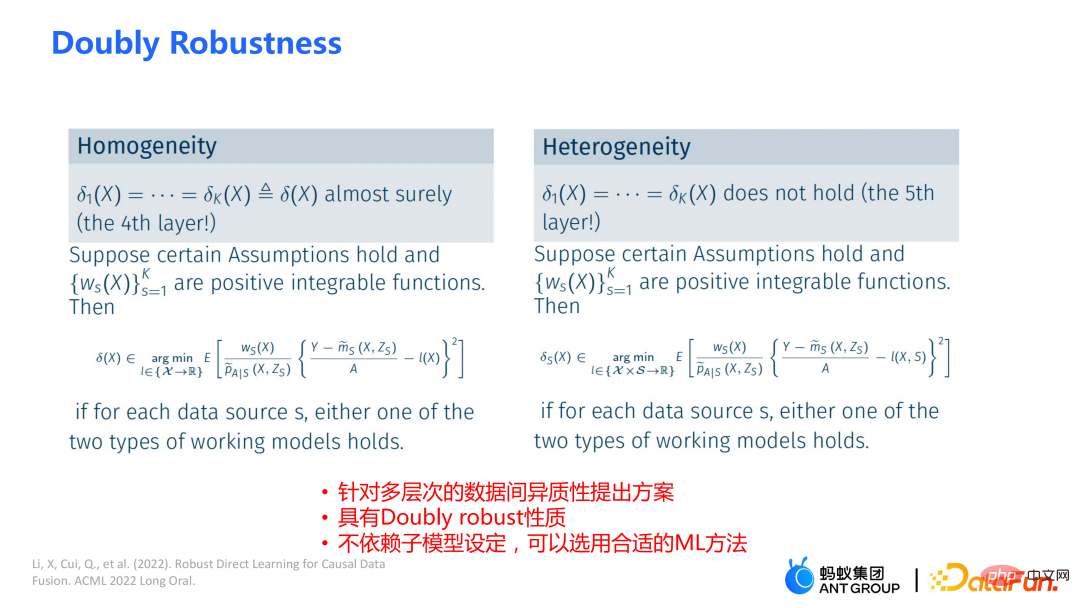

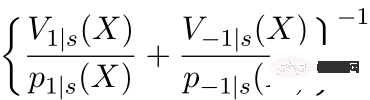

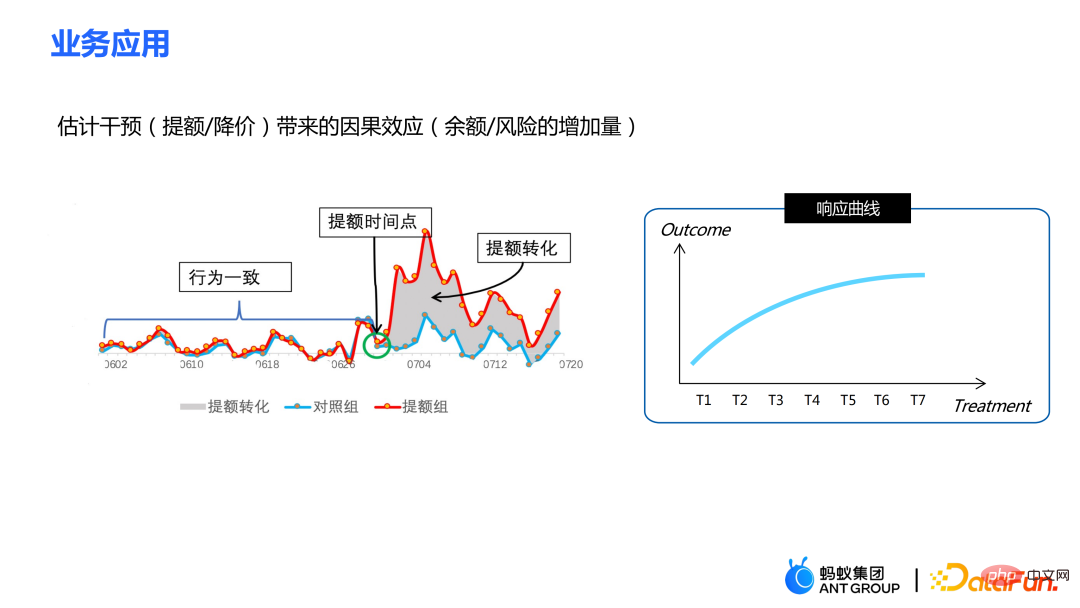

Our work focuses on estimating CATT, the conditional average treatment effect on the treated, that is, the average causal effect over the experimental (treatment) group. CATT is defined as:

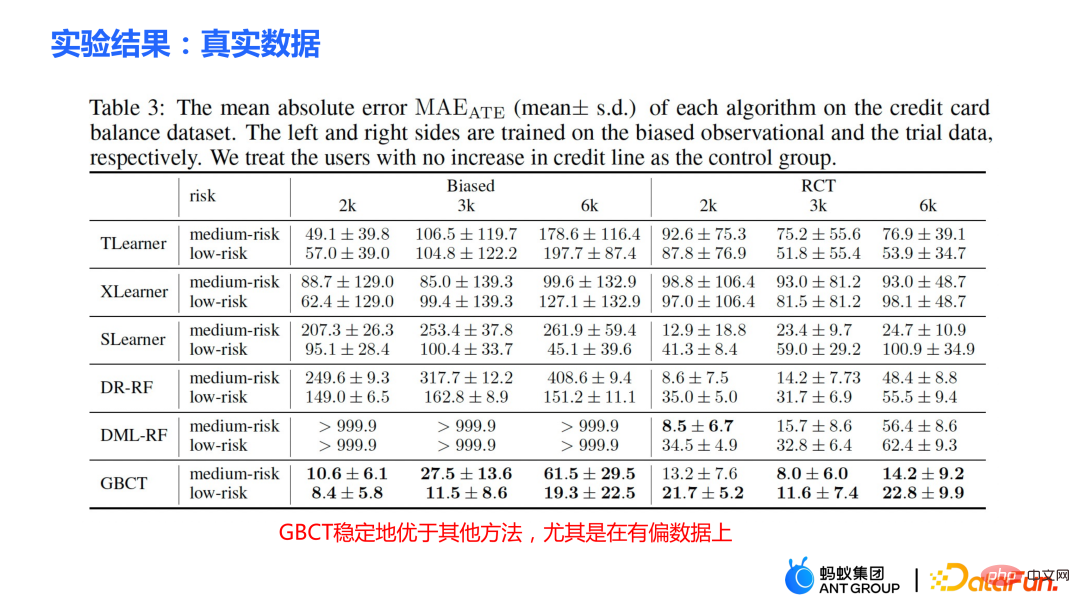

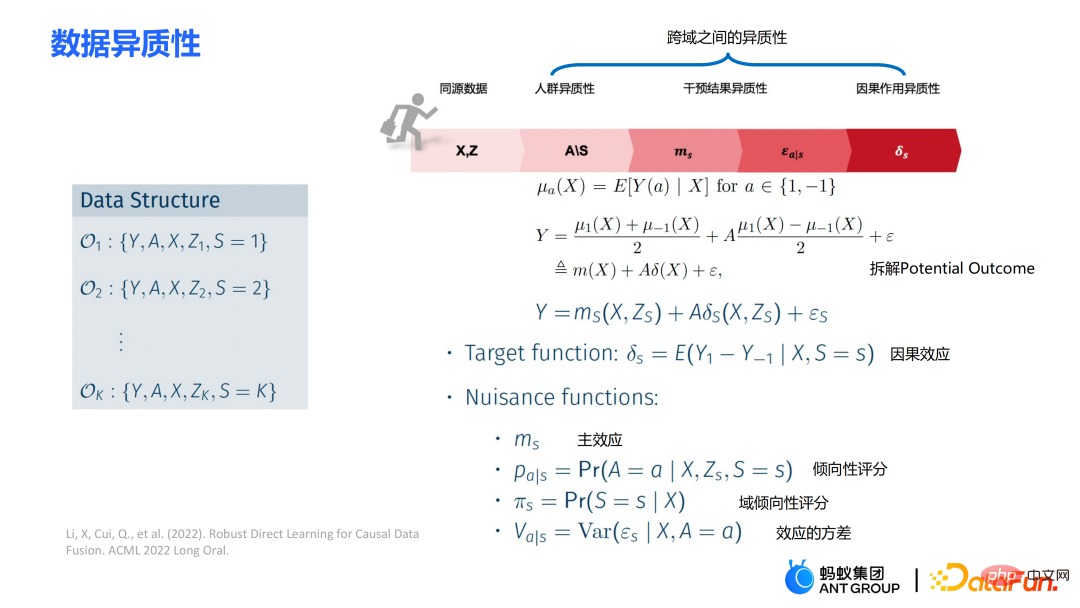

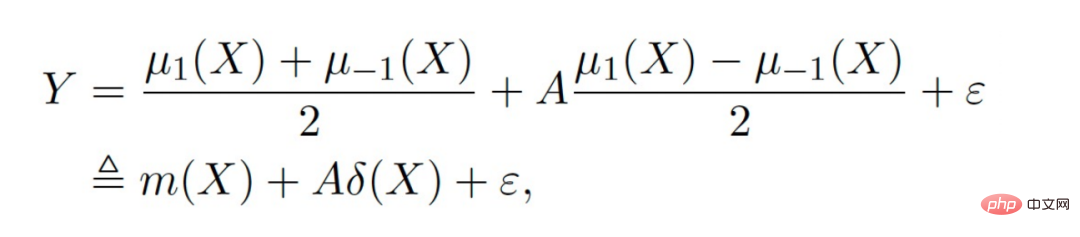
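The decomposition that GBCT builds on can be checked numerically: the naive treated-minus-control difference equals the CATT plus a selection bias term, namely the untreated outcome of the treated group minus the untreated outcome of the control group. The hypothetical simulation below knows both potential outcomes, so all three quantities can be computed. It then subtracts the pre-intervention gap between the groups, taken from historical control data where nobody is treated yet. That last step is a plain difference-in-differences style correction that assumes the gap is stable over time; it is not the GBCT algorithm, which uses the historical information inside its splitting criterion.

```python
import numpy as np

# Hypothetical simulation: both potential outcomes are known, which is only possible in a simulation.
rng = np.random.default_rng(1)
n = 100_000
u = rng.normal(size=n)                                  # confounder, not used by the estimators
treated = rng.random(n) < 1.0 / (1.0 + np.exp(-u))      # higher u -> more likely to be treated
y_pre = 1.0 + u + rng.normal(0.0, 0.5, n)               # pre-intervention outcome: nobody is treated yet
y0 = 1.0 + u + rng.normal(0.0, 0.5, n)                  # post-period outcome without treatment
y1 = y0 + 0.5                                           # true effect is 0.5 for every unit
y = np.where(treated, y1, y0)                           # what is actually observed after the intervention

naive = y[treated].mean() - y[~treated].mean()
catt = (y1[treated] - y0[treated]).mean()
selection_bias = y0[treated].mean() - y0[~treated].mean()
# The decomposition holds exactly in-sample: naive = CATT + selection bias
assert np.isclose(naive, catt + selection_bias)

# Historical control data: before the intervention both groups are untreated,
# so their gap estimates the selection bias (DID-style, assumes the gap is stable over time)
pre_gap = y_pre[treated].mean() - y_pre[~treated].mean()
corrected = naive - pre_gap
print(f"naive={naive:.3f} CATT={catt:.3f} bias={selection_bias:.3f} corrected={corrected:.3f}")
```

Here the selection bias is about 0.8 in expectation, so the naive estimate lands near 1.3 while the corrected estimate comes back close to the true 0.5.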

##Further, the traditional causal effect estimate can be split into two parts: Selection bias/confounding bias can be defined as: ##The intuitive meaning is the estimated value when treatment=0 in the experimental group, minus the estimated value when treatment=0 in the control group. In traditional causal trees, the above bias is not characterized, and selection bias may affect our estimates, resulting in the final estimate being biased. The intuitive meaning is: in the experimental group, use the model of the control group for estimation; In the control group, use the model of the experimental group for estimation; make the estimates of the two parts as close as possible, so that the distributions of the experimental group and the control group are as close to the same as possible. The use of confusion entropy is one of the main contributions of our work. The integration of traditional tree models includes methods such as bagging and boosting. The integration method used by uplift forest or causal forest is the bagging method. The integration of uplift forest is direct summation, while the integration of causal forest requires solving a loss function. Due to the explicit correction module designed in GBCT, GBCT supports integration using the boosting method. The basic idea is similar to boosting: after the first tree is corrected, the second tree is corrected, and the third tree is corrected... Two parts of experiments were done: ① Simulation experiment. Under simulation experiments containing ground truth, test whether the GBCT method can achieve the expected results. The data generation for the simulation experiment is divided into two parts (the first column Φ in the table represents selection bias. The larger the Φ value, the stronger the corresponding selection bias; the value in the table is MAE. The smaller the MAE value, the better the method) : ##②Real credit card limit increase data. 
A randomized controlled experiment was conducted, and biased data were constructed based on the randomized controlled experiment. Across different settings, the GBCT method consistently outperforms traditional methods, especially on biased data, performing significantly better than traditional methods. The second task is causal data fusion, that is, how to better Estimating causal effects. Main symbols: multiple data sources, Y is outcome, A is treatment, X is the association of concern Variables, Z are other covariates of each data source (domain) except X, S is the indicator of the domain used to indicate which domain it belongs to, and μ is the expected value of the potential outcome. Decompose the outcome into the following expression: ##target function δ is used to estimate the causal effect on each domain, In addition, nuisance functions include main effects, propensity scores, domain propensity scores, variances of effects, etc. Some traditional methods, such as meta learner, etc., assume that the data is of the same origin, that is, the distribution is consistent . Some traditional data fusion methods can handle the heterogeneity of populations across domains, but cannot explicitly capture the heterogeneity of intervention outcomes and causal effects across domains. Our work focuses on dealing with more complex heterogeneity across domains, including heterogeneity across domains in intervention outcomes and heterogeneity across domains in causal effects. The three modules are combined to get the final estimate. The three highlights of the WMDL algorithm are: In this work, we did not estimate the outcome of the experimental group and the outcome of the control group, and then make the difference to obtain the cause and effect. Instead of estimating the effect, we directly estimate the causal effect, that is, Direct Learning. The benefit of Direct Learning is that it can avoid higher frequency noise signals in the experimental and control groups. 
The left part assumes that the causal effects are the same among multiple domains, but the outcomes may be heterogeneous. ; The right part assumes that the causal effects between each domain are different, that is, between different domains, even if its covariates are the same, their causal effects are also different. The formula is derived based on the disassembly formula. Outcome Y minus main effect divided by treatment is estimated to be I(X), and the optimal solution obtained is δ(X). The numerator in This work has three advantages: ① Through different designs, it can not only handle the heterogeneity of intervention results, but also Deal with the heterogeneity between causal effects; ② It has the property of doubly robustness. The proof is given in the paper that as long as the estimate of either the domain's propensity score model or the main effect model is unbiased, the final estimate will be unbiased (the actual situation is a little more complicated, see the paper for details); #③ This work mainly designed the semi-parametric model framework. Each module of the model can use any machine learning model, and the entire model can even be designed into a neural network to achieve end-to-end learning. Weighting’s module is derived from the efficiency bound theory in statistics. It mainly contains two aspects of information: ① ② Through the design of the propensity score function on the denominator, the overlapping samples in the experimental group and the control group are given a comparative weight. Large weight; #③ Use V to characterize the noise in the data. Since the noise is in the denominator, samples with less noise will get larger weights. By cleverly combining the above three parts, the distribution differences between different domains and the performance of different causal information can be mapped into a unified domain . 
Regardless of homogeneous causal effects or heterogeneous causal effects, WMDL (Weighted Multi-domain Direct Learning ) methods have better results. The picture on the right shows an ablation experiment on the weighting module. The experiment shows the effectiveness of the weighting module. In summary, the WMDL method consistently performs better than other methods, and the estimated variance is relatively small. In financial credit risk control scenarios, intervention methods such as quota increases and price reductions are expected to achieve expected effects such as changes in balances or risks. In some actual scenarios, the correction work of GBCT will use the historical performance in the period before the forehead lift (the status of the experimental group and the control group without forehead lift can be obtained), and carry out explicit correction through historical information, so that the intervention Later estimates will be more accurate. If GBCT is split into a child node so that the pre-intervention behaviors are aligned, the post-intervention causal effects will be easier to estimate. (Obtained after correction) In the figure, the red color is the forehead raising group, the blue color is the no forehead raising group, and the gray area in the middle is the estimated causal effect. GBCT helps us make better intelligent decisions and control the balance and risks of credit products. A1: The main idea of GBCT correction is to use historical comparison information to explicitly reduce selection bias. The GBCT method and the DID double difference method have similarities and differences: A2: If all confounding variables have been observed, the assumption of ignorability is satisfied, to some extent, although the selection bias is not explicitly reduced, the experiment It is also possible to achieve alignment between the group and the control group through traditional methods. 
Experiments show that the performance of GBCT is slightly better, and the results are more stable through explicit correction. Assume that there are some unobserved confounding variables. This kind of scenario is very common in practice. Unobserved confounding also exists in historical control data. Variables, such as changes in family circumstances and income before the quota is raised, may not be observable, but users’ financial behavior has been reflected in historical data. We hope to explicitly reduce the selection bias through methods such as confusion entropy through historical performance information, so that when the tree is split, the heterogeneity between confounding variables can be characterized into the split child nodes. Among the child nodes, the unobserved confounding variables are relatively close so that they have a greater probability, so the estimated causal effects are relatively more accurate. A3: Comparison has been made. Double Machine Learning is a semi-parametric method. Our work in this article focuses more on tree-based methods, so the base learners selected are tree or forest related methods. DML-RF in the table is the Double Machine Learning version of Random Forest. #Compared with DML, GBCT mainly considers how to use historical comparison data. In the comparative method, the historical outcome will be processed directly as a covariate, but this processing method obviously does not make good use of the information. A4: This problem is a very essential problem in the financial scene. In search promotion, the difference between offline and online can be partially overcome through online learning or A/B testing. In financial scenarios, it is not easy to conduct experiments online due to policy influence; in addition, the performance observation period is usually longer. For example, it takes at least one month to observe user feedback for credit products. Therefore it is actually very difficult to solve this problem perfectly. 
We generally adopt the following approach: use test data from different periods (OOT) for verification during offline evaluation and observe the robustness of its performance. If the test performance is relatively stable, then there is relatively more reason to believe that its online performance is also good.

##Specific approach:

##Specific approach:

② Parameter estimation

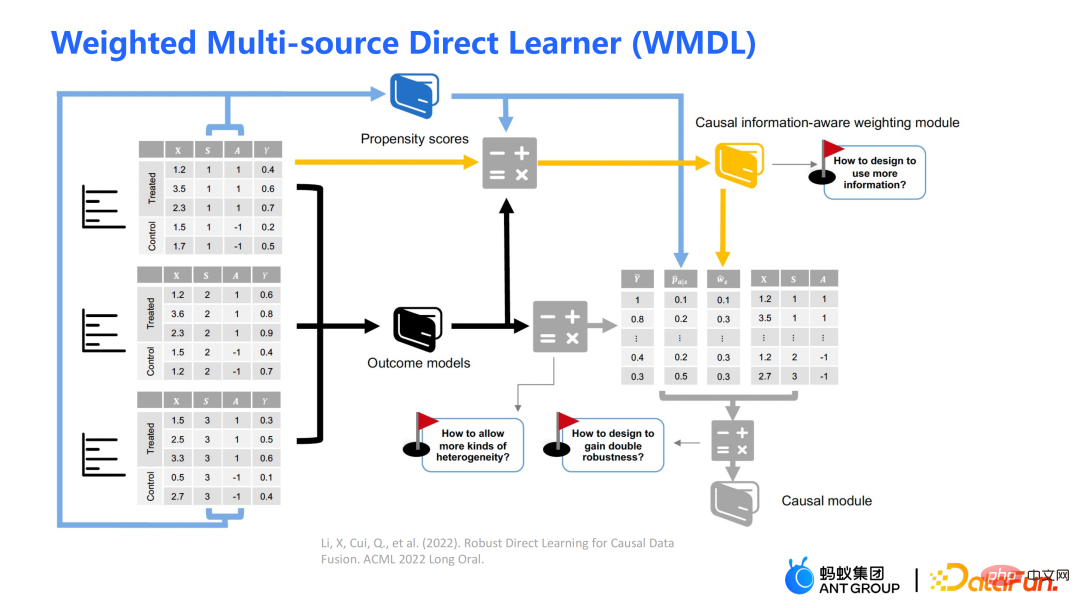

3. Causal data fusion

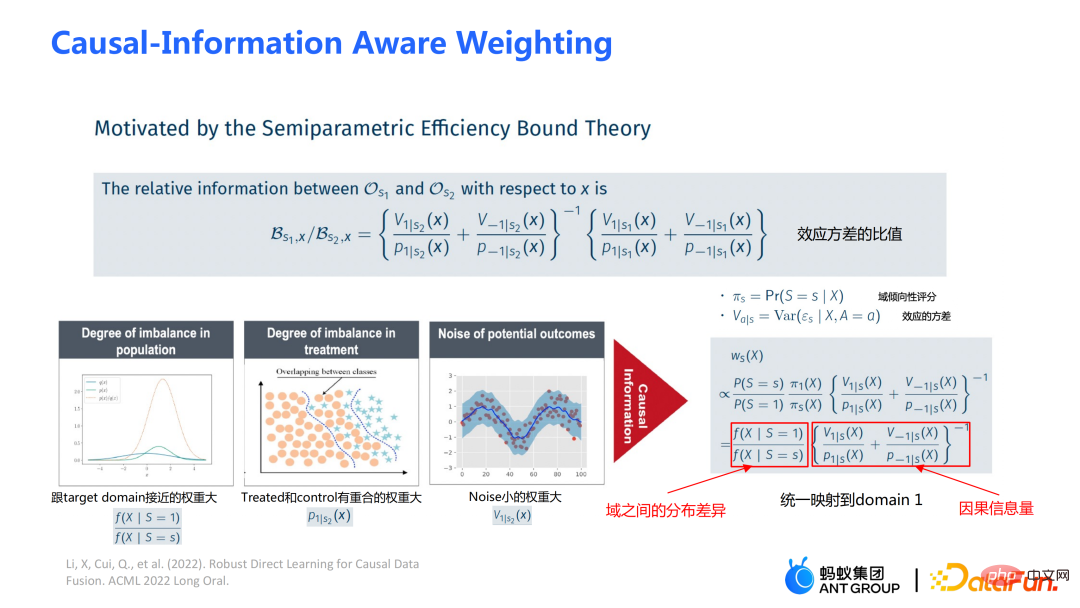

is the causal information-aware weighting module to be mentioned later, which is a major contribution of our work; the denominator is similar to the propensity score in the doubly robust method, except that in this work both Domain information is taken into account. If the causal effects between different domains are different, the indicator information of the domain will also be considered.

is the causal information-aware weighting module to be mentioned later, which is a major contribution of our work; the denominator is similar to the propensity score in the doubly robust method, except that in this work both Domain information is taken into account. If the causal effects between different domains are different, the indicator information of the domain will also be considered.

is a module for balanced conversion of distribution differences between domains;

is a module for balanced conversion of distribution differences between domains;  is a causal information module. The three pictures on the left can be used to assist understanding: If the distribution difference between the source domain and the target domain is large, priority will be given to samples that are closer to the target domain. Weight;

is a causal information module. The three pictures on the left can be used to assist understanding: If the distribution difference between the source domain and the target domain is large, priority will be given to samples that are closer to the target domain. Weight;

4. Business applications in Ant

5. Question and Answer Session

#Q1: What are the similarities and differences between GBCT correction and double difference method (DID)?

Q2: GBCT will perform better on unobserved confounding variables. Is there any more intuitive explanation?

Q3: Have you compared GBCT with Double Machine Learning (DML)?

#Q4: A similar problem that may be encountered in business is that there may be selection bias offline. However, online bias may be somewhat different from offline bias. At this time, when doing effect evaluation offline, there may be no way to estimate the offline effect very accurately.
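The out-of-time (OOT) check described in A4 can be sketched as follows, assuming each evaluation record carries a period index such as a month and that some offline metric can be computed per period (MAE is used here only as a placeholder). The function names, the toy arrays, and the relative-spread summary are hypothetical choices for illustration, not the team's actual tooling.

```python
import numpy as np

def mae(y_true, y_pred):
    """Mean absolute error, used here as a placeholder offline metric."""
    return float(np.mean(np.abs(y_true - y_pred)))

def oot_report(period, y_true, y_pred, train_end, metric=mae):
    """Evaluate `metric` separately on every period after `train_end`."""
    report = {}
    for p in np.unique(period[period > train_end]):
        mask = period == p
        report[int(p)] = metric(y_true[mask], y_pred[mask])
    return report

def relative_spread(report):
    """(max - min) / |mean| of the per-period metric; smaller means more stable."""
    values = np.array(list(report.values()))
    return float((values.max() - values.min()) / abs(values.mean()))

# Hypothetical example: period 1 is in-time, periods 2 and 3 are out-of-time
period = np.array([1, 1, 2, 2, 3, 3])
y_true = np.array([0.0, 1.0, 0.0, 1.0, 0.0, 1.0])
y_pred = np.array([0.0, 1.0, 0.5, 1.0, 0.0, 0.6])
report = oot_report(period, y_true, y_pred, train_end=1)
print(report, relative_spread(report))
```

A small relative spread across later periods is the kind of stability A4 describes; what counts as small is a judgment call for each business.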

The above is the detailed content of "How to make better use of data in causal inference?". For more information, please follow other related articles on the PHP Chinese website!
