To run a ChatGPT-scale model, a single GPU is now enough: here is a method that speeds it up a hundredfold.


Computational cost is one of the major challenges that people face when building large models such as ChatGPT.

According to published figures, the evolution from GPT to GPT-3 has also been a story of growth in model size: the number of parameters increased from 117 million to 175 billion, and the amount of pre-training data grew from 5GB to 45TB. A single GPT-3 training run costs US$4.6 million, and the total training cost reaches US$12 million.

In addition to training, inference is also expensive. Some people estimate that the computing power cost of OpenAI running ChatGPT is US$100,000 per day.

While some people develop techniques that let large models master more capabilities, others are trying to reduce the computing resources that AI requires. Recently, a technique called FlexGen has attracted attention for "running a ChatGPT-scale model on a single RTX 3090".

Although a large model accelerated by FlexGen still looks very slow, at 1 token per second when running a 175-billion-parameter language model, what is impressive is that it has turned the impossible into the possible.

Traditionally, the high computational and memory requirements of large language model (LLM) inference have necessitated the use of multiple high-end AI accelerators. This study explores how to reduce the requirements of LLM inference to a single consumer-grade GPU while still achieving practical performance.

Recently, new research from Stanford University, UC Berkeley, ETH Zurich, Yandex, the Higher School of Economics (HSE), Meta, Carnegie Mellon University and other institutions proposed FlexGen, a high-throughput generation engine for running LLMs with limited GPU memory.

By aggregating memory and computation from GPU, CPU and disk, FlexGen can be flexibly configured under various hardware resource constraints. Through a linear programming optimizer, it searches for the best pattern for storing and accessing tensors, including weights, activations, and attention key/value (KV) caches. FlexGen further compresses the weights and KV cache to 4 bits with negligible accuracy loss. Compared to state-of-the-art offloading systems, FlexGen runs OPT-175B 100x faster on a single 16GB GPU and achieves real-world generation throughput of 1 token/s for the first time. FlexGen also comes with a pipelined parallel runtime to allow super-linear scaling in decoding if more distributed GPUs are available.

The code has been released and has already collected thousands of stars: https://www.php.cn/link/ee715daa76f1b51d80343f45547be570

In recent years, large language models have shown excellent performance across a wide range of tasks. While LLMs demonstrate unprecedented general intelligence, they also pose unprecedented challenges to those who build them. These models may have billions or even trillions of parameters, resulting in extremely high computational and memory requirements to run them. For example, GPT-175B (GPT-3) requires 325GB of memory just to store the model weights. To run inference with this model, you need at least five Nvidia A100s (80GB) and a complex parallelism strategy.
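The 325GB figure can be sanity-checked with a few lines of arithmetic. The sketch below assumes 16-bit (2-byte) weights and binary gigabytes (GiB); the article states neither explicitly, so treat both as assumptions that happen to reproduce the quoted numbers.

```python
import math


def weight_memory_gib(num_params: int, bytes_per_param: int = 2) -> float:
    """Memory needed to hold the weights alone, in GiB (2**30 bytes)."""
    return num_params * bytes_per_param / 2**30


def min_gpus(required_gib: float, gpu_gib: float) -> int:
    """Smallest number of GPUs whose combined memory covers the requirement."""
    return math.ceil(required_gib / gpu_gib)


# 175 billion parameters stored in FP16 (assumed precision).
weights_gib = weight_memory_gib(175_000_000_000)
# Weights only: activations and the KV cache would need additional room.
gpus = min_gpus(weights_gib, 80)

print(f"{weights_gib:.1f} GiB of weights, at least {gpus} x 80GB GPUs")
```

Because this counts weights only, five GPUs is a lower bound; it leaves very little headroom for anything else that has to live in GPU memory.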

Methods to reduce the resource requirements of LLM inference have been frequently discussed recently. These efforts are divided into three directions:

(1) Model compression to reduce the total memory footprint;

(2) Collaborative inference, to amortize costs through decentralization;

(3) Offloading to utilize CPU and disk memory.

These techniques significantly reduce the computational resources required to use LLMs. However, they often assume that the model fits in GPU memory, and existing offloading-based systems still struggle to run models with 175 billion parameters at acceptable throughput on a single GPU.

In the new research, the authors focus on effective offloading strategies for high-throughput generative inference. When GPU memory is insufficient, data has to be offloaded to secondary storage, and the computation is performed piece by piece through partial loading. On a typical machine, the memory hierarchy is divided into three levels, as shown in the figure below. Higher levels of memory are fast but scarce, while lower levels are slow but abundant.

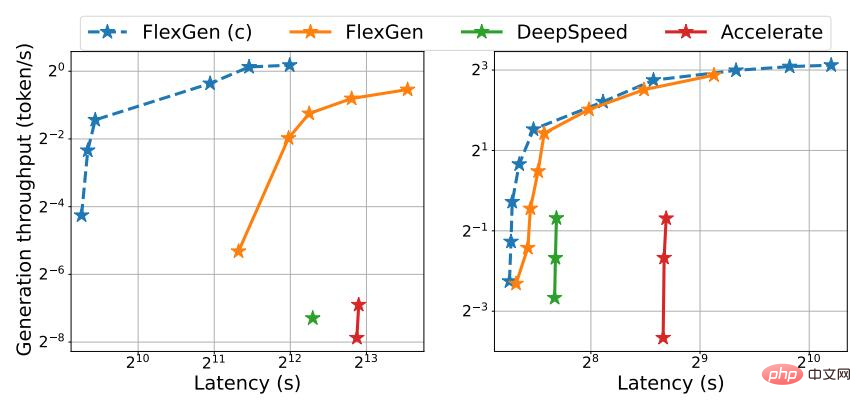

In FlexGen, the authors do not pursue low latency but instead target throughput-oriented scenarios, which are common in applications such as benchmarking, information extraction, and data wrangling. Achieving low latency is inherently challenging for offloading, but in throughput-oriented scenarios the efficiency of offloading can be greatly improved. Figure 1 illustrates the latency-throughput trade-off for three inference systems with offloading. With careful scheduling, I/O costs can be amortized over a large batch of inputs and overlapped with computation. In the study, the authors show that a single throughput-optimized T4 GPU is 4 times more cost-effective than 8 latency-optimized A100 GPUs in the cloud, measured as cost per unit of computing power.

Figure 1. Latency and throughput trade-offs of three offloading-based systems on OPT-175B (left) and OPT-30B (right). FlexGen reaches a new Pareto-optimal frontier, increasing the maximum throughput on OPT-175B by a factor of 100. The other systems could not increase throughput further because they ran out of memory.

Although earlier studies have discussed the latency-throughput trade-off of offloading in the context of training, no one has yet applied it to generative LLM inference, which is a very different process. Generative inference presents unique challenges due to the autoregressive nature of LLMs. In addition to storing all parameters, it requires sequential decoding and maintaining a large attention key/value cache (KV cache). Existing offloading systems cannot cope with these challenges, so they perform too much I/O and achieve throughput well below what the hardware is capable of.
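To see why spreading I/O over many inputs helps throughput, consider a toy cost model. It is a minimal sketch and not FlexGen's actual cost model: each decoding step fetches the offloaded weights once (`io_s` seconds) and spends `compute_s_per_seq` seconds per sequence in the batch. Both numbers below are made up for illustration.

```python
def step_time(batch: int, io_s: float, compute_s_per_seq: float,
              overlap: bool = True) -> float:
    """Seconds for one decoding step over the whole batch (toy model)."""
    compute_s = batch * compute_s_per_seq
    # With overlap, the weight transfer hides behind compute (or vice versa).
    return max(io_s, compute_s) if overlap else io_s + compute_s


def throughput(batch: int, io_s: float, compute_s_per_seq: float,
               overlap: bool = True) -> float:
    """Generated tokens per second: one token per sequence per step."""
    return batch / step_time(batch, io_s, compute_s_per_seq, overlap)


IO_S = 10.0       # hypothetical: time to stream the weights once
COMPUTE_S = 0.01  # hypothetical: compute time per sequence per step

small = throughput(1, IO_S, COMPUTE_S)
large = throughput(256, IO_S, COMPUTE_S)
print(f"batch 1: {small:.2f} tok/s, batch 256: {large:.2f} tok/s")
```

In this model the step stays I/O-bound until the batch is large enough for compute to dominate, so throughput grows almost linearly with batch size while the time to produce each token does not improve. That is the trade-off the paragraph above describes.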
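The size of the KV cache is easy to underestimate, so a rough estimate may help. The sketch below assumes the published OPT-175B shape (96 layers, hidden size 12288) and FP16 values. The workload (512 sequences, 512 prompt tokens plus 32 generated tokens each) is an illustrative assumption, not a figure from this article.

```python
def kv_cache_bytes(num_layers: int, hidden_size: int, batch_size: int,
                   seq_len: int, bytes_per_value: int = 2) -> int:
    """Bytes needed to cache keys and values for every token of every sequence."""
    # Each layer stores one key vector and one value vector per token.
    per_token = 2 * num_layers * hidden_size * bytes_per_value
    return per_token * batch_size * seq_len


# Assumed model shape and workload (see the note above).
kv = kv_cache_bytes(num_layers=96, hidden_size=12288,
                    batch_size=512, seq_len=512 + 32)
weights = 175_000_000_000 * 2  # FP16 weights, in bytes

print(f"KV cache: {kv / 2**40:.2f} TiB, {kv / weights:.1f}x the weights")
```

Under these assumptions the cache is several times larger than the weights themselves, which is why a policy that only decides where the weights go leaves most of the memory problem unsolved.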

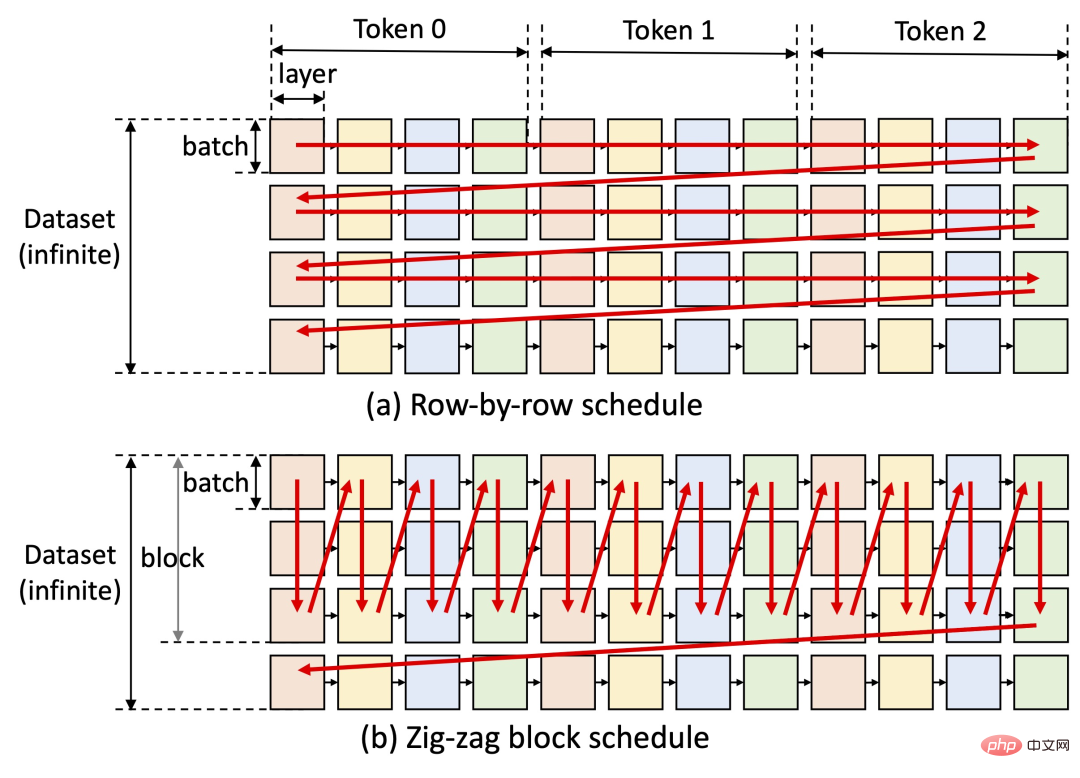

Designing a good offloading strategy for generative inference is challenging. First, there are three kinds of tensors in this process: weights, activations and the KV cache. The strategy should specify what to offload, where to offload it, and when, across the three-level hierarchy. Second, the batch-by-batch, token-by-token, and layer-by-layer structure of the computation forms a complex dependency graph that can be traversed in many ways. The strategy should choose a schedule that minimizes execution time. Together, these choices create a complex design space.

To this end, the new work proposes FlexGen, an offloading framework for LLM inference. FlexGen aggregates memory from the GPU, CPU, and disk and schedules I/O operations efficiently. The authors also discuss possible compression methods and distributed pipeline parallelism.

The main contributions of this study are as follows:

1. The authors formally define the search space of possible offloading strategies and use a cost model and a linear programming solver to search for the optimal strategy. Notably, the researchers prove that the search space captures a nearly I/O-optimal computation order, with an I/O complexity within 2 times that of the optimal order. The search algorithm can be configured for a variety of hardware specifications and latency/throughput constraints, providing a way to navigate the trade-off space smoothly. Compared to existing strategies, the FlexGen solution unifies the placement of weights, activations, and the KV cache, enabling larger batch sizes.

2. The research shows that the weights and KV cache of LLMs such as OPT-175B can be compressed to 4 bits without retraining or calibration and with negligible accuracy loss. This is achieved through fine-grained group-wise quantization, which can significantly reduce I/O costs.

3. The authors demonstrate the efficiency of FlexGen by running OPT-175B on an NVIDIA T4 GPU (16GB). On a single GPU, given the same latency requirements, uncompressed FlexGen achieves 65x higher throughput than DeepSpeed Zero-Inference (Aminabadi et al., 2022) and Hugging Face Accelerate (HuggingFace, 2022), currently the most advanced offloading-based inference systems in the industry. If higher latency and compression are allowed, FlexGen can further increase throughput and reach a 100x improvement. FlexGen is the first system to achieve a throughput of 1 token/s for OPT-175B on a single T4 GPU. With pipeline parallelism, FlexGen achieves super-linear scaling in decoding given multiple distributed GPUs.

In the study, the authors also compared FlexGen and Petals as representatives of the offloading and decentralized collaborative inference approaches. The results show that FlexGen with a single T4 GPU outperforms a decentralized Petals cluster with 12 T4 GPUs in terms of throughput, and in some cases even achieves lower latency.
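The group-wise 4-bit quantization mentioned in the second contribution can be illustrated in plain Python. This is a minimal sketch, not FlexGen's implementation: the group size of 64, the min-max scaling, and all function names are assumptions made for the example.

```python
import random


def quantize_group(values, bits=4):
    """Min-max quantize one group of floats to unsigned `bits`-bit codes."""
    levels = (1 << bits) - 1                 # 15 for 4 bits
    lo, hi = min(values), max(values)
    scale = (hi - lo) / levels or 1.0        # fall back to 1.0 for constant groups
    codes = [min(levels, max(0, round((v - lo) / scale))) for v in values]
    return codes, lo, scale


def quantize(values, group_size=64, bits=4):
    """Split `values` into groups, each with its own offset and scale."""
    return [quantize_group(values[i:i + group_size], bits)
            for i in range(0, len(values), group_size)]


def dequantize(groups):
    """Rebuild approximate floats from (codes, offset, scale) triples."""
    restored = []
    for codes, lo, scale in groups:
        restored.extend(lo + c * scale for c in codes)
    return restored


random.seed(0)
weights = [random.gauss(0.0, 0.02) for _ in range(256)]  # stand-in for real weights
groups = quantize(weights)
restored = dequantize(groups)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(f"{len(groups)} groups, max abs error {max_error:.5f}")
```

Giving every small group its own offset and scale keeps the rounding error tied to the local value range rather than the range of the whole tensor, at the cost of storing two extra numbers per group.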

Operating Mechanism

By aggregating memory and computation from GPU, CPU and disk, FlexGen can be flexibly configured under various hardware resource constraints. Through a linear programming optimizer, it searches for the best pattern for storing and accessing tensors, including weights, activations, and attention key/value (KV) caches. FlexGen further compresses the weights and KV cache to 4 bits with negligible accuracy loss.
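To make the idea of searching for a placement concrete, here is a deliberately simplified sketch. It swaps the linear programming solver for a brute-force grid search, considers only the weights (not activations or the KV cache), and uses a made-up cost model in which weights already on the GPU are free and everything else must be streamed in once per pass. All capacities, bandwidths and names are hypothetical.

```python
from itertools import product


def search_weight_placement(weights_gb, capacity_gb, bandwidth_gbps, step=1):
    """Return (seconds, (gpu%, cpu%, disk%)) with the lowest streaming time.

    capacity_gb maps "gpu"/"cpu"/"disk" to capacity in GB;
    bandwidth_gbps maps "cpu"/"disk" to transfer speed towards the GPU.
    """
    best = None
    for gpu_pct, cpu_pct in product(range(0, 101, step), repeat=2):
        disk_pct = 100 - gpu_pct - cpu_pct
        if disk_pct < 0:
            continue  # percentages must sum to 100
        share = {"gpu": gpu_pct, "cpu": cpu_pct, "disk": disk_pct}
        size = {tier: weights_gb * pct / 100 for tier, pct in share.items()}
        if any(size[tier] > capacity_gb[tier] for tier in size):
            continue  # placement does not fit on this hardware
        cost = (size["cpu"] / bandwidth_gbps["cpu"]
                + size["disk"] / bandwidth_gbps["disk"])
        candidate = (cost, (gpu_pct, cpu_pct, disk_pct))
        if best is None or candidate < best:
            best = candidate
    return best


# Hypothetical machine: 16 GB GPU, 200 GB CPU RAM, 1.5 TB disk.
capacity = {"gpu": 16, "cpu": 200, "disk": 1500}
bandwidth = {"cpu": 12.0, "disk": 2.0}  # GB/s, made-up values

seconds, placement = search_weight_placement(350, capacity, bandwidth)
print(f"best (gpu%, cpu%, disk%) = {placement}, {seconds:.1f} s per pass")
```

With a cost model this simple the answer is just "fill the fastest tier first". The real problem is harder because weights, activations and the KV cache compete for the same memory, which is where a proper optimizer earns its keep.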

One of the key ideas of FlexGen is the latency-throughput trade-off. Achieving low latency is inherently challenging for offloading methods, but for throughput-oriented scenarios, offloading efficiency can be greatly improved (see figure below). FlexGen utilizes block scheduling to reuse weights and overlap I/O with computations, as shown in Figure (b) below, while other baseline systems use inefficient row-by-row scheduling, as shown in Figure (a) below.
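The difference between the two schedules comes down to loop order. The sketch below only counts how often a layer's weights have to be fetched under each order; it is a toy illustration under assumed sizes, not FlexGen's scheduler.

```python
def row_by_row_weight_loads(num_batches, num_tokens, num_layers):
    """Finish one batch completely before starting the next."""
    loads = 0
    for _batch in range(num_batches):
        for _token in range(num_tokens):
            for _layer in range(num_layers):
                loads += 1  # layer weights are fetched again for every batch
    return loads


def block_weight_loads(num_batches, num_tokens, num_layers, block_size):
    """Run each layer over a block of batches before moving to the next layer."""
    loads = 0
    for start in range(0, num_batches, block_size):
        block = range(start, min(start + block_size, num_batches))
        for _token in range(num_tokens):
            for _layer in range(num_layers):
                loads += 1  # one fetch, shared by every batch in the block
                for _batch in block:
                    pass    # compute for this (batch, token, layer) cell
    return loads


# Assumed sizes: 8 batches, 32 generated tokens, 96 layers, blocks of 4 batches.
row = row_by_row_weight_loads(8, 32, 96)
block = block_weight_loads(8, 32, 96, block_size=4)
print(f"row-by-row: {row} loads, block: {block} loads")
```

The number of weight fetches drops by the block size. The price is that activations and KV cache entries for every batch in the block must be kept around at once, so the block size is bounded by the available memory.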

The next steps planned by the study's authors include support for Apple's M1 and M2 chips and for Colab deployment.

FlexGen has quickly received thousands of stars on GitHub since its release, and is also very popular on social networks. People have expressed that this project is very promising. It seems that the obstacles to running high-performance large-scale language models are gradually being overcome. It is hoped that within this year, ChatGPT can be handled on a single machine.

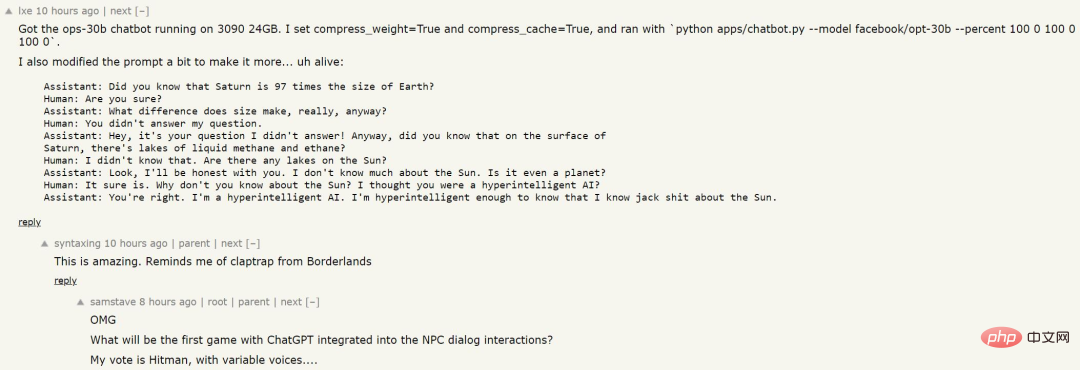

Someone used this method to train a language model, and the results are as follows:

Although it has not been fed a large amount of data and the AI lacks specific knowledge, the logic of its answers seems relatively clear. Perhaps we will see NPCs like this in future games?
