Zuckerberg is spending big! Meta has developed AI models specifically for the Metaverse

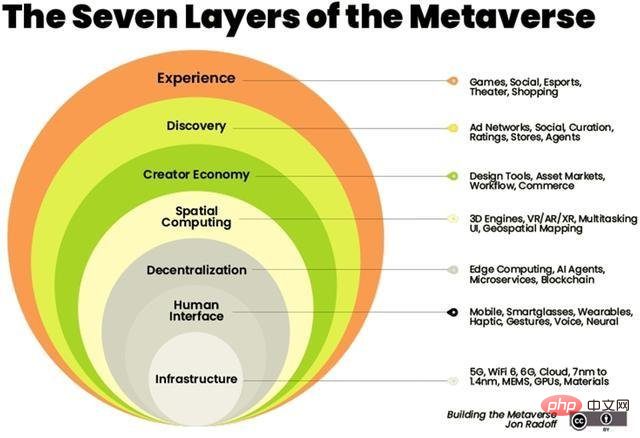

Artificial intelligence will become the backbone of the virtual world.

Artificial intelligence can be combined with a variety of related technologies in the metaverse, such as computer vision, natural language processing, blockchain and digital twins.

In February, Zuckerberg showed off what the Metaverse would look like at Inside The Lab, the company's first virtual event. He said the company is developing a new series of generative AI models that will let users generate their own virtual reality avatars simply by describing them.

Zuckerberg announced a series of upcoming projects, such as Project CAIRaoke, a fully end-to-end neural model for building on-device voice assistants that lets users communicate with those assistants more naturally. Meanwhile, Meta is working to build a universal speech translator that provides direct speech-to-speech translation for all languages.

A few months later, Meta fulfilled its promise. However, Meta isn't the only tech company with skin in the game. Companies such as NVIDIA have also released their own AI models to provide a richer Metaverse experience.

Open Pre-trained Transformer (OPT, 175 billion parameters)

GANverse3D

GANverse3D, developed by NVIDIA AI Research, is a deep learning model that turns 2D images into animatable 3D versions. The tool, described in a research paper published at ICLR and CVPR last year, can produce simulations faster and at lower cost.

This model uses StyleGAN to automatically generate multiple views from a single image. The application can be imported as an extension to NVIDIA Omniverse to accurately render 3D objects in virtual worlds. Omniverse, NVIDIA's simulation platform, helps users simulate their ideas in a virtual environment.

Producing 3D models has become a key factor in building the Metaverse. Retailers such as Nike and Forever 21 have set up virtual stores in the Metaverse to drive e-commerce sales.

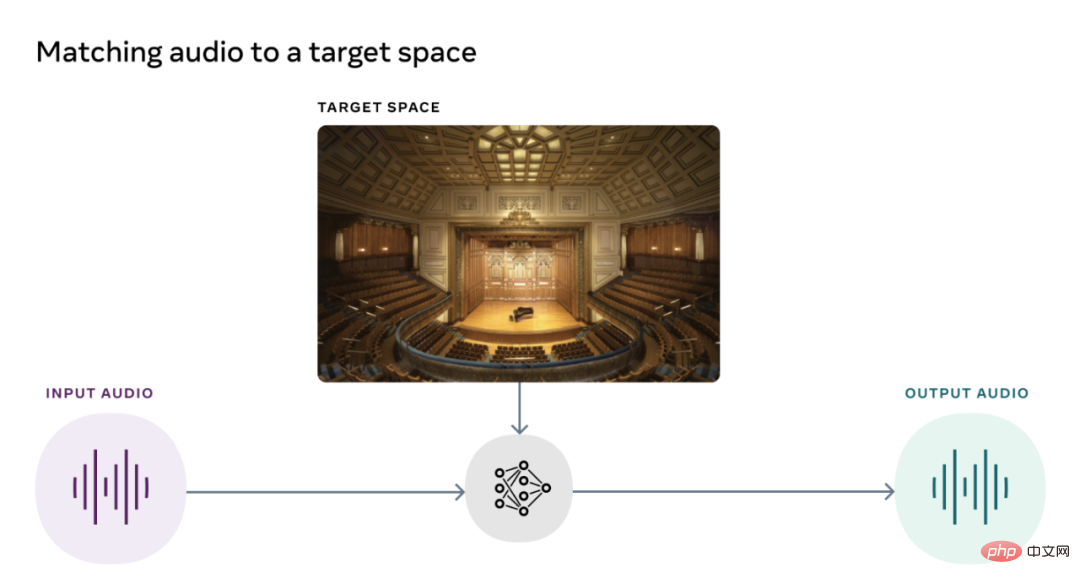

Visual Acoustic Matching Model (AViTAR)

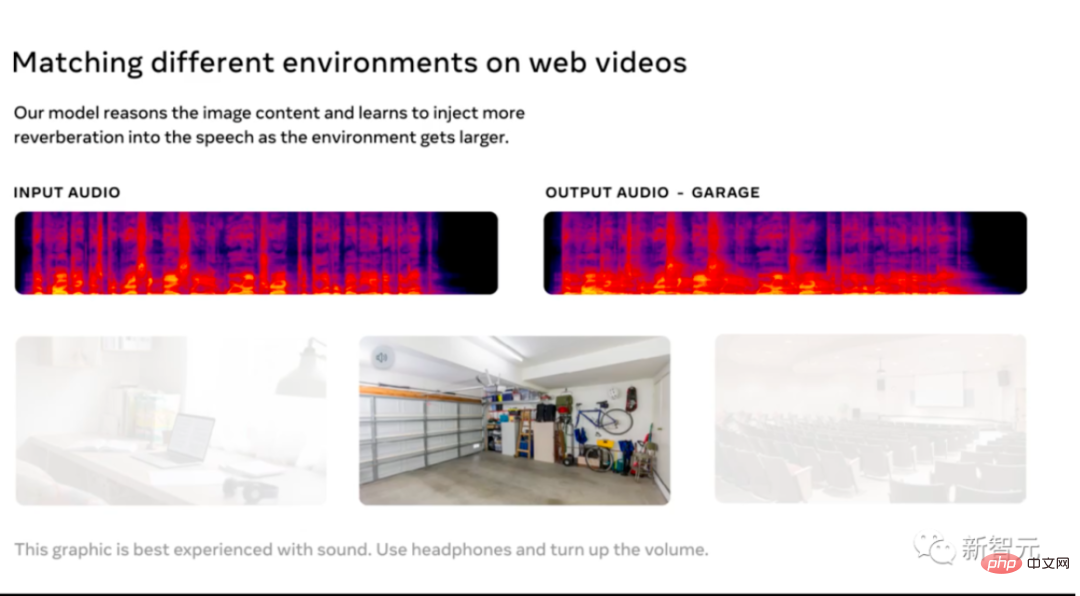

Meta's Reality Labs team collaborated with the University of Texas to build an AI model that improves sound quality in the Metaverse. The model matches audio to the video in a scene: it transforms an audio clip so that it sounds as if it were recorded in a specific environment. It is trained with self-supervised learning on data extracted from random online videos. Ideally, users should be able to view their favorite memories on their AR glasses and hear the exact sounds of the original experience.
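To make "sounding as if it were recorded in a specific environment" concrete, here is a minimal sketch of the classical signal-processing analogue: convolving a dry recording with a room impulse response. This is not AViTAR's method (the article describes a learned model that infers the target acoustics from video). The function names and the synthetic impulse response below are assumptions made purely for illustration.

```python
import math
import random

def synthetic_impulse_response(length, decay, seed=0):
    """Hypothetical room response: direct sound plus exponentially decaying noise."""
    rng = random.Random(seed)
    ir = [1.0]  # the direct path arrives first, at full strength
    for n in range(1, length):
        ir.append(rng.uniform(-1.0, 1.0) * math.exp(-decay * n))
    return ir

def convolve(signal, kernel):
    """Direct-form linear convolution; output has len(signal) + len(kernel) - 1 samples."""
    out = [0.0] * (len(signal) + len(kernel) - 1)
    for i, s in enumerate(signal):
        for j, k in enumerate(kernel):
            out[i + j] += s * k
    return out

# A short "dry" click, as if recorded with no room reflections (illustrative values).
dry = [1.0, 0.5, 0.0, 0.0]

# Fast decay imitates a small, damped room; slow decay imitates a large hall.
small_room = synthetic_impulse_response(length=8, decay=1.5)
large_hall = synthetic_impulse_response(length=32, decay=0.1)

wet_small = convolve(dry, small_room)
wet_hall = convolve(dry, large_hall)
```

The same dry clip produces a short tail in the small room and a long one in the hall. A learned model such as the one described above would have to infer the equivalent of that room response from the image rather than being handed it.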

Meta AI has released AViTAR as open source, along with two other acoustic models. This is notable, since sound is an often overlooked part of the Metaverse experience.

Visually-Informed Dereverberation of Audio (VIDA)

The second acoustic model released by Meta AI is used to remove reverberation in acoustics.

The model is trained on a large-scale dataset of realistic audio renderings from 3D models of homes. Reverb reduces audio quality, making speech harder to understand, and it also lowers the accuracy of automatic speech recognition, so removing it helps on both counts.

VIDA is unique in that it uses visual cues in addition to the observed audio. Improving on typical audio-only approaches, VIDA can enhance speech and help identify voices and speakers.

Visual Voice (VisualVoice)

VisualVoice, the third acoustic model released by Meta AI, can extract speech from videos. Like VIDA, VisualVoice is trained on audio-visual cues from unlabeled videos, and it learns to separate speech automatically.

The model has important applications, such as assistive technology for the hearing-impaired, enhancing sound on wearable AR devices, and transcribing speech from online videos recorded in noisy environments.

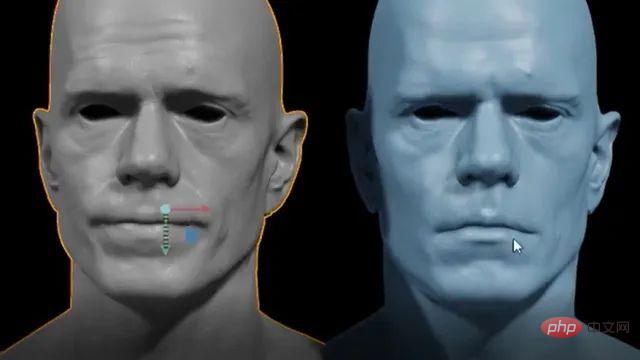

Audio2Face

Last year, NVIDIA released an open beta of Omniverse Audio2Face, which generates AI-driven facial animation to match any voiceover. The tool simplifies the long and tedious process of animating characters for games and visual effects. The app also allows users to issue commands in multiple languages.

At the beginning of this year, NVIDIA released an update to the tool, adding features such as BlendShape Generation, which helps users create a set of blendshapes from a neutral avatar. A streaming audio player has also been added, allowing audio data to be streamed from text-to-speech applications. Audio2Face sets up a 3D character model that can be animated with audio tracks. The audio is then fed into a deep neural network. Users can also edit characters in post-processing to change their performance.
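For readers unfamiliar with blendshapes, the sketch below shows the standard linear blendshape formula: each vertex of the animated face is the neutral position plus a weighted sum of per-shape offsets. It is a generic illustration, not Audio2Face's API. The function name, shape names, and vertex values are all hypothetical, and it is only an assumption that a tool like this would supply the per-frame weights from its neural network.

```python
def blend(neutral, targets, weights):
    """Return neutral + sum_i w_i * (target_i - neutral), computed per vertex."""
    result = [list(v) for v in neutral]
    for name, weight in weights.items():
        target = targets[name]
        for vi, (nv, tv) in enumerate(zip(neutral, target)):
            for axis in range(3):
                result[vi][axis] += weight * (tv[axis] - nv[axis])
    return result

# Toy "mouth" made of two vertices (x, y, z); all values are illustrative.
neutral = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
targets = {
    "jaw_open": [(0.0, -1.0, 0.0), (1.0, -1.0, 0.0)],
    "smile": [(-0.5, 0.5, 0.0), (1.5, 0.5, 0.0)],
}

# One animation frame: half-open jaw with a slight smile.
# In an audio-driven pipeline these weights would change every frame (assumed).
frame = blend(neutral, targets, {"jaw_open": 0.5, "smile": 0.25})
print(frame)  # [[-0.125, -0.375, 0.0], [1.125, -0.375, 0.0]]
```

Because the blend is linear, an animator can still adjust individual weights after the fact, which fits the post-processing edits mentioned above.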
