It has been claimed that about 90% of what the human brain does is self-supervised learning: organisms constantly make predictions about what will happen next. Self-supervised learning means that learning can proceed without external intervention. Only in a few cases do we receive external feedback, such as a teacher saying, "You made a mistake." Some scholars have now found that the self-supervised learning mechanisms of large language models closely resemble those of our brains. Quanta Magazine, a well-known popular science outlet, recently reported that a growing number of studies find that self-supervised learning models, especially large language models, learn in ways very similar to our brains.

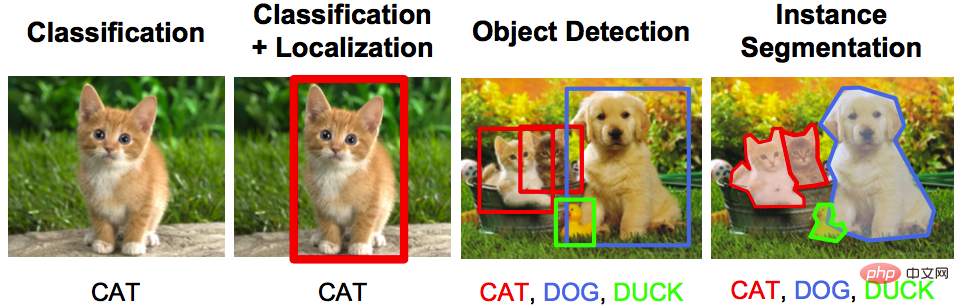

In the past, common AI systems were trained on large amounts of labeled data. For example, images might be labeled "tabby cat" or "tiger cat" to train an artificial neural network to correctly distinguish a tabby from a tiger.

This kind of "supervised" training requires laborious manual data labeling, and neural networks often take shortcuts, learning to associate labels with minimal and sometimes superficial information. For example, a neural network might use the presence of grass to identify photos of cows, since cows are often photographed in fields. Alexei Efros, a computer scientist at the University of California, Berkeley, said the algorithms being trained are like undergraduates who haven't been to class for an entire semester: although they didn't study the material systematically, they did well on the exam.

Furthermore, for researchers interested in the intersection of animal intelligence and machine intelligence, this kind of "supervised learning" may be limited in what it can reveal about biological brains. Many animals, including humans, do not learn from labeled data sets. For the most part, they explore their environment on their own, and in doing so they gain a rich and deep understanding of the world.

Now some computational neuroscientists have begun exploring neural networks trained with little or no human-labeled data. Recent findings suggest that computational models of animal visual and auditory systems built with self-supervised learning approximate brain function more closely than their supervised counterparts.

To some neuroscientists, artificial neural networks seem to be beginning to reveal some of the ways the brain actually learns.

## Flawed Supervision

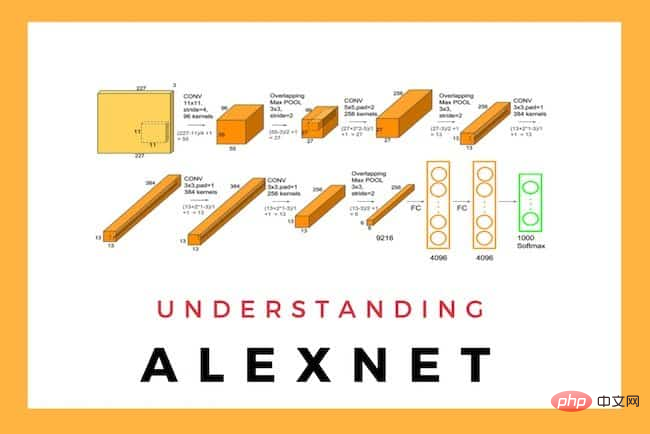

About 10 years ago, brain models inspired by artificial neural networks began to emerge, at about the same time that a neural network called AlexNet revolutionized the task of classifying unknown images.

This result was published in the paper "ImageNet Classification with Deep Convolutional Neural Networks" by Alex Krizhevsky, Ilya Sutskever, and Geoffrey E. Hinton.

Paper address: https://dl.acm.org/doi/10.1145/3065386

Like all neural networks, this network consists of multiple layers of artificial neurons, with connections between neurons that differ in weight.

If the neural network fails to classify an image correctly, the learning algorithm updates the weights of the connections between neurons to make that misclassification less likely in the next round of training. The algorithm repeats this process many times, adjusting the weights, until the network's error rate is acceptably low. Later, neuroscientists used AlexNet to develop the first computational model of the primate visual system.
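The update rule described above can be sketched with a single artificial neuron. This is a minimal illustration, not AlexNet: it assumes a classic perceptron rule, and the two-number "images", the `LABELED` data, and the function names are all hypothetical.

```python
def predict(weights, bias, features):
    """Return 1 ("tiger") if the weighted sum is positive, else 0 ("tabby")."""
    activation = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if activation > 0 else 0


def train_perceptron(examples, epochs=50, lr=0.1):
    """Adjust weights only when a labeled example is misclassified."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for features, label in examples:
            error = label - predict(weights, bias, features)
            if error != 0:
                mistakes += 1
                # Nudge each weight so this example is less likely to be
                # misclassified in the next round.
                weights = [w + lr * error * x for w, x in zip(weights, features)]
                bias += lr * error
        if mistakes == 0:  # error rate is acceptably low: stop training
            break
    return weights, bias


# Hypothetical human-labeled data: [stripe_score, size_score] -> 0 tabby, 1 tiger
LABELED = [([0.2, 0.1], 0), ([0.3, 0.2], 0), ([0.8, 0.9], 1), ([0.9, 0.8], 1)]
weights, bias = train_perceptron(LABELED)
```

Every update depends on a label that a person supplied, which is the cost that self-supervised methods try to avoid.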

When monkeys and artificial neural networks were shown the same images, the activity of the real neurons and the artificial neurons showed similar responses. Similar results were obtained with artificial models of hearing and odor detection. But as the field developed, researchers came to realize the limitations of supervised training. In 2017, computer scientist Leon Gatys of the University of Tübingen in Germany and his colleagues took a photo of a Ford Model T and overlaid it with a leopard skin pattern.

An artificial neural network correctly classified the original image as a Model T but classified the modified image as a leopard. The reason is that it focused only on the image texture and had no understanding of the shape of a car (or a leopard).

Self-supervised learning models are designed to avoid such problems. As Friedemann Zenke, a computational neuroscientist at the Friedrich Miescher Institute for Biomedical Research in Basel, Switzerland, explained, humans do not label the data in this approach; instead, the labels come from the data itself. Self-supervised algorithms essentially create gaps in the data and ask the neural network to fill them.

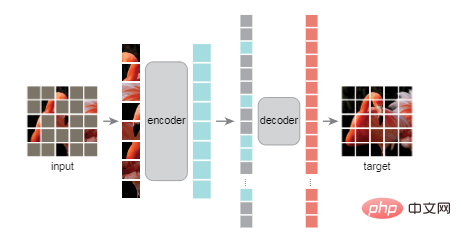

For example, in a so-called large language model, the training algorithm shows a neural network the first few words of a sentence and asks it to predict the next word. When trained on large amounts of text collected from the Internet, the model appears to learn the syntactic structure of the language, demonstrating impressive linguistic ability, all without external labels or supervision.

Similar efforts are underway in computer vision. At the end of 2021, Kaiming He and his colleagues presented their well-known masked autoencoder (MAE) work.
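The next-word objective can be illustrated without any neural network. The sketch below is a deliberately tiny stand-in for a language model: it assumes a simple count-based predictor, and `CORPUS` and the function names are made up for illustration. What it shares with a large language model is only that the training signal comes from the text itself: each word serves as the "label" for the word before it.

```python
from collections import Counter, defaultdict


def build_next_word_model(text):
    """Count which word follows which; the text supplies its own labels."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical miniature corpus; a real model trains on Internet-scale text.
CORPUS = "the cat sat on the mat . the cat ate the fish . the cat slept ."
model = build_next_word_model(CORPUS)
```

Calling `predict_next(model, "the")` returns the follower seen most often in the corpus. A neural language model replaces the count table with learned weights, but it is trained against the same kind of self-generated target.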

Paper address: https://arxiv.org/abs/2111.06377

The algorithm randomly masks a large part of each image. MAE converts the unmasked portion into a latent representation: a compressed mathematical description that contains important information about the object. In the case of images, the latent representation might be a mathematical description that includes the shapes of the objects in the image. The decoder then converts these representations back into full images.
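The masking step can be sketched as follows. This is a toy under stated assumptions, not the MAE implementation: the 75% mask ratio is the figure used in the MAE paper, each "patch" is reduced to a single number, and the function names are hypothetical. The encoder and decoder themselves are omitted.

```python
import random


def split_patches(num_patches, mask_ratio=0.75, seed=0):
    """Randomly choose which patch indices stay visible and which are hidden."""
    rng = random.Random(seed)
    indices = list(range(num_patches))
    rng.shuffle(indices)
    num_masked = int(num_patches * mask_ratio)
    masked = sorted(indices[:num_masked])
    visible = sorted(indices[num_masked:])
    return visible, masked


def masked_reconstruction_error(original, reconstructed, masked):
    """Mean squared error over the hidden patches only.

    The hidden patches act as the labels, so no human annotation is needed.
    """
    return sum((original[i] - reconstructed[i]) ** 2 for i in masked) / len(masked)


# A hypothetical 4x4 grid of patches, each summarized by one number.
patches = [float(i) for i in range(16)]
visible, masked = split_patches(num_patches=len(patches))
```

Only the `visible` patches would be passed to the encoder; the decoder's output is then scored against the `masked` ones.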

In systems like this, some neuroscientists see echoes of how our own brains learn. "I think there's no question that 90 percent of what the brain does is self-supervised learning," says Blake Richards, a computational neuroscientist at McGill University and Mila, the Quebec Artificial Intelligence Institute. Biological brains are thought to be constantly predicting, for example, the future position of an object as it moves or the next word in a sentence, much as a self-supervised learning algorithm tries to fill the gaps in an image or a piece of text.

Computational neuroscientist Blake Richards helped create an AI system that mimics the visual networks in living brains.

Richards and his team created a self-supervised model that hints at why the visual system has specialized pathways. They trained an artificial intelligence that combined two different neural networks.

The first, based on the ResNet architecture, is designed for processing images; the second, a recurrent network, can keep track of a sequence of previous inputs and predict the next expected input. To train the combined AI, the team started with a sequence of, say, 10 video frames and let the ResNet process them one by one.

The recurrent network then predicted the latent representation of the 11th frame, rather than simply matching the previous 10 frames. The self-supervised learning algorithm compared the prediction with the actual value and instructed the neural networks to update their weights to make better predictions.
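The predict, compare, and update cycle can be sketched in a few lines. This is a heavy simplification and not the team's model: it assumes each frame's latent representation is a single number, and it replaces both the ResNet and the recurrent network with one linear predictor. `SEQUENCES` and the function names are hypothetical.

```python
def mean_squared_error(weights, sequences, context=10):
    """Average squared gap between predicted and actual 11th latent."""
    total = 0.0
    for seq in sequences:
        predicted = sum(w * z for w, z in zip(weights, seq[:context]))
        total += (predicted - seq[context]) ** 2
    return total / len(sequences)


def train_next_latent_predictor(sequences, context=10, lr=0.01, epochs=200):
    """Predict latent 11 from latents 1-10, then update weights from the error."""
    weights = [0.0] * context
    for _ in range(epochs):
        for seq in sequences:
            past, target = seq[:context], seq[context]
            predicted = sum(w * z for w, z in zip(weights, past))
            error = predicted - target  # compare prediction with actual value
            # Gradient step on the squared error: the target comes from the
            # video itself, so no human label is involved.
            weights = [w - lr * error * z for w, z in zip(weights, past)]
    return weights


# Hypothetical latents for three 11-frame clips of steadily moving objects.
SEQUENCES = [[step * t for t in range(11)] for step in (0.02, 0.05, 0.08)]
weights = train_next_latent_predictor(SEQUENCES)
```

Comparing `mean_squared_error` before and after training shows the prediction error shrinking, which is the only feedback the system receives.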

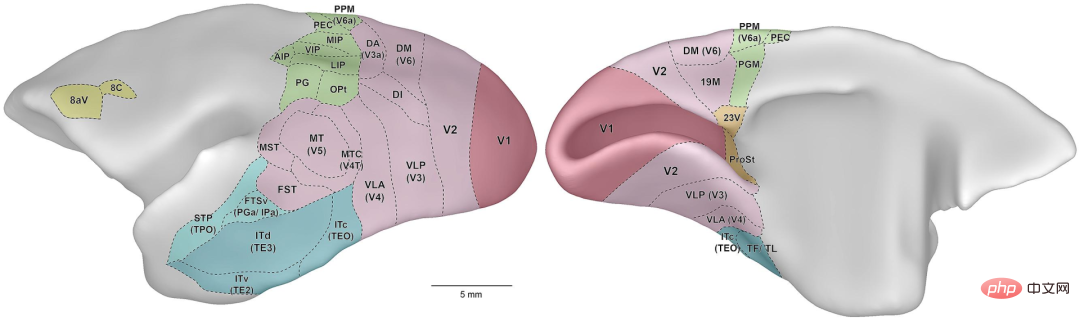

To test it further, the researchers showed the AI a set of videos that researchers at the Allen Institute for Brain Science in Seattle had previously shown to mice. Like primates, mice have brain regions specialized for static images and for movement. The Allen researchers recorded neural activity in the visual cortex of the mice while the animals watched the videos.

Richards' team found similarities in the way the AI and the living brains responded to the videos. During training, one pathway in the artificial neural network became more similar to the ventral, object-detecting areas of the mouse brain, while another pathway became similar to the dorsal areas focused on movement.

These results suggest that our visual system has two specialized pathways because they help predict the visual future; a single pathway is not good enough.

Models of the human auditory system tell a similar story. In June, a team led by Jean-Rémi King, a research scientist at Meta AI, trained an artificial intelligence called Wav2Vec 2.0, which uses a neural network to convert audio into latent representations. The researchers masked some of these representations and then fed them into another component of the neural network called a transformer.

During training, the transformer predicts the masked information. In the process, the AI as a whole learns to turn sounds into latent representations, again without the need for labels. The team used approximately 600 hours of speech data to train the network. "That's about what a kid gets in the first two years of experience," King said.

Meta AI's Jean-Rémi King helped train an artificial intelligence that processes audio in a way that mimics the brain, in part by predicting what should happen next.

Once the system was trained, the researchers played it sections of audiobooks in English, French, and Mandarin. They then compared the AI's performance with data from 412 people, a mix of native speakers of the three languages, who listened to the same stretches of audio while fMRI scans imaged their brains.

The results show that, although the fMRI images are noisy and low in resolution, the AI neural network and the human brain "not only correlate with each other, but correlate in a systematic way." Activity in the early layers of the AI aligns with activity in the primary auditory cortex, while activity in the AI's deepest layers aligns with activity in higher brain regions such as the prefrontal cortex.

"This is beautiful data, not conclusive, but compelling evidence of how we learn language. A lot of it is predicting what will be said next."

## Some disagree: simulating the brain? The models and algorithms are still far behind

Of course, not everyone agrees. Josh McDermott, a computational neuroscientist at MIT, has used both supervised and self-supervised learning to study models of vision and hearing. His lab engineered synthetic audio and visual signals that, to humans, are just inscrutable noise.

To artificial neural networks, however, these signals appear indistinguishable from real language and images. This shows that the representations formed deep in neural networks, even with self-supervised learning, are not the same as those in our brains.

McDermott said: "These self-supervised learning methods are an improvement because you can learn representations that can support a lot of recognition behaviors without needing all the labels. But they still have many of the characteristics of supervised models."

The algorithms themselves also need more improvement. For example, in Meta AI's Wav2Vec 2.0 model, the AI predicts latent representations for only a few tens of milliseconds of sound, which is less time than it takes a person to utter a perceptually distinct sound, let alone a word.

To truly make AI models similar to the human brain, we still have a lot to do, King said. If the similarities between the brain and self-supervised learning models discovered so far hold true in other sensory tasks, it would be an even stronger indication that whatever miraculous abilities our brains have, they require some form of self-supervised learning.
