Today's prompt engineering is too much like divination, and communicating with art AI is like a word game


The image produced by the AI art tool Midjourney from the prompt "Pac-Man game interface, Pac-Man, ghost, ink, blink, Clyde, Pac-Maze, Pac-Man, Mondrian style, modern art": modernism in full bloom.

Isn't the phrase "prompt engineering" interesting?

It describes what happens when you type a text prompt into an AI drawing tool such as DALL-E or Midjourney to have it generate a picture, or ask Copilot, an AI tool that automatically generates code, to write some software. The result they return can even be called a work of art.

We can call this process "engineering", which sounds precise and logical. But if you go to the Discord platform and look at the prompts people type into the Midjourney app, you'll see something like this:

galaxy arising from a brain, 8k, octane render, micro detailed --upbeta --test --creative

my teeth are yellow, hello world :: would you like me a little better if they were white like yours --s 5000 --q 2 --upbeta --v 3

hg giger lovecraft nightmarish realm where monsters eternally reign terror

chaos corrupted the once valor knight, transforming them into a powerful villian. Horns bursted from their heads, wing and tails grew from their sides, fingers and toes grew into claws. this is what does the void does. this is how life loses….

In principle, there must be a correct way to write prompts. In reality, writing prompts often feels as if there is no method to follow. It is like casting a magic spell: put one word of the incantation in the wrong place and it is easy to mess things up.

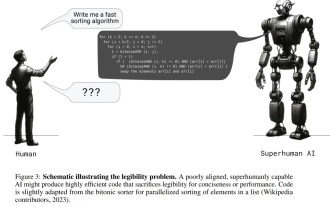

To put it humorously, writing prompts is like a human trying to coax "an eager and confused pack animal" into doing work. We think it understands what we are saying, but the way it communicates is by yelling and running around.

What causes this phenomenon?

It can be said that this is a very strange moment in the history of artificial intelligence. For decades, artificial intelligence has advanced in the "shadow" of the Turing test (not always, but often), which holds that "intelligent" AI behaves and communicates in exactly the same way as intelligent humans.

According to Turing's ideas, for example, if an artificial life form can discuss current events, then it can be considered intelligent. In recent years, we've expanded this expectation of clear, precise, natural language into everyday devices: talking to Apple Siri and Amazon Alexa, asking about the weather or setting a timer.

But the "dialogue" we have with an AI that produces works of art is completely different. We are trying to get it to create something. This means that if the AI makes a mistake, the consequences are much more severe. No one cares if an online chatbot suddenly goes off track while chatting. Nor is it a big deal if the chatbot cannot talk about a live NBA game.

But what if we have a specific creative need that AI can satisfy? What if we want it to write a blog post with a specific content and style? We certainly need to make sure we can communicate with it correctly.

This means we have to start thinking about what the AI is thinking, or rather, how it thinks. We must further develop what psychologists call a "theory of mind" for machines. Sounds like fantasy, right? As OpenAI co-founder Andrej Karpathy told me when talking about Copilot: "It's not something you're used to seeing. It's not like human theory of mind. It's like an alien artifact that emerged from a massive optimization process."

The author is not saying that these artificial intelligences are actually conscious, intelligent or anything else. They are just very sophisticated pattern recognizers and sequence completers, internally more like a chaotic ocean of mathematics.

But, because we give them commands with words, this puts us in a strange psychological relationship - trying to figure out what's going on inside.

The author is reminded of how the ancient Greeks interacted with the Oracle of Delphi, which was believed to have knowledge of the past, present and future. Its answers could be strange, because consulting it was essentially like talking to something foreign to us: who knows what results you will get?

Communicating with artistic AI is like a word game

Scientists studying the inner workings of art AIs have documented some of the strange internal states of these machines. Recently, two researchers at the University of Texas at Austin discovered that DALL-E 2 generated apparently garbled phrases that seem to have a consistent meaning within the model itself.

They noticed that the model generated the phrase "Apoploe vesrreitais," and when they fed it back to DALL-E 2 as a prompt, it drew birds. Similarly, given "Contarra ccetnxniams luryca tanniounons," it draws insects or pests, and given "Wa ch zod ahakes rea," it produces pictures of seafood.

Why is this? How did the model come up with this strange internal language? Scientists do not yet know for certain, although it appears to be an adversarial artifact of DALL-E 2's text encoder.

Similarly, prompt-writing experts say that repeating phrases is a useful technique, as Michael Taylor writes in "Prompt Engineering: From Words to Art."

Link: https://www.saxifrage.xyz/post/prompt-engineering

DALL-E 2, Midjourney and other AI art tools need to be told which features really matter when generating an image, and simple repetition works surprisingly well here. Take this prompt as an example: "homer simpson, from the simpsons, eating a donut, homer simpson, homer simpson, homer simpson"
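The repetition trick is easy to express in code. The following is only a minimal sketch in Python: the helper name `emphasize` and its `repeats` parameter are hypothetical, invented here for illustration, and do not belong to any AI art tool. It simply assembles a comma-separated prompt string in the same shape as the Homer Simpson example.

```python
def emphasize(subject: str, details: list[str], repeats: int = 3) -> str:
    """Build a comma-separated prompt: subject, details, then the subject repeated.

    Hypothetical helper for illustration only; whether repetition actually
    helps depends on the image model that receives the prompt.
    """
    parts = [subject, *details, *([subject] * repeats)]
    return ", ".join(parts)


# Rebuild the example prompt from the text.
prompt = emphasize("homer simpson", ["from the simpsons", "eating a donut"])
print(prompt)
```

The resulting string would then be pasted into the tool as an ordinary prompt. How many repetitions are worth using is something you would have to find by trial and error.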

It feels as if we need to hypnotize the AI into focusing on the topics we care about. You can also see this in the large number of descriptive words that prompt writers typically use. Take a look at the image Xe Iaso generated with Stable Diffusion:

I have to say, the picture is rather poetic. Communicating with an art AI feels like a word game. As in Charades or Taboo, you have to coax the AI toward the right result by talking around the topic. Beyond that, the goal is to find the right incantation to awaken the spirits that inhabit the altar and summon them to do your bidding. As Xe said, "I'm not sure why people call it prompt 'engineering'. I personally prefer to call it 'divination'."

Perhaps we need to be rigorous and clear about what prompt-driven generative models are. Because such a model requires us to communicate in a completely insane way, it is unlikely to meet the requirements of the Turing test and is not intellectually "like" us. The author firmly believes that one day art AI will be like us! But for now, these models are really, really weird.
