Solve unstructured data problems with machine learning

Translator | Bugatti

Reviewer | Sun Shujuan

The data revolution is in full swing. The total amount of digital data created in the next five years will be double the amount of data generated to date, and unstructured data will define this new era of digital experiences.

Unstructured data is information that does not follow conventional data models or fit structured database formats, and it accounts for more than 80% of all new enterprise data. To prepare for this shift, many companies are looking for innovative ways to manage, analyze and make the most of all that data with a variety of tools, including business analytics and artificial intelligence. But decision-makers also face an old problem: how do you maintain and improve the quality of large, unwieldy data sets?

Machine learning is the solution. Advances in machine learning now enable organizations to process unstructured data efficiently and improve their quality assurance efforts. With the data revolution under way, where does your company stand? Is it struggling with a trove of valuable but unmanageable data sets, or using data to drive the business forward?

Unstructured data requires more than just copy-pasting

The value of accurate, timely, and consistent data to modern businesses is indisputable, and it is as important as cloud computing and digital applications. Still, poor data quality costs companies an average of $13 million per year.

To tackle data problems, teams traditionally use statistical methods to measure the shape of the data, which lets them track changes, weed out outliers, and catch data drift. Controls based on statistical methods remain valuable for judging data quality and for deciding how and when a data set should be used before critical decisions are made. Effective as it is, this statistical approach is generally reserved for structured data sets, which lend themselves to objective, quantitative measurement.
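As a rough illustration of the statistical controls described above, the sketch below flags outliers by z-score and reports drift when a new batch's mean moves too far from a baseline. The function names, thresholds, and sample numbers are assumptions made for this example, not something the article prescribes.

```python
from statistics import mean, stdev


def find_outliers(values, z_threshold=2.5):
    """Return the values whose z-score exceeds the threshold."""
    mu = mean(values)
    sigma = stdev(values)
    if sigma == 0:
        return []  # all values identical, nothing stands out
    return [v for v in values if abs(v - mu) / sigma > z_threshold]


def mean_shift(baseline, batch):
    """Distance between batch mean and baseline mean, in baseline standard deviations."""
    sigma = stdev(baseline)
    if sigma == 0:
        return 0.0 if mean(batch) == mean(baseline) else float("inf")
    return abs(mean(batch) - mean(baseline)) / sigma


def has_drifted(baseline, batch, max_shift=2.0):
    """Report drift when the batch mean moves more than max_shift deviations."""
    return mean_shift(baseline, batch) > max_shift


# Illustrative numbers only (for example, daily order counts).
baseline = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101]

print(find_outliers(baseline + [250]))
print(has_drifted(baseline, [110, 112, 109, 111, 113]))
print(has_drifted(baseline, [99, 101, 100, 102, 98]))
```

Checks like these depend on each record reducing to a number, which is exactly what a photo, an audio clip, or an email thread does not do on its own.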

But what about data that doesn’t quite fit in Microsoft Excel or Google Sheets? This includes:

- Internet of Things: sensor data, stock data, and log data

- Multimedia: photos, audio, and video

- Rich media: geospatial data, satellite imagery, weather data, and surveillance data

- Documents: word processing documents, spreadsheets, presentations, email, and communication data

When these types of unstructured data come into play, incomplete or inaccurate information can easily enter the model. If errors go unnoticed, data problems accumulate and wreak havoc on everything from quarterly reporting to forecasting. Simply copying an approach built for structured data and pasting it onto unstructured data is not enough, and may actually leave the business worse off.

The old adage "garbage in, garbage out" applies very well to unstructured data sets. Maybe it's time to ditch your current approach to data.

Things to note when using machine learning to ensure data quality

When considering solutions for unstructured data, machine learning should be the first choice, because it can analyze massive data sets and quickly find patterns in messy data. With the right training, machine learning models can learn to interpret, organize, and classify any type of unstructured data.

For example, machine learning models can learn to recommend rules for data analysis, cleansing, and scaling, making work in industries such as healthcare and insurance more efficient and precise. Likewise, machine learning programs can identify and classify text data by topic or sentiment in unstructured data sources, such as those found on social media or in email records.
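To make the text-classification case concrete, here is a minimal sketch of a multinomial naive Bayes classifier that sorts short messages by topic. Naive Bayes is one common choice, not a method named in the article, and the class name, topic labels, and training sentences are all invented for illustration. A production system would need far more data and would more likely rely on an established library.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


class NaiveBayesTextClassifier:
    """Tiny multinomial naive Bayes with add-one (Laplace) smoothing."""

    def __init__(self):
        self.word_counts = defaultdict(Counter)  # label -> word frequencies
        self.doc_counts = Counter()              # label -> number of documents
        self.vocabulary = set()

    def fit(self, texts, labels):
        for text, label in zip(texts, labels):
            words = tokenize(text)
            self.word_counts[label].update(words)
            self.doc_counts[label] += 1
            self.vocabulary.update(words)
        return self

    def predict(self, text):
        words = tokenize(text)
        total_docs = sum(self.doc_counts.values())
        vocab_size = len(self.vocabulary)
        best_label, best_score = None, -math.inf
        for label, n_docs in self.doc_counts.items():
            total_words = sum(self.word_counts[label].values())
            # Log prior plus the sum of smoothed log likelihoods.
            score = math.log(n_docs / total_docs)
            for word in words:
                count = self.word_counts[label][word]
                score += math.log((count + 1) / (total_words + vocab_size))
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Hypothetical support messages with hand-assigned topics.
train_texts = [
    "my invoice shows a double charge on my card",
    "please refund the payment for last month",
    "the app crashes when I open the settings page",
    "login fails with an error after the update",
]
train_labels = ["billing", "billing", "technical", "technical"]

model = NaiveBayesTextClassifier().fit(train_texts, train_labels)
print(model.predict("I was charged twice, please refund my card"))
print(model.predict("the app shows an error when I open it"))
```

The same pattern extends to sentiment: only the labels change, not the mechanics.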

As you improve your data quality efforts through machine learning, keep a few key considerations in mind:

- Automate: Manual data operations such as data decoupling and correction are tedious and time-consuming. They are also increasingly obsolete given today's automation capabilities, which can take over routine day-to-day operations and free data teams to focus on more important, more productive work. When you build automation into your data pipeline, make sure that standardized operating procedures and governance models are in place to keep the processes around any automated activity streamlined and predictable.

- Don’t overlook human oversight: Data, whether structured or unstructured, is complex enough that it will always require a level of expertise and context that only humans can provide. Machine learning and other digital solutions will help data teams, but don’t rely on technology alone. Instead, empower teams to leverage technology while maintaining regular oversight of individual data processes. This balance makes it possible to correct data errors that no existing technical measure can handle, and the model can later be retrained on those corrections.

- Detect root cause: When an anomaly or other data error occurs, it is often not an isolated event. If you ignore deeper issues in how data is collected and analyzed, your organization risks pervasive quality problems throughout its data pipeline. Even the best machine learning initiatives cannot fix errors generated upstream, so here again selective human intervention can shore up the overall data flow and prevent significant errors.

- Don’t make assumptions about quality: To analyze data quality over the long term, find ways to qualitatively measure unstructured data rather than making assumptions about the shape of the data. You can create and test "what-if" scenarios to develop your own measurement methods, expected outputs, and parameters. Running experiments with your data provides a deterministic way to calculate data quality and performance, and lets you automate the measurement of data quality itself. This step ensures that quality control is always in place and serves as an essential feature of the data ingestion pipeline, rather than an afterthought.
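One way to read the "what-if" advice above is as fault injection: deliberately corrupt known-good records and measure how often a quality check catches each kind of error. The sketch below assumes a hypothetical record shape (a transcript with `text` and `duration_s` fields) and a hypothetical `is_valid` check. Neither comes from the article.

```python
import random
from collections import Counter


def is_valid(record):
    """Hypothetical quality check: non-blank text and a plausible duration."""
    text = record.get("text")
    duration = record.get("duration_s")
    return (
        bool(text and text.strip())
        and isinstance(duration, (int, float))
        and 0 < duration <= 3600
    )


FAULTS = ["empty_text", "negative_duration", "missing_duration", "truncated_text"]


def corrupt(record, rng):
    """Apply one randomly chosen synthetic error to a copy of the record."""
    broken = dict(record)
    fault = rng.choice(FAULTS)
    if fault == "empty_text":
        broken["text"] = "   "
    elif fault == "negative_duration":
        broken["duration_s"] = -1
    elif fault == "missing_duration":
        del broken["duration_s"]
    else:  # truncated_text
        broken["text"] = broken["text"][:3]
    return broken, fault


def run_what_if(clean_records, check, trials=200, seed=0):
    """Inject faults and return the share of each fault type the check caught."""
    rng = random.Random(seed)  # seeded so the experiment is repeatable
    injected, caught = Counter(), Counter()
    for _ in range(trials):
        broken, fault = corrupt(rng.choice(clean_records), rng)
        injected[fault] += 1
        if not check(broken):
            caught[fault] += 1
    return {fault: caught[fault] / injected[fault] for fault in injected}


clean_records = [
    {"text": "Hello, thanks for calling support.", "duration_s": 42.0},
    {"text": "I would like to update my address.", "duration_s": 95.5},
]

for fault, rate in sorted(run_what_if(clean_records, is_valid).items()):
    print(f"{fault}: {rate:.0%} caught")
```

In this sketch the check never catches truncated text, which is the point of the exercise: the experiment exposes a gap that an assumption about data shape would have hidden.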

Unstructured data is a treasure trove of new opportunities and insights. However, only 18% of organizations currently leverage their unstructured data, and data quality is one of the main factors holding back more businesses.

As unstructured data becomes more prevalent and more relevant to daily business decisions and operations, machine learning-based quality control provides much-needed assurance that your data is relevant, accurate, and useful. Once you are no longer held back by data quality, you can focus on using data to move your company forward.

Think of the opportunities that arise when you take control of your data or, better yet, let machine learning do the work for you.

Original title: Solve the problem of unstructured data with machine learning, Author: Edgar Honing

The above is the detailed content of Solve unstructured data problems with machine learning. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1381

1381

52

52

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

In the fields of machine learning and data science, model interpretability has always been a focus of researchers and practitioners. With the widespread application of complex models such as deep learning and ensemble methods, understanding the model's decision-making process has become particularly important. Explainable AI|XAI helps build trust and confidence in machine learning models by increasing the transparency of the model. Improving model transparency can be achieved through methods such as the widespread use of multiple complex models, as well as the decision-making processes used to explain the models. These methods include feature importance analysis, model prediction interval estimation, local interpretability algorithms, etc. Feature importance analysis can explain the decision-making process of a model by evaluating the degree of influence of the model on the input features. Model prediction interval estimate

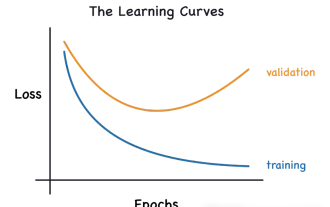

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

This article will introduce how to effectively identify overfitting and underfitting in machine learning models through learning curves. Underfitting and overfitting 1. Overfitting If a model is overtrained on the data so that it learns noise from it, then the model is said to be overfitting. An overfitted model learns every example so perfectly that it will misclassify an unseen/new example. For an overfitted model, we will get a perfect/near-perfect training set score and a terrible validation set/test score. Slightly modified: "Cause of overfitting: Use a complex model to solve a simple problem and extract noise from the data. Because a small data set as a training set may not represent the correct representation of all data." 2. Underfitting Heru

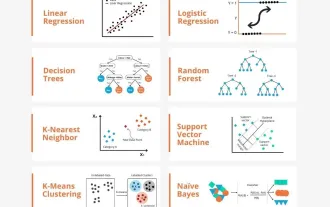

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

In layman’s terms, a machine learning model is a mathematical function that maps input data to a predicted output. More specifically, a machine learning model is a mathematical function that adjusts model parameters by learning from training data to minimize the error between the predicted output and the true label. There are many models in machine learning, such as logistic regression models, decision tree models, support vector machine models, etc. Each model has its applicable data types and problem types. At the same time, there are many commonalities between different models, or there is a hidden path for model evolution. Taking the connectionist perceptron as an example, by increasing the number of hidden layers of the perceptron, we can transform it into a deep neural network. If a kernel function is added to the perceptron, it can be converted into an SVM. this one

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

In the 1950s, artificial intelligence (AI) was born. That's when researchers discovered that machines could perform human-like tasks, such as thinking. Later, in the 1960s, the U.S. Department of Defense funded artificial intelligence and established laboratories for further development. Researchers are finding applications for artificial intelligence in many areas, such as space exploration and survival in extreme environments. Space exploration is the study of the universe, which covers the entire universe beyond the earth. Space is classified as an extreme environment because its conditions are different from those on Earth. To survive in space, many factors must be considered and precautions must be taken. Scientists and researchers believe that exploring space and understanding the current state of everything can help understand how the universe works and prepare for potential environmental crises

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Common challenges faced by machine learning algorithms in C++ include memory management, multi-threading, performance optimization, and maintainability. Solutions include using smart pointers, modern threading libraries, SIMD instructions and third-party libraries, as well as following coding style guidelines and using automation tools. Practical cases show how to use the Eigen library to implement linear regression algorithms, effectively manage memory and use high-performance matrix operations.

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Translator | Reviewed by Li Rui | Chonglou Artificial intelligence (AI) and machine learning (ML) models are becoming increasingly complex today, and the output produced by these models is a black box – unable to be explained to stakeholders. Explainable AI (XAI) aims to solve this problem by enabling stakeholders to understand how these models work, ensuring they understand how these models actually make decisions, and ensuring transparency in AI systems, Trust and accountability to address this issue. This article explores various explainable artificial intelligence (XAI) techniques to illustrate their underlying principles. Several reasons why explainable AI is crucial Trust and transparency: For AI systems to be widely accepted and trusted, users need to understand how decisions are made

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Machine learning is an important branch of artificial intelligence that gives computers the ability to learn from data and improve their capabilities without being explicitly programmed. Machine learning has a wide range of applications in various fields, from image recognition and natural language processing to recommendation systems and fraud detection, and it is changing the way we live. There are many different methods and theories in the field of machine learning, among which the five most influential methods are called the "Five Schools of Machine Learning". The five major schools are the symbolic school, the connectionist school, the evolutionary school, the Bayesian school and the analogy school. 1. Symbolism, also known as symbolism, emphasizes the use of symbols for logical reasoning and expression of knowledge. This school of thought believes that learning is a process of reverse deduction, through existing

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

Editor |ScienceAI Question Answering (QA) data set plays a vital role in promoting natural language processing (NLP) research. High-quality QA data sets can not only be used to fine-tune models, but also effectively evaluate the capabilities of large language models (LLM), especially the ability to understand and reason about scientific knowledge. Although there are currently many scientific QA data sets covering medicine, chemistry, biology and other fields, these data sets still have some shortcomings. First, the data form is relatively simple, most of which are multiple-choice questions. They are easy to evaluate, but limit the model's answer selection range and cannot fully test the model's ability to answer scientific questions. In contrast, open-ended Q&A