Technology peripherals

Technology peripherals

AI

AI

Is Meta's open source ChatGPT replacement easy to use? The test results and modification methods have been released, 5.2k stars in 2 days

Is Meta's open source ChatGPT replacement easy to use? The test results and modification methods have been released, 5.2k stars in 2 days

Is Meta's open source ChatGPT replacement easy to use? The test results and modification methods have been released, 5.2k stars in 2 days

ChatGPT’s continued popularity has long made major technology companies restless.

Just in the past week, Meta "open sourced" a new large model series——LLaMA(Large Language Model Meta AI), the number of parameters ranges from 7 billion to 65 billion. Because LLaMA has fewer parameters but better performance than many previously released large models, many researchers were excited when it was released.

For example, the 13 billion parameter LLaMA model can outperform the 175 billion parameter GPT-3 "on most benchmarks" and can run on a single V100 GPU; The largest LLaMA model with 65 billion parameters is comparable to Google's Chinchilla-70B and PaLM-540B.

The reduction in the number of parameters is a good thing for ordinary researchers and commercial organizations, but does LLaMA really perform as well as the paper says? Compared with the current ChatGPT, can LLaMA barely compete? To answer these questions, some researchers have tested this model.

Some companies are already trying to make up for LLaMA’s shortcomings, and want to see if they can make LLaMA perform better by adding training methods such as RLHF.

LLaMA Preliminary Review

This review comes from a Medium author named @Enryu. It compares the performance of LLaMA and ChatGPT on three challenging tasks of joke interpretation, zero-shot classification, and code generation. The related blog post is "Mini-post: first look at LLaMA".

The author is running LLaMA 7B/13B version on RTX 3090/RTX 4090 and 33B version on a single A100.

It should be noted that unlike ChatGPT, other models are not based on instruction fine-tuning, so the structure of prompt is different.

Explaining a Joke

This is a use case shown in Google's original PaLM paper: given a joke, let Model to explain why it's funny. This mission requires a combination of world knowledge and some basic logic. All models before PaLM were unable to do this. The authors extracted some examples from the PaLM paper and compared the performance of LLaMA-7B, LLaMA-13B, LLaMA-33B with ChatGPT.

#As you can see, the results are terrible. These models get some laughs but don’t really understand, they just randomly generate a stream of relevant text. Although ChatGPT performs as poorly as LLaMA-33B (several other models are even worse), it follows a different strategy: it generates a lot of text and hopes that at least some of its answers are correct (but most of them are obviously No), is it very similar to everyone’s strategy for answering questions during exams?

However, ChatGPT at least got the joke about Schmidthuber. But overall, the performance of these models on the zero-sample joke interpretation task is far from PaLM (unless the examples of PaLM are carefully selected).

Zero-sample classification

The second task considered by the author is more challenging - clickbait )Classification. Since even humans can't agree on what clickbait is, the authors provide some examples for these models in the prompt (so actually small samples rather than zero samples). The following is the prompt of LLaMa:

1 2 3 4 5 6 7 8 9 |

|

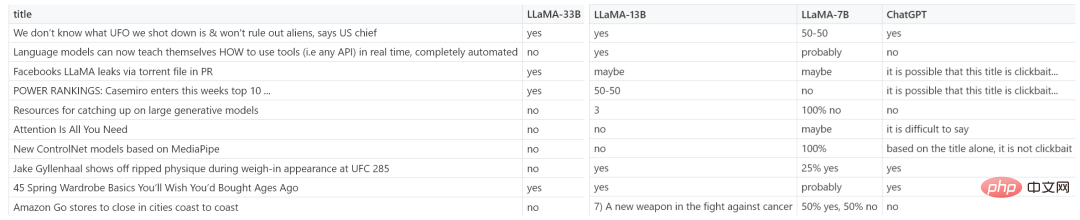

The picture below shows more example results of LLaMA-7B, LLaMA-13B, LLaMA-33B and ChatGPT.

很明显,赢家为 LLaMA-33B,它是唯一一个能够遵循所有请求格式(yes/no)的模型,并且预测合理。ChatGPT 也还可以,但有些预测不太合理,格式也有错误。较小的模型(7B/13B)不适用于该任务。

代码生成

虽然 LLM 擅长人文学科,但在 STEM 学科上表现糟糕。LLaMA 虽然有基准测试结果,但作者在代码生成领域尝试了一些特别的东西,即将人类语言零样本地转换为 SQL 查询。这并不是很实用,在现实生活中直接编写查询会更有效率。这里只作为代码生成任务的一个示例。

在 prompt 中,作者提供表模式(table schema)以及想要实现的目标,要求模型给出 SQL 查询。如下为一些随机示例,老实说,ChatGPT 看起来效果更好。

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 |

|

从测试结果来看,LLaMA 在一些任务上表现还不错,但在另一些任务上和 ChatGPT 还有一些差距。如果能像 ChatGPT 一样加入一些「训练秘籍」,效果会不会大幅提升?

加入 RLHF,初创公司 Nebuly AI 开源 ChatLLaMA 训练方法

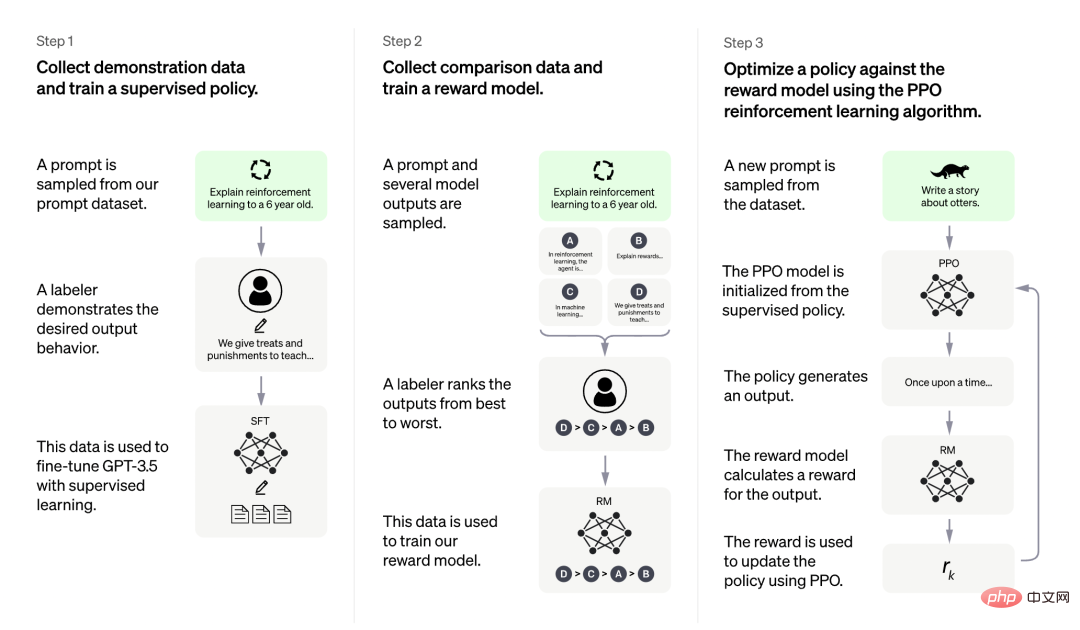

虽然 LLaMA 发布之初就得到众多研究者的青睐,但是少了 RLHF 的加持,从上述评测结果来看,还是差点意思。

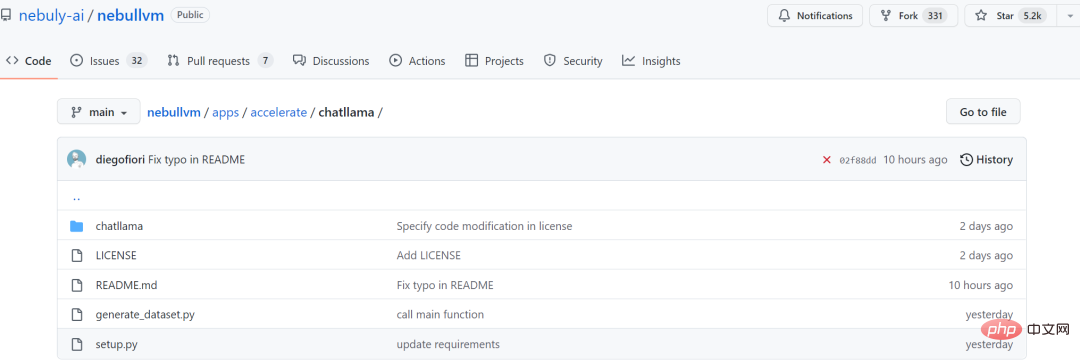

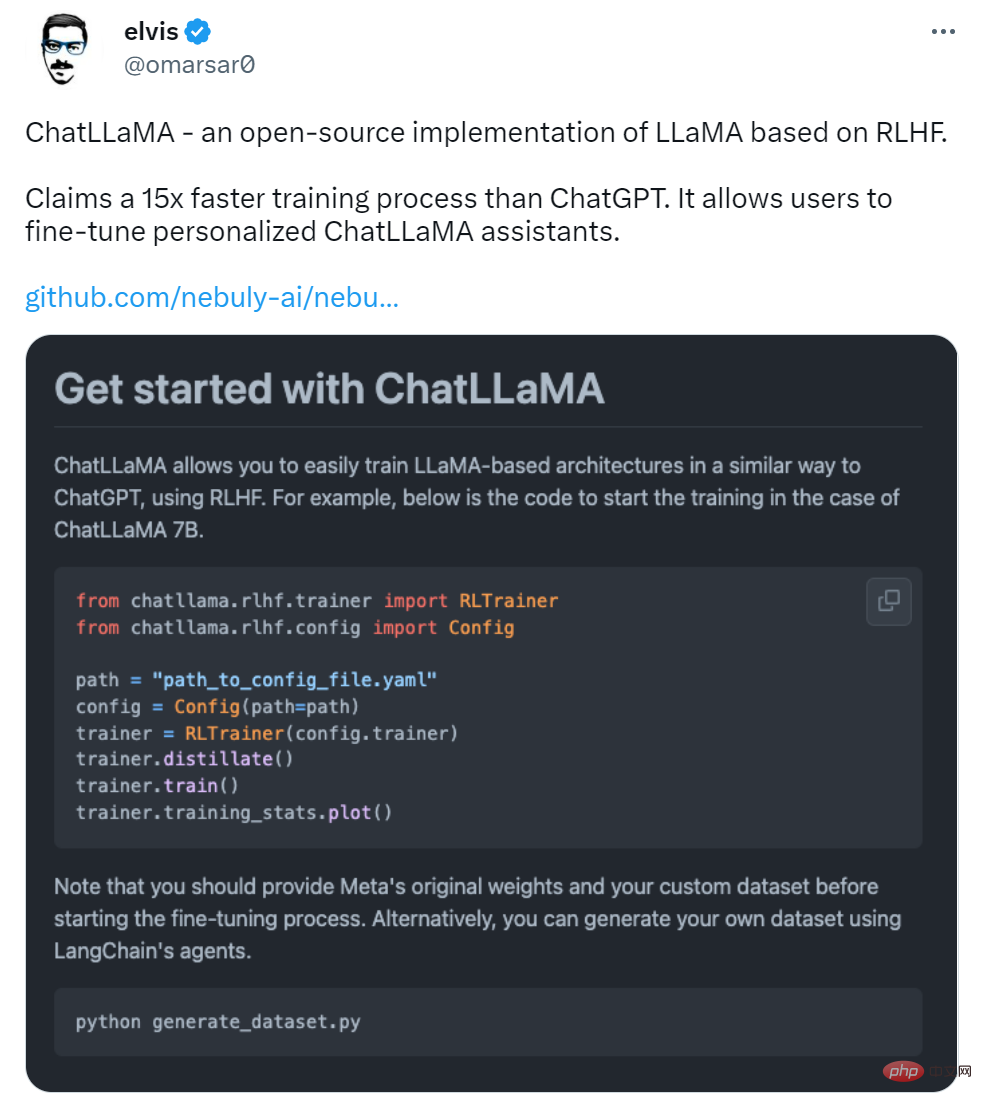

在 LLaMA 发布三天后,初创公司 Nebuly AI 开源了 RLHF 版 LLaMA(ChatLLaMA)的训练方法。它的训练过程类似 ChatGPT,该项目允许基于预训练的 LLaMA 模型构建 ChatGPT 形式的服务。项目上线刚刚 2 天,狂揽 5.2K 星。

项目地址:https://github.com/nebuly-ai/nebullvm/tree/main/apps/accelerate/chatllama

ChatLLaMA 训练过程算法实现主打比 ChatGPT 训练更快、更便宜,我们可以从以下四点得到验证:

- ChatLLaMA 是一个完整的开源实现,允许用户基于预训练的 LLaMA 模型构建 ChatGPT 风格的服务;

- 与 ChatGPT 相比,LLaMA 架构更小,但训练过程和单 GPU 推理速度更快,成本更低;

- ChatLLaMA 内置了对 DeepSpeed ZERO 的支持,以加速微调过程;

- 该库还支持所有的 LLaMA 模型架构(7B、13B、33B、65B),因此用户可以根据训练时间和推理性能偏好对模型进行微调。

图源:https://openai.com/blog/chatgpt

更是有研究者表示,ChatLLaMA 比 ChatGPT 训练速度最高快 15 倍。

不过有人对这一说法提出质疑,认为该项目没有给出准确的衡量标准。

项目刚刚上线 2 天,还处于早期阶段,用户可以通过以下添加项进一步扩展:

- 带有微调权重的 Checkpoint;

- 用于快速推理的优化技术;

- 支持将模型打包到有效的部署框架中。

Nebuly AI 希望更多人加入进来,创造更高效和开放的 ChatGPT 类助手。

该如何使用呢?首先是使用 pip 安装软件包:

1 |

|

然后是克隆 LLaMA 模型:

1 2 3 |

|

一切准备就绪后,就可以运行了,项目中介绍了 ChatLLaMA 7B 的训练示例,感兴趣的小伙伴可以查看原项目。

The above is the detailed content of Is Meta's open source ChatGPT replacement easy to use? The test results and modification methods have been released, 5.2k stars in 2 days. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

Imagine an artificial intelligence model that not only has the ability to surpass traditional computing, but also achieves more efficient performance at a lower cost. This is not science fiction, DeepSeek-V2[1], the world’s most powerful open source MoE model is here. DeepSeek-V2 is a powerful mixture of experts (MoE) language model with the characteristics of economical training and efficient inference. It consists of 236B parameters, 21B of which are used to activate each marker. Compared with DeepSeek67B, DeepSeek-V2 has stronger performance, while saving 42.5% of training costs, reducing KV cache by 93.3%, and increasing the maximum generation throughput to 5.76 times. DeepSeek is a company exploring general artificial intelligence

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI is indeed changing mathematics. Recently, Tao Zhexuan, who has been paying close attention to this issue, forwarded the latest issue of "Bulletin of the American Mathematical Society" (Bulletin of the American Mathematical Society). Focusing on the topic "Will machines change mathematics?", many mathematicians expressed their opinions. The whole process was full of sparks, hardcore and exciting. The author has a strong lineup, including Fields Medal winner Akshay Venkatesh, Chinese mathematician Zheng Lejun, NYU computer scientist Ernest Davis and many other well-known scholars in the industry. The world of AI has changed dramatically. You know, many of these articles were submitted a year ago.

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

The performance of JAX, promoted by Google, has surpassed that of Pytorch and TensorFlow in recent benchmark tests, ranking first in 7 indicators. And the test was not done on the TPU with the best JAX performance. Although among developers, Pytorch is still more popular than Tensorflow. But in the future, perhaps more large models will be trained and run based on the JAX platform. Models Recently, the Keras team benchmarked three backends (TensorFlow, JAX, PyTorch) with the native PyTorch implementation and Keras2 with TensorFlow. First, they select a set of mainstream

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Boston Dynamics Atlas officially enters the era of electric robots! Yesterday, the hydraulic Atlas just "tearfully" withdrew from the stage of history. Today, Boston Dynamics announced that the electric Atlas is on the job. It seems that in the field of commercial humanoid robots, Boston Dynamics is determined to compete with Tesla. After the new video was released, it had already been viewed by more than one million people in just ten hours. The old people leave and new roles appear. This is a historical necessity. There is no doubt that this year is the explosive year of humanoid robots. Netizens commented: The advancement of robots has made this year's opening ceremony look like a human, and the degree of freedom is far greater than that of humans. But is this really not a horror movie? At the beginning of the video, Atlas is lying calmly on the ground, seemingly on his back. What follows is jaw-dropping

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

Earlier this month, researchers from MIT and other institutions proposed a very promising alternative to MLP - KAN. KAN outperforms MLP in terms of accuracy and interpretability. And it can outperform MLP running with a larger number of parameters with a very small number of parameters. For example, the authors stated that they used KAN to reproduce DeepMind's results with a smaller network and a higher degree of automation. Specifically, DeepMind's MLP has about 300,000 parameters, while KAN only has about 200 parameters. KAN has a strong mathematical foundation like MLP. MLP is based on the universal approximation theorem, while KAN is based on the Kolmogorov-Arnold representation theorem. As shown in the figure below, KAN has

Recommended: Excellent JS open source face detection and recognition project

Apr 03, 2024 am 11:55 AM

Recommended: Excellent JS open source face detection and recognition project

Apr 03, 2024 am 11:55 AM

Face detection and recognition technology is already a relatively mature and widely used technology. Currently, the most widely used Internet application language is JS. Implementing face detection and recognition on the Web front-end has advantages and disadvantages compared to back-end face recognition. Advantages include reducing network interaction and real-time recognition, which greatly shortens user waiting time and improves user experience; disadvantages include: being limited by model size, the accuracy is also limited. How to use js to implement face detection on the web? In order to implement face recognition on the Web, you need to be familiar with related programming languages and technologies, such as JavaScript, HTML, CSS, WebRTC, etc. At the same time, you also need to master relevant computer vision and artificial intelligence technologies. It is worth noting that due to the design of the Web side

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

Target detection is a relatively mature problem in autonomous driving systems, among which pedestrian detection is one of the earliest algorithms to be deployed. Very comprehensive research has been carried out in most papers. However, distance perception using fisheye cameras for surround view is relatively less studied. Due to large radial distortion, standard bounding box representation is difficult to implement in fisheye cameras. To alleviate the above description, we explore extended bounding box, ellipse, and general polygon designs into polar/angular representations and define an instance segmentation mIOU metric to analyze these representations. The proposed model fisheyeDetNet with polygonal shape outperforms other models and simultaneously achieves 49.5% mAP on the Valeo fisheye camera dataset for autonomous driving

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

The latest video of Tesla's robot Optimus is released, and it can already work in the factory. At normal speed, it sorts batteries (Tesla's 4680 batteries) like this: The official also released what it looks like at 20x speed - on a small "workstation", picking and picking and picking: This time it is released One of the highlights of the video is that Optimus completes this work in the factory, completely autonomously, without human intervention throughout the process. And from the perspective of Optimus, it can also pick up and place the crooked battery, focusing on automatic error correction: Regarding Optimus's hand, NVIDIA scientist Jim Fan gave a high evaluation: Optimus's hand is the world's five-fingered robot. One of the most dexterous. Its hands are not only tactile