Breakout ML research of 2022: the popular Stable Diffusion, generalist agent Gato, and a LeCun retweet

2022 is coming to an end. During this year, a large number of valuable papers emerged in the field of machine learning, which had a profound impact on the machine learning community.

Today, Elvis S., an ML & NLP researcher, technical product marketing manager at Meta AI, and founder of DAIR.AI, summarized 12 hot machine learning papers of 2022. The post went viral and was retweeted by Turing Award winner Yann LeCun.

Next, let’s look at them one by one.

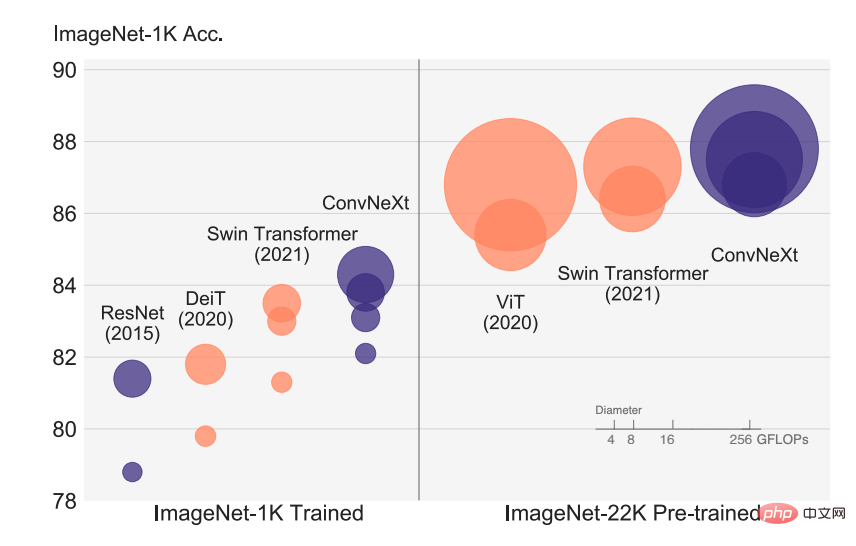

Paper 1: A ConvNet for the 2020s

The rapid development of visual recognition in the 2020s began with the introduction of the Vision Transformer (ViT), which quickly superseded traditional ConvNets as the SOTA image classification model. A vanilla ViT, however, faces difficulties on general computer vision tasks such as object detection and semantic segmentation. Hierarchical Transformers such as Swin Transformer therefore reintroduced several ConvNet priors, making Transformers practically viable as a general vision backbone and showing excellent performance on a variety of vision tasks.

However, the effectiveness of such hybrid approaches is still largely credited to the inherent advantages of Transformers rather than to the inductive biases of convolution. In this paper, researchers from FAIR and UC Berkeley re-examine the design space and test the limits of what a pure ConvNet can achieve. They gradually "modernize" a standard ResNet toward the design of a vision Transformer and, along the way, identify several key components that contribute to the performance difference. The resulting family of pure ConvNet models is named ConvNeXt.

Paper address: https://arxiv.org/abs/2201.03545v2

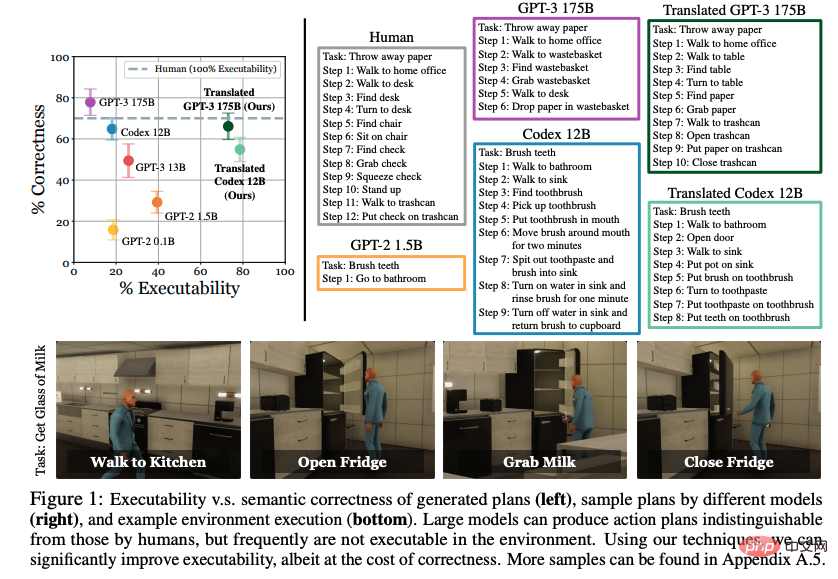

Paper 2: Language Models as Zero-Shot Planners: Extracting Actionable Knowledge for Embodied Agents

Can the world knowledge learned by large language models (LLMs) be used to act in interactive environments? In this paper, researchers from UC Berkeley, CMU, and Google explore the possibility of grounding high-level tasks, expressed in natural language, to a chosen set of actionable steps. Previous work focused on learning how to act from explicit step-by-step examples, but the authors surprisingly found that if the pretrained language model is large enough and is prompted appropriately, high-level tasks can be effectively decomposed into mid-level plans without further training. However, plans produced naively by LLMs often cannot be mapped precisely to admissible actions.

The procedure proposed by the researchers conditions on existing demonstrations and semantically translates the plans into admissible actions. Evaluations in the VirtualHome environment show that the proposed approach significantly improves executability over the LLM baseline. Human evaluation reveals a trade-off between executability and correctness, but shows promising signs that actionable knowledge can be extracted from language models.

Paper address: https://arxiv.org/abs/2201.07207v2
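The idea of translating a free-form plan step into an admissible action can be illustrated with a minimal sketch. It assumes each generated step is replaced by its most similar admissible action; word-count cosine similarity stands in here for a learned sentence-embedding model, and the action list and plan are hypothetical examples rather than data from the paper or from VirtualHome.

```python
import math
import re
from collections import Counter


def bag_of_words(text):
    """Lowercased word counts; a crude stand-in for a sentence embedding."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def translate_step(free_form_step, admissible_actions):
    """Return the admissible action most similar to the generated step."""
    query = bag_of_words(free_form_step)
    return max(admissible_actions, key=lambda act: cosine(query, bag_of_words(act)))


# Hypothetical action set and LLM-generated plan, for illustration only.
ADMISSIBLE = ["walk to kitchen", "open fridge", "grab milk", "switch on television"]
plan = ["go and open the fridge", "grab the milk carton"]

print([translate_step(step, ADMISSIBLE) for step in plan])
# prints ['open fridge', 'grab milk']
```

A real system would swap `bag_of_words` for an embedding model so that paraphrases with no shared words can still be matched.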

Paper 3: OFA: Unifying Architectures, Tasks, and Modalities Through a Simple Sequence-to-Sequence Learning Framework

OFA is a unified multi-modal, multi-task model framework launched by Alibaba DAMO Academy. It embodies three properties that a general-purpose model should satisfy at this stage: modality independence, task independence, and task diversity. The paper was accepted by ICML 2022. In the vision-language domain, OFA expresses classic tasks such as visual grounding, VQA, image captioning, image classification, text-to-image generation, and language modeling in a unified seq2seq framework, shares the inputs and outputs of different modalities across tasks, and keeps fine-tuning consistent with pre-training without adding extra parameter structures.

Paper address: https://arxiv.org/abs/2202.03052v2

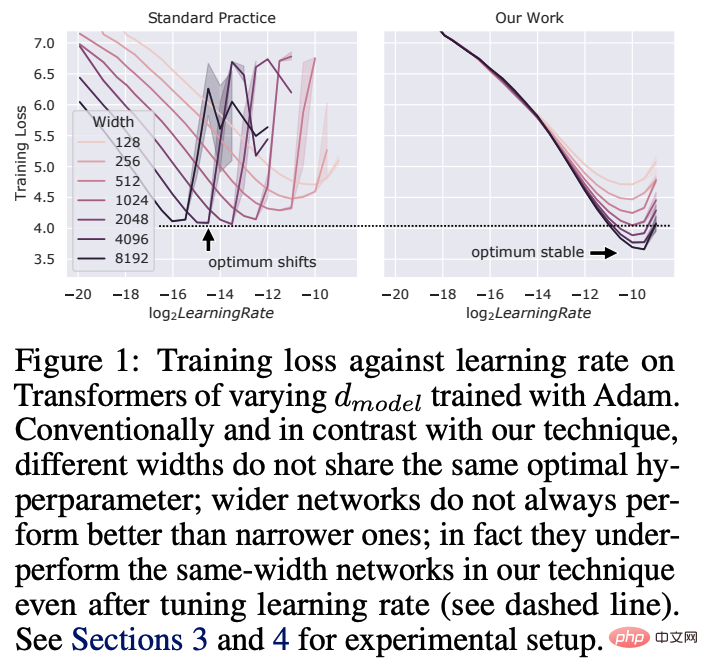

Paper 4: Tensor Programs V: Tuning Large Neural Networks via Zero-Shot Hyperparameter Transfer

Hyperparameter (HP) tuning in deep learning is a costly process, especially for neural networks with billions of parameters. In this paper, researchers from Microsoft and OpenAI show that under the recently discovered Maximal Update Parametrization (muP), many optimal HPs remain stable even as the model size changes.

This leads to a new HP tuning paradigm they call muTransfer: parametrize the target model in muP, tune the HPs indirectly on a smaller model, and then transfer them zero-shot to the full-size model, which means the latter never needs to be tuned directly. The researchers verified muTransfer on Transformer and ResNet. For example, by transferring HPs from a 40M-parameter model, they outperform the published numbers of the 6.7B GPT-3 model at a tuning cost of only 7% of the total pre-training cost.

Paper address: https://arxiv.org/abs/2203.03466v2

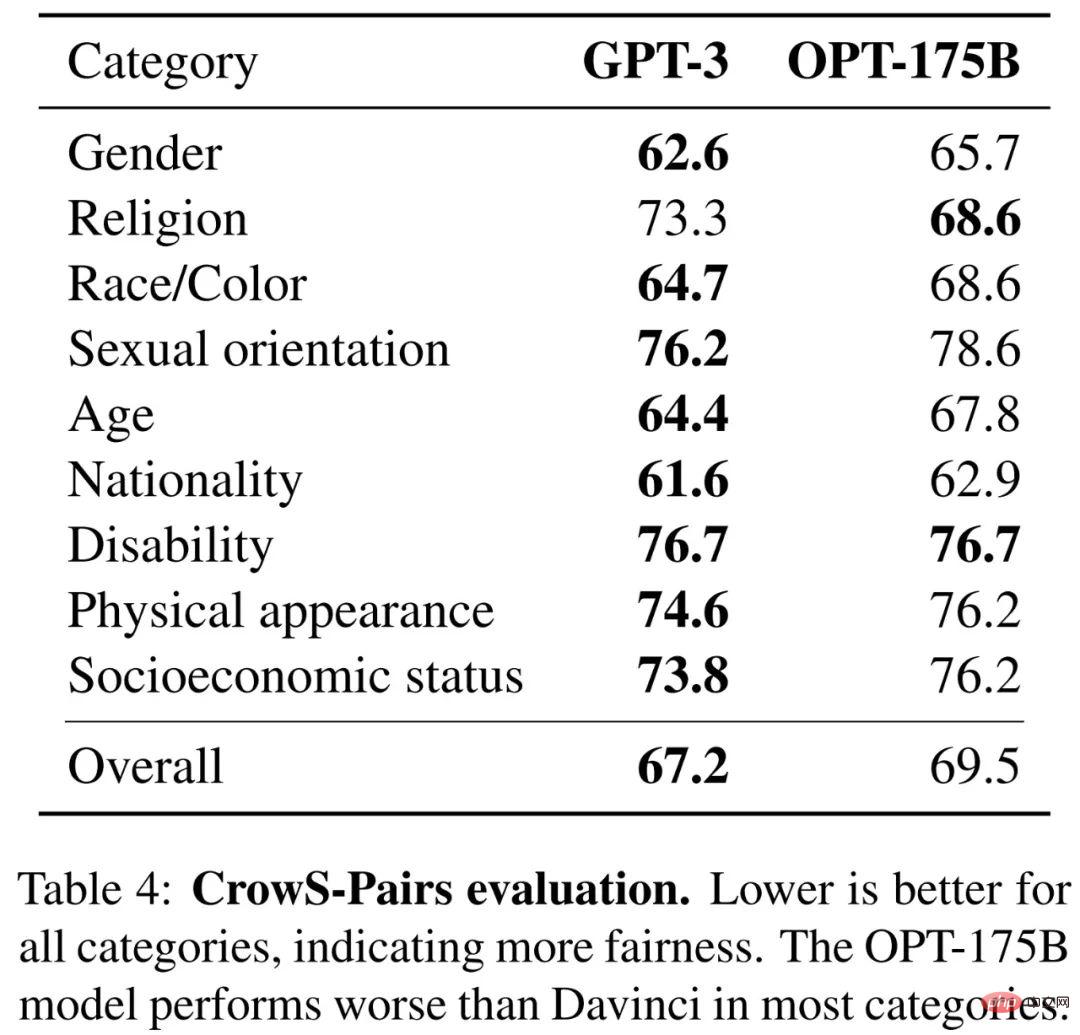

Paper 5: OPT: Open Pre-trained Transformer Language Models

Large language models, which are often trained for hundreds of thousands of compute days, have demonstrated remarkable capabilities in zero-shot and few-shot learning. But given their computational cost, these models are difficult to replicate without substantial funding. The few models that are available through an API do not expose their full weights, which makes them difficult to study. In this paper, Meta AI researchers present Open Pre-trained Transformers (OPT), a suite of decoder-only pre-trained transformers ranging from 125M to 175B parameters. They show that OPT-175B performs comparably to GPT-3 while requiring only 1/7 of the carbon footprint to develop.

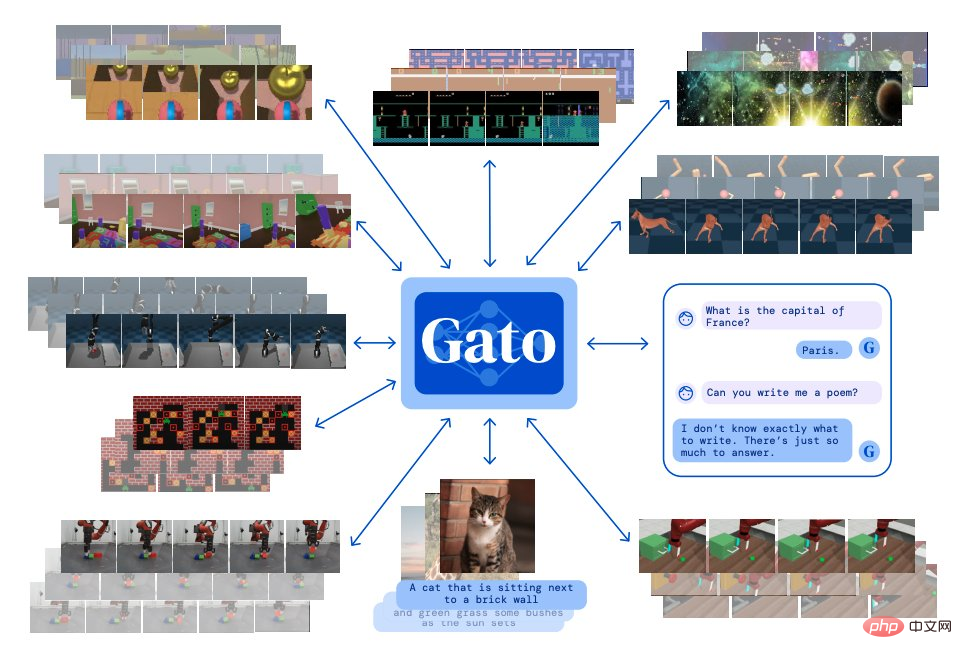

Paper 6: A Generalist Agent

Inspired by large-scale language modeling, DeepMind built a single "generalist" agent, Gato. It is multi-modal, multi-task, and multi-embodiment.

Gato can play Atari games, caption images, chat with people, stack blocks with a robotic arm, and more. Based on its context, Gato decides whether to output text, joint torques, button presses, or other tokens.

Unlike most game-playing agents, Gato can play many games using the same trained model, rather than training a separate model for each game.

Paper address: https://arxiv.org/abs/2205.06175v3

Paper 7: Solving Quantitative Reasoning Problems with Language Models

Researchers from Google proposed Minerva, a deep learning language model that solves quantitative mathematical problems through step-by-step reasoning. Its solutions include numerical calculation and symbolic manipulation, without relying on external tools such as calculators.

In addition, Minerva combines a variety of techniques, including few-shot prompting, chain-of-thought or scratchpad prompting, and majority voting, to achieve SOTA performance on STEM reasoning tasks.

Minerva is built on PaLM (Pathways Language Model) and further trained on a 118GB dataset. The dataset consists of scientific papers from arXiv and web pages that contain mathematical expressions written in LaTeX, MathJax, or other formats.
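Majority voting is simple to sketch: sample several solutions for the same problem, extract each final answer, and keep the most frequent one. The snippet below is a minimal illustration under that assumption; the function name and the sample answers are hypothetical, and it does not reproduce how Minerva parses or normalizes answers.

```python
from collections import Counter


def majority_vote(final_answers):
    """Return the most common final answer and the share of samples that agree.

    Ties are broken in favor of the answer that appeared first.
    """
    if not final_answers:
        raise ValueError("need at least one sampled answer")
    answer, votes = Counter(final_answers).most_common(1)[0]
    return answer, votes / len(final_answers)


# Hypothetical final answers parsed from five sampled solutions.
samples = ["12", "12", "15", "12", "9"]
print(majority_vote(samples))
# prints ('12', 0.6)
```

The agreement share gives a rough confidence signal: a low value means the sampled solutions disagree with one another.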

The picture below shows an example of how Minerva solves the problem:

Paper address: https://arxiv.org/abs/2206.14858

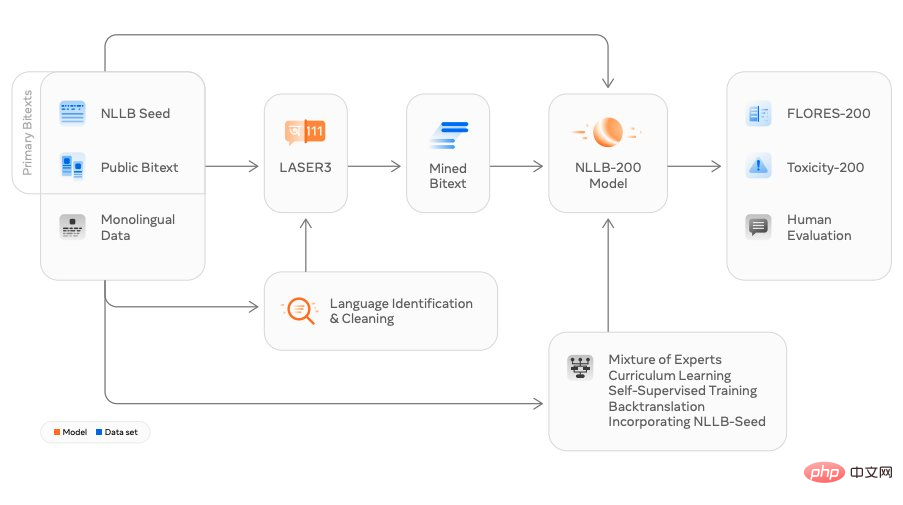

Paper 8: No Language Left Behind: Scaling Human-Centered Machine Translation

Researchers from Meta AI have released the translation model NLLB (No Language Left Behind). It supports translation between any pair of 200 languages. Beyond widely used languages such as Chinese, English, French, and Japanese, NLLB also covers many low-resource languages, including Luganda and Urdu.

Meta claims that this is the world's first design to translate across 200 languages with a single model. The company hopes it will help more people interact across languages on social platforms and improve users' interactive experience in the future metaverse.

Paper address: https://arxiv.org/abs/2207.04672v3

Paper 9: High-Resolution Image Synthesis with Latent Diffusion Models

Stable Diffusion has surged in popularity recently, and countless studies now build on it. The model is based on the CVPR 2022 paper "High-Resolution Image Synthesis with Latent Diffusion Models" by researchers from the University of Munich and Runway, and was completed in collaboration with teams such as Eleuther AI and LAION. Stable Diffusion can run on a consumer-grade GPU with 10 GB of VRAM and generate a 512x512 pixel image in seconds without pre- or post-processing.

In only four months, this open source project has received 38K stars.

Project address: https://github.com/CompVis/stable-diffusion

Paper 10: Robust Speech Recognition via Large-Scale Weak Supervision

OpenAI released the open source model Whisper, which approaches human-level accuracy and robustness in English speech recognition.

Whisper is an automatic speech recognition (ASR) system trained on 680,000 hours of multilingual and multitask supervised data, covering 98 languages, that OpenAI collected from the web. In addition to English speech recognition, Whisper can transcribe speech in multiple languages and translate it into English.

Paper address: https://arxiv.org/abs/2212.04356

Paper 11: Make-A-Video: Text-to-Video Generation without Text-Video Data

Researchers from Meta AI proposed Make-A-Video, a state-of-the-art text-to-video model that generates a video from a given text prompt.

Make-A-Video has three advantages: (1) it accelerates the training of T2V (Text-to-Video) models and does not need to learn visual and multi-modal representations from scratch, (2) it does not require paired text-video data, and (3) the generated videos inherit several advantages of today's image generation models.

This technology is designed to enable text-to-video generation, producing unique videos using only a few words or lines of text. The picture below shows a dog wearing superhero clothes and a red cape, flying in the sky:

Paper address: https://arxiv.org/abs/2209.14792

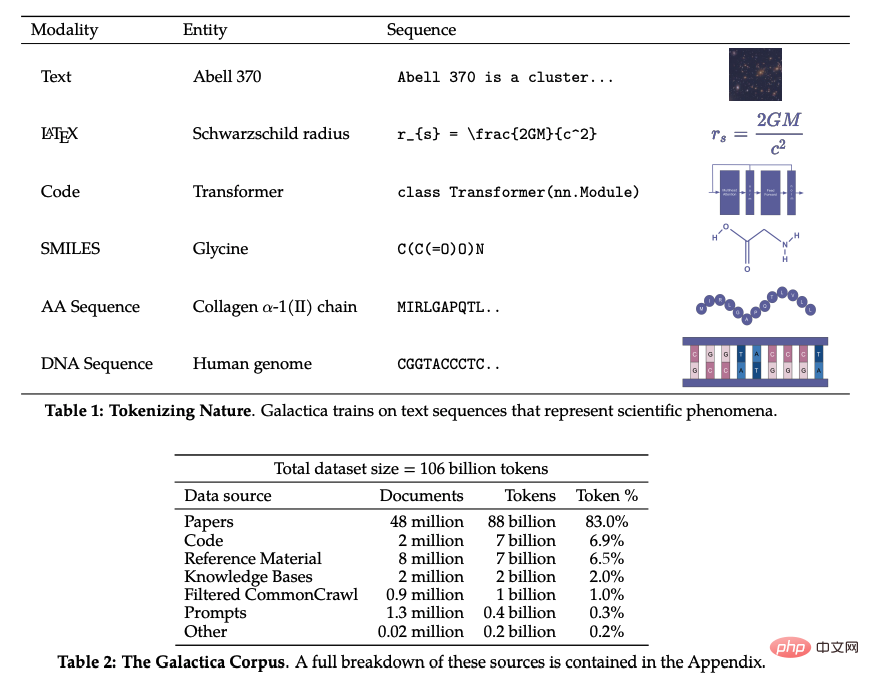

Paper 12: Galactica: A Large Language Model for Science

In recent years, with the advancement of research in various subject areas, scientific literature and data have exploded, making it increasingly difficult for academic researchers to discover useful insights from large amounts of information. Usually, people use search engines to obtain scientific knowledge, but search engines cannot organize scientific knowledge autonomously.

Recently, a research team at Meta AI proposed Galactica, a new large language model that can store, combine, and reason about scientific knowledge. Galactica can summarize academic papers, generate encyclopedia-style entries, and provide knowledgeable answers to questions.

Paper address: https://arxiv.org/abs/2211.09085

The above is the detailed content of ML research out of the circle in 2022: the popular Stable Diffusion, generalist agent Gato, LeCun retweets. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

15 recommended open source free image annotation tools

Mar 28, 2024 pm 01:21 PM

15 recommended open source free image annotation tools

Mar 28, 2024 pm 01:21 PM

Image annotation is the process of associating labels or descriptive information with images to give deeper meaning and explanation to the image content. This process is critical to machine learning, which helps train vision models to more accurately identify individual elements in images. By adding annotations to images, the computer can understand the semantics and context behind the images, thereby improving the ability to understand and analyze the image content. Image annotation has a wide range of applications, covering many fields, such as computer vision, natural language processing, and graph vision models. It has a wide range of applications, such as assisting vehicles in identifying obstacles on the road, and helping in the detection and diagnosis of diseases through medical image recognition. . This article mainly recommends some better open source and free image annotation tools. 1.Makesens

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

In the fields of machine learning and data science, model interpretability has always been a focus of researchers and practitioners. With the widespread application of complex models such as deep learning and ensemble methods, understanding the model's decision-making process has become particularly important. Explainable AI|XAI helps build trust and confidence in machine learning models by increasing the transparency of the model. Improving model transparency can be achieved through methods such as the widespread use of multiple complex models, as well as the decision-making processes used to explain the models. These methods include feature importance analysis, model prediction interval estimation, local interpretability algorithms, etc. Feature importance analysis can explain the decision-making process of a model by evaluating the degree of influence of the model on the input features. Model prediction interval estimate

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

In layman’s terms, a machine learning model is a mathematical function that maps input data to a predicted output. More specifically, a machine learning model is a mathematical function that adjusts model parameters by learning from training data to minimize the error between the predicted output and the true label. There are many models in machine learning, such as logistic regression models, decision tree models, support vector machine models, etc. Each model has its applicable data types and problem types. At the same time, there are many commonalities between different models, or there is a hidden path for model evolution. Taking the connectionist perceptron as an example, by increasing the number of hidden layers of the perceptron, we can transform it into a deep neural network. If a kernel function is added to the perceptron, it can be converted into an SVM. this one

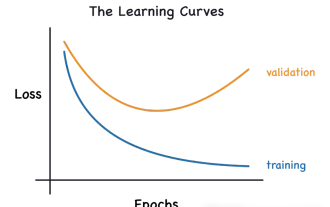

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

This article will introduce how to effectively identify overfitting and underfitting in machine learning models through learning curves. Underfitting and overfitting 1. Overfitting If a model is overtrained on the data so that it learns noise from it, then the model is said to be overfitting. An overfitted model learns every example so perfectly that it will misclassify an unseen/new example. For an overfitted model, we will get a perfect/near-perfect training set score and a terrible validation set/test score. Slightly modified: "Cause of overfitting: Use a complex model to solve a simple problem and extract noise from the data. Because a small data set as a training set may not represent the correct representation of all data." 2. Underfitting Heru

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

In the 1950s, artificial intelligence (AI) was born. That's when researchers discovered that machines could perform human-like tasks, such as thinking. Later, in the 1960s, the U.S. Department of Defense funded artificial intelligence and established laboratories for further development. Researchers are finding applications for artificial intelligence in many areas, such as space exploration and survival in extreme environments. Space exploration is the study of the universe, which covers the entire universe beyond the earth. Space is classified as an extreme environment because its conditions are different from those on Earth. To survive in space, many factors must be considered and precautions must be taken. Scientists and researchers believe that exploring space and understanding the current state of everything can help understand how the universe works and prepare for potential environmental crises

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Common challenges faced by machine learning algorithms in C++ include memory management, multi-threading, performance optimization, and maintainability. Solutions include using smart pointers, modern threading libraries, SIMD instructions and third-party libraries, as well as following coding style guidelines and using automation tools. Practical cases show how to use the Eigen library to implement linear regression algorithms, effectively manage memory and use high-performance matrix operations.

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Translator | Reviewed by Li Rui | Chonglou Artificial intelligence (AI) and machine learning (ML) models are becoming increasingly complex today, and the output produced by these models is a black box – unable to be explained to stakeholders. Explainable AI (XAI) aims to solve this problem by enabling stakeholders to understand how these models work, ensuring they understand how these models actually make decisions, and ensuring transparency in AI systems, Trust and accountability to address this issue. This article explores various explainable artificial intelligence (XAI) techniques to illustrate their underlying principles. Several reasons why explainable AI is crucial Trust and transparency: For AI systems to be widely accepted and trusted, users need to understand how decisions are made

Outlook on future trends of Golang technology in machine learning

May 08, 2024 am 10:15 AM

Outlook on future trends of Golang technology in machine learning

May 08, 2024 am 10:15 AM

The application potential of Go language in the field of machine learning is huge. Its advantages are: Concurrency: It supports parallel programming and is suitable for computationally intensive operations in machine learning tasks. Efficiency: The garbage collector and language features ensure that the code is efficient, even when processing large data sets. Ease of use: The syntax is concise, making it easy to learn and write machine learning applications.