With 1/50 the parameters, Meta's 11-billion-parameter model beats Google's PaLM

Large language models (LLMs) can be understood as few-shot learners: they can learn new tasks from a handful of examples, or even from simple instructions alone. Scaling the number of model parameters and the size of the training data is key to this ability to generalize, and the improvement in LLMs has been driven by greater computing power and storage capacity. Intuitively, better reasoning ability should lead to better generalization and therefore to better few-shot learning. However, it is unclear to what extent effective few-shot learning requires extensive knowledge to be stored in the model's parameters.

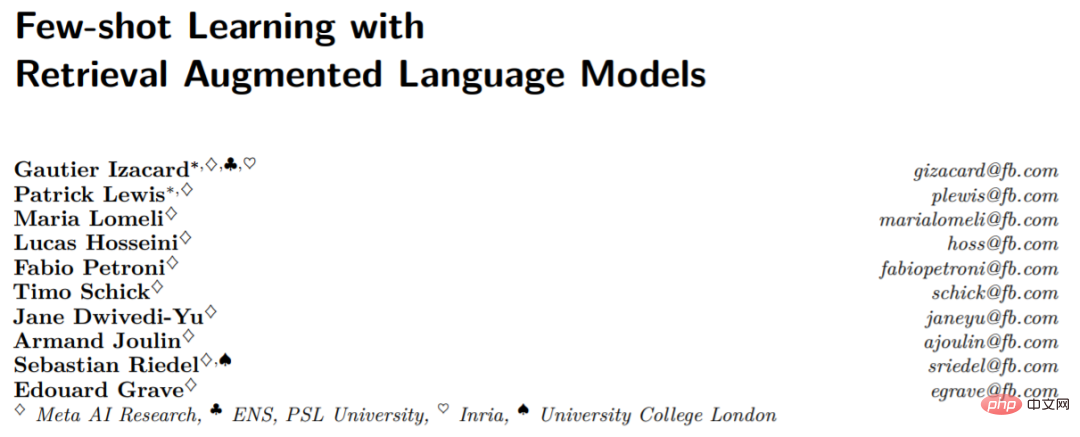

So far, retrieval-augmented models have not demonstrated convincing few-shot learning capabilities. In the paper, researchers from Meta AI Research and other institutions ask whether few-shot learning requires the model to store a large amount of information in its parameters, and whether memorization can be decoupled from generalization. They propose Atlas, a retrieval-augmented language model with strong few-shot learning capabilities despite having fewer parameters than other current powerful few-shot models.

The model uses non-parametric memory: a neural retriever over a large, external, non-static knowledge source augments the parametric language model. Beyond their memory capacity, such architectures are attractive for their adaptability, interpretability, and efficiency.

Paper address: https://arxiv.org/pdf/2208.03299.pdf

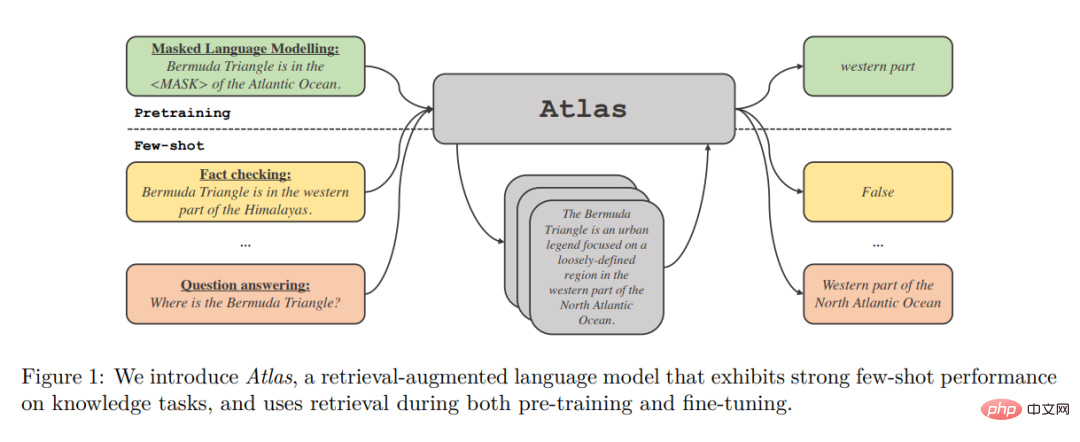

Atlas retrieves relevant documents with a general-purpose dense retriever based on the Contriever dual-encoder architecture, selecting documents according to the current context. The retrieved documents, together with the current context, are processed by a sequence-to-sequence model that uses the Fusion-in-Decoder architecture to generate the corresponding output.

The authors study how different techniques affect Atlas's few-shot performance on a range of downstream tasks, including question answering and fact checking. They find that jointly pre-training the components is crucial for few-shot performance, and they evaluate many existing and novel pre-training tasks and schemes. Atlas achieves strong downstream performance in both few-shot and resource-rich settings.

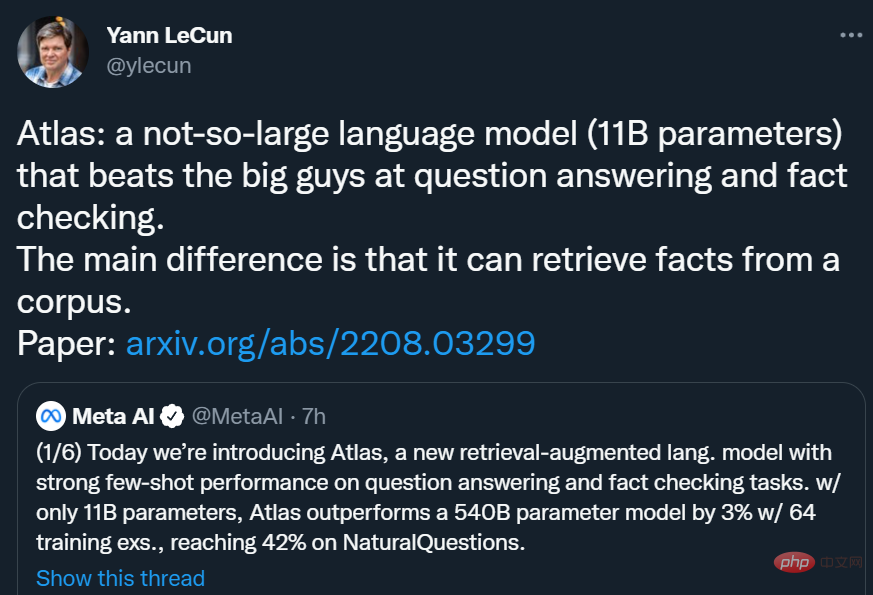

With only 11B parameters, Atlas achieves 42.4% accuracy on NaturalQuestions (NQ) using 64 training examples, nearly 3 percentage points higher than the 540B-parameter PaLM (39.6%). In the full-dataset setting (Full), it reaches 64.0% accuracy.

Yann LeCun said: Atlas is a not-too-large language model (11B parameters) that beats the "big guys" at question answering and fact checking. The main difference is that Atlas can retrieve facts from a corpus.
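As a quick sanity check on the headline numbers, the short sketch below recomputes the parameter ratio and the accuracy margin from the figures quoted above. The variable names are illustrative only.

```python
# Figures quoted in the article (parameters in billions, accuracy in percent).
atlas_params_b = 11
palm_params_b = 540
atlas_nq_64shot = 42.4
palm_nq = 39.6

# How many times larger PaLM is than Atlas (roughly 49x, i.e. about 1/50).
size_ratio = palm_params_b / atlas_params_b

# Accuracy margin in percentage points, rounded to one decimal place.
margin_points = round(atlas_nq_64shot - palm_nq, 1)

print(f"PaLM is about {size_ratio:.1f}x larger than Atlas")
print(f"Atlas leads by {margin_points} percentage points on 64-shot NQ")
```

The ratio of roughly 49 is where the "1/50" in the headline comes from, and the margin of 2.8 points is the "nearly 3 percentage points" in the text.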

Method Overview

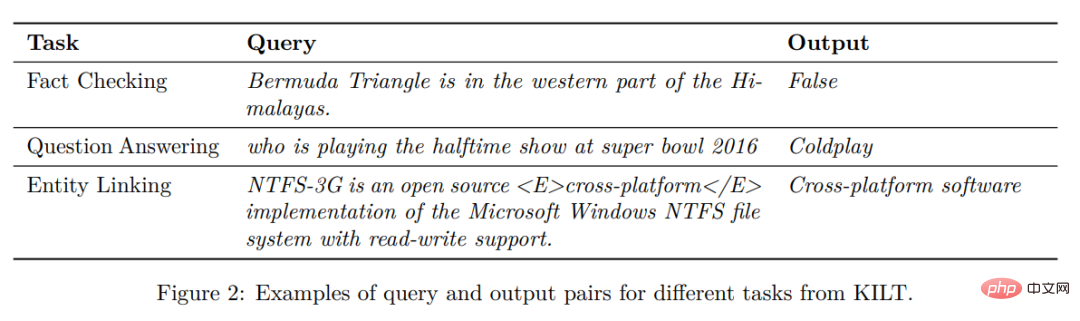

Atlas follows a text-to-text framework: for every task, the system takes a text query as input and generates text as output. For question answering, the query is the question and the model must generate the answer. For classification, the query is the input text and the model generates the class label, that is, the words corresponding to the label. Figure 2 gives more examples of downstream tasks from the KILT benchmark. Many natural language processing tasks require knowledge, and Atlas aims to augment standard text-to-text models with retrieval, since retrieval may be critical to the model's ability to learn in few-shot scenarios.

Architecture

The Atlas model is based on two sub-models: the retriever and the language model. When performing a task, from question answering to generating Wikipedia articles, the model first retrieves the top k relevant documents from a large text corpus through a retriever. These documents, along with the query, are then given as input to the language model, which generates output. Both the retriever and the language model are based on pre-trained transformer networks, which are described in detail below.
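The retrieve-then-generate flow described above can be sketched as follows. This is only a toy illustration: the word-overlap scorer stands in for the neural retriever, the prompt template is hypothetical, and no language model is actually called.

```python
from typing import Callable, List


def word_overlap(query: str, doc: str) -> float:
    """Toy relevance score (an assumption, not Contriever): shared lowercase words."""
    return float(len(set(query.lower().split()) & set(doc.lower().split())))


def retrieve_top_k(query: str, corpus: List[str],
                   score: Callable[[str, str], float], k: int) -> List[str]:
    """Return the k documents with the highest score for the query."""
    ranked = sorted(corpus, key=lambda doc: score(query, doc), reverse=True)
    return ranked[:k]


def build_reader_inputs(query: str, docs: List[str]) -> List[str]:
    """Pair the query with each retrieved document (hypothetical template)."""
    return [f"question: {query} context: {doc}" for doc in docs]


corpus = [
    "Paris is the capital of France.",
    "The Nile is a river in Africa.",
    "France borders Spain and Italy.",
]
query = "What is the capital of France?"

top_docs = retrieve_top_k(query, corpus, word_overlap, k=2)
reader_inputs = build_reader_inputs(query, top_docs)
for text in reader_inputs:
    print(text)
```

In a real system the strings in `reader_inputs` would be passed to the language model, which generates the final output.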

Retrieval: Atlas's retriever module is based on Contriever, an information retrieval technique based on continuous dense embeddings. Contriever uses a dual-encoder architecture in which queries and documents are embedded independently by transformer encoders. Average pooling is applied to the output of the last layer to obtain a vector representation of each query or document. The similarity score between the query and each document is then computed as the dot product of their embeddings. The Contriever model is pre-trained with the MoCo contrastive loss and uses only unsupervised data. One advantage of dense retrievers is that both the query encoder and the document encoder can be trained without document annotations, using standard techniques such as gradient descent and distillation.

Language model: For the language model, Atlas relies on the T5 sequence-to-sequence architecture with the Fusion-in-Decoder modification. The query is concatenated with each document, and the encoder processes each query-document pair independently. The model then concatenates the encoder outputs for the different documents, and the decoder performs cross-attention over this single sequence. An alternative way to process retrieved documents is to concatenate the query and all documents and feed this long sequence to the model. That approach scales poorly as the number of documents grows, because self-attention in the encoder has O(n^2) time complexity, where n is the number of documents.
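The scoring step of a dual encoder can be illustrated with a minimal sketch. The token vectors below are made-up numbers standing in for the last-layer outputs of a transformer encoder; only the average pooling and dot-product steps from the description above are shown.

```python
from typing import Dict, List

Vector = List[float]


def mean_pool(token_vectors: List[Vector]) -> Vector:
    """Average the per-token vectors into one embedding."""
    count = len(token_vectors)
    return [sum(column) / count for column in zip(*token_vectors)]


def dot(a: Vector, b: Vector) -> float:
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


# Hypothetical last-layer token outputs (2 tokens, 2 dimensions each).
query_tokens = [[1.0, 0.0], [3.0, 2.0]]
doc_tokens: Dict[str, List[Vector]] = {
    "doc_a": [[2.0, 2.0], [0.0, 0.0]],
    "doc_b": [[-1.0, 0.0], [-1.0, 2.0]],
}

query_embedding = mean_pool(query_tokens)
scores = {name: dot(query_embedding, mean_pool(tokens))
          for name, tokens in doc_tokens.items()}
best_doc = max(scores, key=scores.get)
print(scores, best_doc)
```

Because queries and documents are embedded independently, document embeddings can be computed once and indexed, and only the query needs to be encoded at search time.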
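The scaling argument can be made concrete with a rough cost model. The sketch below assumes that self-attention cost grows with the square of the sequence length and that every query-document passage has the same number of tokens; the function names and the example sizes are illustrative, not taken from the paper.

```python
def fid_encoder_cost(num_docs: int, tokens_per_passage: int) -> int:
    """Each passage is encoded on its own: num_docs separate quadratic costs."""
    return num_docs * tokens_per_passage ** 2


def concat_encoder_cost(num_docs: int, tokens_per_passage: int) -> int:
    """All passages form one long sequence: a single quadratic cost."""
    return (num_docs * tokens_per_passage) ** 2


num_docs = 20
tokens_per_passage = 200

fid_cost = fid_encoder_cost(num_docs, tokens_per_passage)
concat_cost = concat_encoder_cost(num_docs, tokens_per_passage)
print(fid_cost, concat_cost, concat_cost // fid_cost)
```

Under these assumptions the independent encoding grows linearly with the number of documents, while the concatenated input grows quadratically, so the gap between the two equals the number of documents.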

Experimental results

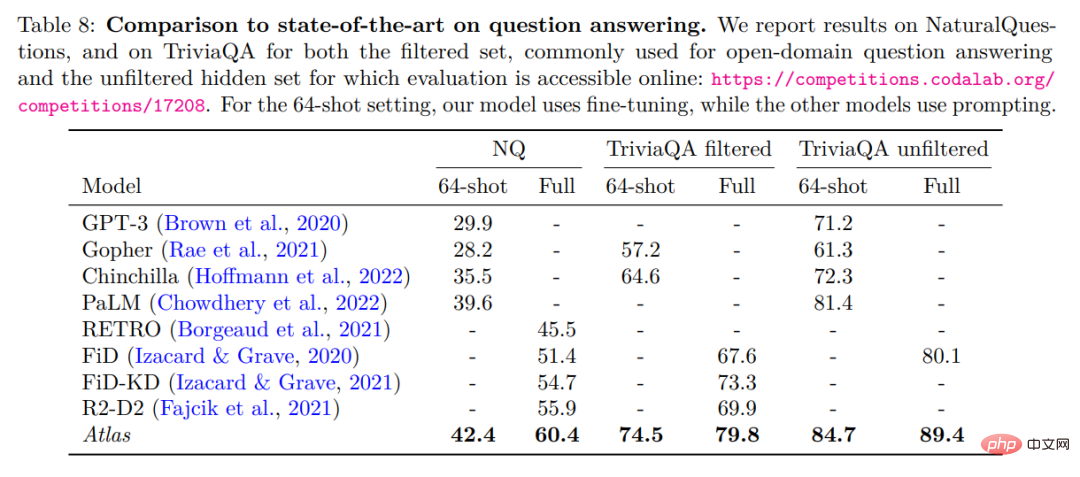

The authors evaluate Atlas on two open-domain question answering benchmarks, NaturalQuestions and TriviaQA. They compare against previous work using both a few-shot training set of 64 examples and the complete training set. The detailed comparison is shown in the table below.

Atlas performs best in 64-shot question answering on both NaturalQuestions and TriviaQA. In particular, it outperforms larger models (PaLM) and models that require more training compute (Chinchilla). Atlas also achieves the best results when using the full training set, for example raising accuracy on NaturalQuestions from 55.9% to 60.4%. This result was obtained under the default Atlas settings, using an index consisting of CCNet and the December 2021 Wikipedia corpus. The table below shows the test results on the fact-checking dataset FEVER.

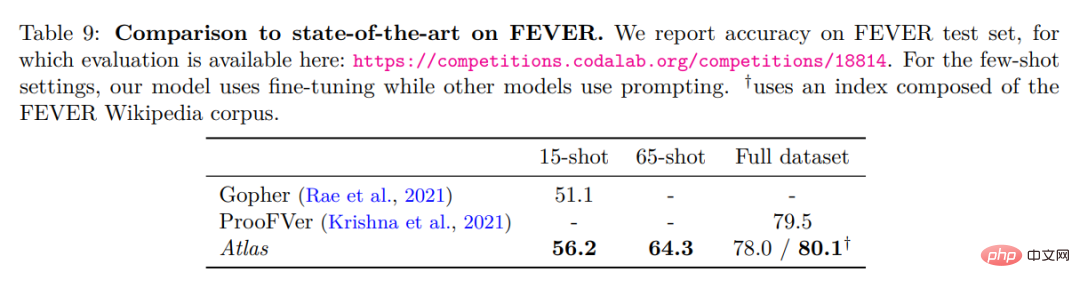

In the 64-shot case, the training examples are sampled from the full training set, and Atlas achieves an accuracy of 64.3%. In the 15-shot case, 5 examples are sampled uniformly from each class so that the results can be compared with Gopher: Atlas reaches 56.2% accuracy, 5.1 percentage points higher than Gopher. When fine-tuned on the full training set, Atlas achieves an accuracy of 78%, which is 1.5 percentage points lower than ProoFVer. ProoFVer uses a specialized architecture and a retriever trained with sentence-level annotations, and it is supplied with the Wikipedia corpus released with FEVER, whereas Atlas retrieves from CCNet and the December 2021 Wikipedia dump. When given an index consisting of the FEVER Wikipedia corpus, Atlas achieves a state-of-the-art 80.1%.
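The 15-shot setup, with 5 examples drawn uniformly from each class, can be sketched as below. The toy claims, the fixed seed, and the helper name `sample_per_class` are assumptions made for illustration; only the three FEVER label names and the 5-per-class scheme come from the setting described above.

```python
import random
from collections import Counter, defaultdict
from typing import Dict, List, Tuple

Example = Tuple[str, str]  # (claim text, label)


def sample_per_class(examples: List[Example], shots_per_class: int,
                     seed: int = 0) -> List[Example]:
    """Draw the same number of examples uniformly at random from each label."""
    by_label: Dict[str, List[Example]] = defaultdict(list)
    for example in examples:
        by_label[example[1]].append(example)
    rng = random.Random(seed)  # fixed seed so the subset is reproducible
    subset: List[Example] = []
    for label in sorted(by_label):
        subset.extend(rng.sample(by_label[label], shots_per_class))
    return subset


# Toy training pool: 8 placeholder claims for each of the three FEVER labels.
labels = ["SUPPORTS", "REFUTES", "NOT ENOUGH INFO"]
pool = [(f"claim {i} ({label})", label) for label in labels for i in range(8)]

few_shot_set = sample_per_class(pool, shots_per_class=5)
label_counts = Counter(label for _, label in few_shot_set)
print(len(few_shot_set), dict(label_counts))
```

Sampling per class keeps the small training set balanced, which plain random sampling from the full pool would not guarantee.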

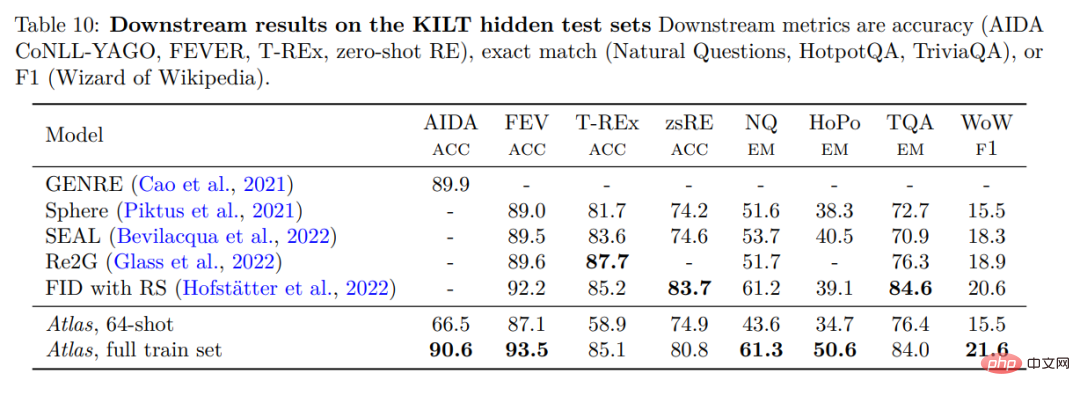

To validate the performance of Atlas, Atlas was evaluated on KILT, a benchmark consisting of several different knowledge-intensive tasks. The table below shows the results on the test set.

In the experiments, 64-shot Atlas far outperforms the random baseline and is even comparable to some fine-tuned models on the leaderboard. For example, on FEVER, 64-shot Atlas is only 2 to 2.5 points behind Sphere, SEAL and Re2G, while on zero-shot RE it outperforms Sphere and SEAL. With the full training set, Atlas is within 3% of the best model on 3 datasets and is the best model on the remaining 5 datasets.
