Yann LeCun calls out Google Research: target propagation has been around for a long time, so where is your innovation?

Recently, Turing Award winner Yann LeCun publicly questioned a Google study.

Some time ago, in the paper "LocoProp: Enhancing BackProp via Local Loss Optimization", Google AI proposed LocoProp, a general framework that constructs layer-wise losses for multi-layer neural networks. LocoProp achieves performance close to that of second-order methods while using only first-order optimizers.

More specifically, the framework treats a neural network as a modular composition of layers, where each layer has its own weight regularizer, target output, and loss function, with the goal of achieving both performance and efficiency.

Google experimentally verified the effectiveness of its approach on benchmark models and datasets, narrowing the gap between first- and second-order optimizers. In addition, the Google researchers stated that their local loss construction is the first to use the square loss as a local loss.

Source: @Google AI

Some commenters called the research great and interesting. Others, however, including Turing Award winner Yann LeCun, took a different view.

LeCun pointed out that there have been many versions of what we now call target prop (target propagation), some dating back to 1986. So what is the difference between Google's LocoProp and these earlier methods?

Photo source: @Yann LeCun

Haohan Wang, who is about to become an assistant professor at UIUC, agreed with LeCun's question. He said it is sometimes surprising that some authors consider such a simple idea to be the first of its kind. Maybe they did do something different, but the publicity team couldn't wait to come out and claim everything...

Photo source: @HaohanWang

However, some people did not buy LeCun's argument, suggesting that he raised the question out of competitive considerations or was even "starting a war." LeCun responded that his question had nothing to do with competition, citing former members of his lab such as Marc'Aurelio Ranzato, Karol Gregor, and Koray Kavukcuoglu, who have all used some version of target propagation and who now all work at Google DeepMind.

Photo source: @Gabriel Jimenez, @Yann LeCun

Some people even teased LeCun: "When you can't beat Jürgen Schmidhuber, become him."

Is LeCun right? Let's first look at what this Google study is about and whether it contains any significant innovation.

Google LocoProp: Enhanced backpropagation with local loss optimization

This research was completed by three researchers from Google: Ehsan Amid, Rohan Anil, and Manfred K. Warmuth.

Paper address: https://proceedings.mlr.press/v151/amid22a/amid22a.pdf

The paper argues that the success of deep neural networks (DNNs) is usually attributed to two key factors, model design and training data, while comparatively few researchers discuss the optimization methods used to update the model parameters. Training a DNN involves minimizing a loss function that measures the difference between the ground-truth values and the model's predictions, and using backpropagation to update the parameters.
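To make this training loop concrete, here is a minimal sketch of backpropagation on a hypothetical two-weight model: one tanh hidden unit followed by a linear output, trained with a squared-error loss. The model and function names are illustrative assumptions, not taken from the paper; the finite-difference check exists only to confirm that the chain-rule gradients are correct.

```python
import math


def forward(w1, w2, x):
    """Toy model (assumed for illustration): h = tanh(w1 * x), y_hat = w2 * h."""
    h = math.tanh(w1 * x)
    return h, w2 * h


def loss(w1, w2, x, y):
    """Squared-error loss between the prediction and the ground truth."""
    _, y_hat = forward(w1, w2, x)
    return 0.5 * (y_hat - y) ** 2


def backprop(w1, w2, x, y):
    """Gradients of the loss w.r.t. w1 and w2, computed with the chain rule."""
    h, y_hat = forward(w1, w2, x)
    d_yhat = y_hat - y            # dL/dy_hat
    d_w2 = d_yhat * h             # dL/dw2
    d_h = d_yhat * w2             # dL/dh, propagated backward through the output layer
    d_w1 = d_h * (1 - h * h) * x  # tanh'(a) = 1 - tanh(a)^2, and da/dw1 = x
    return d_w1, d_w2


def numerical_grad(w1, w2, x, y, eps=1e-6):
    """Central finite differences, used only to sanity-check backprop."""
    g1 = (loss(w1 + eps, w2, x, y) - loss(w1 - eps, w2, x, y)) / (2 * eps)
    g2 = (loss(w1, w2 + eps, x, y) - loss(w1, w2 - eps, x, y)) / (2 * eps)
    return g1, g2


if __name__ == "__main__":
    print("backprop:     ", backprop(0.5, -1.2, 0.8, 0.3))
    print("finite diffs: ", numerical_grad(0.5, -1.2, 0.8, 0.3))
```

Moving each weight a small step against its gradient then lowers the loss, which is exactly the update rule discussed next.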

The simplest weight update method is stochastic gradient descent (SGD): in each step, the weights move in the direction of the negative gradient. There are also more advanced methods, such as momentum and AdaGrad. These optimizers are called first-order methods because they use only first-order derivative (gradient) information to modify the update direction.
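A minimal sketch of the three first-order update rules named above, applied to a toy quadratic loss with curvatures 1 and 10 (an assumption chosen so that the two coordinates are badly scaled). The learning rates are illustrative rather than tuned values from the paper, and the momentum step uses the common heavy-ball form.

```python
import math


def sgd_step(w, g, lr):
    # Plain SGD: move each weight against its gradient.
    return [wi - lr * gi for wi, gi in zip(w, g)]


def momentum_step(w, g, v, lr, beta=0.9):
    # Heavy-ball momentum: v accumulates a decaying sum of past gradients.
    v = [beta * vi + gi for vi, gi in zip(v, g)]
    w = [wi - lr * vi for wi, vi in zip(w, v)]
    return w, v


def adagrad_step(w, g, s, lr, eps=1e-8):
    # AdaGrad: s accumulates squared gradients, giving each coordinate its own step size.
    s = [si + gi * gi for si, gi in zip(s, g)]
    w = [wi - lr * gi / (math.sqrt(si) + eps) for wi, gi, si in zip(w, g, s)]
    return w, s


def quad_loss(w, c):
    # Toy loss f(w) = 0.5 * sum_i c_i * w_i^2 (assumed for illustration).
    return 0.5 * sum(ci * wi * wi for ci, wi in zip(c, w))


def quad_grad(w, c):
    # Gradient of the toy loss: c_i * w_i.
    return [ci * wi for ci, wi in zip(c, w)]


def run(method, steps=100):
    """Run one optimizer on the toy quadratic and return the final loss."""
    c = [1.0, 10.0]
    w = [1.0, 1.0]
    state = [0.0, 0.0]  # momentum buffer or AdaGrad accumulator
    for _ in range(steps):
        g = quad_grad(w, c)
        if method == "sgd":
            w = sgd_step(w, g, lr=0.05)
        elif method == "momentum":
            w, state = momentum_step(w, g, state, lr=0.01)
        elif method == "adagrad":
            w, state = adagrad_step(w, g, state, lr=0.5)
        else:
            raise ValueError(f"unknown method: {method}")
    return quad_loss(w, c)


if __name__ == "__main__":
    for name in ("sgd", "momentum", "adagrad"):
        print(name, run(name))
```

Every rule consumes only the gradient; AdaGrad's per-coordinate scaling is built from past gradients, which is why it still counts as a first-order method.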

There are also higher-order optimization methods, such as Shampoo and K-FAC, which have been shown to improve convergence and reduce the number of iterations. These methods capture how the gradients change. Using this additional information, higher-order optimizers can find more effective update directions by taking correlations between different parameter groups into account. The drawback is that computing higher-order update directions is computationally more expensive than computing first-order updates.
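The benefit and the cost of curvature information can be seen on a two-parameter quadratic whose Hessian has off-diagonal terms, i.e. correlated parameters. The sketch below compares one plain gradient step with one step preconditioned by the exact inverse Hessian (a Newton step). It only illustrates the idea: Shampoo and K-FAC do not invert the full Hessian but use cheaper Kronecker-factored approximations, and the matrix and starting point here are arbitrary choices.

```python
def matvec(A, v):
    """2x2 matrix times a 2-vector."""
    return [A[0][0] * v[0] + A[0][1] * v[1],
            A[1][0] * v[0] + A[1][1] * v[1]]


def inverse_2x2(A):
    """Inverse of a 2x2 matrix via the adjugate formula."""
    det = A[0][0] * A[1][1] - A[0][1] * A[1][0]
    return [[A[1][1] / det, -A[0][1] / det],
            [-A[1][0] / det, A[0][0] / det]]


def loss(A, w):
    """Quadratic f(w) = 0.5 * w^T A w; off-diagonal entries couple the parameters."""
    Aw = matvec(A, w)
    return 0.5 * (w[0] * Aw[0] + w[1] * Aw[1])


def gradient_step(A, w, lr):
    """First-order step: uses only the gradient A w."""
    g = matvec(A, w)
    return [w[0] - lr * g[0], w[1] - lr * g[1]]


def preconditioned_step(A, w):
    """Second-order (Newton) step: rescales the gradient by the inverse Hessian A^-1."""
    g = matvec(A, w)
    d = matvec(inverse_2x2(A), g)
    return [w[0] - d[0], w[1] - d[1]]


if __name__ == "__main__":
    A = [[3.0, 2.0], [2.0, 3.0]]  # correlated parameters (assumed example)
    w0 = [1.0, -0.5]
    print("start:         ", loss(A, w0))
    print("gradient step: ", loss(A, gradient_step(A, w0, lr=0.2)))
    print("Newton step:   ", loss(A, preconditioned_step(A, w0)))
```

On a quadratic the preconditioned step lands on the minimum at once, but for n parameters forming and inverting a full n x n matrix costs on the order of n^3 operations, which is the expense that motivates approximations like Shampoo and K-FAC.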

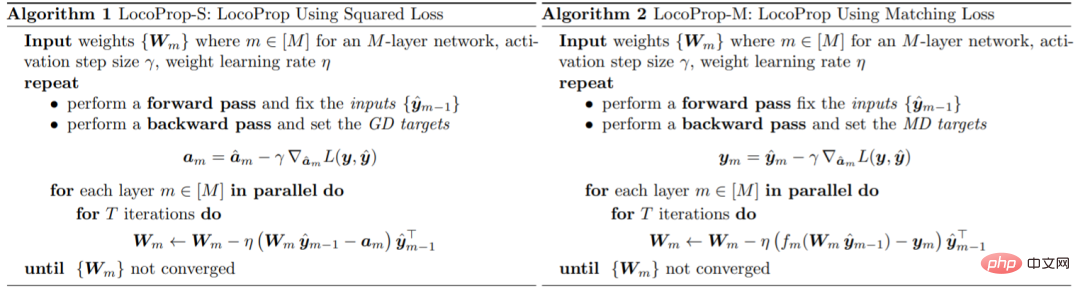

In the paper, Google introduced LocoProp, a framework for training DNN models that treats a neural network as a modular composition of layers. In general, each layer of a neural network applies a linear transformation to its input, followed by a nonlinear activation function. In LocoProp, each layer is assigned its own weight regularizer, output target, and loss function, and each layer's loss function is chosen to match that layer's activation function. With this formulation, the local losses for a given mini-batch can be minimized iteratively and in parallel across layers.

Google minimizes these local losses with first-order optimizers, thereby avoiding the computational cost of higher-order optimizers.
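For a single linear layer, a local problem of this kind can be sketched as a squared loss between the layer's output and its target, plus a proximal regularizer (1/(2*eta)) * ||w - w_old||^2 that keeps the weights near their current values. The sketch below, with hypothetical names and a single input vector, solves it with plain gradient descent (a first-order method) and checks the answer against the closed-form minimizer. It illustrates the structure only; it is not Google's implementation, and the exact loss and regularizer in the paper may differ.

```python
def dot(u, v):
    return sum(ui * vi for ui, vi in zip(u, v))


def local_objective(w, w_old, x, target, eta):
    """Squared local loss on the layer output plus a proximal term around w_old."""
    r = dot(w, x) - target
    prox = sum((wi - woi) ** 2 for wi, woi in zip(w, w_old)) / (2 * eta)
    return 0.5 * r * r + prox


def solve_local_gd(w_old, x, target, eta, lr=0.1, steps=200):
    """Minimize the local objective with plain gradient descent."""
    w = list(w_old)
    for _ in range(steps):
        r = dot(w, x) - target
        grad = [r * xi + (wi - woi) / eta for wi, xi, woi in zip(w, x, w_old)]
        w = [wi - lr * gi for wi, gi in zip(w, grad)]
    return w


def solve_local_closed_form(w_old, x, target, eta):
    """Exact minimizer, obtained by setting the gradient of the objective to zero."""
    r = (dot(w_old, x) - target) / (1 + eta * dot(x, x))
    return [woi - eta * r * xi for woi, xi in zip(w_old, x)]


if __name__ == "__main__":
    w_old, x, t, eta = [0.2, -0.1, 0.4], [1.0, -2.0, 0.5], 1.5, 0.5
    print("gradient descent:", solve_local_gd(w_old, x, t, eta))
    print("closed form:     ", solve_local_closed_form(w_old, x, t, eta))
```

The proximal weight eta acts like a step size: a small eta keeps the solution close to w_old, while a large eta lets the layer chase its target more aggressively.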

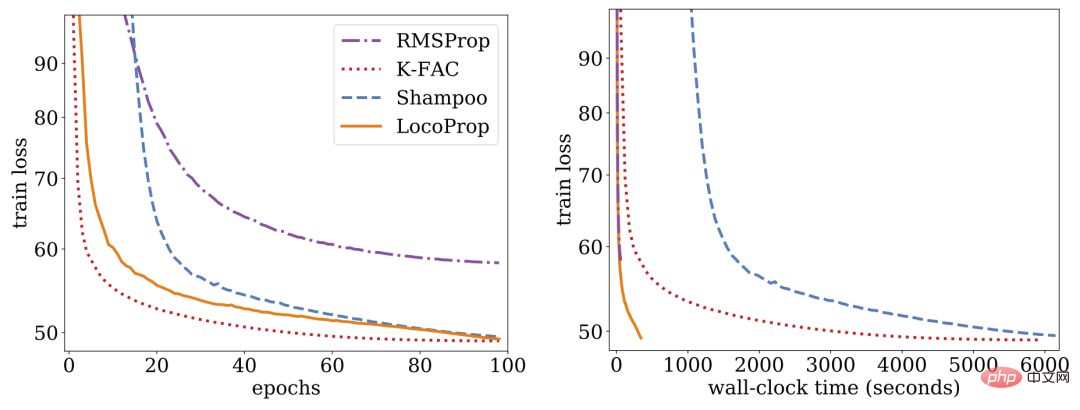

The results show that LocoProp outperforms first-order methods on deep autoencoder benchmarks and performs comparably to higher-order optimizers such as Shampoo and K-FAC, without their high memory and computational requirements.

LocoProp: Enhanced Backpropagation through Local Loss Optimization

Neural networks are typically viewed as compositions of functions, in which each layer transforms its input into an output representation. LocoProp adopts this perspective when decomposing the network into layers. In particular, instead of updating a layer's weights to minimize a loss function at the network's output, LocoProp applies a predefined local loss function specific to each layer. For a given layer, the loss function is chosen to match the activation function; for example, a tanh loss is chosen for a layer with a tanh activation. In addition, a regularization term ensures that the updated weights do not deviate too far from their current values.
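A loss "matched" to an activation can be written down explicitly. The sketch below assumes the standard matching-loss construction for a transfer function f, namely L_f(a, y) = integral from f^-1(y) to a of (f(z) - y) dz, where a is the pre-activation and y the target output. For tanh this has a closed form whose derivative with respect to a is simply tanh(a) - y, and for an identity activation it reduces to the squared loss. The function names are illustrative, not from the paper.

```python
import math


def log_cosh(a):
    # Numerically stable log(cosh(a)) = |a| + log1p(exp(-2|a|)) - log(2).
    a = abs(a)
    return a + math.log1p(math.exp(-2.0 * a)) - math.log(2.0)


def tanh_matching_loss(a, y):
    """Matching loss for tanh: integral of (tanh(z) - y) dz from atanh(y) to a.

    a is the pre-activation; y is the target post-activation, strictly inside (-1, 1).
    """
    if not -1.0 < y < 1.0:
        raise ValueError("target must lie strictly inside (-1, 1)")
    a_star = math.atanh(y)  # pre-activation that reproduces the target exactly
    return (log_cosh(a) - y * a) - (log_cosh(a_star) - y * a_star)


def tanh_matching_grad(a, y):
    """Derivative of the tanh matching loss w.r.t. the pre-activation."""
    return math.tanh(a) - y


def identity_matching_loss(a, y):
    """The same construction for an identity activation gives the squared loss."""
    return 0.5 * (a - y) ** 2


if __name__ == "__main__":
    for a in (-1.0, 0.0, math.atanh(0.6), 1.0):
        print(a, tanh_matching_loss(a, 0.6), tanh_matching_grad(a, 0.6))
```

Because the gradient is f(a) - y, the loss is convex in the pre-activation and vanishes exactly when the layer's output equals its target, which is what makes it a convenient per-layer objective.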

Similar to backpropagation, LocoProp applies a forward pass to compute the activations. In the backward pass, LocoProp sets targets for the neurons in each layer. Finally, LocoProp splits model training into independent problems across layers, in which several local updates can be applied to each layer's weights in parallel.
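Putting these steps together, the following sketch runs one LocoProp-style step on a hypothetical toy network with one unit per layer (a tanh hidden layer and an identity output layer). Two details are assumptions for illustration rather than taken from the text: each layer's target is formed by taking one gradient step of size gamma on that layer's output, and each local problem is solved with a fixed number of gradient-descent steps. This is a sketch of the idea, not the paper's algorithm.

```python
import math


def forward(w1, w2, x):
    """Layer 1: h = tanh(w1 * x). Layer 2: y_hat = w2 * h (identity activation)."""
    h = math.tanh(w1 * x)
    return h, w2 * h


def global_loss(w1, w2, x, y):
    _, y_hat = forward(w1, w2, x)
    return 0.5 * (y_hat - y) ** 2


def locoprop_style_step(w1, w2, x, y, gamma=0.5, eta=1.0, lr=0.5, inner_steps=20):
    # Forward pass: compute the activations.
    h, y_hat = forward(w1, w2, x)
    # Backward pass: gradients of the global loss w.r.t. each layer's output ...
    d_yhat = y_hat - y
    d_h = d_yhat * w2
    # ... used to set a target per layer (assumed: one gradient step on the output).
    t2 = y_hat - gamma * d_yhat
    t1 = h - gamma * d_h

    # The two local problems below share no state, so they could run in parallel.
    # Layer 2 (identity activation): squared loss on w2 * h plus a proximal term.
    new_w2 = w2
    for _ in range(inner_steps):
        g = (new_w2 * h - t2) * h + (new_w2 - w2) / eta
        new_w2 -= lr * g

    # Layer 1 (tanh activation): tanh matching loss, whose gradient w.r.t. the
    # pre-activation is tanh(w1 * x) - t1, plus a proximal term.
    new_w1 = w1
    for _ in range(inner_steps):
        g = (math.tanh(new_w1 * x) - t1) * x + (new_w1 - w1) / eta
        new_w1 -= lr * g

    return new_w1, new_w2


if __name__ == "__main__":
    w1, w2, x, y = 0.5, 1.0, 1.0, 1.0
    print("loss before:", global_loss(w1, w2, x, y))
    w1, w2 = locoprop_style_step(w1, w2, x, y)
    print("loss after: ", global_loss(w1, w2, x, y))
```

Larger values of gamma or eta let each layer move further from its current weights, so in this sketch they play a role similar to a learning rate.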

Google conducted experiments on deep autoencoder models, a common benchmark for evaluating optimization algorithms. The researchers extensively tuned several commonly used first-order optimizers, including SGD, SGD with momentum, AdaGrad, RMSProp, and Adam, as well as the higher-order optimizers Shampoo and K-FAC, and compared the results with LocoProp. The results show that LocoProp performs significantly better than the first-order optimizers and is comparable to the higher-order optimizers, while running significantly faster on a single GPU.

