A neural network-based strategy to enhance quantum simulations

Near-term quantum computers provide a promising platform for finding the ground state of quantum systems, a fundamental task in physics, chemistry and materials science. However, current methods are limited by noise and by the limited resources of near-term quantum hardware.

Researchers at the University of Waterloo in Canada have introduced neural error mitigation, which uses neural networks to improve estimates of ground states and ground-state observables obtained from near-term quantum simulations. To demonstrate the method's broad applicability, the researchers applied neural error mitigation to the ground states of the H2 and LiH molecular Hamiltonians and of the lattice Schwinger model, all prepared with a variational quantum eigensolver.

The results show that neural error mitigation improves numerical and experimental variational quantum eigensolver computations, yielding low energy errors, high fidelities, and accurate estimates of more complex observables such as order parameters and entanglement entropy, without requiring additional quantum resources. Furthermore, neural error mitigation is agnostic to the quantum state preparation algorithm used, the quantum hardware implementing it, and the specific noise channels affecting the experiment, which contributes to its versatility as a quantum simulation tool.

The research, titled "Neural Error Mitigation of Near-Term Quantum Simulations", was published in Nature Machine Intelligence on July 20, 2022.

Since the early 20th century, scientists have been developing comprehensive theories that describe the behavior of quantum mechanical systems. However, the computational costs required to study these systems often exceed the capabilities of current scientific computing methods and hardware. Therefore, computational infeasibility remains an obstacle to the practical application of these theories to scientific and technical problems.

Simulation of quantum systems on quantum computers (referred to here as quantum simulation) shows promise in overcoming these obstacles and has been a fundamental driving force behind the concept and creation of quantum computers. In particular, quantum simulations of the ground and steady states of quantum many-body systems beyond the reach of classical computers are expected to have a significant impact on nuclear physics, particle physics, quantum gravity, condensed matter physics, quantum chemistry, and materials science. However, current and near-term quantum computers remain constrained by factors such as the number of available qubits and the effects of noise. Quantum error correction can eliminate noise-induced errors and offers a path to fault-tolerant quantum computing. In practice, however, it demands a large overhead in the number of qubits and very low physical error rates, both of which are beyond the capabilities of current and near-term devices.

Until fault-tolerant quantum simulation becomes achievable, variational algorithms substantially reduce the hardware requirements and make use of the capabilities of noisy intermediate-scale quantum (NISQ) devices.

A prominent example is the variational quantum eigensolver (VQE), a hybrid quantum-classical algorithm that iteratively optimizes the parameters of a parameterized quantum circuit so that the energy of its output state approaches the lowest eigenvalue of the target Hamiltonian. Along with other variational algorithms, VQE has become a leading strategy for achieving quantum advantage on near-term devices and for accelerating progress in multiple fields of science and technology.
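To make the VQE loop concrete, here is a minimal sketch under illustrative assumptions: a hypothetical one-qubit Hamiltonian H = Z + 0.5X (not one of the paper's models), a single R_y rotation as the parameterized circuit, exact (noise-free, shot-free) energy evaluation standing in for the quantum device, and plain gradient descent using the parameter-shift rule as the classical optimizer.

```python
import numpy as np

# Hypothetical one-qubit toy Hamiltonian H = Z + 0.5 X (illustration only).
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
X = np.array([[0.0, 1.0], [1.0, 0.0]])
H = Z + 0.5 * X


def ansatz_state(theta):
    """Parameterized circuit output |psi(theta)> = R_y(theta)|0> = [cos(theta/2), sin(theta/2)]."""
    return np.array([np.cos(theta / 2), np.sin(theta / 2)])


def energy(theta):
    """Cost function <psi(theta)|H|psi(theta)>, evaluated exactly (no shot or hardware noise)."""
    psi = ansatz_state(theta)
    return float(psi @ H @ psi)


def parameter_shift_gradient(theta):
    # For an R_y rotation, dE/dtheta = [E(theta + pi/2) - E(theta - pi/2)] / 2.
    return 0.5 * (energy(theta + np.pi / 2) - energy(theta - np.pi / 2))


def vqe(theta0=0.1, lr=0.2, steps=500):
    """Classical outer loop: update the circuit parameter by gradient descent."""
    theta = theta0
    for _ in range(steps):
        theta -= lr * parameter_shift_gradient(theta)
    return theta, energy(theta)


if __name__ == "__main__":
    theta, e = vqe()
    print(f"VQE energy {e:.6f}, exact ground energy {np.linalg.eigvalsh(H)[0]:.6f}")
```

On hardware, each call to `energy` would instead be estimated from a finite number of noisy measurements, which is exactly where the errors that NEM targets enter.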

Experimental implementation of variational quantum algorithms remains challenging for many scientific problems because noisy intermediate-scale quantum devices are affected by various noise sources and imperfections. Several quantum error mitigation (QEM) methods have been proposed and experimentally verified to address these issues, improving quantum computations without the quantum resources required for quantum error correction.

Typically, these methods rely on specific information about the noise channels affecting the quantum computation, the hardware implementation, or the quantum algorithm itself. Examples include implicit representations of the noise model and of how it affects the estimated observables; specific knowledge of the subspace in which the prepared quantum state should reside; and characterization and mitigation of noise on individual components of the computation, such as single-qubit and two-qubit gate errors, as well as state preparation and measurement errors.
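For contrast with NEM, the following is a minimal sketch of one conventional, characterization-based technique of the kind listed above: readout (measurement) error mitigation by inverting a calibrated confusion matrix. The function name, the calibration values in the usage example, and the assumption that readout errors are uncorrelated across qubits are all illustrative choices, not details from the paper.

```python
import numpy as np


def mitigate_readout(noisy_probs, confusion_matrices):
    """Undo readout errors under a tensor-product (uncorrelated) noise model.

    confusion_matrices[q][i, j] = P(read i | prepared j) for qubit q, assumed to have been
    estimated beforehand with calibration circuits. Qubit 0 is the most significant bit.
    """
    full = np.array([[1.0]])
    for m in confusion_matrices:
        full = np.kron(full, m)  # joint confusion matrix over all bitstrings
    mitigated = np.linalg.solve(full, noisy_probs)
    # Matrix inversion can produce small negative "probabilities"; clip and renormalize.
    mitigated = np.clip(mitigated, 0.0, None)
    return mitigated / mitigated.sum()


if __name__ == "__main__":
    m0 = np.array([[0.95, 0.10], [0.05, 0.90]])  # hypothetical calibration, qubit 0
    m1 = np.array([[0.97, 0.08], [0.03, 0.92]])  # hypothetical calibration, qubit 1
    ideal = np.array([0.5, 0.0, 0.0, 0.5])       # Bell-state outcome distribution
    noisy = np.kron(m0, m1) @ ideal
    print(mitigate_readout(noisy, [m0, m1]))
```

The method only works as well as the noise characterization it is given, which is the kind of hardware-specific dependence that NEM is designed to avoid.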

Machine learning techniques have recently been repurposed as tools to solve complex problems in quantum many-body physics and quantum information processing, providing an alternative approach to QEM. Here, researchers from the University of Waterloo introduce a QEM strategy called neural error mitigation (NEM), which uses neural networks to mitigate errors in the approximate preparation of the quantum ground state of the Hamiltonian.

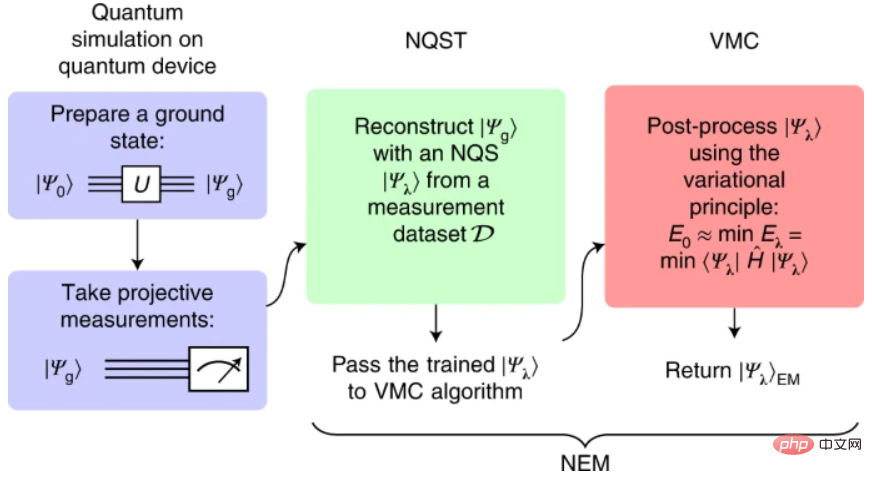

The NEM algorithm consists of two steps. First, the researchers perform neural quantum state (NQS) tomography (NQST), training an NQS ansatz on experimentally accessible measurements so that it represents the approximate ground state prepared by the noisy quantum device. Inspired by conventional quantum state tomography (QST), NQST is a data-driven, machine-learning approach to QST that efficiently reconstructs complex quantum states from a limited number of measurements.
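A minimal sketch of the NQST idea, under strong simplifying assumptions: the target state is taken to have real, non-negative amplitudes, so computational-basis (Z) measurements alone determine it and ψ(s) = √p(s). General NQST also needs measurements in rotated bases to recover phases. A tiny autoregressive model with logistic conditionals stands in for the paper's Transformer, and the class name, learning rate, epoch count and toy dataset are hypothetical.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


class AutoregressiveModel:
    """p(s) = prod_i p(s_i | s_<i), with p(s_i = 1 | s_<i) = sigmoid(b_i + sum_{j<i} W_ij s_j)."""

    def __init__(self, n_qubits):
        self.n = n_qubits
        self.b = np.zeros(n_qubits)
        self.W = np.zeros((n_qubits, n_qubits))
        # Strictly lower-triangular mask: qubit i may only depend on qubits j < i.
        self.mask = np.tril(np.ones((n_qubits, n_qubits)), k=-1)

    def conditionals(self, s):
        """s has shape (batch, n) with entries in {0, 1}; returns p(s_i = 1 | s_<i)."""
        return sigmoid(self.b + s @ (self.W * self.mask).T)

    def log_prob(self, s):
        q = self.conditionals(s)
        return np.sum(s * np.log(q) + (1 - s) * np.log(1 - q), axis=1)

    def amplitude(self, s):
        # Assumption: real, non-negative amplitudes, so psi(s) = sqrt(p(s)).
        return np.exp(0.5 * self.log_prob(s))

    def fit(self, samples, lr=0.5, epochs=500):
        """Maximum-likelihood training: gradient ascent on the mean log-likelihood of the data."""
        for _ in range(epochs):
            err = samples - self.conditionals(samples)  # d log p / d logits
            self.b += lr * err.mean(axis=0)
            self.W += lr * (err.T @ samples / len(samples)) * self.mask


if __name__ == "__main__":
    # Hypothetical Z-basis measurement record of the Bell state (|00> + |11>)/sqrt(2).
    rng = np.random.default_rng(0)
    bits = rng.integers(0, 2, size=2000)
    data = np.stack([bits, bits], axis=1).astype(float)
    model = AutoregressiveModel(2)
    model.fit(data)
    basis = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=float)
    print(np.round(model.amplitude(basis), 3))
```

The autoregressive factorization keeps p(s) normalized by construction, which is one reason such models are convenient as NQS ansätze.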

The variational Monte Carlo (VMC) algorithm is then applied to the same NQS ansatz (also called the NEM ansatz) to improve its representation of the unknown ground state. In the spirit of VQE, VMC approximates the ground state of a Hamiltonian using a classical variational ansatz, here the NQS ansatz.
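The VMC step can be sketched as follows, again as an illustration rather than the paper's implementation. Assumptions: a hypothetical 3-spin transverse-field Ising chain as the Hamiltonian, a simple log-linear (Jastrow-like) ansatz with real, positive amplitudes in place of the Transformer, and exact sampling from |ψ|² by enumerating all configurations in place of Markov-chain sampling (only feasible for tiny systems). The energy is estimated as the mean of the local energy E_loc(s) = Σ_{s'} ⟨s|H|s'⟩ ψ(s')/ψ(s), and its gradient as 2·Cov(E_loc, O_k) with O_k = ∂ log ψ/∂θ_k.

```python
import itertools

import numpy as np

# Hypothetical toy model: open transverse-field Ising chain H = -J sum Z_i Z_{i+1} - h sum X_i.
N_SPINS, J, H_FIELD = 3, 1.0, 1.0
# All 2^n configurations with z_i = +/-1; enumerating them is only feasible for tiny n.
CONFIGS = np.array(list(itertools.product([1.0, -1.0], repeat=N_SPINS)))


def features(z):
    """O(s) = d log psi(s) / d theta for the log-linear (Jastrow-like) ansatz."""
    return np.concatenate([z, z[:-1] * z[1:]])


def log_psi(theta, z):
    return float(theta @ features(z))


def local_energy(theta, z):
    """E_loc(s) = sum_{s'} <s|H|s'> psi(s') / psi(s)."""
    diagonal = -J * np.sum(z[:-1] * z[1:])
    off_diagonal = 0.0
    for i in range(N_SPINS):
        flipped = z.copy()
        flipped[i] *= -1.0  # X_i flips spin i
        off_diagonal -= H_FIELD * np.exp(log_psi(theta, flipped) - log_psi(theta, z))
    return float(diagonal + off_diagonal)


def born_probs(theta):
    log_p = np.array([2.0 * log_psi(theta, z) for z in CONFIGS])
    p = np.exp(log_p - log_p.max())
    return p / p.sum()


def variational_energy(theta):
    """Exact <psi|H|psi> / <psi|psi>, used here only to check the result."""
    e_loc = np.array([local_energy(theta, z) for z in CONFIGS])
    return float(born_probs(theta) @ e_loc)


def exact_ground_energy():
    eye, x, z = np.eye(2), np.array([[0.0, 1.0], [1.0, 0.0]]), np.diag([1.0, -1.0])

    def op(single, site):
        out = np.array([[1.0]])
        for k in range(N_SPINS):
            out = np.kron(out, single if k == site else eye)
        return out

    h = np.zeros((2**N_SPINS, 2**N_SPINS))
    for i in range(N_SPINS - 1):
        h -= J * op(z, i) @ op(z, i + 1)
    for i in range(N_SPINS):
        h -= H_FIELD * op(x, i)
    return float(np.linalg.eigvalsh(h)[0])


def vmc(steps=200, n_samples=500, lr=0.05, seed=0):
    rng = np.random.default_rng(seed)
    theta = np.zeros(2 * N_SPINS - 1)  # theta = 0 is the uniform superposition |+>^n
    feats = np.array([features(z) for z in CONFIGS])
    for _ in range(steps):
        # Exact sampling from |psi|^2 stands in for Metropolis Markov-chain sampling.
        idx = rng.choice(len(CONFIGS), size=n_samples, p=born_probs(theta))
        e_loc = np.array([local_energy(theta, z) for z in CONFIGS])[idx]
        o = feats[idx]
        # Energy gradient for a real, positive ansatz: 2 * Cov(E_loc, O_k).
        grad = 2.0 * np.mean((e_loc - e_loc.mean())[:, None] * (o - o.mean(axis=0)), axis=0)
        theta -= lr * grad
    return theta


if __name__ == "__main__":
    theta = vmc()
    print(f"VMC energy {variational_energy(theta):.4f}, exact {exact_ground_energy():.4f}")
```

In NEM, the starting parameters would come from the NQST step rather than from θ = 0; the sketch starts from a uniform state only to stay self-contained.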

Illustration: The NEM procedure. (Source: paper)

Here, the researchers used an autoregressive generative neural network as the NEM ansatz; more specifically, they used the Transformer architecture and showed that the model performs well as an NQS. Because it can model long-range temporal and spatial correlations, this architecture underpins many state-of-the-art results in natural language and image processing, and it has the potential to model long-range quantum correlations.
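The following minimal sketch shows how a Transformer-style network can act as an autoregressive NQS: inputs are shifted right behind a start token and a causal mask is applied, so the output at position i is a valid conditional p(s_i = 1 | s_<i), and exact, independent samples can be drawn one qubit at a time. It is a single untrained attention layer with random weights; the class name, widths and initialization are hypothetical, and the paper's actual architecture (layers, heads, sizes) is not reproduced here.

```python
import numpy as np


class TinyCausalTransformer:
    """One causal self-attention layer whose outputs are the conditionals p(s_i = 1 | s_<i)."""

    def __init__(self, n_qubits, d=8, seed=0):
        rng = np.random.default_rng(seed)
        self.n = n_qubits
        self.embed = rng.normal(size=(3, d))  # token embeddings: 0, 1 and a start token (2)
        self.pos = rng.normal(size=(n_qubits, d))  # learned positional embeddings
        self.wq, self.wk, self.wv = (rng.normal(size=(d, d)) / np.sqrt(d) for _ in range(3))
        self.w_out = rng.normal(size=d)

    def conditionals(self, s):
        """Return q[i] = p(s_i = 1 | s_<i) for a single bitstring s of length n."""
        tokens = np.concatenate([[2], s[:-1]]).astype(int)  # shift right: position i sees s_<i
        x = self.embed[tokens] + self.pos
        q, k, v = x @ self.wq, x @ self.wk, x @ self.wv
        scores = q @ k.T / np.sqrt(x.shape[1])
        causal = np.tril(np.ones((self.n, self.n), dtype=bool))
        scores = np.where(causal, scores, -np.inf)  # no attention to later positions
        attn = np.exp(scores - scores.max(axis=1, keepdims=True))
        attn /= attn.sum(axis=1, keepdims=True)
        h = x + attn @ v  # residual connection
        return 1.0 / (1.0 + np.exp(-(h @ self.w_out)))

    def log_prob(self, s):
        q = self.conditionals(s)
        return float(np.sum(np.where(s == 1, np.log(q), np.log1p(-q))))

    def sample(self, rng):
        """Exact ancestral sampling: draw s_0, then s_1 given s_0, and so on."""
        s = np.zeros(self.n, dtype=int)
        for i in range(self.n):
            s[i] = rng.random() < self.conditionals(s)[i]
        return s


if __name__ == "__main__":
    model = TinyCausalTransformer(4)
    print(model.sample(np.random.default_rng(1)))
```

Because sampling is exact and i.i.d., such a model avoids the autocorrelation issues of Markov-chain sampling, which is convenient both for NQST training and for VMC.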

NEM has several advantages over other error mitigation techniques. First, it has low experimental overhead: it requires only a simple set of experimentally feasible measurements to learn the properties of the noisy quantum state prepared by VQE. The cost of error mitigation in NEM is therefore shifted from quantum resources (i.e., additional quantum experiments and measurements) to classical computing resources for machine learning. In particular, the researchers note that the main cost of NEM is running VMC to convergence. Another advantage of NEM is that it is agnostic to the quantum simulation algorithm, the device that implements it, and the specific noise channels that affect the quantum simulation. It can therefore be combined with other QEM techniques and applied to analog quantum simulators as well as digital quantum circuits.

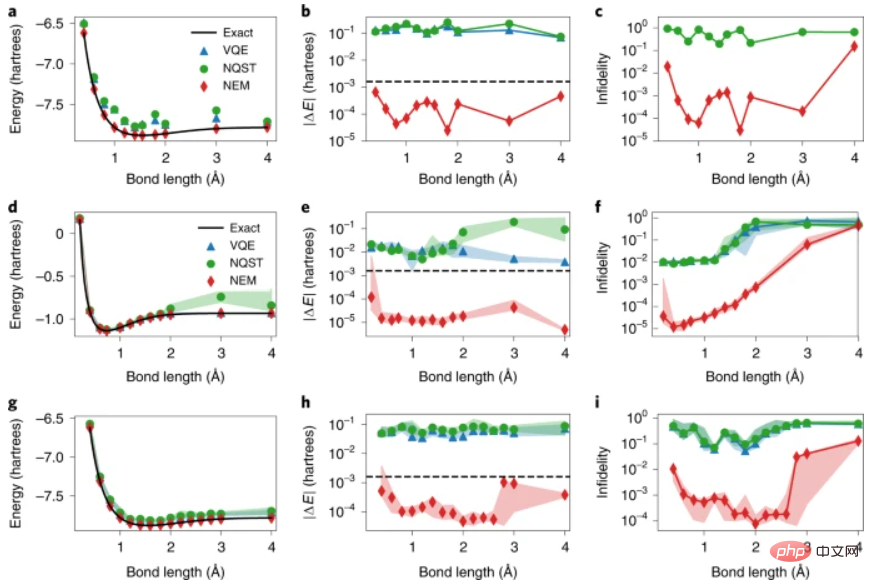

Illustration: Experimental and numerical NEM results of the molecular Hamiltonian. (Source: Paper)


NEM also addresses the low measurement precision that arises when quantum observables are estimated on near-term quantum devices. This is particularly important in quantum simulation, where accurate estimation of quantum observables is crucial for practical applications. NEM tackles this problem at each step of the algorithm. In the first step, NQST reduces the variance of the observable estimates at the cost of introducing a small bias. This bias, as well as the residual variance, can be further reduced by training the NEM ansatz with VMC, whose energy estimates have zero variance once the ansatz reaches the exact ground state.
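The zero-variance property mentioned above can be checked numerically. The sketch below, built on a hypothetical 4-spin transverse-field Ising chain with exact state vectors instead of sampled estimates, computes the mean and variance of the local energy E_loc(s) = (Hψ)(s)/ψ(s) under the Born distribution |ψ(s)|². For an exact eigenstate E_loc is the same for every s, so the variance vanishes; for a perturbed state it does not, and the mean stays above the ground energy, as the variational principle requires.

```python
import numpy as np


def tfim_hamiltonian(n, j=1.0, h=1.0):
    """Hypothetical test model: open chain H = -j sum Z_i Z_{i+1} - h sum X_i."""
    eye, x, z = np.eye(2), np.array([[0.0, 1.0], [1.0, 0.0]]), np.diag([1.0, -1.0])

    def op(single, site):
        out = np.array([[1.0]])
        for k in range(n):
            out = np.kron(out, single if k == site else eye)
        return out

    ham = np.zeros((2**n, 2**n))
    for i in range(n - 1):
        ham -= j * op(z, i) @ op(z, i + 1)
    for i in range(n):
        ham -= h * op(x, i)
    return ham


def local_energy_stats(ham, psi):
    """Mean and variance of E_loc(s) = (H psi)(s) / psi(s) under p(s) = |psi(s)|^2."""
    p = np.abs(psi) ** 2
    p /= p.sum()
    support = p > 1e-15
    e_loc = (ham @ psi)[support] / psi[support]
    mean = np.sum(p[support] * e_loc)
    var = np.sum(p[support] * np.abs(e_loc - mean) ** 2)
    return float(np.real(mean)), float(var)


if __name__ == "__main__":
    ham = tfim_hamiltonian(4)
    evals, evecs = np.linalg.eigh(ham)
    ground = evecs[:, 0]
    noisy = ground + 0.05 * np.random.default_rng(0).normal(size=ground.shape)
    print("exact ground state:", local_energy_stats(ham, ground))
    print("perturbed state:   ", local_energy_stats(ham, noisy))
```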

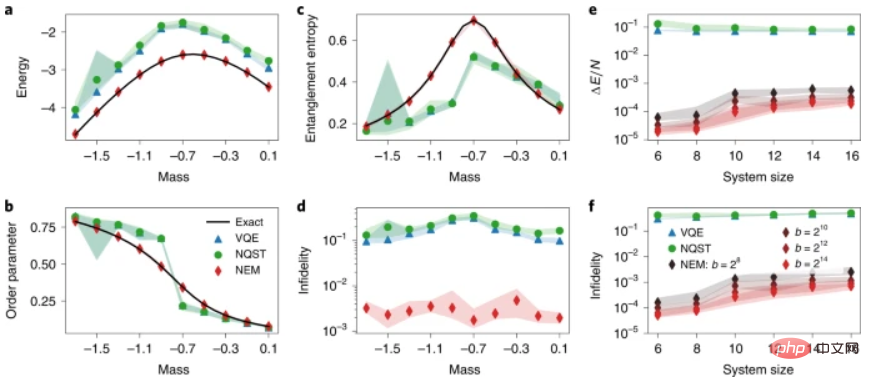

Illustration: The properties of the NEM applied to the ground state of the lattice Schwinger model. (Source: paper)


By using parameterized quantum circuits as the VQE ansatz and neural networks as the NQST and VMC ansatz, NEM combines two families of parameterized quantum states and three optimization problems, each with its own loss function. The researchers raise questions about the relationships among these families of states, their losses, and quantum advantage. Examining these relationships offers a new way to study the potential of noisy intermediate-scale quantum algorithms in the pursuit of quantum advantage, and may help draw a clearer line between simulations of classically tractable quantum systems and simulations that genuinely require quantum resources.
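One way to write the three optimization problems side by side is shown below. The notation is ours and only a sketch: U(θ) is the VQE circuit, Ψ_λ the neural-network ansatz, and 𝒟 a data set of measurement outcomes s recorded in bases b, with R_b the corresponding basis rotation; the NQST loss is written as a standard negative log-likelihood, which may differ in detail from the paper's exact objective.

$$
\begin{aligned}
\text{VQE:}\quad & \theta^\star = \arg\min_{\theta}\ \langle \psi_\theta | H | \psi_\theta \rangle, \qquad |\psi_\theta\rangle = U(\theta)\,|0\rangle^{\otimes n} \\
\text{NQST:}\quad & \lambda^\star = \arg\min_{\lambda}\ -\frac{1}{|\mathcal{D}|} \sum_{(\mathbf{b},\mathbf{s}) \in \mathcal{D}} \log \left| \langle \mathbf{s} | R_{\mathbf{b}} | \Psi_\lambda \rangle \right|^2, \qquad \mathcal{D} \text{ measured on } |\psi_{\theta^\star}\rangle \\
\text{VMC:}\quad & \lambda_{\mathrm{NEM}} = \arg\min_{\lambda}\ \frac{\langle \Psi_\lambda | H | \Psi_\lambda \rangle}{\langle \Psi_\lambda | \Psi_\lambda \rangle}, \qquad \text{initialized at } \lambda^\star
\end{aligned}
$$

The first and third problems minimize the same energy over different state families (quantum circuits versus neural networks), while the second links the two families through measurement data.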

Paper link: https://www.nature.com/articles/s42256-022-00509-0

Related reports: https://techxplore.com/news/2022-08-neural-networkbased-strategy-near-term-quantum.html

The above is the detailed content of A neural network-based strategy to enhance quantum simulations. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

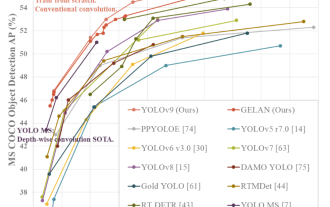

YOLO is immortal! YOLOv9 is released: performance and speed SOTA~

Feb 26, 2024 am 11:31 AM

YOLO is immortal! YOLOv9 is released: performance and speed SOTA~

Feb 26, 2024 am 11:31 AM

Today's deep learning methods focus on designing the most suitable objective function so that the model's prediction results are closest to the actual situation. At the same time, a suitable architecture must be designed to obtain sufficient information for prediction. Existing methods ignore the fact that when the input data undergoes layer-by-layer feature extraction and spatial transformation, a large amount of information will be lost. This article will delve into important issues when transmitting data through deep networks, namely information bottlenecks and reversible functions. Based on this, the concept of programmable gradient information (PGI) is proposed to cope with the various changes required by deep networks to achieve multi-objectives. PGI can provide complete input information for the target task to calculate the objective function, thereby obtaining reliable gradient information to update network weights. In addition, a new lightweight network framework is designed

The foundation, frontier and application of GNN

Apr 11, 2023 pm 11:40 PM

The foundation, frontier and application of GNN

Apr 11, 2023 pm 11:40 PM

Graph neural networks (GNN) have made rapid and incredible progress in recent years. Graph neural network, also known as graph deep learning, graph representation learning (graph representation learning) or geometric deep learning, is the fastest growing research topic in the field of machine learning, especially deep learning. The title of this sharing is "Basics, Frontiers and Applications of GNN", which mainly introduces the general content of the comprehensive book "Basics, Frontiers and Applications of Graph Neural Networks" compiled by scholars Wu Lingfei, Cui Peng, Pei Jian and Zhao Liang. . 1. Introduction to graph neural networks 1. Why study graphs? Graphs are a universal language for describing and modeling complex systems. The graph itself is not complicated, it mainly consists of edges and nodes. We can use nodes to represent any object we want to model, and edges to represent two

An overview of the three mainstream chip architectures for autonomous driving in one article

Apr 12, 2023 pm 12:07 PM

An overview of the three mainstream chip architectures for autonomous driving in one article

Apr 12, 2023 pm 12:07 PM

The current mainstream AI chips are mainly divided into three categories: GPU, FPGA, and ASIC. Both GPU and FPGA are relatively mature chip architectures in the early stage and are general-purpose chips. ASIC is a chip customized for specific AI scenarios. The industry has confirmed that CPUs are not suitable for AI computing, but they are also essential in AI applications. GPU Solution Architecture Comparison between GPU and CPU The CPU follows the von Neumann architecture, the core of which is the storage of programs/data and serial sequential execution. Therefore, the CPU architecture requires a large amount of space to place the storage unit (Cache) and the control unit (Control). In contrast, the computing unit (ALU) only occupies a small part, so the CPU is performing large-scale parallel computing.

'The owner of Bilibili UP successfully created the world's first redstone-based neural network, which caused a sensation on social media and was praised by Yann LeCun.'

May 07, 2023 pm 10:58 PM

'The owner of Bilibili UP successfully created the world's first redstone-based neural network, which caused a sensation on social media and was praised by Yann LeCun.'

May 07, 2023 pm 10:58 PM

In Minecraft, redstone is a very important item. It is a unique material in the game. Switches, redstone torches, and redstone blocks can provide electricity-like energy to wires or objects. Redstone circuits can be used to build structures for you to control or activate other machinery. They themselves can be designed to respond to manual activation by players, or they can repeatedly output signals or respond to changes caused by non-players, such as creature movement and items. Falling, plant growth, day and night, and more. Therefore, in my world, redstone can control extremely many types of machinery, ranging from simple machinery such as automatic doors, light switches and strobe power supplies, to huge elevators, automatic farms, small game platforms and even in-game machines. built computer. Recently, B station UP main @

A drone that can withstand strong winds? Caltech uses 12 minutes of flight data to teach drones to fly in the wind

Apr 09, 2023 pm 11:51 PM

A drone that can withstand strong winds? Caltech uses 12 minutes of flight data to teach drones to fly in the wind

Apr 09, 2023 pm 11:51 PM

When the wind is strong enough to blow the umbrella, the drone is stable, just like this: Flying with the wind is a part of flying in the air. From a large level, when the pilot lands the aircraft, the wind speed may be Bringing challenges to them; on a smaller level, gusty winds can also affect drone flight. Currently, drones either fly under controlled conditions, without wind, or are operated by humans using remote controls. Drones are controlled by researchers to fly in formations in the open sky, but these flights are usually conducted under ideal conditions and environments. However, for drones to autonomously perform necessary but routine tasks, such as delivering packages, they must be able to adapt to wind conditions in real time. To make drones more maneuverable when flying in the wind, a team of engineers from Caltech

Multi-path, multi-domain, all-inclusive! Google AI releases multi-domain learning general model MDL

May 28, 2023 pm 02:12 PM

Multi-path, multi-domain, all-inclusive! Google AI releases multi-domain learning general model MDL

May 28, 2023 pm 02:12 PM

Deep learning models for vision tasks (such as image classification) are usually trained end-to-end with data from a single visual domain (such as natural images or computer-generated images). Generally, an application that completes vision tasks for multiple domains needs to build multiple models for each separate domain and train them independently. Data is not shared between different domains. During inference, each model will handle a specific domain. input data. Even if they are oriented to different fields, some features of the early layers between these models are similar, so joint training of these models is more efficient. This reduces latency and power consumption, and reduces the memory cost of storing each model parameter. This approach is called multi-domain learning (MDL). In addition, MDL models can also outperform single

1.3ms takes 1.3ms! Tsinghua's latest open source mobile neural network architecture RepViT

Mar 11, 2024 pm 12:07 PM

1.3ms takes 1.3ms! Tsinghua's latest open source mobile neural network architecture RepViT

Mar 11, 2024 pm 12:07 PM

Paper address: https://arxiv.org/abs/2307.09283 Code address: https://github.com/THU-MIG/RepViTRepViT performs well in the mobile ViT architecture and shows significant advantages. Next, we explore the contributions of this study. It is mentioned in the article that lightweight ViTs generally perform better than lightweight CNNs on visual tasks, mainly due to their multi-head self-attention module (MSHA) that allows the model to learn global representations. However, the architectural differences between lightweight ViTs and lightweight CNNs have not been fully studied. In this study, the authors integrated lightweight ViTs into the effective

New research reveals the potential of quantum Monte Carlo to surpass neural networks in breaking through limitations, and a Nature sub-issue details the latest progress

Apr 24, 2023 pm 09:16 PM

New research reveals the potential of quantum Monte Carlo to surpass neural networks in breaking through limitations, and a Nature sub-issue details the latest progress

Apr 24, 2023 pm 09:16 PM

After four months, another collaborative work between ByteDance Research and Chen Ji's research group at the School of Physics at Peking University has been published in the top international journal Nature Communications: the paper "Towards the ground state of molecules via diffusion Monte Carlo neural networks" combines neural networks with diffusion Monte Carlo methods, greatly improving the application of neural network methods in quantum chemistry. The calculation accuracy, efficiency and system scale on related tasks have become the latest SOTA. Paper link: https://www.nature.com