Ever since Meta released LLaMA, billed as the "open source version of ChatGPT", the academic community has been on a roll.

First, Stanford proposed the 7-billion-parameter Alpaca. Then UC Berkeley, together with CMU, Stanford, UCSD and MBZUAI, released the 13-billion-parameter Vicuna, which rivals ChatGPT and Bard in more than 90% of cases.

Today, the ever-prolific UC Berkeley LMSys org released another Vicuna, this time with 7 billion parameters:

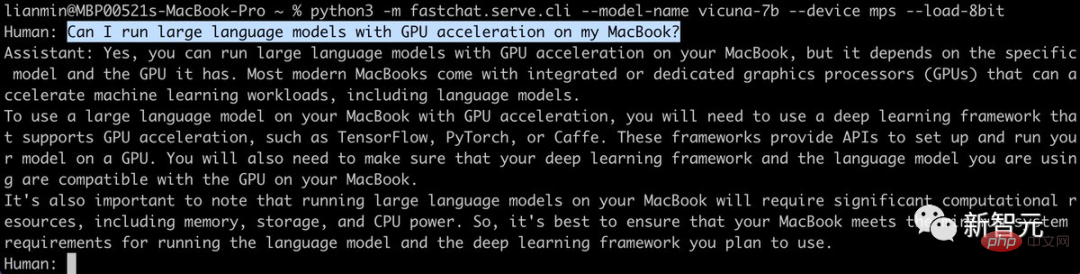

It is not only small, efficient and capable, but it also runs on a Mac with an M1/M2 chip using just two commands, and it can use GPU acceleration too!

## Project address: https://github.com/lm-sys/FastChat/#fine-tuning

Also today, researchers from Hugging Face released a 7-billion-parameter model of their own, StackLLaMA. It is a model fine-tuned from LLaMA-7B with reinforcement learning from human feedback.

## Vicuna-7B: truly single-GPU, and it runs on a Mac

Less than a week after announcing the model, UC Berkeley LMSys org released the Vicuna-13B weights.

Running the 13B model on a single GPU requires about 28GB of GPU memory, while running on CPU only requires about 60GB of RAM.

The 7-billion-parameter version released this time is much smaller: the requirements are cut in half.

In other words, running Vicuna-7B on a single GPU requires only about 14GB of GPU memory, and running on CPU only requires about 30GB of RAM.

On top of that, GPU acceleration can be enabled through the Metal backend on Macs with Apple silicon or AMD GPUs.

When the 13B model was released, many netizens complained:

The single GPU I had in mind: a 4090

The actual single GPU: 28GB of GPU memory or more

Now there is a new way around this problem: 8-bit compression, which cuts memory usage roughly in half at the cost of slightly lower model quality.

The 13B model's 28GB of GPU memory drops to about 14GB, and the 7B model's 14GB drops to about 7GB. (Because of activations, actual usage will be higher than this.)
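The halving follows from simple arithmetic on the bytes stored per weight. The sketch below is a back-of-the-envelope estimate, assuming 2 bytes per parameter for 16-bit weights and 1 byte per parameter for 8-bit weights. The helper name `weight_memory_gb` is made up for illustration, and the result covers the weights only, so real usage (with activations and runtime overhead) comes out higher.

```python
def weight_memory_gb(params_billion: float, bytes_per_param: float) -> float:
    """Estimate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billion * 1e9 * bytes_per_param / 1e9


for size in (7, 13):
    fp16 = weight_memory_gb(size, 2)  # assumed: 16-bit weights
    int8 = weight_memory_gb(size, 1)  # assumed: 8-bit weights
    print(f"{size}B: ~{fp16:.0f} GB in 16-bit, ~{int8:.0f} GB in 8-bit")
```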

The LMSys org researchers say that if you run out of RAM or GPU memory, you can add --load-8bit to the launch command to enable 8-bit compression.

This works on CPU, GPU and Metal alike, for both the 7B and the 13B model.

```
python3 -m fastchat.serve.cli --model-name /path/to/vicuna/weights --load-8bit
```
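To give a feel for why 8-bit compression saves memory but costs a little quality, here is a minimal sketch of one common scheme, absmax quantization. This is an illustrative assumption and not necessarily what FastChat does internally; the function names are hypothetical.

```python
def quantize_absmax(weights):
    """Map floats to integers in [-127, 127] using a single shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized, scale):
    """Recover approximate floats from the 8-bit integers."""
    return [q * scale for q in quantized]


weights = [0.12, -0.5, 0.33, 0.0, 0.49]
quantized, scale = quantize_absmax(weights)
restored = dequantize(quantized, scale)
# Each value now fits in one byte; the rounding error (at most about
# half of `scale` per weight) is the source of the small quality loss.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
print(quantized, max_error)
```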

Today, Hugging Face researchers published a blog post, "StackLLaMA: A practical guide to training LLaMA with RLHF".

Current large language models such as ChatGPT, GPT-4 and Claude all use reinforcement learning from human feedback (RLHF) to fine-tune model behavior so that responses are better aligned with user intent.

Here, the HF researchers walk through all the steps of training a LLaMA model with RLHF to answer questions on Stack Exchange, using a combination of the following:

· Supervised Fine Tuning (SFT)

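· Reward / preference modeling (RM)

· Reinforcement learning from human feedback (RLHF)

Take note!

The main goal of training StackLLaMA is to provide a tutorial and guide on how to train a model with RLHF, rather than to focus on model performance.

In other words, the model's answers can be quite funny. For example, ask it: "There is a llama in my garden, how can I get rid of it?"

StackLLaMA wraps up its answer with: "If none of the above methods work, it is time to call in reinforcements. If more than one person wants to catch this peculiar little fellow, why not gather a team? Work together, pool your strength, and the problem should be solved in no time."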

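When doing RLHF, the most important thing is to start from a capable model, because RLHF is only a fine-tuning step that aligns the model with the way we want it to interact and respond.

Meta's open-source LLaMA models range from 7B to 65B parameters and were trained on 1T to 1.4T tokens, which makes them among the strongest open-source models currently available.

The researchers therefore use the 7B model as the base for the subsequent fine-tuning.

For data, the researchers use the StackExchange dataset, which includes all questions and answers (from StackOverflow and other topics as well).

The advantage of this dataset is that every answer comes with its upvote count and a label indicating whether it was accepted.

Following the method described in the paper "A General Language Assistant as a Laboratory for Alignment", the researchers assign a score to each answer: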

score = log2 (1 + upvotes) rounded to the nearest integer, plus 1 if the questioner accepted the answer (we assign a score of −1 if the number of upvotes is negative).
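The scoring rule above can be sketched in a few lines. The function name `answer_score` is hypothetical, and treating a negative vote count as a flat −1 regardless of the accepted flag is one reading of the rule, labeled as an assumption in the code.

```python
import math


def answer_score(upvotes: int, accepted: bool) -> int:
    """Score a StackExchange answer from its upvotes and accepted flag."""
    if upvotes < 0:
        # Assumption: a negative vote count yields -1 regardless of acceptance.
        return -1
    score = round(math.log2(1 + upvotes))
    if accepted:
        score += 1
    return score


print(answer_score(7, accepted=True))
print(answer_score(0, accepted=False))
print(answer_score(-2, accepted=False))
```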

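A reward model always needs two answers per question to compare.

Some questions have dozens of answers, which leads to many possible pairs. The researchers therefore sample at most ten answer pairs per question to limit the number of data points per question.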
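A minimal sketch of this pair sampling, under stated assumptions: each answer is a hypothetical `(text, score)` tuple, pairs with equal scores are skipped because they express no preference, and the function name `sample_answer_pairs` is invented for illustration. The original pipeline may differ in these details.

```python
import itertools
import random


def sample_answer_pairs(answers, max_pairs=10, seed=0):
    """Return up to `max_pairs` (preferred, rejected) pairs for one question.

    `answers` is a list of (text, score) tuples. Pairs with equal scores are
    skipped (an assumption of this sketch), since they carry no preference.
    """
    pairs = []
    for a, b in itertools.combinations(answers, 2):
        if a[1] == b[1]:
            continue
        preferred, rejected = (a, b) if a[1] > b[1] else (b, a)
        pairs.append((preferred, rejected))
    rng = random.Random(seed)
    if len(pairs) > max_pairs:
        pairs = rng.sample(pairs, max_pairs)
    return pairs


answers = [(f"answer {i}", i % 4) for i in range(8)]
pairs = sample_answer_pairs(answers)
print(len(pairs))
```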

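Finally, the formatting is cleaned up by converting HTML to Markdown, which makes the model's output more readable.

Training even the smallest LLaMA model requires a great deal of memory. A quick calculation shows that a 7B-parameter model needs (2+8)*7B = 70GB of memory: 2 bytes per parameter for half-precision weights plus 8 bytes per parameter for optimizer state (for example with Adam). Intermediate values such as attention scores may require even more. As a result, the model cannot be trained even on a single 80GB A100.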

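One option is to use more efficient optimizers and half-precision training to squeeze more into memory, but that is still not enough.

Another option is to use parameter-efficient fine-tuning (PEFT) techniques, for example the PEFT library, which can perform low-rank adaptation (LoRA) on a model loaded in 8-bit.

Low-rank adaptation of a linear layer: extra parameters (orange) are added next to the frozen layer (blue), and the resulting encoded hidden states are added to the hidden states of the frozen layer.

Loading the model in 8-bit drastically reduces the memory footprint, since each parameter needs only one byte for its weight. For example, 7B LLaMA takes 7GB in memory.

Instead of training the original weights directly, LoRA adds small adapter layers on top of specific layers (usually the attention layers), so the number of trainable parameters drops drastically.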
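To make the adapter idea concrete, here is a small dependency-free sketch of a LoRA-style linear layer. It is not the PEFT library's implementation: the class name `LoRALinear`, the `alpha / rank` scaling, the initialization, and the 64x64 toy layer are illustrative assumptions.

```python
import random


def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


class LoRALinear:
    """Frozen linear layer W plus a trainable low-rank update B @ A."""

    def __init__(self, weight, rank, alpha=1.0, seed=0):
        rng = random.Random(seed)
        out_dim, in_dim = len(weight), len(weight[0])
        self.weight = weight  # frozen: never updated during fine-tuning
        # A starts small and random, B starts at zero, so the adapter
        # initially leaves the frozen layer's output unchanged.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(in_dim)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(out_dim)]
        self.scale = alpha / rank

    def forward(self, x):
        frozen = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [f + self.scale * u for f, u in zip(frozen, update)]

    def trainable_params(self):
        return sum(len(row) for row in self.A) + sum(len(row) for row in self.B)


weight = [[0.1 * (i + j) for j in range(64)] for i in range(64)]
layer = LoRALinear(weight, rank=4)
print(layer.trainable_params(), "trainable vs", 64 * 64, "frozen")
```

With rank 4, the adapter trains far fewer values than the frozen matrix holds, and the gap widens as the layer grows.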

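In this scenario, a rule of thumb is to allocate about 1.2-1.4GB of memory per billion parameters (depending on batch size and sequence length) to fit the entire fine-tuning setup.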
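The two estimates can be compared side by side. The sketch below only encodes the arithmetic from the text, (2+8) bytes per parameter for full fine-tuning and 1.2-1.4GB per billion parameters for 8-bit loading with LoRA; the function names are hypothetical and activations are ignored.

```python
def full_finetune_memory_gb(params_billion, weight_bytes=2, optimizer_bytes=8):
    """Weights plus optimizer state, in GB, ignoring activations."""
    return params_billion * (weight_bytes + optimizer_bytes)


def lora_8bit_memory_gb(params_billion, gb_per_billion):
    """Rule-of-thumb footprint for 8-bit loading plus LoRA adapters."""
    return params_billion * gb_per_billion


print(full_finetune_memory_gb(7))
low, high = lora_8bit_memory_gb(7, 1.2), lora_8bit_memory_gb(7, 1.4)
print(f"{low:.1f}-{high:.1f} GB")
```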

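This makes it possible to fine-tune larger models at low cost (models of up to 50-60B parameters on an NVIDIA A100 80GB). These techniques have already enabled fine-tuning of large models on consumer devices such as the Raspberry Pi and mobile phones, and on Google Colab.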

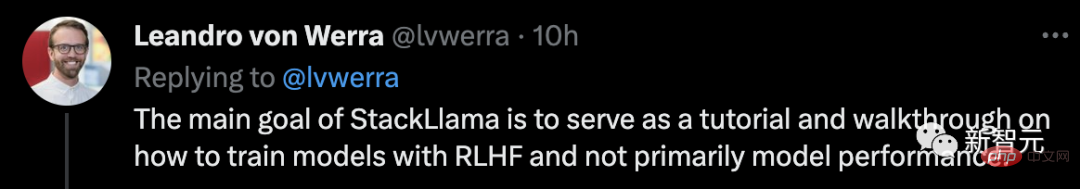

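The researchers found that although very large models can now fit on a single GPU, training can still be very slow.

Here, the researchers use a data-parallel strategy: the same training setup is replicated on each GPU, and different batches are passed to each GPU.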
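The data side of this strategy can be sketched as a simple round-robin split. This is only an illustration with a hypothetical `shard_batches` helper; real data-parallel training also synchronizes gradients across replicas, which is omitted here.

```python
def shard_batches(batches, world_size):
    """Assign batches to GPUs round-robin; each GPU gets different data."""
    return [batches[rank::world_size] for rank in range(world_size)]


batches = [f"batch-{i}" for i in range(8)]
for rank, shard in enumerate(shard_batches(batches, world_size=4)):
    # Every rank would run the same model and training loop on its shard.
    print(rank, shard)
```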

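Before training the reward model and tuning the model with RL, the model needs instruction tuning if it is to follow instructions in every situation.

The simplest way to achieve this is to continue training the language model on text from the target domain or task.

To use the data efficiently, the researchers use a technique called "packing": many texts are concatenated with an EOS token between them, and chunks of the context size are cut from the result to fill the batch, with no padding needed.

With this approach training is more efficient, because every token passed through the model is also trained on.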
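Packing can be sketched on plain lists of token ids. The function name `pack_sequences`, the toy token ids, and the choice to drop the incomplete final chunk are assumptions of this sketch rather than details confirmed by the article.

```python
def pack_sequences(tokenized_texts, eos_token_id, context_size):
    """Concatenate token lists with EOS separators and cut fixed-size chunks."""
    stream = []
    for tokens in tokenized_texts:
        stream.extend(tokens)
        stream.append(eos_token_id)
    # Keep only full chunks; dropping the leftover tail is an assumption here.
    n_full = len(stream) // context_size
    return [stream[i * context_size:(i + 1) * context_size] for i in range(n_full)]


texts = [[11, 12, 13], [21, 22], [31]]
chunks = pack_sequences(texts, eos_token_id=0, context_size=4)
print(chunks)
```

Every chunk has exactly the context size, so no position in the batch is wasted on padding.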

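In principle, the researchers could fine-tune the model with RLHF directly on human annotations. However, this would require sending some samples to humans for rating after every optimization iteration.

Because many training samples are needed for convergence, and human reading and labeling speed is inherently limited, this would be both expensive and very slow.

Instead, before tuning the model with RL, the researchers train a reward model on the collected human annotations. The goal of reward modeling is to imitate how humans would rate a text, which is more efficient than direct feedback.

In practice, the best approach is to predict the ranking of two examples: given a prompt X, the reward model is presented with two candidates and has to predict which one human annotators would rate higher.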
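One standard way to write this pairwise objective, consistent with the training code in this article, is the following. Here $r_\theta(x, y)$ denotes the scalar score the reward model assigns to answer $y$ for prompt $x$, $y_j$ is the preferred answer, $y_k$ is the rejected one, and $\sigma$ is the logistic sigmoid; the symbol names are chosen for this sketch.

$$
\mathcal{L}(\theta) = -\,\mathbb{E}_{(x,\, y_j,\, y_k)}\left[\log \sigma\big(r_\theta(x, y_j) - r_\theta(x, y_k)\big)\right]
$$

Minimizing this loss pushes the score of the preferred answer above that of the rejected one.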

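With the StackExchange dataset, the researchers infer from the scores which of the two answers users prefer. With this information and a pairwise ranking loss, transformers.Trainer can be adapted by adding a custom loss function for training.

```python
from torch import nn
from transformers import Trainer


class RewardTrainer(Trainer):
    def compute_loss(self, model, inputs, return_outputs=False):
        # Score the preferred answer (j) and the rejected answer (k).
        rewards_j = model(input_ids=inputs["input_ids_j"], attention_mask=inputs["attention_mask_j"])[0]
        rewards_k = model(input_ids=inputs["input_ids_k"], attention_mask=inputs["attention_mask_k"])[0]
        # Pairwise ranking loss: push the reward of j above the reward of k.
        loss = -nn.functional.logsigmoid(rewards_j - rewards_k).mean()
        if return_outputs:
            return loss, {"rewards_j": rewards_j, "rewards_k": rewards_k}
        return loss
```

The researchers train on a subset of 100,000 candidate pairs and evaluate on a held-out set of 50,000 candidate pairs.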

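Training was logged with Weights & Biases and took a few hours on 8 A100 GPUs. The final accuracy of the model is 67%.

That may not sound like a high score, but the task is very hard for human annotators as well.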
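For a pairwise reward model, accuracy is naturally read as the fraction of held-out pairs in which the preferred answer receives the higher reward. The sketch below assumes that definition; the function name and the toy scores are invented for illustration and are not results from the article.

```python
def pairwise_accuracy(rewards_j, rewards_k):
    """Fraction of pairs where the preferred answer (j) gets the higher reward."""
    correct = sum(1 for rj, rk in zip(rewards_j, rewards_k) if rj > rk)
    return correct / len(rewards_j)


# Toy scores, invented for illustration only.
rewards_j = [1.2, 0.3, -0.5, 2.0]
rewards_k = [0.4, 0.9, -1.0, 1.5]
print(pairwise_accuracy(rewards_j, rewards_k))
```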

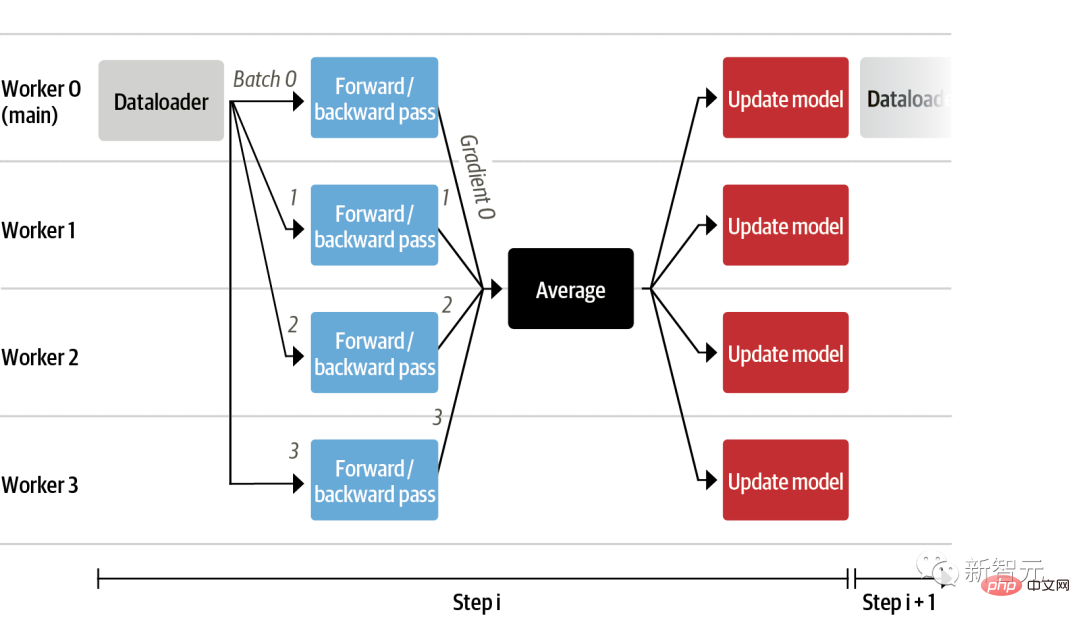

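With the fine-tuned language model and the reward model in hand, the RL loop can now be run. It roughly consists of three steps:

· Generate responses from prompts

· Score the responses with the reward model

· Run a reinforcement learning policy-optimization step with the scores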
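The three steps above can be sketched as a single function. All three callables (`generate`, `score`, `update_policy`) are hypothetical placeholders; in a real setup they would wrap the language model, the reward model, and a policy-optimization routine.

```python
def rl_step(prompts, generate, score, update_policy):
    """Run one iteration: generate, score with the reward model, optimize."""
    responses = [generate(prompt) for prompt in prompts]
    rewards = [score(prompt, response) for prompt, response in zip(prompts, responses)]
    update_policy(prompts, responses, rewards)
    return responses, rewards


# Stand-ins so the sketch runs; real code would call the language model,
# the reward model, and a policy-optimization step here.
log = []
responses, rewards = rl_step(
    ["Question: How do I sort a list?\n\nAnswer: "],
    generate=lambda prompt: "Use sorted().",
    score=lambda prompt, response: float(len(response)),
    update_policy=lambda p, r, rw: log.append(sum(rw) / len(rw)),
)
print(responses, rewards, log)
```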

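The query and response are formatted with the following template before being tokenized and passed to the model. The same template is used for the SFT, RM and RLHF stages.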

Question: <Query> Answer: <Response>
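Filling the template is a plain string-formatting step. In the sketch below, the blank line between the question and the answer and the names `PROMPT_TEMPLATE` and `build_prompt` are assumptions made for illustration.

```python
PROMPT_TEMPLATE = "Question: {query}\n\nAnswer: {response}"


def build_prompt(query: str, response: str = "") -> str:
    """Fill the shared template; leave `response` empty when generating."""
    return PROMPT_TEMPLATE.format(query=query, response=response)


print(build_prompt("How do I reverse a list in Python?"))
```

Using one template for every stage keeps the text the reward model scores identical in format to the text the policy was fine-tuned on.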

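A common problem when training a language model with RL is that the model can learn to exploit the reward model by generating complete gibberish, which causes the reward model to assign unrealistically high rewards.

To counter this, the researchers add a penalty to the reward: a copy of the model that is not trained is kept as a reference, and the new model's generations are compared with the reference model's by computing the KL divergence.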
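A minimal sketch of such a penalized reward, assuming the KL term is estimated from per-token log-probabilities of the generated tokens under the two models. The function name, the coefficient `beta` and its default value, and the toy numbers are illustrative assumptions, not values from the article.

```python
def penalized_reward(reward_score, policy_logprobs, reference_logprobs, beta=0.2):
    """Subtract a KL-style penalty from the reward model's score.

    The KL term is estimated as the sum over generated tokens of
    log p_policy(token) - log p_reference(token).
    """
    kl_estimate = sum(p - r for p, r in zip(policy_logprobs, reference_logprobs))
    return reward_score - beta * kl_estimate


# Toy per-token log-probabilities, invented for illustration.
policy = [-1.0, -2.0, -0.5]
reference = [-1.5, -2.5, -1.0]
print(penalized_reward(1.0, policy, reference, beta=0.1))
```

The further the tuned model drifts from the reference on its own generations, the larger the penalty, which discourages gibberish that merely games the reward model.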

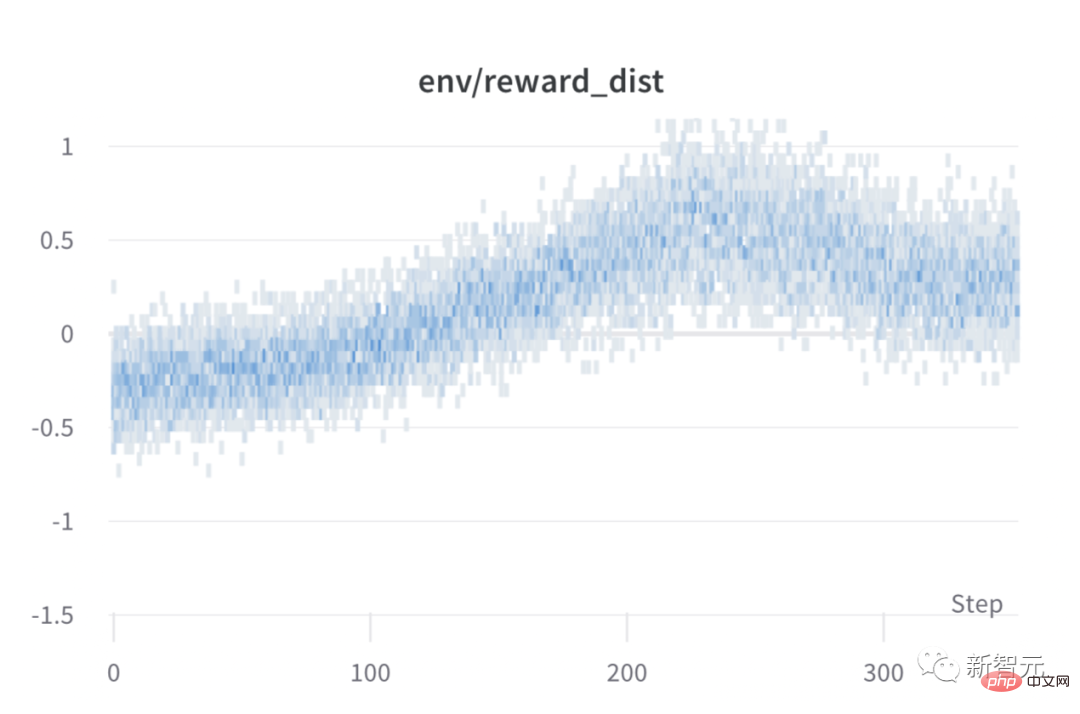

The per-batch reward is tracked at each step during training, and the model's performance plateaus after about 1,000 steps.
