How to teach ChatGPT to read pictures

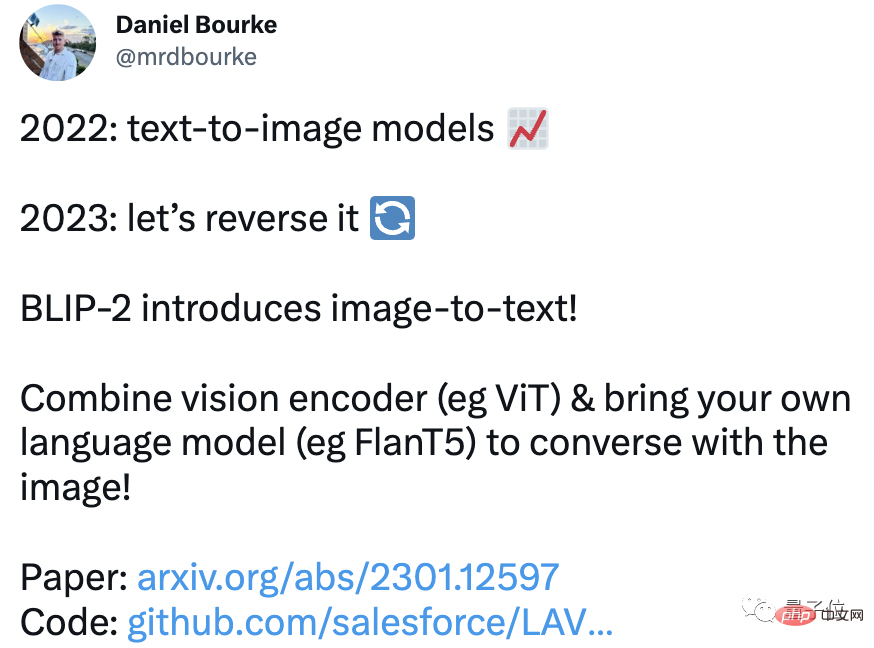

Text-to-image models were the hit of 2022, so what will take off in 2023?

The answer from machine learning engineer Daniel Bourke: the other way around!

Sure enough, a newly released image-to-text model has gone viral online, and its impressive results have drawn many reposts and likes.

It goes beyond basic image captioning: it can also write love poems, explain plots, design dialogue for the objects in a picture, and more. This AI handles them all!

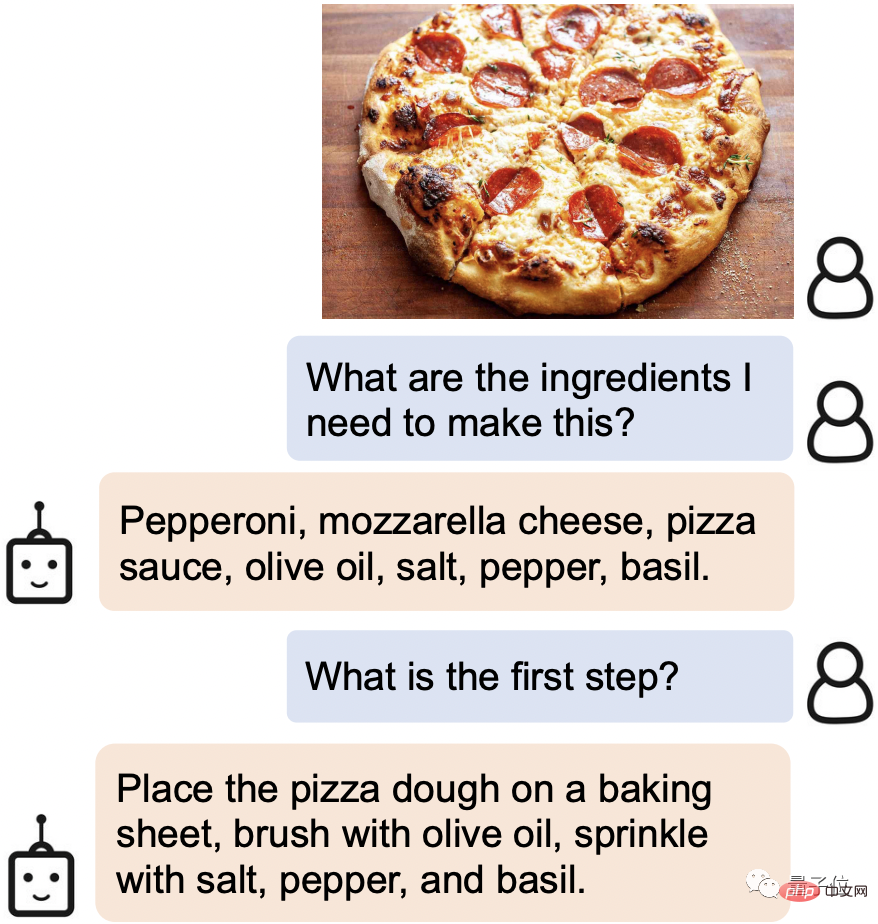

For example, when you spot tempting food online, just send it the picture and it will immediately identify the ingredients and cooking steps required:

It can even "see" tiny details in a picture that you would almost need a microscope to spot.

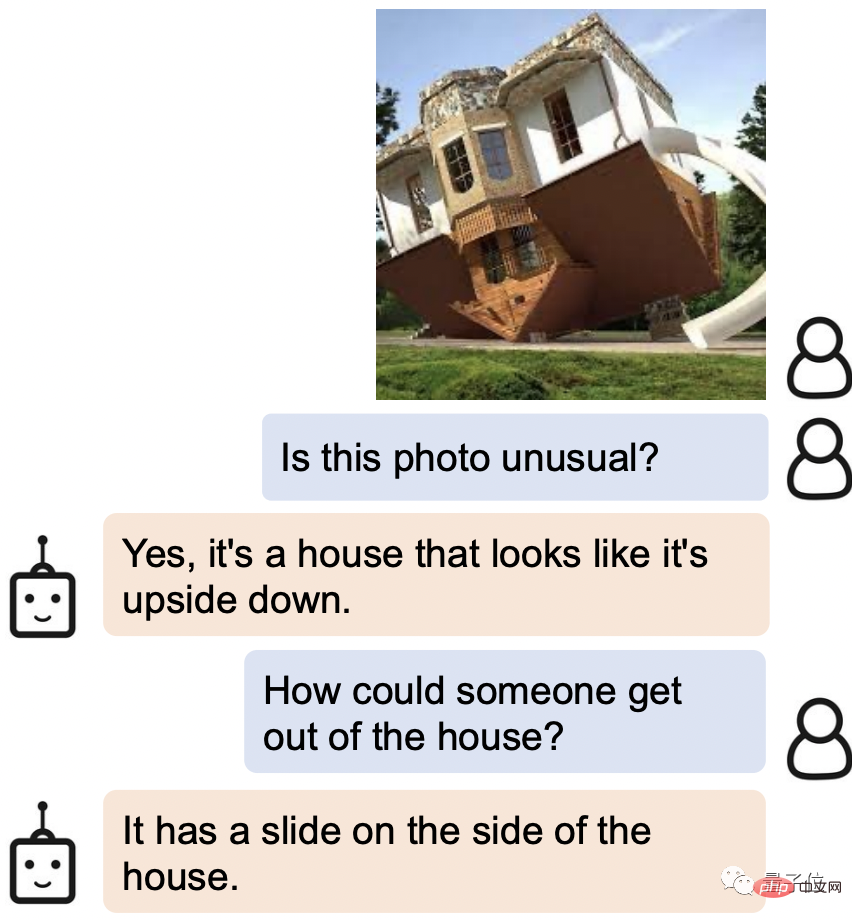

When asked how to get out of the upside-down house in the picture, the AI answered: isn't there a slide on the side?

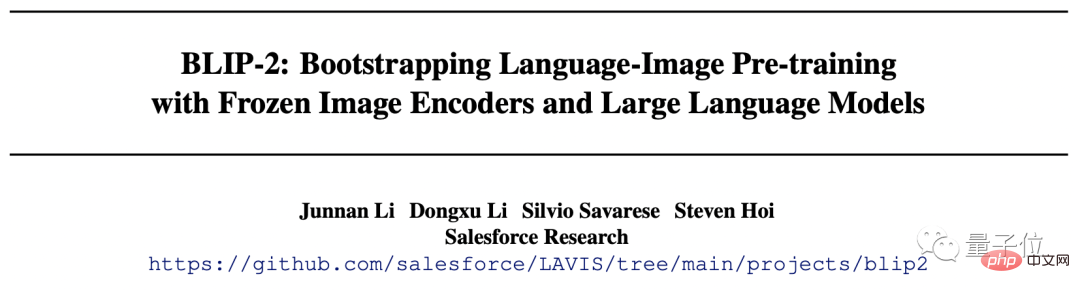

This new AI is called BLIP-2 (Bootstrapping Language-Image Pre-training 2), and the code is currently open source.

Most importantly, unlike previous work, BLIP-2 uses a generic pre-training framework, so it can be connected to a language model of your choice.

Some netizens are already imagining how powerful the combination would be if ChatGPT were plugged in.

Steven Hoi, one of the authors, even said that BLIP-2 will become the "multimodal version of ChatGPT" in the future.

So what else is remarkable about BLIP-2? Read on.

First-class understanding ability

BLIP-2 can be used in many different ways.

Give it a picture and you can chat with it about that picture. It can tell stories, reason about what it sees, and generate personalized text.

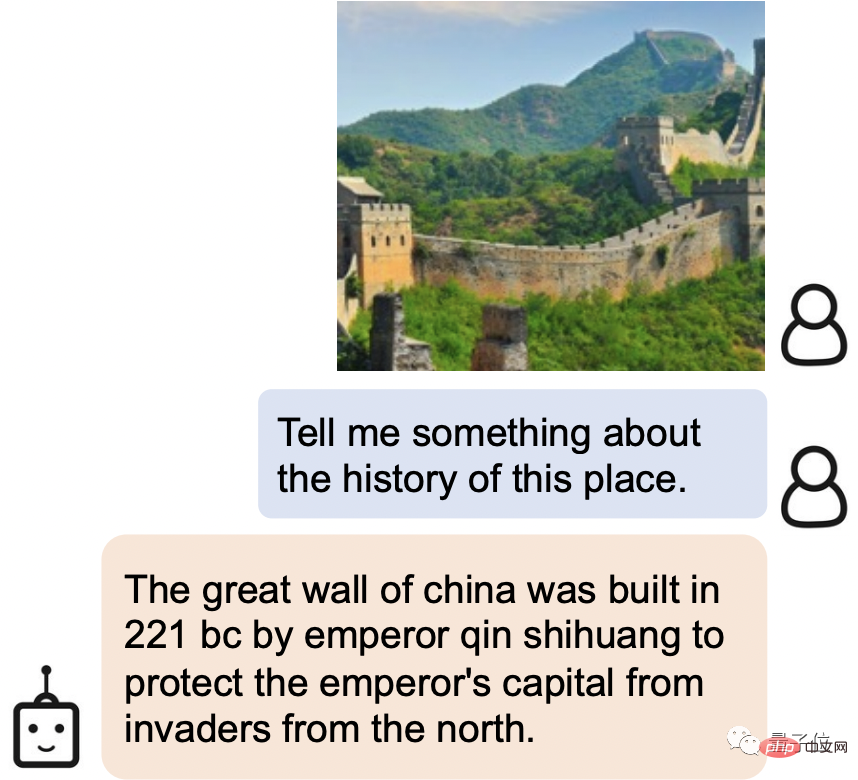

For example, BLIP-2 can not only identify the scenic spot in the picture as the Great Wall, but also tell you about its history:

The Great Wall of China was built in 221 BC by Emperor Qin Shihuang to protect the imperial capital from invasion from the north.

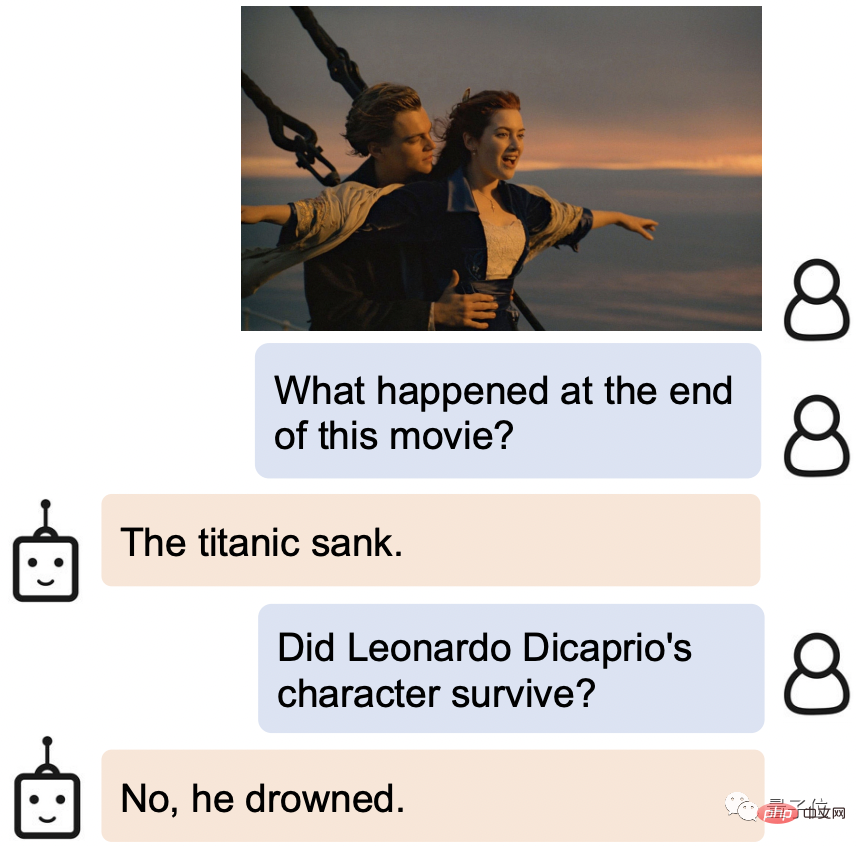

Give it a movie still, and BLIP-2 knows not only which film it comes from but also how the story ends: the Titanic sinks and the male lead drowns.

BLIP-2 also reads human facial expressions very accurately.

When asked what the man's expression in the picture was and why, BLIP-2 answered: he is afraid of the chicken because it is flying towards him.

What’s even more amazing is that BLIP-2 also performs very well on many open questions.

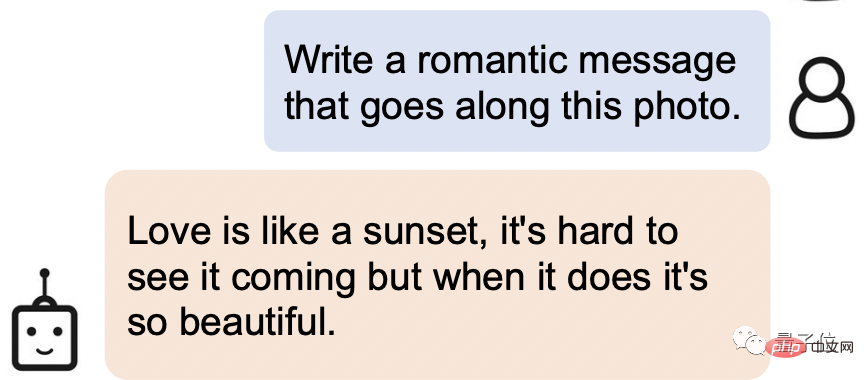

Ask it to write a romantic line based on the picture:

Its answer: Love is like a sunset. It's hard to see it coming, but when it happens, it's so beautiful.

Not only does it understand the picture well, it has real literary flair too!

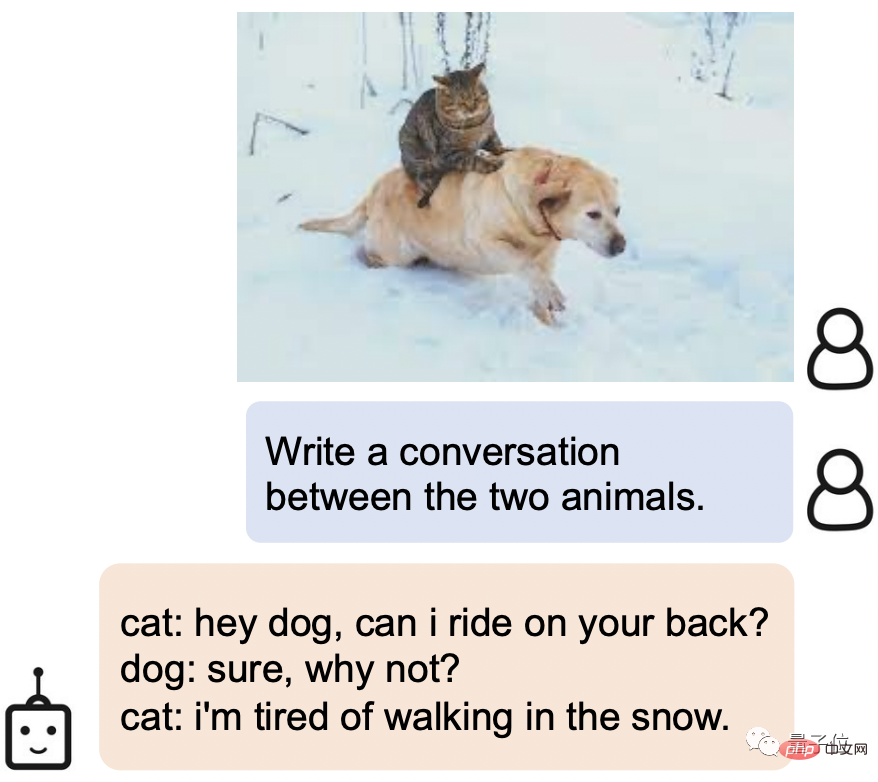

Ask it to write a dialogue for the two animals in the picture, and BLIP-2 easily handles the "aloof cat meets goofy, lovable dog" setup:

Cat: Hey, dog, can I ride on your back?

Dog: Of course, why not?

Cat: I'm tired of walking in the snow.

So, how does BLIP-2 achieve such a powerful understanding ability?

New SOTA on multiple vision-language tasks

Because end-to-end training of large-scale models keeps getting more expensive, BLIP-2 adopts a generic and efficient pre-training strategy:

Bootstrap vision-language pre-training from off-the-shelf frozen pre-trained image encoders and frozen large language models.

This also means that you can choose which image encoder and language model to plug in.
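To make "frozen" concrete, here is a minimal sketch in plain Python. It is a toy under stated assumptions, not BLIP-2's actual code: the `Module` class, the `sgd_step` helper, and all weight values are hypothetical. It only illustrates that a training step leaves the frozen image encoder and language model untouched and updates nothing but the small component that connects them.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Module:
    """Toy stand-in for a network component (hypothetical, not a real API)."""
    name: str
    weights: List[float]
    frozen: bool = False


def sgd_step(modules: List[Module], grads: Dict[str, List[float]], lr: float = 0.5) -> None:
    """Apply one gradient-descent step, skipping every frozen module."""
    for m in modules:
        if m.frozen:
            continue  # frozen weights are never updated
        m.weights = [w - lr * g for w, g in zip(m.weights, grads[m.name])]


# Two frozen off-the-shelf models joined by one small trainable bridge.
image_encoder = Module("image_encoder", [1.0, 2.0], frozen=True)
bridge = Module("bridge", [0.5, -0.5])
language_model = Module("language_model", [3.0, 4.0], frozen=True)
pipeline = [image_encoder, bridge, language_model]

# Pretend every weight received a gradient of 1.0.
grads = {m.name: [1.0, 1.0] for m in pipeline}
sgd_step(pipeline, grads)

trainable = [m.name for m in pipeline if not m.frozen]
print(trainable)       # only the bridge is trainable
print(bridge.weights)  # the bridge weights moved; the frozen ones did not
```

Because the two large models are never modified, swapping in a different encoder or language model only requires retraining the bridge.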

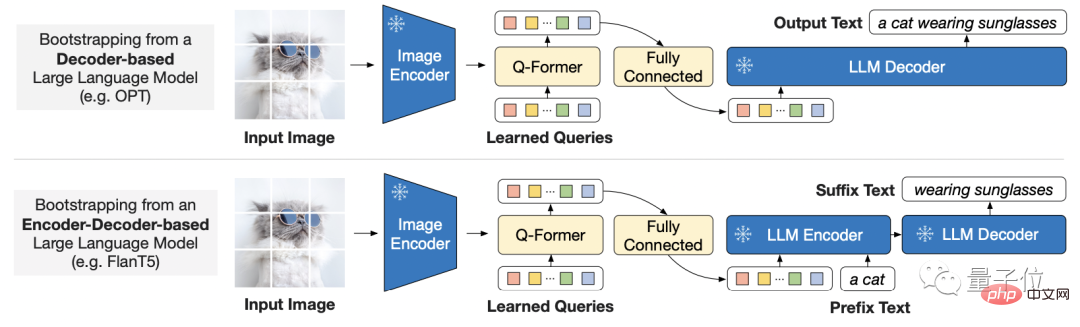

To bridge the gap between the two modalities, the researchers propose a lightweight Querying Transformer (Q-Former).

This Transformer is pre-trained in two stages:

The first stage bootstraps vision-language representation learning from the frozen image encoder, and the second stage bootstraps vision-to-language generative learning from the frozen language model.
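The article does not describe the inside of the Querying Transformer. As described in the BLIP-2 paper, a fixed set of learnable query vectors cross-attends to the frozen encoder's image features, so the language model always receives the same number of visual tokens. The sketch below shows only that one mechanism in plain Python. It is heavily simplified (single head, no learned projections, no self-attention or feed-forward layers), and every name and number in it is illustrative rather than taken from the released code.

```python
import math
from typing import List

Vector = List[float]


def softmax(xs: Vector) -> Vector:
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attend(queries: List[Vector], image_features: List[Vector]) -> List[Vector]:
    """Simplified single-head cross-attention without learned projections.

    Each query yields one output vector: an average of the image feature
    vectors, weighted by scaled dot-product similarity to the query.
    """
    dim = len(queries[0])
    outputs = []
    for q in queries:
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim)
            for k in image_features
        ]
        weights = softmax(scores)
        outputs.append(
            [sum(w * k[d] for w, k in zip(weights, image_features)) for d in range(dim)]
        )
    return outputs


# Two toy "learnable" queries with hand-picked values.
queries = [[1.0, 0.0], [0.0, 1.0]]
few_patches = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # 3 image patch features
many_patches = few_patches * 10                     # 30 image patch features

out_small = cross_attend(queries, few_patches)
out_large = cross_attend(queries, many_patches)

# The number of output tokens depends on the queries, not on the image size.
print(len(out_small), len(out_large))
```

The design point is that the output length is set by the number of queries, which keeps the visual input to the language model short and fixed regardless of image resolution.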

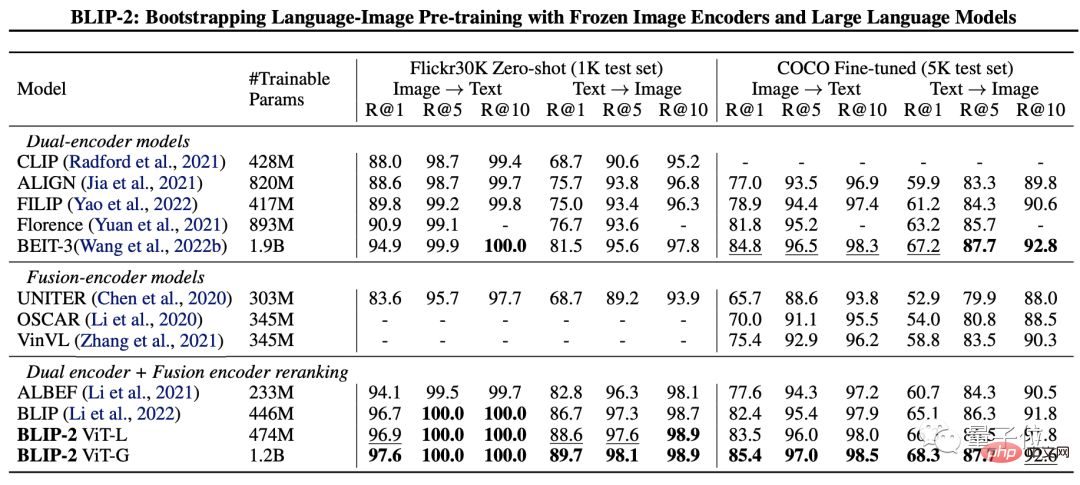

To test BLIP-2's performance, the researchers evaluated it on zero-shot image-to-text generation, visual question answering, image-text retrieval, and image captioning.

The final results show that BLIP-2 achieved SOTA on multiple visual language tasks.

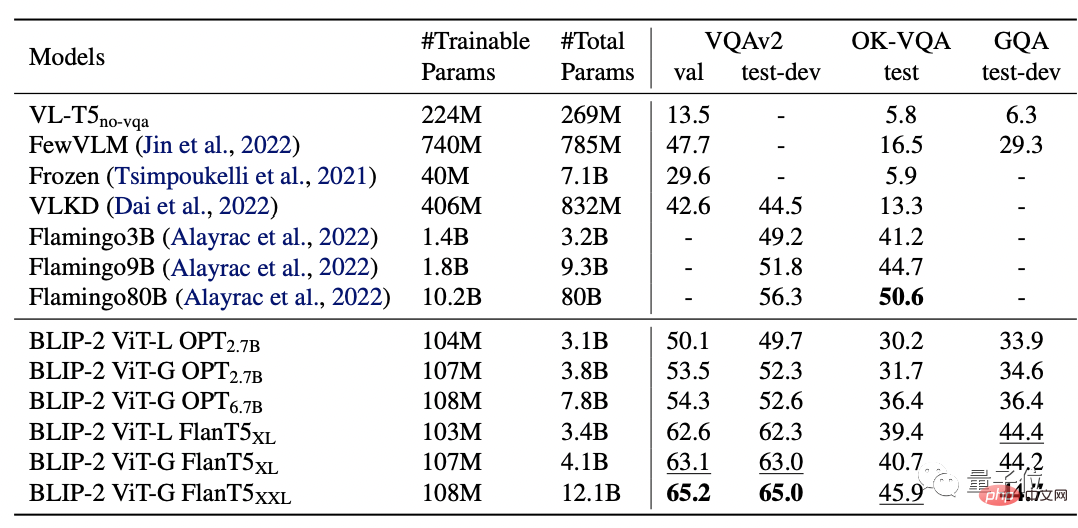

In particular, BLIP-2 outperforms Flamingo 80B by 8.7% on zero-shot VQAv2 while using 54 times fewer trainable parameters.

The results also show that a stronger image encoder or a stronger language model leads to better performance.
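The two headline numbers are easy to misread, so here is a hedged worked calculation. The input figures are not in this article: they are the values reported in the BLIP-2 paper's results tables as best I recall them (zero-shot VQAv2 accuracy of 65.0 for BLIP-2 and 56.3 for Flamingo 80B, with roughly 188M and 10.2B trainable parameters respectively), so please check them against the paper before relying on them.

```python
# Assumed figures, recalled from the BLIP-2 paper (verify before reuse).
blip2_vqav2_acc = 65.0       # zero-shot VQAv2 accuracy, in percent
flamingo_vqav2_acc = 56.3    # zero-shot VQAv2 accuracy, in percent
blip2_trainable = 188_000_000
flamingo_trainable = 10_200_000_000

# The gain is a difference in accuracy points, not a relative improvement.
accuracy_gap = round(blip2_vqav2_acc - flamingo_vqav2_acc, 1)

# "54 times fewer" is the ratio of trainable parameter counts.
param_ratio = flamingo_trainable / blip2_trainable

print(f"accuracy gap: {accuracy_gap} points")
print(f"trainable parameter ratio: about {param_ratio:.0f}x")
```

Under these assumptions, the 8.7% is an absolute gap in accuracy points, and the 54x refers only to parameters that are trained, not to the total size of the models involved.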

It is worth noting that, at the end of the paper, the researchers point out one remaining shortcoming of BLIP-2: it lacks in-context learning ability.

Each training sample contains only one image-text pair, so the model currently cannot learn the correlation among multiple image-text pairs in a single sequence.

Research Team

The BLIP-2 research team is from Salesforce Research.

The first author is Junnan Li, who is also the first author of BLIP, released a year earlier.

He is currently a senior research scientist at Salesforce Research Asia. He received his bachelor's degree from the University of Hong Kong and his Ph.D. from the National University of Singapore.

His research interests are broad and include self-supervised learning, semi-supervised learning, weakly supervised learning, and vision-language learning.

The paper and GitHub links for BLIP-2 are below for anyone who is interested.

Paper link: https://arxiv.org/pdf/2301.12597.pdf

GitHub link: https://github.com/salesforce/LAVIS/tree/main/projects/blip2
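For readers who want to try the model, here is a minimal sketch of asking a question about a picture. Note the assumptions: it uses the Hugging Face `transformers` port (`Blip2Processor` and `Blip2ForConditionalGeneration`) rather than the LAVIS repository linked above, it assumes the `Salesforce/blip2-opt-2.7b` checkpoint, and it assumes the "Question: ... Answer:" prompt style. The helper names `build_prompt` and `ask_about_image` are made up for this example.

```python
def build_prompt(question: str) -> str:
    """Wrap a question in the assumed "Question: ... Answer:" prompt style."""
    return f"Question: {question.strip()} Answer:"


def ask_about_image(
    image_path: str,
    question: str,
    checkpoint: str = "Salesforce/blip2-opt-2.7b",  # assumed checkpoint name
) -> str:
    """Load BLIP-2 and answer one question about one image.

    Heavy third-party imports stay inside the function, so defining it
    does not require Pillow, PyTorch, or transformers to be installed.
    """
    from PIL import Image
    from transformers import Blip2ForConditionalGeneration, Blip2Processor

    processor = Blip2Processor.from_pretrained(checkpoint)
    model = Blip2ForConditionalGeneration.from_pretrained(checkpoint)

    image = Image.open(image_path).convert("RGB")
    inputs = processor(images=image, text=build_prompt(question), return_tensors="pt")
    generated_ids = model.generate(**inputs, max_new_tokens=40)
    return processor.batch_decode(generated_ids, skip_special_tokens=True)[0].strip()
```

A call such as `ask_about_image("great_wall.jpg", "What is this place?")`, where the file name is a placeholder, downloads several gigabytes of weights on first use and runs slowly without a GPU.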

Reference link: [1]https://twitter.com/mrdbourke/status/1620353263651688448

[2]https://twitter.com/LiJunnan0409/status/1620259379223343107

The above is the detailed content of Here's how to teach ChatGPT how to read pictures. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1378

1378

52

52

ChatGPT now allows free users to generate images by using DALL-E 3 with a daily limit

Aug 09, 2024 pm 09:37 PM

ChatGPT now allows free users to generate images by using DALL-E 3 with a daily limit

Aug 09, 2024 pm 09:37 PM

DALL-E 3 was officially introduced in September of 2023 as a vastly improved model than its predecessor. It is considered one of the best AI image generators to date, capable of creating images with intricate detail. However, at launch, it was exclus

How to write a novel in the Tomato Free Novel app. Share the tutorial on how to write a novel in Tomato Novel.

Mar 28, 2024 pm 12:50 PM

How to write a novel in the Tomato Free Novel app. Share the tutorial on how to write a novel in Tomato Novel.

Mar 28, 2024 pm 12:50 PM

Tomato Novel is a very popular novel reading software. We often have new novels and comics to read in Tomato Novel. Every novel and comic is very interesting. Many friends also want to write novels. Earn pocket money and edit the content of the novel you want to write into text. So how do we write the novel in it? My friends don’t know, so let’s go to this site together. Let’s take some time to look at an introduction to how to write a novel. Share the Tomato novel tutorial on how to write a novel. 1. First open the Tomato free novel app on your mobile phone and click on Personal Center - Writer Center. 2. Jump to the Tomato Writer Assistant page - click on Create a new book at the end of the novel.

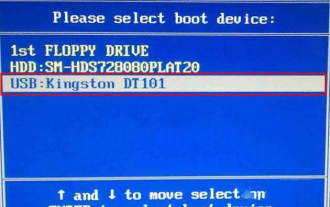

How to enter bios on Colorful motherboard? Teach you two methods

Mar 13, 2024 pm 06:01 PM

How to enter bios on Colorful motherboard? Teach you two methods

Mar 13, 2024 pm 06:01 PM

Colorful motherboards enjoy high popularity and market share in the Chinese domestic market, but some users of Colorful motherboards still don’t know how to enter the bios for settings? In response to this situation, the editor has specially brought you two methods to enter the colorful motherboard bios. Come and try it! Method 1: Use the U disk startup shortcut key to directly enter the U disk installation system. The shortcut key for the Colorful motherboard to start the U disk with one click is ESC or F11. First, use Black Shark Installation Master to create a Black Shark U disk boot disk, and then turn on the computer. When you see the startup screen, continuously press the ESC or F11 key on the keyboard to enter a window for sequential selection of startup items. Move the cursor to the place where "USB" is displayed, and then

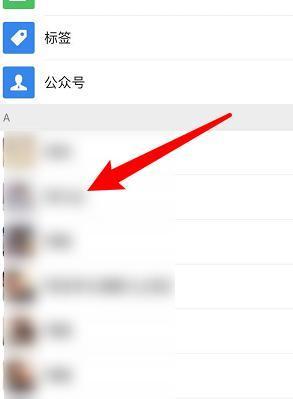

How to recover deleted contacts on WeChat (simple tutorial tells you how to recover deleted contacts)

May 01, 2024 pm 12:01 PM

How to recover deleted contacts on WeChat (simple tutorial tells you how to recover deleted contacts)

May 01, 2024 pm 12:01 PM

Unfortunately, people often delete certain contacts accidentally for some reasons. WeChat is a widely used social software. To help users solve this problem, this article will introduce how to retrieve deleted contacts in a simple way. 1. Understand the WeChat contact deletion mechanism. This provides us with the possibility to retrieve deleted contacts. The contact deletion mechanism in WeChat removes them from the address book, but does not delete them completely. 2. Use WeChat’s built-in “Contact Book Recovery” function. WeChat provides “Contact Book Recovery” to save time and energy. Users can quickly retrieve previously deleted contacts through this function. 3. Enter the WeChat settings page and click the lower right corner, open the WeChat application "Me" and click the settings icon in the upper right corner to enter the settings page.

The secret of hatching mobile dragon eggs is revealed (step by step to teach you how to successfully hatch mobile dragon eggs)

May 04, 2024 pm 06:01 PM

The secret of hatching mobile dragon eggs is revealed (step by step to teach you how to successfully hatch mobile dragon eggs)

May 04, 2024 pm 06:01 PM

Mobile games have become an integral part of people's lives with the development of technology. It has attracted the attention of many players with its cute dragon egg image and interesting hatching process, and one of the games that has attracted much attention is the mobile version of Dragon Egg. To help players better cultivate and grow their own dragons in the game, this article will introduce to you how to hatch dragon eggs in the mobile version. 1. Choose the appropriate type of dragon egg. Players need to carefully choose the type of dragon egg that they like and suit themselves, based on the different types of dragon egg attributes and abilities provided in the game. 2. Upgrade the level of the incubation machine. Players need to improve the level of the incubation machine by completing tasks and collecting props. The level of the incubation machine determines the hatching speed and hatching success rate. 3. Collect the resources required for hatching. Players need to be in the game

How to set font size on mobile phone (easily adjust font size on mobile phone)

May 07, 2024 pm 03:34 PM

How to set font size on mobile phone (easily adjust font size on mobile phone)

May 07, 2024 pm 03:34 PM

Setting font size has become an important personalization requirement as mobile phones become an important tool in people's daily lives. In order to meet the needs of different users, this article will introduce how to improve the mobile phone use experience and adjust the font size of the mobile phone through simple operations. Why do you need to adjust the font size of your mobile phone - Adjusting the font size can make the text clearer and easier to read - Suitable for the reading needs of users of different ages - Convenient for users with poor vision to use the font size setting function of the mobile phone system - How to enter the system settings interface - In Find and enter the "Display" option in the settings interface - find the "Font Size" option and adjust it. Adjust the font size with a third-party application - download and install an application that supports font size adjustment - open the application and enter the relevant settings interface - according to the individual

Quickly master: How to open two WeChat accounts on Huawei mobile phones revealed!

Mar 23, 2024 am 10:42 AM

Quickly master: How to open two WeChat accounts on Huawei mobile phones revealed!

Mar 23, 2024 am 10:42 AM

In today's society, mobile phones have become an indispensable part of our lives. As an important tool for our daily communication, work, and life, WeChat is often used. However, it may be necessary to separate two WeChat accounts when handling different transactions, which requires the mobile phone to support logging in to two WeChat accounts at the same time. As a well-known domestic brand, Huawei mobile phones are used by many people. So what is the method to open two WeChat accounts on Huawei mobile phones? Let’s reveal the secret of this method. First of all, you need to use two WeChat accounts at the same time on your Huawei mobile phone. The easiest way is to

The difference between Go language methods and functions and analysis of application scenarios

Apr 04, 2024 am 09:24 AM

The difference between Go language methods and functions and analysis of application scenarios

Apr 04, 2024 am 09:24 AM

The difference between Go language methods and functions lies in their association with structures: methods are associated with structures and are used to operate structure data or methods; functions are independent of types and are used to perform general operations.