On December 2, PyTorch 2.0 was officially announced!

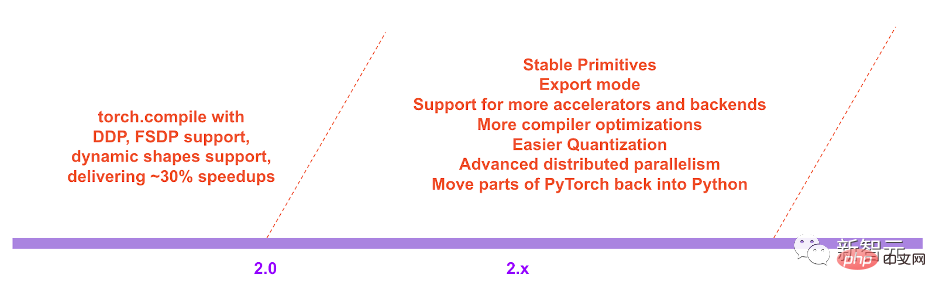

This update not only pushes PyTorch's performance to new heights, but also adds support for dynamic shapes and distributed training.

In addition, the 2.0 series will move some PyTorch code from C++ back to Python.

Currently, PyTorch 2.0 is still in the testing phase, and the first stable version is expected to be available in early March 2023.

Over the past few years, PyTorch has innovated and iterated from 1.0 to the recent 1.13, and moved to the newly formed PyTorch Foundation to become part of the Linux Foundation.

The challenge with the current version of PyTorch is that eager mode has difficulty keeping up with ever-increasing GPU bandwidth and ever more exotic model architectures.

The birth of PyTorch 2.0 will fundamentally change and improve the way PyTorch runs at the compiler level.

As is well known, the "Py" in PyTorch comes from Python, the open-source programming language widely used in data science.

However, PyTorch is not written entirely in Python; part of its code is implemented in C++.

In the upcoming 2.x series, the PyTorch team plans to move the code related to torch.nn back to Python.

Beyond that, since the new features in PyTorch 2.0 are entirely additive (and optional), 2.0 is 100% backward compatible.

In other words, the code base is the same, the API is the same, and the way to write models is the same.

TorchDynamo captures PyTorch programs safely using Python frame evaluation hooks; it is a major innovation resulting from the team's five years of R&D into safe graph capture.

AOTAutograd overloads PyTorch's autograd engine as a tracing autodiff for generating ahead-of-time backward traces.

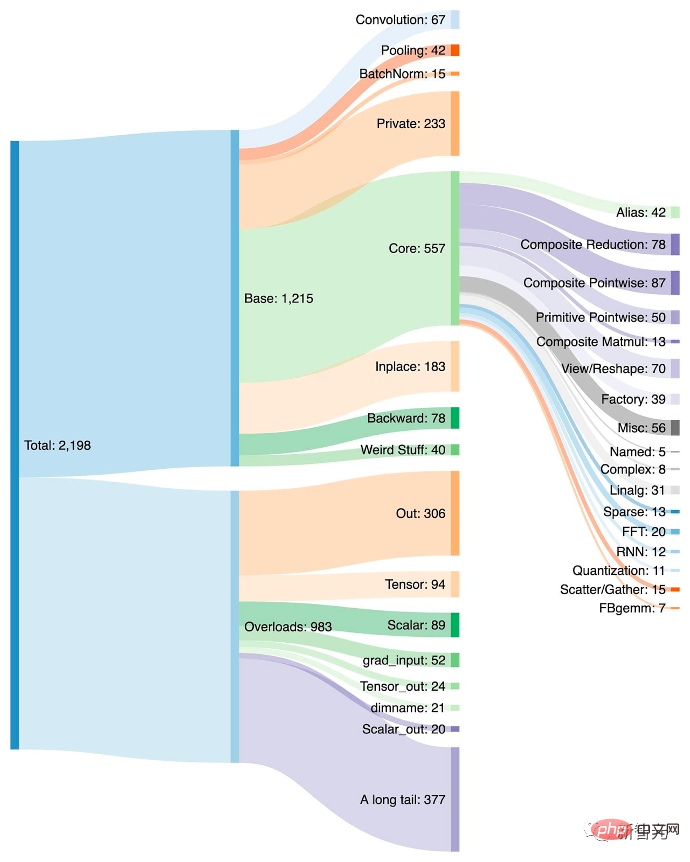

PrimTorch canonicalizes ~2000+ PyTorch operators down to a closed set of ~250 primitive operators that developers can target to build a complete PyTorch backend. This greatly lowers the barrier to writing PyTorch features or backends.

TorchInductor is a deep learning compiler that generates fast code for multiple accelerators and backends. For NVIDIA GPUs, it uses OpenAI Triton as a key building block.

It is worth noting that TorchDynamo, AOTAutograd, PrimTorch and TorchInductor are all written in Python and support dynamic shapes.

By introducing the new compilation mode torch.compile, PyTorch 2.0 can speed up model training with just one line of code.

No tricks are required here: just call torch.compile() and that's it:

```python
import torch
opt_module = torch.compile(module)
```

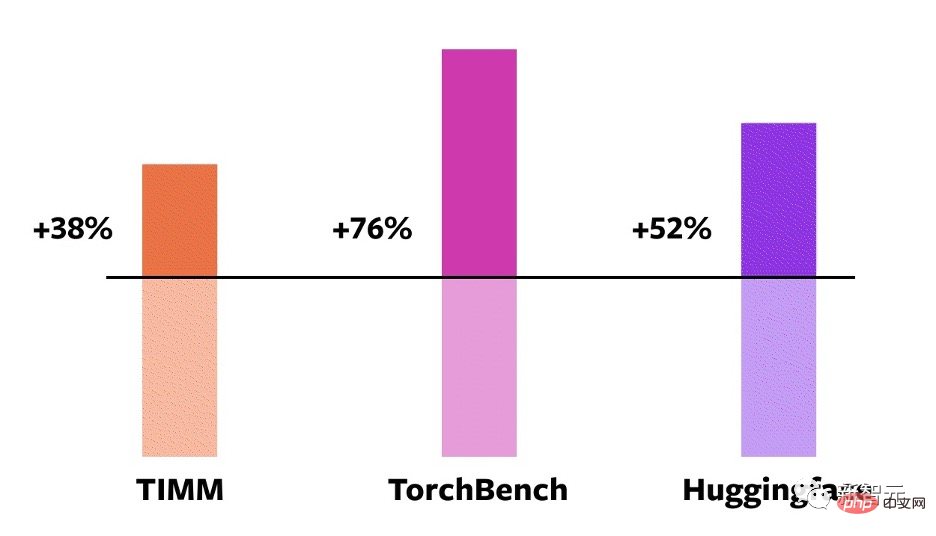

To validate these technologies, the team built a diverse set of benchmarks covering tasks such as image classification, object detection and image generation, various NLP tasks such as language modeling, question answering and sequence classification, as well as recommender systems and reinforcement learning. The benchmarks fall into three categories: 46 models from HuggingFace Transformers, 61 models from TIMM and 56 models from TorchBench.

Test results show that across these 163 open-source models spanning vision, NLP and other domains, training speed improved by 38% to 76%.

Comparison on NVIDIA A100 GPU

In addition, the team benchmarked some popular open-source PyTorch models and obtained substantial speedups ranging from 30% to 2x.

Developer Sylvain Gugger said: "With just one line of code, PyTorch 2.0 gives a 1.5x to 2.0x speedup when training Transformers models. This is the most exciting thing since mixed precision training was introduced!"

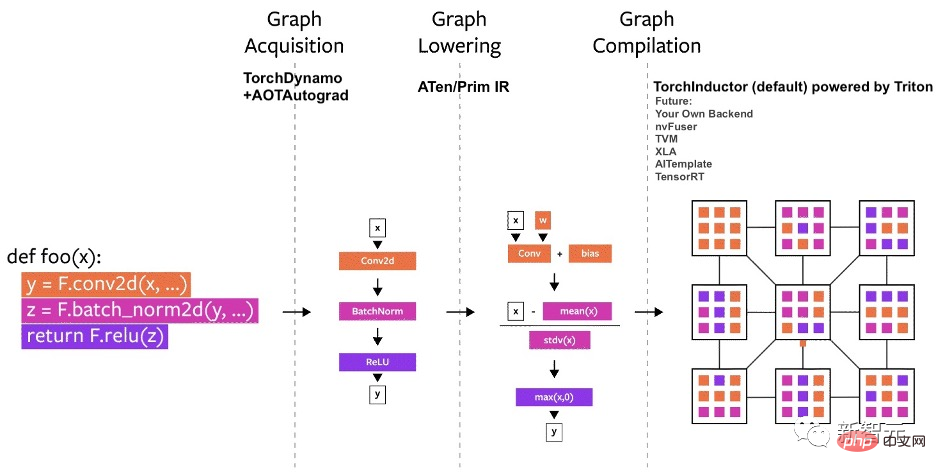

PyTorch's compiler can be broken down into three parts: graph acquisition, graph lowering and graph compilation.

Of these, graph acquisition was the harder challenge when building the PyTorch compiler.

At the beginning of this year, the team started working on TorchDynamo. This approach uses a CPython feature introduced in PEP 523, called the Frame Evaluation API.
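The PEP 523 hook itself lives in CPython's C API, so it cannot be called from Python directly, but its effect can be observed. `torch.compile` accepts a custom `backend`: a callable that receives the `torch.fx.GraphModule` TorchDynamo captured, plus example inputs, and returns something callable. The sketch below, assuming PyTorch 2.x, uses such a backend (the names `inspect_backend` and `fn` are our own) to print the captured graph and then run it without any optimization.

```python
from typing import List

import torch

captured_graphs: List[torch.fx.GraphModule] = []


def inspect_backend(gm: torch.fx.GraphModule, example_inputs: List[torch.Tensor]):
    """Debugging backend: record and print the captured FX graph, then run it as-is."""
    captured_graphs.append(gm)
    print(gm.code)  # Python source generated from the captured graph
    return gm.forward


def fn(x: torch.Tensor, y: torch.Tensor) -> torch.Tensor:
    a = torch.sin(x)
    b = torch.cos(y)
    return a * b + 1.0


compiled_fn = torch.compile(fn, backend=inspect_backend)

x, y = torch.randn(4), torch.randn(4)
out = compiled_fn(x, y)  # first call: TorchDynamo captures the graph and calls the backend
```

Because this function has no unsupported Python constructs, TorchDynamo hands the backend a single graph containing all three tensor operations.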

The team took a data-driven approach to validating TorchDynamo's graph capture, using more than 7,000 GitHub projects written in PyTorch as a validation set.

The results show that TorchDynamo can perform graph capture correctly and safely 99% of the time, with negligible overhead.
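Part of what makes this capture "safe" is that TorchDynamo does not need to understand a whole function. When it reaches something it cannot put into a graph, such as Python control flow that depends on tensor values, it splits the function at that point (a "graph break") and lets ordinary Python run the unsupported part. A minimal sketch, assuming PyTorch 2.x; `counting_backend` and `branchy` are illustrative names:

```python
from typing import List

import torch

graph_count = 0


def counting_backend(gm: torch.fx.GraphModule, example_inputs: List[torch.Tensor]):
    """Count how many separate graphs TorchDynamo hands to the backend."""
    global graph_count
    graph_count += 1
    return gm.forward  # run the captured graph unchanged


def branchy(x: torch.Tensor) -> torch.Tensor:
    y = x * 2
    # Data-dependent control flow: which branch runs depends on tensor values,
    # so TorchDynamo breaks the graph here and lets Python evaluate the `if`.
    if y.sum() > 0:
        return y + 1
    return y - 1


compiled = torch.compile(branchy, backend=counting_backend)
x = torch.ones(3)
out = compiled(x)
```

The result matches eager mode; the price of the graph break is that the compiler sees several smaller graphs instead of one.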

For PyTorch 2.0's new compiler backend, the team took inspiration from how users write high-performance custom kernels: increasingly, they use the Triton language.

TorchInductor uses a Pythonic, define-by-run, loop-level IR to automatically map PyTorch models to generated Triton code on GPUs and C++/OpenMP on CPUs.

TorchInductor's core loop-level IR contains only about 50 operators and is implemented in Python, making it easy to extend.
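To make "define-by-run loop-level IR" more concrete, here is a toy sketch in plain Python. It is not TorchInductor's actual IR: every class and function below (`Pointwise`, `EvalOps`, `CodegenOps`, `mul`, `relu`, `run`, `codegen`) is hypothetical and only loosely modeled on the idea. Each node is an ordinary Python function describing how to compute one element at a given index; swapping the ops handler either evaluates that function or generates loop code from it, and composing inner functions fuses pointwise operations into a single loop.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass
class Pointwise:
    """Toy loop-level node: `size` iterations, each computing inner_fn(ops, index)."""
    size: int
    inner_fn: Callable[[Any, Any], Any]


class EvalOps:
    """Handler that executes the ops directly on Python lists."""
    def __init__(self, buffers: Dict[str, List[float]]) -> None:
        self.buffers = buffers
    def load(self, name: str, index: int) -> float:
        return self.buffers[name][index]
    def mul(self, a: float, b: float) -> float:
        return a * b
    def relu(self, a: float) -> float:
        return max(a, 0.0)


class CodegenOps:
    """Handler that emits source text instead of computing values."""
    def load(self, name: str, index: str) -> str:
        return f"{name}[{index}]"
    def mul(self, a: str, b: str) -> str:
        return f"({a} * {b})"
    def relu(self, a: str) -> str:
        return f"max({a}, 0.0)"


def mul(x: str, y: str, size: int) -> Pointwise:
    return Pointwise(size, lambda ops, i: ops.mul(ops.load(x, i), ops.load(y, i)))


def relu(node: Pointwise) -> Pointwise:
    # Fusion by construction: call the producer's inner_fn instead of loading
    # an intermediate buffer, so mul and relu end up in one loop.
    return Pointwise(node.size, lambda ops, i: ops.relu(node.inner_fn(ops, i)))


def run(node: Pointwise, buffers: Dict[str, List[float]]) -> List[float]:
    ops = EvalOps(buffers)
    return [node.inner_fn(ops, i) for i in range(node.size)]


def codegen(node: Pointwise, out: str) -> str:
    body = node.inner_fn(CodegenOps(), "i")
    return f"for i in range({node.size}):\n    {out}[i] = {body}\n"


fused = relu(mul("a", "b", 3))
buffers = {"a": [1.0, -2.0, 3.0], "b": [4.0, 5.0, -6.0]}
source = codegen(fused, "out")
print(source)

# Executing the generated loop should match direct evaluation of the IR.
env = {**buffers, "out": [0.0] * 3}
exec(source, env)
```

In this toy, the intermediate `a * b` is never written to a buffer; a real compiler must also decide when such fusion is profitable, which the sketch ignores.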

To speed up training, you need to capture not only user-level code, but also backpropagation.

AOTAutograd uses PyTorch's `__torch_dispatch__` extensibility mechanism to trace through the Autograd engine, capturing the backward pass "ahead of time". This lets TorchInductor accelerate both the forward and backward passes.
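AOTAutograd can be exercised directly through a custom backend. The sketch below assumes PyTorch 2.x and relies on `torch._dynamo.backends.common.aot_autograd` and `functorch.compile.make_boxed_func`, internal-facing helpers whose location may change between releases. Its hypothetical recording compilers note when the forward and backward graphs are handed over, then run them unchanged instead of passing them to TorchInductor.

```python
from typing import List

import torch
from functorch.compile import make_boxed_func
from torch._dynamo.backends.common import aot_autograd

compiled_graphs: List[str] = []


def make_recording_compiler(tag: str):
    """Build an illustrative compiler that records which graph it received."""

    def compiler(gm: torch.fx.GraphModule, example_inputs: List[torch.Tensor]):
        # AOTAutograd passes ATen-level forward and backward graphs separately.
        compiled_graphs.append(tag)
        # Run the graph unchanged; AOTAutograd expects a "boxed" calling convention.
        return make_boxed_func(gm.forward)

    return compiler


aot_backend = aot_autograd(
    fw_compiler=make_recording_compiler("forward"),
    bw_compiler=make_recording_compiler("backward"),
)


def f(x: torch.Tensor) -> torch.Tensor:
    return (torch.sin(x) * x).sum()


x = torch.randn(5, requires_grad=True)
loss = torch.compile(f, backend=aot_backend)(x)
loss.backward()  # runs the backward graph captured ahead of time
```

Replacing the recording compilers with a real one, as the default `torch.compile(f)` does with TorchInductor, is what allows both passes to be optimized.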

PyTorch has more than 1,200 operators, and more than 2,000 if you count the various overloads of each operator. As a result, writing a backend or a cross-cutting feature becomes a draining endeavor.

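In the PrimTorch project, the team defined two smaller and more stable operator sets: Prim ops, with about 250 fairly low-level operators, and ATen ops, with about 750 canonical operators suited for exporting as-is.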

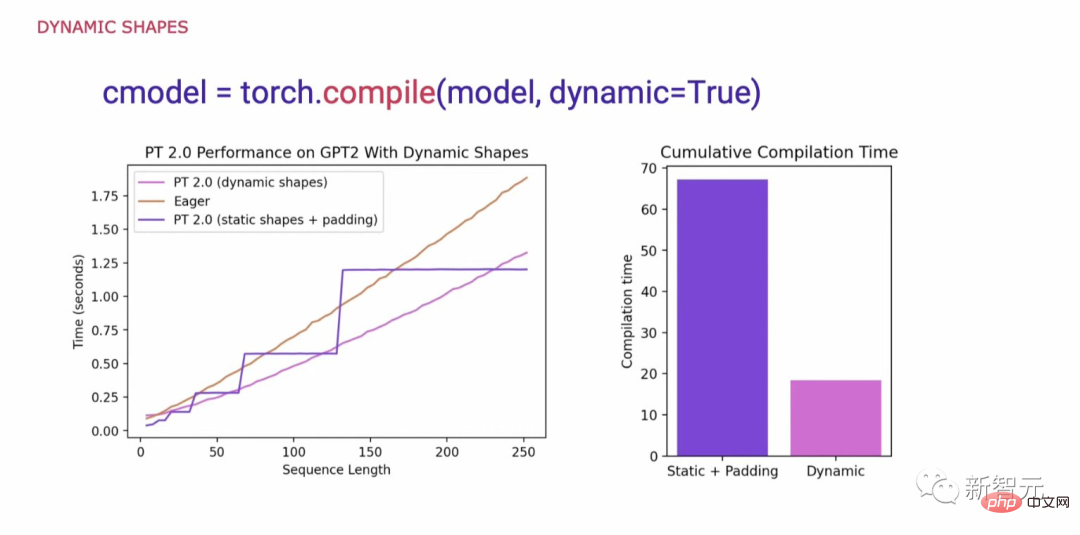
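The effect of breaking large operators down into smaller ones can be seen with PyTorch's tracing utilities. This sketch assumes PyTorch 2.x and uses `make_fx` and `torch._decomp.get_decompositions`; both are internal-facing APIs, and the exact ops a decomposition produces can vary by version. It traces `F.silu` once as-is and once with `aten.silu` decomposed into more basic ATen ops.

```python
from typing import List

import torch
import torch.nn.functional as F
from torch._decomp import get_decompositions
from torch.fx.experimental.proxy_tensor import make_fx


def g(x: torch.Tensor) -> torch.Tensor:
    return F.silu(x)


def aten_ops(gm: torch.fx.GraphModule) -> List[str]:
    """Names of the ATen operator overloads called in a traced graph."""
    return [str(n.target) for n in gm.graph.nodes if n.op == "call_function"]


x = torch.randn(4)

# Trace down to ATen ops without decomposing anything.
plain = make_fx(g)(x)

# Trace again, asking for aten.silu to be replaced by its decomposition.
decomposed = make_fx(g, decomposition_table=get_decompositions([torch.ops.aten.silu]))(x)

print(aten_ops(plain))
print(aten_ops(decomposed))
```

A backend that only implements the smaller set of ops can still run `silu`, because the decomposition expresses it in terms of operators the backend already supports.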

When examining what is needed to support the generality of PyTorch code, the team found that a key requirement is supporting dynamic shapes: models should accept tensors of different sizes without triggering a recompilation every time the shape changes.
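The recompilation problem can be observed by counting how often TorchDynamo hands a new graph to the backend. This sketch assumes PyTorch 2.x and uses the `dynamic` argument of `torch.compile`; the counting backend is an illustrative helper of our own. The same computation is compiled twice, once forced static and once with dynamic shapes requested, and each version is called with inputs of three different lengths.

```python
from collections import Counter
from typing import List

import torch

compile_counts: Counter = Counter()


def make_counting_backend(name: str):
    """Illustrative backend: count graphs received, then run them unchanged."""

    def backend(gm: torch.fx.GraphModule, example_inputs: List[torch.Tensor]):
        compile_counts[name] += 1
        return gm.forward

    return backend


# Two separate functions, so the two compiled versions keep separate Dynamo caches.
def affine_static(x: torch.Tensor) -> torch.Tensor:
    return x * 2 + 1


def affine_dynamic(x: torch.Tensor) -> torch.Tensor:
    return x * 2 + 1


f_static = torch.compile(affine_static, backend=make_counting_backend("static"), dynamic=False)
f_dynamic = torch.compile(affine_dynamic, backend=make_counting_backend("dynamic"), dynamic=True)

results = []
for n in (4, 8, 16):
    x = torch.randn(n)
    results.append((f_static(x), f_dynamic(x)))

print(dict(compile_counts))
```

With `dynamic=False`, each new length is expected to trigger a fresh compilation; with `dynamic=True`, one graph with a symbolic size should serve all three lengths. Sizes 0 and 1 are typically still specialized, which is why the example avoids them.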

When dynamic shapes are not supported, a common workaround is to pad inputs to the nearest power of 2. However, this incurs significant performance overhead and also leads to significantly longer compilation times.
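The padding workaround itself is simple, which is part of its appeal. In this minimal sketch (the helper names are illustrative), the last dimension is padded up to the next power of two so that a static-shape compiler only ever sees a few bucket sizes. The cost shows up immediately: a length-33 input becomes length 64, so almost half of the work is spent on padding.

```python
import torch
import torch.nn.functional as F


def next_power_of_2(n: int) -> int:
    """Smallest power of two >= n (valid for n >= 1)."""
    return 1 << (n - 1).bit_length()


def pad_to_power_of_2(x: torch.Tensor, pad_value: float = 0.0) -> torch.Tensor:
    """Right-pad the last dimension so its length is a power of two.

    A static-shape compiler then sees only a few bucket sizes (32, 64, 128, ...)
    instead of every possible length.
    """
    n = x.shape[-1]
    return F.pad(x, (0, next_power_of_2(n) - n), value=pad_value)


# A batch of two length-33 sequences is padded to length 64.
seq = torch.ones(2, 33)
padded = pad_to_power_of_2(seq)
wasted = 1 - seq.shape[-1] / padded.shape[-1]
print(padded.shape, f"{wasted:.0%} of the last dimension is padding")
```

Every element of padding still flows through the model's computation, which is where the performance overhead comes from.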

Now, with support for dynamic shapes, PyTorch 2.0 achieves up to 40% higher performance than eager mode.

Finally, on the PyTorch 2.x roadmap, the team hopes to push the compiled mode further in terms of performance and scalability.
