Write a Python program to crawl the fund flow of sectors

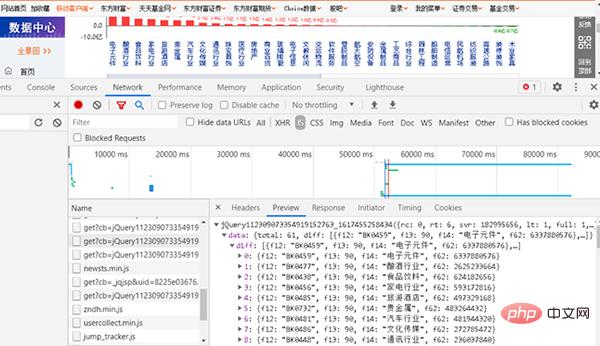

Through the above example of crawling the capital flow of individual stocks, you should be able to learn to write your own crawling code. Now consolidate it and do a similar small exercise. You need to write your own Python program to crawl the fund flow of online sectors. The crawled URL is http://data.eastmoney.com/bkzj/hy.html, and the display interface is shown in Figure 1.

# 图 1 The Faculty Stream URL interface

1, find js

# Directly press the F12 key, open the development commissioning tool and find the data The corresponding web page is shown in Figure 2.

Figure 2 Find the web page corresponding to JS

and then enter the URL into the browser. The URL is relatively long.

http://push2.eastmoney.com/api/qt/clist/get?cb=jQuery112309073354919152763_1617455258434&pn=1&pz=500&po=1&np=1&fields=f12,f13,f14,f62&fid=f62&fs=m:9 0+ t:2&ut=b2884a393a59ad64002292a3e90d46a5&_=1617455258435

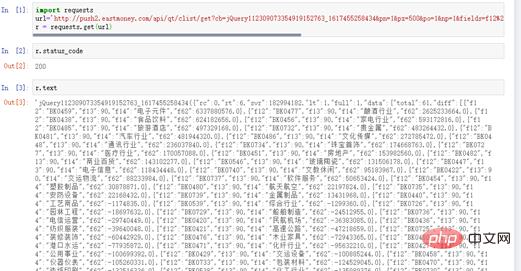

At this time, you will get feedback from the website, as shown in Figure 3.

Figure 3 Obtaining sections and capital flows from the website

The content corresponding to this URL is the content we want to crawl.

2, request request and response response status

Write the crawler code, see the following code for details:

# coding=utf-8 import requests url=" http://push2.eastmoney.com/api/qt/clist/get?cb=jQuery112309073354919152763_ 1617455258436&fid=f62&po=1&pz=50&pn=1&np=1&fltt=2&invt=2&ut=b2884a393a59ad64002292a3 e90d46a5&fs=m%3A90+t%3A2&fields=f12%2Cf14%2Cf2%2Cf3%2Cf62%2Cf184%2Cf66%2Cf69%2Cf72%2 Cf75%2Cf78%2Cf81%2Cf84%2Cf87%2Cf204%2Cf205%2Cf124" r = requests.get(url)

r.status_code displays 200, indicating that the response status is normal. r.text also has data, indicating that crawling the capital flow data is successful, as shown in Figure 4.

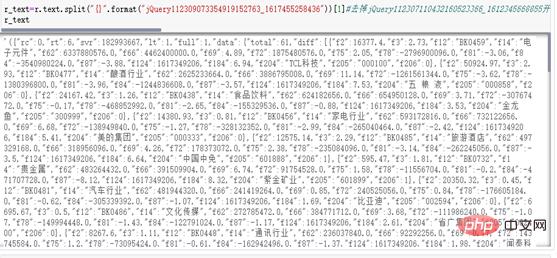

Figure 4 response status

3, clean str into JSON standard format

(1) Analyze r.text data. Its internal format is standard JSON, but with some extra prefixes in front. Remove the jQ prefix and use the split() function to complete this operation. See the following code for details:

r_text=r.text.split("{}".format("jQuery112309073354919152763_1617455258436"))[1]

r_textThe running results are shown in Figure 5.

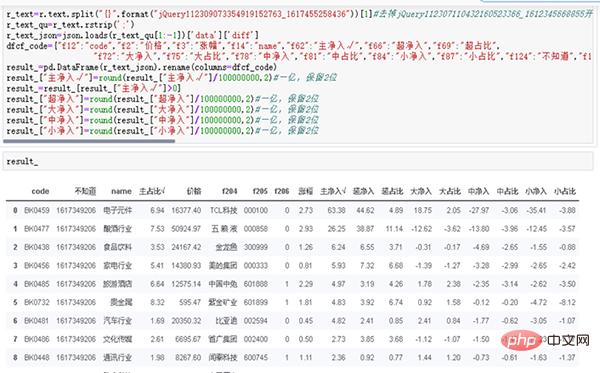

r_text_qu=r_text.rstrip(';')

r_text_json=json.loads(r_text_qu[1:-1])['data']['diff']

dfcf_code={"f12":"code","f2":"价格","f3":"涨幅","f14":"name","f62":"主净入√","f66":"超净入","f69":"超占比", "f72":"大净入","f75":"大占比","f78":"中净入","f81":"中占比","f84":"小净入","f87":"小占比","f124":"不知道","f184":"主占比√"}

result_=pd.DataFrame(r_text_json).rename(columns=dfcf_code)

result_["主净入√"]=round(result_["主净入√"]/100000000,2)#一亿,保留2位

result_=result_[result_["主净入√"]>0]

result_["超净入"]=round(result_["超净入"]/100000000,2)#一亿,保留2位

result_["大净入"]=round(result_["大净入"]/100000000,2)#一亿,保留2位

result_["中净入"]=round(result_["中净入"]/100000000,2)#一亿,保留2位

result_["小净入"]=round(result_["小净入"]/100000000,2)#一亿,保留2位

result_ Through the above two examples of fund crawling, you must have understood some of the methods of using crawlers. The core idea is:

Through the above two examples of fund crawling, you must have understood some of the methods of using crawlers. The core idea is:

(1) Select the advantages of capital flows of individual stocks;

(2) Obtain the URL and analyze it;

(3) Use crawlers to collect data Get and save data.

Figure 6 Data SavingSummaryJSON format data is one of the standardized data formats used by many websites. The lightweight data exchange format is very easy to read and write, and can effectively improve network transmission efficiency. The first thing to crawl is the string in str format. Through data processing and processing, it is turned into standard JSON format, and then into Pandas format.Through case analysis and actual combat, we must learn to write our own code to crawl financial data and have the ability to convert it into JSON standard format. Complete daily data crawling and data storage work to provide effective data support for future historical testing and historical analysis of data.

Of course, capable readers can save the results to databases such as MySQL, MongoDB, or even the cloud database Mongo Atlas. The author will not focus on the explanation here. We focus entirely on the study of quantitative learning and strategy. Using txt format to save data can completely solve the problem of early data storage, and the data is also complete and effective.

The above is the detailed content of Write a Python program to crawl the fund flow of sectors. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Is there any mobile app that can convert XML into PDF?

Apr 02, 2025 pm 08:54 PM

Is there any mobile app that can convert XML into PDF?

Apr 02, 2025 pm 08:54 PM

An application that converts XML directly to PDF cannot be found because they are two fundamentally different formats. XML is used to store data, while PDF is used to display documents. To complete the transformation, you can use programming languages and libraries such as Python and ReportLab to parse XML data and generate PDF documents.

Is the conversion speed fast when converting XML to PDF on mobile phone?

Apr 02, 2025 pm 10:09 PM

Is the conversion speed fast when converting XML to PDF on mobile phone?

Apr 02, 2025 pm 10:09 PM

The speed of mobile XML to PDF depends on the following factors: the complexity of XML structure. Mobile hardware configuration conversion method (library, algorithm) code quality optimization methods (select efficient libraries, optimize algorithms, cache data, and utilize multi-threading). Overall, there is no absolute answer and it needs to be optimized according to the specific situation.

How to convert XML files to PDF on your phone?

Apr 02, 2025 pm 10:12 PM

How to convert XML files to PDF on your phone?

Apr 02, 2025 pm 10:12 PM

It is impossible to complete XML to PDF conversion directly on your phone with a single application. It is necessary to use cloud services, which can be achieved through two steps: 1. Convert XML to PDF in the cloud, 2. Access or download the converted PDF file on the mobile phone.

How to control the size of XML converted to images?

Apr 02, 2025 pm 07:24 PM

How to control the size of XML converted to images?

Apr 02, 2025 pm 07:24 PM

To generate images through XML, you need to use graph libraries (such as Pillow and JFreeChart) as bridges to generate images based on metadata (size, color) in XML. The key to controlling the size of the image is to adjust the values of the <width> and <height> tags in XML. However, in practical applications, the complexity of XML structure, the fineness of graph drawing, the speed of image generation and memory consumption, and the selection of image formats all have an impact on the generated image size. Therefore, it is necessary to have a deep understanding of XML structure, proficient in the graphics library, and consider factors such as optimization algorithms and image format selection.

How to open xml format

Apr 02, 2025 pm 09:00 PM

How to open xml format

Apr 02, 2025 pm 09:00 PM

Use most text editors to open XML files; if you need a more intuitive tree display, you can use an XML editor, such as Oxygen XML Editor or XMLSpy; if you process XML data in a program, you need to use a programming language (such as Python) and XML libraries (such as xml.etree.ElementTree) to parse.

Recommended XML formatting tool

Apr 02, 2025 pm 09:03 PM

Recommended XML formatting tool

Apr 02, 2025 pm 09:03 PM

XML formatting tools can type code according to rules to improve readability and understanding. When selecting a tool, pay attention to customization capabilities, handling of special circumstances, performance and ease of use. Commonly used tool types include online tools, IDE plug-ins, and command-line tools.

Is there a mobile app that can convert XML into PDF?

Apr 02, 2025 pm 09:45 PM

Is there a mobile app that can convert XML into PDF?

Apr 02, 2025 pm 09:45 PM

There is no APP that can convert all XML files into PDFs because the XML structure is flexible and diverse. The core of XML to PDF is to convert the data structure into a page layout, which requires parsing XML and generating PDF. Common methods include parsing XML using Python libraries such as ElementTree and generating PDFs using ReportLab library. For complex XML, it may be necessary to use XSLT transformation structures. When optimizing performance, consider using multithreaded or multiprocesses and select the appropriate library.

What is the function of C language sum?

Apr 03, 2025 pm 02:21 PM

What is the function of C language sum?

Apr 03, 2025 pm 02:21 PM

There is no built-in sum function in C language, so it needs to be written by yourself. Sum can be achieved by traversing the array and accumulating elements: Loop version: Sum is calculated using for loop and array length. Pointer version: Use pointers to point to array elements, and efficient summing is achieved through self-increment pointers. Dynamically allocate array version: Dynamically allocate arrays and manage memory yourself, ensuring that allocated memory is freed to prevent memory leaks.