How do GNNs model spatiotemporal information? A concise explanation of the review 'Spatial-temporal Graph Neural Network' from Queen Mary University of London

Graph neural networks have gained huge interest in the past few years. These powerful algorithms extend deep learning models to non-Euclidean spaces. However, they assume a static graph structure, which limits their performance when data changes over time. A spatiotemporal graph neural network is an extension of the graph neural network that takes the time factor into account.

In recent years, various spatiotemporal graph neural network algorithms have been proposed and have outperformed other deep learning algorithms in multiple time-related applications. This review covers the main topics around spatiotemporal graph neural networks: algorithms, applications, and open challenges.

Paper address: https://www.php.cn/link/1915523773b16865a73a38acc952ccda

1. Introduction

A graph neural network (GNN) is a type of deep learning model specifically designed to process graph-structured data. These models exploit graph topology to learn meaningful representations of the nodes and edges of the graph. Graph neural networks are an extension of traditional convolutional neural networks and have proven effective in tasks such as graph classification, node classification, and link prediction. One of the key advantages of GNNs is that they maintain good performance even as the size of the underlying graph grows, because the number of learnable parameters is independent of the number of nodes in the graph. GNNs have been widely used in fields such as recommendation systems, drug discovery and biology, and resource allocation in autonomous systems. However, these models are limited to static graph data, where the graph structure is fixed. In recent years, time-varying graph data has attracted increasing attention; it appears in many systems and carries valuable temporal information. Examples of time-varying graph data include multivariate time series, social networks, and audio-visual systems.
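To make the point about parameter count concrete, here is a minimal sketch of a single message-passing layer in plain Python. It is not taken from the reviewed paper: the mean aggregation, the ReLU, and the names `gnn_layer`, `W`, `small`, and `large` are illustrative assumptions. The same weight matrix `W` is applied to a 3-node graph and a 6-node graph without any change.

```python
# Illustrative sketch (assumption): a mean-aggregation graph layer.
# W has shape (d_in, d_out), so its size does not depend on the node count.

def gnn_layer(features, neighbors, W):
    """One mean-aggregation graph layer followed by a ReLU.

    features:  list of per-node feature vectors (length d_in each)
    neighbors: dict mapping node index -> list of neighbor indices
    W:         d_in x d_out weight matrix shared by every node
    """
    d_in, d_out = len(W), len(W[0])
    out = []
    for v, x_v in enumerate(features):
        # Average the node's own features together with its neighbors' features.
        group = [x_v] + [features[u] for u in neighbors.get(v, [])]
        mean = [sum(x[i] for x in group) / len(group) for i in range(d_in)]
        # Apply the shared linear map, then ReLU.
        h = [max(0.0, sum(mean[i] * W[i][j] for i in range(d_in)))
             for j in range(d_out)]
        out.append(h)
    return out

W = [[1.0, -1.0], [0.5, 2.0]]  # 4 parameters, regardless of graph size

# A 3-node path graph and a 6-node ring reuse the same W.
small = gnn_layer([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]],
                  {0: [1], 1: [0, 2], 2: [1]}, W)
large = gnn_layer([[1.0, 0.0]] * 6,
                  {i: [(i + 1) % 6, (i - 1) % 6] for i in range(6)}, W)
print(len(small), len(large))  # 3 6
```

Only the inputs grow with the graph; the learnable part (`W`) stays fixed, which is why such layers carry over to larger graphs.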

To meet this demand, a new family of GNNs has emerged: spatiotemporal GNNs, which take both the spatial and temporal dimensions of the data into account by learning temporal representations of the graph structure. The paper provides a comprehensive review of state-of-the-art spatiotemporal graph neural networks. It begins with a brief overview of the different types of spatiotemporal graph neural networks and their basic assumptions. It then studies the specific algorithms used in spatiotemporal GNNs in more detail and provides a taxonomy for grouping these models. The paper also surveys applications of spatiotemporal GNNs, highlighting key areas where these models have achieved state-of-the-art results. Finally, it discusses the challenges facing the field and future research directions. Overall, the review aims to give a comprehensive and in-depth account of spatiotemporal graph neural networks: the current state of the field, the key challenges that still need to be addressed, and the future possibilities of these models.

2. Algorithm

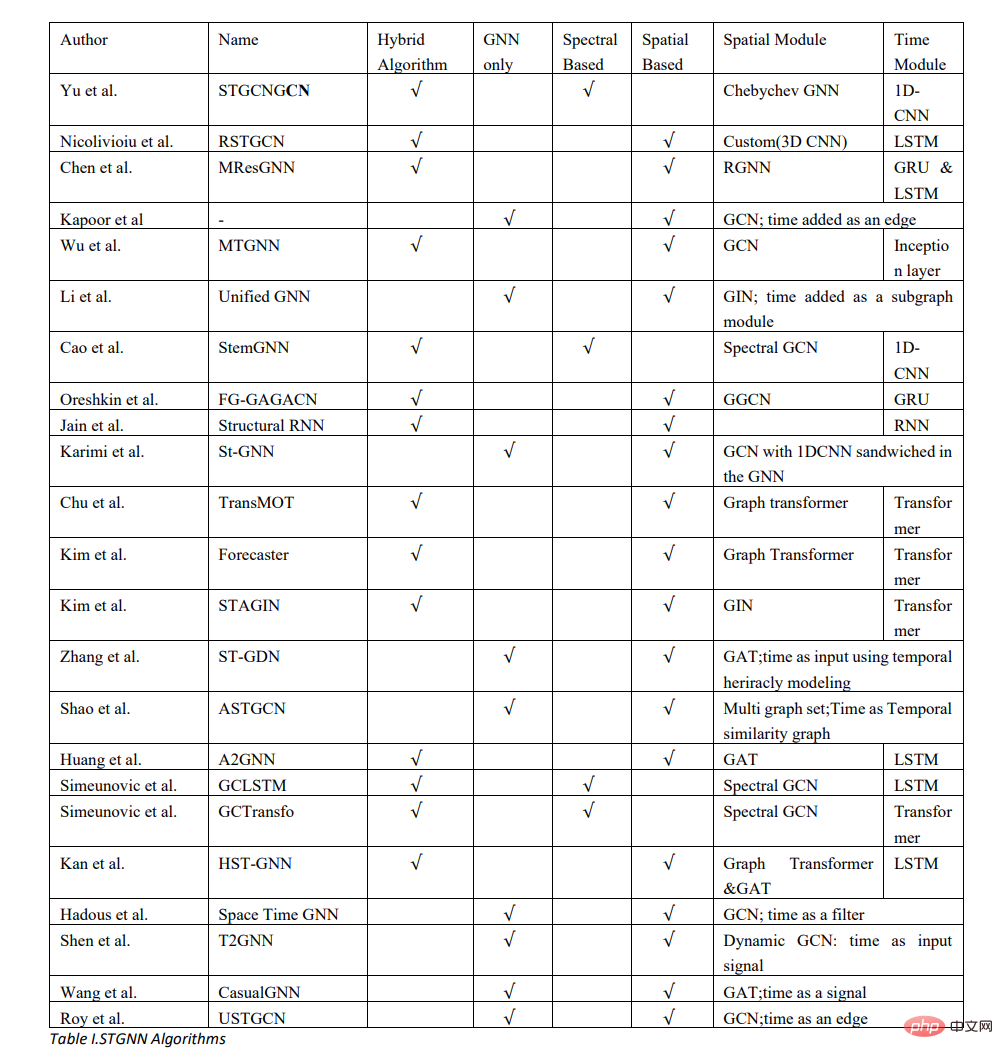

From an algorithmic perspective, spatiotemporal graph neural networks can be divided into two categories: spectral-based and spatial-based. A second way to classify them is by how they introduce time variation: either by using another machine learning algorithm or by defining time within the graph structure.
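Spectral-based methods are usually defined through the graph Laplacian, whereas spatial-based methods aggregate neighbor features directly. As a small illustration, the sketch below computes the symmetric normalized Laplacian L = I - D^{-1/2} A D^{-1/2} for a toy graph. This normalization is one common choice and is an assumption here; the source does not say which form the reviewed models use. The function name and the example graph are hypothetical.

```python
import math

def normalized_laplacian(adj):
    """Return L = I - D^{-1/2} A D^{-1/2} for a symmetric adjacency matrix."""
    n = len(adj)
    deg = [sum(row) for row in adj]
    # Isolated nodes (degree 0) get a zero scaling factor to avoid division by zero.
    inv_sqrt = [1.0 / math.sqrt(d) if d > 0 else 0.0 for d in deg]
    return [[(1.0 if i == j else 0.0) - inv_sqrt[i] * adj[i][j] * inv_sqrt[j]
             for j in range(n)]
            for i in range(n)]

# Toy path graph: 0 - 1 - 2
A = [[0, 1, 0],
     [1, 0, 1],
     [0, 1, 0]]
L = normalized_laplacian(A)
```

Spectral filters are then expressed as functions of the eigenvalues of `L`, which is what ties these models to the structure of the whole graph.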

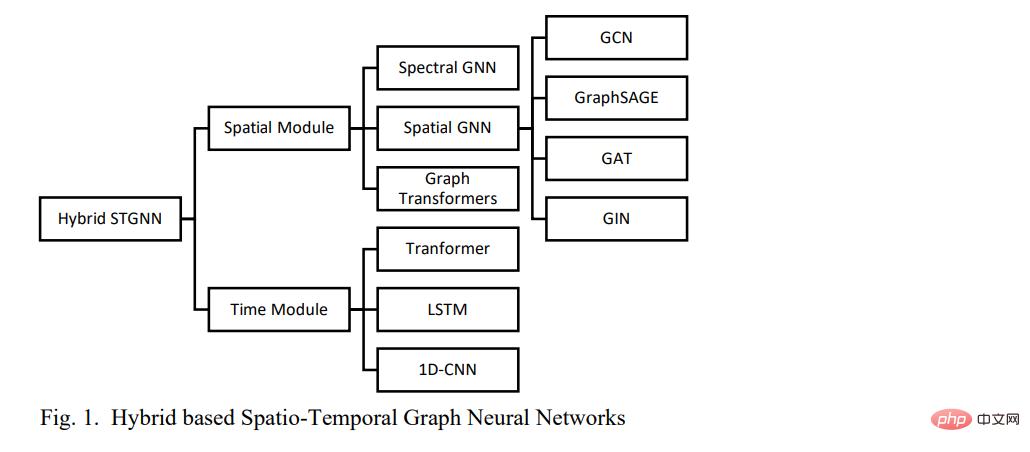

2.1 Hybrid spatiotemporal graph neural network

A hybrid spatiotemporal graph neural network consists of two main components: a spatial component and a temporal component. A graph neural network algorithm models the spatial dependencies in the data, while the temporal dependencies are handled by another machine learning algorithm.
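The two-component design can be sketched in a few lines. The example below is a toy under stated assumptions, not the method of any reviewed model: the spatial component is a parameter-free mean over neighbors, the temporal component is a scalar recurrent update, and the weights `w_in` and `w_rec` are hypothetical fixed values that a real model would learn.

```python
import math

def spatial_step(x, neighbors):
    """Spatial component: mean over each node and its neighbors."""
    out = []
    for v in range(len(x)):
        group = [x[v]] + [x[u] for u in neighbors[v]]
        out.append(sum(group) / len(group))
    return out

def hybrid_forward(series, neighbors, w_in=0.5, w_rec=0.5):
    """Temporal component: a scalar recurrent update applied per node.

    series: list of snapshots, each a list with one scalar signal per node
    w_in, w_rec: hypothetical fixed weights (a real model would learn them)
    """
    hidden = [0.0] * len(series[0])
    for x_t in series:
        # Mix information across the graph at this time step.
        s_t = spatial_step(x_t, neighbors)
        # Carry the mixed signal forward through time.
        hidden = [math.tanh(w_in * s + w_rec * h) for s, h in zip(s_t, hidden)]
    return hidden

neighbors = {0: [1], 1: [0, 2], 2: [1]}
series = [[1.0, 0.0, 0.0],
          [0.0, 1.0, 0.0],
          [0.0, 0.0, 1.0]]
final_hidden = hybrid_forward(series, neighbors)
```

The split keeps the two concerns separate: the graph layer can be swapped without touching the recurrent part, and vice versa.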

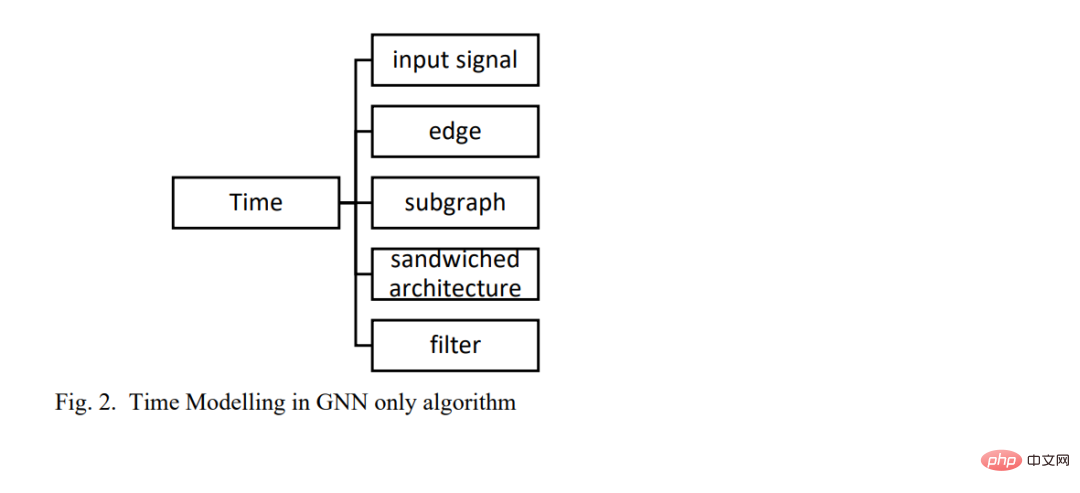

2.2 Solo-graph neural network

Another way to model time in a spatiotemporal graph neural network is to define the temporal dimension inside the GNN itself. Proposed methods include defining time as edges, feeding time into the GNN as a signal, modeling time as subgraphs, and sandwiching other machine learning architectures within GNNs (Figure 2).
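As an illustration of the "time as edges" idea, the sketch below unrolls a static graph over several time steps and links every node to its own copy in the next step. The indexing scheme and the function name `build_spatiotemporal_graph` are assumptions made for this example; the reviewed methods may construct temporal edges differently.

```python
def build_spatiotemporal_graph(spatial_edges, num_nodes, num_steps):
    """Unroll a static graph over time into one larger graph.

    Each (node, step) pair becomes a vertex with index step * num_nodes + node.
    Spatial edges are copied into every time step; temporal edges link each
    node to its own copy in the next step.
    """
    def vid(node, step):
        return step * num_nodes + node

    edges = []
    for t in range(num_steps):
        for u, v in spatial_edges:
            edges.append((vid(u, t), vid(v, t), "spatial"))
        if t + 1 < num_steps:
            for u in range(num_nodes):
                edges.append((vid(u, t), vid(u, t + 1), "temporal"))
    return edges

# 3 nodes on a path, observed over 3 time steps.
edges = build_spatiotemporal_graph([(0, 1), (1, 2)], num_nodes=3, num_steps=3)
```

Once time is encoded this way, an ordinary GNN run on the unrolled graph passes messages across both space and time without a separate temporal module.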

3. Application

3.1 Multivariate time series forecasting

Inspired by the ability of graph neural networks to handle relational dependencies [10], spatiotemporal graph neural networks are widely used in multivariate time series forecasting. Applications include traffic forecasting, Covid forecasting, photovoltaic power consumption, RSU communications, and seismic applications.
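In forecasting setups like these, the series is typically cut into windows of past observations (model input) and future observations (target), with one value per node or variable at each step. The following sketch shows that preprocessing step only; the function name `make_windows`, its parameters, and the data are made up for illustration and do not come from the paper.

```python
def make_windows(series, history, horizon):
    """Split a multivariate series into (input, target) pairs.

    series:  list of time steps, each a list with one value per node/variable
    history: number of past steps given to the model
    horizon: number of future steps to predict
    """
    pairs = []
    for start in range(len(series) - history - horizon + 1):
        past = series[start:start + history]
        future = series[start + history:start + history + horizon]
        pairs.append((past, future))
    return pairs

# 6 time steps, 2 variables (made-up readings).
readings = [[t, 10 * t] for t in range(6)]
pairs = make_windows(readings, history=3, horizon=1)
```

Each `past` window would be fed to the spatiotemporal GNN together with the graph that relates the variables, and the model would be trained to reproduce `future`.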

3.2 Human-object interaction

In machine learning and computer vision, learning in the spatiotemporal domain is still a very challenging problem. The main challenge is how to model interactions between objects and higher-level concepts in large spatiotemporal contexts [18]. In such a difficult learning task, it is crucial to effectively model spatial relationships, local appearance, and complex interactions and changes over time. [18] introduced a spatiotemporal graph neural network model that is recurrent in both space and time, making it suitable for capturing the local appearance and the complex high-level interactions of different entities and objects in changing world scenes.

3.3 Dynamic graph representation

Temporal graph representation learning has long been considered a very important aspect of graph machine learning [15,31]. To address the limitation that existing methods rely on discrete snapshots of temporal graphs and cannot capture powerful representations, [3] proposed a dynamic graph representation learning method based on spatiotemporal graph neural networks. Furthermore, [15] used spatiotemporal GNNs to dynamically represent brain graphs.

Multi-target tracking

Multi-target tracking in videos relies heavily on modeling the spatiotemporal interactions between targets [16]. [16] proposed a spatiotemporal graph neural network algorithm to model the spatial and temporal interactions between objects.

3.4 Sign language translation

Sign language conveys meaning through a visual-manual modality and is the main means of communication for deaf and hard-of-hearing people. Machine learning has been introduced to bridge the communication gap between spoken language users and sign language users. Traditionally, neural machine translation has been widely adopted, but more advanced methods are needed to capture the spatial properties of sign languages. [13] proposed a sign language translation system based on a spatiotemporal graph neural network, which captures the spatiotemporal structure of sign language well and outperformed traditional neural machine translation methods.

3.5 Technology growth ranking

Understanding the growth rate of technologies is key to business strategy in the technology sector. In addition, forecasting the growth rates of technologies and their relationships with each other can support business decisions in product definition, marketing strategy, and R&D. [32] proposed a method for predicting technology growth rankings on social networks based on spatiotemporal graph neural networks.

4. Conclusion

Graph neural networks have gained tremendous interest in the past few years. These powerful algorithms extend deep learning models to non-Euclidean spaces. However, graph neural networks assume a static graph structure, which limits their performance when data changes over time. A spatiotemporal graph neural network is an extension of the graph neural network that takes the time factor into account. The review provides a comprehensive overview of spatiotemporal graph neural networks and proposes a taxonomy that divides them into two categories according to how they handle time variation. It also discusses their wide range of applications. Finally, it proposes future research directions based on the open challenges that spatiotemporal graph neural networks currently face.

Reference materials: https://www.php.cn/link/1915523773b16865a73a38acc952ccda

The above is the detailed content of How does GNN model spatiotemporal information? A review of 'Spatial-temporal Graph Neural Network' at Queen Mary University of London, a concise explanation of the spatio-temporal graph neural network method. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

Methods and steps for using BERT for sentiment analysis in Python

Jan 22, 2024 pm 04:24 PM

Methods and steps for using BERT for sentiment analysis in Python

Jan 22, 2024 pm 04:24 PM

BERT is a pre-trained deep learning language model proposed by Google in 2018. The full name is BidirectionalEncoderRepresentationsfromTransformers, which is based on the Transformer architecture and has the characteristics of bidirectional encoding. Compared with traditional one-way coding models, BERT can consider contextual information at the same time when processing text, so it performs well in natural language processing tasks. Its bidirectionality enables BERT to better understand the semantic relationships in sentences, thereby improving the expressive ability of the model. Through pre-training and fine-tuning methods, BERT can be used for various natural language processing tasks, such as sentiment analysis, naming

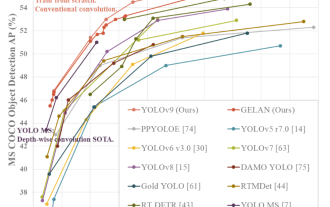

YOLO is immortal! YOLOv9 is released: performance and speed SOTA~

Feb 26, 2024 am 11:31 AM

YOLO is immortal! YOLOv9 is released: performance and speed SOTA~

Feb 26, 2024 am 11:31 AM

Today's deep learning methods focus on designing the most suitable objective function so that the model's prediction results are closest to the actual situation. At the same time, a suitable architecture must be designed to obtain sufficient information for prediction. Existing methods ignore the fact that when the input data undergoes layer-by-layer feature extraction and spatial transformation, a large amount of information will be lost. This article will delve into important issues when transmitting data through deep networks, namely information bottlenecks and reversible functions. Based on this, the concept of programmable gradient information (PGI) is proposed to cope with the various changes required by deep networks to achieve multi-objectives. PGI can provide complete input information for the target task to calculate the objective function, thereby obtaining reliable gradient information to update network weights. In addition, a new lightweight network framework is designed

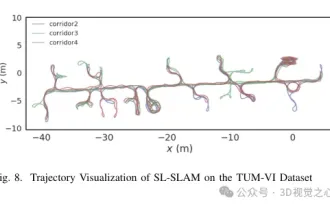

Beyond ORB-SLAM3! SL-SLAM: Low light, severe jitter and weak texture scenes are all handled

May 30, 2024 am 09:35 AM

Beyond ORB-SLAM3! SL-SLAM: Low light, severe jitter and weak texture scenes are all handled

May 30, 2024 am 09:35 AM

Written previously, today we discuss how deep learning technology can improve the performance of vision-based SLAM (simultaneous localization and mapping) in complex environments. By combining deep feature extraction and depth matching methods, here we introduce a versatile hybrid visual SLAM system designed to improve adaptation in challenging scenarios such as low-light conditions, dynamic lighting, weakly textured areas, and severe jitter. sex. Our system supports multiple modes, including extended monocular, stereo, monocular-inertial, and stereo-inertial configurations. In addition, it also analyzes how to combine visual SLAM with deep learning methods to inspire other research. Through extensive experiments on public datasets and self-sampled data, we demonstrate the superiority of SL-SLAM in terms of positioning accuracy and tracking robustness.

Latent space embedding: explanation and demonstration

Jan 22, 2024 pm 05:30 PM

Latent space embedding: explanation and demonstration

Jan 22, 2024 pm 05:30 PM

Latent Space Embedding (LatentSpaceEmbedding) is the process of mapping high-dimensional data to low-dimensional space. In the field of machine learning and deep learning, latent space embedding is usually a neural network model that maps high-dimensional input data into a set of low-dimensional vector representations. This set of vectors is often called "latent vectors" or "latent encodings". The purpose of latent space embedding is to capture important features in the data and represent them into a more concise and understandable form. Through latent space embedding, we can perform operations such as visualizing, classifying, and clustering data in low-dimensional space to better understand and utilize the data. Latent space embedding has wide applications in many fields, such as image generation, feature extraction, dimensionality reduction, etc. Latent space embedding is the main

Understand in one article: the connections and differences between AI, machine learning and deep learning

Mar 02, 2024 am 11:19 AM

Understand in one article: the connections and differences between AI, machine learning and deep learning

Mar 02, 2024 am 11:19 AM

In today's wave of rapid technological changes, Artificial Intelligence (AI), Machine Learning (ML) and Deep Learning (DL) are like bright stars, leading the new wave of information technology. These three words frequently appear in various cutting-edge discussions and practical applications, but for many explorers who are new to this field, their specific meanings and their internal connections may still be shrouded in mystery. So let's take a look at this picture first. It can be seen that there is a close correlation and progressive relationship between deep learning, machine learning and artificial intelligence. Deep learning is a specific field of machine learning, and machine learning

Super strong! Top 10 deep learning algorithms!

Mar 15, 2024 pm 03:46 PM

Super strong! Top 10 deep learning algorithms!

Mar 15, 2024 pm 03:46 PM

Almost 20 years have passed since the concept of deep learning was proposed in 2006. Deep learning, as a revolution in the field of artificial intelligence, has spawned many influential algorithms. So, what do you think are the top 10 algorithms for deep learning? The following are the top algorithms for deep learning in my opinion. They all occupy an important position in terms of innovation, application value and influence. 1. Deep neural network (DNN) background: Deep neural network (DNN), also called multi-layer perceptron, is the most common deep learning algorithm. When it was first invented, it was questioned due to the computing power bottleneck. Until recent years, computing power, The breakthrough came with the explosion of data. DNN is a neural network model that contains multiple hidden layers. In this model, each layer passes input to the next layer and

1.3ms takes 1.3ms! Tsinghua's latest open source mobile neural network architecture RepViT

Mar 11, 2024 pm 12:07 PM

1.3ms takes 1.3ms! Tsinghua's latest open source mobile neural network architecture RepViT

Mar 11, 2024 pm 12:07 PM

Paper address: https://arxiv.org/abs/2307.09283 Code address: https://github.com/THU-MIG/RepViTRepViT performs well in the mobile ViT architecture and shows significant advantages. Next, we explore the contributions of this study. It is mentioned in the article that lightweight ViTs generally perform better than lightweight CNNs on visual tasks, mainly due to their multi-head self-attention module (MSHA) that allows the model to learn global representations. However, the architectural differences between lightweight ViTs and lightweight CNNs have not been fully studied. In this study, the authors integrated lightweight ViTs into the effective

How to use CNN and Transformer hybrid models to improve performance

Jan 24, 2024 am 10:33 AM

How to use CNN and Transformer hybrid models to improve performance

Jan 24, 2024 am 10:33 AM

Convolutional Neural Network (CNN) and Transformer are two different deep learning models that have shown excellent performance on different tasks. CNN is mainly used for computer vision tasks such as image classification, target detection and image segmentation. It extracts local features on the image through convolution operations, and performs feature dimensionality reduction and spatial invariance through pooling operations. In contrast, Transformer is mainly used for natural language processing (NLP) tasks such as machine translation, text classification, and speech recognition. It uses a self-attention mechanism to model dependencies in sequences, avoiding the sequential computation in traditional recurrent neural networks. Although these two models are used for different tasks, they have similarities in sequence modeling, so