AI knows what you are thinking and draws it for you. The project code is now open source

In the science fiction novel "The Three-Body Problem", the Trisolarans, who attempt to occupy Earth, are given a very unusual trait: they share information through brain waves, so their thoughts are transparent and they are incapable of scheming. For them, "thinking" and "speaking" are the same word. Humans, by contrast, exploited the opacity of their own thoughts to devise the "Wallfacer Project", eventually deceiving the Trisolarans and winning a temporary victory.

So the question is: is human thinking really completely opaque? With the emergence of new techniques, the answer seems less clear-cut. Many researchers are trying to unlock the mysteries of human thought by decoding brain signals into text, images, and other information.

Recently, two research teams made important progress in image decoding at almost the same time, and both of their papers have been accepted by CVPR 2023.

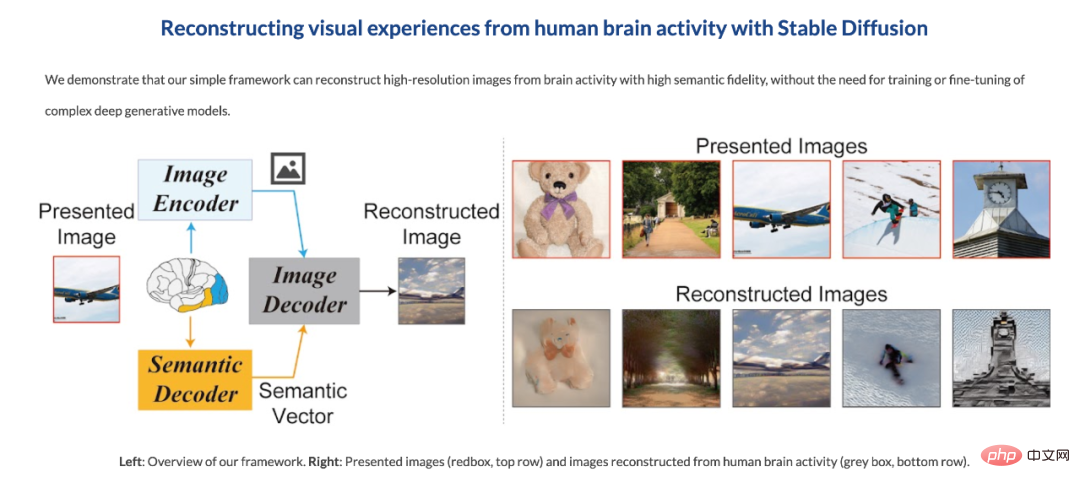

The first team is from Osaka University. They used the currently very popular Stable Diffusion model to reconstruct high-resolution, high-fidelity images from human brain activity recorded with functional magnetic resonance imaging (fMRI) (see "Stable Diffusion reads your brain signals to reproduce images, and the research was accepted by CVPR").

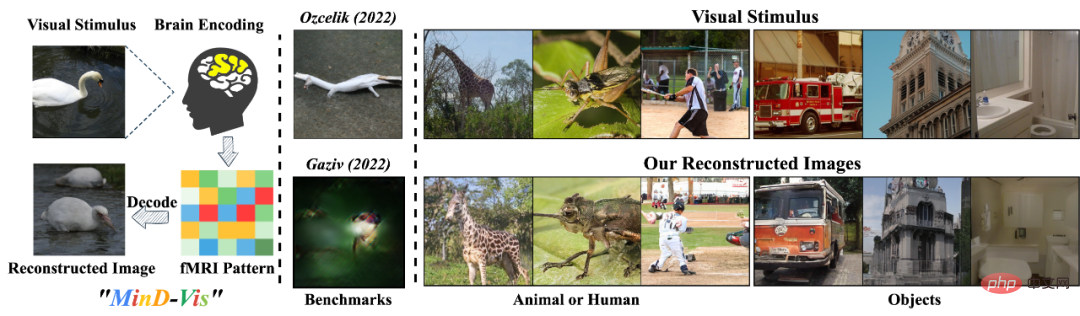

Coincidentally, at almost the same time, Chinese researchers from the National University of Singapore, the Chinese University of Hong Kong, and Stanford University produced similar results. They developed a human visual decoder called "MinD-Vis", which decodes human visual stimuli directly from fMRI data using pre-trained masked modeling and a latent diffusion model. The images it generates are not only reasonably detailed but also accurately capture the semantics and features of the original image (such as texture and shape). The code for this research is open source.

Paper title: Seeing Beyond the Brain: Conditional Diffusion Model with Sparse Masked Modeling for Vision Decoding

- Paper link: http://arxiv.org/abs/2211.06956

- Code link: https://github.com/zjc062/mind-vis

- Project link: https://mind-vis.github.io/

Research Overview

"What you see is what you think." Human perception and prior knowledge are closely linked in the brain. Our perception of the world is shaped not only by objective stimuli but also by our experience, and these influences together give rise to complex brain activity. Understanding this activity and decoding the information it carries is one of the central goals of cognitive neuroscience, and decoding visual information is a particularly challenging part of it.

Functional magnetic resonance imaging (fMRI) is a commonly used non-invasive and effective method for recovering visual information such as image categories.

The purpose of MinD-Vis is to explore the possibility of using deep learning models to decode visual stimuli directly from fMRI data.

Previous methods that decode complex neural activity directly from fMRI data suffer from a shortage of {fMRI, image} pairs and a lack of effective biological guidance, so their reconstructions are usually blurry and semantically meaningless. Learning effective fMRI representations, which help connect brain activity to visual stimuli, is therefore an important challenge.

Individual variability further complicates the problem: representations need to be learned from large datasets, and the constraints on conditional synthesis from fMRI need to be relaxed.

The authors therefore argue that combining self-supervised learning with a pretext task and a large-scale generative model lets the model be fine-tuned on a relatively small dataset while gaining contextual knowledge and strong generative abilities. Motivated by this analysis, MinD-Vis proposes masked signal modeling together with a double-conditioned latent diffusion model for human visual decoding. The specific contributions are as follows:

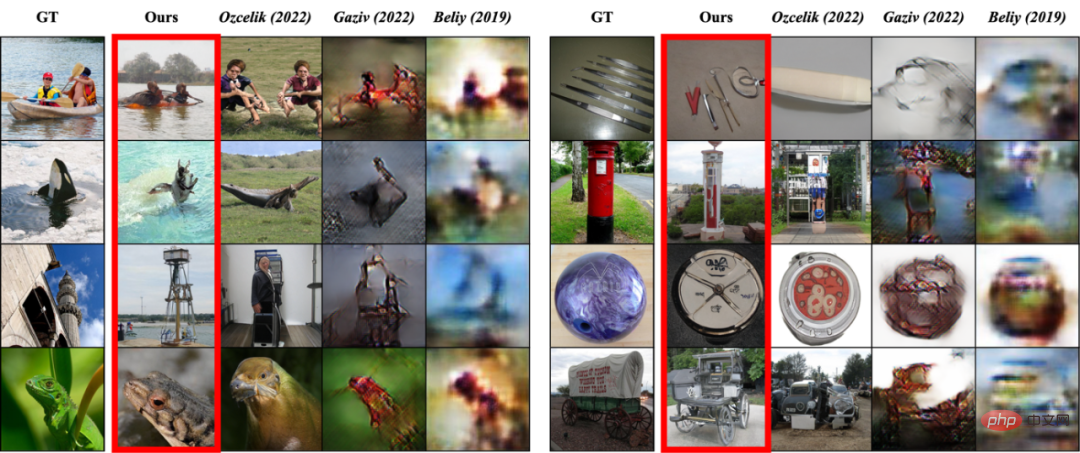

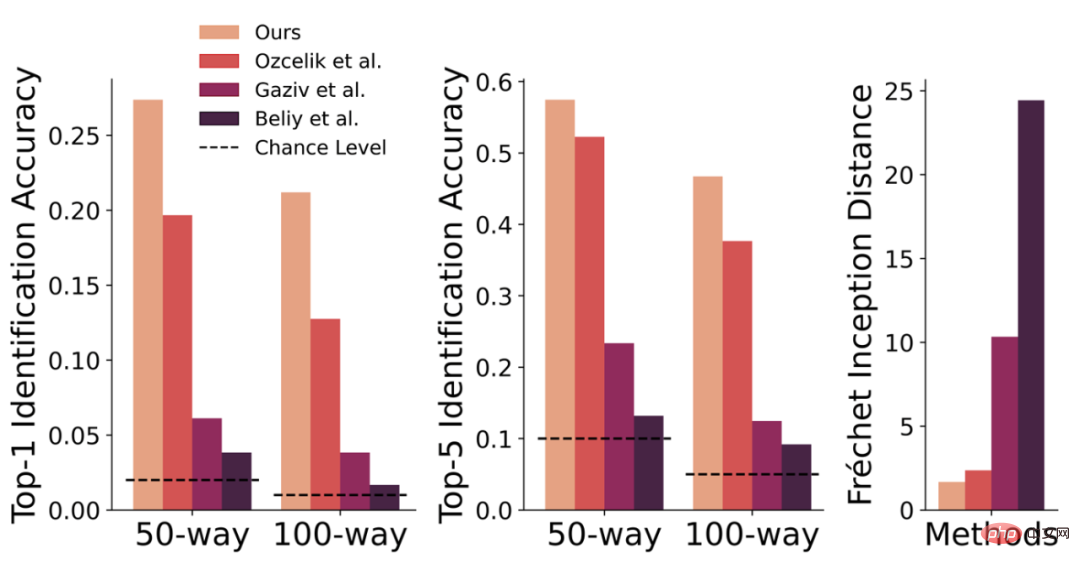

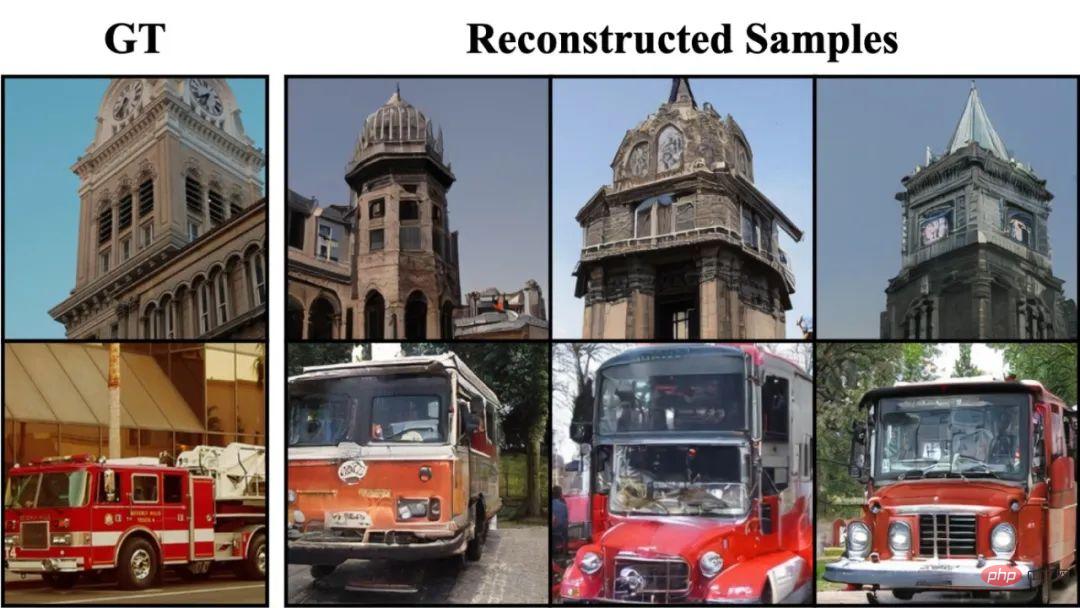

Comparison with previous methods – Generation quality

Comparison with previous methods – Quantitative comparison of evaluation indicators

Self-supervised learning for large-scale generative models

Because collecting {fMRI, image} pairs is expensive and time-consuming, this task has long suffered from a shortage of labeled data. In addition, each dataset, and each individual's data, exhibits some degree of domain shift.

In this task, the researchers aimed to establish a connection between brain activity and visual stimuli and use it to generate the corresponding images.

To do this, they used self-supervised learning and large-scale generative models. They argue that this approach lets the model be fine-tuned on a relatively small dataset while gaining contextual knowledge and strong generative capabilities.

MinD-Vis Framework

The following sections describe the MinD-Vis framework in detail, along with the reasoning behind its design.

fMRI data has the following characteristics and challenges:

- fMRI observes changes in brain activity by measuring changes in the blood-oxygen-level-dependent (BOLD) signal across 3D voxels. The amplitudes of neighboring voxels are often similar, which indicates spatial redundancy in fMRI data.

- When processing fMRI data, a region of interest (ROI) is usually extracted and flattened into a 1D vector. In this task, only signals from the visual cortex are extracted, so the number of voxels (about 4,000) is far smaller than the number of pixels in an image (256 × 256 × 3). In both dimensionality and the way it is processed, such data differs considerably from ordinary image data.

- Because of individual differences, differences in experimental design, and the complexity of brain signals, each dataset, and each individual's data, exhibits some degree of domain shift.

- For a fixed visual stimulus, researchers want the image reconstructed by the model to be semantically consistent; however, because individuals respond differently to the same stimulus, they also want the model to allow some variance and flexibility.
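The ROI step and the size gap described above can be sketched with NumPy boolean indexing. This is a minimal illustration, not the authors' preprocessing pipeline: the volume shape, the box-shaped "visual cortex" mask, and the function name `extract_roi_vector` are all hypothetical, and real ROIs come from anatomical or functional atlases rather than a rectangular box.

```python
import numpy as np


def extract_roi_vector(volume: np.ndarray, roi_mask: np.ndarray) -> np.ndarray:
    """Return the voxels inside a boolean ROI mask as a flat 1D vector.

    volume:   3D array of BOLD amplitudes at one time point, shape (x, y, z)
    roi_mask: boolean array with the same shape, True inside the ROI
    """
    if volume.shape != roi_mask.shape:
        raise ValueError("volume and roi_mask must have the same shape")
    # Boolean indexing selects the ROI voxels and flattens them in row-major order
    return volume[roi_mask]


rng = np.random.default_rng(0)
volume = rng.standard_normal((64, 64, 40))  # hypothetical whole-brain voxel grid

# Hypothetical box-shaped ROI standing in for the visual cortex: 20 * 20 * 10 = 4000 voxels
roi_mask = np.zeros(volume.shape, dtype=bool)
roi_mask[20:40, 10:30, 5:15] = True

fmri_vector = extract_roi_vector(volume, roi_mask)
pixels_per_image = 256 * 256 * 3

print(fmri_vector.shape)                    # (4000,)
print(pixels_per_image)                     # 196608
print(pixels_per_image / fmri_vector.size)  # 49.152
```

An image in this setting carries roughly 49 times as many values as the visual-cortex vector, which is one reason the fMRI side needs a carefully designed representation.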

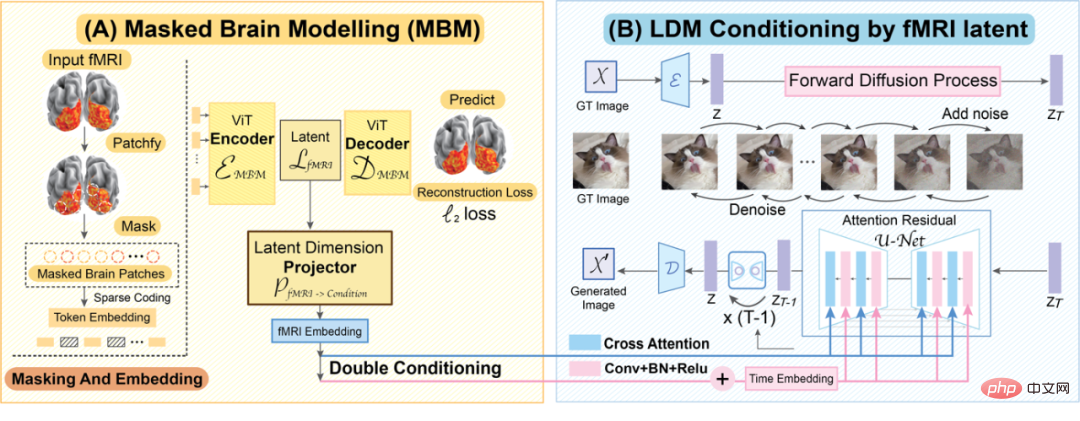

To address these issues, MinD-Vis consists of two stages:

- Use a large-scale fMRI dataset to train a masked autoencoder that learns fMRI representations.

- Integrate the pre-trained fMRI encoder with a pre-trained latent diffusion model (LDM) through both cross-attention conditioning and time-step conditioning (double conditioning) for conditional synthesis. The fMRI encoder and the cross-attention heads of the LDM are then jointly fine-tuned on paired {fMRI, image} data.

Both stages are described in detail below.

MinD-Vis Overview

(A) Sparse-Coded Masked Brain Modeling (SC-MBM) (MinD-Vis Overview left)

Because fMRI data is spatially redundant, it can still be recovered even when most of it is masked. Therefore, in the first stage of MinD-Vis, most of the fMRI data is masked to save computation time. The authors use an approach similar to the Masked Autoencoder (MAE):

- Divide the fMRI voxels into patches

- Convert each patch into an embedding with a 1D convolutional layer whose stride equals the patch size

- Add positional embeddings to the remaining (unmasked) fMRI patches and feed them into a vision transformer

- Decode to obtain the reconstructed data

- Calculate the loss between the reconstructed data and the original data

- Optimize the model through backpropagation so that the reconstructed data is as similar as possible to the original data

- Repeat the second through sixth steps to train the final model
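The steps above can be sketched as a single forward pass in NumPy. This is a toy illustration under stated assumptions, not the MinD-Vis code: the ViT encoder and decoder are replaced by random linear maps, the patch size of 16, embedding dimension of 1024, and mask ratio of 0.75 are hypothetical values, and there is no backpropagation (in practice the loss would be minimized with an autodiff framework).

```python
import numpy as np


def patchify(x: np.ndarray, patch_size: int) -> np.ndarray:
    """Split a 1D fMRI vector into non-overlapping patches, dropping any remainder."""
    n_patches = x.shape[0] // patch_size
    return x[: n_patches * patch_size].reshape(n_patches, patch_size)


def random_masking(n_patches: int, mask_ratio: float, rng: np.random.Generator):
    """Randomly split patch indices into (visible, masked), MAE-style."""
    n_keep = int(n_patches * (1.0 - mask_ratio))
    perm = rng.permutation(n_patches)
    return np.sort(perm[:n_keep]), np.sort(perm[n_keep:])


def masked_reconstruction_loss(x, patch_size, embed_dim, mask_ratio, rng):
    """One forward pass of a toy masked-modeling objective on a single fMRI vector."""
    patches = patchify(x, patch_size)                      # (N, P)
    n_patches = patches.shape[0]
    visible, masked = random_masking(n_patches, mask_ratio, rng)

    # Hypothetical random weights standing in for learned parameters
    w_embed = rng.standard_normal((patch_size, embed_dim)) / np.sqrt(patch_size)
    pos_embed = 0.02 * rng.standard_normal((n_patches, embed_dim))
    w_decode = rng.standard_normal((embed_dim, patch_size)) / np.sqrt(embed_dim)
    mask_token = np.zeros(embed_dim)

    # Embed only the visible patches (a per-patch linear map, equivalent to the
    # strided 1D convolution) and add their positional embeddings.
    # A real model would run a ViT encoder over these tokens.
    encoded = patches[visible] @ w_embed + pos_embed[visible]

    # Decoder input: encoded visible tokens plus a shared mask token at masked positions
    tokens = np.tile(mask_token, (n_patches, 1))
    tokens[visible] = encoded
    recon = (tokens + pos_embed) @ w_decode                # (N, P)

    # As in MAE, the loss is computed only on the masked patches
    loss = float(np.mean((recon[masked] - patches[masked]) ** 2))
    return loss, visible, masked


rng = np.random.default_rng(0)
x = rng.standard_normal(4000)  # hypothetical ~4000-voxel visual-cortex vector
loss, visible, masked = masked_reconstruction_loss(
    x, patch_size=16, embed_dim=1024, mask_ratio=0.75, rng=rng
)
print(len(visible), len(masked))  # 62 188
```

Only the masked patches contribute to the loss, so a trained encoder is pushed to exploit the spatial redundancy of fMRI to fill in what it cannot see.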

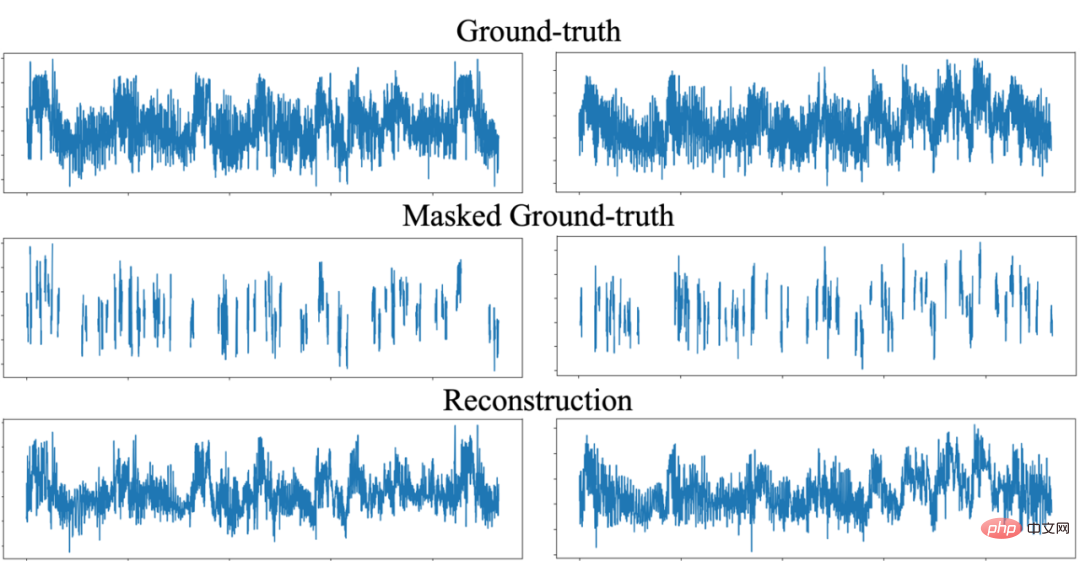

SC-MBM can effectively restore the masked fMRI information

How does this design differ from the Masked Autoencoder?

- When masked modeling is applied to natural images, models generally use an embedding-to-patch-size ratio equal to or slightly greater than 1.

- In this task, the authors used a relatively large embedding-to-patch-size ratio, which significantly increases information capacity and creates a large representation space for fMRI. This design also corresponds to the sparse coding of information in the brain*.
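A 1D convolution whose stride equals its kernel (patch) size is equivalent to applying one shared linear projection to each non-overlapping patch, and the embedding-to-patch-size ratio is simply the embedding dimension divided by the number of values per patch. The sketch below checks that equivalence numerically and compares ratios. The fMRI values (patch size 16, embedding dimension 1024) are hypothetical; the image values are those of the standard ViT-B/16 (16 × 16 × 3 = 768 values per patch, embedding dimension 768) and ViT-L/16 (embedding dimension 1024) configurations.

```python
import numpy as np


def strided_conv1d(x: np.ndarray, weight: np.ndarray) -> np.ndarray:
    """Naive single-channel 1D convolution with kernel size == stride == patch size.

    x:      (L,) fMRI vector
    weight: (embed_dim, patch_size), one filter per output channel
    returns (n_patches, embed_dim)
    """
    embed_dim, patch_size = weight.shape
    n_patches = x.shape[0] // patch_size
    out = np.empty((n_patches, embed_dim))
    for i in range(n_patches):
        window = x[i * patch_size:(i + 1) * patch_size]  # non-overlapping window
        out[i] = weight @ window  # cross-correlation, as in deep learning frameworks
    return out


def embed_to_patch_ratio(embed_dim: int, values_per_patch: int) -> float:
    return embed_dim / values_per_patch


rng = np.random.default_rng(0)
patch_size, embed_dim = 16, 1024  # hypothetical fMRI settings
x = rng.standard_normal(4000)
weight = rng.standard_normal((embed_dim, patch_size))

conv_out = strided_conv1d(x, weight)
n_patches = x.shape[0] // patch_size
linear_out = x[: n_patches * patch_size].reshape(n_patches, patch_size) @ weight.T
print(np.allclose(conv_out, linear_out))            # True

print(embed_to_patch_ratio(embed_dim, patch_size))  # 64.0
print(embed_to_patch_ratio(768, 16 * 16 * 3))       # 1.0 (ViT-B/16 on RGB images)
print(embed_to_patch_ratio(1024, 16 * 16 * 3))      # 1.3333333333333333 (ViT-L/16)
```

Under these assumed settings, each 16-value fMRI patch is expanded into a 1024-dimensional vector, which is the "large representation space" the text refers to.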

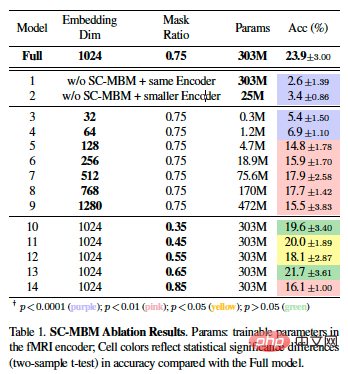

Ablation experiment of SC-MBM

(B) Double-Conditioned LDM (DC-LDM) (MinD-Vis Overview right)

After large-scale context learning in Stage A, the fMRI encoder can convert fMRI data into a sparse representation with locality constraints. The authors then formulate the decoding task as a conditional generation problem and solve it with a pre-trained LDM.

- The LDM operates in the latent space of the image, with the encoded fMRI data z as conditioning information; the goal is to learn to generate the image through the reverse diffusion process.

- In image generation tasks, diversity and consistency are competing goals, and fMRI-to-image decoding relies more on generation consistency.

- To ensure generation consistency, the authors combine cross-attention conditioning with time-step conditioning, applying a conditioning mechanism based on the time embedding in the intermediate layers of the UNet.

- They further reformulate the optimization objective as an alternating double-conditioning objective.
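One way to picture double conditioning is a single toy denoising block in which the fMRI condition enters twice: once added to the time-step embedding, and once through cross-attention. This NumPy sketch is a hypothetical simplification, not the MinD-Vis UNet: it assumes the condition tokens are mean-pooled and linearly projected before being added to a sinusoidal time embedding, uses a single attention head over a flat set of latent tokens, and computes the standard noise-prediction loss at one time step with random weights.

```python
import numpy as np


def sinusoidal_time_embedding(t: int, dim: int) -> np.ndarray:
    """Standard sinusoidal embedding of a diffusion time step (dim must be even)."""
    half = dim // 2
    freqs = np.exp(-np.log(10000.0) * np.arange(half) / half)
    return np.concatenate([np.sin(t * freqs), np.cos(t * freqs)])


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def cross_attention(h, cond, wq, wk, wv):
    """Latent tokens h (N, d) attend to fMRI condition tokens cond (M, d_c)."""
    q, k, v = h @ wq, cond @ wk, cond @ wv
    attn = softmax(q @ k.T / np.sqrt(q.shape[-1]))  # (N, M)
    return h + attn @ v                             # residual connection


def double_conditioned_block(h, t, cond, p):
    # Time-step conditioning (assumed form): pooled, projected condition added to t_emb
    t_emb = sinusoidal_time_embedding(t, h.shape[-1])
    h = h + t_emb + cond.mean(axis=0) @ p["w_time"]
    # Cross-attention conditioning on the full condition sequence
    return cross_attention(h, cond, p["wq"], p["wk"], p["wv"])


def denoising_loss(z0, t, alpha_bar_t, cond, p, rng):
    """Noise-prediction loss at one time step: mean of (eps - eps_theta(z_t, t, cond))^2."""
    eps = rng.standard_normal(z0.shape)
    z_t = np.sqrt(alpha_bar_t) * z0 + np.sqrt(1.0 - alpha_bar_t) * eps
    eps_pred = double_conditioned_block(z_t, t, cond, p) @ p["w_out"]
    return float(np.mean((eps - eps_pred) ** 2))


rng = np.random.default_rng(0)
n_tokens, d, n_cond, d_cond = 16, 32, 8, 64  # hypothetical sizes
p = {
    "wq": rng.standard_normal((d, d)) / np.sqrt(d),
    "wk": rng.standard_normal((d_cond, d)) / np.sqrt(d_cond),
    "wv": rng.standard_normal((d_cond, d)) / np.sqrt(d_cond),
    "w_time": rng.standard_normal((d_cond, d)) / np.sqrt(d_cond),
    "w_out": rng.standard_normal((d, d)) / np.sqrt(d),
}
z0 = rng.standard_normal((n_tokens, d))       # image latent, flattened into tokens
cond = rng.standard_normal((n_cond, d_cond))  # encoded fMRI tokens
loss = denoising_loss(z0, t=500, alpha_bar_t=0.5, cond=cond, p=p, rng=rng)
```

A real implementation learns these weights, samples t from a noise schedule, and places such conditioning inside a full UNet; the sketch only shows the two places where the condition can enter.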

The stability of the method is demonstrated by decoding the same images multiple times with different random seeds.

Fine-tuning

After the fMRI encoder is pre-trained with SC-MBM, it is integrated with the pre-trained LDM through double conditioning. Here, the authors:

- Use a convolutional layer to project the encoder output into the latent dimension;

- Jointly optimize the fMRI encoder, cross-attention heads, and projection heads, while keeping all other parts frozen;

- Treat fine-tuning of the cross-attention heads as the key to bridging the pre-trained conditioning space and the fMRI latent space;

- During end-to-end fine-tuning on {fMRI, image} pairs, learn clearer connections between fMRI and image features through the large-capacity fMRI representations.
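The selective fine-tuning above amounts to splitting the parameters into trainable and frozen groups. This framework-free sketch assumes hypothetical parameter names (`fmri_encoder.*`, `cond_projection.*`, `unet.cross_attn.*`) and plain SGD; in PyTorch the same effect is usually achieved by setting `requires_grad = False` on the frozen parameters and passing only the trainable ones to the optimizer.

```python
import numpy as np

# Hypothetical parameter-name prefixes; real checkpoints use their own naming.
TRAINABLE_PREFIXES = ("fmri_encoder.", "cond_projection.", "unet.cross_attn.")


def is_trainable(name: str) -> bool:
    """str.startswith accepts a tuple, so this matches any of the prefixes."""
    return name.startswith(TRAINABLE_PREFIXES)


def sgd_step(params: dict, grads: dict, lr: float) -> dict:
    """Plain SGD that updates trainable parameters and leaves frozen ones untouched."""
    return {
        name: (value - lr * grads[name]) if is_trainable(name) else value
        for name, value in params.items()
    }


params = {
    "fmri_encoder.block0.weight": np.ones(4),
    "cond_projection.conv.weight": np.ones(4),
    "unet.cross_attn.0.to_k.weight": np.ones(4),
    "unet.resblock.0.conv.weight": np.ones(4),  # frozen
    "autoencoder.decoder.weight": np.ones(4),   # frozen
}
grads = {name: np.full(4, 0.5) for name in params}
updated = sgd_step(params, grads, lr=0.1)

for name, value in updated.items():
    status = "trainable" if is_trainable(name) else "frozen"
    print(f"{name:32s} {status:9s} {value[0]:.2f}")
```

Keeping most of the pre-trained LDM frozen preserves its generative ability, while the small trainable set adapts it to the fMRI condition.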

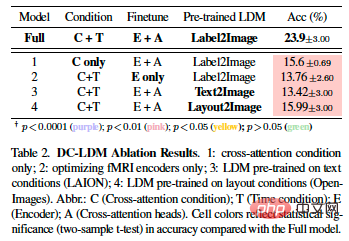

Ablation experiment of DC-LDM

Extra details

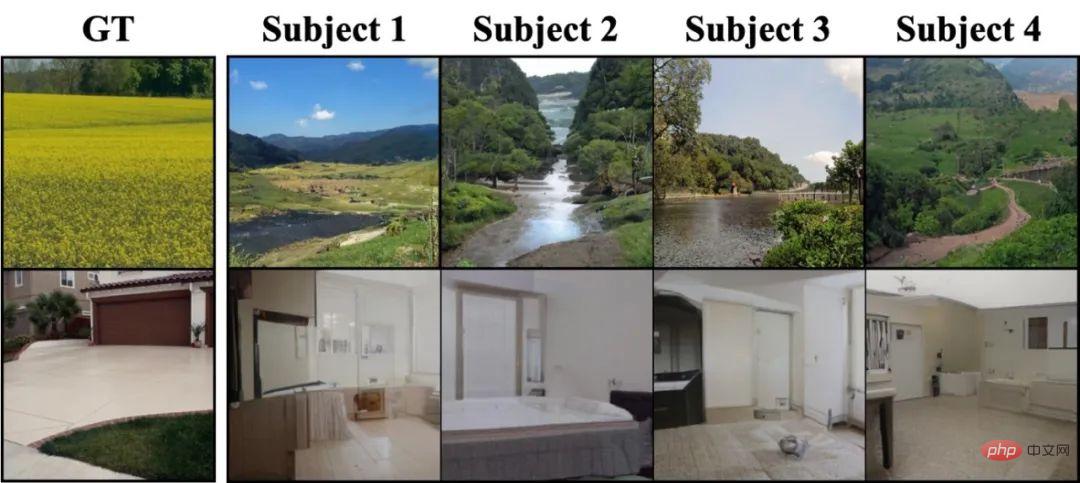

Surprisingly, MinD-Vis can decode details that do not actually exist in the ground-truth image but are highly relevant to its content. For example, when the image is a natural landscape, MinD-Vis decodes a river and a blue sky; when it is a house, MinD-Vis decodes similar interior decor. This has both advantages and disadvantages. On the plus side, it suggests that what we imagine may also be decodable; on the minus side, it may affect the evaluation of the decoding results.

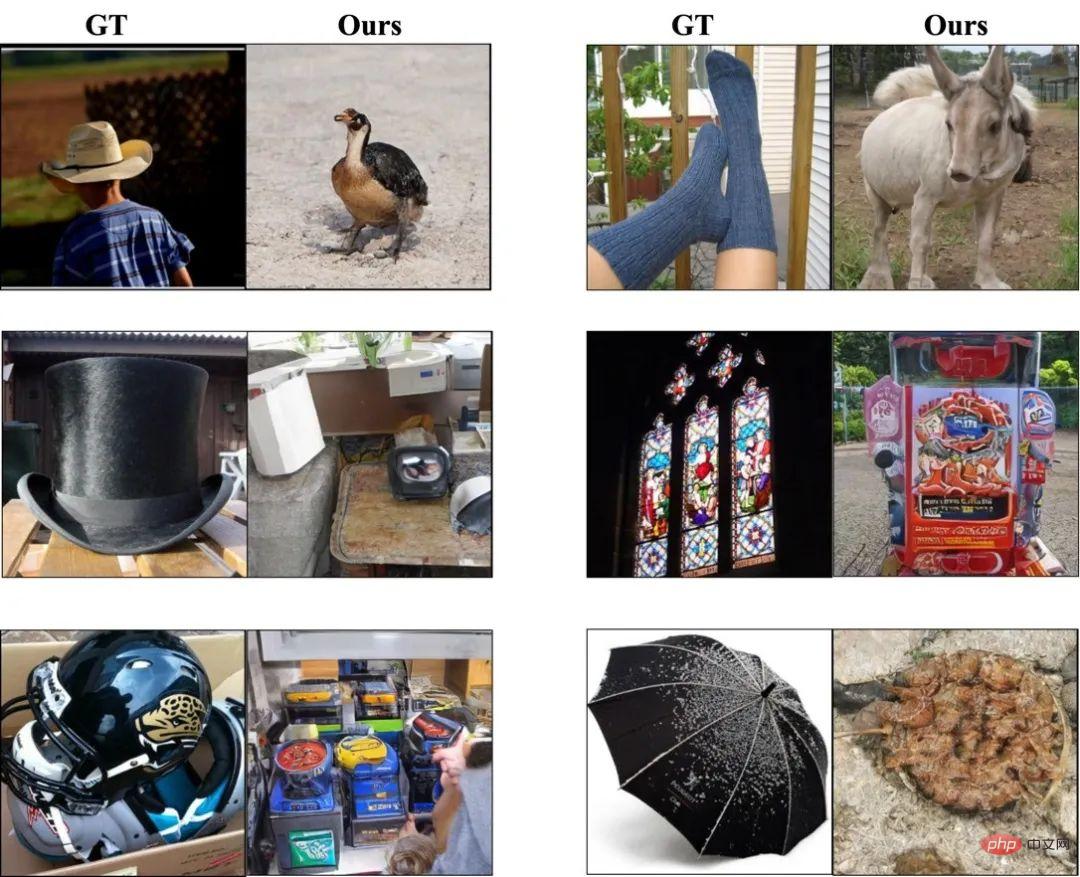

A collection of failure cases

The authors believe that when training samples are scarce, some stimuli become harder to decode than others. For example, the GOD dataset contains more training samples of animals than of clothing. As a result, a stimulus that is semantically similar to "furry" is more likely to be decoded as an animal than as a garment, as in the image above, where a sock is decoded as a sheep.

Experimental setting

Dataset

The authors used three public datasets.

- First-stage pre-training: the Human Connectome Project (HCP), which provides 136,000 fMRI segments with no images, only fMRI.

- Fine-tuning of the encoder and the second-stage generative model: the Generic Object Decoding (GOD) dataset and the Brain, Object, Landscape Dataset (BOLD5000). These provide 1,250 and 5,254 {fMRI, image} pairs respectively, of which 50 and 113 respectively were held out as test sets.
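Assuming the test pairs are disjoint from the training pairs, the fine-tuning set sizes follow directly from the counts above; this quick check is only arithmetic on the reported numbers.

```python
# {fMRI, image} pair counts and test-set sizes as reported above
datasets = {"GOD": (1250, 50), "BOLD5000": (5254, 113)}

train_sizes = {}
for name, (total_pairs, test_pairs) in datasets.items():
    train_sizes[name] = total_pairs - test_pairs
    print(f"{name}: {train_sizes[name]} train / {test_pairs} test "
          f"({test_pairs / total_pairs:.1%} held out)")
```

Either way, the paired data is tiny compared with the 136,000 unpaired HCP segments, which is why the self-supervised first stage matters.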

Model structure

The model architecture (ViT and diffusion model) largely follows prior work; see the paper for parameter details. Like earlier work, the authors also adopt an asymmetric architecture: the encoder aims to learn meaningful fMRI representations, while the decoder predicts the masked patches. Following the earlier design, the decoder is therefore kept smaller, and it is discarded after pre-training.

Evaluation metrics

Following previous work, the authors used n-way top-1 and top-5 classification accuracy to evaluate the semantic correctness of the results. In each trial, top-1 and top-5 accuracy is computed over the correct category plus n-1 randomly selected categories, and the results are averaged over multiple trials. Unlike previous methods, which relied on handcrafted features, the authors adopt a more direct and reproducible approach: a pre-trained ImageNet-1K classifier judges the semantic correctness of the generated images. They also use the Fréchet inception distance (FID) as a reference measure of generated image quality. However, because the datasets contain a limited number of images, FID may not fully capture the image distribution.
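The n-way top-k protocol can be sketched as follows for a single generated image. This is an illustrative reading of the description above, not the authors' evaluation code: `probs` stands for the output of some pre-trained ImageNet-1K classifier, and the defaults (`n_way=50`, `n_trials=100`) as well as the tie handling are assumptions.

```python
import numpy as np


def n_way_top_k_accuracy(probs, true_class, n_way=50, k=1, n_trials=100, rng=None):
    """Estimate n-way top-k accuracy for one generated image.

    probs:      (num_classes,) classifier scores for the generated image
    true_class: index of the ground-truth class of the stimulus
    Each trial draws n_way - 1 distractor classes, keeps only those n_way
    scores, and checks whether the true class ranks within the top k.
    """
    rng = np.random.default_rng() if rng is None else rng
    others = np.delete(np.arange(probs.shape[0]), true_class)
    hits = 0
    for _ in range(n_trials):
        distractors = rng.choice(others, size=n_way - 1, replace=False)
        # Rank = number of distractors scoring strictly higher (ties favour the true class)
        rank = int(np.sum(probs[distractors] > probs[true_class]))
        hits += int(rank < k)
    return hits / n_trials


rng = np.random.default_rng(0)
probs = rng.dirichlet(np.ones(1000))  # stand-in for ImageNet-1K classifier output
true_class = int(np.argmax(probs))    # pretend the top prediction is correct

print(n_way_top_k_accuracy(probs, true_class, k=1, rng=rng))  # 1.0
```

Per-image accuracies would then be averaged over the test set; top-5 accuracy is the same call with `k=5`. Because only n classes compete in each trial, this metric is far more forgiving than plain 1000-class top-1 accuracy.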

Results

The experiments in this article are conducted at the individual level, that is, the model is trained and tested on the same individual. For comparison with previous literature, the results for the third subject of the GOD data set are reported here, and the results for the other subjects are listed in the Appendix.

Final thoughts

Through this project, the authors demonstrated the feasibility of recovering visual information from the human brain through fMRI. However, many issues in this field remain to be addressed, such as how to better handle differences between individuals, how to reduce the impact of noise and interference on decoding, and how to combine fMRI decoding with other neuroscience techniques to gain a more comprehensive understanding of the mechanisms and functions of the human brain. At the same time, we also need to better understand and respect the ethical and legal issues surrounding the human brain and individual privacy.

In addition, wider application scenarios, such as medicine and human-computer interaction, need to be explored in order to turn this technology into practical applications. In medicine, fMRI decoding might one day help people such as those with visual or hearing impairments, or even patients with total paralysis, express their thoughts. Because of their physical disabilities, these people cannot express their thoughts and wishes through traditional means of communication. With fMRI, scientists could decode their brain activity to access those thoughts and wishes, allowing others to communicate with them more naturally and efficiently. In human-computer interaction, fMRI decoding could be used to develop more intelligent and adaptive interfaces and control systems, for example by decoding the user's brain activity to achieve a more natural and efficient interaction experience.

We believe that, supported by large-scale datasets and large-model computing power, fMRI decoding will have a broad and far-reaching impact, advancing both cognitive neuroscience and artificial intelligence.

Note: *The biological basis for using sparse coding to learn representations of visual stimuli in the brain: sparse coding has been proposed as a strategy for representing sensory information. Research shows that visual stimuli are sparsely encoded in the visual cortex, which increases the efficiency of information transmission and reduces redundancy in the brain. Using fMRI, the visual content of natural scenes can be reconstructed from small amounts of data collected in the visual cortex. Sparse coding can also be an efficient coding scheme in computer vision. The SC-MBM method described in the article divides fMRI data into small patches to introduce locality constraints and then sparsely encodes each patch into a high-dimensional vector space, so it can serve as a biologically valid and efficient brain feature learner for visual encoding and decoding.
