Helping artificial intelligence move to a new stage, the YLearn causal learning open source project is released

On July 12, 2022, Jiuzhang Yunji DataCanvas released another open source technology achievement, the YLearn causal learning open source project, and held an online press conference to mark the launch.

The conference was themed "From prediction to decision-making, understandable AI". It brought together experts in causal learning and artificial intelligence: Shang Mingdong, co-founder and CTO of Jiuzhang Yunji DataCanvas; Jiang Tao, founder and chairman of CSDN and founding partner of Geek Bang Venture Capital; Cui Peng, tenured associate professor and doctoral supervisor in the Department of Computer Science at Tsinghua University; and the YLearn R&D team. Together they discussed the latest research results on causal learning in academia and industry, with the aim of promoting the rapid development of causal science.

YLearn - the AI key that opens the door to "automated decision-making"

The YLearn causal learning open source project is the world's first one-stop open source algorithm toolkit that handles the complete causal learning workflow. It is the first to address all five key problems in causal learning in one place: causal discovery, causal quantity identification, causal effect estimation, counterfactual inference, and policy learning. The toolkit is one-stop, up to date, comprehensive, and broadly applicable. It lowers the barrier to entry for "decision makers" as far as possible and helps governments and enterprises improve their automated "decision-making" capabilities.

YLearn is the third major open source tool released by Jiuzhang Yunji DataCanvas, following the DAT automatic machine learning toolkit and the DingoDB real-time interactive analysis database. With this release, the company's open source basic software line expands further. This series of open source tools, which integrates cutting-edge AI technologies such as AutoML and causal learning, is intended to accelerate the release of data intelligence value in government and across industry.

By combining insights from cutting-edge academic research and market applications, the Jiuzhang Yunji DataCanvas open source R&D team found that business "prediction" based on machine learning, which is now widely used, has been very effective at improving business income. However, as governments and enterprises increasingly demand "autonomous AI" and "intelligent decision-making", decision-makers need an understandable "reason" that explains why a decision was made. Presenting "causal relationships" has therefore become an indispensable function for data analysis and intelligent decision-making, and machine learning that only provides "correlations" in the data cannot do this.

Integration with "causal learning" technology is seen as the best solution to this problem, and this is the background against which the YLearn causal learning open source project was created.

The YLearn causal learning open source project (hereinafter "YLearn") shares the "open source, flexible and automatic" character of other Jiuzhang Yunji DataCanvas products. Built around the open source community, YLearn aims to fill a gap in the market, which lacks a complete, comprehensive, end-to-end causal learning toolkit. The team intends to work with open source contributors around the world to build the most complete and most systematic end-to-end causal learning algorithm toolkit, directly reducing the cost of use for "decision makers" at the tool level.

Currently, YLearn consists of CausalDiscovery, CausalModel, EstimatorModel, Policy, Interpreter and other components. Each component can be used independently or as part of a unified package. Through these components, YLearn can represent the causal relationships in a data set as a causal graph, identify causal effects and their probability expressions, and estimate those effects with a variety of estimator models. The project will continue to add features and improve performance in step with current research.
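To make the difference between a correlation and an identified causal effect concrete, here is a minimal sketch in plain Python. It does not use YLearn or reproduce its API. The data-generating process, the function names (`simulate`, `naive_effect`, `backdoor_adjusted_effect`) and the numbers are all assumptions chosen for illustration. A binary confounder `z` influences both the treatment `t` and the outcome `y`, so a simple comparison of treated and untreated groups overstates the effect, while adjusting for `z` (backdoor adjustment) comes close to the true value.

```python
import random
from statistics import mean


def simulate(n=20000, seed=0):
    """Generate (z, t, y) rows where z confounds both treatment t and outcome y."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        z = 1 if rng.random() < 0.5 else 0
        # Assumption: treatment is more likely when z == 1.
        t = 1 if rng.random() < (0.8 if z else 0.2) else 0
        # Assumption: the true causal effect of t on y is 2.0; z adds 3.0 on its own.
        y = 2.0 * t + 3.0 * z + rng.gauss(0, 1)
        rows.append((z, t, y))
    return rows


def naive_effect(rows):
    """Difference in mean outcome between treated and untreated rows (correlation only)."""
    treated = [y for _, t, y in rows if t == 1]
    control = [y for _, t, y in rows if t == 0]
    return mean(treated) - mean(control)


def backdoor_adjusted_effect(rows):
    """Average the within-stratum treated/untreated difference over the distribution of z."""
    n = len(rows)
    effect = 0.0
    for z_val in (0, 1):
        stratum = [(t, y) for z, t, y in rows if z == z_val]
        treated = [y for t, y in stratum if t == 1]
        control = [y for t, y in stratum if t == 0]
        effect += (len(stratum) / n) * (mean(treated) - mean(control))
    return effect


rows = simulate()
naive = naive_effect(rows)
adjusted = backdoor_adjusted_effect(rows)
print(f"naive difference: {naive:.2f}, backdoor-adjusted effect: {adjusted:.2f}")
```

In this toy setup the naive difference absorbs the influence of `z`, whereas the adjusted estimate should land near the simulated effect of 2.0. A real toolkit would also need to discover or accept a causal graph and decide which variables to adjust for, which this sketch simply assumes.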

To lower the barrier to use further, beyond making the workflow clear and simple, YLearn will also integrate AutoML (automatic machine learning), a core technology of Jiuzhang Yunji DataCanvas. With AutoML support, YLearn will provide advanced "automated" functions such as automatic parameter tuning, automatic optimization, and one-click generation of multiple decision-making options for a given outcome "Y". In addition, YLearn will provide a visual decision map based on causal relationships. For example, a user could set operational indicators for a business and interactively explore the impact and benefits of different decisions.

By providing automated causal analysis, YLearn will help decision-makers understand the logic behind AI decisions and increase the credibility of those decisions. It will also serve as the AI key that opens the door to "automated decision-making" for governments and enterprises.

Causal learning - leading artificial intelligence to a new stage

The potential of causal learning and its influence on the future direction of artificial intelligence technology have been recognized by academia and industry. Judea Pearl, winner of the Turing Award in 2011 and the father of Bayesian networks, once mentioned that "without the ability to reason about causal relationships, the development of AI will be fundamentally limited."

Cui Peng, tenured associate professor and doctoral supervisor in the Department of Computer Science at Tsinghua University, said at the conference that "causal statistics will play an important role in the theoretical foundation of the new generation of artificial intelligence." In his view, the root cause of the current limitations of artificial intelligence is that it "knows that something is so, but not why it is so." Here, "that it is so" refers to "correlations" in the data, and "why it is so" refers to "causal" relationships in the data. Through years of research on introducing causal statistics into machine learning, Professor Cui's team has found that causal statistics performs well in addressing the stability, interpretability, and algorithmic fairness problems of machine learning.

The commercial market is also calling for faster industrial application of causal learning technology. Gartner's recent innovation insight report on causal learning, "Innovation Insight: Causal AI", points out that "artificial intelligence must move beyond correlation-based predictions toward causality-based solutions to achieve better decisions and greater automation. ...Causal artificial intelligence is crucial to the future."

Causal learning technology is expected to greatly improve the autonomy, explainability, adaptability and robustness of artificial intelligence. These properties will further reduce costs and increase efficiency for governments and enterprises pursuing AI-based digital and intelligent upgrades, and help them unlock data value they had not anticipated.

Open source tool - the engine of innovative application of AI technology

For a cutting-edge technology to achieve successful large-scale application in the commercial market, the boost and catalysis of powerful open source tools are indispensable.

Just as Sklearn (one of the best-known programming modules in machine learning) has been of great significance and value to the application of machine learning, and TensorFlow and PyTorch (two full-featured frameworks for building deep learning models) to the application of deep learning, the field of causal learning urgently needs an "open source tool" to break through its application bottlenecks.

The YLearn causal learning open source toolkit addresses a bottleneck in the market, namely the lack of a powerful and complete causal learning toolkit, and accelerates the move of causal learning technology from the "laboratory" to "industrial application".

Jiang Tao, founder and chairman of CSDN and founding partner of Geek Bang Venture Capital, said, "China's open source is at the right time. Only when technology becomes more popular will there be a bigger market. YLearn will give great impetus to the deeper adoption of AI technology across industries." The development of China's software industry is the foundation for the growth of the open source industry and provides the soil in which it grows. The state attaches great importance to the development of open source and, in the "14th Five-Year Plan", incorporated open source into top-level design for the first time.

Shang Mingdong, co-founder and CTO of Jiuzhang Yunji DataCanvas, said in his speech at the press conference, "2022 is the take-off year for open source. We believe that in the field of AI, software is infrastructure. Compared with application software, open source is the 'main battlefield' for basic software."

Adhering to the Jiuzhang Yunji DataCanvas product culture, which revolves around the technological innovation concept of "data intelligence" and "integrating and applying AI technology to actual business scenarios", the company's open source R&D team continues to absorb needs and feedback from practical government and industry scenarios while innovating and iterating on its open source tools. At the same time, the company's AI basic software product series is being continuously integrated with its independently developed open source tools, which will help government and enterprise customers realize the business value of integrated AI technology sooner.

The above is the detailed content of Helping artificial intelligence move to a new stage, the YLearn causal learning open source project is released. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1377

1377

52

52

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

In the fields of machine learning and data science, model interpretability has always been a focus of researchers and practitioners. With the widespread application of complex models such as deep learning and ensemble methods, understanding the model's decision-making process has become particularly important. Explainable AI|XAI helps build trust and confidence in machine learning models by increasing the transparency of the model. Improving model transparency can be achieved through methods such as the widespread use of multiple complex models, as well as the decision-making processes used to explain the models. These methods include feature importance analysis, model prediction interval estimation, local interpretability algorithms, etc. Feature importance analysis can explain the decision-making process of a model by evaluating the degree of influence of the model on the input features. Model prediction interval estimate

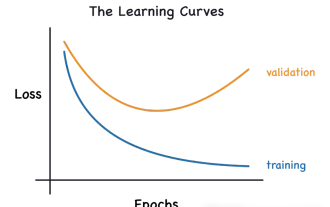

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

This article will introduce how to effectively identify overfitting and underfitting in machine learning models through learning curves. Underfitting and overfitting 1. Overfitting If a model is overtrained on the data so that it learns noise from it, then the model is said to be overfitting. An overfitted model learns every example so perfectly that it will misclassify an unseen/new example. For an overfitted model, we will get a perfect/near-perfect training set score and a terrible validation set/test score. Slightly modified: "Cause of overfitting: Use a complex model to solve a simple problem and extract noise from the data. Because a small data set as a training set may not represent the correct representation of all data." 2. Underfitting Heru

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

In the 1950s, artificial intelligence (AI) was born. That's when researchers discovered that machines could perform human-like tasks, such as thinking. Later, in the 1960s, the U.S. Department of Defense funded artificial intelligence and established laboratories for further development. Researchers are finding applications for artificial intelligence in many areas, such as space exploration and survival in extreme environments. Space exploration is the study of the universe, which covers the entire universe beyond the earth. Space is classified as an extreme environment because its conditions are different from those on Earth. To survive in space, many factors must be considered and precautions must be taken. Scientists and researchers believe that exploring space and understanding the current state of everything can help understand how the universe works and prepare for potential environmental crises

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Common challenges faced by machine learning algorithms in C++ include memory management, multi-threading, performance optimization, and maintainability. Solutions include using smart pointers, modern threading libraries, SIMD instructions and third-party libraries, as well as following coding style guidelines and using automation tools. Practical cases show how to use the Eigen library to implement linear regression algorithms, effectively manage memory and use high-performance matrix operations.

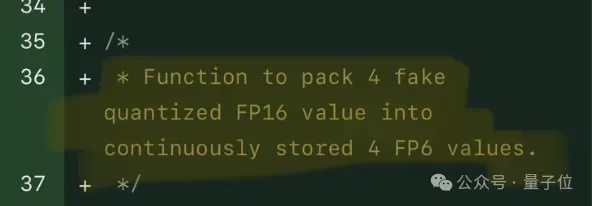

Single card running Llama 70B is faster than dual card, Microsoft forced FP6 into A100 | Open source

Apr 29, 2024 pm 04:55 PM

Single card running Llama 70B is faster than dual card, Microsoft forced FP6 into A100 | Open source

Apr 29, 2024 pm 04:55 PM

FP8 and lower floating point quantification precision are no longer the "patent" of H100! Lao Huang wanted everyone to use INT8/INT4, and the Microsoft DeepSpeed team started running FP6 on A100 without official support from NVIDIA. Test results show that the new method TC-FPx's FP6 quantization on A100 is close to or occasionally faster than INT4, and has higher accuracy than the latter. On top of this, there is also end-to-end large model support, which has been open sourced and integrated into deep learning inference frameworks such as DeepSpeed. This result also has an immediate effect on accelerating large models - under this framework, using a single card to run Llama, the throughput is 2.65 times higher than that of dual cards. one

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Translator | Reviewed by Li Rui | Chonglou Artificial intelligence (AI) and machine learning (ML) models are becoming increasingly complex today, and the output produced by these models is a black box – unable to be explained to stakeholders. Explainable AI (XAI) aims to solve this problem by enabling stakeholders to understand how these models work, ensuring they understand how these models actually make decisions, and ensuring transparency in AI systems, Trust and accountability to address this issue. This article explores various explainable artificial intelligence (XAI) techniques to illustrate their underlying principles. Several reasons why explainable AI is crucial Trust and transparency: For AI systems to be widely accepted and trusted, users need to understand how decisions are made

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Machine learning is an important branch of artificial intelligence that gives computers the ability to learn from data and improve their capabilities without being explicitly programmed. Machine learning has a wide range of applications in various fields, from image recognition and natural language processing to recommendation systems and fraud detection, and it is changing the way we live. There are many different methods and theories in the field of machine learning, among which the five most influential methods are called the "Five Schools of Machine Learning". The five major schools are the symbolic school, the connectionist school, the evolutionary school, the Bayesian school and the analogy school. 1. Symbolism, also known as symbolism, emphasizes the use of symbols for logical reasoning and expression of knowledge. This school of thought believes that learning is a process of reverse deduction, through existing

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

MetaFAIR teamed up with Harvard to provide a new research framework for optimizing the data bias generated when large-scale machine learning is performed. It is known that the training of large language models often takes months and uses hundreds or even thousands of GPUs. Taking the LLaMA270B model as an example, its training requires a total of 1,720,320 GPU hours. Training large models presents unique systemic challenges due to the scale and complexity of these workloads. Recently, many institutions have reported instability in the training process when training SOTA generative AI models. They usually appear in the form of loss spikes. For example, Google's PaLM model experienced up to 20 loss spikes during the training process. Numerical bias is the root cause of this training inaccuracy,