Asking a language model for dating advice? Nature: from proposing ideas to summarizing notes, GPT-3 has become a modern 'research assistant'

Let a monkey hit the keys of a typewriter at random and, given enough time, it will type out the complete works of Shakespeare.

What if the monkey understood grammar and semantics? Then it could even do scientific research for you!

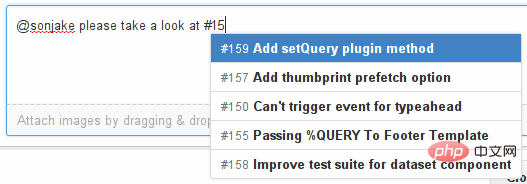

Language models are developing very rapidly. A few years ago, they could only auto-complete the next word in an input method. Today, they can already help researchers analyze and write scientific papers and generate code.

Training large language models (LLMs) generally requires massive amounts of text data.

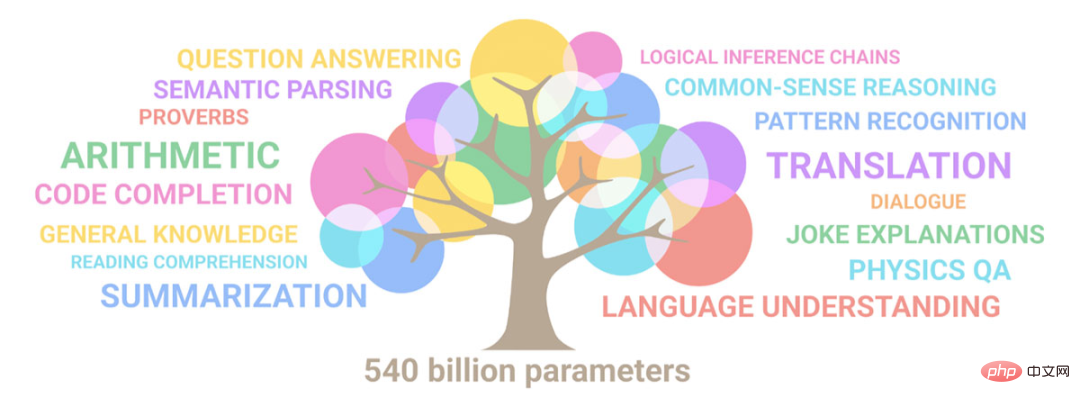

In 2020, OpenAI released GPT-3, a model with 175 billion parameters. It can write poems, solve math problems, and do almost anything a generative model can be asked to do, and it set a high-water mark for its time. Even today, GPT-3 remains the baseline that many language models are compared against and try to surpass.

After its release, GPT-3 quickly sparked heated discussion on Twitter and other social media. Many researchers were surprised by its uncannily human-like writing.

Once GPT-3 was offered as an online service, users could enter any text and have the model return a continuation. The lowest price is only $0.0004 per 750 words processed, which is very affordable.

Recently, Nature published a technology feature on the subject. It turns out that, beyond helping you write essays, these language models can also help you "do scientific research"!
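To put the quoted price in perspective, here is a back-of-the-envelope sketch. It assumes that cost scales linearly with word count at the quoted minimum rate of $0.0004 per 750 words. The function name and the 6,000-word manuscript are illustrative only, and real pricing depends on the model tier chosen.

```python
RATE_USD = 0.0004      # quoted minimum price
WORDS_PER_UNIT = 750   # number of words covered by that price


def estimated_cost_usd(word_count: int) -> float:
    """Estimate processing cost, assuming linear scaling with word count."""
    if word_count < 0:
        raise ValueError("word_count must be non-negative")
    return word_count / WORDS_PER_UNIT * RATE_USD


if __name__ == "__main__":
    # A hypothetical 6,000-word manuscript: 8 units of 750 words each.
    print(f"${estimated_cost_usd(6000):.4f}")  # prints $0.0032
```

Under these assumptions, even a full-length manuscript costs a fraction of a cent at the cheapest tier.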

Let the machine think for you

Hafsteinn Einarsson, a computer scientist at the University of Iceland in Reykjavik, said: "I use GPT-3 almost every day, for example to revise the abstract of a paper."

When Einarsson was preparing copy for a conference in June, GPT-3 made many useless suggestions, but also some helpful ones, such as "make the research question clearer at the beginning of the abstract". This is the kind of problem you do not notice when you read your own manuscript, unless you ask someone else to read it for you. And why can't that someone else be GPT-3?

Language models can even help you improve your experimental design!

In another project, Einarsson wanted to use the game Pictionary to collect language data from participants.

Given a description of the game, GPT-3 suggested some modifications to it. In theory, researchers could also ask the model for fresh takes on experimental protocols.

Some researchers also use language models to generate paper titles or make text more readable.

Mina Lee, a doctoral student in computer science at Stanford University, enters "Use these keywords to generate a paper title" into GPT-3 as a prompt, and the model comes up with several candidate titles.

If some sections need to be rewritten, she also uses Wordtune, an AI writing assistant released by AI21 Labs in Tel Aviv, Israel. She only needs to click "Rewrite" to get several rewritten versions of a passage and then chooses carefully among them.

Lee also asks GPT-3 for advice on everyday matters. For example, when she asked how to introduce her boyfriend to her parents, GPT-3 suggested going to a restaurant by the beach.

Domenic Rosati, a computer scientist at Scite, a technology startup in Brooklyn, New York, uses a language model called Generate to organize his thinking.

Link: https://cohere.ai/generate

Generate was developed by Cohere, a Canadian NLP company, and the model works in much the same way as GPT-3.

You only need to enter your notes, or just jot down some ideas, and finish with "summarize" or "turn this into an abstract concept", and the model will organize your ideas for you.
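The workflow described above boils down to assembling a prompt: the notes first, then a closing instruction. The sketch below only builds that prompt string and does not call any model. The helper name `build_prompt` and the bullet-list layout are assumptions made for illustration, not a format documented by Cohere or OpenAI.

```python
def build_prompt(notes: list[str], instruction: str = "Summarize") -> str:
    """Join free-form notes and append a closing instruction for the model."""
    # Drop empty notes and surrounding whitespace.
    cleaned = [note.strip() for note in notes if note.strip()]
    if not cleaned:
        raise ValueError("at least one non-empty note is required")
    body = "\n".join(f"- {note}" for note in cleaned)
    return f"{body}\n\n{instruction}:"


if __name__ == "__main__":
    prompt = build_prompt(
        ["citation context matters", "classify citing sentences"],
        instruction="Turn this into an abstract concept",
    )
    print(prompt)
```

The resulting string would then be pasted into, or sent to, whichever text-generation service you use.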

Why write the code yourself?

OpenAI researchers trained GPT-3 on a large set of text, including books, news stories, Wikipedia entries, and software code.

Later, the team noticed that GPT-3 could complete code just like normal text.

The researchers created a fine-tuned version of the algorithm called Codex, trained on more than 150 gigabytes of text from the code-sharing platform GitHub. GitHub has since integrated Codex into its Copilot service, which assists users in writing code.

Luca Soldaini, a computer scientist at the Allen Institute for Artificial Intelligence (AI2) in Seattle, Washington, says at least half the people in his office use Copilot.

Soldaini said that Copilot works best for repetitive programming. For example, one of his projects involved writing boilerplate code for processing PDFs, and Copilot simply completed it.

However, Copilot's completions often contain mistakes, so it is best used with languages you already know well.

Literature retrieval

Perhaps the most mature application scenario of language models is searching and summarizing documents.

The Semantic Scholar search engine developed by AI2 uses the TLDR language model to give a tweet-length description of each paper.

The search engine covers approximately 200 million papers, mostly from biomedicine and computer science.

TLDR is derived from BART, a model released earlier by Meta; AI2 researchers fine-tuned it on human-written summaries.

By today's standards, TLDR is not a large language model, as it only contains about 400 million parameters, while the largest version of GPT-3 contains 175 billion.

TLDR is also used in Semantic Reader, an augmented reading application for scientific papers developed by AI2.

When users click an in-text citation in Semantic Reader, an information box containing the TLDR summary pops up.

Dan Weld, chief scientist of Semantic Scholar, said that the idea is to use language models to improve the reading experience.

When a language model generates a text summary, it may state "facts" that do not appear in the article. Researchers call this problem "hallucination," but in reality the language model is simply making things up or lying.

TLDR performed well in truthfulness tests: the authors of the summarized papers rated its accuracy at 2.5 out of 3.

Weld said TLDR is more truthful partly because its summaries are only about 20 words long, and possibly also because the algorithm does not put words into a summary that do not appear in the text.
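The idea that a summary should only use words found in the source can be illustrated with a crude word-level check. This is not how TLDR works internally, since the article does not describe its mechanism. The function names and example sentences are hypothetical, and a real truthfulness check would need far more than vocabulary overlap.

```python
import re


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def unsupported_words(summary: str, source: str) -> list[str]:
    """Return summary words (in order, deduplicated) that are absent from the source."""
    source_vocab = set(tokenize(source))
    seen = set()
    missing = []
    for word in tokenize(summary):
        if word not in source_vocab and word not in seen:
            seen.add(word)
            missing.append(word)
    return missing


if __name__ == "__main__":
    source = "We fine-tune BART on human-written summaries of scientific papers."
    summary = "BART fine-tuned on summaries beats GPT-3."
    print(unsupported_words(summary, source))  # ['tuned', 'beats', 'gpt', '3']
```

An empty result means every word of the summary also occurs in the source. It does not prove the summary is true, only that it introduces no new vocabulary.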

As for search tools, Ought, a machine learning nonprofit in San Francisco, California, launched Elicit in 2021. If users ask it, "What is the impact of mindfulness on decision-making?", it outputs a table containing ten papers.

Users can ask the software to fill in columns with content such as abstract summaries and metadata, as well as information about study participants, methods, and results, which are then extracted or generated from the papers using tools including GPT-3.

Joel Chan of the University of Maryland, College Park, studies human-computer interaction. Whenever he starts a new project, he uses Elicit to search for relevant papers.

Gustav Nilsonne, a neuroscientist at the Karolinska Institute in Stockholm, uses Elicit to find papers with data that can be added to pooled analyses. The tool has surfaced papers that his other searches did not find.

Evolving models

AI2's prototypes give a sense of what the future of LLMs might look like.

Sometimes researchers have questions after reading the abstract of a scientific paper but have not yet had time to read the full text.

A team at AI2 has also developed a tool that can answer these questions in the field of NLP.

The team first asked researchers to read the abstract of an NLP paper and then ask questions about it (such as "What five conversational attributes were analyzed?").

The research team then asked other researchers to answer these questions after reading the entire paper.

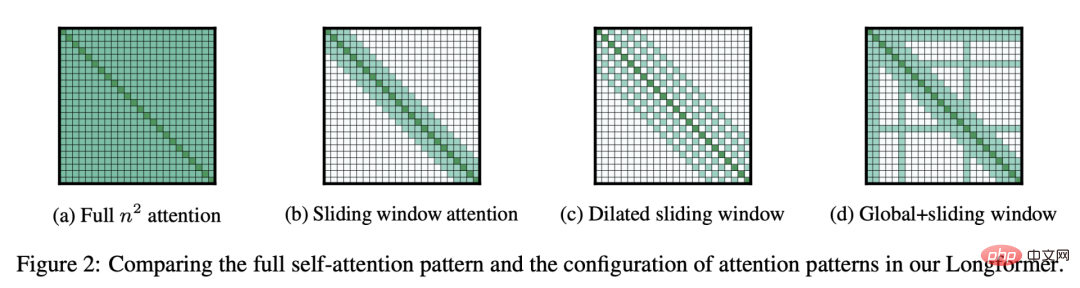

AI2 then trained a version of its Longformer language model, which can take a complete paper as input, and used the collected dataset to generate answers to different questions about other papers.

The ACCoRD model can generate definitions and analogies for 150 scientific concepts related to NLP.

MS2 is a dataset containing 470,000 medical documents and 20,000 multi-document summaries. After fine-tuning BART on MS2, researchers were able to pose a question with a set of documents and generate a brief meta-analysis summary.

In 2019, AI2 fine-tuned BERT, the language model created by Google in 2018, on papers from Semantic Scholar to create SciBERT, which has 110 million parameters.

Scite, which uses artificial intelligence to build a scientific search engine, further fine-tuned SciBERT so that when its search engine lists papers that cite a target paper, it categorizes each one as supporting, contrasting, or otherwise mentioning that paper.

Rosati said this nuance helps people identify limitations or gaps in the scientific literature.

AI2's SPECTER model, which is also based on SciBERT, reduces papers to compact mathematical representations.

Conference organizers use SPECTER to match submitted papers to peer reviewers, and Semantic Scholar uses it to recommend papers based on users’ libraries, Weld said.
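Once papers are reduced to vectors, matching becomes a nearest-neighbor problem. The sketch below uses cosine similarity on tiny invented vectors. SPECTER itself is a trained neural model, and the article does not say which similarity measure conference tools or Semantic Scholar apply, so the metric, the function names, and the three-dimensional vectors are all assumptions made for illustration.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length, non-zero vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("vectors must be non-zero")
    return dot / (norm_a * norm_b)


def best_match(paper_vec: list[float], reviewer_vecs: dict[str, list[float]]) -> str:
    """Return the reviewer whose vector is most similar to the paper vector."""
    return max(
        reviewer_vecs,
        key=lambda name: cosine_similarity(paper_vec, reviewer_vecs[name]),
    )


if __name__ == "__main__":
    submission = [0.9, 0.1, 0.0]  # toy embedding of a submitted paper
    reviewers = {
        "reviewer_a": [0.8, 0.2, 0.1],  # toy profile close to the submission
        "reviewer_b": [0.0, 0.3, 0.9],  # toy profile far from the submission
    }
    print(best_match(submission, reviewers))  # reviewer_a
```

The same ranking step could drive paper recommendations: embed the papers in a user's library, then surface the candidates with the highest similarity.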

Tom Hope, a computer scientist at Hebrew University and AI2, said they have research projects that fine-tune language models to identify effective drug combinations, links between genes and diseases, and scientific challenges and directions in COVID-19 research.

But can language models provide deeper insight, or even a capacity for discovery?

In May, Hope and Weld co-authored a commentary with Microsoft chief scientific officer Eric Horvitz outlining the challenges of achieving this goal, including teaching models to "infer the result of recombining two concepts."

Hope said that this is essentially what OpenAI's DALL·E 2 image generation model does when it generates "a picture of a cat flying into space", but how can we move towards combining abstract, highly complicated scientific concepts?

This is an open question.

Large language models are already having a real impact on research, and people who have not yet started using them to assist their work will miss out on these opportunities.

Reference materials:

https://www.nature.com/articles/d41586-022-03479-w

The above is the detailed content of Do you need to ask about language models when dating your boyfriend? Nature: Propose ideas and summarize notes. GPT-3 has become a contemporary 'scientific research worker'. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

The world's most powerful open source MoE model is here, with Chinese capabilities comparable to GPT-4, and the price is only nearly one percent of GPT-4-Turbo

May 07, 2024 pm 04:13 PM

Imagine an artificial intelligence model that not only has the ability to surpass traditional computing, but also achieves more efficient performance at a lower cost. This is not science fiction, DeepSeek-V2[1], the world’s most powerful open source MoE model is here. DeepSeek-V2 is a powerful mixture of experts (MoE) language model with the characteristics of economical training and efficient inference. It consists of 236B parameters, 21B of which are used to activate each marker. Compared with DeepSeek67B, DeepSeek-V2 has stronger performance, while saving 42.5% of training costs, reducing KV cache by 93.3%, and increasing the maximum generation throughput to 5.76 times. DeepSeek is a company exploring general artificial intelligence

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

KAN, which replaces MLP, has been extended to convolution by open source projects

Jun 01, 2024 pm 10:03 PM

Earlier this month, researchers from MIT and other institutions proposed a very promising alternative to MLP - KAN. KAN outperforms MLP in terms of accuracy and interpretability. And it can outperform MLP running with a larger number of parameters with a very small number of parameters. For example, the authors stated that they used KAN to reproduce DeepMind's results with a smaller network and a higher degree of automation. Specifically, DeepMind's MLP has about 300,000 parameters, while KAN only has about 200 parameters. KAN has a strong mathematical foundation like MLP. MLP is based on the universal approximation theorem, while KAN is based on the Kolmogorov-Arnold representation theorem. As shown in the figure below, KAN has

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Hello, electric Atlas! Boston Dynamics robot comes back to life, 180-degree weird moves scare Musk

Apr 18, 2024 pm 07:58 PM

Boston Dynamics Atlas officially enters the era of electric robots! Yesterday, the hydraulic Atlas just "tearfully" withdrew from the stage of history. Today, Boston Dynamics announced that the electric Atlas is on the job. It seems that in the field of commercial humanoid robots, Boston Dynamics is determined to compete with Tesla. After the new video was released, it had already been viewed by more than one million people in just ten hours. The old people leave and new roles appear. This is a historical necessity. There is no doubt that this year is the explosive year of humanoid robots. Netizens commented: The advancement of robots has made this year's opening ceremony look like a human, and the degree of freedom is far greater than that of humans. But is this really not a horror movie? At the beginning of the video, Atlas is lying calmly on the ground, seemingly on his back. What follows is jaw-dropping

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

Google is ecstatic: JAX performance surpasses Pytorch and TensorFlow! It may become the fastest choice for GPU inference training

Apr 01, 2024 pm 07:46 PM

The performance of JAX, promoted by Google, has surpassed that of Pytorch and TensorFlow in recent benchmark tests, ranking first in 7 indicators. And the test was not done on the TPU with the best JAX performance. Although among developers, Pytorch is still more popular than Tensorflow. But in the future, perhaps more large models will be trained and run based on the JAX platform. Models Recently, the Keras team benchmarked three backends (TensorFlow, JAX, PyTorch) with the native PyTorch implementation and Keras2 with TensorFlow. First, they select a set of mainstream

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI subverts mathematical research! Fields Medal winner and Chinese-American mathematician led 11 top-ranked papers | Liked by Terence Tao

Apr 09, 2024 am 11:52 AM

AI is indeed changing mathematics. Recently, Tao Zhexuan, who has been paying close attention to this issue, forwarded the latest issue of "Bulletin of the American Mathematical Society" (Bulletin of the American Mathematical Society). Focusing on the topic "Will machines change mathematics?", many mathematicians expressed their opinions. The whole process was full of sparks, hardcore and exciting. The author has a strong lineup, including Fields Medal winner Akshay Venkatesh, Chinese mathematician Zheng Lejun, NYU computer scientist Ernest Davis and many other well-known scholars in the industry. The world of AI has changed dramatically. You know, many of these articles were submitted a year ago.

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

Tesla robots work in factories, Musk: The degree of freedom of hands will reach 22 this year!

May 06, 2024 pm 04:13 PM

The latest video of Tesla's robot Optimus is released, and it can already work in the factory. At normal speed, it sorts batteries (Tesla's 4680 batteries) like this: The official also released what it looks like at 20x speed - on a small "workstation", picking and picking and picking: This time it is released One of the highlights of the video is that Optimus completes this work in the factory, completely autonomously, without human intervention throughout the process. And from the perspective of Optimus, it can also pick up and place the crooked battery, focusing on automatic error correction: Regarding Optimus's hand, NVIDIA scientist Jim Fan gave a high evaluation: Optimus's hand is the world's five-fingered robot. One of the most dexterous. Its hands are not only tactile

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

FisheyeDetNet: the first target detection algorithm based on fisheye camera

Apr 26, 2024 am 11:37 AM

Target detection is a relatively mature problem in autonomous driving systems, among which pedestrian detection is one of the earliest algorithms to be deployed. Very comprehensive research has been carried out in most papers. However, distance perception using fisheye cameras for surround view is relatively less studied. Due to large radial distortion, standard bounding box representation is difficult to implement in fisheye cameras. To alleviate the above description, we explore extended bounding box, ellipse, and general polygon designs into polar/angular representations and define an instance segmentation mIOU metric to analyze these representations. The proposed model fisheyeDetNet with polygonal shape outperforms other models and simultaneously achieves 49.5% mAP on the Valeo fisheye camera dataset for autonomous driving

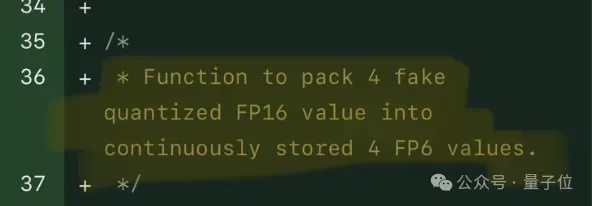

Single card running Llama 70B is faster than dual card, Microsoft forced FP6 into A100 | Open source

Apr 29, 2024 pm 04:55 PM

Single card running Llama 70B is faster than dual card, Microsoft forced FP6 into A100 | Open source

Apr 29, 2024 pm 04:55 PM

FP8 and lower floating point quantification precision are no longer the "patent" of H100! Lao Huang wanted everyone to use INT8/INT4, and the Microsoft DeepSpeed team started running FP6 on A100 without official support from NVIDIA. Test results show that the new method TC-FPx's FP6 quantization on A100 is close to or occasionally faster than INT4, and has higher accuracy than the latter. On top of this, there is also end-to-end large model support, which has been open sourced and integrated into deep learning inference frameworks such as DeepSpeed. This result also has an immediate effect on accelerating large models - under this framework, using a single card to run Llama, the throughput is 2.65 times higher than that of dual cards. one