Technology peripherals

Technology peripherals

AI

AI

Single-machine training of 20 billion parameter large model: Cerebras breaks new record

Single-machine training of 20 billion parameter large model: Cerebras breaks new record

Single-machine training of 20 billion parameter large model: Cerebras breaks new record

This week, chip startup Cerebras announced a new milestone: training an NLP (natural language processing) artificial intelligence model with more than 10 billion parameters in a single computing device.

The volume of AI models trained by Cerebras reaches an unprecedented 20 billion parameters, all without scaling workloads across multiple accelerators. This work is enough to satisfy the most popular text-to-image AI generation model on the Internet - OpenAI's 12 billion parameter large model DALL-E.

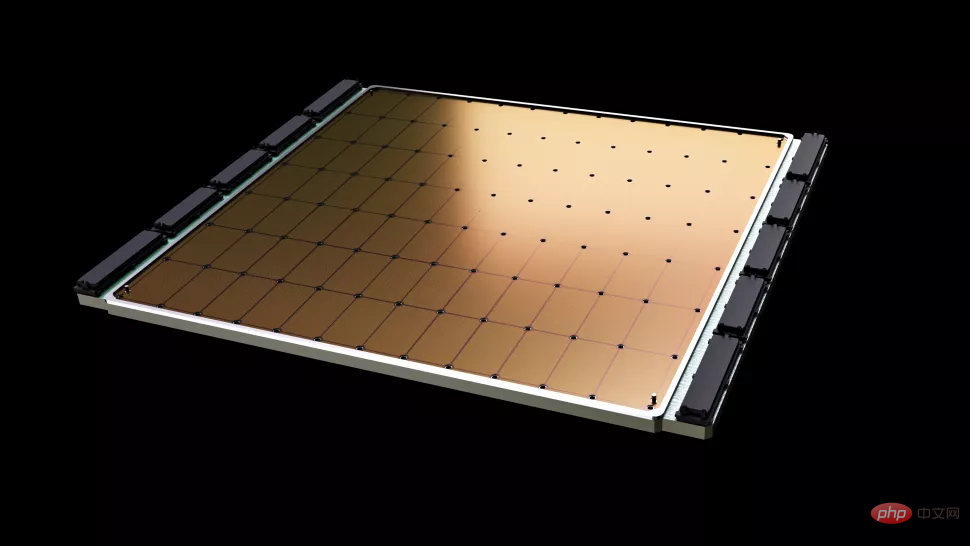

#The most important thing about Cerebras’ new job is the reduced infrastructure and software complexity requirements. The chip provided by this company, Wafer Scale Engine-2 (WSE2), is, as the name suggests, etched on a single entire wafer of TSMC's 7 nm process, an area typically large enough to accommodate hundreds of mainstream chips - with a staggering 2.6 trillion transistors. , 850,000 AI computing cores and 40 GB integrated cache, and the power consumption after packaging is as high as 15kW.

The Wafer Scale Engine-2 is close to the size of a wafer and is larger than an iPad.

Although Cerebras’ single machine is already similar to a supercomputer in terms of size, the NLP model retaining up to 20 billion parameters in a single chip is still Significantly reduces the cost of training on thousands of GPUs, and the associated hardware and scaling requirements, while eliminating the technical difficulty of splitting models among them. The latter is "one of the most painful aspects of NLP workloads" and sometimes "takes months to complete," Cerebras said.

This is a customized problem that is unique not only to each neural network being processed, but also to the specifications of each GPU and the network that ties them together - These elements must be set up in advance before the first training session and are not portable across systems.

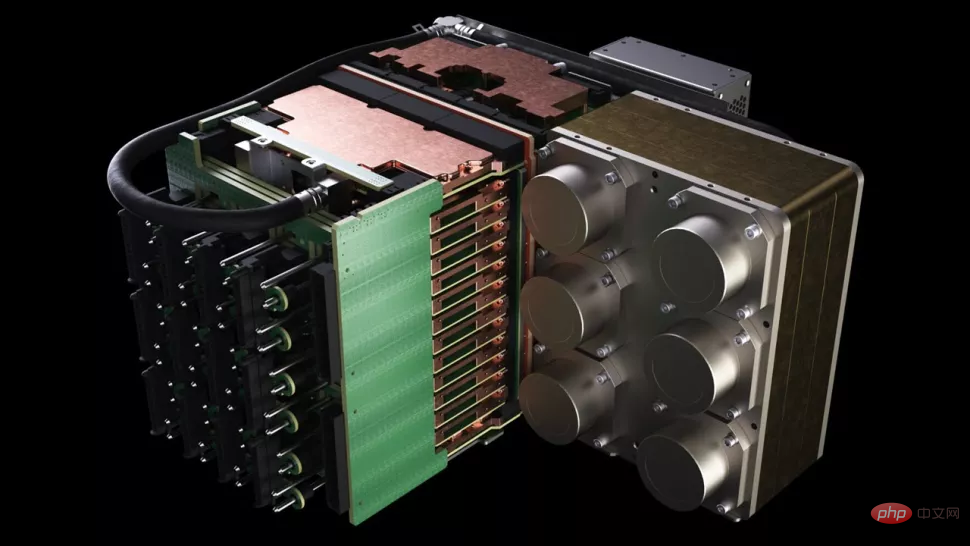

Cerebras’ CS-2 is a stand-alone supercomputing cluster that includes Wafer Scale Engine-2 chips, all Associated power, memory, and storage subsystems.

#What is the approximate level of 20 billion parameters? In the field of artificial intelligence, large-scale pre-training models are the direction that various technology companies and institutions are working hard to develop recently. OpenAI's GPT-3 is an NLP model that can write entire articles and do things that are enough to deceive human readers. Mathematical operations and translations with a staggering 175 billion parameters. DeepMind’s Gopher, launched late last year, raised the record number of parameters to 280 billion.

Recently, Google Brain even announced that it had trained a model with more than one trillion parameters, Switch Transformer.

"In the field of NLP, larger models have been proven to perform better. But traditionally, only a few companies have the resources and expertise to complete the decomposition of these large models. model, the hard work of distributing it across hundreds or thousands of graphics processing units," said Andrew Feldman, CEO and co-founder of Cerebras. "As a result, very few companies can train large NLP models - it is too expensive, time-consuming and unavailable for the rest of the industry."

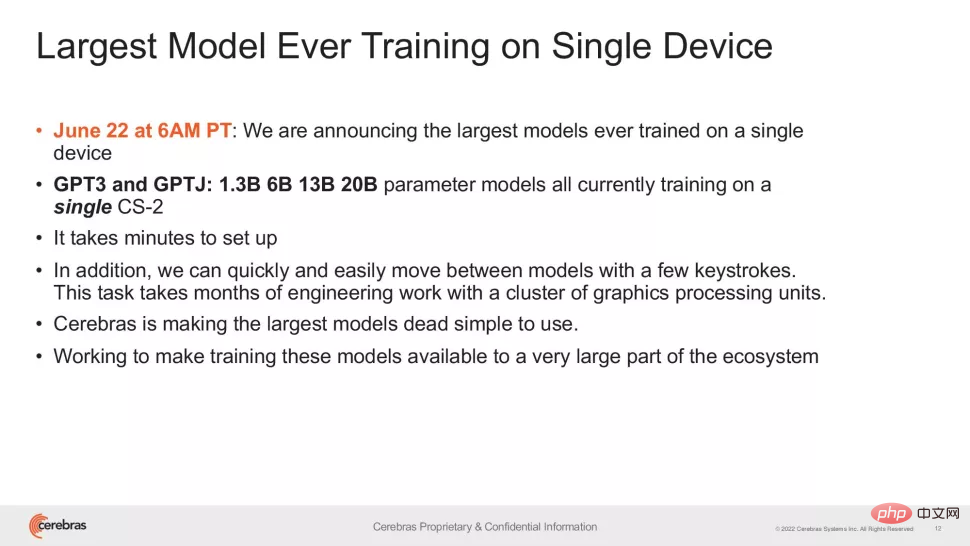

Now, Cerebras' approach Can lower the application threshold of GPT-3XL 1.3B, GPT-J 6B, GPT-3 13B and GPT-NeoX 20B models, enabling the entire AI ecosystem to build large models in minutes and train on a single CS-2 system they.

#However, just like the clock speed of flagship CPUs, the number of parameters is only one factor in the performance of large models an indicator. Recently, some research has achieved better results while reducing parameters, such as Chinchilla proposed by DeepMind in April this year, which surpassed GPT-3 and Gopher in conventional cases with only 70 billion parameters.

The goal of this type of research is of course working smarter, not working harder. Therefore, Cerebras's achievements are more important than what people first see - this research gives us confidence that the current level of chip manufacturing can adapt to increasingly complex models, and the company said that systems with special chips as the core have the support " The ability of models with hundreds of billions or even trillions of parameters.

The explosive growth in the number of trainable parameters on a single chip relies on Cerebras’ Weight Streaming technology. This technology decouples computation and memory footprint, allowing memory to scale at any scale based on the rapidly growing number of parameters in AI workloads. This reduces setup time from months to minutes and allows switching between models such as GPT-J and GPT-Neo. As the researcher said: "It only takes a few keystrokes."

"Cerebras provides people with the ability to run large language models in a low-cost and convenient way, opening the door to artificial intelligence This is an exciting new era of intelligence. It provides organizations that cannot spend tens of millions of dollars with an easy and inexpensive way to compete in large models," said Dan Olds, chief research officer at Intersect360 Research. "We very much look forward to new applications and discoveries from CS-2 customers as they train GPT-3 and GPT-J-level models on massive data sets."

The above is the detailed content of Single-machine training of 20 billion parameter large model: Cerebras breaks new record. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1386

1386

52

52

Bytedance Cutting launches SVIP super membership: 499 yuan for continuous annual subscription, providing a variety of AI functions

Jun 28, 2024 am 03:51 AM

Bytedance Cutting launches SVIP super membership: 499 yuan for continuous annual subscription, providing a variety of AI functions

Jun 28, 2024 am 03:51 AM

This site reported on June 27 that Jianying is a video editing software developed by FaceMeng Technology, a subsidiary of ByteDance. It relies on the Douyin platform and basically produces short video content for users of the platform. It is compatible with iOS, Android, and Windows. , MacOS and other operating systems. Jianying officially announced the upgrade of its membership system and launched a new SVIP, which includes a variety of AI black technologies, such as intelligent translation, intelligent highlighting, intelligent packaging, digital human synthesis, etc. In terms of price, the monthly fee for clipping SVIP is 79 yuan, the annual fee is 599 yuan (note on this site: equivalent to 49.9 yuan per month), the continuous monthly subscription is 59 yuan per month, and the continuous annual subscription is 499 yuan per year (equivalent to 41.6 yuan per month) . In addition, the cut official also stated that in order to improve the user experience, those who have subscribed to the original VIP

Context-augmented AI coding assistant using Rag and Sem-Rag

Jun 10, 2024 am 11:08 AM

Context-augmented AI coding assistant using Rag and Sem-Rag

Jun 10, 2024 am 11:08 AM

Improve developer productivity, efficiency, and accuracy by incorporating retrieval-enhanced generation and semantic memory into AI coding assistants. Translated from EnhancingAICodingAssistantswithContextUsingRAGandSEM-RAG, author JanakiramMSV. While basic AI programming assistants are naturally helpful, they often fail to provide the most relevant and correct code suggestions because they rely on a general understanding of the software language and the most common patterns of writing software. The code generated by these coding assistants is suitable for solving the problems they are responsible for solving, but often does not conform to the coding standards, conventions and styles of the individual teams. This often results in suggestions that need to be modified or refined in order for the code to be accepted into the application

Can fine-tuning really allow LLM to learn new things: introducing new knowledge may make the model produce more hallucinations

Jun 11, 2024 pm 03:57 PM

Can fine-tuning really allow LLM to learn new things: introducing new knowledge may make the model produce more hallucinations

Jun 11, 2024 pm 03:57 PM

Large Language Models (LLMs) are trained on huge text databases, where they acquire large amounts of real-world knowledge. This knowledge is embedded into their parameters and can then be used when needed. The knowledge of these models is "reified" at the end of training. At the end of pre-training, the model actually stops learning. Align or fine-tune the model to learn how to leverage this knowledge and respond more naturally to user questions. But sometimes model knowledge is not enough, and although the model can access external content through RAG, it is considered beneficial to adapt the model to new domains through fine-tuning. This fine-tuning is performed using input from human annotators or other LLM creations, where the model encounters additional real-world knowledge and integrates it

Seven Cool GenAI & LLM Technical Interview Questions

Jun 07, 2024 am 10:06 AM

Seven Cool GenAI & LLM Technical Interview Questions

Jun 07, 2024 am 10:06 AM

To learn more about AIGC, please visit: 51CTOAI.x Community https://www.51cto.com/aigc/Translator|Jingyan Reviewer|Chonglou is different from the traditional question bank that can be seen everywhere on the Internet. These questions It requires thinking outside the box. Large Language Models (LLMs) are increasingly important in the fields of data science, generative artificial intelligence (GenAI), and artificial intelligence. These complex algorithms enhance human skills and drive efficiency and innovation in many industries, becoming the key for companies to remain competitive. LLM has a wide range of applications. It can be used in fields such as natural language processing, text generation, speech recognition and recommendation systems. By learning from large amounts of data, LLM is able to generate text

Kuaishou version of Sora 'Ke Ling' is open for testing: generates over 120s video, understands physics better, and can accurately model complex movements

Jun 11, 2024 am 09:51 AM

Kuaishou version of Sora 'Ke Ling' is open for testing: generates over 120s video, understands physics better, and can accurately model complex movements

Jun 11, 2024 am 09:51 AM

What? Is Zootopia brought into reality by domestic AI? Exposed together with the video is a new large-scale domestic video generation model called "Keling". Sora uses a similar technical route and combines a number of self-developed technological innovations to produce videos that not only have large and reasonable movements, but also simulate the characteristics of the physical world and have strong conceptual combination capabilities and imagination. According to the data, Keling supports the generation of ultra-long videos of up to 2 minutes at 30fps, with resolutions up to 1080p, and supports multiple aspect ratios. Another important point is that Keling is not a demo or video result demonstration released by the laboratory, but a product-level application launched by Kuaishou, a leading player in the short video field. Moreover, the main focus is to be pragmatic, not to write blank checks, and to go online as soon as it is released. The large model of Ke Ling is already available in Kuaiying.

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Five schools of machine learning you don't know about

Jun 05, 2024 pm 08:51 PM

Machine learning is an important branch of artificial intelligence that gives computers the ability to learn from data and improve their capabilities without being explicitly programmed. Machine learning has a wide range of applications in various fields, from image recognition and natural language processing to recommendation systems and fraud detection, and it is changing the way we live. There are many different methods and theories in the field of machine learning, among which the five most influential methods are called the "Five Schools of Machine Learning". The five major schools are the symbolic school, the connectionist school, the evolutionary school, the Bayesian school and the analogy school. 1. Symbolism, also known as symbolism, emphasizes the use of symbols for logical reasoning and expression of knowledge. This school of thought believes that learning is a process of reverse deduction, through existing

AI startups collectively switched jobs to OpenAI, and the security team regrouped after Ilya left!

Jun 08, 2024 pm 01:00 PM

AI startups collectively switched jobs to OpenAI, and the security team regrouped after Ilya left!

Jun 08, 2024 pm 01:00 PM

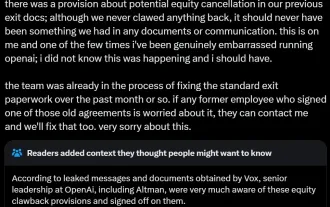

Last week, amid the internal wave of resignations and external criticism, OpenAI was plagued by internal and external troubles: - The infringement of the widow sister sparked global heated discussions - Employees signing "overlord clauses" were exposed one after another - Netizens listed Ultraman's "seven deadly sins" Rumors refuting: According to leaked information and documents obtained by Vox, OpenAI’s senior leadership, including Altman, was well aware of these equity recovery provisions and signed off on them. In addition, there is a serious and urgent issue facing OpenAI - AI safety. The recent departures of five security-related employees, including two of its most prominent employees, and the dissolution of the "Super Alignment" team have once again put OpenAI's security issues in the spotlight. Fortune magazine reported that OpenA

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

To provide a new scientific and complex question answering benchmark and evaluation system for large models, UNSW, Argonne, University of Chicago and other institutions jointly launched the SciQAG framework

Jul 25, 2024 am 06:42 AM

Editor |ScienceAI Question Answering (QA) data set plays a vital role in promoting natural language processing (NLP) research. High-quality QA data sets can not only be used to fine-tune models, but also effectively evaluate the capabilities of large language models (LLM), especially the ability to understand and reason about scientific knowledge. Although there are currently many scientific QA data sets covering medicine, chemistry, biology and other fields, these data sets still have some shortcomings. First, the data form is relatively simple, most of which are multiple-choice questions. They are easy to evaluate, but limit the model's answer selection range and cannot fully test the model's ability to answer scientific questions. In contrast, open-ended Q&A