Technology peripherals

Technology peripherals

AI

AI

Overview of the current status of federated learning technology and its applications in image processing

Overview of the current status of federated learning technology and its applications in image processing

Overview of the current status of federated learning technology and its applications in image processing

In recent years, graphs have been widely used to represent and process complex data in many fields, such as medical care, transportation, bioinformatics, and recommendation systems. Graph machine learning technology is a powerful tool for obtaining rich information hidden in complex data, and has demonstrated strong performance in tasks such as node classification and link prediction.

Although graph machine learning technology has made significant progress, most of them require graph data to be stored centrally on a single machine. However, with the emphasis on data security and user privacy, centralized storage of data has become unsafe and unfeasible. Graph data is often distributed across multiple data sources (data silos), and due to privacy and security reasons, it becomes infeasible to collect the required graph data from different places.

For example, a third-party company wants to train graph machine learning models for some financial institutions to help them detect potential financial crimes and fraudulent customers. Every financial institution holds private customer data, such as demographic data and transaction records. The customers of each financial institution form a customer graph, where edges represent transaction records. Due to strict privacy policies and business competition, each organization's private customer data cannot be shared directly with third-party companies or other organizations. At the same time, there may also be relationships between institutions, which can be regarded as structural information between institutions. The main challenge is therefore to train graph machine learning models for financial crime detection based on private customer graphs and inter-agency structural information without direct access to each institution's private customer data.

Federated learning (FL) is a distributed machine learning solution that solves the problem of data islands through collaborative training. It enables participants (i.e. customers) to jointly train machine learning models without sharing their private data. Therefore, combining FL with graph machine learning becomes a promising solution to the above problems.

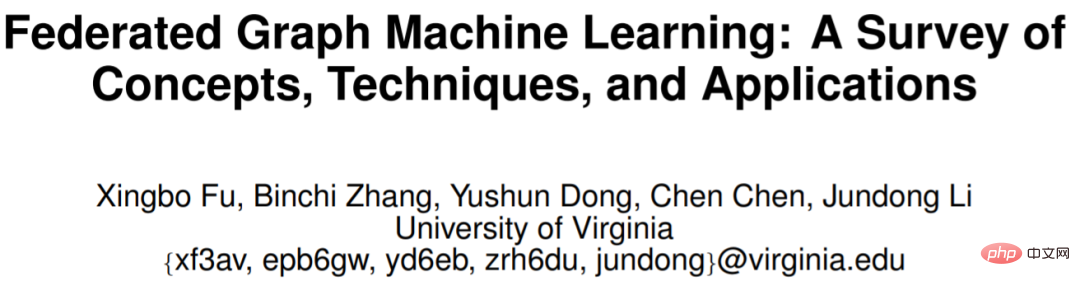

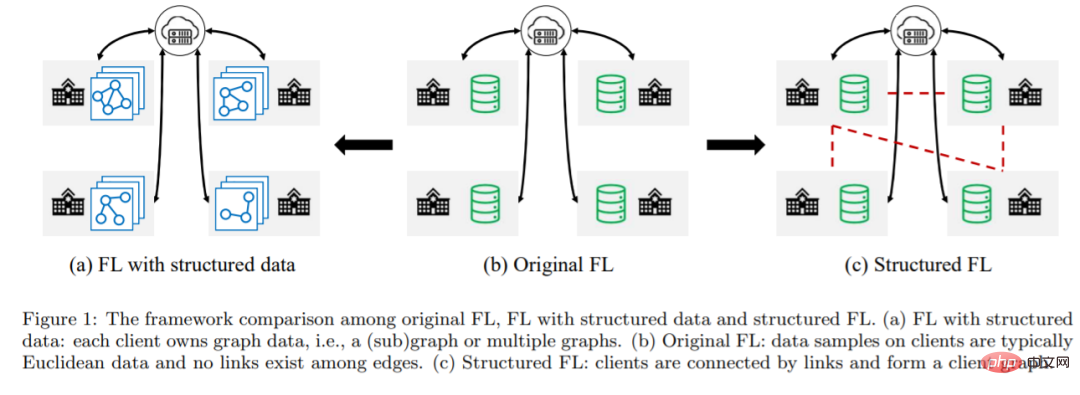

In this article, researchers from the University of Virginia propose Federated Graph Machine Learning (FGML). Generally speaking, FGML can be divided into two settings based on the level of structural information: the first is FL with structured data. In FL with structured data, customers collaboratively train graph machine learning models based on their graph data, while Keep graph data locally. The second type is structured FL. In structured FL, there is structural information between clients, forming a client graph. Client graphs can be exploited to design more efficient joint optimization methods.

Paper address: https://arxiv.org/pdf/2207.11812.pdf

Although FGML provides a promising blueprint, there are still some challenges:

1. Lack of information across clients. In FL with structured data, a common scenario is that each client machine has a subgraph of the global graph, and some nodes may have close neighbors belonging to other clients. For privacy reasons, nodes can only aggregate features of their immediate neighbors within the client, but cannot access features located on other clients, which leads to under-representation of nodes.

2. Privacy leakage of graph structure. In traditional FL, clients are not allowed to expose the features and labels of their data samples. In FL with structured data, the privacy of structural information should also be considered. Structural information can be exposed directly through a shared adjacency matrix or indirectly through transmission node embedding.

3. Cross-client data heterogeneity. Unlike traditional FL where data heterogeneity comes from non-IID data samples, graph data in FGML contains rich structural information. At the same time, the graph structure of different customers will also affect the performance of the graph machine learning model.

4. Parameter usage strategy. In structured FL, the client graph enables clients to obtain information from their neighboring clients. In structured FL, effective strategies need to be designed to fully exploit neighbor information that is coordinated by a central server or completely decentralized.

To address the above challenges, researchers have developed a large number of algorithms. Various algorithms currently focus mainly on challenges and methods in standard FL, with only a few attempts to address specific problems and techniques in FGML. Someone published a review paper classifying FGML, but did not summarize the main techniques in FGML. Some review articles only cover a limited number of relevant papers in FL and very briefly introduce the current technology.

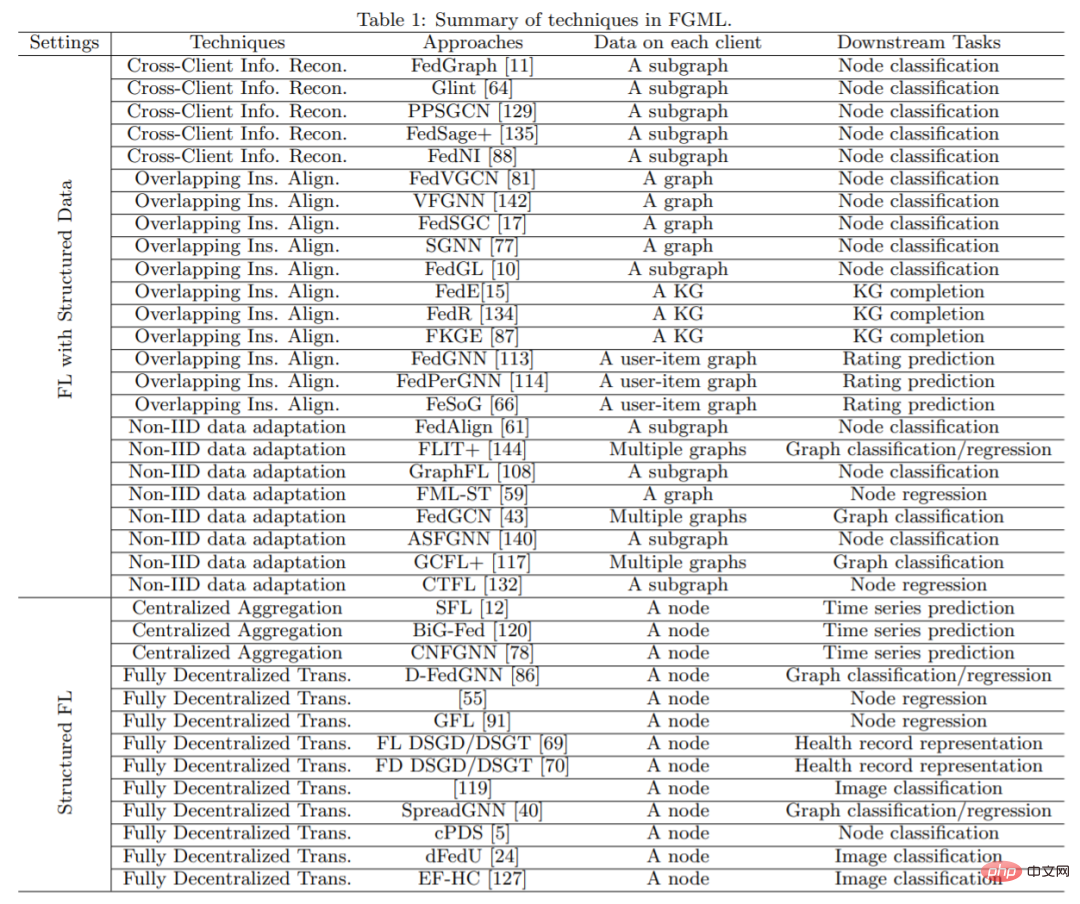

In the paper introduced today, the author first introduces the concepts of two problem designs in FGML. Then, the latest technological progress under each shezhi is reviewed, and the practical applications of FGML are also introduced. and summarizes accessible graph datasets and platforms available for FGML applications. Finally, the author gives several promising research directions. The main contributions of the article include:

Taxonomy of FGML technologies: The article presents a taxonomy of FGML based on different problems and summarizes the key challenges in each setting.

Comprehensive Technology Review: The article provides a comprehensive overview of existing technology in FGML. Compared with other existing review papers, the authors not only study a wider range of related work, but also provide a more detailed technical analysis instead of simply listing the steps of each method.

Practical application: This article summarizes the practical application of FGML for the first time. The authors classify them according to application areas and introduce related work in each area.

Datasets and Platforms: The article introduces existing datasets and platforms in FGML, which is very helpful for engineers and researchers who want to develop algorithms and deploy applications in FGML.

Future directions: The article not only points out the limitations of existing methods, but also gives the future development direction of FGML.

FGML Technical Overview Here is the main structure of the article Introduction.

Section 2 briefly introduces definitions in graph machine learning and concepts and challenges in both settings in FGML.

Sections 3 and 4 review the dominant techniques in both settings. Section 5 further explores real-world applications of FGML. Section 6 introduces the Open Graph Dataset and two platforms for FGML used in related FGML papers. Possible future directions are provided in Section 7 .

Finally Section 8 summarizes the full text. Please refer to the original paper for more details.

The above is the detailed content of Overview of the current status of federated learning technology and its applications in image processing. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

This article will take you to understand SHAP: model explanation for machine learning

Jun 01, 2024 am 10:58 AM

In the fields of machine learning and data science, model interpretability has always been a focus of researchers and practitioners. With the widespread application of complex models such as deep learning and ensemble methods, understanding the model's decision-making process has become particularly important. Explainable AI|XAI helps build trust and confidence in machine learning models by increasing the transparency of the model. Improving model transparency can be achieved through methods such as the widespread use of multiple complex models, as well as the decision-making processes used to explain the models. These methods include feature importance analysis, model prediction interval estimation, local interpretability algorithms, etc. Feature importance analysis can explain the decision-making process of a model by evaluating the degree of influence of the model on the input features. Model prediction interval estimate

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

Transparent! An in-depth analysis of the principles of major machine learning models!

Apr 12, 2024 pm 05:55 PM

In layman’s terms, a machine learning model is a mathematical function that maps input data to a predicted output. More specifically, a machine learning model is a mathematical function that adjusts model parameters by learning from training data to minimize the error between the predicted output and the true label. There are many models in machine learning, such as logistic regression models, decision tree models, support vector machine models, etc. Each model has its applicable data types and problem types. At the same time, there are many commonalities between different models, or there is a hidden path for model evolution. Taking the connectionist perceptron as an example, by increasing the number of hidden layers of the perceptron, we can transform it into a deep neural network. If a kernel function is added to the perceptron, it can be converted into an SVM. this one

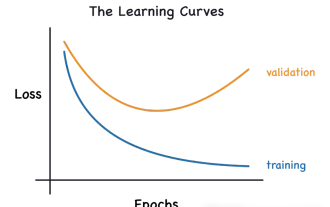

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

Identify overfitting and underfitting through learning curves

Apr 29, 2024 pm 06:50 PM

This article will introduce how to effectively identify overfitting and underfitting in machine learning models through learning curves. Underfitting and overfitting 1. Overfitting If a model is overtrained on the data so that it learns noise from it, then the model is said to be overfitting. An overfitted model learns every example so perfectly that it will misclassify an unseen/new example. For an overfitted model, we will get a perfect/near-perfect training set score and a terrible validation set/test score. Slightly modified: "Cause of overfitting: Use a complex model to solve a simple problem and extract noise from the data. Because a small data set as a training set may not represent the correct representation of all data." 2. Underfitting Heru

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

The evolution of artificial intelligence in space exploration and human settlement engineering

Apr 29, 2024 pm 03:25 PM

In the 1950s, artificial intelligence (AI) was born. That's when researchers discovered that machines could perform human-like tasks, such as thinking. Later, in the 1960s, the U.S. Department of Defense funded artificial intelligence and established laboratories for further development. Researchers are finding applications for artificial intelligence in many areas, such as space exploration and survival in extreme environments. Space exploration is the study of the universe, which covers the entire universe beyond the earth. Space is classified as an extreme environment because its conditions are different from those on Earth. To survive in space, many factors must be considered and precautions must be taken. Scientists and researchers believe that exploring space and understanding the current state of everything can help understand how the universe works and prepare for potential environmental crises

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Implementing Machine Learning Algorithms in C++: Common Challenges and Solutions

Jun 03, 2024 pm 01:25 PM

Common challenges faced by machine learning algorithms in C++ include memory management, multi-threading, performance optimization, and maintainability. Solutions include using smart pointers, modern threading libraries, SIMD instructions and third-party libraries, as well as following coding style guidelines and using automation tools. Practical cases show how to use the Eigen library to implement linear regression algorithms, effectively manage memory and use high-performance matrix operations.

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Explainable AI: Explaining complex AI/ML models

Jun 03, 2024 pm 10:08 PM

Translator | Reviewed by Li Rui | Chonglou Artificial intelligence (AI) and machine learning (ML) models are becoming increasingly complex today, and the output produced by these models is a black box – unable to be explained to stakeholders. Explainable AI (XAI) aims to solve this problem by enabling stakeholders to understand how these models work, ensuring they understand how these models actually make decisions, and ensuring transparency in AI systems, Trust and accountability to address this issue. This article explores various explainable artificial intelligence (XAI) techniques to illustrate their underlying principles. Several reasons why explainable AI is crucial Trust and transparency: For AI systems to be widely accepted and trusted, users need to understand how decisions are made

Outlook on future trends of Golang technology in machine learning

May 08, 2024 am 10:15 AM

Outlook on future trends of Golang technology in machine learning

May 08, 2024 am 10:15 AM

The application potential of Go language in the field of machine learning is huge. Its advantages are: Concurrency: It supports parallel programming and is suitable for computationally intensive operations in machine learning tasks. Efficiency: The garbage collector and language features ensure that the code is efficient, even when processing large data sets. Ease of use: The syntax is concise, making it easy to learn and write machine learning applications.

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

Is Flash Attention stable? Meta and Harvard found that their model weight deviations fluctuated by orders of magnitude

May 30, 2024 pm 01:24 PM

MetaFAIR teamed up with Harvard to provide a new research framework for optimizing the data bias generated when large-scale machine learning is performed. It is known that the training of large language models often takes months and uses hundreds or even thousands of GPUs. Taking the LLaMA270B model as an example, its training requires a total of 1,720,320 GPU hours. Training large models presents unique systemic challenges due to the scale and complexity of these workloads. Recently, many institutions have reported instability in the training process when training SOTA generative AI models. They usually appear in the form of loss spikes. For example, Google's PaLM model experienced up to 20 loss spikes during the training process. Numerical bias is the root cause of this training inaccuracy,