
Commonly used loss functions and Python implementation examples


What is the loss function?

A loss function measures how well a model fits the data: it quantifies the difference between the actual values and the predicted values. The higher the loss, the more incorrect the prediction; the lower the loss, the closer the prediction is to the true value. The loss is calculated for each individual observation (data point), and the function that averages the losses over all observations is called the cost function. Put simply, the loss function applies to a single sample, while the cost function applies to all samples.
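To make the loss/cost distinction concrete, here is a minimal sketch that uses squared error as the per-observation loss. The function names `squared_loss` and `cost` and the three data points are illustrative assumptions, not taken from any library.

```python
import numpy as np

def squared_loss(y_true, y_pred):
    # Loss: the error for ONE observation
    return (y_pred - y_true) ** 2

def cost(y_true, y_pred):
    # Cost: the average of the per-observation losses over ALL samples
    losses = [squared_loss(t, p) for t, p in zip(y_true, y_pred)]
    return float(np.mean(losses))

y_true = np.array([3.0, 5.0, 2.0])
y_pred = np.array([2.5, 5.0, 4.0])

print([float(squared_loss(t, p)) for t, p in zip(y_true, y_pred)])  # [0.25, 0.0, 4.0]
print(cost(y_true, y_pred))                                          # about 1.417
```

Each observation gets its own loss value, and the cost collapses them into the single number that training tries to reduce.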

Loss functions and metrics

Some loss functions can also be used as evaluation metrics, but loss functions and metrics serve different purposes. Metrics are used to evaluate the final model and to compare the performance of different models. The loss function, by contrast, is used while the model is being built: it is the objective that the optimizer minimizes, and it guides the model on how to reduce its error.

In other words, the loss function determines how the model is trained, while the metric explains how well the model performs.
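As a small illustration, the sketch below scores the same predictions in two ways. It reuses the output probabilities and actual classes from the binary classification example later in this article. Binary cross-entropy (natural log) plays the role of the loss, a smooth quantity an optimizer can minimize. Accuracy at a 0.5 threshold plays the role of the metric. The variable names are assumptions for illustration.

```python
import numpy as np

y_true = np.array([0, 1, 1, 0, 1, 0])
y_prob = np.array([0.3, 0.7, 0.8, 0.5, 0.6, 0.4])

# Loss (what training minimizes): binary cross-entropy with the natural log
bce = -np.mean(y_true * np.log(y_prob) + (1 - y_true) * np.log(1 - y_prob))

# Metric (what we report): accuracy, using a 0.5 threshold to pick the class
y_class = (y_prob >= 0.5).astype(int)
accuracy = np.mean(y_class == y_true)

print(f"BCE loss: {float(bce):.3f}, accuracy: {float(accuracy):.3f}")  # BCE loss: 0.442, accuracy: 0.833
```

Accuracy only changes when a prediction crosses the threshold. The loss changes with every small shift in probability, which is why it is the quantity used to steer training.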

Why use the loss function?

Because the loss function measures the difference between the predicted and actual values, it can be used to guide the model's improvement during training (usually with gradient descent). While the model is being built, changing the weight of a feature produces better or worse predictions. The loss function tells us whether a feature's weight needs to change, and in which direction.
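A minimal sketch of this idea: a one-feature linear model `y_pred = w * x` is fitted by gradient descent on the MSE loss. The toy data, learning rate, and number of steps are assumptions chosen for illustration. The sign of the gradient tells us in which direction to change the weight.

```python
import numpy as np

# Toy data that follows y = 2x exactly (an assumption for illustration)
x = np.array([1.0, 2.0, 3.0, 4.0])
y = 2.0 * x

w = 0.0               # initial weight of the single feature
learning_rate = 0.01  # assumed step size

for step in range(200):
    y_pred = w * x
    grad = np.mean(2 * (y_pred - y) * x)   # derivative of the MSE loss with respect to w
    # A negative gradient means increasing w lowers the loss, and vice versa
    w -= learning_rate * grad

final_loss = np.mean((w * x - y) ** 2)
print(round(float(w), 4), final_loss)      # w ends very close to 2.0, loss close to 0
```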

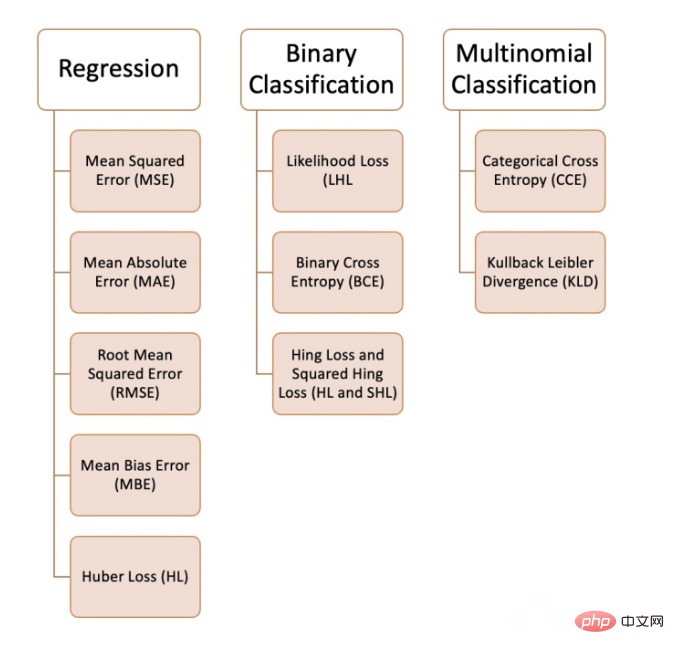

Machine learning offers a variety of loss functions. Which one we use depends on the type of problem we are trying to solve, the quality and distribution of the data, and the algorithm we use. The following figure shows the 10 common loss functions we have compiled:

Regression problem

1. Mean square error (MSE)

Mean squared error is the average of the squared differences between the predicted values and the true values. It is often used in regression problems.

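```python
import numpy as np

def MSE(y, y_predicted):
    # Squared difference for each observation
    sq_error = (y_predicted - y) ** 2
    sum_sq_error = np.sum(sq_error)
    # Average over all observations
    mse = sum_sq_error / y.size
    return mse
```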

2. Mean absolute error (MAE)

It is calculated as the average of the absolute differences between the predicted values and the true values. Because the errors are not squared, it is less sensitive to outliers, which makes it a better choice than mean squared error when the data contains outliers.

```python
import numpy as np

def MAE(y, y_predicted):
    error = y_predicted - y
    absolute_error = np.absolute(error)            # |prediction - truth|
    total_absolute_error = np.sum(absolute_error)
    mae = total_absolute_error / y.size            # average over observations
    return mae
```
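To see why MAE is less sensitive to outliers, the sketch below compares both measures on the same predictions, before and after one target is replaced by a hypothetical outlier. The data are made up for illustration, and `mse`/`mae` are compact re-implementations of the functions above.

```python
import numpy as np

def mse(y, y_predicted):
    return np.mean((y_predicted - y) ** 2)

def mae(y, y_predicted):
    return np.mean(np.abs(y_predicted - y))

y_true = np.array([10.0, 12.0, 11.0, 13.0])
y_pred = np.array([11.0, 11.0, 12.0, 12.0])   # every prediction is off by 1

y_true_outlier = y_true.copy()
y_true_outlier[3] = 33.0                      # hypothetical outlier: now off by 21

print(mse(y_true, y_pred), mae(y_true, y_pred))                  # 1.0 1.0
print(mse(y_true_outlier, y_pred), mae(y_true_outlier, y_pred))  # 111.0 6.0
```

A single outlier multiplies the MSE by 111, but the MAE only by 6, because MAE grows linearly with the size of the error.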

3. Root mean square error (RMSE)

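This loss function is the square root of the mean squared error, so it is expressed in the same units as the target variable. Because the errors are squared before averaging, RMSE still gives large errors more weight than MAE does.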

```python
import math
import numpy as np

def RMSE(y, y_predicted):
    sq_error = (y_predicted - y) ** 2
    total_sq_error = np.sum(sq_error)
    mse = total_sq_error / y.size
    rmse = math.sqrt(mse)        # square root of the MSE
    return rmse
```
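The sketch below compares RMSE with MSE and MAE on the same errors. The data are hypothetical, and the unit (metres) is only an assumption for the comments. RMSE comes back in the target's units, unlike MSE. On these errors it is also larger than MAE, because the single error of 4 dominates after squaring.

```python
import numpy as np

y_true = np.array([2.0, 4.0, 6.0, 8.0])    # targets, e.g. in metres (hypothetical data)
y_pred = np.array([3.0, 4.0, 5.0, 12.0])   # one large error of 4

errors = y_pred - y_true
mse = np.mean(errors ** 2)        # in squared units (m^2)
rmse = np.sqrt(mse)               # back in the target's units (m)
mae = np.mean(np.abs(errors))     # also in the target's units (m)

print(mse, round(float(rmse), 3), mae)   # 4.5 2.121 1.5
```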

4. Mean bias error (MBE)

It is similar to the mean absolute error but does not take the absolute value. The drawback of this loss function is that negative and positive errors can cancel each other out, so it is best applied when the researcher knows that the error goes in only one direction.

```python
import numpy as np

def MBE(y, y_predicted):
    error = y_predicted - y            # signed: positive and negative errors can cancel
    total_error = np.sum(error)
    mbe = total_error / y.size
    return mbe
```
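The cancellation problem is easy to demonstrate. In the sketch below (hypothetical data), a model that is off by 2 to 3 units in both directions gets an MBE of 0, while its MAE is 2.5. When every error has the same sign, MBE and MAE agree, which is why MBE is only informative when the error goes in one direction.

```python
import numpy as np

def mbe(y, y_predicted):
    return np.mean(y_predicted - y)        # signed errors, no absolute value

def mae(y, y_predicted):
    return np.mean(np.abs(y_predicted - y))

y_true = np.array([10.0, 10.0, 10.0, 10.0])
y_pred_mixed = np.array([12.0, 8.0, 13.0, 7.0])   # errors +2, -2, +3, -3
y_pred_over = np.array([12.0, 12.0, 13.0, 13.0])  # errors +2, +2, +3, +3

print(mbe(y_true, y_pred_mixed), mae(y_true, y_pred_mixed))  # 0.0 2.5
print(mbe(y_true, y_pred_over), mae(y_true, y_pred_over))    # 2.5 2.5
```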

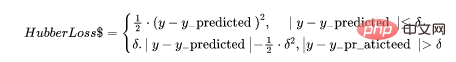

5. Huber loss

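The Huber loss function combines the advantages of mean absolute error (MAE) and mean squared error (MSE) because it is a function with two branches. A squared (MSE-like) branch is applied to small errors that match expected values, and a linear (MAE-like) branch is applied to large errors such as outliers. The general form of the Huber loss is:

$$L_\delta(y, \hat{y}) = \begin{cases} \frac{1}{2}(y - \hat{y})^2 & \text{if } |y - \hat{y}| \le \delta \\ \delta\left(|y - \hat{y}| - \frac{1}{2}\delta\right) & \text{otherwise} \end{cases}$$

Here δ is the threshold that decides which branch is used.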

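```python
import numpy as np

def huber_loss(y, y_predicted, delta=1.0):
    # delta: threshold between the quadratic (MSE-like) and linear (MAE-like) branches.
    # The original example overrode it with delta = 1.35 * MAE; here it is a parameter
    # (the default of 1.0 is an assumption).
    total_error = 0.0
    for i in range(y.size):
        error = np.absolute(y_predicted[i] - y[i])
        if error <= delta:
            huber_error = 0.5 * error ** 2                  # small error: squared branch
        else:
            huber_error = delta * error - 0.5 * delta ** 2  # large error: linear branch
        total_error += huber_error
    return total_error / y.size
```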
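The same loss can also be written without a Python loop. The sketch below is an alternative vectorized formulation using `np.where`, not the article's own code, and the test values are hypothetical. It makes the two branches explicit. At `error == delta` both branches equal `0.5 * delta ** 2`, so the loss is continuous where it switches from quadratic to linear.

```python
import numpy as np

def huber_vectorized(y, y_predicted, delta=1.0):
    error = np.abs(y_predicted - y)
    quadratic = 0.5 * error ** 2                 # branch for error <= delta
    linear = delta * error - 0.5 * delta ** 2    # branch for error > delta
    return np.mean(np.where(error <= delta, quadratic, linear))

y_true = np.zeros(3)
y_pred = np.array([0.5, 1.0, 3.0])   # hypothetical predictions

# Per-sample values: 0.125 (quadratic), 0.5 (at the threshold), 2.5 (linear)
print(huber_vectorized(y_true, y_pred, delta=1.0))
```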

Binary classification

6. Maximum Likelihood Loss (Likelihood Loss/LHL)

This loss function is mainly used for binary classification problems. For each observation it takes the probability the model assigned to the true class, and the associated cost function is the average over all observations. Consider the following binary classification example, where the class is [0] or [1]. If the output probability is equal to or greater than 0.5, the predicted class is [1]; otherwise it is [0]. An example of the output probabilities is:

[0.3 , 0.7 , 0.8 , 0.5 , 0.6 , 0.4]

The corresponding predicted class is:

[0 , 1 , 1 , 1 , 1 , 0]

and the actual class is:

[0 , 1 , 1 , 0 , 1 , 0]

Now the actual class and the output probability are used to calculate the loss. If the true class is [1], we use the output probability; if the true class is [0], we use 1 - probability:

((1–0.3)+0.7+0.8+(1–0.5)+0.6+(1–0.4)) / 6 = 0.65

The Python code is as follows:

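```python
import numpy as np

def LHL(y, y_predicted):
    # Probability assigned to the true class: p if y == 1, (1 - p) if y == 0
    likelihood_loss = (y * y_predicted) + ((1 - y) * (1 - y_predicted))
    total_likelihood_loss = np.sum(likelihood_loss)
    # Negated so that minimizing the loss maximizes the average likelihood
    # (for the example above this returns -0.65)
    lhl = -total_likelihood_loss / y.size
    return lhl
```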
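The worked example can be checked directly with the article's own probabilities and classes. For each observation, pick the probability assigned to its true class, then average. The `np.where` formulation is just one convenient way to express the selection.

```python
import numpy as np

y = np.array([0, 1, 1, 0, 1, 0])                 # actual classes
p = np.array([0.3, 0.7, 0.8, 0.5, 0.6, 0.4])     # output probabilities

# Probability the model assigned to the TRUE class of each observation
prob_true_class = np.where(y == 1, p, 1 - p)
print(prob_true_class)           # [0.7 0.7 0.8 0.5 0.6 0.6]
print(prob_true_class.mean())    # about 0.65
```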

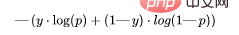

7. Binary cross-entropy (BCE)

This function modifies the likelihood loss by taking logarithms. The logarithm heavily penalizes predictions that are highly confident but wrong. The general formula for the binary cross-entropy loss of one observation, with true class y and predicted probability p, is:

$$L = -\left[y \log(p) + (1 - y)\log(1 - p)\right]$$

Let’s continue using the values from the above example:

- Output probability = [0.3, 0.7, 0.8, 0.5, 0.6, 0.4]

- Actual class = [0, 1, 1, 0, 1, 0]

- y = 0, p = 0.3: -(0 · log(0.3) + (1 - 0) · log(1 - 0.3)) = 0.155

- y = 1, p = 0.7: -(1 · log(0.7) + (1 - 1) · log(0.3)) = 0.155

- y = 1, p = 0.8: -(1 · log(0.8) + (1 - 1) · log(0.2)) = 0.097

- y = 0, p = 0.5: -(0 · log(0.5) + (1 - 0) · log(1 - 0.5)) = 0.301

- y = 1, p = 0.6: -(1 · log(0.6) + (1 - 1) · log(0.4)) = 0.222

- y = 0, p = 0.4: -(0 · log(0.4) + (1 - 0) · log(1 - 0.4)) = 0.222

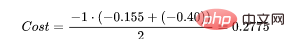

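Then the result of the cost function is:

(0.155 + 0.155 + 0.097 + 0.301 + 0.222 + 0.222) / 6 = 0.192

These values use base-10 logarithms. With the natural logarithm (as used by np.log in the code below), the same data gives a cost of about 0.442.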

The Python code is as follows:

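```python
import numpy as np

def BCE(y, y_predicted):
    # Natural log; every y_predicted must lie strictly between 0 and 1
    ce_loss = y * np.log(y_predicted) + (1 - y) * np.log(1 - y_predicted)
    total_ce = np.sum(ce_loss)
    bce = -total_ce / y.size
    return bce
```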
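The per-observation values in the worked example were computed with base-10 logarithms, while code like the function above normally uses the natural log. The sketch below reproduces both on the article's data. The helper name `bce_terms` is hypothetical. The base only rescales the loss by a constant factor, so it does not change which model is better.

```python
import numpy as np

y = np.array([0, 1, 1, 0, 1, 0])                 # actual classes
p = np.array([0.3, 0.7, 0.8, 0.5, 0.6, 0.4])     # output probabilities

def bce_terms(log_fn, y, p):
    # Per-observation loss -[y log(p) + (1 - y) log(1 - p)] and its mean (the cost)
    per_sample = -(y * log_fn(p) + (1 - y) * log_fn(1 - p))
    return per_sample, float(per_sample.mean())

losses_10, cost_10 = bce_terms(np.log10, y, p)   # base 10, as in the worked example
losses_e, cost_e = bce_terms(np.log, y, p)       # natural log, as with np.log

print(np.round(losses_10, 3))   # [0.155 0.155 0.097 0.301 0.222 0.222]
print(round(cost_10, 3))        # 0.192
print(round(cost_e, 3))         # 0.442
```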

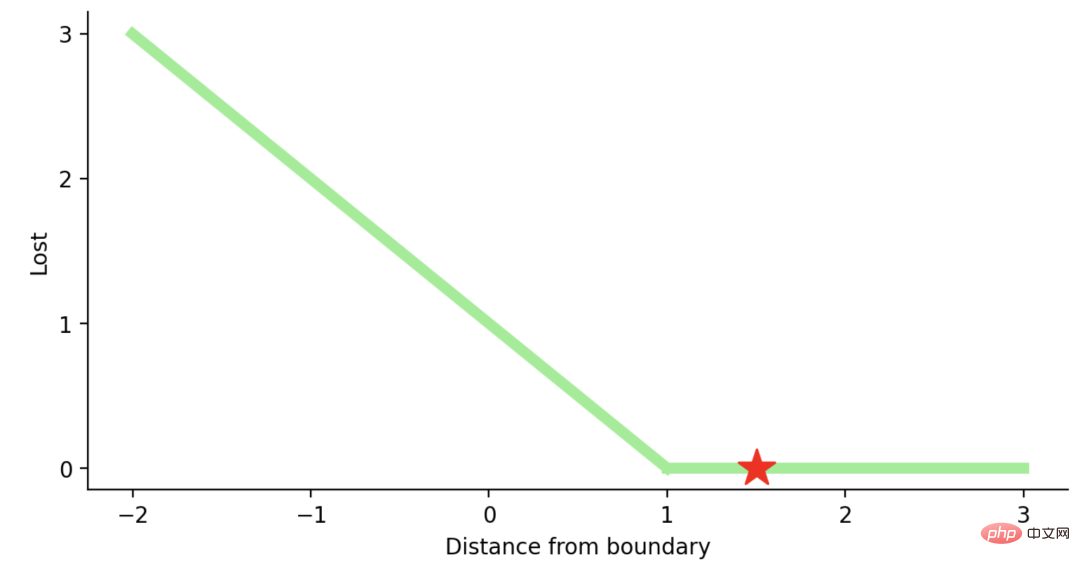

8. Hinge loss and squared hinge loss (HL and SHL)


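Hinge loss is mainly used to evaluate support vector machine (SVM) models. Both incorrect predictions and correct but insufficiently confident predictions are penalized. The general loss function is:

$$L = \max(0,\ 1 - t \cdot y)$$

Here t is the true class, represented as [1] or [-1], and y is the model's output.

When using hinge loss, the classes should be [1] or [-1]. To avoid being penalized by the hinge loss, an observation must not only be classified correctly but also lie farther from the hyperplane than the margin (a confident correct prediction). If we want to penalize larger errors even more, we can square the hinge loss in a way similar to MSE; this is the squared hinge loss.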

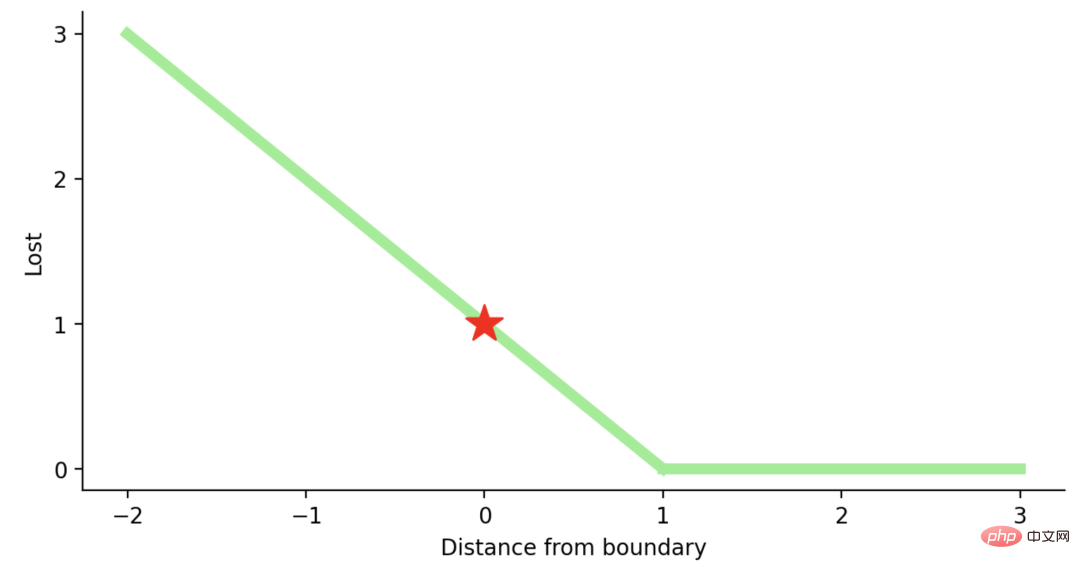

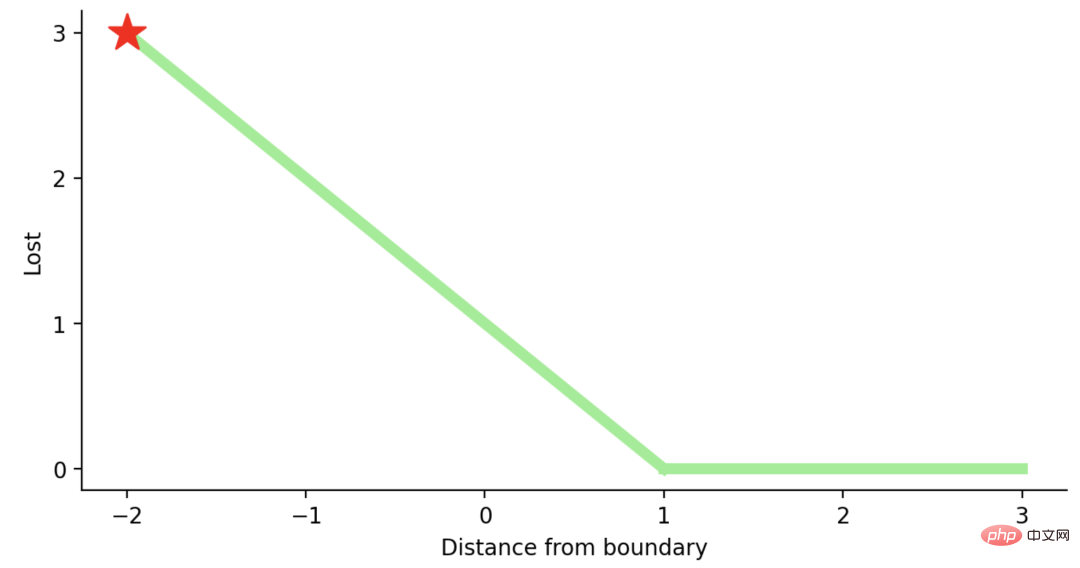

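If you are familiar with SVMs, you will remember that the larger the margin from the hyperplane, the more confident a prediction is. If not, take a look at this visual example:

If a prediction is 1.5 and the true class is [1], the loss is 0 (zero), because the model is highly confident.

loss = max(0, 1 - 1 × 1.5) = max(0, -0.5) = 0

If an observation's output is 0, the observation lies on the boundary (the hyperplane), and its true class is [-1]. The loss is 1: the model is neither right nor wrong and has very low confidence.

loss = max(0, 1 - (-1) × 0) = 1

If an observation's output is 2 but it is misclassified (the true class is [-1]), the product t · y is -2. The loss is 3 (very high), because the model is very confident in a wrong decision, which must never be tolerated.

loss = max(0, 1 - (-1) × 2) = 3

The Python code is as follows: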

```python
import numpy as np

# Hinge Loss
def Hinge(y, y_predicted):
    # y must be encoded as 1 / -1; np.maximum works element-wise (the built-in max does not)
    hinge_loss = np.sum(np.maximum(0, 1 - y_predicted * y))
    return hinge_loss

# Squared Hinge Loss
def SqHinge(y, y_predicted):
    sq_hinge_loss = np.maximum(0, 1 - y_predicted * y) ** 2
    total_sq_hinge_loss = np.sum(sq_hinge_loss)
    return total_sq_hinge_loss
```
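The three scenarios described above can be reproduced per observation. The sketch below uses `t` for the true class and `y_out` for the model's raw output, matching the formula's t and y. The helper name `hinge_per_sample` is hypothetical. Squaring the values shows how the squared hinge loss punishes the confident mistake much harder.

```python
import numpy as np

def hinge_per_sample(t, y_out):
    # t: true class in {1, -1}; y_out: raw model output (signed distance to the hyperplane)
    return np.maximum(0, 1 - t * y_out)

t = np.array([1, -1, -1])
y_out = np.array([1.5, 0.0, 2.0])

hl = hinge_per_sample(t, y_out)
print(hl)          # [0. 1. 3.]
print(hl ** 2)     # squared hinge: [0. 1. 9.]
```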

Multi-class classification

9. Cross-entropy (CE)

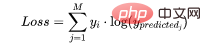

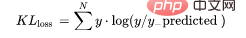

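In multi-class classification, we use a formula similar to binary cross-entropy, but with an extra step. First, we compute the loss for each [y, y_predicted] pair. The general formula is: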

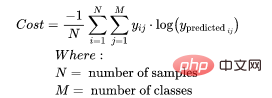

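Suppose we have three classes, and the output for a single [y, y_predicted] pair is:

Here the actual class is 3 (the position whose value is 1), and the model's confidence that the true class is 3 is 0.7. The loss is calculated as follows: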

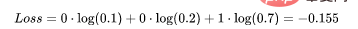

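To obtain the value of the cost function, we compute the loss for every individual pair, add them up, and multiply the sum by [-1/number of samples]. The cost function is given by: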

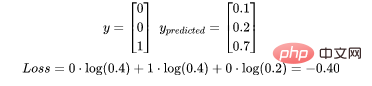

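Continuing the example above, suppose our second pair is:

Then the cost function is computed as follows:

A Python code example makes this easier to understand: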

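```python
import numpy as np

def CCE(y, y_predicted):
    # y: one-hot labels; y_predicted: class probabilities; both shaped (n_samples, n_classes)
    cce_class = y * np.log(y_predicted)
    sum_totalpair_cce = np.sum(cce_class)
    # Multiply by -1/(number of samples); y.size would count samples * classes
    cce = -sum_totalpair_cce / y.shape[0]
    return cce
```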
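The sketch below works through the per-pair loss and the cost on a small hypothetical batch. The first row mirrors the article's description (true class 3, predicted with confidence 0.7). The other probabilities in that row, and the entire second row, are made-up values, because the article's own pair values are not shown here.

```python
import numpy as np

# Hypothetical batch: 2 samples, 3 classes, one-hot true labels
y = np.array([[0, 0, 1],        # true class 3, predicted with confidence 0.7
              [0, 1, 0]])       # true class 2 (made-up second pair)
y_predicted = np.array([[0.1, 0.2, 0.7],
                        [0.2, 0.6, 0.2]])

per_pair = -np.sum(y * np.log(y_predicted), axis=1)   # one loss per [y, y_predicted] pair
cost = per_pair.mean()                                 # sum of pair losses times 1/(number of samples)

print(np.round(per_pair, 3))   # [0.357 0.511]
print(round(float(cost), 3))   # 0.434
```

Only the log-probability of the true class contributes to each pair's loss, because the one-hot label zeroes out the other terms.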

10. Kullback-Leibler divergence (KLD)

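Also called KL divergence for short, it is similar to categorical cross-entropy but takes into account the probability of the observations occurring. It is particularly useful when our classes are imbalanced.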

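```python
import numpy as np

def KL(y, y_predicted):
    # Only terms with y > 0 contribute (0 * log 0 is taken as 0); this avoids NaN for one-hot y
    mask = y > 0
    kl = y[mask] * np.log(y[mask] / y_predicted[mask])
    total_kl = np.sum(kl)
    return total_kl
```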
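The relationship to cross-entropy can be checked numerically. KL divergence equals cross-entropy minus the entropy of the true distribution, so when the true labels are one-hot (entropy 0) the two coincide. In the sketch below, the distributions are hypothetical and the helper names are illustrative.

```python
import numpy as np

def entropy(p):
    p = p[p > 0]                      # 0 * log(0) is treated as 0
    return -np.sum(p * np.log(p))

def cross_entropy(p, q):
    mask = p > 0
    return -np.sum(p[mask] * np.log(q[mask]))

def kl_divergence(p, q):
    mask = p > 0
    return np.sum(p[mask] * np.log(p[mask] / q[mask]))

p_soft = np.array([0.2, 0.3, 0.5])      # hypothetical "true" distribution
q = np.array([0.1, 0.4, 0.5])           # hypothetical prediction

print(kl_divergence(p_soft, q))
print(cross_entropy(p_soft, q) - entropy(p_soft))   # same value: KL = CE - H(p)

p_onehot = np.array([0.0, 0.0, 1.0])
print(kl_divergence(p_onehot, q), cross_entropy(p_onehot, q))   # equal for one-hot labels
```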

These are the 10 common loss functions. I hope this helps.

The above is the detailed content of Commonly used loss functions and Python implementation examples. For more information, please follow other related articles on the PHP Chinese website!
