Pandas and PySpark join forces to achieve both functionality and speed!

Data scientists and other practitioners who process data in Python are no strangers to pandas, and many, like Yun Duojun, are heavy users. The first line of code in most projects is import pandas as pd. Pandas is hard to beat for data processing, but its shortcomings are just as obvious: it runs on a single machine and does not scale linearly with the amount of data. For example, if pandas tries to read a dataset larger than the machine's available memory, it fails with an out-of-memory error.

In addition, pandas is slow on large datasets. Libraries such as Dask and Vaex can speed up processing, but they still pale next to Spark, the heavyweight framework of big data processing.

Fortunately, Spark 3.2 introduced a pandas API that brings most pandas functionality into PySpark. You write code against the familiar pandas interface while Spark does the work in the background, so you get the convenience of pandas together with the power of Spark.

It all started at Spark + AI Summit 2019 with Koalas, an open source project that implements the pandas API on top of Spark. At first it covered only a small part of pandas, but it grew steadily. With Spark 3.2, Koalas was merged into PySpark.

Spark now integrates the Pandas API, so you can run Pandas on Spark. Only one line of code needs to be changed:

import pyspark.pandas as ps

From this we can gain many advantages:

- If you know Python and pandas but not Spark, you can skip much of the learning curve and start using PySpark right away.

- You can use one code base for everything: small and big data, single and distributed machines.

- You can run Pandas code faster on the Spark distributed framework.

The last point is particularly noteworthy.

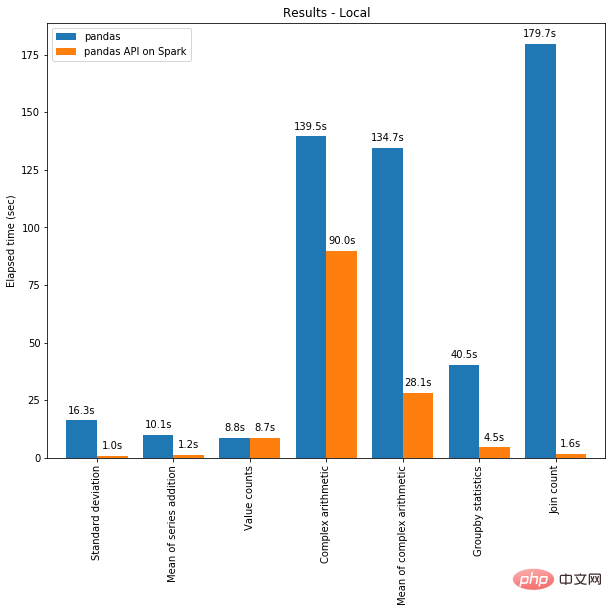

Distributed computing can now be applied to pandas code, and thanks to the Spark engine your code can be faster even on a single machine. The graph above compares the pandas API on Spark with plain pandas when analyzing a 130 GB CSV dataset on a machine with 96 vCPUs and 384 GiB of memory.

Multithreading and the Spark SQL Catalyst optimizer both contribute to the speedup. For example, a join-and-count operation is roughly 4x faster with whole-stage code generation: 1.6 seconds with code generation versus 5.9 seconds without it.

Spark's advantage is especially pronounced for chained operations. The Catalyst query optimizer can recognize filters and skip data that is not needed, and it can use disk-based joins, whereas pandas tends to load all data into memory at each step.

Ready to write some code with the pandas API on Spark? Let's get started.

Switching between Pandas / Pandas-on-Spark / Spark

The first thing to know is which kind of object you are working with. With pandas, it is pandas.core.frame.DataFrame. With the pandas API on Spark, it is pyspark.pandas.frame.DataFrame. The two are similar but not the same: the former lives on a single machine, while the latter is distributed.
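As a small illustration of this distinction, the sketch below inspects the module path of an object's class to report which kind of DataFrame it is. The helper name describe_backend is hypothetical and not part of pandas or PySpark, and the example only exercises the pandas case so that it runs without a Spark installation.

```python
import pandas as pd


def describe_backend(df) -> str:
    """Return a label for the DataFrame flavour (hypothetical helper)."""
    module = type(df).__module__
    if module.startswith("pyspark.pandas"):
        return "pandas-on-Spark (distributed)"
    if module.startswith("pyspark.sql"):
        return "Spark (distributed)"
    if module.startswith("pandas"):
        return "pandas (single machine)"
    return "unknown"


local_df = pd.DataFrame({"x": range(3)})
print(type(local_df).__module__)   # module that defines the DataFrame class
print(describe_backend(local_df))
```

Checking the module prefix rather than the exact class keeps the helper independent of where a given release places the class internally.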

You can create a DataFrame with pandas-on-Spark, convert it to pandas, and convert it back:

# Import pandas-on-Spark
import pyspark.pandas as ps

# Create a DataFrame with pandas-on-Spark
ps_df = ps.DataFrame(range(10))

# Convert the pandas-on-Spark DataFrame to a pandas DataFrame
pd_df = ps_df.to_pandas()

# Convert the pandas DataFrame back to a pandas-on-Spark DataFrame
ps_df = ps.from_pandas(pd_df)

Note that if you are using multiple machines, converting a pandas-on-Spark DataFrame to a pandas DataFrame transfers the data from multiple machines to a single one, and the reverse conversion distributes it again (see PySpark Guide [1]).

It is also possible to convert a Pandas-on-Spark Dataframe to a Spark DataFrame and vice versa:

# Create a DataFrame with pandas-on-Spark
ps_df = ps.DataFrame(range(10))

# Convert the pandas-on-Spark DataFrame to a Spark DataFrame
spark_df = ps_df.to_spark()

# Convert the Spark DataFrame back to a pandas-on-Spark DataFrame
# (Spark 3.3 and later prefer spark_df.pandas_api() for this step)
ps_df_new = spark_df.to_pandas_on_spark()

How does the data type change?

Data types are essentially the same in pandas-on-Spark and pandas. When a pandas-on-Spark DataFrame is converted to a Spark DataFrame, each data type is automatically cast to the appropriate Spark type (see the PySpark Guide [2]).

The following example shows how data types are converted when going from a PySpark DataFrame to a pandas-on-Spark DataFrame.

>>> from datetime import datetime
>>> from decimal import Decimal
>>> sdf = spark.createDataFrame([
...     (1, Decimal(1.0), 1., 1., 1, 1, 1, datetime(2020, 10, 27), "1", True, datetime(2020, 10, 27)),
... ], 'tinyint tinyint, decimal decimal, float float, double double, integer integer, long long, short short, timestamp timestamp, string string, boolean boolean, date date')
>>> sdf
DataFrame[tinyint: tinyint, decimal: decimal(10,0), float: float, double: double, integer: int, long: bigint, short: smallint, timestamp: timestamp, string: string, boolean: boolean, date: date]

>>> psdf = sdf.pandas_api()
>>> psdf.dtypes
tinyint                int8
decimal              object
float               float32
double              float64
integer               int32
long                  int64
short                 int16
timestamp    datetime64[ns]
string               object
boolean                bool
date                 object
dtype: object
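The mapping printed above can be captured as a small lookup table. The sketch below copies the Spark type to pandas dtype pairs from that output into a dictionary (the name SPARK_TO_PANDAS_DTYPE is made up for this example) and builds an empty pandas DataFrame with those dtypes, so the result can be inspected without a running Spark session.

```python
import pandas as pd

# Pairs copied from the psdf.dtypes output above; the dict name is illustrative.
SPARK_TO_PANDAS_DTYPE = {
    "tinyint": "int8",
    "decimal": "object",
    "float": "float32",
    "double": "float64",
    "integer": "int32",
    "long": "int64",
    "short": "int16",
    "timestamp": "datetime64[ns]",
    "string": "object",
    "boolean": "bool",
    "date": "object",
}

# Build an empty frame whose columns carry the mapped dtypes.
empty = pd.DataFrame(
    {name: pd.Series(dtype=dtype) for name, dtype in SPARK_TO_PANDAS_DTYPE.items()}
)
print(empty.dtypes)
```

Note that decimal, string, and date all land on the generic object dtype, so those columns lose their specific type information on the pandas side.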

Pandas-on-Spark vs Spark Functions

This section sets Spark DataFrame operations side by side with their most commonly used counterparts in pandas-on-Spark. Note that the only syntax difference between pandas-on-Spark and pandas is the import pyspark.pandas as ps line.

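After reading the following, you will see that even if you are not familiar with Spark, you can use it easily through the pandas API.

Importing libraries

# Start Spark
from pyspark.sql import SparkSession

spark = (
    SparkSession.builder
    .appName("Spark")
    .getOrCreate()
)

# Run pandas on Spark
import pyspark.pandas as ps

Reading data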

We use the classic iris dataset as an example.

# SPARK
sdf = spark.read.options(inferSchema='True', header='True').csv('iris.csv')

# PANDAS-ON-SPARK
pdf = ps.read_csv('iris.csv')

Selecting columns

# SPARK
sdf.select("sepal_length", "sepal_width").show()

# PANDAS-ON-SPARK
pdf[["sepal_length", "sepal_width"]].head()

Dropping columns

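# SPARK
sdf.drop('sepal_length').show()

# PANDAS-ON-SPARK
# columns= is spelled out because the default axis of drop() differs between releases
pdf.drop(columns='sepal_length').head()

Dropping duplicates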

# SPARK
sdf.dropDuplicates(["sepal_length", "sepal_width"]).show()

# PANDAS-ON-SPARK
pdf[["sepal_length", "sepal_width"]].drop_duplicates()

Filtering

# SPARK
sdf.filter((sdf.flower_type == "Iris-setosa") & (sdf.petal_length > 1.5)).show()

# PANDAS-ON-SPARK
pdf.loc[(pdf.flower_type == "Iris-setosa") & (pdf.petal_length > 1.5)].head()

Counting

# SPARK
sdf.filter(sdf.flower_type == "Iris-virginica").count()

# PANDAS-ON-SPARK
pdf.loc[pdf.flower_type == "Iris-virginica"].count()

Unique values

# SPARK
sdf.select("flower_type").distinct().show()

# PANDAS-ON-SPARK
pdf["flower_type"].unique()

Sorting

# SPARK
sdf.sort("sepal_length", "sepal_width").show()

# PANDAS-ON-SPARK
pdf.sort_values(["sepal_length", "sepal_width"]).head()

Grouping

# SPARK
sdf.groupBy("flower_type").count().show()

# PANDAS-ON-SPARK
pdf.groupby("flower_type").count()

Replacing values

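# SPARK
sdf.replace("Iris-setosa", "setosa").show()

# PANDAS-ON-SPARK
pdf.replace("Iris-setosa", "setosa").head()

Concatenating

# SPARK
sdf.union(sdf)

# PANDAS-ON-SPARK
# ps.concat stands in for the original pdf.append(pdf): append was removed in
# pandas 2.0 and deprecated in later pandas-on-Spark releases
ps.concat([pdf, pdf])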
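To try the pandas-style calls from this comparison without an iris.csv file or a Spark cluster, here is a minimal sketch that runs them on a tiny hand-made table with plain pandas. The four rows are invented for illustration and are not real iris measurements. Assuming a working Spark setup, the same calls are meant to work on a pandas-on-Spark DataFrame as well, although row order may differ there.

```python
import pandas as pd

# Tiny made-up table with the column names used above (not real iris data).
pdf = pd.DataFrame({
    "sepal_length": [5.1, 4.9, 6.3, 6.3],
    "sepal_width": [3.5, 3.0, 3.3, 3.3],
    "petal_length": [1.4, 1.6, 6.0, 6.0],
    "flower_type": ["Iris-setosa", "Iris-setosa", "Iris-virginica", "Iris-virginica"],
})

selected = pdf[["sepal_length", "sepal_width"]]                   # select columns
deduped = selected.drop_duplicates()                              # drop duplicates
filtered = pdf.loc[(pdf.flower_type == "Iris-setosa")
                   & (pdf.petal_length > 1.5)]                    # filter rows
n_virginica = len(pdf.loc[pdf.flower_type == "Iris-virginica"])   # count rows
ordered = pdf.sort_values(["sepal_length", "sepal_width"])        # sort
per_type = pdf.groupby("flower_type").count()                     # group and count
renamed = pdf.replace("Iris-setosa", "setosa")                    # replace values
doubled = pd.concat([pdf, pdf])                                   # concatenate

print(len(deduped), len(filtered), n_virginica, len(doubled))
```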

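Applying functions with transform and apply

Many APIs let users apply a function to a pandas-on-Spark DataFrame, for example:

- DataFrame.transform()
- DataFrame.apply()
- DataFrame.pandas_on_spark.transform_batch()
- DataFrame.pandas_on_spark.apply_batch()
- Series.pandas_on_spark.transform_batch()

Each API serves a different purpose and works differently internally.

transform and apply

The main difference between DataFrame.transform() and DataFrame.apply() is that the former must return output of the same length as its input, while the latter does not have to.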

# transform

psdf = ps.DataFrame({'a': [1,2,3], 'b':[4,5,6]})

def pandas_plus(pser):

return pser + 1# 应该总是返回与输入相同的长度。

psdf.transform(pandas_plus)

# apply

psdf = ps.DataFrame({'a': [1,2,3], 'b':[5,6,7]})

def pandas_plus(pser):

return pser[pser % 2 == 1]# 允许任意长度

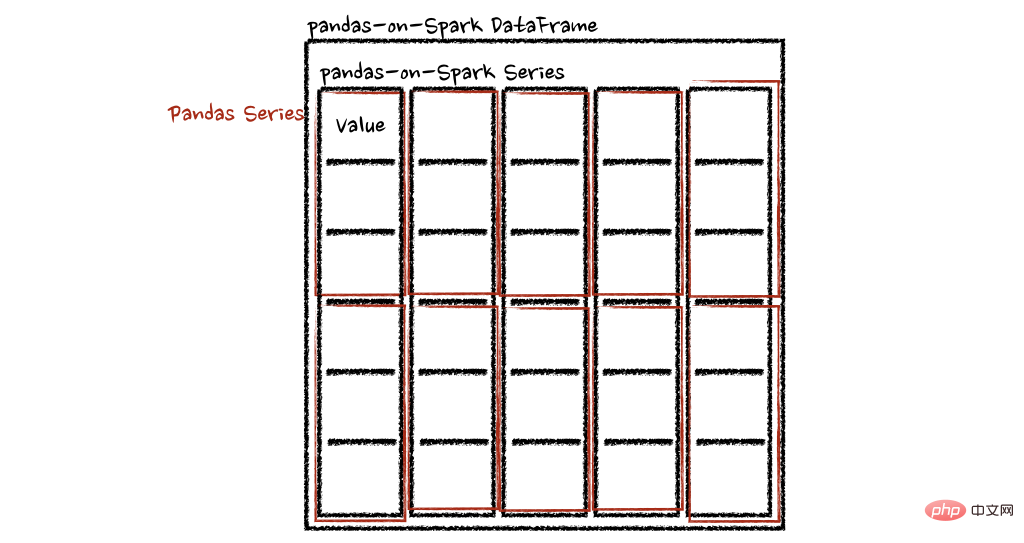

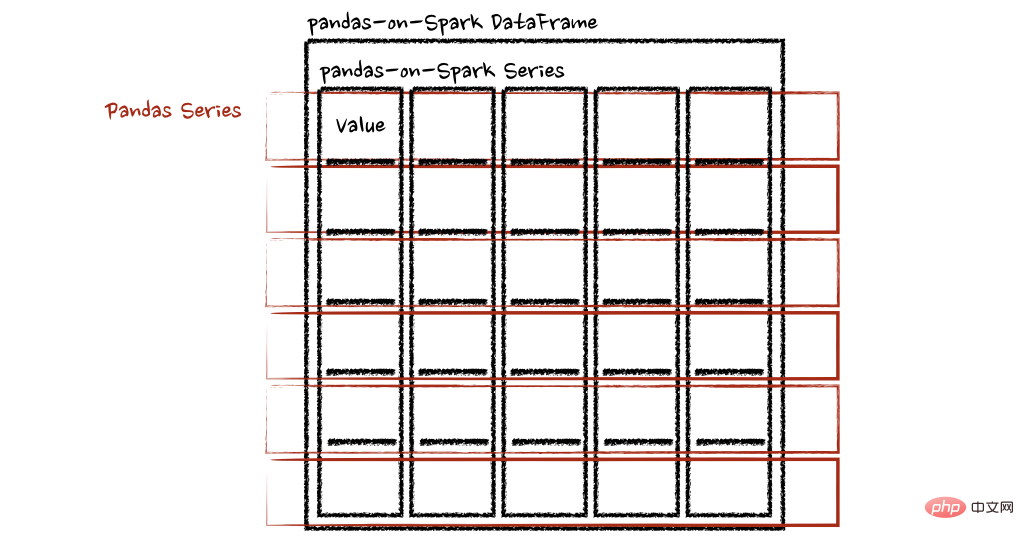

psdf.apply(pandas_plus)在这种情况下,每个函数采用一个 pandas Series,Spark 上的 pandas API 以分布式方式计算函数,如下所示。

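With the 'columns' axis, the function receives each row as a pandas Series.

psdf = ps.DataFrame({'a': [1, 2, 3], 'b': [4, 5, 6]})

def pandas_plus(pser):
    return sum(pser)  # any length is allowed

psdf.apply(pandas_plus, axis='columns')

The example above computes the sum of each row as a pandas Series.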

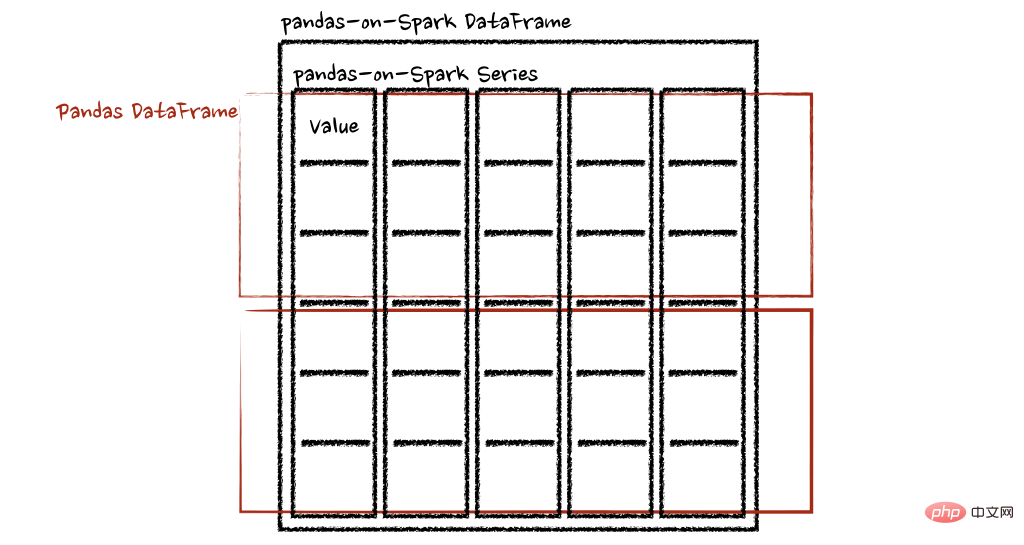

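pandas_on_spark.transform_batch and pandas_on_spark.apply_batch

The batch suffix refers to each chunk of a pandas-on-Spark DataFrame or Series. These APIs slice the pandas-on-Spark DataFrame or Series and then apply the given function with a pandas DataFrame or Series as input and output. See the following examples: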

psdf = ps.DataFrame({'a': [1, 2, 3], 'b': [4, 5, 6]})

def pandas_plus(pdf):
    return pdf + 1  # should always return the same length as the input

psdf.pandas_on_spark.transform_batch(pandas_plus)

psdf = ps.DataFrame({'a': [1, 2, 3], 'b': [4, 5, 6]})

def pandas_plus(pdf):
    return pdf[pdf.a > 1]  # any length is allowed

psdf.pandas_on_spark.apply_batch(pandas_plus)

The functions in both examples take a pandas DataFrame as a chunk of the pandas-on-Spark DataFrame and return a pandas DataFrame. The pandas API on Spark then combines the pandas DataFrames into a pandas-on-Spark DataFrame.
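To make the batch idea concrete, the following sketch imitates it with plain pandas: it slices a DataFrame into chunks, applies a function to each chunk, and concatenates the results. This is only a conceptual emulation, assuming chunks are processed independently. The helper apply_in_batches and its batch_size parameter are invented for this example; in the real API the chunking is decided by Spark.

```python
import pandas as pd


def apply_in_batches(df, func, batch_size=2):
    """Conceptual stand-in for apply_batch (hypothetical helper, plain pandas)."""
    chunks = [df.iloc[i:i + batch_size] for i in range(0, len(df), batch_size)]
    # Each chunk is an ordinary pandas DataFrame, as in the examples above.
    return pd.concat([func(chunk) for chunk in chunks])


def keep_large_a(pdf):
    return pdf[pdf.a > 1]  # output may be shorter than the input chunk


df = pd.DataFrame({"a": [1, 2, 3], "b": [4, 5, 6]})
result = apply_in_batches(df, keep_large_a)
print(result)
```

Because the function sees one chunk at a time, a computation that needs the whole column, such as a global mean, would produce per-chunk results instead of a single global one.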

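Things to watch out for when using the pandas API on Spark

Avoid shuffling

Some operations, such as sort_values, are harder to perform in a parallel or distributed environment than in memory on a single machine, because they need to send data to other nodes and exchange data across multiple nodes over the network.

Avoid computing on a single partition

Another common case is computation on a single partition. Currently, some APIs such as DataFrame.rank use PySpark's Window without specifying a partition specification. This moves all the data into a single partition on a single machine and can cause serious performance degradation. Such APIs should be avoided for very large datasets.

Do not use duplicate column names

Duplicate column names are not allowed because Spark SQL generally does not allow them. The pandas API on Spark inherits this behavior. For example: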

import pyspark.pandas as ps

psdf = ps.DataFrame({'a': [1, 2], 'b': [3, 4]})
psdf.columns = ["a", "a"]

Reference 'a' is ambiguous, could be: a, a.;

In addition, using column names that differ only by case is strongly discouraged. The pandas API on Spark does not allow them by default.

import pyspark.pandas as ps

psdf = ps.DataFrame({'a': [1, 2], 'A': [3, 4]})

Reference 'a' is ambiguous, could be: a, a.;

You can enable them by turning on spark.sql.caseSensitive in the Spark configuration, but you do so at your own risk.

from pyspark.sql import SparkSession

builder = SparkSession.builder.appName("pandas-on-spark")
builder = builder.config("spark.sql.caseSensitive", "true")
builder.getOrCreate()

import pyspark.pandas as ps

psdf = ps.DataFrame({'a': [1, 2], 'A': [3, 4]})
psdf

   a  A
0  1  3
1  2  4
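A simple pre-flight check can catch both column-name problems before a table reaches Spark. The sketch below is plain Python. The function name find_ambiguous_columns is hypothetical, and it assumes that two names collide when they are equal after lowercasing, which mirrors the default case-insensitive behaviour described above.

```python
from collections import defaultdict


def find_ambiguous_columns(columns):
    """Group column names that collide when compared case-insensitively."""
    groups = defaultdict(list)
    for name in columns:
        groups[str(name).lower()].append(name)
    # Keep only the groups with more than one member (duplicates or case clashes).
    return {key: names for key, names in groups.items() if len(names) > 1}


print(find_ambiguous_columns(["a", "a"]))        # exact duplicate
print(find_ambiguous_columns(["a", "A", "b"]))   # names differ only by case
print(find_ambiguous_columns(["a", "b"]))        # no collision
```

An empty result means the column names are safe under the default settings.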

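Using the default index

A common issue for pandas-on-Spark users is poor performance caused by the default index. The pandas API on Spark attaches a default index when the index is unknown, for example when a Spark DataFrame is converted directly to a pandas-on-Spark DataFrame.

If you plan to handle large data in production, make the index distributed by configuring the default index as distributed or distributed-sequence.

For more details on configuring the default index, see Default Index Type [3].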
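As a hedged illustration of that setting, the fragment below uses the pandas-on-Spark option API to switch the default index type. It assumes a working PySpark installation (3.2 or later) and is a configuration sketch rather than a tuned recommendation. Which of the two distributed types fits best depends on the workload.

```python
import pyspark.pandas as ps

# Use a distributed default index for every DataFrame created from now on.
ps.set_option("compute.default_index_type", "distributed")

# Or limit the setting to a block of code with a context manager.
with ps.option_context("compute.default_index_type", "distributed-sequence"):
    psdf = ps.range(5)  # the default index attached here uses the scoped setting
```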

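Use the pandas API on Spark directly

Although the pandas API on Spark has most of the pandas-equivalent APIs, some are not yet implemented or are explicitly unsupported. It is therefore best to use the pandas API on Spark directly whenever possible.

For example, the pandas API on Spark does not implement __iter__(), which prevents users from collecting all the data from the whole cluster onto the client (driver) side. Unfortunately, many external APIs, such as the Python built-in functions min, max, and sum, require the given argument to be iterable. With pandas, they work out of the box, as shown below:

>>> import pandas as pd
>>> max(pd.Series([1, 2, 3]))
3
>>> min(pd.Series([1, 2, 3]))
1
>>> sum(pd.Series([1, 2, 3]))
6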

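A pandas dataset lives on a single machine, so it is naturally iterable locally within that machine. A pandas-on-Spark dataset, however, lives across multiple machines and is computed in a distributed manner. It is hard to iterate over locally, and users may well collect the entire dataset onto the client side without realizing it. It is therefore better to stick to the pandas-on-Spark APIs. The examples above can be converted as follows:

>>> import pyspark.pandas as ps
>>> ps.Series([1, 2, 3]).max()
3
>>> ps.Series([1, 2, 3]).min()
1
>>> ps.Series([1, 2, 3]).sum()
6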

Another common pattern among pandas users is to rely on list comprehensions or generator expressions. However, these also assume that the dataset is locally iterable under the hood, so they work seamlessly in pandas, as shown below:

import pandas as pd

data = []
countries = ['London', 'New York', 'Helsinki']
pser = pd.Series([20., 21., 12.], index=countries)
for temperature in pser:
    assert temperature > 0
    if temperature > 1000:
        temperature = None
    data.append(temperature ** 2)

pd.Series(data, index=countries)

London      400.0
New York    441.0
Helsinki    144.0
dtype: float64

However, this does not work with the pandas API on Spark, for the same reason as above. The example can instead be rewritten to use the pandas-on-Spark API directly, as follows:

import pyspark.pandas as ps
import numpy as np

countries = ['London', 'New York', 'Helsinki']
psser = ps.Series([20., 21., 12.], index=countries)

def square(temperature) -> np.float64:
    assert temperature > 0
    if temperature > 1000:
        temperature = None
    return temperature ** 2

psser.apply(square)

London      400.0
New York    441.0
Helsinki    144.0
dtype: float64

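Reduce operations on different DataFrames

By default, the pandas API on Spark disallows operations on different DataFrames (or Series) to prevent expensive operations. Such operations should be avoided whenever possible.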

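Final thoughts

At this point, we are able to use pandas on Spark. This brings a large speedup for pandas code, a gentler learning curve when migrating to Spark, and the merging of single-machine and distributed computing in one code base.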
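The single code base idea can be sketched with a function that only uses calls available in both libraries. The example below runs it with plain pandas so that it works without Spark. The function name and the sample numbers are made up, and passing a pandas-on-Spark DataFrame instead is shown only as a comment because it assumes a working Spark installation.

```python
import pandas as pd


def mean_petal_length(df):
    """Average petal length per flower type, written once for either backend."""
    return df.groupby("flower_type")["petal_length"].mean()


small = pd.DataFrame({
    "flower_type": ["setosa", "setosa", "virginica"],
    "petal_length": [1.0, 2.0, 6.0],   # made-up values
})
result = mean_petal_length(small)
print(result)

# With Spark available, the same function is meant to accept distributed data:
#   import pyspark.pandas as ps
#   mean_petal_length(ps.from_pandas(small))
```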

References

[1] PySpark Guide: https://spark.apache.org/docs/latest/api/python/user_guide/pandas_on_spark/pandas_pyspark.html

[2] PySpark Guide: https://spark.apache.org/docs/latest/api/python/user_guide/pandas_on_spark/types.html

[3] Default Index Type: https://spark.apache.org/docs/latest/api/python/user_guide/pandas_on_spark/options.html#default-index-type
