Why are some major automakers rethinking their autonomous driving investments?


Until a few months ago, autonomous driving was one of the hottest investment themes. Recently, however, several major automakers, including Ford, have been reconsidering their investments in self-driving businesses, and other companies, such as Alphabet, are facing financial pressure to cut spending on them.

Uber was among the first to divest its self-driving business

Uber was one of the first companies to give up its self-driving business. In 2020, it sold the unit to autonomous driving start-up Aurora Innovation and, in return, received a minority stake in Aurora (reportedly about 26%).

Aurora Innovation later went public through a SPAC reverse merger, and its shares now trade below $2. According to reports, Uber has incurred huge losses on its investments in companies such as Aurora, Grab and Zomato.

Aurora Innovation is a pure-play autonomous driving developer, and the plunge in its stock price reflects investor pessimism about the industry. Beyond that, some companies are also reconsidering their investments in autonomous driving.

Ford exits the self-driving business

Last month, Argo AI, a self-driving startup backed by Ford and Volkswagen, shut down, and Ford wrote off its investment in the company. The shutdown signals that major automakers, too, are pulling back from autonomous driving.

Ford said Argo AI had been unable to attract new investors. Ford also announced that it will no longer focus on developing L4 autonomous driving systems. The company's CEO said that although investors have put a total of about $100 billion into L4 autonomous driving technology, no company has yet established a profitable business model.

On an earnings call, Doug Field, Ford’s head of advanced product development and technology, said: "Large-scale commercialization of L4 autonomous driving will take far longer than we previously anticipated. L2 and L3 driver assistance technology has a larger addressable customer base, which will allow it to scale more quickly and become profitable."

Ford Chief Financial Officer John Lawler added that the company does not see the need to develop self-driving technology in-house.

Alphabet’s autonomous driving unit faces pressure over losses

Alphabet's autonomous driving subsidiary, Waymo, is facing scrutiny from shareholders over its rising losses. TCI Fund Management, which holds about $6 billion worth of Alphabet stock, sent a letter to Alphabet's management calling for Waymo's losses to be reduced.

TCI said in the letter, "Unfortunately, people have lost enthusiasm for autonomous driving, and competitors have also withdrawn from the market." The letter also pointed to the fact that Volkswagen and Ford have withdrawn from this business.

Similarly, Nuro, a self-driving startup backed by Alphabet, Tiger Global and SoftBank, recently announced that it would lay off one-fifth of its workforce to conserve cash while continuing to invest for the long term.

GM says it will not withdraw from the autonomous driving market

Industry insiders also point out that the outlook for the autonomous driving market is not entirely bleak, and some companies are continuing to invest. General Motors, for example, has said it will not pull back from its investment in the business. GM owns Cruise, an autonomous driving developer that received investment from Microsoft last year.

GM CEO Mary Barra said: "We are the only self-driving car company ready to launch and bring revenue in three markets."

Barra expressed optimism about the development of GM's self-driving business. She said: "When we consider the strength of the business and the business we have established, we feel we can reinvest in the autonomous vehicle business because we see a tremendous opportunity."

General Motors also raised its cash flow guidance for 2022 and expects its electric vehicle business to become profitable in 2025.

Tesla sees software as the main driver of its business

Tesla sees autonomous driving as a key driver of its business growth. The company has raised the price of its Full Self-Driving (FSD) package twice this year, most recently to $15,000.

Industry sources said that, given the deteriorating macroeconomic environment, many autonomous driving companies are finding it harder to raise funds. Because the business is still in its infancy, many of them are likely to keep posting losses in the coming years. With the Federal Reserve aggressively raising interest rates, few investors want to fund money-losing businesses such as self-driving ventures.

On the one hand, autonomous driving offers an uncertain future and demands huge investment; on the other, the economic environment is sluggish. What do you think the future direction of autonomous driving will be?


Why is Gaussian Splatting so popular in autonomous driving that NeRF is starting to be abandoned?

Jan 17, 2024 pm 02:57 PM

Why is Gaussian Splatting so popular in autonomous driving that NeRF is starting to be abandoned?

Jan 17, 2024 pm 02:57 PM

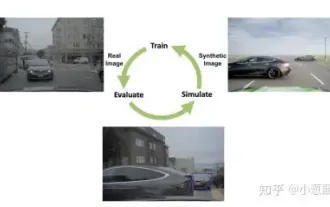

Written above & the author’s personal understanding Three-dimensional Gaussiansplatting (3DGS) is a transformative technology that has emerged in the fields of explicit radiation fields and computer graphics in recent years. This innovative method is characterized by the use of millions of 3D Gaussians, which is very different from the neural radiation field (NeRF) method, which mainly uses an implicit coordinate-based model to map spatial coordinates to pixel values. With its explicit scene representation and differentiable rendering algorithms, 3DGS not only guarantees real-time rendering capabilities, but also introduces an unprecedented level of control and scene editing. This positions 3DGS as a potential game-changer for next-generation 3D reconstruction and representation. To this end, we provide a systematic overview of the latest developments and concerns in the field of 3DGS for the first time.

How to solve the long tail problem in autonomous driving scenarios?

Jun 02, 2024 pm 02:44 PM

How to solve the long tail problem in autonomous driving scenarios?

Jun 02, 2024 pm 02:44 PM

Yesterday during the interview, I was asked whether I had done any long-tail related questions, so I thought I would give a brief summary. The long-tail problem of autonomous driving refers to edge cases in autonomous vehicles, that is, possible scenarios with a low probability of occurrence. The perceived long-tail problem is one of the main reasons currently limiting the operational design domain of single-vehicle intelligent autonomous vehicles. The underlying architecture and most technical issues of autonomous driving have been solved, and the remaining 5% of long-tail problems have gradually become the key to restricting the development of autonomous driving. These problems include a variety of fragmented scenarios, extreme situations, and unpredictable human behavior. The "long tail" of edge scenarios in autonomous driving refers to edge cases in autonomous vehicles (AVs). Edge cases are possible scenarios with a low probability of occurrence. these rare events

Choose camera or lidar? A recent review on achieving robust 3D object detection

Jan 26, 2024 am 11:18 AM

Choose camera or lidar? A recent review on achieving robust 3D object detection

Jan 26, 2024 am 11:18 AM

0.Written in front&& Personal understanding that autonomous driving systems rely on advanced perception, decision-making and control technologies, by using various sensors (such as cameras, lidar, radar, etc.) to perceive the surrounding environment, and using algorithms and models for real-time analysis and decision-making. This enables vehicles to recognize road signs, detect and track other vehicles, predict pedestrian behavior, etc., thereby safely operating and adapting to complex traffic environments. This technology is currently attracting widespread attention and is considered an important development area in the future of transportation. one. But what makes autonomous driving difficult is figuring out how to make the car understand what's going on around it. This requires that the three-dimensional object detection algorithm in the autonomous driving system can accurately perceive and describe objects in the surrounding environment, including their locations,

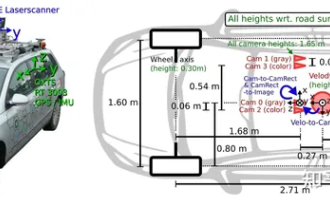

Have you really mastered coordinate system conversion? Multi-sensor issues that are inseparable from autonomous driving

Oct 12, 2023 am 11:21 AM

Have you really mastered coordinate system conversion? Multi-sensor issues that are inseparable from autonomous driving

Oct 12, 2023 am 11:21 AM

The first pilot and key article mainly introduces several commonly used coordinate systems in autonomous driving technology, and how to complete the correlation and conversion between them, and finally build a unified environment model. The focus here is to understand the conversion from vehicle to camera rigid body (external parameters), camera to image conversion (internal parameters), and image to pixel unit conversion. The conversion from 3D to 2D will have corresponding distortion, translation, etc. Key points: The vehicle coordinate system and the camera body coordinate system need to be rewritten: the plane coordinate system and the pixel coordinate system. Difficulty: image distortion must be considered. Both de-distortion and distortion addition are compensated on the image plane. 2. Introduction There are four vision systems in total. Coordinate system: pixel plane coordinate system (u, v), image coordinate system (x, y), camera coordinate system () and world coordinate system (). There is a relationship between each coordinate system,

This article is enough for you to read about autonomous driving and trajectory prediction!

Feb 28, 2024 pm 07:20 PM

This article is enough for you to read about autonomous driving and trajectory prediction!

Feb 28, 2024 pm 07:20 PM

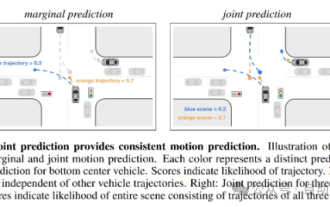

Trajectory prediction plays an important role in autonomous driving. Autonomous driving trajectory prediction refers to predicting the future driving trajectory of the vehicle by analyzing various data during the vehicle's driving process. As the core module of autonomous driving, the quality of trajectory prediction is crucial to downstream planning control. The trajectory prediction task has a rich technology stack and requires familiarity with autonomous driving dynamic/static perception, high-precision maps, lane lines, neural network architecture (CNN&GNN&Transformer) skills, etc. It is very difficult to get started! Many fans hope to get started with trajectory prediction as soon as possible and avoid pitfalls. Today I will take stock of some common problems and introductory learning methods for trajectory prediction! Introductory related knowledge 1. Are the preview papers in order? A: Look at the survey first, p

SIMPL: A simple and efficient multi-agent motion prediction benchmark for autonomous driving

Feb 20, 2024 am 11:48 AM

SIMPL: A simple and efficient multi-agent motion prediction benchmark for autonomous driving

Feb 20, 2024 am 11:48 AM

Original title: SIMPL: ASimpleandEfficientMulti-agentMotionPredictionBaselineforAutonomousDriving Paper link: https://arxiv.org/pdf/2402.02519.pdf Code link: https://github.com/HKUST-Aerial-Robotics/SIMPL Author unit: Hong Kong University of Science and Technology DJI Paper idea: This paper proposes a simple and efficient motion prediction baseline (SIMPL) for autonomous vehicles. Compared with traditional agent-cent

Let's talk about end-to-end and next-generation autonomous driving systems, as well as some misunderstandings about end-to-end autonomous driving?

Apr 15, 2024 pm 04:13 PM

Let's talk about end-to-end and next-generation autonomous driving systems, as well as some misunderstandings about end-to-end autonomous driving?

Apr 15, 2024 pm 04:13 PM

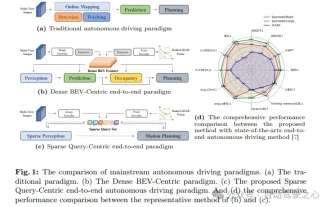

In the past month, due to some well-known reasons, I have had very intensive exchanges with various teachers and classmates in the industry. An inevitable topic in the exchange is naturally end-to-end and the popular Tesla FSDV12. I would like to take this opportunity to sort out some of my thoughts and opinions at this moment for your reference and discussion. How to define an end-to-end autonomous driving system, and what problems should be expected to be solved end-to-end? According to the most traditional definition, an end-to-end system refers to a system that inputs raw information from sensors and directly outputs variables of concern to the task. For example, in image recognition, CNN can be called end-to-end compared to the traditional feature extractor + classifier method. In autonomous driving tasks, input data from various sensors (camera/LiDAR

nuScenes' latest SOTA | SparseAD: Sparse query helps efficient end-to-end autonomous driving!

Apr 17, 2024 pm 06:22 PM

nuScenes' latest SOTA | SparseAD: Sparse query helps efficient end-to-end autonomous driving!

Apr 17, 2024 pm 06:22 PM

Written in front & starting point The end-to-end paradigm uses a unified framework to achieve multi-tasking in autonomous driving systems. Despite the simplicity and clarity of this paradigm, the performance of end-to-end autonomous driving methods on subtasks still lags far behind single-task methods. At the same time, the dense bird's-eye view (BEV) features widely used in previous end-to-end methods make it difficult to scale to more modalities or tasks. A sparse search-centric end-to-end autonomous driving paradigm (SparseAD) is proposed here, in which sparse search fully represents the entire driving scenario, including space, time, and tasks, without any dense BEV representation. Specifically, a unified sparse architecture is designed for task awareness including detection, tracking, and online mapping. In addition, heavy