Technology peripherals

Technology peripherals

AI

AI

Byte proposes an asymmetric image resampling model, with anti-compression performance leading SOTA on JPEG and WebP

Byte proposes an asymmetric image resampling model, with anti-compression performance leading SOTA on JPEG and WebP

Byte proposes an asymmetric image resampling model, with anti-compression performance leading SOTA on JPEG and WebP

The Image Rescaling (LR) task jointly optimizes image downsampling and upsampling operations. By reducing and restoring image resolution, it can be used to save storage space or transmission bandwidth. In practical applications, such as multi-level distribution of atlas services, low-resolution images obtained by downsampling are often subjected to lossy compression, and lossy compression often leads to a significant decrease in the performance of existing algorithms.

Recently, ByteDance-Volcano Engine Multimedia Laboratory tried for the first time to optimize image resampling performance under lossy compression and designed an asymmetric reversible Resampling framework , based on two observations under this framework, further proposes the anti-compression image resampling model SAIN. This study decouples a set of reversible network modules into two parts: resampling and compression simulation, uses a mixed Gaussian distribution to model the joint information loss caused by resolution degradation and compression distortion, and combines it with a differentiable JPEG operator for end-to-end training , which greatly improves the robustness to common compression algorithms.

Currently for image resampling research, the SOTA method is based on the Invertible Network to construct a bijective function (bijective function), and its positive operation converts the high resolution (HR) The image is converted into a low-resolution (LR) image and a series of hidden variables obeying the standard normal distribution. The inverse operation randomly samples the hidden variables and combines the LR image for upsampling restoration.

Due to the characteristics of the reversible network, the downsampling and upsampling operators maintain a high degree of symmetry, which makes it difficult for the compressed LR image to pass the originally learned upsampling. operator to restore. In order to enhance the robustness to lossy compression, this study proposes an anti-compression image resampling model SAIN (Self-Asymmetric I based on an asymmetric reversible framework nvertible Network).

The core innovations of the SAIN model are as follows:

- Proposes an asymmetric reversible image resampling framework. It solves the problem of performance degradation due to strict symmetry in previous methods; proposes an enhanced invertible module (E-InvBlock), which enhances model fitting capabilities while sharing a large number of parameters and operations, while modeling before and after compression. The two sets of LR images enable the model to perform compression recovery and upsampling through inverse operations.

- Construct a learnable mixed Gaussian distribution, model the joint information loss caused by resolution reduction and lossy compression, and directly optimize the distribution parameters through re-parameterization techniques, which is more consistent with the hidden variables actual distribution.

The SAIN model has been verified for performance under JPEG and WebP compression, and its performance on multiple public data sets is significantly ahead of the SOTA model. Related research has been selected for the AAAI 2023 Oral.

- ##Paper address: https://arxiv.org/abs/2303.02353

- Code link: https://github.com/yang-jin-hai/SAIN

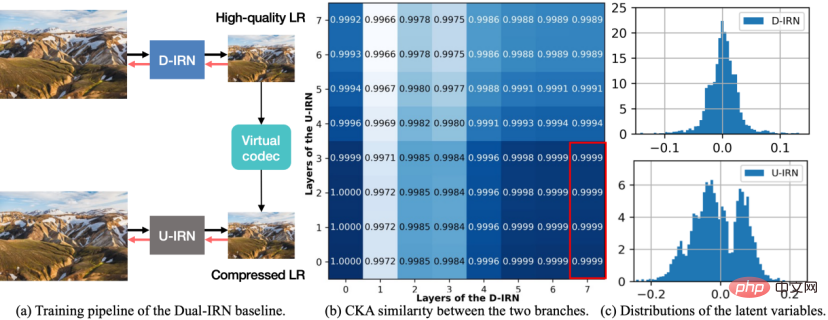

Figure 1 Dual-IRN model diagram.

In order to improve the anti-compression performance, this research first designed an asymmetric reversible image resampling framework, proposed the baseline scheme Dual-IRN model, and analyzed in depth After the shortcomings of this scheme, the SAIN model was proposed for further optimization. As shown in the figure above, the Dual-IRN model contains two branches, where D-IRN and U-IRN are two sets of reversible networks that learn the bijection between the HR image and the pre-compression/post-compression LR image respectively.

In the training phase, the Dual-IRN model passes the gradient between the two branches through the differentiable JPEG operator. In the testing phase, the model uses D-IRN to downsample to obtain high-quality LR images. After real compression in the real environment, the model then uses U-IRN with compression-aware to complete compression recovery and upsampling.

Such an asymmetric framework enables the upsampling and downsampling operators to avoid strict reversible relationships, fundamentally solves the problem caused by the compression algorithm destroying the symmetry of the upsampling and downsampling processes. The problem is that compared with SOTA's symmetrical solution, the anti-compression performance is greatly improved.

Subsequently, the researchers conducted further analysis on the Dual-IRN model and observed the following two phenomena:

- First , measure the CKA similarity of the middle layer features of the two branches of D-IRN and U-IRN. As shown in (b) above, the output features of the last layer of D-IRN (i.e., the high-quality LR images generated by the network) are highly similar to the output features of the shallow layers of U-IRN, indicating the shallow behavior of U-IRN is closer to the simulation of sampling loss, while the deep behavior is closer to the simulation of compression loss.

- Second, count the true distribution of the latent variables in the middle layer of the two branches D-IRN and U-IRN. As shown in (c) (d) above, the latent variables of D-IRN without compressed sensing satisfy the unimodal normal distribution assumption as a whole, while the latent variables of U-IRN with compressed sensing show a multi-modal shape. , indicating that the form of information loss caused by lossy compression is more complex.

Based on the above analysis, the researchers optimized the model from multiple aspects. The resulting SAIN model not only reduced the number of network parameters by nearly half, but also achieved further improvements. Performance improvements.

SAIN model details

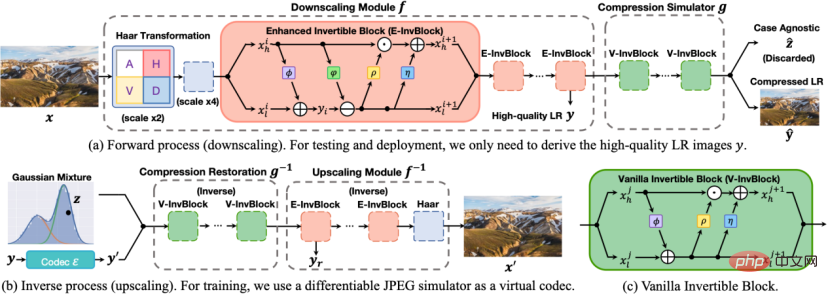

##Figure 2 SAIN model diagram.

The architecture of the SAIN model is shown in the figure above, and the following four main improvements have been made:

1. Overall framework. Based on the similarity of the middle layer features, a set of reversible network modules is decoupled into two parts: resampling and compression simulation, forming a self-asymmetric architecture to avoid using two complete sets of reversible networks. In the testing phase, use forward transformation

to obtain high-quality LR images, and first use inverse transformation

Perform compression recovery, and then use inverse transformation

for upsampling.

#2. Network structure. E-InvBlock is proposed based on the assumption that compression loss can be recovered with the help of high-frequency information. An additive transformation is added to the module, so that two sets of LR images before and after compression can be efficiently modeled while sharing a large number of operations.

3. Information loss modeling. Based on the true distribution of latent variables, it is proposed to use the learnable mixed Gaussian distribution to model the joint information loss caused by downsampling and lossy compression, and optimize the distribution parameters end-to-end through re-parameterization techniques.

4. Objective function. Multiple loss functions are designed to constrain the reversibility of the network and improve reconstruction accuracy. At the same time, real compression operations are introduced into the loss function to enhance the robustness to real compression schemes.

Experiment and Effect EvaluationThe evaluation data set is the DIV2K verification set and the four standard test sets Set5, Set14, BSD100 and Urban100.

The quantitative evaluation indicators are:

- PSNR: Peak Signal-to-Noise Ratio, peak signal-to-noise ratio, reflecting the mean square error of the reconstructed image and the original image, the higher the better;

- SSIM: Structural Similarity Image Measurement, measures the structural similarity between the reconstructed image and the original image, the higher the better.

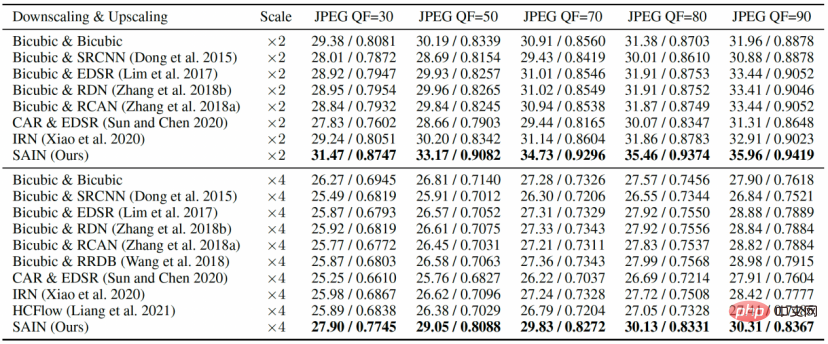

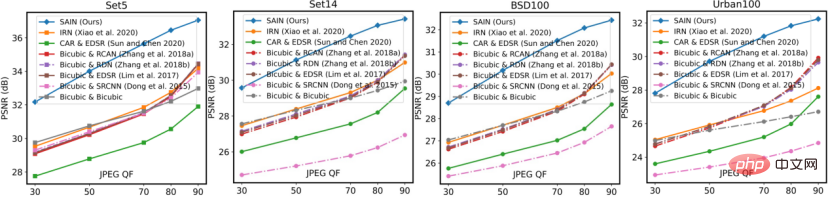

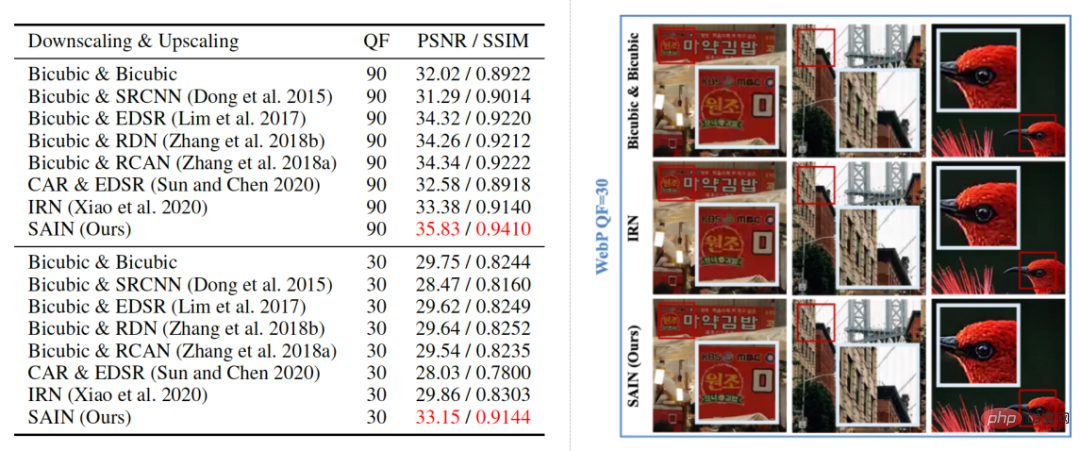

In the comparative experiments in Table 1 and Figure 3, SAIN’s PSNR and SSIM scores on all data sets are significantly ahead of SOTA’s image resampling model. At relatively low QF, existing methods generally experience severe performance degradation, while the SAIN model still maintains optimal performance.

Table 1 Comparative experiment, comparing different JPEG compression qualities (QF) on the DIV2K data set Reconstruction quality (PSNR/SSIM).

Figure 3 Comparative experiment, comparing different JPEG QF on four standard test sets reconstruction quality (PSNR).

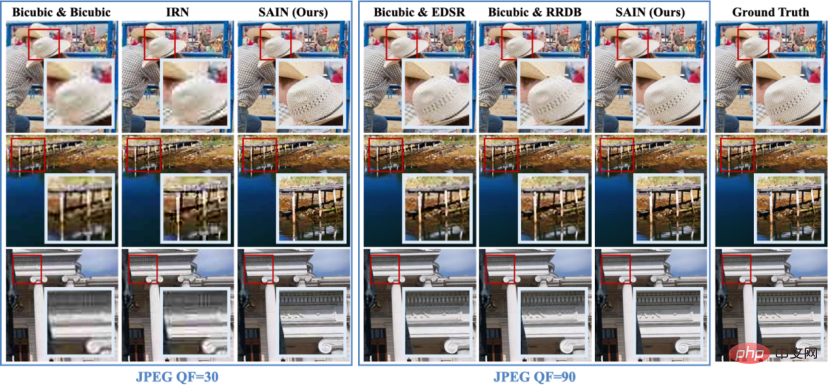

In the visualization results in Figure 4, it can be clearly seen that the HR image restored by SAIN is clearer and more accurate.

Figure 4 Comparison of visualization results of different methods under JPEG compression (×4 magnification).

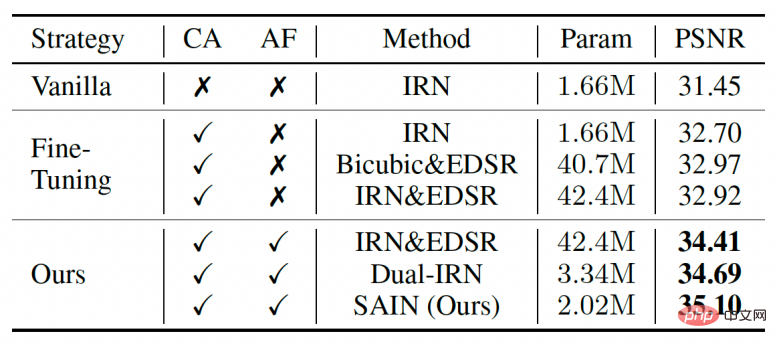

In the ablation experiments in Table 2, the researchers also compared several other candidates for training combined with real compression. These candidates are more resistant to compression than the fully symmetric existing model (IRN), but are still inferior to the SAIN model in terms of number of parameters and accuracy.

Table 2 Ablation experiments for the overall framework and training strategy.

In the visualization results in Figure 5, the researchers compared the reconstruction results of different image resampling models under WebP compression distortion. It can be found that the SAIN model also shows the highest reconstruction score under the WebP compression scheme and can clearly and accurately restore image details, proving SAIN's compatibility with different compression schemes.

Figure 5 Qualitative and quantitative comparison of different methods under WebP compression (×2 magnification).

In addition, this study also conducted ablation experiments on the mixed Gaussian distribution, E-InvBlock and loss function, proving that these improvements have a positive impact on the results. contribute.

Summary and Outlook

Volcano Engine Multimedia Laboratory proposed a model based on an asymmetric reversible framework for anti-compression image resampling: SAIN. The model consists of two parts: resampling and compression simulation. It uses a mixed Gaussian distribution to model the joint information loss caused by resolution reduction and compression distortion. It is combined with a differentiable JPEG operator for end-to-end training, and E-InvBlock is proposed to enhance the model. The fitting ability greatly improves the robustness to common compression algorithms.

The Volcano Engine Multimedia Laboratory is a research team under ByteDance. It is committed to exploring cutting-edge technologies in the multimedia field and participating in international standardization work. Its many innovative algorithms and software and hardware solutions have been widely used in Douyin, Douyin, etc. Multimedia business for Xigua Video and other products, and provides technical services to enterprise-level customers of Volcano Engine. Since the establishment of the laboratory, many papers have been selected into top international conferences and flagship journals, and have won several international technical competition championships, industry innovation awards and best paper awards.

In the future, the research team will continue to optimize the performance of the image resampling model under lossy compression, and further explore more complex application scenarios such as anti-compression video resampling and arbitrary magnification resampling. .

The above is the detailed content of Byte proposes an asymmetric image resampling model, with anti-compression performance leading SOTA on JPEG and WebP. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1665

1665

14

14

1424

1424

52

52

1322

1322

25

25

1270

1270

29

29

1249

1249

24

24

No OpenAI data required, join the list of large code models! UIUC releases StarCoder-15B-Instruct

Jun 13, 2024 pm 01:59 PM

No OpenAI data required, join the list of large code models! UIUC releases StarCoder-15B-Instruct

Jun 13, 2024 pm 01:59 PM

At the forefront of software technology, UIUC Zhang Lingming's group, together with researchers from the BigCode organization, recently announced the StarCoder2-15B-Instruct large code model. This innovative achievement achieved a significant breakthrough in code generation tasks, successfully surpassing CodeLlama-70B-Instruct and reaching the top of the code generation performance list. The unique feature of StarCoder2-15B-Instruct is its pure self-alignment strategy. The entire training process is open, transparent, and completely autonomous and controllable. The model generates thousands of instructions via StarCoder2-15B in response to fine-tuning the StarCoder-15B base model without relying on expensive manual annotation.

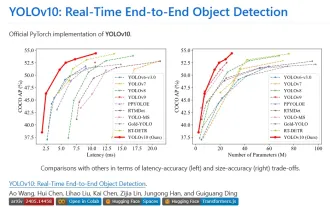

Yolov10: Detailed explanation, deployment and application all in one place!

Jun 07, 2024 pm 12:05 PM

Yolov10: Detailed explanation, deployment and application all in one place!

Jun 07, 2024 pm 12:05 PM

1. Introduction Over the past few years, YOLOs have become the dominant paradigm in the field of real-time object detection due to its effective balance between computational cost and detection performance. Researchers have explored YOLO's architectural design, optimization goals, data expansion strategies, etc., and have made significant progress. At the same time, relying on non-maximum suppression (NMS) for post-processing hinders end-to-end deployment of YOLO and adversely affects inference latency. In YOLOs, the design of various components lacks comprehensive and thorough inspection, resulting in significant computational redundancy and limiting the capabilities of the model. It offers suboptimal efficiency, and relatively large potential for performance improvement. In this work, the goal is to further improve the performance efficiency boundary of YOLO from both post-processing and model architecture. to this end

How to evaluate the cost-effectiveness of commercial support for Java frameworks

Jun 05, 2024 pm 05:25 PM

How to evaluate the cost-effectiveness of commercial support for Java frameworks

Jun 05, 2024 pm 05:25 PM

Evaluating the cost/performance of commercial support for a Java framework involves the following steps: Determine the required level of assurance and service level agreement (SLA) guarantees. The experience and expertise of the research support team. Consider additional services such as upgrades, troubleshooting, and performance optimization. Weigh business support costs against risk mitigation and increased efficiency.

Tsinghua University took over and YOLOv10 came out: the performance was greatly improved and it was on the GitHub hot list

Jun 06, 2024 pm 12:20 PM

Tsinghua University took over and YOLOv10 came out: the performance was greatly improved and it was on the GitHub hot list

Jun 06, 2024 pm 12:20 PM

The benchmark YOLO series of target detection systems has once again received a major upgrade. Since the release of YOLOv9 in February this year, the baton of the YOLO (YouOnlyLookOnce) series has been passed to the hands of researchers at Tsinghua University. Last weekend, the news of the launch of YOLOv10 attracted the attention of the AI community. It is considered a breakthrough framework in the field of computer vision and is known for its real-time end-to-end object detection capabilities, continuing the legacy of the YOLO series by providing a powerful solution that combines efficiency and accuracy. Paper address: https://arxiv.org/pdf/2405.14458 Project address: https://github.com/THU-MIG/yo

Google Gemini 1.5 technical report: Easily prove Mathematical Olympiad questions, the Flash version is 5 times faster than GPT-4 Turbo

Jun 13, 2024 pm 01:52 PM

Google Gemini 1.5 technical report: Easily prove Mathematical Olympiad questions, the Flash version is 5 times faster than GPT-4 Turbo

Jun 13, 2024 pm 01:52 PM

In February this year, Google launched the multi-modal large model Gemini 1.5, which greatly improved performance and speed through engineering and infrastructure optimization, MoE architecture and other strategies. With longer context, stronger reasoning capabilities, and better handling of cross-modal content. This Friday, Google DeepMind officially released the technical report of Gemini 1.5, which covers the Flash version and other recent upgrades. The document is 153 pages long. Technical report link: https://storage.googleapis.com/deepmind-media/gemini/gemini_v1_5_report.pdf In this report, Google introduces Gemini1

How does the learning curve of PHP frameworks compare to other language frameworks?

Jun 06, 2024 pm 12:41 PM

How does the learning curve of PHP frameworks compare to other language frameworks?

Jun 06, 2024 pm 12:41 PM

The learning curve of a PHP framework depends on language proficiency, framework complexity, documentation quality, and community support. The learning curve of PHP frameworks is higher when compared to Python frameworks and lower when compared to Ruby frameworks. Compared to Java frameworks, PHP frameworks have a moderate learning curve but a shorter time to get started.

Do different data sets have different scaling laws? And you can predict it with a compression algorithm

Jun 07, 2024 pm 05:51 PM

Do different data sets have different scaling laws? And you can predict it with a compression algorithm

Jun 07, 2024 pm 05:51 PM

Generally speaking, the more calculations it takes to train a neural network, the better its performance. When scaling up a calculation, a decision must be made: increase the number of model parameters or increase the size of the data set—two factors that must be weighed within a fixed computational budget. The advantage of increasing the number of model parameters is that it can improve the complexity and expression ability of the model, thereby better fitting the training data. However, too many parameters can lead to overfitting, making the model perform poorly on unseen data. On the other hand, expanding the data set size can improve the generalization ability of the model and reduce overfitting problems. Let us tell you: As long as you allocate parameters and data appropriately, you can maximize performance within a fixed computing budget. Many previous studies have explored Scalingl of neural language models.

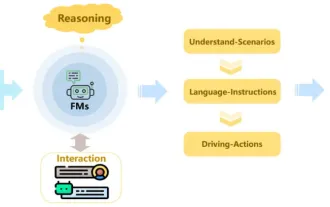

Review! Comprehensively summarize the important role of basic models in promoting autonomous driving

Jun 11, 2024 pm 05:29 PM

Review! Comprehensively summarize the important role of basic models in promoting autonomous driving

Jun 11, 2024 pm 05:29 PM

Written above & the author’s personal understanding: Recently, with the development and breakthroughs of deep learning technology, large-scale foundation models (Foundation Models) have achieved significant results in the fields of natural language processing and computer vision. The application of basic models in autonomous driving also has great development prospects, which can improve the understanding and reasoning of scenarios. Through pre-training on rich language and visual data, the basic model can understand and interpret various elements in autonomous driving scenarios and perform reasoning, providing language and action commands for driving decision-making and planning. The base model can be data augmented with an understanding of the driving scenario to provide those rare feasible features in long-tail distributions that are unlikely to be encountered during routine driving and data collection.