What is Python multithreading and how to use it

What is a thread, and why would you want one?

By default, Python code runs sequentially, one statement after another, but the threading module comes in handy when a program spends much of its time waiting. Although threads in CPython cannot run CPU-bound Python code in parallel, they are well suited to I/O-bound work such as web scraping, where the processor would otherwise sit idle waiting for data.

Threads can make a big difference here, because many network and data I/O scripts spend most of their time waiting for data from remote sources. When downloads are independent of each other (for example, crawling separate websites), the program can fetch from several sources concurrently and merge the results at the end. For CPU-intensive work, the threading module offers little benefit.
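To see why waiting-heavy work benefits, here is a minimal sketch that simulates five independent "downloads" with time.sleep() (an assumption standing in for real network calls; fake_download is a hypothetical helper) and runs them through the standard library's concurrent.futures.ThreadPoolExecutor. Because each thread spends its time sleeping rather than executing Python bytecode, the waits overlap.

```python
import time
from concurrent.futures import ThreadPoolExecutor

def fake_download(source):
    """Hypothetical stand-in for a network request: it just waits."""
    time.sleep(0.2)              # the thread is idle here, waiting for "data"
    return f"data from {source}"

sources = [f"site-{i}" for i in range(5)]

# Sequential: each wait happens one after the other (about 5 x 0.2 s).
start = time.perf_counter()
sequential = [fake_download(s) for s in sources]
sequential_time = time.perf_counter() - start

# Threaded: the five waits overlap (roughly 0.2 s in total).
start = time.perf_counter()
with ThreadPoolExecutor(max_workers=5) as pool:
    threaded = list(pool.map(fake_download, sources))
threaded_time = time.perf_counter() - start

print(f"sequential: {sequential_time:.2f}s, threaded: {threaded_time:.2f}s")
```

pool.map returns results in the order of the inputs, so the "merge the results at the end" step stays simple even though the downloads finish in arbitrary order.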

Fortunately, threads are included in the standard library:

```python
import threading
from queue import Queue
import time
```

Pass a callable object as target, pass its arguments as a tuple via args, and call start() to launch the thread.

```python
import threading

def testThread(num):
    print(num)

if __name__ == '__main__':
    for i in range(5):
        # args must be a tuple, hence the trailing comma
        t = threading.Thread(target=testThread, args=(i,))
        t.start()
```

If you haven't seen if __name__ == '__main__': before, it ensures that the code nested under it runs only when the script is executed directly, not when the module is imported.
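The imports above bring in Queue, but none of the examples here use it. A common way to combine the two is a small pool of worker threads pulling jobs from a thread-safe queue.Queue. The sketch below shows one such pattern; the worker function, the squaring "job" and the None stop signal are illustrative choices, not something from the original text.

```python
import threading
from queue import Queue

def worker(jobs, results, lock):
    """Pull numbers from the queue until a None sentinel arrives."""
    while True:
        num = jobs.get()          # blocks until an item is available
        if num is None:           # sentinel: no more work for this thread
            jobs.task_done()
            break
        with lock:                # the results list is shared between threads
            results.append(num * num)
        jobs.task_done()

jobs = Queue()
results = []
lock = threading.Lock()

threads = [threading.Thread(target=worker, args=(jobs, results, lock))
           for _ in range(3)]
for t in threads:
    t.start()

for i in range(5):
    jobs.put(i)
for _ in threads:                 # one sentinel per worker
    jobs.put(None)

for t in threads:
    t.join()

print(sorted(results))            # completion order may vary, so sort
```

Sending one sentinel per worker is a simple way to shut every thread down cleanly once the queue is drained.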

Lock

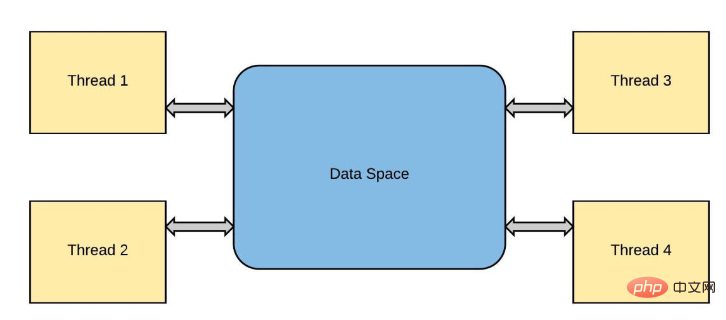

In languages such as C and Java, threads of the same operating system process can distribute the computing workload across multiple cores. A Python program normally runs as a single process, in which a main thread is started to execute the code. Because of a locking mechanism called the global interpreter lock (GIL), only one thread executes Python bytecode at a time, regardless of how many cores the computer has or how many new threads are spawned. The mechanism exists to prevent so-called race conditions.

When I think of races, I think of NASCAR and Formula 1. Let's use this analogy and imagine all the Formula 1 drivers trying to race in one car at the same time. Sounds ridiculous, right? It only works if each driver has their own car or, better still, drives one lap at a time and hands the car off to the next driver.

This is very similar to what happens with threads. Threads are spawned from the "main" thread, and they all exist in the same process "context" (the race event), so all resources allocated to the process, such as memory, are shared. For example, in a typical Python interpreter session:

```python
>>> a = 8
```

Here, a takes up very little memory (RAM): some location in the process's memory holds the value 8, and the name a refers to it.

So far so good. Let's start a thread and observe its behavior when it adds two numbers, x and y:

```python
import time
from threading import Thread

a = 8

def threaded_add(x, y):
    global a
    # simulation of a more complex task by asking
    # python to sleep, since adding happens so quick!
    for i in range(2):
        print("computing task in a different thread!")
        time.sleep(1)
        # this is not okay! writing a shared global from a thread
        # is risky, more on that later!
        a = 10
        print(a)

# here, from the main thread of execution,
# we tell python to start another thread
if __name__ == "__main__":
    thread = Thread(target=threaded_add, args=(1, 2))
    thread.start()
    thread.join()
    print(a)
    print("main thread finished...exiting")
```

Output:

```text
computing task in a different thread!
10
computing task in a different thread!
10
10
main thread finished...exiting
```

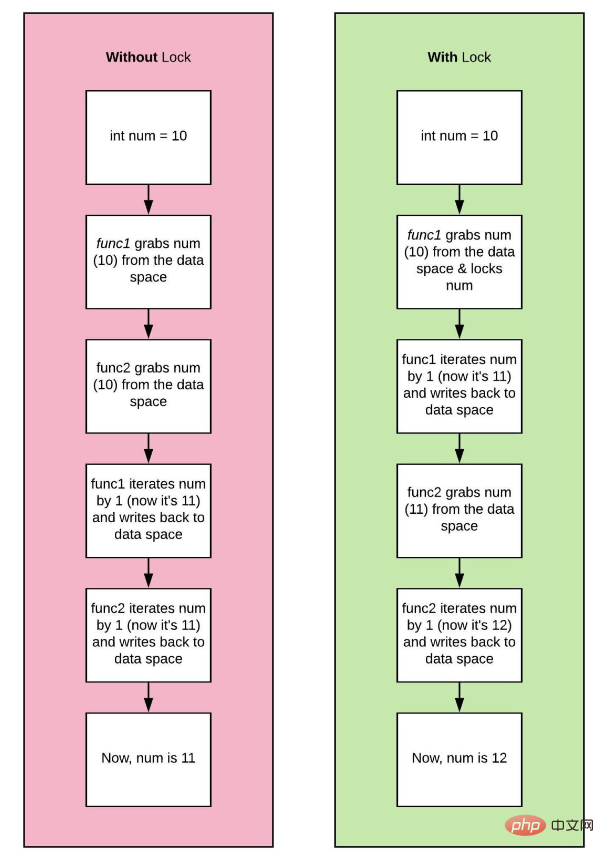

Suppose two threads are running; let's call them thread_one and thread_two. If thread_one wants to set a to 10 while thread_two simultaneously tries to update the same variable, we have a problem! A condition called a data race occurs, and the resulting value of a becomes unpredictable.

It's like a race you didn't watch, after which two friends give you conflicting results: thread_one tells you one thing, and thread_two contradicts it! Here's a pseudocode snippet to illustrate:

```
a = 8
# spawn two different threads, thread_one and thread_two

# thread_one:
a = 10             # update the value of a to 10
if a == 10:        # a check
    b = a * 2      # expects 10 * 2 = 20

# thread_two, running at the same time:
a = 15             # update the value of a to 15

# if thread_one finishes first, b will be 20
# if thread_two's update lands between the check and the multiplication, b will be 30
# who is right?
```
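The usual fix is to make the check and the update happen as one unit with a threading.Lock. A minimal sketch, assuming a shared counter (counter and add_many are illustrative names): each thread performs a read-modify-write on the shared value, and holding the lock means no other thread can slip in between the read and the write.

```python
import threading

counter = 0
counter_lock = threading.Lock()

def add_many(times):
    global counter
    for _ in range(times):
        # counter += 1 is a read, an add and a write; the lock keeps
        # other threads from interleaving between those steps.
        with counter_lock:
            counter += 1

threads = [threading.Thread(target=add_many, args=(100_000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(counter)  # 400000 with the lock in place
```

Without the lock, the total is not guaranteed to be 400000, although how often a lost update actually shows up depends on the Python version and on timing.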

What the hell is going on?

Python is an interpreted language, which means it is executed by an interpreter: a program, itself written in another language, that reads and runs Python source code. Python interpreters include CPython, PyPy, Jython and IronPython, of which CPython is the original implementation.

CPython is an interpreter that provides foreign function interfaces to C and other programming languages. It compiles Python source code into intermediate bytecode, which is then interpreted by the CPython virtual machine. Everything discussed so far, and in the rest of this article, concerns CPython and how it behaves.
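You can inspect that bytecode yourself with the standard dis module. The sketch below (the increment function is an illustrative example) disassembles counter += 1. The exact opcode names differ between CPython versions, but the statement compiles to separate load, add and store instructions, which is why a thread switch between them can produce the kind of race described above.

```python
import dis

counter = 0

def increment():
    global counter
    counter += 1

# Print one line per bytecode instruction that CPython generated.
dis.dis(increment)

# Collect just the opcode names for inspection.
ops = [ins.opname for ins in dis.get_instructions(increment)]
print(ops)
```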

Memory model and locking mechanism

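Programming languages use objects in a program to perform operations. These objects are made up of basic data types such as string, integer or boolean, as well as more complex data structures such as lists or classes/objects. The values of program objects are stored in memory for fast access. When a variable is used in a program, the process reads its value from memory and operates on it. In early programming languages, developers were responsible for all the memory management in their programs. This meant that before creating a list or an object, you first had to allocate memory for the variable, and once you were done with it, you could deallocate it to "free" the memory.

In Python, objects are stored in memory and accessed through references. A reference is a kind of label for an object, so one object can have many names, much as a person can have a given name and a nickname. A reference points to the object's exact memory location. Python uses reference counting for garbage collection, an automatic memory-management process.

With reference counting, Python keeps track of every object by incrementing its reference count when the object is created or referenced, and decrementing it when a reference is removed. When the reference count drops to 0, the object's memory is freed.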
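A minimal sketch of the count going up and down, using CPython's sys.getrefcount(). It uses a freshly created list rather than a short string such as "world", because string literals can be interned and shared elsewhere in the interpreter, so their counts depend on the Python version.

```python
import sys

data = []                    # a new list object, referenced by the name "data"
before = sys.getrefcount(data)

alias = data                 # a second name for the same object
after_alias = sys.getrefcount(data)

del alias                    # removing the name decrements the count again
after_del = sys.getrefcount(data)

# The absolute numbers may include a temporary reference held for the
# getrefcount() call itself; the differences are what matter here.
print(before, after_alias, after_del)
```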

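```python
import sys
import gc

hello = "world"
# getrefcount() also counts the temporary reference held by its own
# argument, and literals such as "world" may be interned and shared,
# so the exact numbers depend on the Python version
print(sys.getrefcount(hello))

bye = "world"
other_bye = bye
print(sys.getrefcount(bye))
print(gc.get_referrers(other_bye))
```

Output (from the author's run):

```text
4
6
[['sys', 'gc', 'hello', 'world', 'print', 'sys', 'getrefcount', 'hello', 'bye', 'world', 'other_bye', 'bye', 'print', 'sys', 'getrefcount', 'bye', 'print', 'gc', 'get_referrers', 'other_bye'], (0, None, 'world'), {'__name__': '__main__', '__doc__': None, '__package__': None, '__loader__': <_frozen_importlib_external.SourceFileLoader object at ...>, '__spec__': None, '__annotations__': {}, '__builtins__': <module 'builtins' (built-in)>, '__file__': 'test.py', '__cached__': None, 'sys': <module 'sys' (built-in)>, 'gc': <module 'gc' (built-in)>, 'hello': 'world', 'bye': 'world', 'other_bye': 'world'}]
```

These reference-count variables need to be protected from race conditions: if two threads updated the same count at the same time, memory could leak or an object could be freed while still in use. One way to protect them would be to add a lock to every data structure shared across threads.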

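CPython's GIL controls the Python interpreter by allowing only one thread at a time to hold control of it. This gives single-threaded programs a performance boost, since only one lock needs to be managed, but at the cost of preventing multithreaded CPython programs from taking full advantage of multiprocessor systems in some situations.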
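In CPython 3.2 and later, a thread holding the GIL is asked to release it after a fixed interval so that other threads get a turn. The standard library exposes that interval through sys.getswitchinterval() and sys.setswitchinterval(). A short sketch that reads it and temporarily changes it (the 0.001 value is just an example):

```python
import sys

# How long (in seconds) a thread may run before CPython asks it to
# release the GIL so that another thread can be scheduled.
original = sys.getswitchinterval()
print(f"current switch interval: {original} s")   # 0.005 by default

sys.setswitchinterval(0.001)                      # ask for more frequent hand-offs
print(f"new switch interval: {sys.getswitchinterval()} s")

sys.setswitchinterval(original)                   # restore the previous value
```

Changing the interval only affects how often threads take turns; it does not let two threads execute Python bytecode at the same time.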

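When writing Python programs, there is a difference between CPU-bound and I/O-bound programs. CPU-bound programs push the processor to its limit by performing many operations, while I/O-bound programs spend much of their time waiting for input/output.

So the GIL becomes a bottleneck only for multithreaded programs that spend a lot of time inside the GIL interpreting CPython bytecode. In those cases the GIL can degrade performance even when it is not strictly necessary. For example, here is a Python program that runs both I/O-bound and CPU-bound tasks: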

```python
import os
import time
from threading import Thread, current_thread
from multiprocessing import current_process

COUNT = 200000000
SLEEP = 10

def io_bound(sec):
    pid = os.getpid()
    threadName = current_thread().name
    processName = current_process().name
    print(f"{pid} * {processName} * {threadName} ---> Start sleeping...")
    time.sleep(sec)
    print(f"{pid} * {processName} * {threadName} ---> Finished sleeping...")

def cpu_bound(n):
    pid = os.getpid()
    threadName = current_thread().name
    processName = current_process().name
    print(f"{pid} * {processName} * {threadName} ---> Start counting...")
    while n > 0:
        n -= 1
    print(f"{pid} * {processName} * {threadName} ---> Finished counting...")

def timeit(function, args, threaded=False):
    start = time.time()
    if threaded:
        # run the two calls concurrently, one per thread
        t1 = Thread(target=function, args=(args,))
        t2 = Thread(target=function, args=(args,))
        t1.start()
        t2.start()
        t1.join()
        t2.join()
    else:
        # run the same two calls one after the other
        function(args)
        function(args)
    end = time.time()
    print('Time taken in seconds for running {} on Argument {} is {}s - {}'.format(
        function.__name__, args, end - start, "Threaded" if threaded else "Non-threaded"))

if __name__ == "__main__":
    # Running io_bound task
    print("IO BOUND TASK NON THREADED")
    timeit(io_bound, SLEEP)

    # Running io_bound task in threads
    print("IO BOUND TASK THREADED")
    timeit(io_bound, SLEEP, threaded=True)

    # Running cpu_bound task
    print("CPU BOUND TASK NON THREADED")
    timeit(cpu_bound, COUNT)

    # Running cpu_bound task in threads
    print("CPU BOUND TASK THREADED")
    timeit(cpu_bound, COUNT, threaded=True)
```

Output (from the author's runs; PIDs and timings will differ on your machine):

```text
IO BOUND TASK NON THREADED
17244 * MainProcess * MainThread ---> Start sleeping...
17244 * MainProcess * MainThread ---> Finished sleeping...
17244 * MainProcess * MainThread ---> Start sleeping...
17244 * MainProcess * MainThread ---> Finished sleeping...
Time taken in seconds for running io_bound on Argument 10 is 20.036664724349976s - Non-threaded
IO BOUND TASK THREADED
10180 * MainProcess * Thread-1 ---> Start sleeping...
10180 * MainProcess * Thread-2 ---> Start sleeping...
10180 * MainProcess * Thread-1 ---> Finished sleeping...
10180 * MainProcess * Thread-2 ---> Finished sleeping...
Time taken in seconds for running io_bound on Argument 10 is 10.01464056968689s - Threaded
CPU BOUND TASK NON THREADED
14172 * MainProcess * MainThread ---> Start counting...
14172 * MainProcess * MainThread ---> Finished counting...
14172 * MainProcess * MainThread ---> Start counting...
14172 * MainProcess * MainThread ---> Finished counting...
Time taken in seconds for running cpu_bound on Argument 200000000 is 44.90199875831604s - Non-threaded
CPU BOUND TASK THREADED
15616 * MainProcess * Thread-1 ---> Start counting...
15616 * MainProcess * Thread-2 ---> Start counting...
15616 * MainProcess * Thread-1 ---> Finished counting...
15616 * MainProcess * Thread-2 ---> Finished counting...
Time taken in seconds for running cpu_bound on Argument 200000000 is 106.09711360931396s - Threaded
```

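From the results, we can see that multithreading shines for multiple I/O-bound tasks: execution took 10 seconds, versus 20 seconds for the non-threaded approach. We used the same approach for the CPU-intensive task. At first it did start both threads at the same time, but in the end the whole program took about 106 seconds, compared with about 45 seconds without threads! What happened? When Thread-1 starts, it acquires the global interpreter lock (GIL), which prevents Thread-2 from using the CPU. Thread-2 therefore has to wait until Thread-1 releases the lock before it can acquire it and do its own work, and the two threads keep handing the lock back and forth until both are done. Acquiring and releasing the lock adds overhead to the total execution time. So it is safe to say that threads are not an ideal solution for CPU-bound tasks.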

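This behavior makes concurrent programming difficult. If the GIL holds us back on concurrency, shouldn't we get rid of it, or at least be able to turn it off? Well, it's not that easy. Other features, libraries and packages depend on the GIL, so something would have to replace it, or the whole ecosystem would break. It is a hard problem to solve.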

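Multiprocessing

We have established that CPython uses a lock to protect data from races. Despite this lock, programmers have found ways to achieve concurrency explicitly. When it comes to the GIL, we can use the multiprocessing library to get around the global lock. Multiprocessing achieves true parallelism because it executes code in different processes, which can run on different CPU cores. It starts a new instance of the Python interpreter for each process. Different processes live in separate memory spaces, so sharing objects between them is not easy. In this implementation, Python gives each process its own interpreter, so in this case each process in multiprocessing runs a single thread.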

```python
import os
import time
from threading import current_thread
from multiprocessing import Process, current_process

SLEEP = 10
COUNT = 200000000

def count_down(cnt):
    pid = os.getpid()
    processName = current_process().name
    print(f"{pid} * {processName} ---> Start counting...")
    while cnt > 0:
        cnt -= 1

def io_bound(sec):
    pid = os.getpid()
    threadName = current_thread().name
    processName = current_process().name
    print(f"{pid} * {processName} * {threadName} ---> Start sleeping...")
    time.sleep(sec)
    print(f"{pid} * {processName} * {threadName} ---> Finished sleeping...")

if __name__ == '__main__':
    start = time.time()

    # creating processes
    # CPU BOUND
    p1 = Process(target=count_down, args=(COUNT,))
    p2 = Process(target=count_down, args=(COUNT,))

    # IO BOUND (swap these in to time the I/O-bound version)
    # p1 = Process(target=io_bound, args=(SLEEP,))
    # p2 = Process(target=io_bound, args=(SLEEP,))

    # starting the processes
    p1.start()
    p2.start()

    # wait until both have finished
    p1.join()
    p2.join()

    stop = time.time()
    elapsed = stop - start
    print("The time taken in seconds is :", elapsed)
```

Output (from the author's run):

```text
1660 * Process-2 ---> Start counting...
10184 * Process-1 ---> Start counting...
The time taken in seconds is : 12.815475225448608
```

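As you can see, multiprocessing performs exceptionally well on the CPU-bound task. The MainProcess starts two child processes, Process-1 and Process-2, which have different PIDs, and each of them counts COUNT down to zero. The processes run in parallel, each on its own CPU core with its own Python interpreter instance, so the whole program takes only about 12 seconds.

Note that the output may be printed out of order, because the processes are independent of each other. This is because each process executes its function in its own default main thread.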

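We can also avoid fighting over the GIL by using the asyncio library (covered in the previous section; go back to it if you haven't read it yet), which runs all its tasks in a single thread. The basic idea of asyncio is that a Python object called the event loop controls how and when each task runs. The event loop knows about every task and its state: the ready state means a task is ready to run, and the waiting state means it is waiting for some external operation to complete. In asyncio, a task is never preempted in the middle of execution; it gives up control only when it reaches an await, so sharing objects between tasks is safe as long as an update does not span an await.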
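A minimal sketch of the event loop at work, assuming the waiting is simulated with asyncio.sleep() (fetch is a hypothetical coroutine standing in for real network I/O). While one task is in the waiting state, the event loop switches to another task that is ready, so three 0.2-second waits finish in roughly 0.2 seconds on a single thread.

```python
import asyncio
import time

async def fetch(name, delay):
    """Hypothetical I/O task: awaiting sleep puts it in the waiting state."""
    await asyncio.sleep(delay)   # control goes back to the event loop here
    return f"{name} done"

async def main():
    # gather() wraps each coroutine in a task and waits for all of them.
    return await asyncio.gather(
        fetch("task-1", 0.2),
        fetch("task-2", 0.2),
        fetch("task-3", 0.2),
    )

start = time.perf_counter()
results = asyncio.run(main())
elapsed = time.perf_counter() - start
print(results, f"{elapsed:.2f}s")
```

asyncio.gather returns results in the order the coroutines were passed, regardless of which one finishes first.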

```python
import time
import asyncio

COUNT = 200000000

# asynchronous function definition
async def func_name(cnt):
    while cnt > 0:
        cnt -= 1

# asynchronous main function definition
async def main():
    # Creating 2 tasks... you could create as many tasks (n tasks) as you like
    task1 = asyncio.create_task(func_name(COUNT))
    task2 = asyncio.create_task(func_name(COUNT))
    # await both tasks before handing control back to the program
    await asyncio.wait([task1, task2])

if __name__ == '__main__':
    start_time = time.time()
    # asyncio.run() creates the event loop, runs main() until it
    # completes, and then closes the loop
    asyncio.run(main())
    print("--- %s seconds ---" % (time.time() - start_time))
```

Output (from the author's run):

```text
--- 41.74118399620056 seconds ---
```

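We can see that asyncio needs about 41 seconds to finish the countdown. That beats multithreading's 106 seconds but, for a CPU-bound task, falls well short of multiprocessing's 12 seconds. asyncio creates an event loop and two tasks, task1 and task2, and places them on the event loop. The program then awaits the tasks while the event loop runs them all to completion. Because func_name never awaits anything, the event loop simply runs the two countdowns one after the other on a single thread, which is why the time is close to the roughly 45-second non-threaded run.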
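To see why two countdowns like these cannot overlap, here is a small sketch (the run and count_down helpers are hypothetical) that records which task takes each step. A coroutine only gives control back to the event loop at an await: without one, each task runs to completion before the next starts, while an await asyncio.sleep(0) inside the loop lets the tasks take turns.

```python
import asyncio

def run(cooperative):
    """Run two count-down tasks and record which one made each step."""
    events = []

    async def count_down(name, n):
        while n > 0:
            n -= 1
            events.append(name)
            if cooperative:
                # Yield to the event loop so the other task can run a step.
                await asyncio.sleep(0)

    async def main():
        await asyncio.gather(count_down("a", 3), count_down("b", 3))

    asyncio.run(main())
    return events

print(run(cooperative=False))  # ['a', 'a', 'a', 'b', 'b', 'b']
print(run(cooperative=True))   # ['a', 'b', 'a', 'b', 'a', 'b']
```

Either way only one task runs at any moment, so taking turns does not make CPU-bound work faster; it only changes the order in which the work gets done.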

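To take full advantage of concurrency in Python, we can also use a different interpreter. Jython and IronPython have no GIL, which means programs can make full use of multiprocessor systems.

As with threads, multiprocessing still has drawbacks:

Shuffling data between processes incurs I/O overhead

Each child process gets its own copy of the parent's memory, which can add a lot of overhead for larger programs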
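Part of the data-shuffling cost comes from serialization: objects sent between processes through multiprocessing queues, pipes or pools are pickled, transferred, and rebuilt on the other side. A rough sketch that measures only the pickling step in a single process (the list of one million integers is an arbitrary example, and a real transfer also includes the inter-process copy):

```python
import pickle
import time

payload = list(range(1_000_000))   # arbitrary example data to "send"

start = time.perf_counter()
blob = pickle.dumps(payload)        # serialize, as done before sending
restored = pickle.loads(blob)       # rebuild, as done by the receiver
elapsed = time.perf_counter() - start

print(f"{len(blob):,} bytes pickled and unpickled in {elapsed:.3f}s")
```

Passing smaller messages, or having each process load its own data, is one way to keep this overhead down.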

The above is the detailed content of What is Python multithreading and how to use it. For more information, please follow other related articles on the PHP Chinese website!
