How to do image processing and deep learning in PHP?

PHP is a widely used language for building web applications and websites. It was not designed specifically for image processing or deep learning, but the PHP ecosystem offers ready-made extensions and libraries that can handle these tasks. Below we introduce several commonly used ones and show how to apply them to image processing and deep learning.

- GD Image Library

GD is an image library bundled with PHP and enabled through the gd extension. It provides functions to create, open, and save images and to resize, rotate, crop, and draw text on them. It supports many image formats, including JPEG, PNG, GIF, WebP, and BMP, although the exact set depends on how GD was compiled.
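Each operation listed above maps to a GD function. As a minimal sketch, assuming PHP 8.0 or later (where GD images are `GdImage` objects), the hypothetical helper `makeCaptionedThumbnail` below chains cropping (`imagecrop`), resizing (`imagescale`), rotation (`imagerotate`), and text drawing (`imagestring`):

```php
<?php

/**
 * Hypothetical helper: crop the largest centered square from $src, scale it to
 * $size x $size, rotate it anticlockwise by $angle degrees, and stamp a caption.
 * Requires PHP 8.0+ and the gd extension.
 */
function makeCaptionedThumbnail(GdImage $src, int $size, float $angle, string $caption): GdImage
{
    $width = imagesx($src);
    $height = imagesy($src);
    $side = min($width, $height);

    // Crop: keep the centered square region.
    $square = imagecrop($src, [
        'x' => intdiv($width - $side, 2),
        'y' => intdiv($height - $side, 2),
        'width' => $side,
        'height' => $side,
    ]) ?: throw new RuntimeException('imagecrop failed');

    // Resize: scale the square to the requested size.
    $thumb = imagescale($square, $size, $size) ?: throw new RuntimeException('imagescale failed');

    // Rotate: GD turns the image anticlockwise and fills uncovered areas with white.
    $white = imagecolorallocate($thumb, 255, 255, 255);
    $rotated = imagerotate($thumb, $angle, $white) ?: throw new RuntimeException('imagerotate failed');

    // Add text: built-in font 3, drawn 5 px from the top-left corner.
    $black = imagecolorallocate($rotated, 0, 0, 0);
    imagestring($rotated, 3, 5, 5, $caption, $black);

    return $rotated;
}
```

For example, `imagepng(makeCaptionedThumbnail(imagecreatefromjpeg('photo.jpg'), 150, 90, 'Sample'), 'thumb.png');` writes a captioned thumbnail to disk; `photo.jpg` and `thumb.png` are placeholder file names. Each step returns a new image, which leaves the source untouched at the cost of some extra memory.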

The following is a simple example showing how to use the GD library to create a red rectangle:

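```php
<?php

$width = 400;
$height = 200;

// Create a palette-based image; the first color allocated becomes the background.
$image = imagecreate($width, $height);
$red = imagecolorallocate($image, 255, 0, 0);

// Fill the canvas; GD coordinates are inclusive, so the last pixel is ($width - 1, $height - 1).
imagefilledrectangle($image, 0, 0, $width - 1, $height - 1, $red);

// Send the image to the browser as a PNG.
header('Content-Type: image/png');
imagepng($image);

// Frees the image before PHP 8.0; since 8.0, GD images are objects freed automatically.
imagedestroy($image);
```

- Imagick extension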

Imagick is a native PHP extension that wraps the ImageMagick library and provides more advanced image processing features than GD. It supports a wide range of image formats and operations such as scaling, cropping, rotating, and applying filters. It can also composite multiple images while handling transparency and alpha channels.
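To illustrate the cropping, filtering, rotation, and compositing operations mentioned above, here is a minimal sketch, assuming the imagick extension is installed. The helper name `watermark` and the margin, blur, and angle values are arbitrary choices for illustration. It uses `cropImage`, `blurImage`, `rotateImage`, and `compositeImage` with the `COMPOSITE_OVER` operator, which blends the overlay according to its alpha channel:

```php
<?php

/**
 * Hypothetical helper: crop a photo to its central 80%, soften it with a blur
 * filter, and composite a rotated logo onto it. Requires the imagick extension.
 */
function watermark(Imagick $photo, Imagick $logo): Imagick
{
    $result = clone $photo;

    // Crop to the central 80% (10% margin on each side), then reset the
    // virtual canvas so later offsets are relative to the cropped image.
    $width = $result->getImageWidth();
    $height = $result->getImageHeight();
    $result->cropImage(intdiv($width * 4, 5), intdiv($height * 4, 5), intdiv($width, 10), intdiv($height, 10));
    $result->setImagePage(0, 0, 0, 0);

    // Filter: blur with sigma 1; radius 0 lets ImageMagick choose a suitable radius.
    $result->blurImage(0, 1);

    // Rotate the logo 15 degrees clockwise; uncovered corners get a transparent background.
    $mark = clone $logo;
    $mark->rotateImage(new ImagickPixel('transparent'), 15);

    // Composite with the OVER operator, which honours the logo's alpha channel.
    $result->compositeImage($mark, Imagick::COMPOSITE_OVER, 10, 10);

    return $result;
}
```

Working on clones leaves the caller's `Imagick` objects unchanged; call `writeImage()` on the returned object to save it.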

Here is an example of using the Imagick extension to resize an image:

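```php
<?php

// Open the source image (requires the imagick extension).
$image = new Imagick('image.jpg');

// Resize to exactly 800x600 with a Lanczos filter; a blur factor of 1 adds no
// extra blurring or sharpening. Pass true as a fifth argument (bestfit) to fit
// inside 800x600 while keeping the aspect ratio.
$image->resizeImage(800, 600, Imagick::FILTER_LANCZOS, 1);

// Save the result and free the memory held by the object.
$image->writeImage('image_resized.jpg');
$image->clear();
```

- TensorFlow PHP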

TensorFlow is an open-source deep learning framework developed by Google. It has no official PHP API, but community bindings, typically built on the TensorFlow C library, let you load and run TensorFlow models from PHP. They can be used for deep learning tasks such as image classification, object detection, and speech recognition.
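However the model is invoked, an image classifier usually returns one raw score (logit) per class, and turning that output into readable predictions is plain PHP. A minimal sketch, assuming the output has been converted to a flat PHP array of floats in the same order as a list of class labels; `softmax` and `topK` are hypothetical helpers, not part of any TensorFlow binding:

```php
<?php

/**
 * Convert raw class scores (logits) into probabilities with a numerically
 * stable softmax: subtracting the maximum keeps exp() from overflowing.
 */
function softmax(array $logits): array
{
    $max = max($logits);
    $exps = array_map(fn (float $z): float => exp($z - $max), $logits);
    $sum = array_sum($exps);
    return array_map(fn (float $e): float => $e / $sum, $exps);
}

/**
 * Return the $k most probable labels as label => probability, highest first.
 */
function topK(array $logits, array $labels, int $k): array
{
    $probabilities = array_combine($labels, softmax($logits));
    arsort($probabilities);
    return array_slice($probabilities, 0, $k, true);
}
```

For example, `topK([2.0, 1.0, 0.1], ['cat', 'dog', 'bird'], 2)` returns the `cat` and `dog` entries with their probabilities. If the model already ends in a softmax layer, skip `softmax` and sort the output directly.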

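The following outline shows the typical steps of image classification with such a binding: create a graph and session, restore trained weights, look up the input and output operations, and run the model. Class and method names vary between bindings, so treat them as illustrative: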

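```php
<?php

// Create a graph and a session that executes it.
$graph = new TensorFlow\Graph();
$session = new TensorFlow\Session($graph);

// Restore trained variables from a checkpoint (the graph must already contain the model's operations).
$saver = new TensorFlow\Saver($graph);
$saver->restore($session, '/tmp/model.ckpt');

// Look up the model's input tensor by operation name.
$tensor = $graph->operation('input')->output(0);

// Run the 'output' operation. The feed here passes only the input's shape as a
// placeholder; a working call must feed the preprocessed image data for $tensor
// in the format the binding expects.
$result = $session->run([$graph->operation('output')->output(0)], [$tensor->shape()]);

print_r($result);
```

- PHP-ML machine learning library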

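PHP-ML (php-ai/php-ml) is a machine learning library written in PHP that provides many common algorithms together with tools for preprocessing, cross-validation, and evaluation. It does not read images directly, but once images are converted into numeric feature vectors, it can be used to train and evaluate models on them, including simple neural networks.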
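PHP-ML's estimators expect each sample to be a fixed-length array of numbers, so images have to be converted before training. A minimal sketch, assuming the grayscale intensities of a downscaled image are adequate features (a common baseline, not the only choice). `grayFromRgb`, `featuresFromPixels`, and `featuresFromFile` are hypothetical helpers, and only `featuresFromFile` needs the gd extension:

```php
<?php

/**
 * Convert a packed 0xRRGGBB color (the value imagecolorat() returns for a
 * truecolor GD image) into a grayscale intensity in [0, 1] using BT.601 weights.
 */
function grayFromRgb(int $rgb): float
{
    $red = ($rgb >> 16) & 0xFF;
    $green = ($rgb >> 8) & 0xFF;
    $blue = $rgb & 0xFF;
    return (0.299 * $red + 0.587 * $green + 0.114 * $blue) / 255;
}

/**
 * Flatten rows of packed colors into one feature vector, row by row, so that
 * images of the same size always produce vectors of the same length.
 */
function featuresFromPixels(array $rows): array
{
    $features = [];
    foreach ($rows as $row) {
        foreach ($row as $rgb) {
            $features[] = grayFromRgb($rgb);
        }
    }
    return $features;
}

/**
 * Decode an image file with GD, shrink it to $size x $size, and return its
 * grayscale feature vector ($size * $size values). Requires the gd extension.
 */
function featuresFromFile(string $path, int $size = 16): array
{
    $data = file_get_contents($path) ?: throw new RuntimeException("Cannot read $path");
    $image = imagecreatefromstring($data) ?: throw new RuntimeException("Cannot decode $path");
    imagepalettetotruecolor($image); // so imagecolorat() returns packed RGB values
    $small = imagescale($image, $size, $size) ?: throw new RuntimeException('Scaling failed');

    $rows = [];
    for ($y = 0; $y < $size; $y++) {
        for ($x = 0; $x < $size; $x++) {
            $rows[$y][$x] = imagecolorat($small, $x, $y);
        }
    }
    return featuresFromPixels($rows);
}
```

Each returned vector (16 x 16 = 256 values by default) can serve as one sample, with the image's class as its label. Downscaling every image to the same size is what guarantees equal-length vectors.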

The following example trains and evaluates a multilayer perceptron (a fully connected neural network; PHP-ML does not include convolutional networks) using PHP-ML:

```php
<?php

// PHP-ML installed with Composer: composer require php-ai/php-ml
require __DIR__ . '/vendor/autoload.php';

use Phpml\Classification\MLPClassifier;
use Phpml\CrossValidation\StratifiedRandomSplit;
use Phpml\Dataset\CsvDataset;
use Phpml\Metric\Accuracy;
use Phpml\NeuralNetwork\ActivationFunction\PReLU;
use Phpml\NeuralNetwork\ActivationFunction\Sigmoid;
use Phpml\NeuralNetwork\Layer;
use Phpml\NeuralNetwork\Node\Neuron;
use Phpml\Preprocessing\Normalizer;

// Assumed input: features.csv with a header row, where each row holds the
// 16 x 16 = 256 grayscale pixel values of one image followed by its label.
$featureCount = 16 * 16;
$dataset = new CsvDataset('features.csv', $featureCount, true);

// Hold out 30% of the data for testing while keeping class proportions.
$split = new StratifiedRandomSplit($dataset, 0.3);
$trainSamples = $split->getTrainSamples();
$trainLabels = $split->getTrainLabels();
$testSamples = $split->getTestSamples();
$testLabels = $split->getTestLabels();

// Standardize every feature (zero mean, unit variance). The statistics come
// from the training set only; transform() modifies the arrays in place.
$scaler = new Normalizer(Normalizer::NORM_STD);
$scaler->fit($trainSamples);
$scaler->transform($trainSamples);
$scaler->transform($testSamples);

// Multilayer perceptron with two hidden layers (100 and 50 neurons);
// the output layer gets one neuron per class.
$mlp = new MLPClassifier(
    $featureCount,
    [
        new Layer(100, Neuron::class, new Sigmoid()),
        new Layer(50, Neuron::class, new PReLU()),
    ],
    array_values(array_unique($trainLabels))
);

$mlp->train($trainSamples, $trainLabels);
$predictedLabels = $mlp->predict($testSamples);

echo 'Accuracy: ' . Accuracy::score($testLabels, $predictedLabels) . PHP_EOL;
```

Summary

Although PHP is not a dedicated tool for image processing or deep learning, the bundled GD library and open-source extensions and libraries such as Imagick, TensorFlow bindings, and PHP-ML provide a wealth of functions for processing images and training models. Using them to build efficient applications still requires the relevant knowledge and skills.

The above is the detailed content of How to do image processing and deep learning in PHP?. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1387

1387

52

52

Methods and steps for using BERT for sentiment analysis in Python

Jan 22, 2024 pm 04:24 PM

Methods and steps for using BERT for sentiment analysis in Python

Jan 22, 2024 pm 04:24 PM

BERT is a pre-trained deep learning language model proposed by Google in 2018. The full name is BidirectionalEncoderRepresentationsfromTransformers, which is based on the Transformer architecture and has the characteristics of bidirectional encoding. Compared with traditional one-way coding models, BERT can consider contextual information at the same time when processing text, so it performs well in natural language processing tasks. Its bidirectionality enables BERT to better understand the semantic relationships in sentences, thereby improving the expressive ability of the model. Through pre-training and fine-tuning methods, BERT can be used for various natural language processing tasks, such as sentiment analysis, naming

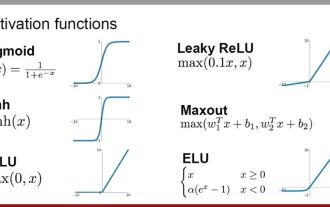

Analysis of commonly used AI activation functions: deep learning practice of Sigmoid, Tanh, ReLU and Softmax

Dec 28, 2023 pm 11:35 PM

Analysis of commonly used AI activation functions: deep learning practice of Sigmoid, Tanh, ReLU and Softmax

Dec 28, 2023 pm 11:35 PM

Activation functions play a crucial role in deep learning. They can introduce nonlinear characteristics into neural networks, allowing the network to better learn and simulate complex input-output relationships. The correct selection and use of activation functions has an important impact on the performance and training results of neural networks. This article will introduce four commonly used activation functions: Sigmoid, Tanh, ReLU and Softmax, starting from the introduction, usage scenarios, advantages, disadvantages and optimization solutions. Dimensions are discussed to provide you with a comprehensive understanding of activation functions. 1. Sigmoid function Introduction to SIgmoid function formula: The Sigmoid function is a commonly used nonlinear function that can map any real number to between 0 and 1. It is usually used to unify the

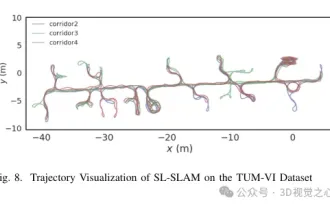

Beyond ORB-SLAM3! SL-SLAM: Low light, severe jitter and weak texture scenes are all handled

May 30, 2024 am 09:35 AM

Beyond ORB-SLAM3! SL-SLAM: Low light, severe jitter and weak texture scenes are all handled

May 30, 2024 am 09:35 AM

Written previously, today we discuss how deep learning technology can improve the performance of vision-based SLAM (simultaneous localization and mapping) in complex environments. By combining deep feature extraction and depth matching methods, here we introduce a versatile hybrid visual SLAM system designed to improve adaptation in challenging scenarios such as low-light conditions, dynamic lighting, weakly textured areas, and severe jitter. sex. Our system supports multiple modes, including extended monocular, stereo, monocular-inertial, and stereo-inertial configurations. In addition, it also analyzes how to combine visual SLAM with deep learning methods to inspire other research. Through extensive experiments on public datasets and self-sampled data, we demonstrate the superiority of SL-SLAM in terms of positioning accuracy and tracking robustness.

Latent space embedding: explanation and demonstration

Jan 22, 2024 pm 05:30 PM

Latent space embedding: explanation and demonstration

Jan 22, 2024 pm 05:30 PM

Latent Space Embedding (LatentSpaceEmbedding) is the process of mapping high-dimensional data to low-dimensional space. In the field of machine learning and deep learning, latent space embedding is usually a neural network model that maps high-dimensional input data into a set of low-dimensional vector representations. This set of vectors is often called "latent vectors" or "latent encodings". The purpose of latent space embedding is to capture important features in the data and represent them into a more concise and understandable form. Through latent space embedding, we can perform operations such as visualizing, classifying, and clustering data in low-dimensional space to better understand and utilize the data. Latent space embedding has wide applications in many fields, such as image generation, feature extraction, dimensionality reduction, etc. Latent space embedding is the main

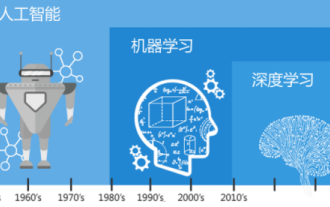

Understand in one article: the connections and differences between AI, machine learning and deep learning

Mar 02, 2024 am 11:19 AM

Understand in one article: the connections and differences between AI, machine learning and deep learning

Mar 02, 2024 am 11:19 AM

In today's wave of rapid technological changes, Artificial Intelligence (AI), Machine Learning (ML) and Deep Learning (DL) are like bright stars, leading the new wave of information technology. These three words frequently appear in various cutting-edge discussions and practical applications, but for many explorers who are new to this field, their specific meanings and their internal connections may still be shrouded in mystery. So let's take a look at this picture first. It can be seen that there is a close correlation and progressive relationship between deep learning, machine learning and artificial intelligence. Deep learning is a specific field of machine learning, and machine learning

From basics to practice, review the development history of Elasticsearch vector retrieval

Oct 23, 2023 pm 05:17 PM

From basics to practice, review the development history of Elasticsearch vector retrieval

Oct 23, 2023 pm 05:17 PM

1. Introduction Vector retrieval has become a core component of modern search and recommendation systems. It enables efficient query matching and recommendations by converting complex objects (such as text, images, or sounds) into numerical vectors and performing similarity searches in multidimensional spaces. From basics to practice, review the development history of Elasticsearch vector retrieval_elasticsearch As a popular open source search engine, Elasticsearch's development in vector retrieval has always attracted much attention. This article will review the development history of Elasticsearch vector retrieval, focusing on the characteristics and progress of each stage. Taking history as a guide, it is convenient for everyone to establish a full range of Elasticsearch vector retrieval.

Super strong! Top 10 deep learning algorithms!

Mar 15, 2024 pm 03:46 PM

Super strong! Top 10 deep learning algorithms!

Mar 15, 2024 pm 03:46 PM

Almost 20 years have passed since the concept of deep learning was proposed in 2006. Deep learning, as a revolution in the field of artificial intelligence, has spawned many influential algorithms. So, what do you think are the top 10 algorithms for deep learning? The following are the top algorithms for deep learning in my opinion. They all occupy an important position in terms of innovation, application value and influence. 1. Deep neural network (DNN) background: Deep neural network (DNN), also called multi-layer perceptron, is the most common deep learning algorithm. When it was first invented, it was questioned due to the computing power bottleneck. Until recent years, computing power, The breakthrough came with the explosion of data. DNN is a neural network model that contains multiple hidden layers. In this model, each layer passes input to the next layer and

AlphaFold 3 is launched, comprehensively predicting the interactions and structures of proteins and all living molecules, with far greater accuracy than ever before

Jul 16, 2024 am 12:08 AM

AlphaFold 3 is launched, comprehensively predicting the interactions and structures of proteins and all living molecules, with far greater accuracy than ever before

Jul 16, 2024 am 12:08 AM

Editor | Radish Skin Since the release of the powerful AlphaFold2 in 2021, scientists have been using protein structure prediction models to map various protein structures within cells, discover drugs, and draw a "cosmic map" of every known protein interaction. . Just now, Google DeepMind released the AlphaFold3 model, which can perform joint structure predictions for complexes including proteins, nucleic acids, small molecules, ions and modified residues. The accuracy of AlphaFold3 has been significantly improved compared to many dedicated tools in the past (protein-ligand interaction, protein-nucleic acid interaction, antibody-antigen prediction). This shows that within a single unified deep learning framework, it is possible to achieve