The domestic open-source version of the 'ChatGPT plug-in system' is here! Douban, search, and more are available, jointly released by Tsinghua University, Face Wall Intelligence, and others

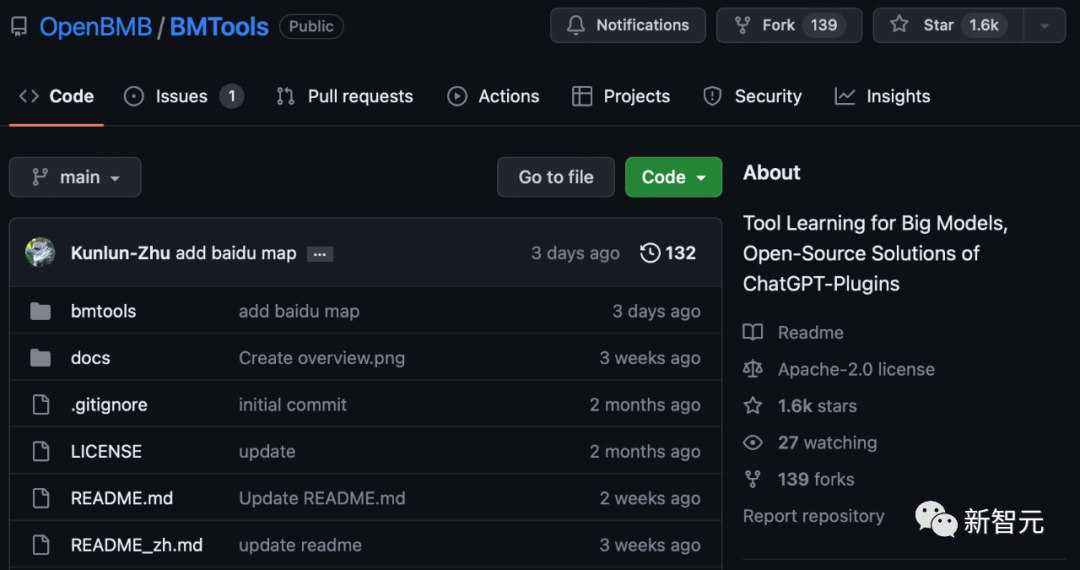

Recently, an open-source project billed as a "domestic alternative to ChatGPT Plugins" has seen a sharp rise in stars on GitHub.

The project is BMTools, a tool-learning engine for large models developed by Face Wall Intelligence.

Project address: https://www.php.cn/link/a330f9fecc388ce67f87b09855480ca3

## Exploring the frontier: quickly embedding tool learning into large models

First, the most important question: what makes BMTools special?

BMTools is an open-source, extensible tool-learning platform built on language models. The Face Wall Intelligence R&D team unified a wide range of tool-calling workflows into the BMTools framework, standardizing and automating the entire tool-calling process.

The plug-ins BMTools currently supports span entertainment, academia, daily life, and other areas, including douban-film (Douban movies), search (Bing search), and Klarna (shopping).

With BMTools, developers can have a given model (such as ChatGPT or GPT-4) call a variety of tool interfaces to accomplish specific tasks.
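To make "standardizing the tool-calling process" concrete, here is a minimal Python sketch of one common pattern. Each tool is registered under a name with a description, the model emits an action in a fixed text format, and a dispatcher parses that text and runs the matching tool. This is not BMTools' API: the `Tool` class, the `Action:`/`Action Input:` format (borrowed from ReAct-style prompting), and both toy tools are hypothetical, and the "model output" is hard-coded rather than generated.

```python
import re
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Tool:
    """Hypothetical tool record: a name, a description the model would see, and a callable."""
    name: str
    description: str
    run: Callable[[str], str]


def calculator(expr: str) -> str:
    # Toy calculator: only digits, whitespace, and + - * / ( ) . pass the check.
    # Illustration only; never eval untrusted input in a real system.
    if not re.fullmatch(r"[\d\s+\-*/().]+", expr):
        return "error: unsupported expression"
    return str(eval(expr, {"__builtins__": {}}, {}))


def fake_movie_search(query: str) -> str:
    # Hypothetical stand-in for a film-lookup plug-in; returns canned data.
    catalog = {"wandering earth": "The Wandering Earth (2019), sci-fi"}
    return catalog.get(query.strip().lower(), "no results")


REGISTRY: Dict[str, Tool] = {
    t.name: t
    for t in [
        Tool("calculator", "Evaluate an arithmetic expression.", calculator),
        Tool("movie_search", "Look up a film by title.", fake_movie_search),
    ]
}

# Matches "Action: <tool>" followed on the next line by "Action Input: <argument>".
ACTION_RE = re.compile(r"Action:\s*(\w+)\s*\nAction Input:\s*(.+)")


def dispatch(model_output: str) -> str:
    """Parse one action from the model's text and run the matching registered tool."""
    match = ACTION_RE.search(model_output)
    if match is None:
        return "error: no action found"
    name, arg = match.group(1), match.group(2).strip()
    tool = REGISTRY.get(name)
    if tool is None:
        return f"error: unknown tool {name!r}"
    return tool.run(arg)


if __name__ == "__main__":
    # In a real loop this text would come from the language model.
    print(dispatch("Thought: I need to compute.\nAction: calculator\nAction Input: (3 + 4) * 2"))  # 14
    print(dispatch("Action: movie_search\nAction Input: Wandering Earth"))
```

Keeping every tool behind one name-plus-callable interface is what lets a single dispatcher serve any number of plug-ins: adding a tool means adding one registry entry, not changing the calling logic.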

BMTools also integrates the recently popular Auto-GPT and BabyAGI.

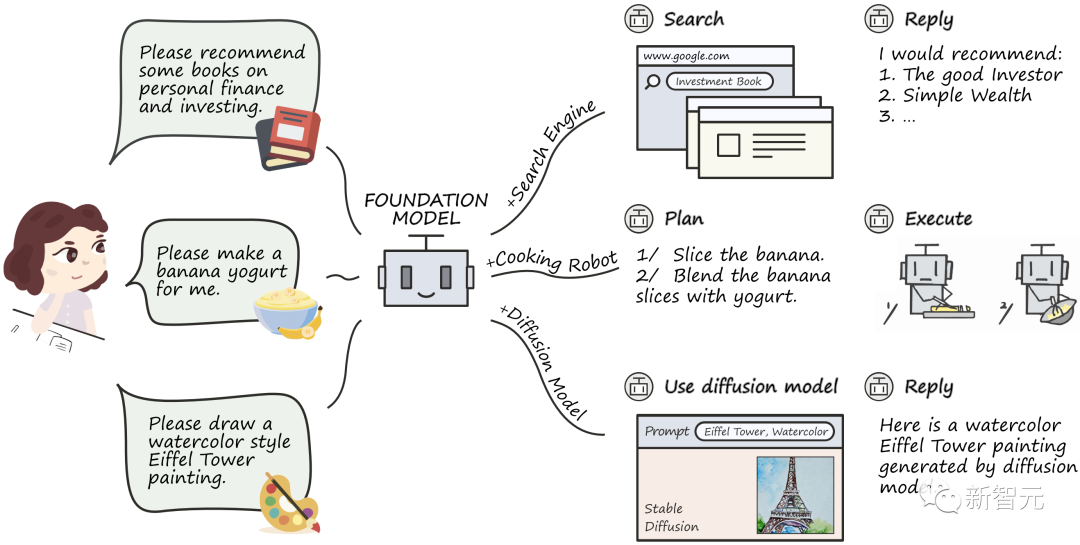

## So, what does tool learning do for large models?

Although large models have achieved remarkable results in many areas, they still have limitations on domain-specific tasks, which often require specialized tools or domain knowledge to solve effectively. Just as a smartphone becomes far more useful once you install apps, large models need the ability to call various professional tools so they can provide fuller support for real-world tasks.

Combining large models with external tools makes up for many of their earlier shortcomings, and tool learning has greatly unlocked their potential.

Paper address: https://arxiv.org/abs/2304.08354

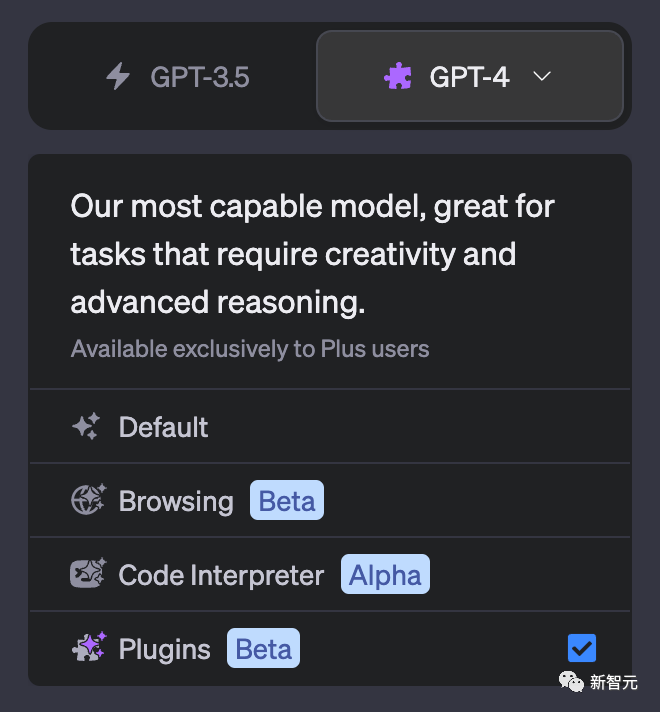

On March 23, 2023, OpenAI announced its plug-in system (Plugins). The capability behind these plug-ins is what we call tool learning. With tool learning, Plugins let ChatGPT connect to browsers, mathematical computation, and other external tools, greatly enhancing its capabilities.

ChatGPT Plugins filled in some of ChatGPT's last remaining gaps, letting it access the internet and solve math problems, and the launch has been called OpenAI's "App Store" moment. So far, however, Plugins have been available only to ChatGPT Plus users and remain out of reach for most developers.

How was Face Wall Intelligence able to launch BMTools only ten days after ChatGPT Plugins were released?

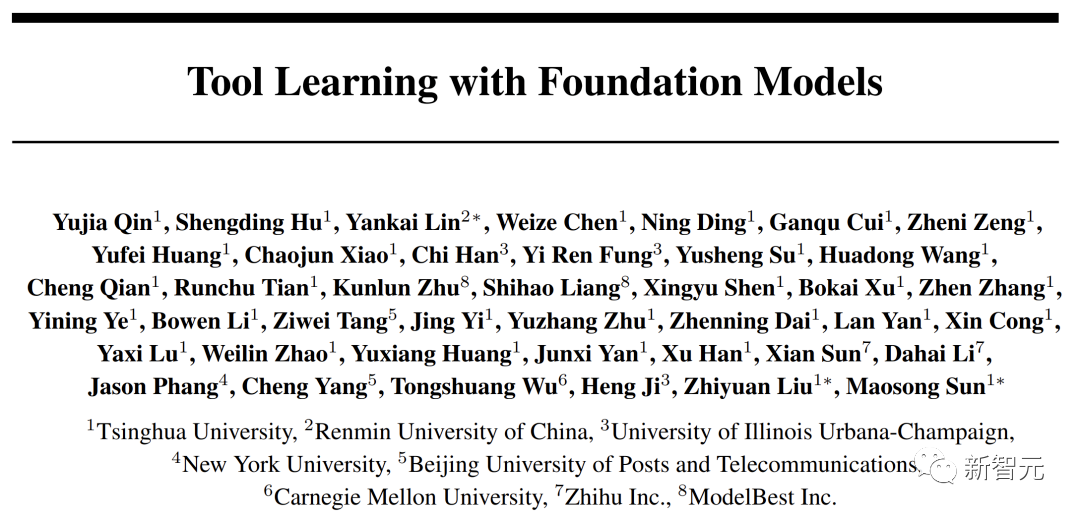

Face Wall Intelligence has long focused on developing efficient computing tools that cover the full lifecycle of large models. Since 2022, its R&D team has been researching new paradigms for tool learning, combining existing language models with search engines, knowledge bases, and other tools, and has achieved good experimental results. The team has also done fruitful exploratory work at the research frontier of tool learning.

To meet developers' eager demand for OpenAI Plugins-style capabilities, the team built on this earlier work and quickly packaged the relevant research results into the BMTools toolkit. BMTools brings tool learning into Face Wall Intelligence's large-model capability stack and officially joins OpenBMB's full suite of large-model tools.

Tool learning is Face Wall Intelligence's latest major release, following its suites for efficient training, fine-tuning, inference, and compression.

BMTools toolkit: https://www.php.cn/link/a330f9fecc388ce67f87b09855480ca3

## Leading the way: the first Chinese question-answering model with online search

Recently, Face Wall Intelligence teamed up with researchers from Tsinghua University, Renmin University of China, and Tencent to release WebCPM, the first open-source question-answering model framework in the Chinese domain based on interactive web search. The release fills a gap among domestic large models, and WebCPM is also a successful application of BMTools.

The WebCPM work has been accepted to ACL 2023, a top conference in natural language processing.

WebCPM paper link: https://arxiv.org/abs/2305.06849

WebCPM data and code: https://github.com/thunlp/WebCPM

Since ChatGPT took off, large models from many camps in China have sprung up like mushrooms after rain, but most of them are not connected to the internet.

Without internet access, however, a large model cannot obtain the latest information; its output is based on older datasets, which limits what it can do.

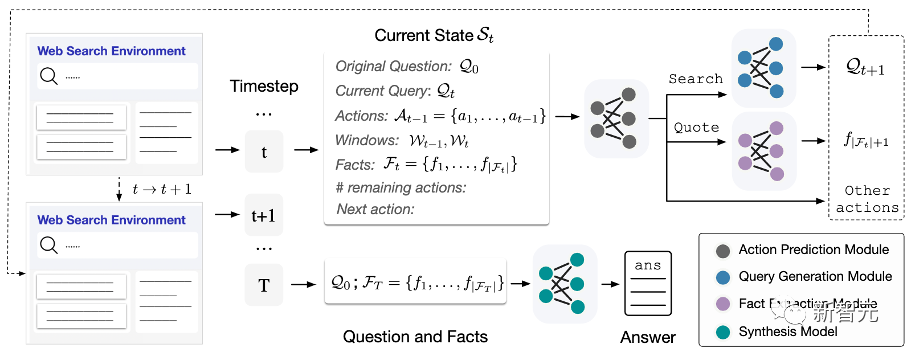

WebCPM's distinguishing feature is that its information retrieval is based on interactive web search: it can interact with a search engine much as a human would, collecting the factual knowledge needed to answer a question and then generating the answer.

In other words, with internet access, a large model's answers become far more timely and accurate.

WebCPM Model Framework

WebCPM is benchmarked against WebGPT, which is also the new-generation search technology behind Microsoft's recently launched New Bing.

Like WebGPT, WebCPM overcomes a shortcoming of the traditional LFQA (long-form question answering) paradigm: its reliance on non-interactive retrieval, which fetches information using only the original question as the query.
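To see why a single, non-interactive query can fall short, here is a toy Python sketch of the retrieve-once step: the original question is issued once as the only query, and the top-k passages are kept. The word-overlap scorer and the three-sentence corpus are illustrative assumptions, not how any particular LFQA system ranks documents.

```python
import re
from typing import List, Set


def tokenize(text: str) -> Set[str]:
    # Lowercased set of word tokens; a crude stand-in for a real retriever such as BM25.
    return set(re.findall(r"\w+", text.lower()))


def retrieve_once(question: str, corpus: List[str], k: int = 2) -> List[str]:
    """Non-interactive retrieval: rank passages against the original question only, once."""
    q = tokenize(question)
    # sorted() is stable, so passages with equal scores keep their corpus order.
    ranked = sorted(corpus, key=lambda doc: len(q & tokenize(doc)), reverse=True)
    return ranked[:k]


if __name__ == "__main__":
    corpus = [
        "Paris is the capital of France.",
        "The Eiffel Tower is in Paris.",
        "France uses the euro.",
    ]
    question = "What currency is used in the country whose capital is Paris?"
    print(retrieve_once(question, corpus))
```

Here the two passages that mention Paris win on word overlap, while "France uses the euro.", the passage that actually answers the question, shares only "the" with it and is never retrieved. Finding it requires a second query that depends on what the first one turned up.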

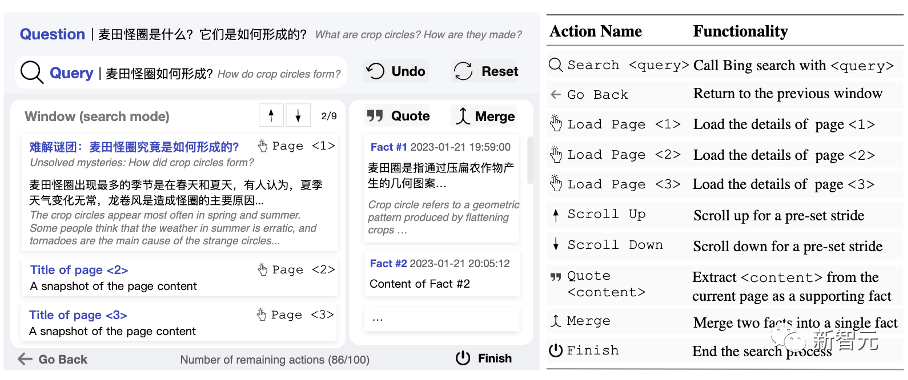

Under the WebCPM framework, the model interacts with a search engine in real time, much as a human would, to run web searches and filter out high-quality information.

When it encounters a complex question, the model also breaks it down into several sub-questions, as a human would, and searches for each in turn.

Moreover, as it identifies and browses relevant information, the model gradually refines its understanding of the original question and keeps issuing new queries to gather more diverse information.
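The interactive loop described above (decompose the question, search, read the results, issue follow-up queries) can be sketched in Python as below. This is not WebCPM's implementation: the word-overlap `search` function, the zero-score filter, the `toy_policy` that plays the role of the language model, and the stop-on-repeat rule are all hypothetical. In WebCPM, the model itself decides what to search for next.

```python
import re
from typing import Callable, List, Optional, Set


def tokenize(text: str) -> Set[str]:
    return set(re.findall(r"\w+", text.lower()))


def search(query: str, corpus: List[str], k: int = 1) -> List[str]:
    """Toy search engine: top-k documents by word overlap, dropping zero-overlap hits."""
    q = tokenize(query)
    scored = sorted(((len(q & tokenize(d)), d) for d in corpus),
                    key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


# A policy sees the question plus evidence so far and returns the next query (or None to stop).
Policy = Callable[[str, List[str]], Optional[str]]


def interactive_search(question: str, corpus: List[str], policy: Policy,
                       max_steps: int = 5) -> List[str]:
    """Let a policy issue queries one at a time, each informed by the evidence gathered so far."""
    evidence: List[str] = []
    asked: Set[str] = set()
    for _ in range(max_steps):
        query = policy(question, evidence)
        if query is None or query in asked:
            break  # the policy is done, or would only repeat an earlier query
        asked.add(query)
        for hit in search(query, corpus):
            if hit not in evidence:
                evidence.append(hit)
    return evidence


def toy_policy(question: str, evidence: List[str]) -> Optional[str]:
    """Hypothetical stand-in for the LLM: ask sub-question 1, then follow up on what it found."""
    if not evidence:
        return "Paris capital"  # sub-question 1: which country has Paris as its capital?
    m = re.search(r"capital of (\w+)", " ".join(evidence))
    if m:
        return f"currency {m.group(1)} uses"  # sub-question 2, built from retrieved evidence
    return None


if __name__ == "__main__":
    corpus = [
        "Paris is the capital of France.",
        "The Eiffel Tower is in Paris.",
        "France uses the euro.",
    ]
    q = "What currency is used in the country whose capital is Paris?"
    print(interactive_search(q, corpus, toy_policy))
```

On the three-sentence corpus in the demo, the first query surfaces "Paris is the capital of France.", the policy turns "France" into a follow-up query, and the loop returns that passage together with "France uses the euro.". The second query could only be formed after reading the first result, which is exactly what a single retrieve-once step cannot do.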

WebCPM interactive search interface

Going forward, Face Wall Intelligence will further promote the application and commercialization of this research and work to deploy the WebCPM large model in relevant fields.

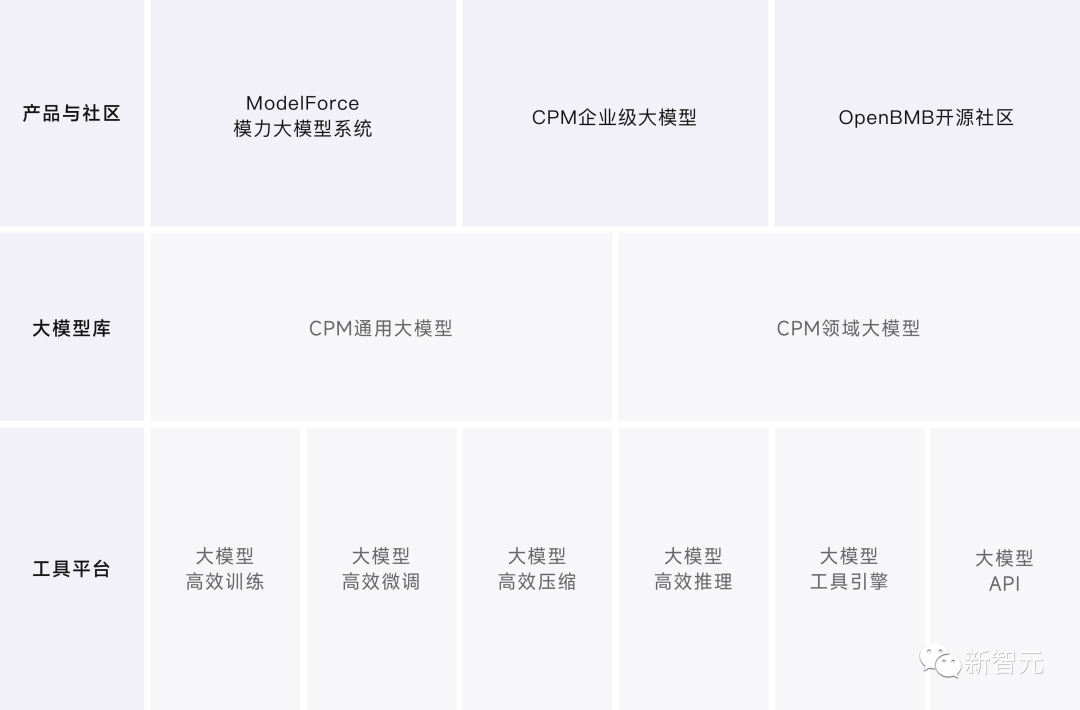

## Aiming high: building a domestic large-model system

Face Wall Intelligence has always strived to lead original innovation in large models. It is committed to building large-model infrastructure for the intelligent era and creating a domestic large-model system, with the ultimate aim of "bringing large models into ordinary households".

Face Wall Intelligence's achievements are plain to see and have been recognized across the industry.

Zhihu Chief Technology Officer Li Dahai once said of the company: "The Face Wall Intelligence team was the first team in China to conduct large-scale language model research. The company has full-stack technical capabilities for large-model research and application, including fine-tuning and acceleration technology, and its R&D capabilities are industry-leading." Zhihu said it believes Face Wall Intelligence can grow into a core infrastructure player in China's large-model field and contribute to the country's large-model industry.

Face Wall Intelligence at a glance

Building on its tool platform and model library, the company has launched the ModelForce large-model system and the CPM enterprise-grade large model. ModelForce, an AI productivity platform built on large models, includes an efficient computing toolchain covering the full process of large-model training, fine-tuning, compression, and inference.

The platform leverages the general few-shot and zero-shot capabilities of large models, uses standardized large-model fine-tuning methods, and provides a zero-code fine-tuning client, which can significantly reduce data-annotation, compute, and labor costs in AI R&D.

The CPM Enterprise Edition upgrades the capabilities of the open-source model and features multi-capability integration, flexible adaptation through incremental fine-tuning, and support for multiple application scenarios.

Using the CPM enterprise-grade large model and the ModelForce large-model system, Face Wall Intelligence worked with Zhihu to train the "Zhihaitu AI" large model.

The "Zhihaitu AI" large model has been applied to the Zhihu hot list, where it can quickly extract key elements, summarize viewpoints, and aggregate content. It was released at the Zhihu Discovery Conference on April 23.

And that is not all. Face Wall Intelligence has built a "trinity" large-model ecosystem linking industry, academia, and research. By drawing on the academic strength of top universities and continuing to build and operate the OpenBMB open-source large-model community, it has created a closed loop connecting industry demand, open-source algorithms, and industrial deployment, aiming to advance frontier research, application development, and industrial growth in China's large-model field.

- OpenBMB Open Source Community

To contribute to China's open-source large-model ecosystem, OpenBMB has released a series of open-source toolkits covering the full large-model workflow, including OpenPrompt, OpenDelta, BMInf, BMCook, BMTrain, and BMTools, and has launched free public large-model courses on Zhihu, Bilibili, and other platforms.

- Natural Language Processing and Social Humanities Computing Laboratory (THUNLP), Department of Computer Science, Tsinghua University

The lab is the leading academic research force in this ecosystem. Founded in the 1970s, it is the earliest and most influential research unit in China working on NLP, home to many well-known scholars and scientists, and its work on large language models is outstanding.

- Wall-Facing Intelligence

Face Wall Intelligence is committed to applying and deploying large models in typical AI scenarios and domains. The CPM large model is a pre-trained large language model developed in-house by the team, drawing on years of large-model training experience. The company has completed an angel round of tens of millions of yuan and has formed strategic partnerships with a number of well-known institutions.

Throughout its effort to build a domestic large-model system, Face Wall Intelligence's vision has been to deploy large models that empower more industries and benefit more companies and individuals.

A single spark can start a prairie fire: we look forward to large models unlocking their potential in more fields and delivering surprising application value.
