AI Face-Swapping Scam Takes 4.3 Million Yuan: MaMaConsumer Experts Recommend Three Defensive Measures

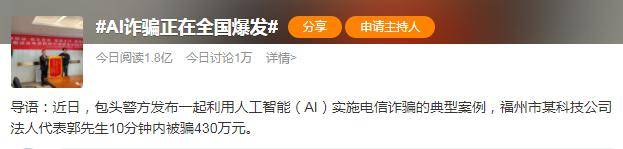

Recently, the Baotou City Public Security Bureau announced that Mr. Guo, the legal representative of a technology company in Fuzhou, was defrauded of 4.3 million yuan within 10 minutes by scammers using AI face swapping, prompting widespread discussion about the safety of artificial intelligence.

Regarding the potential risks of AI, Hinton, the "Godfather of AI," once said in an interview with the New York Times that, compared with climate change, AI may pose a "more urgent" threat to humanity.

Judging by recent events, this statement is not alarmist. The "evil" in Pandora's box is gradually emerging, even as AIGC rapidly pushes the development of AI to a new stage.

Beware of the “Evils” of Technology: Seeing Is Not Necessarily Believing

According to the "Safe Baotou" WeChat public account, the Telecommunications Cybercrime Investigation Bureau of the Baotou City Public Security Bureau disclosed a telecom fraud case in which "intelligent AI face-swapping and voice-imitation technology" was used. Mr. Guo, the legal representative of a technology company in Fuzhou, was defrauded of 4.3 million yuan in 10 minutes. Fortunately, 3.3684 million yuan of the defrauded funds has been intercepted. How did the scammers do it?

The process is complicated. Judging from the news reports, Mr. Guo, the victim, was deceived mainly by face-swapping and voice-imitation technology, but other conditions may lie hidden behind the incident: through which channel the criminals made contact with the victim, how they obtained the relevant social media account, how they knew whom to impersonate, how they obtained the corresponding image and audio material, and how they selected the victim.

If we focus on the two key technologies, face swapping and voice imitation, the main problems to solve are obtaining source data, the training framework, and real-time rendering. At the data level, audio and video of the person being impersonated can be gathered from social media and social engineering databases, or extracted from the photo album and chat history of a lost or black-market phone. As for the training framework, the editing software of several mainstream domestic short-video platforms currently supports face-swapping effects without displaying any warning, and foreign open-source frameworks offer more than 30 synthesis methods. As for real-time rendering, the video stream can be hijacked through attacks such as flashing the phone's firmware or installing intrusive software.

Therefore, "seeing is not necessarily believing." Deliberate "evil" can always lurk behind technology, and it must be guarded against.

Nip It in the Bud and Defeat "Magic" with "Magic"

Risks are inevitable, so how can we use "magic" to defeat "magic"? Many financial institutions already have countermeasures and related technologies in place. For example, technologies such as liveness anti-spoofing and intermediary identification have been applied in financial business scenarios.

"The core function of technologies such as liveness anti-spoofing and intermediary identification is to compensate for the shortcomings of face recognition and to effectively identify photos, videos, masks, simulation models, and the like, especially the face-swapping and voice-changing fraud seen in this incident," said Feng Yue, an intelligent core expert at the MaMaConsumer Artificial Intelligence Research Institute. "Based on such technological innovations, MaMaConsumer Finance can currently intercept 99% of batch fraud attacks and effectively guard against risks such as forged information, intermediary agency, counterfeit applications, long-term lending, and telecom fraud."

Compared with financial institutions, which sit at the end of the overall process, social software platforms have an obligation to introduce forgery detection and to give users real-time authentication reminders during calls, flagging whether the other party shows abnormal behavior, forged imagery, or forged audio. The earlier a risk warning arrives, the more effectively it can prevent harm.

In addition to platform-side measures, the public can also take some steps to protect themselves. One class of technology, known as adversarial sample technology, can protect user data from being used for face swapping and voice changing. Taking face images as an example, mixing an adversarial perturbation mask that is invisible to the naked eye into the image can render face-swapping technology ineffective. Users can apply this kind of technology to their images, voice recordings, and other media before publishing them on social media.
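The idea of an imperceptible perturbation mask can be illustrated with a minimal sketch. It uses the fast gradient sign method against a toy linear scoring model, which stands in here for a real face-swapping network; the article does not say which method actual protection tools use. The names `fgsm_protect` and `toy_score`, the random `weights`, and the `epsilon` budget are all hypothetical and chosen only for illustration.

```python
import numpy as np


def toy_score(image, weights):
    """Toy stand-in for a face model: a linear score over the pixels (assumption)."""
    return float(np.sum(weights * image))


def fgsm_protect(image, weights, epsilon=2 / 255):
    """Return a copy of `image` shifted by at most `epsilon` per pixel.

    For the linear toy score, the gradient with respect to the image is
    simply `weights`, so stepping against its sign lowers the score while
    keeping every pixel change within the epsilon budget.
    """
    grad = weights
    perturbed = image - epsilon * np.sign(grad)
    # Keep pixel values in the valid [0, 1] range.
    return np.clip(perturbed, 0.0, 1.0)


# Hypothetical 8x8 grayscale "photo" and model weights.
rng = np.random.default_rng(0)
image = rng.uniform(0.2, 0.8, size=(8, 8))
weights = rng.normal(size=(8, 8))
epsilon = 2 / 255

protected = fgsm_protect(image, weights, epsilon)
max_change = float(np.max(np.abs(protected - image)))
score_before = toy_score(image, weights)
score_after = toy_score(protected, weights)
```

Here `max_change` stays within the `epsilon` budget, which is why the change is hard to see, while `score_after` is lower than `score_before`. A real protection tool would compute the gradient through a deep face model, not a linear score, but the bounded-perturbation principle is the same.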

Finally, "precautionary awareness" is the cornerstone of all "magic," and the public also needs to better protect their personal belongings and information.

"Don't click on links in unknown text messages or emails, don't scan QR codes or download apps from unknown sources, don't readily provide your face or other personal biometric information to others, don't readily reveal your ID card, bank card, verification codes, or similar information, and don't overly disclose or share animated images, videos, and the like," Feng Yue suggested. "There is no guarantee that mobile phones and other mobile devices will never be lost. You can regularly clear your WeChat chat records, hide traces of contact with people you are close to, encrypt your phone's photo album, or turn on your phone's data protection function. These everyday habits may be enough to keep fraud at bay."

The road is long, and progress comes step by step. Preventing the "misuse" of artificial intelligence technology will remain a core issue in the digital era.

Source: Financial Industry Information

The above is the detailed content of AI face-swapping fraud defrauded 4.3 million yuan. Immediately consumer experts recommend three defensive measures. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Undress AI Tool

Undress images for free

Clothoff.io

AI clothes remover

AI Hentai Generator

Generate AI Hentai for free.

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1377

1377

52

52

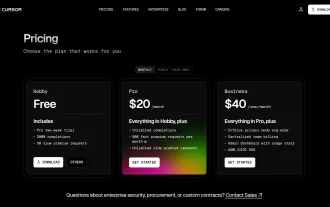

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

I Tried Vibe Coding with Cursor AI and It's Amazing!

Mar 20, 2025 pm 03:34 PM

Vibe coding is reshaping the world of software development by letting us create applications using natural language instead of endless lines of code. Inspired by visionaries like Andrej Karpathy, this innovative approach lets dev

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

Top 5 GenAI Launches of February 2025: GPT-4.5, Grok-3 & More!

Mar 22, 2025 am 10:58 AM

February 2025 has been yet another game-changing month for generative AI, bringing us some of the most anticipated model upgrades and groundbreaking new features. From xAI’s Grok 3 and Anthropic’s Claude 3.7 Sonnet, to OpenAI’s G

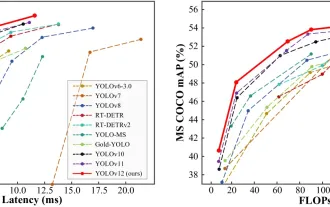

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

How to Use YOLO v12 for Object Detection?

Mar 22, 2025 am 11:07 AM

YOLO (You Only Look Once) has been a leading real-time object detection framework, with each iteration improving upon the previous versions. The latest version YOLO v12 introduces advancements that significantly enhance accuracy

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

Is ChatGPT 4 O available?

Mar 28, 2025 pm 05:29 PM

ChatGPT 4 is currently available and widely used, demonstrating significant improvements in understanding context and generating coherent responses compared to its predecessors like ChatGPT 3.5. Future developments may include more personalized interactions and real-time data processing capabilities, further enhancing its potential for various applications.

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

Best AI Art Generators (Free & Paid) for Creative Projects

Apr 02, 2025 pm 06:10 PM

The article reviews top AI art generators, discussing their features, suitability for creative projects, and value. It highlights Midjourney as the best value for professionals and recommends DALL-E 2 for high-quality, customizable art.

Google's GenCast: Weather Forecasting With GenCast Mini Demo

Mar 16, 2025 pm 01:46 PM

Google's GenCast: Weather Forecasting With GenCast Mini Demo

Mar 16, 2025 pm 01:46 PM

Google DeepMind's GenCast: A Revolutionary AI for Weather Forecasting Weather forecasting has undergone a dramatic transformation, moving from rudimentary observations to sophisticated AI-powered predictions. Google DeepMind's GenCast, a groundbreak

Which AI is better than ChatGPT?

Mar 18, 2025 pm 06:05 PM

Which AI is better than ChatGPT?

Mar 18, 2025 pm 06:05 PM

The article discusses AI models surpassing ChatGPT, like LaMDA, LLaMA, and Grok, highlighting their advantages in accuracy, understanding, and industry impact.(159 characters)

o1 vs GPT-4o: Is OpenAI's New Model Better Than GPT-4o?

Mar 16, 2025 am 11:47 AM

o1 vs GPT-4o: Is OpenAI's New Model Better Than GPT-4o?

Mar 16, 2025 am 11:47 AM

OpenAI's o1: A 12-Day Gift Spree Begins with Their Most Powerful Model Yet December's arrival brings a global slowdown, snowflakes in some parts of the world, but OpenAI is just getting started. Sam Altman and his team are launching a 12-day gift ex